How to Think in the AI Era: A 90-Minute Crash Course

6 Disciplines · 6 AI Failure Modes · One Rule

Two people open the same AI tool on Monday morning. Same task: figure out whether their company should hire a senior strategy lead or invest the same money in licenses, infrastructure, and design time to build an AI workforce that augments every existing consultant. Both have access to Claude, ChatGPT, and Gemini. Both have the same week to decide.

Person A finishes Friday with a defensible recommendation, a documented record of every claim she accepted and rejected, and three reversal triggers the board can hold her to. Person B finishes Friday with a polished memo that mostly restates the analysis AI handed back on Monday, and no answer when the CFO asks why paragraph four landed where it did.

Same tools. Same problem. Different outcomes. The difference is not which model they prompted. It is not which features they used. It is not which prompt-engineering tricks they applied. The difference is cognitive: Person A formed a position before opening any AI; Person B inherited a position from the first reasonable-sounding paragraph she read.

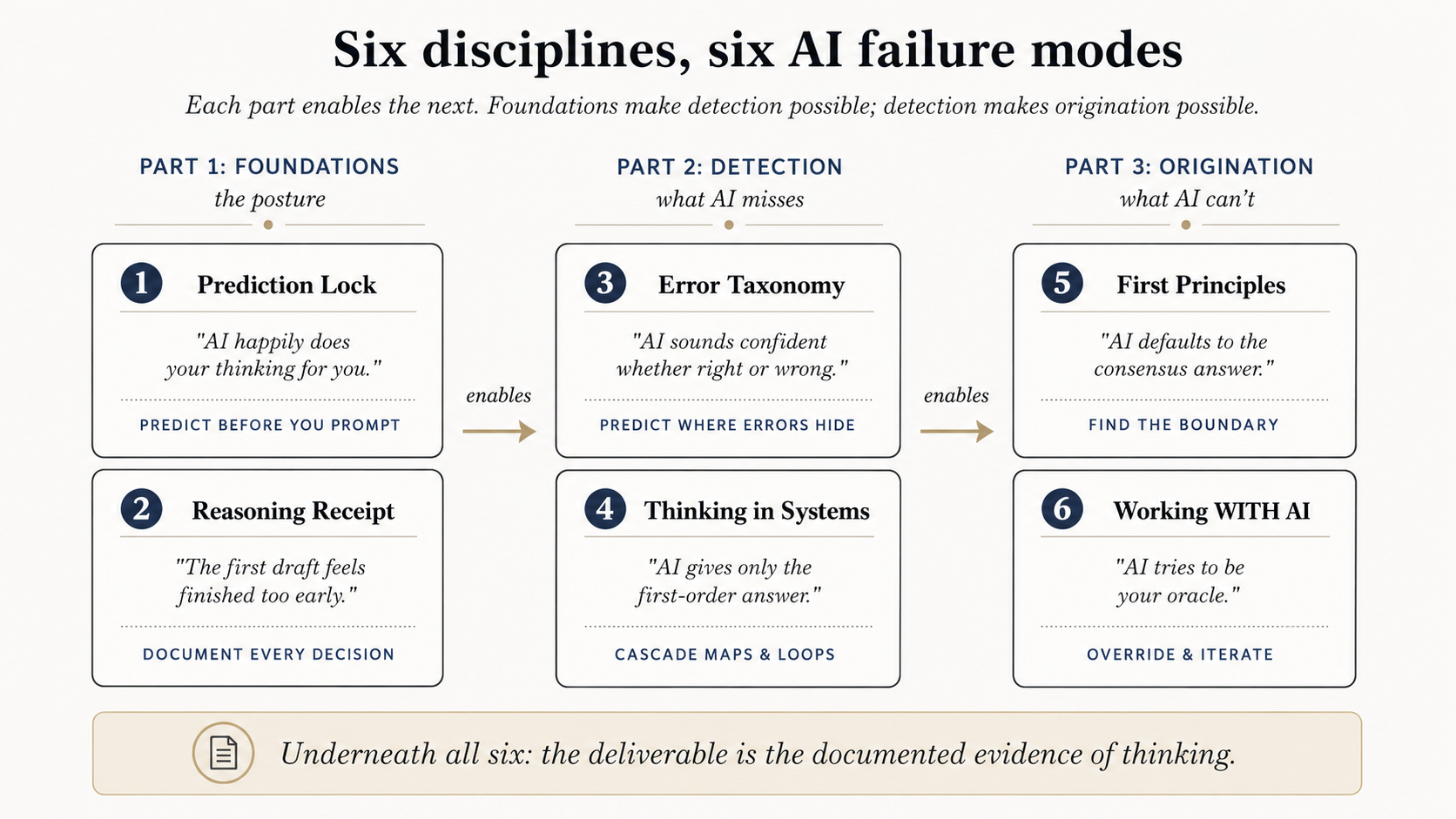

That gap is what this crash course closes. Six disciplines, three short parts, no code. Each discipline addresses a distinct way AI fails when left to run unsupervised. Together they convert AI from an oracle (you ask, it answers, you accept) into a sparring partner (you predict, it answers, you compare, you decide).

Prerequisites. This page assumes you have finished AI Prompting in 2026. That course taught the mechanics: context, reasoning modes, deep research, multimodal, AI desktop apps. This course teaches the discipline that makes the mechanics pay off. Open a free account with Claude, ChatGPT, or Gemini in another tab now. You will use it in the practice callouts.

The thesis in one line

The deliverable is never the answer. The deliverable is the documented evidence of thinking.

The essentials (five bullets)

These five bullets are the shape of the page. They are not the page. Read them as the map; read the disciplines below as the territory. The bullets tell you what to do; the disciplines below are how you actually do it without falling back into the patterns you have been using since you started prompting in 2023.

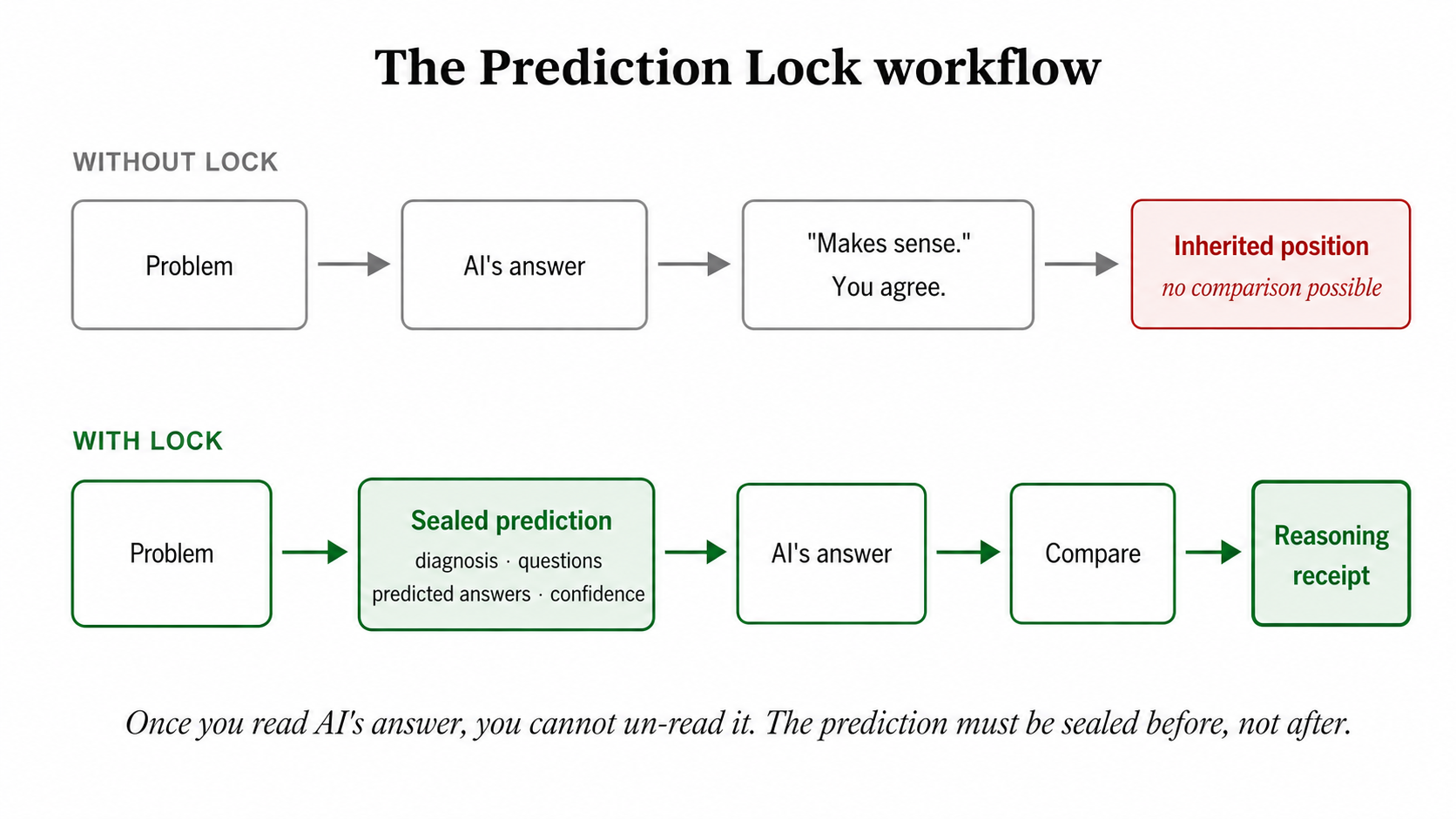

- Predict before you prompt. Once you read AI's answer, you cannot un-read it. The first reasonable-sounding paragraph occupies the space where your own position would have gone. Seal a diagnosis, ranked questions, predicted answers, and confidence before you open any tool.

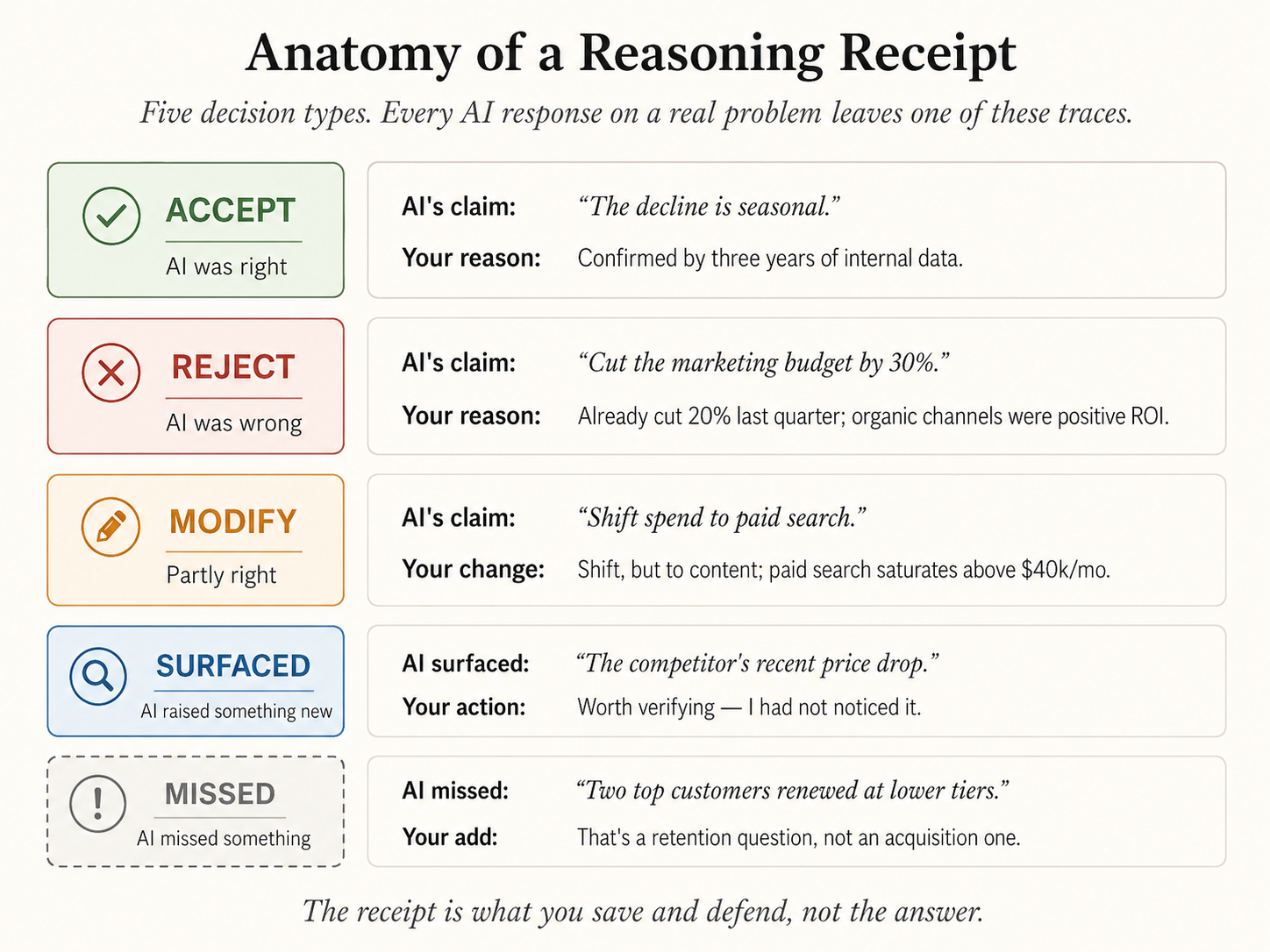

- The receipt is the deliverable. For every load-bearing claim AI makes, you record ACCEPT, REJECT, MODIFY, SURFACED, or MISSED with one sentence of why. An empty receipt or an all-ACCEPT receipt means no real thinking happened.

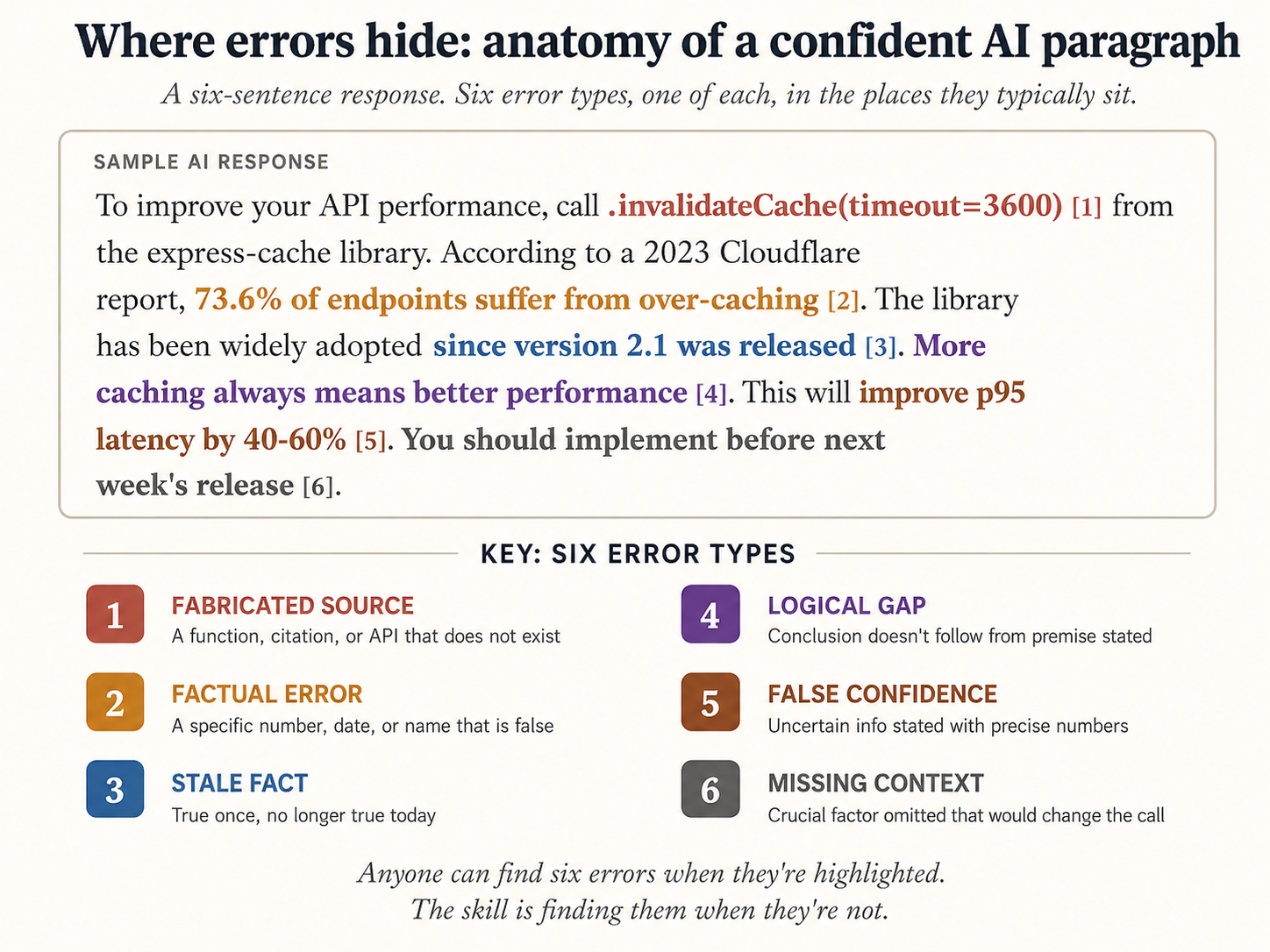

- Fluent prose is not the same as accurate prose. AI sounds confident whether right or wrong. Six error types hide inside polished output. Scan for them by name before you forward, ship, or act.

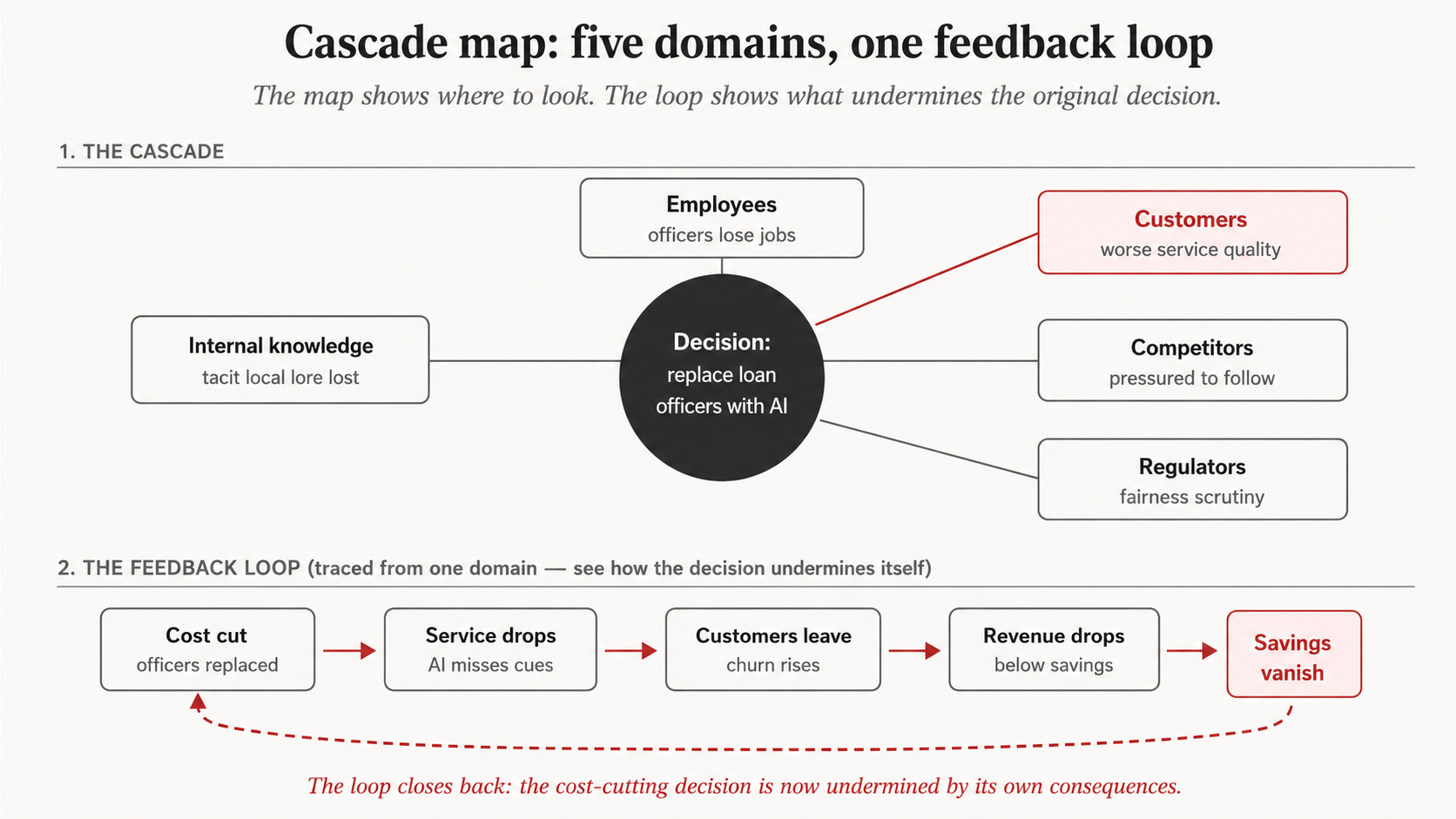

- First-order is never the whole answer. AI optimizes the visible variable and ignores the three it just disturbed. Cascade-map any decision worth a meeting. Find at least one feedback loop. Insist on mechanisms, not labels.

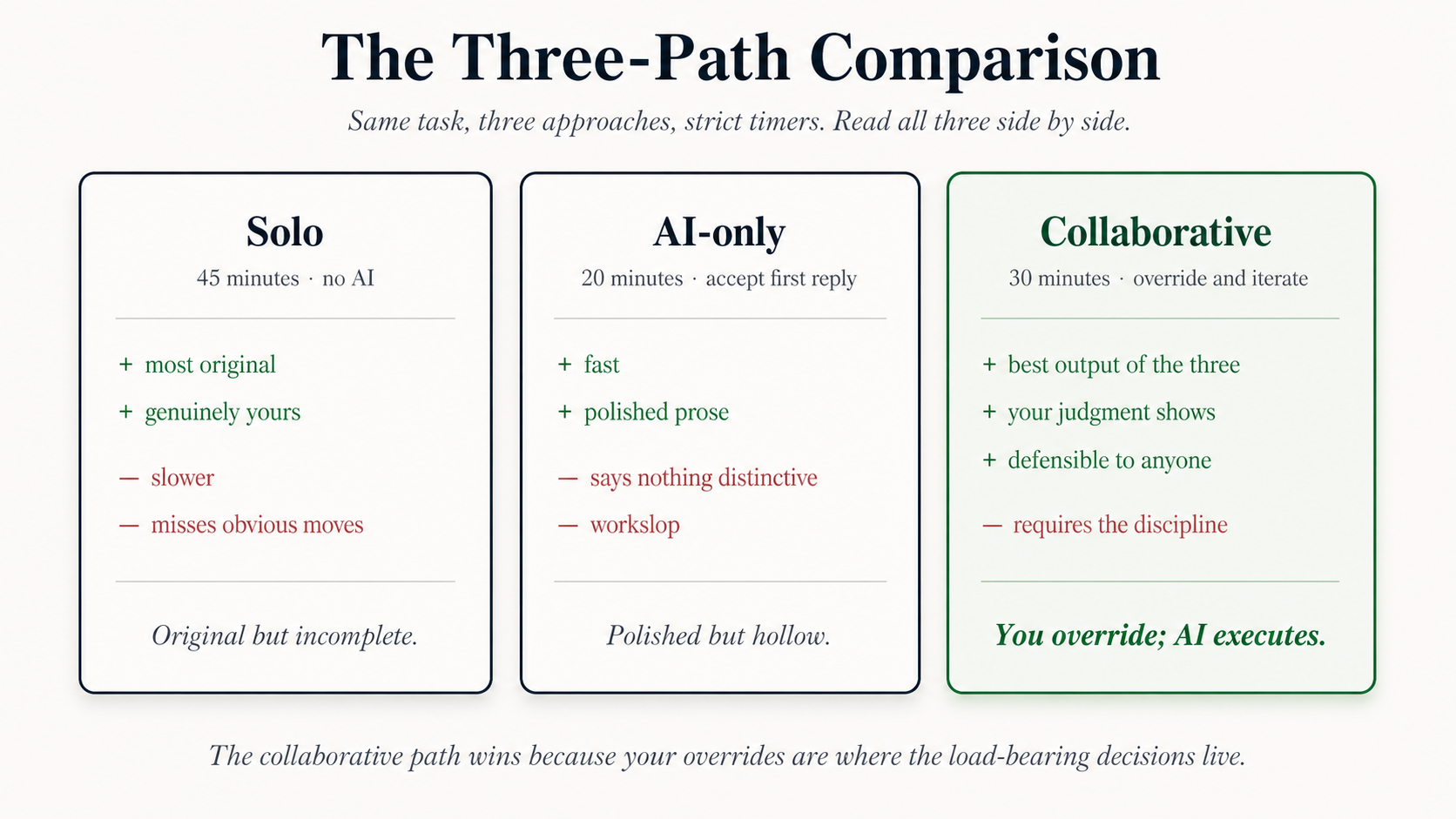

- Collaboration is the third path. Solo loses on speed. AI-only loses on originality. Collaboration wins both, but only when you do the load-bearing reasoning and AI does the tedium. Reverse that split and you become a middleman between a question and an answer. Middlemen get automated.

The sixth discipline (First Principles) and the practice scaffolding behind all five bullets above are how you operationalize this list in real work.

Figure 1: Six disciplines map to six AI failure modes, arranged in three arcs. Detection requires Foundations; Origination requires both.


Why these disciplines look obvious in hindsight

Read the five bullets above, along with the sixth discipline, and the reaction is usually a half-shrug: yes, of course. Predict before you prompt. Document your decisions. Check for errors. Trace the second-order effects. Find the boundary where consensus breaks. Collaborate instead of accepting. These are not new ideas. The Lindy Effect (the heuristic that what has survived long is likely to keep surviving) says exactly this: ideas in the cognition category that look old usually look old because they have already been tested by every prior generation of thinker. Predict-before-you-prompt is a 400-year-old courtroom rule. Reasoning receipts are how editors have always read drafts. Cascade maps are second-year systems engineering. First principles is Aristotle.

What changed in 2026 is not the disciplines. It is the cost of skipping them. When polished output was expensive, the bottleneck was production: could you actually make the thing? AI made polished output free. Any twelve-year-old with a free account can produce a memo that looks finished. The bottleneck moved to evaluation: can you tell whether the thing is right? A confidently wrong AI analysis is more dangerous than no analysis, because it looks finished. The disciplines below are not optional good habits anymore. They are the place where your judgment becomes visible to others, and your judgment is the only thing AI cannot fake.

The other half of the Lindy point: tools change every six months; thinking does not. The teams that built their entire 2023 workflow around one AI product mostly rebuilt it in 2024 and again in 2025 as model releases reshuffled the surface. The skills underneath the products keep their value when the products do not. Bet on what lasts.

Reading paths

Three ways to read this page, depending on how much time you have today:

- 30-minute taste (first-time read, or if you only have a coffee break): Read Disciplines 1, 2, 3, and 6. The four cover the shifts that matter most: predict before you prompt, document your verdicts as you work, scan AI output for the six error types by name, and stop treating AI as an oracle. Discipline 2 (Reasoning Receipt) is short but load-bearing. Skip it and you end up with a Prediction Lock you cannot defend in the room. Come back for Disciplines 4 and 5 in a separate sitting once you have felt the difference in real work.

- 90-minute essential read (the standard path, recommended): Read all six disciplines in order. Read every worked example. Run at least one practice callout per discipline this week. Skip the optional Blank Page Sprint extension.

- Full read with practices (about two hours plus a week of real-work application): Everything on this page, plus every practice exercise run on actual decisions you face in the next seven days. This is the path that builds the instincts. The first time you try the Prediction Lock, your predicted answers will be vague or wrong. That is the point. The gap between your prediction and AI's answer is where the calibration happens.

Pick the path that matches your week. The disciplines stick when you run them on real problems, not when you read about them once.

Part 1: Foundations (the posture)

Two habits underlie everything that follows. If you skip every other section, do not skip these two. The first failure mode of AI in 2026: it happily does your thinking for you. It hands you a finished-sounding answer before you have formed your own position. The second failure mode: the first draft feels finished too early. AI's polish looks like completeness, so you ship before you have evaluated. Discipline 1 (Prediction Lock) is the posture you take before you open AI. Discipline 2 (Reasoning Receipt) is the artifact you produce while you work with it. Together they keep the judgment with the human while the model does the heavy lifting. Everything in Parts 2 and 3 assumes you have these two in place.

Discipline 1: The Prediction Lock

You know the trap. You paste a real problem into Claude or ChatGPT, the answer comes back fast and fluent, you skim it and nod, and three minutes later it's in your slides. Two days later in a meeting your boss asks why you went that direction and you realize you can't actually defend the reasoning. You inherited it. You didn't earn it.

Here's the fix. Ten minutes the first time, three minutes after that. Before you open AI, write four lines.

- What's actually going on. Two sentences. Name a mechanism, not a topic. "Sales fell because returning customers shifted to online and our marketing chased acquisition instead of conversion" is a mechanism. "Competition is up" is a topic.

- The one question that would split the room. If you got an honest answer, which one would make some of your options look obviously wrong?

- What you think the answer is. Be specific. A number, a name, a yes/no with a threshold. Not "it depends."

- How sure you are, and what would flip you. "60% confident; if X is above 50%, my theory collapses."

Those four lines together are called the Prediction Lock. Locked because they carry a timestamp earlier than AI's response. (On real decisions worth a meeting, expand line 2 to 5-10 ranked questions. For the exercise below, one sharp question is enough to teach the move.)

Once you read AI's answer, you can't un-read it. Write your prediction first, or skip writing it at all.


Here's what this looks like in real life.

A regional bank head had a board deadline in ten days. The bank could open three new branches in growing suburbs, or close two underperforming ones. Both options were defensible. Her CFO leaned toward closure. Her COO leaned toward expansion. She had Claude, ChatGPT, and a licensed internal tool sitting open. The easy move was to brief one of them and let it draft the board memo.

She didn't. Before opening anything, she wrote four lines:

| Line | Her entry |
|---|---|
| Diagnosis | The two losing branches lose money because the surrounding ZIP codes shifted to mobile-only customers who never visit a branch. The three growth-suburb sites are at the inflection point where new homeowners still sign mortgages in person. |
| Sharpest question | What fraction of the two losing branches' deposit balances belongs to customers whose primary contact has been mobile-only for 12+ months? |
| Predicted answer | 70% or higher. |
| Confidence + reversal | 60%. If the in-person signing requirement in the new ZIPs is above 60%, the closure-only case collapses. |

Then she opened Claude and asked the question. The answer was 45%, not 70%. Her prediction was wrong. Her question was right. She didn't throw out the lock; she wrote the gap down next to AI's answer and noted "the high-mobility customers are the transaction base, not the deposit base." That note became the first line of the board memo. The version she would have written without the lock would have opened with whatever framing Claude handed her first.

The same person without the lock writes something like this:

| Line | Weak entry | Why it fails |
|---|---|---|
| Diagnosis | Branches are losing money because of competition. | Names a topic, not a mechanism. Could mean ten different things. |
| Sharpest question | Should we close them or open new ones? | The same yes/no the board is already arguing about. Doesn't narrow anything. |
| Predicted answer | Probably close some, open others. | Hedges across both options instead of committing to one. Nothing to be wrong about. |
| Confidence + reversal | Medium. Need more data. | Unfalsifiable. "More data" isn't a reversal trigger because anything could count. |

Same person, same hour, same situation. The difference isn't intelligence. It's whether you wrote the lock as a forcing function or a fig leaf.
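The course itself needs no code, but if you keep your working notes in scripts, the four lines and the "locked before AI answered" rule can be written down as data. What follows is a minimal sketch, not a tool this page provides: the class name `PredictionLock`, its field names, and the timestamps are all hypothetical. The entries are shortened from the bank example above.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone


def _now_utc() -> datetime:
    return datetime.now(timezone.utc)


@dataclass(frozen=True)  # frozen: fields cannot be reassigned after creation
class PredictionLock:
    """Hypothetical container for the four lines written before opening AI."""
    diagnosis: str          # a mechanism, not a topic
    sharpest_question: str  # the one question that would split the room
    predicted_answer: str   # a number, a name, or a yes/no with a threshold
    confidence: float       # 0.0 to 1.0
    reversal_trigger: str   # what would flip you
    locked_at: datetime = field(default_factory=_now_utc)

    def __post_init__(self) -> None:
        if not 0.0 <= self.confidence <= 1.0:
            raise ValueError("confidence must be between 0.0 and 1.0")

    def is_locked_before(self, ai_response_at: datetime) -> bool:
        """True only if the lock was written before AI answered."""
        return self.locked_at < ai_response_at


# Entries shortened from the bank example; the timestamps are invented.
lock = PredictionLock(
    diagnosis="Losing branches sit in ZIP codes that shifted to mobile-only customers.",
    sharpest_question="What fraction of deposit balances belongs to mobile-only customers?",
    predicted_answer="70% or higher",
    confidence=0.60,
    reversal_trigger="In-person signing requirement in the new ZIPs above 60%",
    locked_at=datetime(2026, 1, 5, 9, 0, tzinfo=timezone.utc),
)
ai_answered_at = lock.locked_at + timedelta(minutes=12)
print(lock.is_locked_before(ai_answered_at))
```

Freezing the record is the only design choice that matters here: a lock you can quietly edit after reading AI's answer is no longer a lock.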

Try it yourself

You head retail at a mid-size apparel chain. Same-store sales fell 12% last quarter while marketing spend rose 18%, and two competitors in your region reported flat-to-up numbers. Your CEO wants a diagnosis and a recommendation by Friday. Three department heads are already lobbying for their preferred answer: discount harder, cut marketing, or change merchandising.

What's going on, and what would you ask first?

(If retail isn't your work, swap the surface but keep the shape: an unexplained drop, competing internal theories, a deadline, and the data to test it. Or pull a real decision from your week. That's what makes it stick.)

One note before the form. The feedback below was tuned for a frontier model (Claude Sonnet 4.5+, Opus 4.7, GPT-5, Gemini 2.5 Pro). Smaller models tend to handwave regardless of input quality.

Here's what the AI will check:

- Does your diagnosis name a mechanism, or just a topic? Rate 1-10. Quote the part of my work that decides.

- Would your question, if answered, narrow your real options? Rate 1-10. Name one alternative explanation (a different cause that fits the same situation) that this question would NOT distinguish from mine.

Don't rewrite my work. Don't grade me on personality or "thinking style." If a field is empty or vague, say so plainly in one line.

Your diagnosis (2 sentences; name a mechanism, not a topic):

Your sharpest question (1 sentence; name a specific measurement, file, ratio, or decision rule):

Your guess at the answer (1 sentence; a specific prediction, not a hedge):

Your confidence and what would change your mind (1 sentence):

Discuss with an AI. Question your scores.

Come back when you have your BEST evaluation.

Plan on 8-15 minutes the first time. Faster after that. The most useful thing you can do with the AI's feedback is find one place you disagree. That's where your judgment actually lives. If you can't find a place to push back, the AI was right; if you can, you just got the value.

What you just did is half the move. The other half (logging AI's answer next to your prediction, writing accept / reject / modify for each load-bearing claim) is what comes next, in Discipline 2. Without it, the Prediction Lock is a one-shot exercise; with it, it becomes an audit trail you can defend in a room.

Want a strong sample to compare against? (Open after you submit your own.)

A reader running the same retail scenario wrote this. It isn't the only good answer; it just shows the shape.

| Line | Their entry |
|---|---|
| Diagnosis | Same-store sales fell because the channel mix shifted: returning customers are buying online while the marketing lift pushed acquisition instead of conversion. Foot traffic is probably down even though the marketing bought visits. |
| Sharpest question | What share of last quarter's online revenue came from existing customers who also visited a store in the prior 12 months, versus net-new customers? |
| Predicted answer | Existing-customer share is 60% or higher. |
| Confidence + reversal | 55%. If existing-customer share is below 40%, the channel-shift theory dies and the real story is marketing inefficiency or competitive loss. |

What makes this work: the diagnosis names a mechanism (channel shift + acquisition-vs-conversion mismatch), not a topic. The question names a specific measurement (existing-customer share), a specific window (prior 12 months), and produces a number that would actually let the writer rule out competing theories. The reversal trigger is binary and falsifiable.

What it doesn't try to do: be brilliant. The mechanism is just plausible. The discipline is in writing it down before opening AI, not in being right on the first try.

If you want the research backing

Pre-committing your position before consulting an authority is a well-studied move. It predates AI by decades.

- Klein, G. (2007). "Performing a Project Pre-mortem." Harvard Business Review. The closest direct ancestor. Klein's pre-mortem says: before you start, imagine the project failed and write the reasons. Same mechanism: force a written position before the consensus-forming dynamic kicks in.

- Tetlock, P. & Gardner, D. (2015). Superforecasting. The Good Judgment Project showed that scoring predictions against outcomes drives calibration: you can't score what you didn't record. The Prediction Lock applies that mechanism to AI, where the analog is recording your position before the model contaminates it.

- Tversky, A. & Kahneman, D. (1974). "Judgment under Uncertainty: Heuristics and Biases." Science. The anchoring research showed that exposure to an anchor pulls subsequent estimates toward it, even when the anchor is irrelevant. The 1974 paper was about numeric anchors; the AI version is closer to Anderson, Lepper & Ross's (1980) belief perseverance and Klein's (1998) Recognition-Primed Decision model. Once a coherent narrative occupies the cognitive slot where your own thinking would have gone, you can't tell what you would have thought without it.

No single trial has tested the Prediction Lock for AI specifically. The cognitive pattern is well-studied; transferring it to AI is the obvious extension, not a separately validated finding.

The full version of this exercise (10 ranked questions plus the Reasoning Receipt template; 45-60 minutes) lives in Part 0 Chapter 1, Lesson 1. This page teaches the move. That page makes it a system.

Discipline 2: The Reasoning Receipt

You spent the morning iterating with Claude on a real document. The output is clean. You dropped it into your slides, ran the meeting, moved on. Two weeks later in a post-mortem your boss asks "which parts did you actually push back on?" and you realize you can't remember. You skimmed, you accepted, you shipped. The deliverable passed. The thinking didn't.

Here's the fix. As you work with AI, log each load-bearing claim it makes with one of five verdicts. Load-bearing means: if this claim turned out to be wrong, the recommendation changes.

| Verdict | What it means | The one-sentence why |
|---|---|---|
| ACCEPT | You took the claim as-is. | Why you trusted it (source, prior experience). |
| REJECT | You discarded the claim. | What evidence beat it. |
| MODIFY | You used a changed version. | What you changed and why. |
| SURFACED | AI raised a point you hadn't considered. You kept it. | Why it matters. |
| MISSED | You raised a point AI didn't catch. You added it. | What AI missed and why it matters. |

The log is called the Reasoning Receipt. On a real document the receipt grows row-by-row as the conversation moves. For the exercise below, you'll receipt five claims at once.

A receipt is one decision per row. The verdict tells you what you did. The why tells you why a future reader (including future you) should trust it.


Here's what this looks like in real life.

A product lead asked Claude to draft the launch plan for a new feature. Claude returned a clean three-page plan. Instead of dropping it into a doc, the product lead opened a side-by-side and built a receipt as she read each load-bearing claim:

| AI's claim | Verdict | Why |
|---|---|---|
| "Ship the launch with a single primary CTA to maximize conversion." | ACCEPT | Matches our last three launches; one-CTA tests beat two-CTA tests every time. |
| "Start with the 10% rollout cohort that includes paid users." | REJECT | Paid users are our least churn-tolerant cohort; we burn trust if the rollout has bugs. |
| "Send the launch announcement on a Tuesday morning." | MODIFY | Tuesday yes; morning no. Our engagement window for this segment is Tuesday 6-8pm. |
| "The feature has overlap with [competitor]'s March release; lead with differentiation." | SURFACED | Hadn't compared to competitor's release timing. The differentiation framing wins. |
| (AI did not mention paid-tier pricing implications of the new feature.) | MISSED | I added a note: pricing review must happen before launch or we hand discounts to legacy. |

She sent the receipt with the launch plan. Two weeks later the CEO asked why she'd skipped the paid cohort in the rollout. She pointed at row 2. The conversation took ninety seconds. Without the receipt it would have been a thirty-minute defend-yourself meeting where she couldn't reconstruct what she'd actually decided.

The same product lead without a receipt produces this:

| AI's claim | Verdict | Why |
|---|---|---|
| "Ship the launch with a single primary CTA." | ACCEPT | Sounds right. |
| "Start with the 10% rollout cohort that includes paid users." | ACCEPT | Sounds right. |
| "Send the launch announcement on a Tuesday morning." | ACCEPT | Sounds right. |
| "Lead with differentiation against [competitor]." | ACCEPT | Sounds right. |
| (Nothing logged.) | | |

A column of nothing but ACCEPTs means one of two things: AI is right about everything (rare) or the receipt isn't doing real work. An all-ACCEPT receipt is the same as no receipt at all. The friction of writing each "why" is the discipline. If you can't write a real "why," you didn't actually accept the claim. You inherited it.
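You don't need code to keep a receipt; a table in your notes is enough. If your receipt does live in a script or spreadsheet export, though, the two warning signs above (every verdict is ACCEPT, or a "why" that is only "sounds right") are easy to check mechanically. This is a rough sketch under stated assumptions: `Verdict`, `ReceiptRow`, `LAZY_WHYS`, and `receipt_warnings` are hypothetical names, and the check catches only the laziest patterns. It cannot judge whether a "why" is actually good.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    ACCEPT = "ACCEPT"
    REJECT = "REJECT"
    MODIFY = "MODIFY"
    SURFACED = "SURFACED"
    MISSED = "MISSED"


@dataclass
class ReceiptRow:
    claim: str
    verdict: Verdict
    why: str


# Placeholder phrases that signal an inherited claim (an illustrative list only).
LAZY_WHYS = ("sounds right", "makes sense")


def receipt_warnings(rows: list[ReceiptRow]) -> list[str]:
    """Return plain-language warnings; an empty list means no obvious red flag."""
    if not rows:
        return ["empty receipt"]
    warnings = []
    if all(row.verdict is Verdict.ACCEPT for row in rows):
        warnings.append("all-ACCEPT receipt")
    for number, row in enumerate(rows, start=1):
        why = row.why.strip().lower().rstrip(".")
        if not why or why in LAZY_WHYS:
            warnings.append(f"row {number}: no real why")
    return warnings


# The weak receipt from the table above: four ACCEPTs, each with "Sounds right."
weak = [
    ReceiptRow("Ship the launch with a single primary CTA.", Verdict.ACCEPT, "Sounds right."),
    ReceiptRow("Start with the 10% rollout cohort that includes paid users.", Verdict.ACCEPT, "Sounds right."),
    ReceiptRow("Send the launch announcement on a Tuesday morning.", Verdict.ACCEPT, "Sounds right."),
    ReceiptRow("Lead with differentiation against [competitor].", Verdict.ACCEPT, "Sounds right."),
]

# Two rows from the stronger receipt, with the whys shortened.
strong = [
    ReceiptRow("Ship the launch with a single primary CTA.", Verdict.ACCEPT,
               "Matches our last three launches."),
    ReceiptRow("Start with the 10% rollout cohort that includes paid users.", Verdict.REJECT,
               "Paid users are our least churn-tolerant cohort."),
]

for warning in receipt_warnings(weak):
    print(warning)
```

A clean result from a check like this proves very little. The value is in the failure case: it stops an empty or rubber-stamped receipt from being sent along as if it were evidence of thinking.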

Try it yourself

You asked AI: "Should we ship this feature now, or wait two weeks for more testing?" Below are five claims from AI's response. Receipt each one, using one of the five verdicts and a one-sentence why.

- "Ship now. Speed-to-market is the dominant variable in early adoption."

- "The two-week delay risks losing the news cycle, since [competitor] launched their version last week."

- "Production telemetry from your last three launches shows defects surface in week 1-3, so two extra weeks of testing won't catch them."

- "Customer support load typically rises 40% in the first week after launch."

- "Engineering velocity drops 15% during a defended ship."

(If you don't ship software, swap the surface: AI just gave you a five-claim recommendation on whatever real decision you're actually making this week. Use those instead.)

One note before the form. The feedback below was tuned for a frontier model (Claude Sonnet 4.5+, Opus 4.7, GPT-5, Gemini 2.5 Pro). Smaller models tend to handwave regardless of input quality.

Here's what the AI will check:

- Did you write a real "why" for each verdict, or did you write "sounds right" / "makes sense" patterns? Rate 1-10. Quote the weakest "why" in my receipt.

- Is there at least one REJECT or MODIFY, plus at least one SURFACED or MISSED? If every verdict is ACCEPT, the receipt isn't working. Rate 1-10. If my receipt is all-ACCEPT, say so plainly in one line.

Don't rewrite my work. Don't grade me on personality. If a field is empty or vague, say so plainly.

For claim 1 ("Ship now. Speed-to-market is the dominant variable"):

For claim 2 ("The two-week delay risks losing the news cycle"):

For claim 3 ("Production telemetry shows defects surface in week 1-3"):

For claim 4 ("Customer support load typically rises 40%"):

For claim 5 ("Engineering velocity drops 15% during a defended ship") OR your own MISSED row (something AI didn't raise):

Discuss with an AI. Question your scores.

Come back when you have your BEST evaluation.

Plan on 10-15 minutes the first time. Faster after that. The most useful thing you can do with the AI's feedback is find one row where you wrote "sounds right" without earning it. That row is where you almost shipped someone else's reasoning under your name. Receipt that one again with a real "why" and you've already returned the cost of the exercise.

What you just did catches the load-bearing claims one at a time. What it doesn't catch is the technical errors hiding inside each claim (fabricated citations, stale facts, false confidence). That scan is Discipline 3.

Want a strong sample to compare against? (Open after you submit your own.)

A reader running the same ship-now-or-wait scenario wrote this. It isn't the only good answer; it shows the shape.

| Claim | Verdict | Why |
|---|---|---|
| 1 | REJECT | Speed-to-market dominates in commodity markets, not ours: we sell into compliance-bound buyers who punish bugs harder than slowness. |
| 2 | MODIFY | Competitor launched a related feature, not ours: differentiation matters more than the news cycle, and the cycle is already over. |
| 3 | ACCEPT | Matches our last three launches: hotfixes happen in weeks 1-3, almost never in weeks 4-6. |
| 4 | SURFACED | I had budgeted for 20% support lift, not 40%: support team has 1.5 weeks of headcount cushion, which closes a real risk for me. |
| 5 | MISSED | AI didn't raise that two more weeks pushes us into our biggest customer's annual planning lock-in window; that's the binding constraint. |

What makes this work: only one ACCEPT (the one with real evidence behind it). Two of the five Whys quote prior internal data, not vibes. The MISSED row catches a constraint AI couldn't have known about (the customer's planning calendar). The decision the reader ends up with is "wait, because of the customer lock-in," which is a different answer from "wait, because more testing." Same verdict, different reasoning, defensible in a room.

What it doesn't try to do: be brilliant. Most rows are one sentence. The discipline is in writing real Whys, not literary ones.

If you want the research backing

The receipt is older than AI by a long way.

- Schön, D. (1983). The Reflective Practitioner. The direct ancestor. Schön's "reflection-in-action" is the move of building a written track of decisions as the work happens, so the practitioner can defend each one later. A reasoning receipt is reflection-in-action applied to working with a model.

- Argyris, C. (1977). "Double Loop Learning in Organizations." Harvard Business Review. Single-loop learning corrects errors against the existing model; double-loop learning surfaces the model itself. An all-ACCEPT receipt is single-loop at best. The friction of writing each Why forces the second loop.

- Brown, P., Roediger, H., & McDaniel, M. (2014). Make It Stick. The retrieval-practice and elaboration research: writing one sentence about why something matters, in your own words, improves what you remember later. A receipt is retrieval practice on your own decisions; the boss-asks-weeks-later moment is exactly the scenario the research is about.

No single trial has tested the Reasoning Receipt for AI specifically. The mechanism (write down decisions as you make them, defend them later) is well-studied; applying it to AI interactions is the obvious extension, not a separately validated finding.

Go deeper: Part 0 Chapter 1: Asking Better Questions. The full version (a 10-row receipt against a real AI conversation, plus the Contradiction Challenge where you have a different AI attack your reasoning, 45-60 min) lives there as part of the foundational sequence. This page teaches the move. That chapter makes it a habit you can run on every load-bearing AI conversation you have.

Part 2: Detection (catching what AI misses)

Foundations gave you the posture. Detection trains the pattern recognition that catches what AI consistently misses. Two failure modes dominate here. AI sounds confident whether it is right or wrong, and most of its errors hide in the paragraphs that read as most professional. AI also optimizes the visible variable and ignores the three variables it just disturbed. Discipline 3 (Error Taxonomy) is the named-category scan you run against fluent prose to find six specific error types before they ship. Discipline 4 (Thinking in Systems) is the cascade map you draw against any decision worth a meeting to find the second-order effects AI did not trace.

Discipline 3: The Error Taxonomy

You know the trap. You paste a real document into Claude or ChatGPT, the answer comes back polished and fluent, and you read it the way you read your own writing: for general sense, looking for the shape of the argument. It flows. You nod. The error sits in the most professional paragraph in the draft, the one your eye skipped because nothing felt wrong. Three days later it ships, and the one fabricated number, or the one citation that does not exist, becomes the thing your reader catches first.

Here's the fix. Scan AI output by name, one error type at a time, instead of reading for "feel." Six types, each with a where-to-look-first prompt.

| Error type | What it looks like | Where to look first |
|---|---|---|
| Factual error | A demonstrably false specific claim: a number, a date, a name, a citation, an API method. | Any sentence with a specific number, especially decimals. Precision creates the appearance of research. I could tell you that 73.6% of analysts fail to verify AI figures, and it would sound credible. I made it up ten seconds ago. |
| Logical gap | The conclusion does not actually follow from the premises stated. | The bridge between "evidence" and "therefore." Bracket the "therefore" and ask: does this follow, or am I supplying the missing link? |
| False confidence | Uncertain information stated in a certain tone. | The most fluent paragraphs. Hedging language ("may," "could") is a signal AI knows it is on thin ice; its absence on a contested topic is the red flag. |
| Missing context | A crucial factor was omitted that would change the analysis. | Whatever your subject-matter expert would ask first. If you'd ask, "wait, did you consider X?", AI probably didn't. |
| Fabricated source | A citation, library function, or API that does not exist, or exists but does not say what AI claimed. | Every citation, every quoted statistic, every external function call. Verify before you forward or run. |
| Stale fact | True once, no longer true now. | Anything time-sensitive: prices, leadership, laws, API versions, capabilities of the tool itself. |

On real documents, scan for each by name. For the exercise below, we'll do two named scans (start with Factual and Fabricated Source) so you experience the move; the full six-row pass is what the worked example shows.

The six error types do not announce themselves. They hide inside the paragraphs that read as most professional, which is exactly why scanning by name beats reading by feel.


Here's what this looks like in real life.

A buy-side equity analyst was building a recommendation memo for a $25M position in a mid-cap industrial name. The Investment Committee met in ninety minutes. She asked Claude to draft the four-paragraph thesis section, fed it the company's last two 10-Qs, the latest analyst-day transcript, and her own notes. Claude returned a clean draft: revenue growth, multiple expansion, a cited bank-analyst quote, a Q3 cash-flow figure, a thesis paragraph. She did not paste it into the memo. She ran the six-row scan.

| Error type | What she found in the draft | Verdict |
|---|---|---|
| Factual error | Draft said: "Q3 operating cash flow of $182M, up 14% year-over-year." Her 10-Q tab said $164M, up 9%. Off by 11%. | Caught. Corrected from primary source. |
| Logical gap | Draft said: "Comparable peers trade at 14x forward EBITDA; therefore the name is undervalued at 11x." The "therefore" smuggled in the assumption that the peer set was actually comparable. Two of the three peers had higher margins. | Caught. Rewrote with a margin-adjusted multiple. |
| False confidence | Draft said: "Management's $2.3B revenue guidance for next year is conservative." No hedge. No basis. The word "conservative" was doing all the work. | Caught. Rewrote as "above consensus by 4%." |
| Missing context | Draft did not mention that the company's largest customer (22% of revenue) was in an active RFP that would close before the IC's next quarterly review. Her sector notes had it; Claude did not have her notes for that one. | Caught. Added as the first risk bullet. |
| Fabricated source | Draft cited: "As Morgan Stanley's industrials desk noted in their November initiation, the multiple compression is overdone." She searched FactSet. No such note existed. Claude had blended two real reports into a confident fiction. | Caught. Removed the quote; rewrote without citation. |
| Stale fact | Nothing time-sensitive in this draft slipped through. Pricing data, leadership, and rules were all current. | Actively scanned. Clean. |

Five of the six categories triggered on a single four-paragraph draft, and the fabricated bank quote was the one she almost missed because it sounded exactly like the kind of thing a Morgan Stanley desk would write. The version that went into the IC deck was re-evidenced line by line. The IC approved the position. The version she would have submitted without scanning would have had a fake quote attributed to a real bank in a memo with her name on it.
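Once the primary-source figure is in hand, the Factual row reduces to arithmetic. A minimal Python sketch of that check, using the two cash-flow figures from the table above; the function name `relative_gap` is an illustrative assumption, not something the analyst or any tool in this story used.

```python
def relative_gap(draft_value: float, source_value: float) -> float:
    """Return how far the draft figure sits from the primary source,
    expressed as a fraction of the source figure."""
    if source_value == 0:
        raise ValueError("source_value must be non-zero")
    return (draft_value - source_value) / source_value


# Figures from the worked example: the draft's claim vs. the 10-Q (in $M).
draft_cash_flow = 182.0
source_cash_flow = 164.0

gap = relative_gap(draft_cash_flow, source_cash_flow)
print(f"Draft overstates operating cash flow by {gap:.0%}")

# Growth rates are compared in percentage points, not as a ratio.
growth_gap_points = 14 - 9
print(f"Draft overstates year-over-year growth by {growth_gap_points} points")
```

The arithmetic is trivial on purpose. The hard part of the row is stopping at every number and pulling the source figure, not computing the gap.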

The same person without scanning by name would have shipped this:

| Reader habit | What gets missed | Why it fails |
|---|---|---|
| Read top-to-bottom looking for "does this argument hold up?" | Specific numbers. The eye skims figures inside a fluent paragraph. | The $182M cash-flow figure is the kind of detail that gets nodded past. Scanning for "Factual" by name forces a stop at every number. |
| Trust citations because they look credible | The Morgan Stanley quote. Real bank, plausible thesis, fabricated note. | "Looks credible" is the failure mode itself. Scanning for "Fabricated Source" by name forces verification on every citation before it counts. |
| Read the "therefore" as a connector word | The peer-comparable logical gap. The word "therefore" hides whether the bridge actually holds. | Reading for argument shape lets connector words do load-bearing work. Bracketing every "therefore" forces the bridge to defend itself. |
| Notice missing things only if they jump out | The 22% customer in an active RFP. It's not in the draft, so there's nothing visual to catch. | Missing context never raises a flag on the page. You have to actively ask what the analyst on the next desk would notice that the model could not. |

Same person, same draft, same hour. The difference isn't smarts. It's whether you scanned by name or by feel.

Try it yourself

You are an investment analyst. You asked an AI to draft the recommendation memo for a $25M position in a mid-cap industrial name. The memo cites revenue growth, a comparable-company multiple, an analyst quote from a major bank, and a Q3 cash-flow figure. The Investment Committee meets in 90 minutes. Scan the memo through the six error types by name, starting with Factual and Fabricated Source (the two with the highest cost-of-miss), and fill the grid below.

(If buy-side analysis isn't your work, swap the surface but keep the shape: an AI-drafted document going to a decision-maker who outranks you, fluent prose, named claims you can verify, and not enough time to read it three times. A grant report, a clinical summary, a board memo, a vendor risk note. The taxonomy doesn't care about the domain.)

One note before the form. The feedback below was tuned for a frontier model (Claude Sonnet 4.5+, Opus 4.7, GPT-5, Gemini 2.5 Pro). Smaller models tend to confirm whatever scan you paste in, which defeats the exercise.

Here's what the AI will check:

- Did you scan by name, or did you read for feel and label it after? Rate 1-10. Quote the row of my grid that decides. A real named scan produces a verdict for every row, even "actively scanned, found nothing." A blank row without that note is the tell.

- Are your quoted sentences load-bearing, or did you flag the easy lines? Rate 1-10. For any row where I quoted a sentence, name one stronger candidate from the same draft (if my AI draft contains one) that I should have caught first.

Don't rewrite my work. Don't grade me on writing style. If a row is empty without an "actively scanned, none found" note, say so plainly in one line.

Your 6-row scan grid (quote the exact AI sentence per row; leave a row blank ONLY if you actively scanned and found nothing, and write "actively scanned, none found" in that row):

Your confidence per row (1-10 per error type; one sentence on why):

Discuss with an AI. Question your scores.

Come back when you have your BEST evaluation.

Plan on 8-15 minutes the first time. Faster after that. The most useful thing you can do with the AI's feedback is find one place where the AI disagrees with your scan. That disagreement is where the next round of your judgment is built.

What you just did catches local errors in the output you have. What it doesn't catch is the second-order effects of decisions YOUR output will trigger downstream: the morale hit when a recommendation lands, the customer behavior change when a policy ships, the loop where cost savings cause service quality to drop and churn to rise. That's the cascade map, Discipline 4.

Want a strong sample to compare against? (Open after you submit your own.)

A reader running the investment-memo scenario produced this grid. It isn't the only good answer; it just shows the shape.

| Error type | Sentence quoted from the AI draft | Why it triggers this category |
|---|---|---|
| Factual error | "Q3 operating cash flow of $182M, up 14% year-over-year." | Specific number, verifiable against the 10-Q. The 10-Q says $164M, up 9%. Both the level and the growth rate are wrong. |
| Logical gap | "Comparable peers trade at 14x forward EBITDA; therefore the name is undervalued at 11x." | The "therefore" assumes the peer set is comparable, which has not been argued. Two of the three peers have structurally higher margins, which justifies part of the multiple gap. |
| False confidence | "Management's $2.3B revenue guidance for next year is conservative." | No hedging word. No basis cited. "Conservative" is a directional claim presented as a fact. On a contested forecast, the absence of "may" or "could" is the red flag. |
| Missing context | (Missing from draft.) The company's largest customer, ~22% of revenue, is in an active RFP that closes before the IC's next review. | Not on the page is the whole point of this row. The taxonomy works because you scan for what isn't there. A sector analyst on the next desk would name this in 30 seconds; the model could not. |
| Fabricated source | "As Morgan Stanley's industrials desk noted in their November initiation, the multiple compression is overdone." | Real bank, plausible quote, no such note exists. Verifiable via FactSet or the bank's own publication tracker. The exact failure mode the row is designed to catch. |
| Stale fact | Actively scanned, none found. Pricing, leadership, and capital-allocation policy were all current as of the draft date. | A blank row without this note would be a skip, not a finding. The note is what makes the row count. |

What makes this work: every row has a verdict, even the one with no error. Quoted sentences are load-bearing (they would change the IC's read), not throwaway. The Missing Context row is specific enough that another analyst could verify it (named customer concentration, specific event, dated deadline). The Fabricated Source row quotes the exact sentence and names how to falsify it.

What it doesn't try to do: be exhaustive. The taxonomy is a scan, not an audit. Six rows in fifteen minutes is the target. Three real catches beat thirty performative ones.

If you want the research backing

The taxonomy is a 2026 application of older work on why confident prose disarms scrutiny.

- Tversky, A. & Kahneman, D. (1974). "Judgment under Uncertainty: Heuristics and Biases." Science. The cognitive-ease line in this body of work showed that fluent, easy-to-process information is judged more credible than disfluent information, independent of its actual accuracy. Polished AI prose is the modern industrial-scale version of that effect. Scanning by named category interrupts the ease-equals-truth shortcut by forcing the reader to look for specific failure shapes instead of an overall vibe of credibility.

- Silver, N. (2012). The Signal and the Noise. Silver's central argument is that confidence and calibration are independent traits. The forecasters who sound most certain are routinely the least calibrated, and the pattern repeats across pundits, models, and (now) generative AI. The taxonomy's False Confidence row is the operationalization of his thesis for AI output.

- Gigerenzer, G. (2002). Calculated Risks: How to Know When Numbers Deceive You. Gigerenzer's calibration work, in this and later books, formalized the gap between subjective confidence and observed accuracy and showed that calibration improves when forecasters are forced to commit predictions in writing and check them against outcomes. The error-taxonomy scan is the equivalent for AI: it forces you to commit a verdict on every category instead of accepting the draft as a whole.

No single trial has measured how much the named taxonomy improves AI-error detection specifically. The cognitive pattern is well-studied; the application to AI output is the obvious extension.

Go deeper: Part 0 Chapter 2: Detecting Broken Reasoning. The full version (8-category taxonomy, dual-AI cross-check, prediction-vs-actual calibration; 60-75 min) makes this a system.

Discipline 4: Thinking in Systems

You know the trap. You asked AI to analyze the staffing change, the answer came back clean, three bullet points and a crisp recommendation. You shipped it the same afternoon.

Three months later morale collapsed in the team next door, two clients started routing around your group, the manager who quietly took on the displaced work burned out, and when leadership asked what happened you couldn't explain why. The first-order answer was correct. The second-order effects ate it. The third-order effects are the ones still in the room.

Here's the fix. Twenty minutes the first time, ten after that. Before you open AI on any decision worth a meeting, draw five lines.

- Decision at the center. One sentence, no hedging. Not "consider raising prices" but "raise list prices 18% on new contracts starting next quarter."

- Five domains spoking outward. Employees, customers, competitors, regulators, internal knowledge. One branch per domain.

- Three "and then what?" layers per domain. First-order effect. Then the consequence of that effect. Then the consequence of that consequence.

- Name at least one feedback loop. Find one place where a downstream effect circles back and changes the original decision. State the mechanism, not the label. Not "customers churn" but "customers churn because the new automated tier cannot escalate to a human, which their previous vendor did inside ten seconds."

- Finish only when the map looks messy. If it is neat, you stopped too early. Most strategic disasters are loops nobody mapped.

That drawing is called a Cascade Map. The point isn't to predict the future. The point is to refuse to ship the clean answer.
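The five steps above also describe a small data structure: one decision, five branches of three layers each, and at least one loop. A minimal Python sketch of that shape follows. The class names, the `gaps` method, and the placeholder branch text are illustrative assumptions; the check is structural only and cannot tell whether a mechanism is real or whether the map is messy enough.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Branch:
    domain: str
    layers: List[str]  # first-, second-, third-order effects, in that order


@dataclass
class CascadeMap:
    decision: str
    branches: List[Branch] = field(default_factory=list)
    loops: List[str] = field(default_factory=list)  # each loop as one causal sentence

    def gaps(self, domains_required: int = 5, layers_required: int = 3) -> List[str]:
        """List the structural holes in the map: missing domains,
        thin branches, and the absence of any named loop."""
        problems = []
        if len(self.branches) < domains_required:
            problems.append(
                f"only {len(self.branches)} of {domains_required} domains mapped"
            )
        for branch in self.branches:
            filled = [layer for layer in branch.layers if layer.strip()]
            if len(filled) < layers_required:
                problems.append(
                    f"{branch.domain}: {len(filled)} of {layers_required} layers filled"
                )
        if not self.loops:
            problems.append("no feedback loop named")
        return problems


# A deliberately thin draft; the effect text is placeholder, not analysis.
draft = CascadeMap(
    decision="Raise list prices 18% on new contracts starting next quarter",
    branches=[
        Branch("Customers", ["placeholder first-order effect",
                             "placeholder second-order effect"]),
    ],
)
for problem in draft.gaps():
    print(problem)
```

An empty `gaps()` list means the map has the required shape, nothing more. Judging whether each link states a mechanism instead of a label is still the reader's job.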

AI optimizes the variable you asked about; it does not reason about the three variables that one disturbs. Humans tend to miss breadth (the second domain over, the stakeholder you didn't name). AI tends to miss loops (the feedback that circles back six months later and unwinds the gain). The blind spots are complementary. That's why you draw the map first and then bring AI in to stress-test the branches.

On a real decision worth a meeting, the map can take 20-30 minutes. The exercise below uses a smaller scope so you can feel the muscle.

Five domains, three layers of consequence, one named feedback loop. The mess is the feature, not a bug.

Here's what this looks like in real life.

A city planner had a six-week window to recommend whether to add protected bike lanes on a 2.3-mile downtown commercial corridor. The first-order case was clean: bike infrastructure correlates with mode shift, lower emissions, fewer cyclist injuries. The corridor's cyclist-injury rate was 2x the city average. The advocacy coalition was organized and patient. AI cheerfully validated the case.

Before forwarding the memo she drew a cascade map. Central decision: install protected bike lanes; remove one vehicle lane each direction; remove 40% of curbside parking. Three layers across five domains. Most of the second-order effects were predictable (cyclists happy, drivers grumpy, some parking displacement). The third layer was where the recommendation broke open.

The named loop was the one that changed her memo: corridor businesses lose weekend visitor revenue, which shrinks the local tax base, which feeds council pressure, which weakens the policy in the next session, which erodes the original mode-shift gain, which kills the case for the next corridor across town. The whole reason for doing this corridor was to win the case for the next ten.

She didn't kill the project. She added a 12-month loading-zone pilot, a guaranteed bus-stop redesign budget, a quarterly revenue threshold (>15% sustained drop triggers a revisit), and a transit-agency MOU on bus-stop access. That version survived council 7-2. The clean AI version did not contain any of those provisions, and a colleague's similar recommendation in a different city (no cascade, no provisions) was repealed inside fourteen months.

| Domain | 1st-order | 2nd-order | 3rd-order |
|---|---|---|---|
| Employees | Public works repaints curbs | Parking enforcement budget rises to cover loading conflicts | Transit drivers file grievances when buses can't pull cleanly to relocated stops |
| Customers | Cyclists gain protected route | Delivery drivers double-park into bike lane | Corridor businesses see weekend revenue dip; 3 threaten relocation |
| Competitors | Adjacent corridor stays car-friendly | That corridor courts the threatened businesses | Tax base shifts neighborhoods over 18 months |
| Regulators | State DOT grant terms apply | ADA review flags bus-stop curb cuts | Compliance retrofit pushes timeline 6 months and adds cost |
| Internal knowledge | Mode-shift study is 3 years old | Assumptions stale; weekend traffic pattern shifted | Planning dept can't defend forecast without a refresh |

Named loop: corridor revenue loss → tax-base reduction → council pressure → policy weakened in next session → mode-shift gains erode → defenders lose the case for the next corridor. That loop is the reason the recommendation grew teeth.

The same person without cascading writes something like this:

| Domain | 1st-order | Why it fails |
|---|---|---|
| Cyclists | Safer rides | One domain, one layer. No loop. Missed the delivery-driver double-parking dynamic entirely. |
| Emissions | Lower CO2 per mile | A metric, not a stakeholder. Missed the corridor-business revenue loop and the council feedback. No mechanism named, just an outcome asserted. |

Same person, same hour. The difference isn't smarts. It's whether you mapped messy or shipped clean.

Try it yourself

You are the head of revenue at a 200-person B2B SaaS company. Next quarter, leadership wants to raise list prices 18% on all new contracts and shorten the standard discount ladder. You are the decision-recommender. Cascade this pricing change before the exec read-out on Thursday. Five domains apply directly: account executives, existing customers up for renewal, two named competitors, the procurement teams at your top accounts, and your own sales-enablement collateral.

(If pricing isn't your work, swap the surface but keep the shape: a leadership decision with multi-stakeholder blast radius, a real deadline, and at least one place where the second-order effects feed back into the first-order outcome. Or pull a real decision from your week. That's what makes it stick.)

One note before the form. The feedback below was tuned for a frontier model (Claude Sonnet 4.5+, Opus 4.7, GPT-5, Gemini 2.5 Pro). Smaller models tend to handwave regardless of input quality.

Here's what the AI will check:

- Did your map go five domains wide and three layers deep, with a mechanism (not a label) at each link? Rate 1-10. Name the thinnest domain and one specific effect you missed in it.

- Is your feedback loop a real loop, with a mechanism stated as a causal sentence? Rate 1-10. If the loop is just a label ("regulators react"), flag it and propose one additional loop I did not name, with the mechanism written out (not "regulators react" but "regulators react because X triggers Y which forces Z").

Don't redraw my map. Don't rate me on style. If a field is empty or vague, say so plainly in one line.

Your cascade map (central decision, then 5 domains x 3 layers; rough text is fine, just keep the structure visible):

Your feedback loop, stated as one causal sentence (not a label):

Discuss with an AI. Question your scores.

Come back when you have your BEST evaluation.

Plan on 15-20 minutes the first time. Cascade maps run longer than a Prediction Lock because the value is in the messy middle layers, and the first three or four "and then what?" questions feel forced before the real ones surface. The fourth or fifth is usually where the third-order effect that actually matters shows up. Faster once the muscle is built; experienced cascaders run a full map in eight to twelve minutes.

The most useful thing you can do with the AI's feedback is find one place where the AI added a domain you missed. That's where your blind spot lives, and it's the cheapest lesson you'll get this week. If the AI added a loop you missed, mark it as a separate find. Loops are the finds that move the needle, because they tell you when the announced decision (an 18% list price increase) is going to show up in the world as something quite different (4-6% realized).

What you just did stress-tests the second- and third-order effects of an existing plan. What it doesn't do is question whether the plan rests on the right assumptions in the first place.

A perfectly cascaded plan built on the wrong premise still hits the wall, just later and with better documentation. That's Discipline 5.

Want a strong sample to compare against? (Open after you submit your own.)

A reader running the pricing scenario wrote this. It isn't the only good answer; it just shows the shape.

Central decision: Raise list prices 18% on new contracts effective Q3; shorten discount ladder from 7 tiers to 4.

| Domain | 1st-order | 2nd-order | 3rd-order |
|---|---|---|---|
| Account execs | Quota math gets harder mid-quarter | AEs concentrate on smaller deals where discount approval is faster | Top-of-funnel slows on enterprise; sales mix shifts down-market without anyone deciding to |
| Renewal customers | Renewal price benchmarked to new list | Procurement re-opens "most favored nation" clauses in 3 top accounts | Two largest accounts negotiate multi-year freezes that lock in below the new list price |
| Competitors | Competitor A holds, Competitor B undercuts | Competitor B starts targeted outbound to our top 50 prospects | Win rate on competitive deals drops 8-12 pts; CAC payback stretches a full quarter |
| Procurement teams | Approval workflow adds a finance gate | Deal cycle extends by 11-18 days on average | Q3 forecast misses by the deal-cycle slippage alone, before any win-loss effect |
| Sales collateral | Old pricing sheets still cached in CRM | AEs quote stale prices for 2-3 weeks during the transition | A handful of contracts get signed at the old price; legal flags whether to honor or renegotiate |

Named loop: AE quota pressure under the new list pushes deeper one-off discounts on flagship deals, which leak into renewal benchmarks via procurement reference checks, which compresses realized net price below list, which means the headline 18% increase shows up as 4-6% realized, which triggers another pricing review next year that the team has now lost credibility to lead.
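The gap between the announced and the realized increase in that loop is plain arithmetic once average discount depth is on the table. A minimal Python sketch under stated assumptions: the 18% list increase comes from the scenario, while the two discount levels (20% before, 29% after) are hypothetical numbers chosen only to show how deeper discounting can pull an 18% headline into the 4-6% range.

```python
def realized_price_change(list_increase: float,
                          discount_before: float,
                          discount_after: float) -> float:
    """Change in average net price after a list increase,
    given the average discount off list before and after the change."""
    net_before = 1.0 - discount_before
    net_after = (1.0 + list_increase) * (1.0 - discount_after)
    return net_after / net_before - 1.0


# 18% is from the scenario; both discount levels are hypothetical.
change = realized_price_change(
    list_increase=0.18,
    discount_before=0.20,
    discount_after=0.29,
)
print(f"Announced: 18.0%  Realized: {change:.1%}")
```

With these illustrative inputs the realized change comes out just under 5%. Nine extra points of average discount are enough to erase most of the headline, which is why the loop matters more than the list price.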

What makes this work: five real domains, not just three with two metrics tacked on. Each chain names a mechanism, not just an outcome ("procurement re-opens MFN clauses" not "procurement reacts"). The loop is a loop: the effect circles back and changes the original decision (the announced 18% becomes a realized 4-6%). The map is messy enough to surface its loading-zone equivalent: the realized-versus-announced gap that nobody in the exec read-out had named.

What it doesn't try to do: be exhaustive. There are at least three more loops in this scenario (channel partner margin, competitive-tier customer migration, renewal-cycle timing). The discipline is naming one real loop with a real mechanism, not naming all of them on the first map.

If your map looks tidier than this one, that's the signal: go one more "and then what?" deeper in your two weakest domains and look again for a loop.

If you want the research backing

Mapping a decision before consulting any analyst (human or AI) is a well-studied move. It predates AI by decades.

The Cascade Map sits at the intersection of two lineages: stakeholder breadth (Meadows, Sterman) and feedback-loop depth (Forrester). The five-domain spoke enforces the first; the named-loop requirement enforces the second.

- Meadows, D. (2008). Thinking in Systems: A Primer. Chelsea Green. The canonical short text. Meadows's argument: the highest-leverage interventions in any system are almost never the variables managers obsess over. They are the feedback loops and the rules that govern them, which most analyses never name. The Cascade Map enforces the second half of that argument: you cannot intervene on a loop you didn't name.

- Forrester, J. W. (1958). "Industrial Dynamics: A Major Breakthrough for Decision Makers." Harvard Business Review. The foundational paper of system dynamics. Forrester's industrial-supply studies showed that linear cause-and-effect reasoning systematically blinds operators to the loops that actually drive long-run behavior. The bullwhip effect is the most famous example; the underlying point generalizes to any multi-stakeholder decision.

- Sterman, J. (2000). Business Dynamics: Systems Thinking and Modeling for a Complex World. Irwin McGraw-Hill. The textbook treatment of the Meadows/Forrester lineage applied to management decisions. Sterman's empirical work (especially the Beer Game) shows that even smart, motivated decision-makers reliably miss loops when they're not forced to draw them. The Cascade Map is a twenty-minute forced-draw version.

No single trial has tested the Cascade Map against AI specifically. The mechanism (humans miss breadth, AI misses loops, drawing the map closes both gaps) is the extension; the underlying body of work is established.

Go deeper: Part 0 Chapter 3: Thinking in Systems. The full version (peer review plus AI counter-analysis plus the assessment rubric; 60 minutes) makes this a system.

Part 3: Origination (doing what AI cannot)

Foundations gave you a posture. Detection trained you to catch what AI misses. Origination is the third arc, and it answers a different question: what work is yours to do because AI structurally cannot do it? Two failure modes live here. The first is consensus drift: AI hands back the average answer from its training data, and you ship that average without testing whether it fits your specific situation. The second is the oracle reflex: you start outsourcing judgment to the tool that has no judgment of its own. Disciplines 5 and 6 close both gaps.

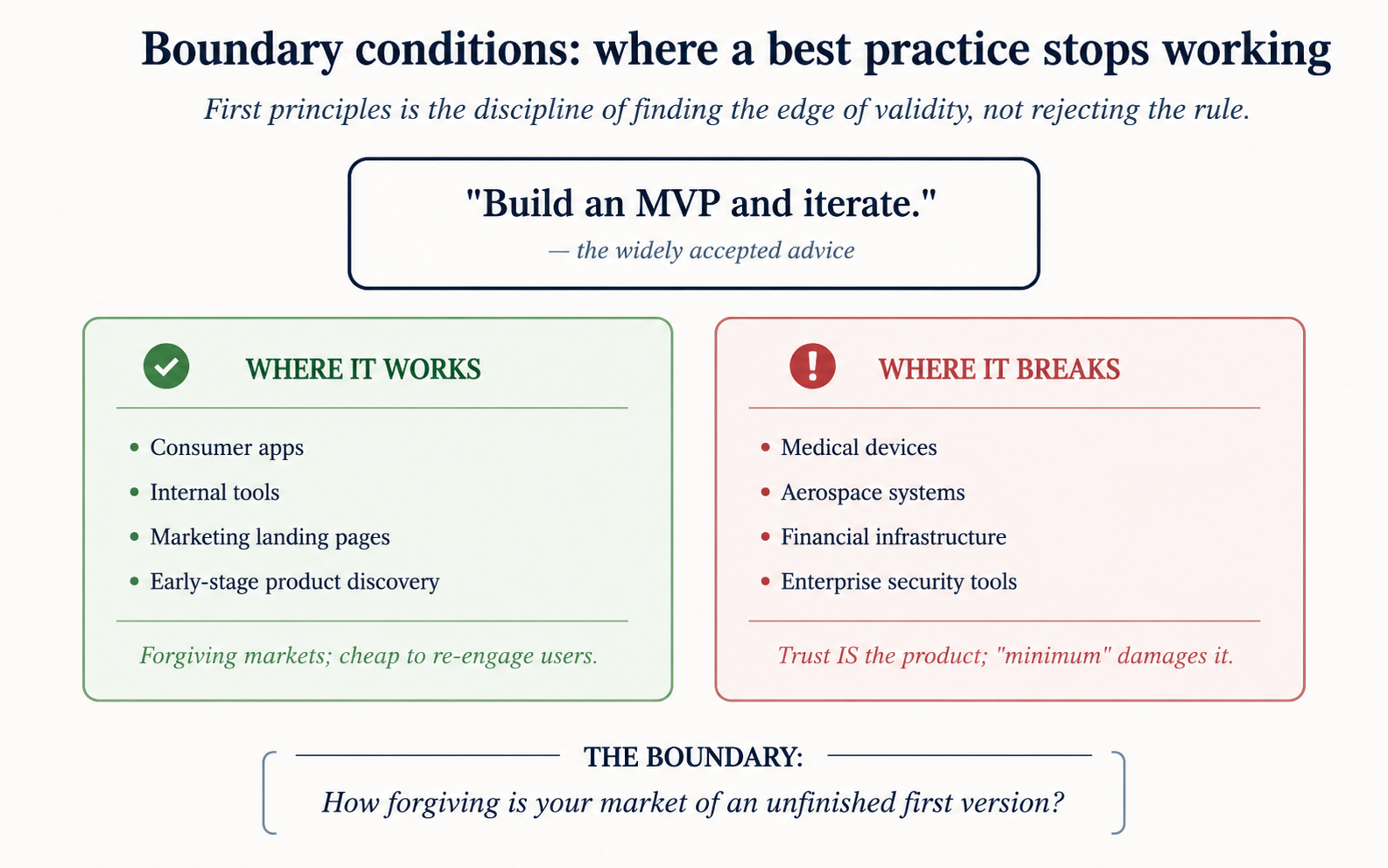

Discipline 5: First Principles

You run a vertical SaaS company. Three competitors just raised prices 12% inside one quarter. Your board, two of your three investors, and your head of finance all push the same line: match the move, capture the margin, ride the wave. Your CEO friend at another company tells you the same thing over coffee. You open Claude and ask. The summary agrees. Five days into the conversation, every signal you've gotten points the same way.

That convergence is the failure mode. The consensus is doing its job (it's pulling you toward the obvious answer) and the obvious answer is right for someone else's company in someone else's market. AI is the last and loudest voice in the chorus because it averages over everyone who has ever written about pricing strategy. It cannot tell you where the chorus stops applying to your situation.

Here's the fix. Pick the consensus you're being told to follow. Write three rows. Each row names a specific condition under which the consensus stops working, traced to a mechanism with a named threshold (a number, a count, a state). If you can't get to three rows with thresholds, you have been following the consensus without understanding it.

| The consensus you're examining | Where it stops working, with a named threshold |
|---|---|

The "named threshold" requirement is the discipline. A row with a threshold ("when team size is below ~20 engineers, microservices coordination cost exceeds the deploy-isolation benefit") is a boundary condition. A row without one ("microservices are sometimes wrong") is a gripe. Gripes don't change decisions; thresholds do.
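The gripe-versus-boundary distinction can get a rough first pass by scanning a row for the hedge phrases that usually mark a gripe. A minimal Python sketch, assuming a hypothetical helper `looks_like_gripe` and a short, non-exhaustive phrase list. It only flags likely gripes. It cannot confirm that a row actually contains a threshold, since a threshold may be a number, a count, or a state.

```python
# Phrases that tend to mark a gripe; the list is illustrative, not exhaustive.
HEDGE_PHRASES = ("sometimes", "it depends", "don't always")


def looks_like_gripe(row: str) -> bool:
    """Return True when the row leans on a hedge phrase
    instead of stating a condition."""
    text = row.lower()
    return any(phrase in text for phrase in HEDGE_PHRASES)


rows = [
    "When team size is below ~20 engineers, microservices coordination "
    "cost exceeds the deploy-isolation benefit",
    "Microservices are sometimes wrong",
]
for row in rows:
    label = "gripe?" if looks_like_gripe(row) else "candidate boundary"
    print(f"{label}: {row}")
```

A row that passes this check still needs a human read. The check catches the easy misses so the revision time goes into naming the threshold.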

Every consensus has boundaries. The exercise walks those boundaries on purpose, before a bad decision finds them for you.

Here's what this looks like in real life.

The SaaS founder above didn't open his laptop and write three perfect rows. His first draft of the second boundary read: "Sometimes the competitive set is a bad reason to raise." That's a gripe, not a boundary. He went back, traced what was actually different about his situation, and rewrote it as: "When competitors are reacting to a cost shock we've hedged against (we locked a multi-year infrastructure contract last year, so our unit economics didn't move), matching their hike telegraphs weakness we don't have." The rewrite has a named threshold (the existence of the hedge), names a mechanism (signaling), and points at a decision the consensus doesn't see.

Three rows after about 90 minutes of revision:

| Consensus: "Always match competitor price hikes." |
|---|
| Boundary 1. When retention (not acquisition) is the binding constraint, every percentage point of churn from a hike costs more in lifetime value than the increase recovers, especially when switching costs are dropping (a named threshold: new data-portability rules that drop switching cost below the prior decade's norm). |
| Boundary 2. When competitor moves are reactive to a cost shock you've hedged against (you locked a multi-year infrastructure contract last year, so your unit economics didn't move), matching their hike telegraphs weakness you don't have. The named threshold is the existence of the hedge. |
| Boundary 3. When the competitive set is consolidating, holding price is a positioning move that pulls accounts off competitors' renewal lists, and acquisition cost on those accounts is near zero because they're already evaluating alternatives. The named threshold is the competitor-renewal window (~90 days out). |

He brought the three boundaries to the board. They held price. Six months later net revenue retention was up four points and he had taken three accounts off competitors' renewal lists at zero acquisition cost. None of the three boundaries appeared in the consensus brief. None appeared in AI's first summary either.

The same founder, the same hour, without the named-threshold discipline:

| Consensus: "Always match competitor price hikes." | Why it fails |
|---|---|
| Sometimes you shouldn't raise because customers will leave. | No threshold. "Sometimes" is a gripe; the boundary it points at could trigger at 1% churn or at 30%. Indistinguishable from the consensus. |
| Competitors don't always know what they're doing. | A complaint about competitors, not a boundary on the practice. Doesn't change any decision. |
| It depends on the situation. | Not a row. Restating "context matters" doesn't tell you where the context matters. |

Same person, same hour, same situation. The difference isn't intelligence. It's whether you required a named threshold or accepted "it depends."

Try it yourself

You're the COO of a 35-person services firm. A key role has been open for five months. Your CEO keeps quoting "hire slow, fire fast" (an old founder-playbook line meaning: take your time on hires, but cut quickly when a hire isn't working). Two strong-on-paper candidates are about to take other offers this week. The whole leadership team accepts the practice as obviously correct. Your job: walk the boundary of "hire slow, fire fast" before Friday's leadership meeting. Write three rows.

(If hiring isn't where the work is for you, swap the consensus but keep the move: pick any widely-accepted best practice in your domain that someone keeps quoting at you, and find the boundary. The discipline is the same.)

One note before the form. The feedback below was tuned for a frontier model (Claude Sonnet 4.5+, Opus 4.7, GPT-5, Gemini 2.5 Pro). Smaller models tend to handwave on the threshold check.

Bold rule for this exercise: if your third row will not come, the consensus you picked is one you've been following without understanding. Switch to a different consensus rather than padding the third row. That is itself a finding.

Here's what the AI will check:

- Does each row name a threshold (a number, a count, a state, or a specific condition)? Rate 1-10. Quote the weakest row's threshold (or the row that lacks one).

- Is each row principle-based (a mechanism that holds across companies in the same situation) or example-based (a story about one company dressed up as a rule)? Rate 1-10. Flag any row that's a gripe rather than a boundary.

Don't rewrite my rows. Don't grade me on personality. If a row is empty or vague, say so plainly in one line.

The consensus practice I'm examining (one sentence):

Boundary row 1 (specific condition + named threshold + mechanism):

Boundary row 2:

Boundary row 3:

Discuss with an AI. Question your scores.

Come back when you have your BEST evaluation.

Plan on 15-25 minutes the first time. Slower than D1's lock because thresholds are hard. The most useful thing you can do with the AI's feedback is find one row where you wrote "sometimes" or "it depends" and rewrite that row with a specific threshold. That rewrite is where the discipline lives. If you can't rewrite it, the row probably isn't a boundary at all and you should drop or replace it.

What you just did finds the boundary of a single practice. What it doesn't do is help you collaborate with AI on a problem where there's no obvious consensus to challenge. That's Discipline 6.

Want a strong sample to compare against? (Open after you submit your own.)

A reader running the "hire slow, fire fast" scenario wrote this. It isn't the only good answer; it shows what thresholds look like in a different domain.

| Consensus: "Hire slow, fire fast." |
|---|
| Boundary 1. In small founder-led teams (named threshold: under ~40 people), "slow" hiring quietly turns into "no" hiring because the founder is the bottleneck on every loop. The mechanism: every additional interview round adds founder calendar contention that competes with shipping. The company never reaches the scale at which "fast firing" becomes the corrective lever the practice promises. |
| Boundary 2. When the role has been open longer than two replacement cycles (named threshold: 4+ months), the cost of further "slow" is no longer protective; it's protective theater. The mechanism: opportunity cost on the missing person's deliverables compounds, and the team takes on debt that fast-firing later cannot recover. Slowness past 4 months is hiring inaction wearing the costume of hiring discipline. |
| Boundary 3. In high-trust services markets (named threshold: when client tenure averages 24+ months), "fast firing" tears the trust relationships the client bought into. The mechanism: clients chose the firm partly to work with the people in it; rotating senior staff fast destroys the implicit asset. The cost of the wrong hire is real, but the cost of a fast fire is sometimes higher. |

What makes this work: every row names a threshold (40 people, 4 months, 24-month client tenure). Each boundary points at a mechanism that holds for any company in the same shape, not just this one. The third boundary is the strongest because it inverts the practice rather than qualifying it.

What it doesn't try to do: be brilliant. The mechanisms are just plausible. The discipline is in the thresholds, not the prose.

If you want the research backing

Walking the boundary of a consensus is older than the AI version by a long way.

- Gigerenzer, G., Todd, P. M., & the ABC Research Group (1999). Simple Heuristics That Make Us Smart. The closest direct ancestor. Gigerenzer's ecological rationality frames every heuristic (including "best practice") as a tool whose accuracy depends on the environment it's applied in. The job of the practitioner is to know the environment well enough to know where the heuristic stops being ecologically valid. The named-threshold requirement in this exercise is operational ecological rationality.

- Klein, G. (1998). Sources of Power. Klein's Recognition-Primed Decision model shows how experts pattern-match to the first plausible script and run with it. The Defend the Opposite move is a deliberate interruption of that pattern-match: by forcing yourself to write the conditions where the script fails, you make the boundary visible before the pattern-match runs.

- Popper, K. (1959 English / 1934 German). The Logic of Scientific Discovery. Popper's falsifiability was originally a demarcation criterion for science (separating science from non-science), not a meaning theory. The relevant move here is narrower: a claim is operationally useful only if you can state the conditions under which you'd abandon it. The named-threshold column makes the boundary stateable.

No single trial has tested the Defend the Opposite move for AI specifically. The mechanism (require the boundary before accepting the practice) is well-studied; applying it to AI's averaged-over-training-data answers is the obvious extension, not a separately validated finding.

Go deeper: Part 0 Chapter 4: Reasoning from First Principles. The full version (the Blank Page Sprint: write 500 words against a practice you have been following, then run a structured AI counter-analysis and a peer review, 60 min) lives in Part 0. This page teaches the row shape. That page teaches the longform argument.

Discipline 6: Working WITH AI

You spent the morning iterating with Claude on the strategy memo. The output is polished. The framing is tight. The numbers line up. Then your CEO reads it over your shoulder and asks, "Why did you land here and not on the other option?" You open your mouth and realize you can't actually separate your judgment from the model's. Some of those sentences are yours. Some are the model's. Most are a blur. The memo is good. You just don't know which parts of it you can defend.

Here's the fix. On a real memo worth a board meeting, run the same task three ways under timed constraints. Then read all three side by side.

- Solo. 45 minutes, no AI. Just you and the problem.

- AI-only. 20 minutes. You prompt, AI answers, you accept the first response with no edits.

- Collaborative. 30 minutes. You prompt, evaluate, push back, override, iterate. AI as sparring partner, not oracle.

Rate each draft on four axes: depth, breadth, originality, time-to-value. The collaborative version usually wins, but the win is only useful if you can point to the specific overrides that made it win. That's the Three-Path Comparison. Locked because the comparison is the diagnostic, not the drafts themselves.

For high-stakes work, the full 95-minute comparison (45 + 20 + 30) is the discipline. For the exercise below, a 10-minute on-ramp version (3-min Solo, 2-min AI-only, 5-min Collaborative, on a short email) teaches the felt difference fast enough to do today.

The comparison is how you see where your judgment is irreplaceable. Without the side-by-side, you cannot tell whether you collaborated or surrendered.

Here's what this looks like in real life.

A medical-practice owner ran a 14-provider primary-care group and had to write a two-page memo to her partners proposing a shift to a value-based-care contract with the largest regional payer. Three-year revenue implications. A culture component. An operational lift that touched every clinician. She decided to test her collaboration posture before sending it, so she ran the same task three ways.

Solo, 45 minutes. She drafted a careful memo grounded in the operational risks she knew best. It was specific, defensive, and honest. It also buried the strongest financial point on page two, and it never addressed the partner who had openly opposed every payer-mix shift for the last two years. She knew the gap. She did not have the time to close it before the meeting.

AI-only, 20 minutes. She handed the model the brief and accepted the first response with no edits. The draft was polished and structurally clean. It opened with a generic "value-based care benefits" framing, identical to the one that three competing practices had used the previous quarter. It named no partner. It cited no risk specific to her market. It read like an industry brochure.

Collaborative, 30 minutes. She wrote the structural argument herself, named the three load-bearing financial assumptions, and asked the model to surface the strongest counter-argument from the perspective of the opposing partner. The model proposed an objection she had not anticipated, and she rewrote the memo to address it head-on. She also asked the model for an executive summary; the model's version softened the ask, so she rewrote that paragraph because the ask was the load-bearing piece. The memo landed. Two of the three partners flipped. The opposing partner filed a written objection that the memo had already addressed on page one. The collaborative draft won, and it won because her overrides on the financial assumptions and on the partner-specific counter-argument carried the load, not the model's prose.

The same person who never compared three paths writes only the Collaborative version:

| What they lose | Why it fails |
|---|---|
| Cannot name where their judgment was load-bearing. | Without the Solo and AI-only baselines, every sentence feels equally theirs. The CEO's "why did you land here?" question has no answer. |
| Cannot show the Collaborative draft is actually better. | "It feels better" is not a defense. The 4-axis side-by-side is the evidence. Without it, the team treats the polished draft as the answer. |
| Cannot catch where they were sliding into oracle-mode. | Surrender looks identical to collaboration from the inside. The AI-only draft is the diagnostic: if it is uncomfortably close to your Collaborative draft, you over-accepted. |

Same person, same hour. The difference isn't smarts. It's whether you ran the comparison or just felt the comparison.

Try it yourself

You are a VP of Strategy at a 400-person SaaS company. Your CEO has asked you for a one-page memo to the executive team recommending whether to acquire a smaller competitor that just lost its largest customer. The memo lands in the board pre-read. Your recommendation will be quoted back to you for the next three years.

What is the recommendation, and how do you know?

(If acquisition strategy isn't your work, swap the surface but keep the shape: a one-page memo, a real decision on your desk this week, stakes that travel with you. The closer to a real deliverable, the sharper the comparison.)

For the on-ramp version, pick the next email or short memo (under 200 words) you would have drafted with AI today. Solo it (3 min), AI-only it (2 min), then collaborate on it (5 min). Lay all three side by side. The point is not the email. The point is the felt difference.

One note before the form. The feedback below was tuned for a frontier model (Claude Sonnet 4.5+, Opus 4.7, GPT-5, Gemini 2.5 Pro). Smaller models tend to flatter the Collaborative draft regardless of input quality.

Here's what the AI will check:

- Do your three path summaries actually describe three different drafts, or three rephrasings of the same draft? Rate 1-10. Quote one sentence from each summary that decides. If the Solo and Collaborative summaries are near-identical, say so plainly.

- Are your load-bearing overrides specific enough that removing any one of them would visibly weaken the Collaborative draft? Rate 1-10. For each of the three overrides, name what the draft would look like without it. If any override is generic ("I added more detail"), say so plainly.

Don't rewrite my work. Don't flatter the human-edited version. If a field is empty or vague, say so plainly in one line.

Your three path summaries (one paragraph per path describing what you wrote, what surprised you, and where it fell short):

Your three load-bearing overrides (name 3 specific places in the Collaborative draft where your judgment was load-bearing, meaning the draft would have failed without that override):

Which of the three drafts would you actually send to the recipient, and why:

Discuss with an AI. Question your scores.

Come back when you have your BEST evaluation.

Plan on 15-20 minutes for the 10-minute on-ramp version including reflection. The 95-minute full version is what you run on real high-stakes work this week. The most useful thing you can do with the AI's feedback is find a place where it says the Solo draft was stronger on an axis. That is a signal about where your overrides did not carry load. If the AI cannot find one, push it harder; if it can, you just learned where your collaboration posture is still soft.

What you just did is the whole crash course in miniature. You formed a position before AI (D1), documented your verdicts on each claim (D2), scanned the outputs for fabrications (D3), traced the second-order effects of the recommendation (D4), tested where the consensus framing breaks (D5), and kept judgment with the human when the model wanted to drift into oracle mode (D6). The deliverable is never the answer. The deliverable is the documented evidence of thinking, and you now have six disciplines that produce that evidence on demand.

Want a strong sample to compare against? (Open after you submit your own.)

A reader running the same VP-of-Strategy acquisition scenario wrote this. It isn't the only good answer; it just shows the shape.

| Path | Their summary |
|---|---|
| Solo (45 min) | Recommended against acquisition. Strong on the customer-concentration risk (the target lost 38% of revenue overnight). Weak on the integration thesis: never named what the acquirer's product team would actually do with the engineering hires. Buried the recommendation on page one's last line. |
| AI-only (20 min) | Recommended a "structured acquisition with earn-out triggers." Polished. Contained two phrases ("strategic optionality," "tuck-in upside") that the CEO had publicly criticized in the last all-hands. Did not address that the target's remaining customers were geographically concentrated in a region the acquirer had no presence in. |
| Collaborative (30 min) | Recommended against acquisition but proposed a 60-day standstill offer (talent hire + IP license) that captured 70% of the strategic value at 15% of the cost. The standstill framing came from the model. The 60-day window and the IP-license carve-out were the user's overrides. The recommendation in the opening line was the user's. |

Three load-bearing overrides in the Collaborative draft:

- Rejected the model's "strategic optionality" framing. The model used the phrase three times. The user replaced every instance because it would have triggered the CEO's known objection in paragraph one. Without this, the memo would have been dead on arrival.

- Added the geographic-concentration point. The model never raised it. The user knew from the last QBR that the target's remaining customer base was 80% in a region the acquirer had no GTM in. This was the load-bearing reason the acquisition's revenue model collapsed. Without this, the recommendation would have been "no" on weak grounds.

- Overrode the model's earn-out structure. The model proposed a three-year earn-out tied to revenue. The user replaced it with the 60-day standstill because the firm's own M&A history showed earn-outs above 18 months had 80%+ founder-departure rates. Without this, the alternative proposal would have inherited the same risk it was trying to avoid.

What makes this work: each override traces to a specific piece of context the model did not have (CEO's language, last QBR's geographic data, the firm's own M&A track record). The user can point to each override and say, "Here is what I knew that the model did not, and here is what I did with it." That sentence is the test. If you cannot say it for at least three places in your Collaborative draft, you have not collaborated; you have edited.

What it doesn't try to do: be brilliant on its own. The standstill structure came from the model. The judgment about when to use it and how to bound it came from the user. That is the whole posture.

If you want the research backing

The collaboration posture is not new theory. It predates the LLM era by more than two decades.