Glossary: AI Terms for Beginners

You don't need a computer science degree to read this book. But you do need to speak the language. This glossary defines every important term you'll encounter, using plain English, real-life examples, and everyday analogies.

How to use this page: Start with the Top 30 Terms: these appear on almost every page of the book. Then use the full glossary as a reference. Terms are grouped by topic, with book-specific vocabulary first. Use Ctrl+F (or Cmd+F on Mac) to search for any term.

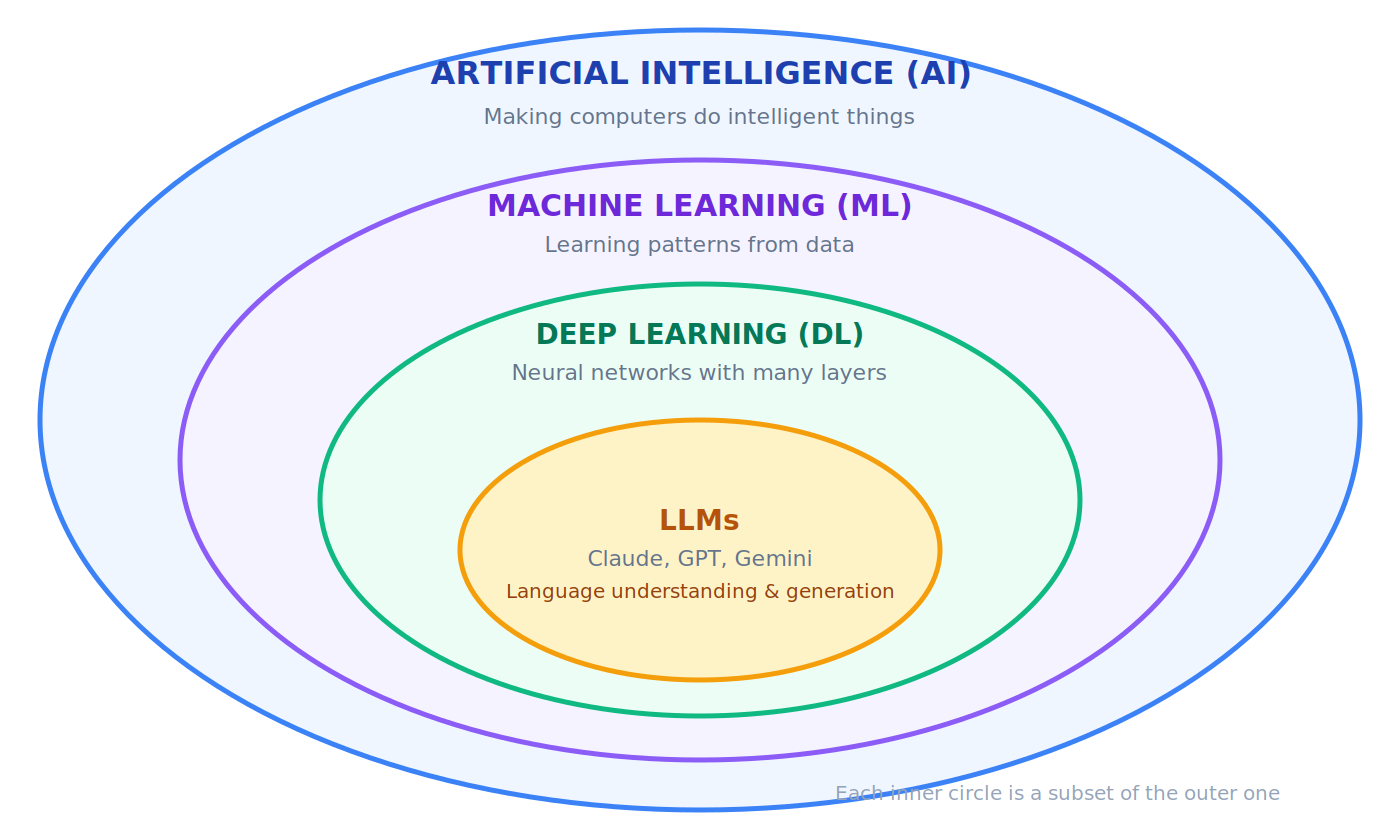

The AI Landscape at a Glance

Before diving into individual terms, here's how the major concepts relate to each other:

Top 30 Terms You Must Know First

These appear on almost every page. Read these before you open Chapter 1.

Note: terms related to agents as buyers (ACP, AP2, x402, MPP, authority envelopes, signed mandates) are covered in Section 11 and not included in Top 30 terms.

1. AI (Artificial Intelligence): Making computers do things that normally require human intelligence.

🔹 When your phone's keyboard predicts the next word you're typing, that's AI.

2. LLM (Large Language Model): A giant AI system trained on billions of pages of text, capable of understanding and generating human language and code. Claude, GPT, and Gemini are LLMs.

💡 Think of an LLM as a research assistant who has read every book in the world's largest library. You ask a question, they answer from everything they've read.

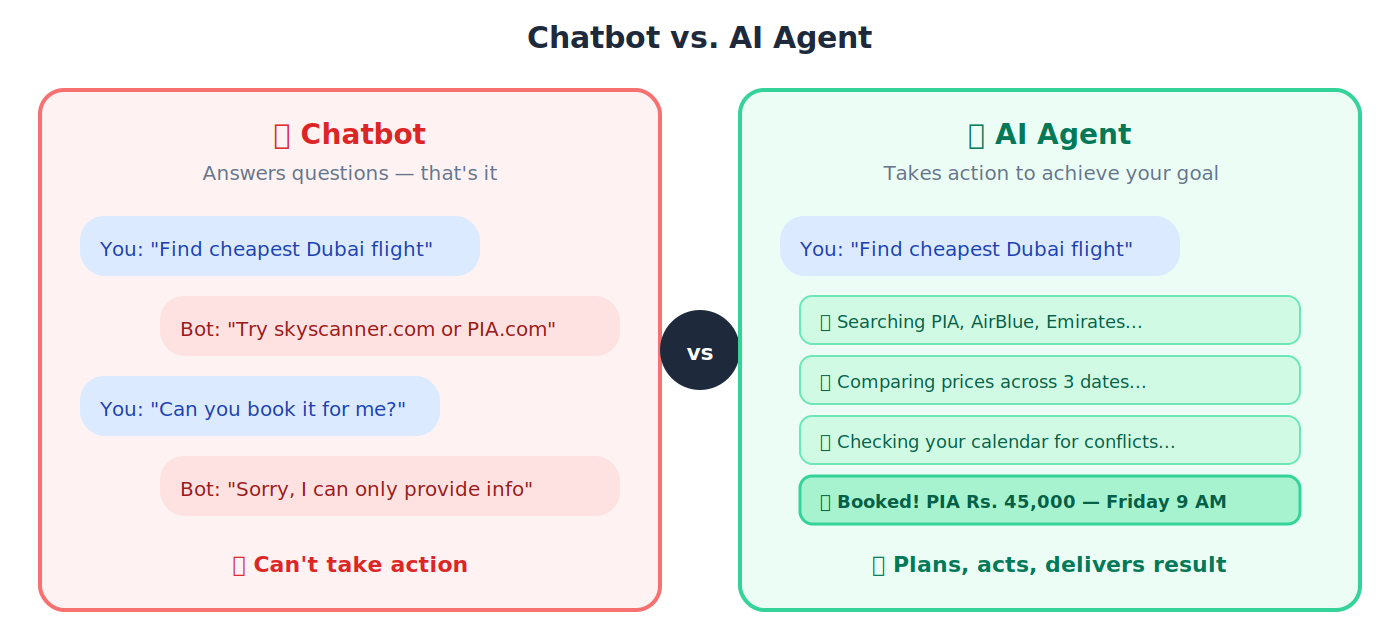

3. Agent (AI Agent): An AI that doesn't just answer questions. It takes action, makes plans, and gets things done on its own.

🔹 A chatbot answers "What's the cheapest flight to Dubai?" An agent actually searches airlines, compares prices, and books the ticket for you.

4. Agentic AI: The category of AI focused on building agents that plan, reason, and act autonomously. This is the frontier of AI in 2026 and the focus of this entire book.

🔹 Regular AI: you ask a question, you get an answer. Agentic AI: you give it a goal ("reduce customer churn by 15%") and it researches, plans, executes, and reports back, making decisions along the way.

5. Digital FTE (Digital Full-Time Equivalent): An "AI employee" that does the continuous work of a full-time human worker, 24/7, at a fraction of the cost. Also called an AI Worker in the thesis — same role, different register.

🔹 A Digital FTE for customer support handles 500 conversations per day, every day — doing the work of 5-10 human agents.

6. Agent Factory: The central concept of this book. The spec-driven, human-supervised, Claude-Code-powered process by which AI Workers are designed, manufactured, and deployed. Not a product you buy; a practice you adopt. The Agent Factory builds the AI-Native Company, and the AI-Native Company employs Digital FTEs.

💡 Like an assembly line: each station performs one specialized task, parts move through in order, and what emerges at the end is a finished product built to spec. The Agent Factory industrializes the making of AI employees.

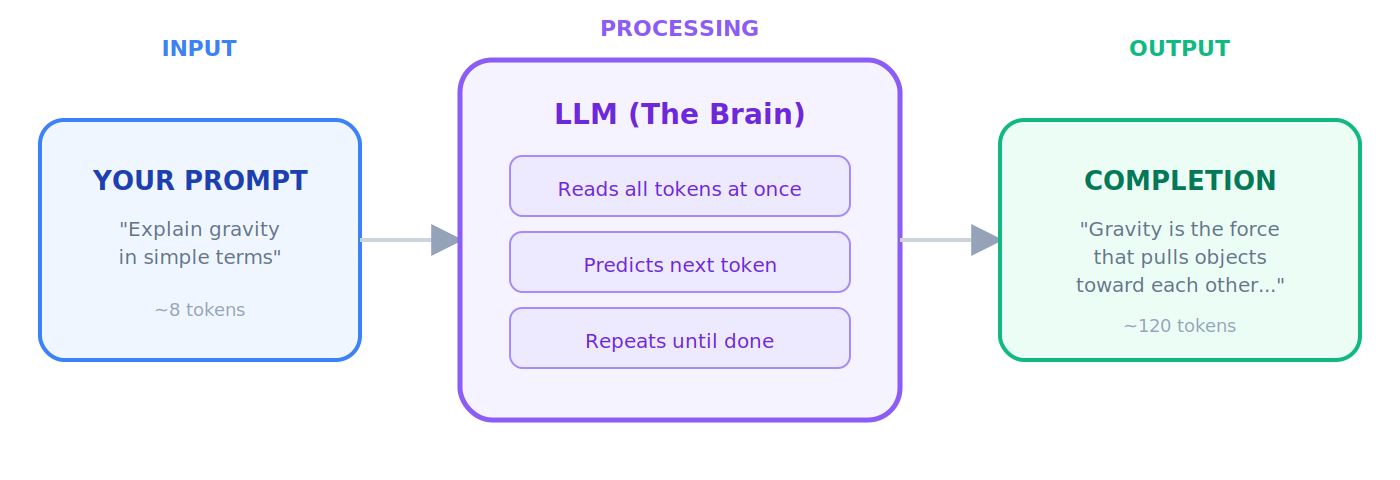

7. Prompt: The instruction or question you type into an AI model.

🔹 "Summarize this report in three bullet points" is a prompt. Better prompts = better answers.

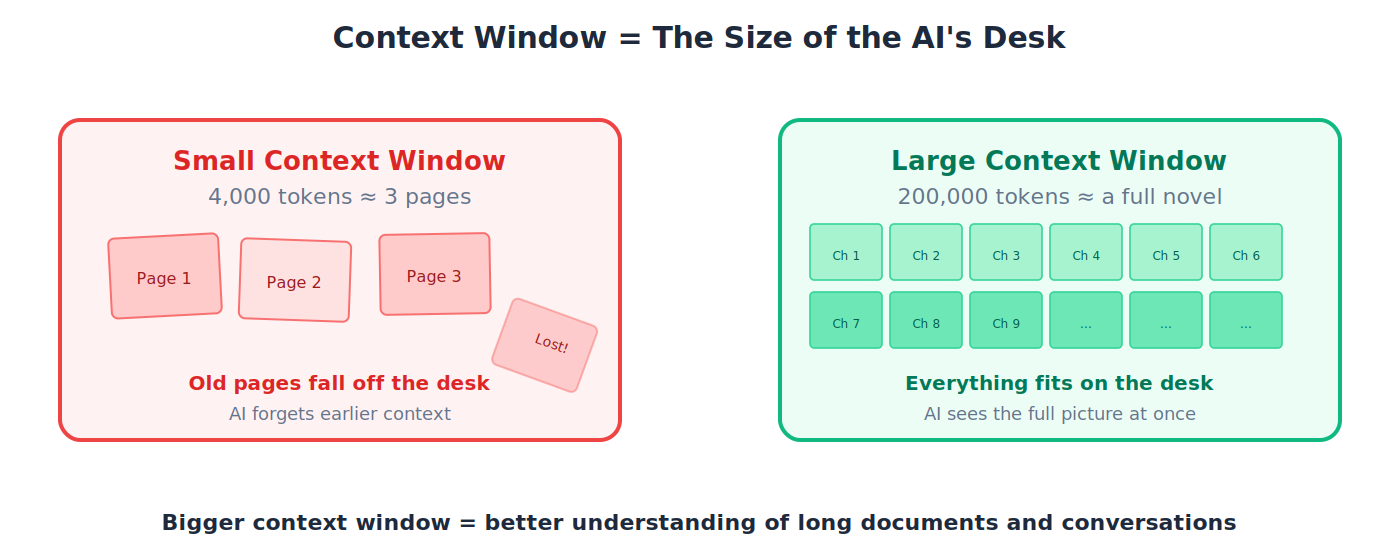

8. Context Window: The AI's "working memory": how much text it can read and think about at one time.

💡 A small context window is like a tiny desk where you can only spread out a few pages. Claude's large context window is like a huge conference table where you can lay out an entire novel at once.

9. Token: The basic unit of text an LLM reads. Roughly ¾ of a word. "I love biryani" ≈ 4 tokens.

🔹 You pay per token when using AI APIs. A full page of text ≈ 500-700 tokens.
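To make the ¾-of-a-word rule concrete, here is a minimal Python sketch of the arithmetic. Treat it as a rough estimate only: real tokenizers split text differently for each model, the function names are invented for this illustration, and the price below is a made-up placeholder, not any provider's actual rate.

```python
def estimate_tokens(text: str) -> int:
    """Rough estimate using the rule of thumb: 1 token is about 3/4 of a word."""
    word_count = len(text.split())
    return round(word_count / 0.75)


def estimate_cost(tokens: int, price_per_million_tokens: float) -> float:
    """Cost of `tokens` at a given price per one million tokens."""
    return tokens * price_per_million_tokens / 1_000_000


print(estimate_tokens("I love biryani"))  # 3 words / 0.75 = 4 tokens

# Hypothetical price of 3.00 per million tokens (an assumption for illustration).
print(estimate_cost(600, 3.00))
```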

10. Hallucination: When AI confidently generates something that isn't true.

🔹 You ask about a Supreme Court case and the AI invents a fake judgment with fake citation numbers, and presents it as fact. It sounds right, but it's fabricated.

11. Spec (Specification): A detailed blueprint describing exactly what you want built: goals, inputs, outputs, constraints.

💡 An architect's blueprint for a house. No builder starts by guessing. They follow the plan. In AI development, the spec is that plan.

12. Spec-Driven Development (SDD): Write the blueprint first, then let AI generate the code, tests, and documentation from that blueprint.

🔹 You write: "Build an API for a bookstore with endpoints for listing, adding, searching, and deleting books." Claude Code generates the entire application.

13. Claude Code: Anthropic's AI coding agent. You talk to it in the terminal and it reads your entire codebase, understands your project, and writes code.

🔹 You type "Add user authentication to my app": Claude Code reads your existing code, generates the auth module, writes tests, and integrates everything.

14. Cowork: Anthropic's desktop agent for non-coding knowledge tasks: documents, research, file management.

🔹 "Organize my Downloads folder by project and summarize all PDFs from this month." Cowork does it while you focus on other things.

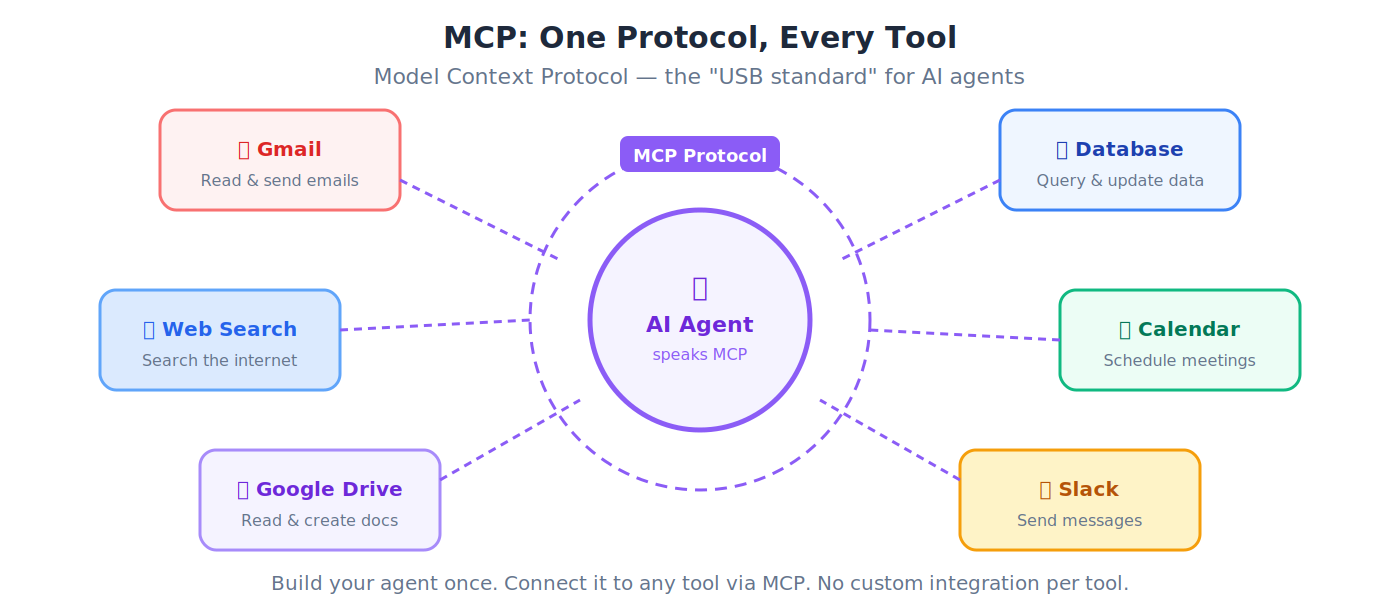

15. MCP (Model Context Protocol): The universal standard that lets any AI agent connect to any external tool: databases, email, calendars, file systems. MCP is the protocol for agents calling tools. For the separate protocol family that handles agents paying for those tools, see Section 11: ACP, AP2, x402, and MPP.

💡 Before USB, every phone had a different charger. MCP is the "USB standard" for AI: one protocol that lets any agent plug into any tool.

16. API (Application Programming Interface): Rules that let different software programs talk to each other. APIs are how agents interact with the outside world.

💡 A restaurant menu is an API. You (client) look at the menu (docs), place an order (request), and the kitchen (server) delivers your food (response).

17. SDK (Software Development Kit): A pre-built toolkit for building applications on a specific platform.

💡 An SDK is like a LEGO set: pre-made pieces with instructions so you can build things quickly, instead of carving every piece from scratch.

18. Python: The most popular programming language in AI. Readable, versatile, and the primary language in this book.

🔹 Python reads almost like English: if age > 18: print("Adult"). This readability is why the AI world chose Python.

19. Git: A system that records every change to your code: who changed what, when, and why. You can always go back to any previous version.

💡 "Track Changes" in Microsoft Word, but for entire software projects. Every edit is recoverable.

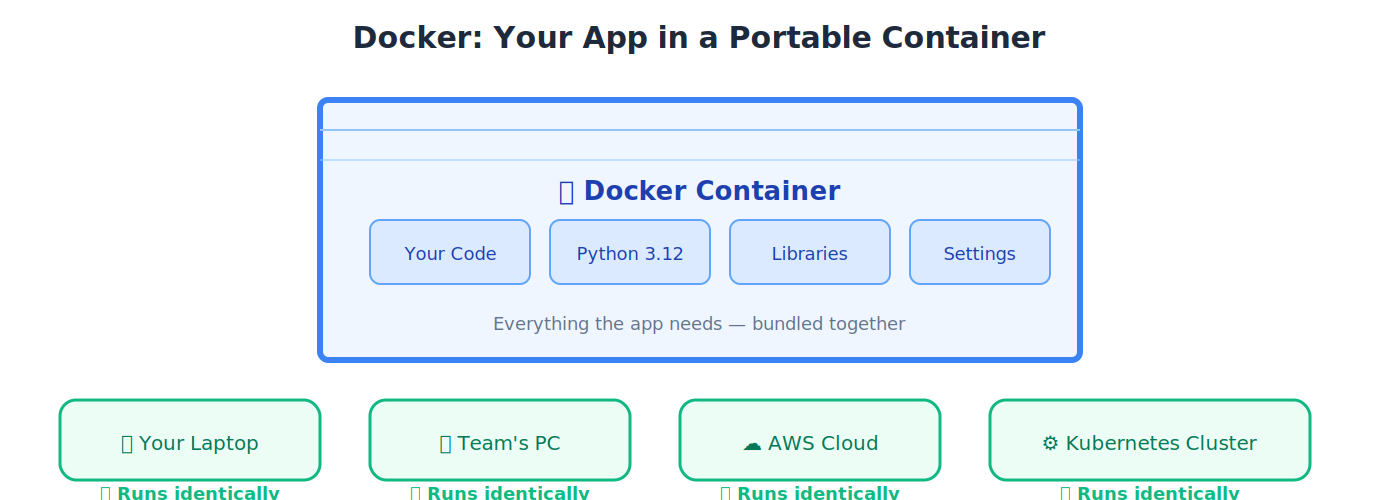

20. Docker: A tool that packages your app into a portable box (container) that runs identically anywhere: your laptop, a colleague's machine, or a cloud server.

💡 A shipping container. Whether it's on a truck in Karachi or a ship in the ocean, the contents inside are identical and self-contained.

21. Context Engineering: Designing the full information environment an agent receives. The #1 skill that separates a $2,000/month agent from one nobody wants.

💡 A Toyota factory has quality controls ensuring every car meets spec. Context engineering is quality control for your AI agents: ensuring consistent, reliable output.

22. Tool Use: An agent's ability to use external tools (searching the web, querying databases, sending emails) rather than just answering from memory.

🔹 You ask "What's the weather in Karachi?": an agent with tool use actually checks a weather service and gives live data. Without tool use, it would just guess.

23. Guardrails: Safety constraints that prevent an agent from doing things it shouldn't.

🔹 A financial agent has a guardrail: no transactions above Rs. 5,000,000 without human approval. Like the barriers on a motorway that keep cars from going off the road.
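In code, a guardrail like the one above can be as small as a single check that runs before the agent is allowed to act. The sketch below is one possible shape, not a standard API: the function name, the return strings, and the idea of passing approval as a flag are all assumptions made for illustration.

```python
# Limit taken from the example above: Rs. 5,000,000.
APPROVAL_LIMIT_RS = 5_000_000


def check_transaction(amount_rs: int, human_approved: bool = False) -> str:
    """Block any transaction above the limit unless a human has approved it."""
    if amount_rs > APPROVAL_LIMIT_RS and not human_approved:
        return "blocked: needs human approval"
    return "allowed"


print(check_transaction(250_000))                          # allowed
print(check_transaction(7_500_000))                        # blocked: needs human approval
print(check_transaction(7_500_000, human_approved=True))   # allowed
```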

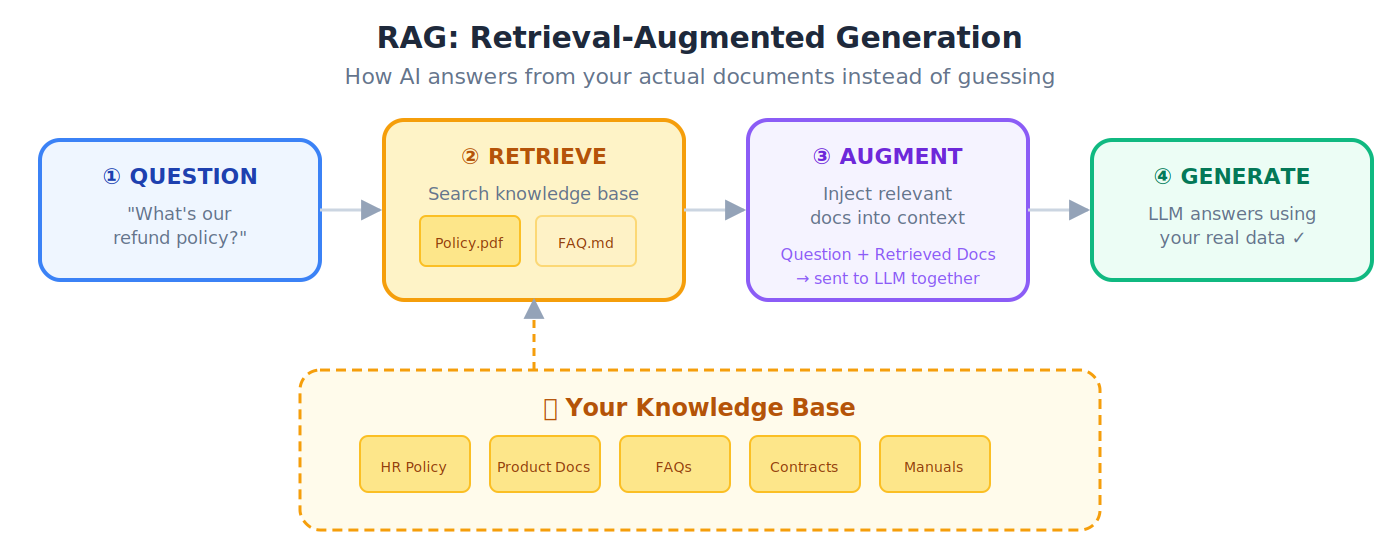

24. RAG (Retrieval-Augmented Generation): Giving AI access to external documents so it answers from facts, not from (potentially wrong) memory.

💡 Taking an open-book exam instead of a closed-book exam. The AI looks up facts in your documents before answering: much more accurate.

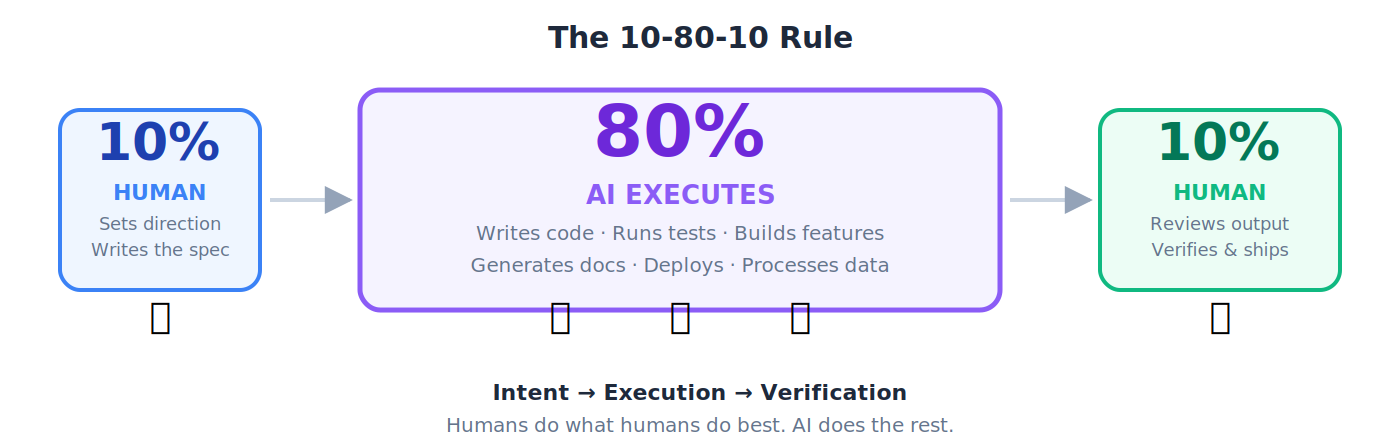

25. 10-80-10 Rule: The operating rhythm of the AI workforce: human sets direction (10%) → AI executes (80%) → human verifies (10%).

🔹 You write a project brief (10%), Claude Code builds the entire application (80%), you review, test, and approve (10%).

26. AGENTS.md / CLAUDE.md: Configuration files that tell your AI agent the rules of your project: coding standards, preferences, architectural decisions.

💡 The onboarding document you give a new employee: "Here's how we work. Here's our style. Here's what we never do." Loaded into every interaction.

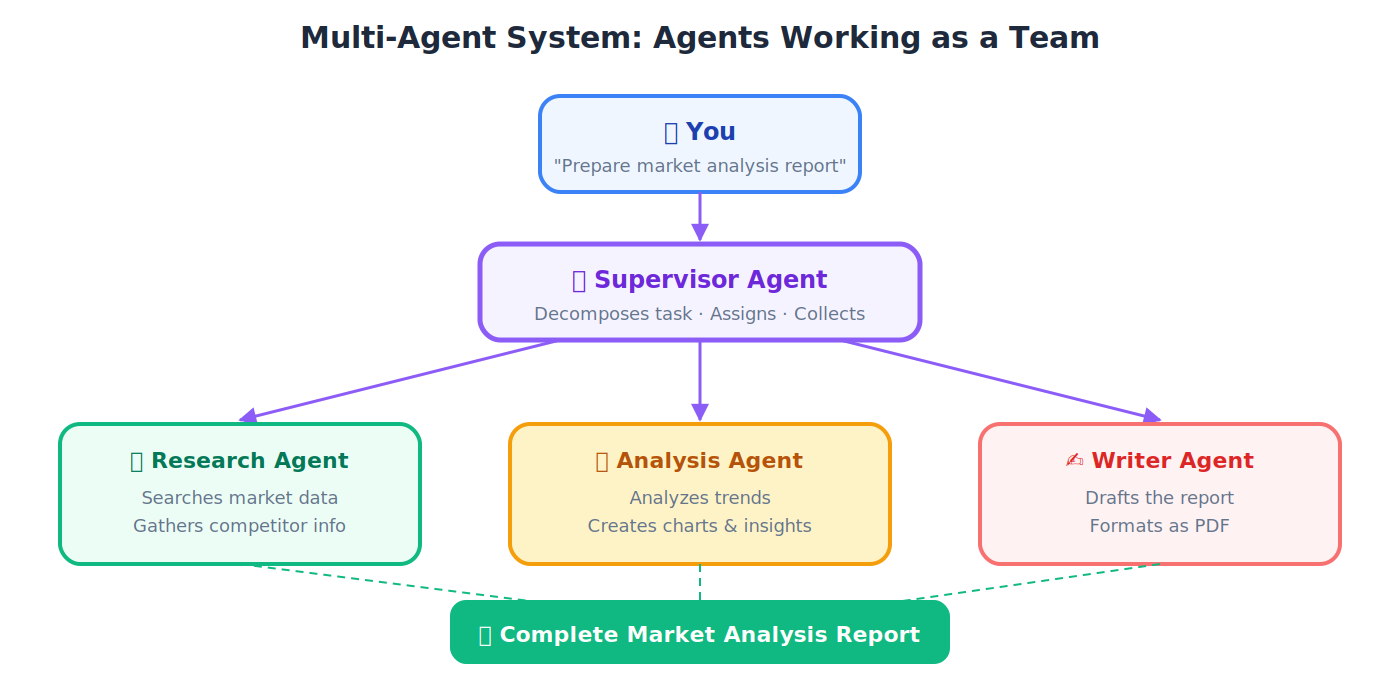

27. Orchestration: Coordinating multiple agents to work together on a task.

💡 A cricket team captain positions fielders, sets bowling rotations, and adjusts strategy. They don't do everything themselves; they coordinate specialists toward a shared goal.

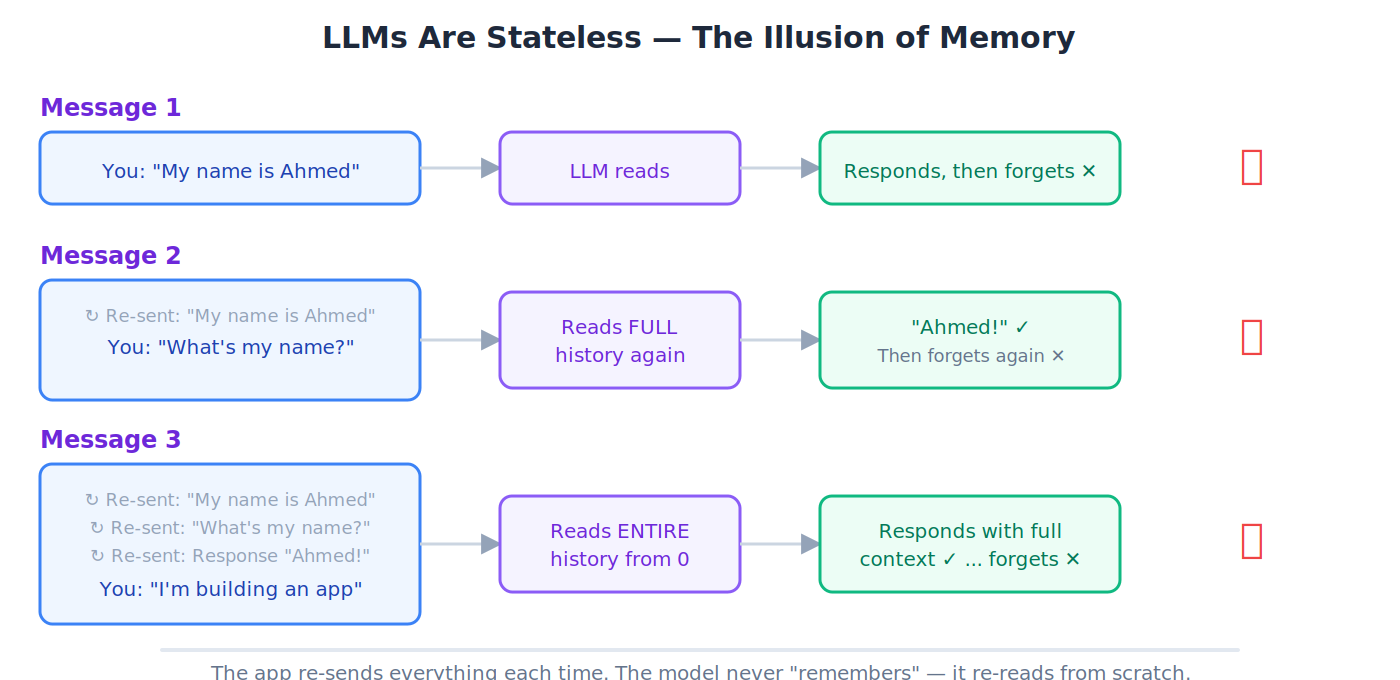

28. Stateless: The AI forgets everything between conversations. Every new chat starts from absolute zero.

💡 A shopkeeper with amnesia: every time you walk in, they greet you as a stranger, even if you were there 5 minutes ago. Chat apps create the illusion of memory by re-sending the full conversation each time.
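The "illusion of memory" can be shown in a few lines of Python. This is a toy sketch: fake_model is a hypothetical stand-in for a real LLM call (it only looks for a sentence starting with "My name is"), and ChatSession is an invented name for what a chat app does behind the scenes.

```python
def fake_model(messages):
    """Stand-in for an LLM call. It can only use what is inside `messages`."""
    prefix = "My name is "
    for message in messages:
        if message["role"] == "user" and message["content"].startswith(prefix):
            name = message["content"][len(prefix):].rstrip(".")
            return f"Your name is {name}."
    return "I don't know your name."


class ChatSession:
    """A chat app: it keeps the history and re-sends all of it on every turn."""

    def __init__(self):
        self.history = []

    def send(self, text):
        self.history.append({"role": "user", "content": text})
        reply = fake_model(self.history)  # the full conversation goes in each time
        self.history.append({"role": "assistant", "content": reply})
        return reply


# Without history, the model starts from zero.
print(fake_model([{"role": "user", "content": "What is my name?"}]))

# With the history re-sent, it appears to remember.
chat = ChatSession()
chat.send("My name is Ayesha.")
print(chat.send("What is my name?"))
```

The model itself never changes between calls. The only thing that grows is the list of messages the app sends, which is also why long chats use more tokens.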

29. Deployment: Making your application live and available to real users on the internet.

🔹 Your app works on your laptop. Deployment puts it on a cloud server so 10,000 people can use it simultaneously.

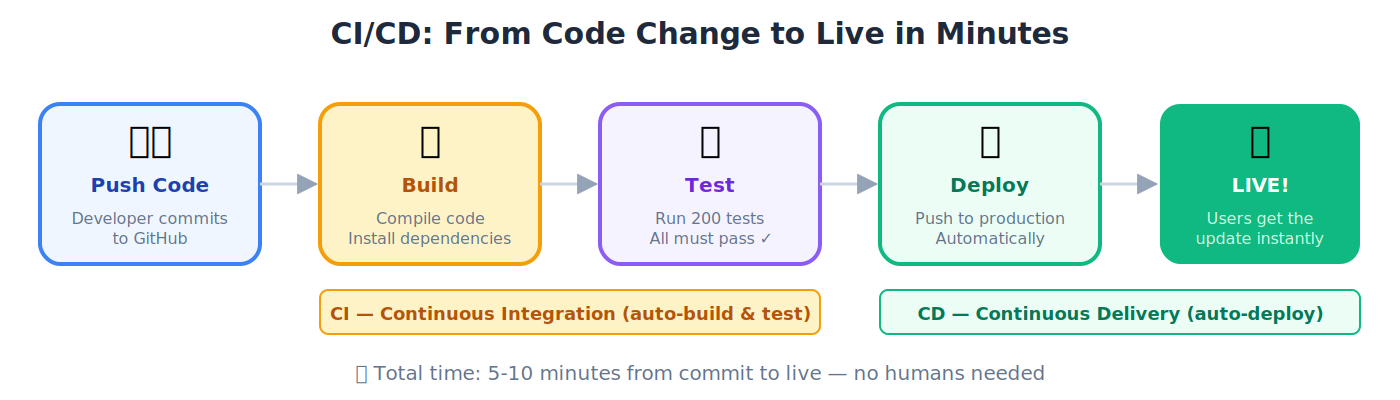

30. CI/CD (Continuous Integration / Continuous Delivery): Automatically testing and deploying code every time a developer makes a change.

🔹 A developer pushes code at 2 PM. Tests run automatically in 3 minutes. All pass. The new version is live by 2:10 PM: zero manual steps.

Architecture: The Runtime Stack

These terms name the components of the AI-Native Company that the Agent Factory produces. They appear throughout the architecture chapters and the thesis. Read them once here; you'll meet them again in every build.

💡 How the pieces fit together: The Agent Factory (the process) builds the AI-Native Company (the output). Inside that company, humans set direction from the Edge Layer, and Digital FTEs execute in the AI Workforce Layer. Paperclip manages the workforce. Each Digital FTE runs on a runtime engine of its choice. Triggers wake the system from the outside world.

AI Worker

The workforce of the AI-Native Company. Role-based agents that get hired, assigned, rostered, and retired. Same concept as Digital FTE and Digital Worker: the thesis uses AI Worker, the book uses Digital FTE. Pick whichever term fits your audience.

📌 Workforce vs. staff (load-bearing distinction): Only AI Workers are workforce. The delegate (OpenClaw) and the manager (Paperclip) are permanent staff, not workforce. Runtime engines are not staff at all; they are the skills the workforce runs on. When the thesis says agent, it means anyone in the building (staff or workforce). When it says AI Worker, it means the workforce specifically.

🔹 Example: A resume-screening AI Worker reads 200 resumes per day, scores them against a job spec, and hands the top 10 to a human recruiter. It's a Digital FTE inside the AI-Native Company's HR workforce — hired by Paperclip, rosterable, retirable.

AI-Native Company

The output of the Agent Factory. The running enterprise: a firm staffed by AI Workers (Digital FTEs), coordinated by a management plane, and directed by humans at the edge. The AI-Native Company is what you end up running. The book also calls this the Agentic Enterprise — same concept, business-facing name.

💡 Analogy: The Agent Factory is the process, like a method of building skyscrapers. The AI-Native Company is the skyscraper that method produces — the thing you actually run.

📌 The triad: Agent Factory (process) → AI-Native Company (output) → AI Workers (workforce inside the output). Three terms, three different roles. Not interchangeable.

Two-Layer Model

The architectural pattern that makes the Agent Factory thesis complete: humans set intent from the Edge Layer, AI Workers execute in the AI Workforce Layer, and specs are the contract language between them.

🔹 Example: A CEO tells their OpenClaw delegate (Edge Layer) to "run the weekly customer-churn report." The delegate hands the task to a Digital FTE in the AI Workforce Layer. The Digital FTE pulls the data, generates the report, and hands it back through the delegate to the CEO for verification.

Principal

The human at the apex of the runtime stack: the one who sets intent, defines the budget, draws the authority envelope, and owns the outcome. Invariant 1 of the thesis. Every legitimate chain of action originates with a principal; a system that acts without one is not autonomous, it is unowned (no liability, no alignment target, no budget owner, no judge of outcome).

🔹 Example: A CFO writes a spec: "Reduce accounts receivable aging by 20% within a $30K budget, without changing payment terms." That spec carries intent, budget, and constraints: the principal layer in concrete form. The delegate (OpenClaw) reads it and brokers work to the workforce; the principal returns to verify the outcome.

📌 What can replace it: nothing. Reference implementations of every other layer can change; the principal layer is non-transferable.

Edge Layer

The layer of the AI-Native Company that serves the individual human. Each human has one agent at the Edge — a personal identic agent (such as OpenClaw) that knows their context, speaks on their behalf, and delegates work downstream.

💡 Analogy: The Edge Layer is the chief-of-staff floor. One agent per executive, representing them across the company.

AI Workforce Layer

The layer of the AI-Native Company that serves the enterprise. This is where AI Workers (Digital FTEs) live and execute — managed by Paperclip, running on runtime engines, coordinating through specs.

💡 Analogy: The AI Workforce Layer is the production floor. Many Digital FTEs, each doing specialized work, all coordinated by the management plane.

Delegate

The personal agent at the Edge Layer that holds the principal's context, represents their judgment, carries their authority envelope, and brokers all downstream work on their behalf. Invariant 2 of the thesis. Without a delegate, the human bottleneck returns and scale collapses to typing speed. OpenClaw is the reference implementation; any MCP-speaking personal agent that holds identity, context, and authority qualifies.

💡 Analogy: A CEO's chief of staff. One per executive, knows their priorities, speaks for them, routes work to the right specialists.

See also: OpenClaw (as Delegate) below for the reference implementation, and Identic AI in Section 1 for the human-sovereignty framing.

OpenClaw (as Delegate)

OpenClaw is the reference implementation of the delegate at the Edge Layer — the "chief of staff" agent that represents the human, knows their context, and speaks on their behalf. Every human in an AI-Native Company needs a delegate; OpenClaw is how we build one.

🔹 Example: When you ask OpenClaw "summarize my week and draft three priorities for Monday," it pulls from your calendar, email, and Slack (tools it's authorized to access), synthesizes an answer in your voice, and waits for you to approve before acting on anything. It is you, at machine speed.

See also: the OpenClaw entry earlier in the glossary for the framework itself.

Manager (Management Plane)

The orchestrator that turns a pile of AI Workers into a workforce: assigns work, enforces budgets, approves risky moves, audits execution, keeps the ledger, and exposes hiring as a callable API. Invariant 3 of the thesis. Without it, agents collide, budgets leak, and no one can answer what the workforce cost or produced. Paperclip is the reference implementation; any orchestrator meeting the management contract qualifies.

💡 Analogy: If the delegate is the chief of staff, the manager is the chief operating officer. The delegate is one-to-one with the human; the manager is one-to-many with the workforce.

See also: Paperclip below for the reference implementation.

Paperclip

The management plane of the AI-Native Company. Paperclip is the COO — it hires Digital FTEs, assigns them work, enforces their budgets, approves risky moves, and keeps the ledger. It exposes hiring as an API that any authorized agent can call, which is how the workforce grows on demand.

💡 Analogy: If OpenClaw is the chief of staff, Paperclip is the chief operating officer. OpenClaw is one-to-one with the human; Paperclip is one-to-many with the workforce.

🔹 Example: A customer writes in Bahasa Indonesia. No Digital FTE on the roster speaks it. Paperclip detects the capability gap and, within its authority envelope, calls its own hiring API to manufacture a new Bahasa-speaking Digital FTE. The new worker reads the message and replies. No human was woken.

Meta-Layer (Hiring as a Callable Capability)

The layer that exposes hiring as an API any authorized agent can call to provision a new AI Worker at runtime, inside the principal's authority envelope, without waking a human. Invariant 5 of the thesis. Solves the frozen-roster problem: when a capability gap appears (a customer writes in a language no current Worker speaks), the workforce staffs up on demand under policy. Claude Managed Agents is the reference implementation; any managed-agent API that can generate an agent and provision its environment at runtime qualifies.

🔹 Example: The Bahasa Indonesia trace under Paperclip above is the meta-layer firing. Paperclip detects the gap; the meta-layer's hiring API manufactures the new Worker; the Worker is registered with the manager and stays on the roster.

📌 Dual role: Claude Managed Agents serves as both an engine option (Invariant 4) and the meta-layer (Invariant 5). The same runtime-provisioning capability that runs a Worker also creates new ones, which is why the meta-layer is callable rather than batch-provisioned.
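The Bahasa Indonesia trace can be sketched as a toy Python model. Everything here is hypothetical and invented for illustration: the Manager class, the route method, and the hiring_budget field (a simple stand-in for the authority envelope). It is not the API of Paperclip or Claude Managed Agents; it only shows the logic of "detect the gap, hire under policy, otherwise escalate."

```python
from dataclasses import dataclass, field


@dataclass
class Manager:
    # Maps a language to the worker that handles it.
    roster: dict[str, str] = field(default_factory=dict)
    # How many new workers the authority envelope still allows (assumed policy).
    hiring_budget: int = 1

    def route(self, language: str) -> str:
        """Return the worker for a language, hiring one if policy allows."""
        if language not in self.roster:
            if self.hiring_budget <= 0:
                return "escalate to human"  # outside the envelope: wake a person
            self.hiring_budget -= 1
            self.roster[language] = f"{language}-support-worker"  # the "hire"
        return self.roster[language]


manager = Manager(roster={"english": "english-support-worker"})
print(manager.route("bahasa"))  # gap detected, new worker hired
print(manager.route("bahasa"))  # the worker stays on the roster
print(manager.route("urdu"))    # budget used up, so a human is asked
```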

Runtime Engine

The execution substrate a Digital FTE runs on. Each Digital FTE picks its own engine based on what the job demands — not one engine per company. Options include Dapr Agents (durable execution for mission-critical work), Claude Managed Agents (hosted and operated for you), OpenAI Agents SDK (self-hosted, portable), and OpenClaw-native (lightweight, fast to deploy). Internally, every engine has two planes: a harness (control plane) and a compute plane (execution plane / sandbox). See the next two entries.

💡 Analogy: The runtime engine is the skillset the employee brings to the job. A nurse on a heart-surgery team needs different skills than a nurse at a clinic. Same role, different engine.

Harness (Agent Harness)

The control plane of an agent engine: everything around the model that turns it into a working system. Includes the agent loop, tool dispatch, approvals, tracing, context management, recovery, instructions, skills, and validators. Captured in the practitioner shorthand Agent = Model + Harness: the model is the brain you rent from a frontier lab, and the harness is the body, workplace, and standard operating procedure built around it. The compute plane (sandbox) sits next to the harness, not inside it. Credentials stay in the harness while model-generated code runs in the sandbox.

💡 Analogy: If the model is the CPU and the context window is the RAM, the harness is the operating system: it boots, dispatches drivers (tools), curates context, and manages the agent's lifecycle. Your agent code is the application running on top.

🔹 Examples: Claude Agent SDK is a harness you assemble. OpenClaw is a harness you extend through skills. Claude Code, Cursor, and Codex are harnesses tuned for coding work. Claude Managed Agents is a harness Anthropic runs for you behind stable interfaces.

📌 Lineage: The word evolved from test harness (software engineering scaffolding that drives code under test) to eval harness (lm-eval-harness, the scaffolding that drives a model through a benchmark) to agent harness (the scaffolding that drives a model through real-world work). All three are scaffolding around something that does the actual work.
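The shorthand Agent = Model + Harness is easier to see in code. Below is a minimal sketch of the agent loop and tool dispatch, assuming a toy model: toy_model, TOOLS, and run_agent are hypothetical names, and the "model" is a hard-coded function rather than a real LLM. Real harnesses add approvals, tracing, context management, and recovery on top of this loop.

```python
def toy_model(messages):
    """Stand-in for the model: asks for a tool once, then gives an answer."""
    last = messages[-1]
    if last["role"] == "user":
        return {"tool": "get_weather", "args": {"city": "Karachi"}}
    return {"answer": f"It is {last['content']} in Karachi."}


# Tools the harness is willing to run. The weather value is fake.
TOOLS = {"get_weather": lambda city: "sunny"}


def run_agent(model, tools, user_prompt, max_steps=5):
    """The harness: loop, dispatch tool calls, feed results back to the model."""
    messages = [{"role": "user", "content": user_prompt}]
    for _ in range(max_steps):
        decision = model(messages)
        if "answer" in decision:
            return decision["answer"]
        tool = tools[decision["tool"]]          # tool dispatch
        result = tool(**decision["args"])
        messages.append({"role": "tool", "content": result})
    raise RuntimeError("agent did not finish within max_steps")


print(run_agent(toy_model, TOOLS, "What's the weather in Karachi?"))
```

Notice that the model only decides; every action happens in the harness. That separation is what lets the harness enforce limits such as max_steps.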

Compute Plane / Sandbox Runtime

The execution plane that sits next to the harness: the secure sandbox where model-generated code actually runs (reads files, executes commands, writes artifacts). Distinct from cloud infrastructure (the metal, Kubernetes, networking) below it and from the harness (the orchestration logic) beside it. The split is load-bearing for security and portability: credentials stay in the harness, model-directed code runs in the sandbox, and the sandbox vendor (E2B, Cloudflare, Daytona, Modal, Runloop, Vercel, Blaxel, or your own Kubernetes) can be swapped without rewriting the agent.

🔹 Example: OpenAI Agents SDK is the harness; you choose the compute plane separately. Claude Managed Agents fuses both behind one API. Dapr Agents assumes Kubernetes as its compute plane.

📌 Three things called "runtime": language runtime (Node.js, Python interpreter) is pure infrastructure. Execution runtime / sandbox is this entry. Agent runtime is sometimes used as a synonym for the harness itself. Watch for the conflation when reading vendor docs.

Trigger

The way the outside world calls the AI-Native Company into motion — a schedule comes due, a webhook arrives, an API call lands, a customer walks in. Claude Code Routines is the reference implementation: it turns each external event into a session that wakes the delegate and fires the chain. Without triggers, the system only moves when a human types a prompt — which isn't really a company, just an assistant with extra steps.

🔹 Example: Every Monday at 9 a.m., a scheduled trigger wakes OpenClaw, which asks Paperclip to run the weekly customer-health report. A Digital FTE pulls the data, generates the report, and emails the executive team. The human configured the trigger once; the system runs on its own from then on.
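The Monday 9 a.m. example boils down to a check that runs on a clock and starts a session when the schedule comes due. The sketch below assumes hypothetical names (is_due, on_tick, start_session) and a caller that invokes on_tick once per minute; a production system would rely on a real scheduler or webhook service rather than this hand-rolled check.

```python
from datetime import datetime


def is_due(now: datetime) -> bool:
    """True only at 9:00 on a Monday (weekday() returns 0 for Monday)."""
    return now.weekday() == 0 and now.hour == 9 and now.minute == 0


def on_tick(now: datetime, start_session):
    """Called on every clock tick. Starts a session only when the trigger is due."""
    if is_due(now):
        return start_session("run the weekly customer-health report")
    return None


def start_session(task: str) -> str:
    # Stand-in for waking the delegate and firing the chain.
    return f"started: {task}"


print(on_tick(datetime(2026, 1, 5, 9, 0), start_session))  # a Monday at 9:00
print(on_tick(datetime(2026, 1, 6, 9, 0), start_session))  # a Tuesday: nothing happens
```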

Summary

A short taxonomy to memorize:

The Agent Factory (process) builds the AI-Native Company (output). The AI-Native Company employs AI Workers (workforce), who operate across the Two-Layer Model: humans at the Edge Layer (via OpenClaw, the delegate), Digital FTEs in the AI Workforce Layer (managed by Paperclip), each running on a runtime engine of its choice, woken by triggers from the outside world.


You now know enough to start reading. The full glossary below goes deeper into each term and covers 250+ more.

1. The Agent Factory: Book-Specific Terms

These are the concepts and vocabulary unique to this book. You'll encounter them from Chapter 1 onward, so they come first.

Agent Factory

The process. The spec-driven, human-supervised, Claude-Code-powered method by which AI Workers are designed, manufactured, and deployed. Raw material is human intent; the finished product is a verified outcome. The Agent Factory builds the AI-Native Company, and the AI-Native Company employs AI Workers (Digital FTEs).

📌 Practice, not product. The Agent Factory is not something you buy or install. It is what you learn to operate. The book teaches the practice; the AI-Native Company is what you end up running once you operate it.

💡 Analogy: A car factory takes raw steel and produces finished cars. The Agent Factory takes your business intent ("I need a 24/7 customer support agent") and produces a finished, working Digital FTE.

Industrialized Stack

The thesis's three-layer framing of how value moves through the Agent Factory: Intent (the high-level blueprint of goals, constraints, budgets, and permissions) → Production Engine (the architecture that transforms intent into outcomes) → Outcome (high-fidelity actions and artifacts, verified for accuracy and improved through feedback loops).

🔹 Example: A CFO's directive ("reduce AR aging by 20% within $30K") is intent. The chain of OpenClaw → Paperclip → AI Workers running on engines is the Production Engine. The verified, ledger-updated reduction in days-sales-outstanding is the outcome.

Production Engine

The mechanism that transforms intent into outcome inside the Industrialized Stack. It is not an app you download but an architecture: spec-driven instructions feeding role-based AI Workers, packaged skills they bring to the job, MCP for connecting to tools, and feedback loops that close the quality gap over time. The thesis names it "the most important idea in this entire thesis."

💡 Analogy: A car factory's assembly line. Raw steel enters one end, a finished car rolls out the other. Each station does one specialized job, parts move in order, and the result is verified before delivery. The Production Engine works the same way: intent in, verified outcome out, AI Workers as the specialized stations.

Six Invariants

The structural rules that make the AI-Native Company runnable: (1) Principal: the human is the principal; (2) Delegate: every human needs a delegate; (3) Manager: the workforce needs a manager; (4) Engine: each Worker picks its own engine; (5) Meta: the workforce is expandable under policy; (6) Trigger: the world calls the system. Each is a rule about how the company runs; the named products that realize them today (OpenClaw, Paperclip, Claude Managed Agents, Inngest) can be swapped tomorrow without changing the architecture.

📌 See the thesis for the full claim, failure mode if absent, and current realization for each invariant.

Invariant vs. Reference Implementation

The thesis's framing trick. An invariant is a structural requirement that stays true across every version of the system, regardless of which specific product realizes it. A reference implementation is the concrete product used in 2026 to realize an invariant. The invariants are the thesis; the named products are this year's best fit. When a product is named (OpenClaw, Paperclip, Claude Managed Agents, Inngest), the invariant is the rule and the product is one instance.

💡 Analogy: "A house must have a way to enter and exit" is an invariant. "Mahogany double doors with brass handles" is a reference implementation. Replace the doors next year and the house still works; remove the entry-and-exit invariant and it stops being a house.

🔹 Example: Invariant 4 says "each AI Worker picks its own engine." The reference implementations in 2026 are Dapr Agents, Claude Managed Agents, OpenAI Agents SDK, and OpenClaw-native. Swap any of them next year and the invariant still holds.
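Programmers will recognize this split as an interface versus its implementations. Here is a minimal Python sketch, assuming a made-up Engine interface with a single run method. DurableEngine and HostedEngine are hypothetical stand-ins, not the real Dapr Agents or Claude Managed Agents APIs.

```python
from typing import Protocol


class Engine(Protocol):
    """The invariant: whatever a Worker runs on must be able to run a task."""

    def run(self, task: str) -> str: ...


class DurableEngine:
    """One possible implementation (hypothetical)."""

    def run(self, task: str) -> str:
        return f"[durable] finished: {task}"


class HostedEngine:
    """Another implementation that satisfies the same rule (hypothetical)."""

    def run(self, task: str) -> str:
        return f"[hosted] finished: {task}"


def do_work(engine: Engine, task: str) -> str:
    # This code depends only on the invariant, never on a specific product.
    return engine.run(task)


print(do_work(DurableEngine(), "screen resumes"))
print(do_work(HostedEngine(), "screen resumes"))
```

Swapping one engine for the other changes nothing in do_work, which is the point of separating the rule from the product.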

Digital FTE (Digital Full-Time Equivalent)

An "AI employee" that does the continuous work of a full-time human worker, 24/7, at a fraction of the cost. A Digital FTE works 168 hours a week with zero fatigue. Same role as the thesis's AI Worker (the workforce of the AI-Native Company): hired, assigned, rostered, retired. Distinct from the delegate (OpenClaw) and manager (Paperclip), which are permanent staff, not workforce. See the Architecture section for how Digital FTEs fit into the runtime stack.

🔹 Example: A Digital FTE for customer support handles 500 conversations per day, every day — doing the work of 5-10 human agents.

Digital Worker / AI Employee

Synonyms for Digital FTE. An AI agent performing sustained, role-based work within an organization: not a one-off chatbot, but a permanent team member.

Spec / Specification

A detailed written description of exactly what needs to be built: goals, constraints, inputs, expected outputs, and behavior. This is the "blueprint" the AI follows.

💡 Analogy: A spec is like an architect's blueprint. A builder doesn't start construction by guessing. They follow detailed plans. In AI development, the spec is the plan, and the AI is the builder.
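A spec is usually written in plain language, but it helps to see its parts as structured data. The sketch below is one possible way to check that a spec is complete before handing it to an agent. The Spec class and its field names are assumptions for illustration, not a format the book prescribes.

```python
from dataclasses import dataclass, field


@dataclass
class Spec:
    goal: str
    inputs: list[str] = field(default_factory=list)
    outputs: list[str] = field(default_factory=list)
    constraints: list[str] = field(default_factory=list)

    def missing_parts(self) -> list[str]:
        """Names of the sections that are still empty."""
        return [
            name
            for name in ("inputs", "outputs", "constraints")
            if not getattr(self, name)
        ]


draft = Spec(
    goal="Build an API for a bookstore",
    inputs=["title", "author", "ISBN", "price", "stock count"],
    outputs=["JSON responses"],
)
print(draft.missing_parts())  # the draft has no constraints yet
```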

Spec-Driven Development (SDD)

A development methodology where you write the detailed specification first, then let AI generate the code, tests, and documentation from that spec. The spec, not the code, is the source of truth.

📌 The four phases: Research → Specification → Refinement → Implementation.

🔹 Example: You want a REST API for a bookstore. Instead of coding, you write a spec: "The API must have endpoints for listing books, adding a book, searching by author, and deleting by ISBN. Each book has a title, author, ISBN, price, and stock count. All inputs must be validated. Return JSON." You hand this spec to Claude Code, and it generates the entire FastAPI application, tests, and documentation.

💡 Analogy: A spec is like an architect's blueprint. No construction company starts building by guessing what the house should look like. They follow detailed plans. In SDD, the spec is the plan, and the AI is the construction crew.

Test-Driven Generation (TDG)

The Python-specific form of SDD. You write tests first (defining what the code should do), then let Claude Code generate the code that passes those tests.

💡 Analogy: Before baking a cake, you write down exactly what a perfect cake looks like: height, texture, taste. Then you try a recipe. If the cake doesn't match your criteria, you try again. The criteria are the tests; the recipe is the generated code.
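Here is what tests-first looks like in a small Python sketch. The test is written by the human and defines "done"; the function underneath is the kind of code an agent might then generate to pass it. The function name search_by_author and the sample data are invented for this illustration.

```python
# Step 1: the human writes the test first. It describes the behavior wanted.
def test_search_by_author():
    books = [
        {"title": "Book A", "author": "Ismat Chughtai"},
        {"title": "Book B", "author": "Saadat Hasan Manto"},
    ]
    # Matching should ignore letter case and stray spaces.
    assert search_by_author(books, " ismat chughtai ") == [books[0]]
    # An unknown author returns an empty list, not an error.
    assert search_by_author(books, "Unknown") == []


# Step 2: code is generated until the test passes.
def search_by_author(books, author):
    wanted = author.strip().lower()
    return [book for book in books if book["author"].lower() == wanted]


# Step 3: run the test. No error means the criteria are met.
test_search_by_author()
print("all tests passed")
```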

10-80-10 Rule

The operating rhythm of the AI workforce: a human provides the first 10% (intent and direction), AI handles the middle 80% (execution), and the human returns for the final 10% (verification and judgment).

📌 Origin: Steve Jobs followed this pattern at Apple: set the vision (10%), let his team build (80%), return to polish and ship (10%). Now replace "team" with "AI employees."

AGENTS.md / CLAUDE.md

Configuration files that provide persistent context to an AI coding agent. They contain your project's rules, coding standards, architectural decisions, and preferences, loaded into every interaction.

💡 Analogy: When a new employee joins your team, you give them an onboarding document: "Here's how we work. Here's our coding style. Here's what we never do." AGENTS.md is that onboarding document for your AI agent.

SPEC.md

A specific file containing the detailed specification for a project. The single "source of truth" for what the software should do.

🔹 Example: Your SPEC.md might say: "Build a WhatsApp chatbot for a restaurant. It must show the menu, take orders, confirm delivery address, calculate total with GST, and send an order confirmation. Maximum response time: 2 seconds. Language: Urdu and English."

SKILL.md

A file that packages a reusable capability (skill) for an AI agent, containing instructions, best practices, and templates for a specific type of task (e.g., generating PDFs, deploying Docker containers).

🔹 Example: A Docker SKILL.md might contain: "When containerizing a FastAPI app, always use a multi-stage build. Base image: python:3.12-slim. Always include a health check endpoint. Never run as root." The agent reads this skill file and follows these practices automatically every time it does Docker work.

Skill Library

A collection of SKILL.md files that an AI agent can draw from, giving it expertise across many domains, like a reference library an employee can consult.

Agent Skills

The specific capabilities an AI agent has, defined by its tools, knowledge, and SKILL.md files.

🔹 Example: A human employee has skills like "Excel proficiency" or "contract negotiation." An AI agent has skills like "PDF generation," "database querying," or "email drafting."

Agent Triangle

A framework in this book describing the three components every effective agent needs: (1) a clear role, (2) specific tools, and (3) well-defined constraints. Miss any one, and the agent underperforms.

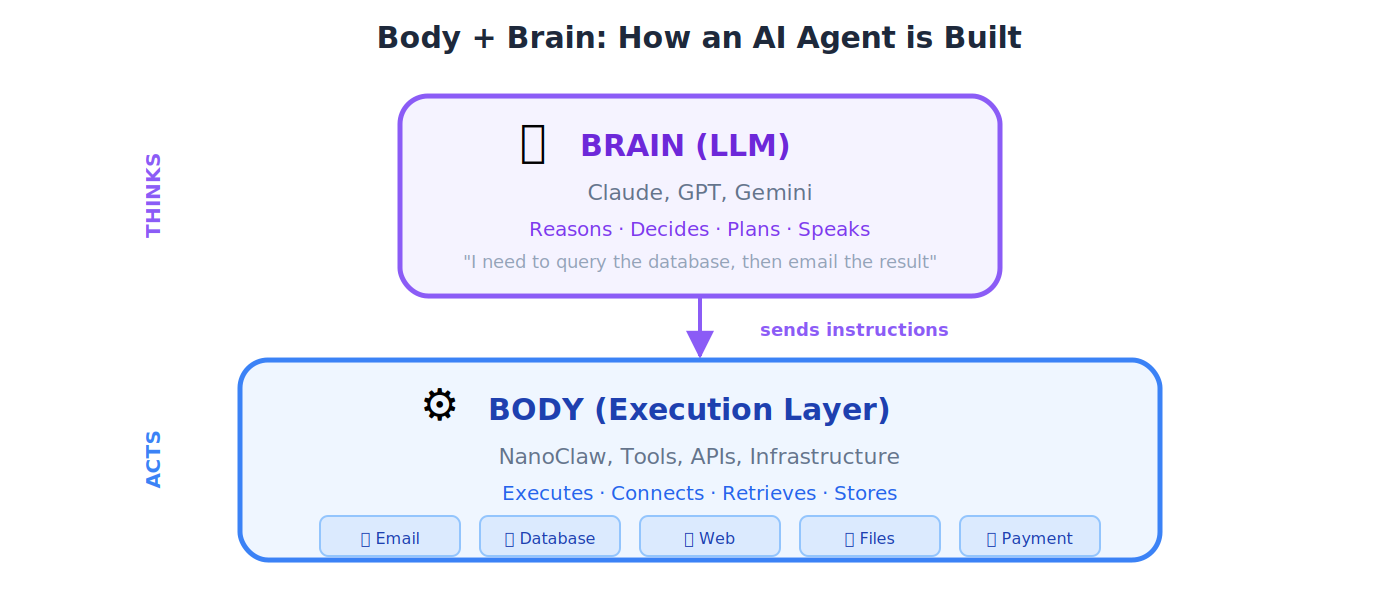

Body + Brain

An agent architecture pattern. The Brain is the LLM that reasons and makes decisions. The Body is the execution layer (tools, APIs, infrastructure) that carries out those decisions.

💡 Analogy: Your brain decides "I want to pick up that glass." Your hand (body) executes the action. In an AI agent, Claude (brain) decides "I need to query the database," and NanoClaw (body) executes the query.

NanoClaw

A lightweight container runtime that serves as the "Body" of an agent in the OpenClaw architecture, executing tasks, running tools, and managing the agent's environment.

💡 Analogy: If the LLM (Brain) is the pilot who decides where to fly, NanoClaw (Body) is the airplane that actually carries out the flight: engines, wings, controls, and all.

OpenClaw

An open-source application framework for building agent-powered applications. In the thesis architecture, OpenClaw is the delegate at the Edge Layer — the "chief of staff" agent that represents the human, knows their context, and speaks on their behalf. NanoClaw is its container-based execution layer.

TutorClaw

A 24/7 AI tutor delivered via WhatsApp, built on the Agent Factory architecture. TutorClaw reads from this book as its system of record, teaching from verified knowledge rather than probabilistic generation. It's the book's first Digital FTE, and a live example of how the Agent Factory produces AI Workers.

Claude Code

Anthropic's AI coding agent, run from the terminal (command line). It reads your entire codebase, understands your project context, and generates code based on your specifications. The primary development tool in this book.

Cowork

Anthropic's desktop agent for non-coding knowledge tasks: document management, research, and file organization. Think of it as your AI office assistant.

Dispatch

A feature that lets you assign work to Cowork from your phone. You send a task while commuting; Claude works on your desktop. When it finishes, you get a push notification.

💡 Analogy: Dispatch turns Cowork from a tool you sit next to into an employee you manage remotely, like texting your assistant "prepare the report" while you're in a meeting.

Computer Use

A research preview feature where Claude can see and control your screen on macOS (clicking buttons, typing in applications, navigating interfaces) like a remote employee using your computer.

🔹 Example: You tell Claude: "Open the spreadsheet on my desktop, update the Q3 revenue column with these numbers, then email it to the finance team." Claude sees your screen, opens Excel, types in the data, opens your email client, and sends it, just like a human assistant sitting at your computer.

Claude Desktop

The desktop application for interacting with Claude, which hosts Cowork, Computer Use, and Dispatch features.

Hooks

Automated actions that trigger before or after Claude Code performs certain operations, like automatic code formatting after every file save, or running tests before every commit.

💡 Analogy: Hooks are like standing instructions to an assistant: "Every time you finish writing a letter, run spell-check before showing it to me."

Subagents

Specialist agents that Claude Code can spawn to handle specific subtasks within a larger project, each with its own focused context.

💡 Analogy: A project manager (main agent) delegates the design work to a graphic designer (subagent) and the accounting to a bookkeeper (subagent). Each focuses on their specialty.

Tasks System

A built-in feature of Claude Code for managing persistent state across sessions, tracking what's been done, what's pending, and what's next in a multi-step project.

Context Engineering

The quality-control discipline for Digital FTE manufacturing: designing the full information environment an agent receives so that its output is consistent and high-quality. This is the #1 skill that separates a $2,000/month sellable agent from one nobody wants.

💡 Analogy: A Toyota factory has systematic quality controls ensuring every car meets specification. Context engineering ensures your Digital FTEs deliver consistent, sellable value.

Context Injection

Inserting relevant external information into the AI's context window right before it generates a response, giving it the right information at the right time.

💡 Analogy: Before a lawyer walks into court, their assistant hands them a folder with all the relevant case files. Context injection does the same for AI.

Context Isolation

Starting a fresh session with clean context instead of carrying over potentially confused or contradictory state from a long previous session.

💡 Analogy: When your desk gets so cluttered you can't think, you clear everything off and start fresh. Context isolation is the same for AI; sometimes a clean slate produces better results than a messy history.

Harness Engineering

The discipline of designing and continuously improving the environment around an AI agent so it can do useful work reliably without supervision. It sits as the third layer in a progression: prompt engineering optimizes one exchange, context engineering manages what the model sees at once, and harness engineering builds the execution environment the agent operates in across hundreds of decisions. The named practice was crystallized in early 2026 by Mitchell Hashimoto, who described his daily habit of engineering a permanent fix into the agent's environment every time the agent made a mistake. OpenAI and Anthropic published articles expanding on the idea within weeks. The slogan version: don't fix the agent, fix the world the agent lives in.

💡 Analogy: Prompt fixes are bandaids; harness fixes are vaccines. A prompt fix solves one instance of a failure. A harness fix (adding a tool, a validator, a skill, a check, an instruction) closes that class of failure forever, for every future agent that runs in the same harness.

🔹 Example: TutorClaw gives feedback that's too harsh for a beginner. The naive fix is to rewrite the prompt. The harness fix is to add a tone-check skill that gates output through a rubric. Every future TutorClaw answer goes through it, no prompt change needed.

📌 In OpenClaw: The unit of harness extension is the SKILL.md file. Every skill your students write is a harness engineering artifact, and the same Hashimoto loop (observe failure → ask why it was possible → engineer the permanent fix → verify it compounds) applies.

Progress Files

Files that track the state of a long-running project across multiple Claude Code sessions, documenting what's been completed, decisions made, and what's next.

💡 Analogy: A construction site logbook. Every day, the foreman records what was built, what problems arose, and what's planned for tomorrow. When a new crew arrives (new session), they read the log and continue seamlessly.
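A progress file can be as simple as a small JSON document that one session writes and the next session reads. The sketch below assumes a hypothetical file name (`progress.json`) and a made-up two-field layout (`completed`, `next`); real projects may use Markdown or any other format.

```python
import json
import tempfile
from pathlib import Path


def save_progress(path: Path, completed: list[str], next_step: str) -> None:
    """Write the session log so a later session can pick up where this one stopped."""
    path.write_text(json.dumps({"completed": completed, "next": next_step}, indent=2))


def load_progress(path: Path) -> dict:
    """Read the log at the start of a new session."""
    return json.loads(path.read_text())


with tempfile.TemporaryDirectory() as folder:
    progress_file = Path(folder) / "progress.json"  # hypothetical file name
    save_progress(progress_file, completed=["outline", "chapter 1"], next_step="chapter 2")
    resumed = load_progress(progress_file)

print(resumed["next"])  # chapter 2
```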

Session Architecture

Designing how you structure and sequence your interactions with an AI agent across multiple sessions for a large project, deciding when to start fresh, when to carry forward, and what context to preserve.

🔹 Example: For a 30-chapter book project, you don't dump the entire book into one session. You design an architecture: Session 1 covers the outline, Session 2 writes Chapter 1 (carrying forward the outline as context), Session 3 writes Chapter 2 (carrying forward the outline + Chapter 1 summary), and so on. Each session gets exactly the context it needs, no more, no less.

Five Powers

The five capabilities that enable the shift from traditional user interfaces to autonomous AI agents: (1) natural language understanding, (2) reasoning, (3) tool use, (4) memory, and (5) planning. Combined, they allow agents to understand intent and execute independently.

💡 Analogy: Think of a capable human assistant. They can (1) understand what you say, (2) think through problems, (3) use tools like phones and computers, (4) remember your preferences, and (5) plan multi-step projects. An AI agent with all Five Powers can do the same: that's what makes the shift from "software you operate" to "software that operates for you."

Agent Maturity Model

A five-level framework describing the stages of an organization's AI adoption:

| Level | Name | Description |
|---|---|---|
| 1 | Experimental | Individual developers trying AI coding tools |
| 2 | Standardized | Organization-wide adoption with governance |
| 3 | AI-Driven | Specs become living documentation; workflows redesigned |
| 4 | AI-Native | Products where AI/LLMs are core components |
| 5 | Autonomous | Entire organization AI-native; self-improving systems |

AI-Assisted Development

Using AI as a helper or copilot: code completion, bug detection, documentation generation. The human still writes most code.

🔹 Example: GitHub Copilot suggesting the next line of code as you type.

AI-Driven Development

AI generates significant code from human-written specifications. The human acts as architect, director, and reviewer, not typist.

🔹 Example: You write a SPEC.md describing a REST API, and Claude Code generates the entire FastAPI application, tests, and documentation.

AI-Native Development

Applications architected around AI capabilities from the ground up: AI isn't added as a feature; it's the core of the product.

🔹 Example: TutorClaw isn't a textbook with a chatbot bolted on. The AI tutor is the product. The entire architecture is built around the LLM's capabilities.

Nine Pillars of AIDD

Nine foundational principles of AI-Driven Development as defined in this book, covering everything from specification-first design to continuous verification.

OODA Loop (Observe, Orient, Decide, Act)

A rapid decision-making cycle applied to working with AI agents. You observe the agent's output, orient yourself by checking if it matches the spec, decide whether to accept or redirect, and act by either approving or giving new instructions.

📌 Origin: A military strategy framework developed by fighter pilot John Boyd, now applied to the fast iterative cycles of AI-driven work.

PRIMM-AI+

A pedagogical framework used in this book: Predict what the code will do → Run it → Investigate the output → Modify it → Make your own version. The "AI+" means AI is embedded in every step.

Identic AI

A concept where each human has a personal AI agent that reflects their judgment, preferences, and authority, delegating tasks across multiple AI systems on their behalf. In this book's reference architecture, OpenClaw is the identic AI: the delegate at the Edge Layer.

💡 Analogy: A CEO has an executive assistant who knows their priorities and decision-making style so well they can act on the CEO's behalf. Identic AI is the AI version: your personal representative in the Agent Factory.

System of Record / Source of Truth

The one authoritative data source that everyone trusts as accurate. When there are conflicting versions, the system of record is the final word.

🔹 Example: If your company's HR system says an employee's salary is Rs. 200,000 but a spreadsheet says Rs. 180,000, the HR system is the system of record.

Bounded Workflow

A workflow with clearly defined start points, end points, and constraints: the agent knows exactly what it can and cannot do. No ambiguity, no scope creep.

Escalation Protocol

A predefined rule for when an agent should stop and hand a task to a human because it's too complex, too risky, or outside the agent's authority.

🔹 Example: A customer service agent handles routine questions, but if a customer threatens legal action, the escalation protocol transfers the conversation to a human manager.
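An escalation protocol is ultimately a rule the agent checks before it acts. Here is a minimal sketch of such a rule; the trigger phrases, the refund limit, and the `Ticket` type are all hypothetical values chosen for illustration, not thresholds from this book.

```python
from dataclasses import dataclass

# Hypothetical trigger phrases and refund limit, chosen only for illustration.
ESCALATION_KEYWORDS = ("legal action", "lawyer")
MAX_REFUND_RS = 5_000


@dataclass
class Ticket:
    message: str
    refund_requested_rs: int = 0


def should_escalate(ticket: Ticket) -> bool:
    """Return True when the agent must hand the ticket to a human."""
    text = ticket.message.lower()
    too_risky = any(keyword in text for keyword in ESCALATION_KEYWORDS)
    outside_authority = ticket.refund_requested_rs > MAX_REFUND_RS
    return too_risky or outside_authority


routine = Ticket("Where is my order?")
threat = Ticket("I will take legal action if this is not fixed.")
print(should_escalate(routine))  # False
print(should_escalate(threat))   # True
```

Keeping the rule in plain code, outside the prompt, means it is applied the same way every time.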

Tool Interface

The defined contract for how an agent connects to and uses an external tool, specifying what inputs the tool expects and what outputs it returns.
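One way to picture a tool interface is as a pair of typed records: one for what goes in, one for what comes out. The sketch below is an assumption-laden illustration: `WeatherInput`, `WeatherOutput`, and `get_weather` are hypothetical, and the tool returns canned data rather than calling a real service.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WeatherInput:
    city: str


@dataclass(frozen=True)
class WeatherOutput:
    city: str
    temp_c: int
    condition: str


def get_weather(request: WeatherInput) -> WeatherOutput:
    """Hypothetical tool: returns canned data instead of calling a real service."""
    canned = {"Karachi": (35, "sunny")}
    temp_c, condition = canned.get(request.city, (0, "unknown"))
    return WeatherOutput(city=request.city, temp_c=temp_c, condition=condition)


print(get_weather(WeatherInput(city="Karachi")))
```

Because the inputs and outputs are spelled out, the agent (and the developer) can tell exactly what the tool expects and what it promises to return.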

Vertical Intelligence

Deep expertise in a specific industry's terminology, regulations, workflows, and pain points, packaged into an agent.

🔹 Example: An AI agent for Pakistani textile exporters that understands SRO notifications, HS codes, LC documentation, and SBP regulations, not just generic business knowledge.

Agentic Enterprise

An organization that has embedded AI agents into its core operations, with Digital FTEs alongside human employees as a standard way of working. In the thesis, this is called the AI-Native Company — the running enterprise the Agent Factory produces. The two terms refer to the same thing.

🔹 Example: A logistics company where AI agents handle order tracking, route optimization, and customer notifications 24/7, while human employees focus on partnerships, exception handling, and strategy. The agents aren't a side project; they're part of the org chart.

Custom-Built AI Employee

An AI agent you build from scratch for a specific business need, tailored exactly to your workflow and domain.

🔹 Example: A textile exporter builds an agent that reads incoming LC (Letter of Credit) documents, checks them against SBP regulations, flags discrepancies, and drafts amendment requests. No off-the-shelf tool does this; it's custom-built for their exact workflow.

Pre-Built AI Employee

An off-the-shelf AI agent you can use immediately without custom development, like using ChatGPT, Claude, or an existing customer service bot.

🔹 Example: Using Claude directly to draft emails, summarize documents, or answer questions. No development needed; you start immediately. The trade-off: it works for general tasks but isn't specialized for your unique business process.

Build vs. Buy

The strategic decision: build your own custom AI agent (more control, higher cost, takes longer) or use an existing one (faster deployment, less customization)?

🔹 Example: A hospital needs a patient scheduling agent. Buy: use an existing healthcare AI platform (deployed in weeks, but with limited customization). Build: create a custom agent integrated with their specific EMR system, doctor preferences, and Urdu/English support (takes months, but fits perfectly). The right choice depends on budget, timeline, and how unique the workflow is.

FTE Development Plugin

A tool or extension that aids in the development and deployment of Digital FTEs, streamlining the Agent Factory workflow.

Skill Shim

A thin adapter layer that translates between different agent skill formats, enabling compatibility across platforms.

💡 Analogy: A travel power adapter. Your Pakistani plug doesn't fit a UK socket, but a shim (adapter) makes them compatible without rewiring anything.

Gateway Proxy Pattern

An architectural pattern where a single entry point (gateway) routes requests to the correct backend agent or service, managing authentication, rate limiting, and load distribution.

💡 Analogy: The reception desk of a large hospital. All patients enter through reception, which checks their appointment, verifies their identity, and directs them to the right department.
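The reception-desk idea can be sketched as one function that checks identity first and routes second. Everything named here (`gateway`, `ROUTES`, the two backend agents, the demo API key) is hypothetical, and rate limiting and load distribution are left out to keep the example short.

```python
from typing import Callable


# Hypothetical backends; in a real system these would be separate agents or services.
def billing_agent(message: str) -> str:
    return f"billing handled: {message}"


def support_agent(message: str) -> str:
    return f"support handled: {message}"


ROUTES: dict[str, Callable[[str], str]] = {
    "billing": billing_agent,
    "support": support_agent,
}
VALID_API_KEYS = {"demo-key"}  # illustrative only


def gateway(api_key: str, department: str, message: str) -> str:
    """Single entry point: authenticate first, then route to the right backend."""
    if api_key not in VALID_API_KEYS:
        return "error: unauthorized"
    handler = ROUTES.get(department)
    if handler is None:
        return "error: unknown department"
    return handler(message)


print(gateway("demo-key", "billing", "invoice 12"))  # billing handled: invoice 12
print(gateway("wrong-key", "billing", "invoice 12"))  # error: unauthorized
```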

Piggyback Protocol

A startup strategy referenced in the book: building your product on top of an existing platform's distribution to reach users quickly, before building your own independent channels.

🔹 Example: Instead of building your own messaging app to deliver TutorClaw, you build on top of WhatsApp, which already has 100+ million users in Pakistan. You "piggyback" on WhatsApp's distribution to reach students instantly, without convincing anyone to download a new app.

2. Core AI and Machine Learning

These are the foundational ideas behind everything in this book.

AI ⊃ ML ⊃ DL ⊃ LLMs

(Each is a subset of the one before it)

AI (Artificial Intelligence)

Making computers do things that normally require human intelligence, such as understanding language, recognizing images, making decisions, and solving problems.

🔹 Example: When your phone's keyboard predicts your next word in Urdu or English, that's AI. When Careem estimates your ride time based on traffic, that's AI.

ML (Machine Learning)

A way of teaching computers by showing them examples instead of writing explicit rules. The computer finds patterns in data and learns from them.

🔹 Example: YouTube recommends videos you might like. Nobody programmed a rule that says "if user watched cricket highlights, suggest more cricket." The system learned this pattern from billions of viewing habits.

💡 Analogy: Imagine teaching a child to recognize mangoes. You don't explain the biology. You show them dozens of mangoes and say "mango." Eventually, they recognize mangoes they've never seen, even different varieties like Chaunsa and Sindhri. That's machine learning.

DL (Deep Learning)

A more powerful version of machine learning that uses "neural networks" with many layers. It can learn extremely complex patterns, like understanding speech, generating images, or translating between languages.

🔹 Example: When Google Translate converts an Urdu paragraph into fluent English, deep learning powers that translation.

💡 Analogy: If ML is learning to recognize simple shapes, DL is learning to recognize faces in a crowded Saddar Bazaar: far more complex, but the same principle of learning from examples.

Model

A program that has been trained on data and can now make predictions or generate outputs. When people say "GPT-4" or "Claude," they're referring to models.

💡 Analogy: A model is like a student who has studied millions of textbooks. You ask questions, they answer based on everything they've read. Different models are like different students: some are better at math, others at creative writing.

Foundation Model

A very large, general-purpose model trained on enormous data. It can be adapted to many different tasks without retraining from scratch. Claude, GPT-4, and Gemini are foundation models.

💡 Analogy: A foundation model is like a university graduate with a broad education. They haven't specialized yet, but they can quickly adapt to many jobs: accounting, writing, research, management.

Neural Network

A computing system inspired by the human brain, with layers of interconnected "nodes" that process information, each layer extracting increasingly complex patterns.

💡 Analogy: Imagine a series of sieves with different mesh sizes. You pour raw data through the first sieve (catches large patterns), then the next (catches finer patterns), then the next (catches the finest details). A neural network works similarly, with each layer refining the information.

Transformer

The specific neural network architecture that powers all modern LLMs. Invented in 2017, it's especially good at understanding relationships between words, knowing that "bank" means something different in "river bank" vs. "bank account."

💡 Analogy: Older AI read sentences word by word, like reading through a keyhole: you see one word at a time and guess the meaning. Transformers read the entire sentence at once, like opening the whole door: they see every word simultaneously and understand how each word relates to every other word. This is why they're so much better at understanding language.

💡 Why it matters: Every AI model in this book (Claude, GPT, Gemini) is built on transformers. You don't need to understand the math, but you'll see the term often.

Multimodal Model

A model that can work with multiple types of input (text, images, audio, video), not just one.

🔹 Example: You photograph a restaurant bill and ask Claude "What's the total?" The model understands both the image and your text question. That's multimodal capability.

Reasoning Model

A model designed to "think through" complex problems step by step before answering, rather than responding instantly. Often more accurate on hard problems.

💡 Analogy: In a cricket match, some batsmen play instinctive shots (fast, sometimes reckless). Others study the field, read the bowler, and plan each shot deliberately. A reasoning model is the second type: slower but more reliable on difficult deliveries.

Training

The process of feeding massive amounts of data to a model so it learns patterns. This happens before you ever interact with the model; it's the "education" phase.

💡 Analogy: Training is like a chef spending years at culinary school: tasting thousands of dishes, learning techniques, practicing recipes. By the time they open their restaurant (when you use the model), the learning has already happened.

Pretraining

The first, most expensive phase of training. The model reads enormous amounts of text (books, websites, code, conversations) and learns general knowledge about language and the world.

Post-Training

Additional training after pretraining to make the model helpful, safe, and aligned with human expectations. This is where a model learns to follow instructions, be polite, and refuse harmful requests.

💡 Analogy: Pretraining is like getting a general education (school and university). Post-training is like workplace orientation: learning company culture, communication style, and professional norms.

Fine-Tuning

Training an existing model further on a specific, smaller dataset to make it an expert in a particular domain.

🔹 Example: Taking a general-purpose model and fine-tuning it on thousands of Pakistani tax rulings so it becomes especially good at tax advisory.

💡 Analogy: A general doctor completing additional training to become a cardiologist. Same foundational education, now specialized.

Parameters

The internal numbers of a model that get adjusted during training. More parameters generally means a more capable model. Modern LLMs have billions or trillions of parameters.

💡 Analogy: Parameters are like the individual threads in a massive carpet. During training, each thread is adjusted (color, tension, placement) until the complete pattern emerges. A model with 100 billion parameters has 100 billion threads forming an incredibly complex pattern.

Weights

The specific numerical values of the parameters after training. When someone says "downloading the weights," they mean the file containing all those trained numbers: the model's learned knowledge.

Dataset

A collection of data used to train or evaluate an AI model.

🔹 Example: A dataset for training a spam filter might contain 1 million emails, each labeled "spam" or "not spam." A dataset for training a translation model might contain millions of English-Urdu sentence pairs.

Benchmark

A standardized test for measuring and comparing how well different AI models perform.

🔹 Example: Just like CSS or Cambridge exams let you compare students, benchmarks like MMLU (general knowledge) or HumanEval (coding ability) let researchers compare AI models fairly.

Inference

The process of a trained model generating a response to your input. Every time you ask Claude a question and get an answer, that's inference.

💡 Analogy: Training is studying for an exam. Inference is sitting the exam. The learning already happened: now the model applies what it learned. You pay for inference (each API call costs money), not for training.

3. LLM Basics

LLMs are the engines powering every AI agent in this book. This section explains how they work at a practical level.

LLM (Large Language Model)

A very large AI model trained on vast amounts of text that can understand and generate human-like language and code. Claude, GPT-4, and Gemini are all LLMs.

💡 Analogy: An LLM is like an incredibly well-read research assistant who has read every Wikipedia article, millions of books, and billions of web pages. You can ask them about almost anything, and they'll draw on that reading to help: writing, analysis, code, translation, and more.

Prompt

The input you give to an AI model: your question, instruction, or request. The quality of your prompt directly affects the quality of the response.

🔹 Example: "Write something about marketing" is a weak prompt. "Write a 500-word LinkedIn post about why Pakistani textile exporters should use AI agents for order tracking, in a professional but conversational tone" is a strong prompt.

System Prompt

Hidden instructions given to an AI before your conversation starts. Set by the developer, not the user. They shape the model's personality, behavior, and constraints.

🔹 Example: A banking chatbot's system prompt might say: "You are a helpful assistant for HBL. Answer in Urdu or English based on the customer's language. Never reveal account balances without OTP verification. If asked about loans, direct to the loans page."

💡 Analogy: A system prompt is like a manager's briefing to a new employee on day one: "Here's who we are, here's how we talk to customers, here's what you must never do."

User Prompt

The message you (the user) actually type. This is your side of the conversation.

Instruction

A specific directive within a prompt telling the model what to do.

🔹 Example: "Summarize this in three bullet points," "Translate to Urdu," "Fix the bug in this code": each is a clear instruction.

Context

All the information available to a model during a conversation: the system prompt, conversation history, uploaded documents, and your current message combined.

💡 Analogy: When you ask a colleague for advice on a deal, the "context" is everything they know: the client history, previous emails, the contract terms, your company's policies. The more relevant context, the better the advice.

Context Window

The maximum amount of text an LLM can process at once, measured in tokens. Think of it as the model's "working memory."

🔹 Example: Claude models offer context windows ranging from 200,000 to over 1 million tokens. Even 200,000 tokens is roughly 150,000 words (an entire novel). Older models might handle only 4,000 tokens (a few pages).

💡 Analogy: A context window is like the size of a desk. A small desk holds only a few papers, and you keep removing old ones to make room. A huge desk lets you spread out an entire project and see everything at once. Bigger context window = bigger desk.
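The "small desk" behaviour can be sketched as a function that keeps only the newest messages that fit. This is a simplified illustration: `fit_to_window` is a hypothetical helper, and `count_tokens` counts words as a crude stand-in for a real tokenizer.

```python
def count_tokens(text: str) -> int:
    """Crude stand-in for a real tokenizer: counts whitespace-separated words."""
    return len(text.split())


def fit_to_window(messages: list[str], max_tokens: int) -> list[str]:
    """Keep the newest messages whose combined size fits in the window."""
    kept: list[str] = []
    used = 0
    for message in reversed(messages):  # walk from newest to oldest
        cost = count_tokens(message)
        if used + cost > max_tokens:
            break  # the desk is full; older papers come off
        kept.append(message)
        used += cost
    return list(reversed(kept))  # restore chronological order


history = ["hello there", "how are you today", "please summarize our chat"]
print(fit_to_window(history, max_tokens=8))
# ['how are you today', 'please summarize our chat']
```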

Token

The basic unit of text an LLM processes. A token is roughly ¾ of a word. Short words like "the" are one token. Longer words like "unbelievable" get split into 3-4 tokens. Spaces and punctuation also consume tokens.

🔹 Example: "I love biryani" ≈ 4 tokens. A full page of text ≈ 500-700 tokens. You're charged per token when using AI APIs.
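The ¾-of-a-word rule of thumb turns into simple arithmetic. The sketch below is only an estimate (real tokenizers split text differently), and the price of 3.00 per million tokens is a made-up figure, not any provider's actual rate.

```python
def estimate_tokens(text: str) -> int:
    """Rough estimate using the rule of thumb that one token is about 3/4 of a word."""
    words = len(text.split())
    return round(words / 0.75)


def estimate_cost(tokens: int, price_per_million_tokens: float) -> float:
    """Cost of processing `tokens` tokens at a given (hypothetical) price."""
    return tokens / 1_000_000 * price_per_million_tokens


print(estimate_tokens("I love biryani"))         # 4
print(f"${estimate_cost(200_000, 3.0):.2f}")     # $0.60
```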

Completion / Generation

The output an LLM produces in response to your prompt. When the model "completes" your request, that response is the completion.

Structured Output

When an LLM generates its response in a specific, machine-readable format (like JSON) instead of conversational text, so other software can easily process it.

🔹 Example: Instead of "The temperature in Karachi is 35 degrees and it's sunny," a structured output would be: {"city": "Karachi", "temp": 35, "condition": "sunny"}. Software reads this format effortlessly.
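To see why software finds this format easy, here is a minimal sketch that reads the Karachi example with Python's standard `json` module. The helper `parse_weather` and the list of required fields are assumptions made for illustration.

```python
import json

REQUIRED_KEYS = {"city", "temp", "condition"}


def parse_weather(raw_response: str) -> dict:
    """Parse the model's JSON reply and check that the expected fields are present."""
    data = json.loads(raw_response)
    missing = REQUIRED_KEYS - set(data)
    if missing:
        raise ValueError(f"missing fields: {sorted(missing)}")
    return data


reply = '{"city": "Karachi", "temp": 35, "condition": "sunny"}'
weather = parse_weather(reply)
print(weather["temp"] + 1)  # 36
```

Because `temp` arrives as a number rather than as part of a sentence, the program can do arithmetic on it straight away.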

Hallucination

When an AI model confidently generates false, inaccurate, or fabricated information, presenting it as fact.

🔹 Example: You ask about a Supreme Court judgment and the model invents a case (complete with fake citation numbers and a fake bench) and presents it as real.

💡 Analogy: A student who doesn't know the answer on an exam but writes a very confident, detailed response anyway. It reads like it's correct, but it's entirely made up.

Grounding

Connecting an AI model to factual, verified data sources so it gives accurate answers instead of hallucinating.

💡 Analogy: Grounding is like letting a student use their textbook during an exam. Now their answers are based on real information, not unreliable memory.

Temperature

A setting that controls creativity vs. predictability in an LLM's responses. Low temperature (0) = very consistent. High temperature (1+) = more creative and varied.

💡 Analogy: Temperature is like a chef's freedom in the kitchen. Temperature 0: "Follow the recipe exactly, no substitutions." Temperature 1: "Improvise freely." You want exact recipes for medication dosages, but creative freedom for a new dish.
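For the curious, one common way temperature is applied is to divide the model's raw word scores by the temperature before turning them into probabilities. The sketch below uses three made-up scores and a hypothetical helper name. A temperature of exactly 0 is usually handled as "always pick the top score," so this function only accepts values above 0.

```python
import math


def softmax_with_temperature(scores: list[float], temperature: float) -> list[float]:
    """Turn raw scores into probabilities; lower temperature sharpens the distribution."""
    if temperature <= 0:
        raise ValueError("temperature must be greater than 0 in this sketch")
    scaled = [score / temperature for score in scores]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(value - peak) for value in scaled]
    total = sum(exps)
    return [value / total for value in exps]


scores = [2.0, 1.0, 0.5]  # made-up scores for three candidate words
cold = softmax_with_temperature(scores, temperature=0.2)
hot = softmax_with_temperature(scores, temperature=2.0)
print([round(p, 2) for p in cold])
print([round(p, 2) for p in hot])
```

At low temperature almost all the probability lands on the top-scoring word, so answers are consistent. At high temperature the probabilities even out, so less likely words get picked more often.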

Latency

The time delay between sending a request and receiving a response. Lower latency = faster. Measured in milliseconds or seconds.

🔹 Example: If Claude responds in 1 second, that's low latency. If it takes 15 seconds, that's high latency. Users get impatient beyond 2-3 seconds.

Throughput

How many requests a system can handle per unit of time. High throughput = serving many users simultaneously.

💡 Analogy: Latency is how fast one car passes through a toll plaza. Throughput is how many cars the toll plaza handles per hour. You want both low latency and high throughput.

Deterministic vs. Non-Deterministic

Deterministic: Same input always produces the exact same output (like a calculator: 2+2 always equals 4). Non-deterministic: Same input can produce different outputs each time.

LLMs are non-deterministic: ask the same question twice, and you may get slightly different (but equally valid) answers. This isn't a bug; it's fundamental to how the technology works.

Stateless

Having no memory between separate interactions. Each new conversation with an LLM starts from absolute zero: the model has no knowledge of any previous conversation.

💡 Analogy: A shopkeeper with amnesia. Every time you walk in, they greet you as a stranger, even if you were there five minutes ago. Chat apps create the illusion of memory by re-sending the entire conversation history with every message.
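The "illusion of memory" can be shown with a tiny chat wrapper. This is a sketch under stated assumptions: `fake_model` is a stand-in for a real LLM call, and `ChatSession` is a hypothetical class, not any vendor's SDK.

```python
def fake_model(messages: list[dict[str, str]]) -> str:
    """Stand-in for a stateless LLM call: it only knows what is in `messages`."""
    user_turns = [m["content"] for m in messages if m["role"] == "user"]
    return f"I can see {len(user_turns)} user message(s)."


class ChatSession:
    """The app, not the model, remembers the conversation."""

    def __init__(self) -> None:
        self.history: list[dict[str, str]] = []

    def send(self, text: str) -> str:
        self.history.append({"role": "user", "content": text})
        reply = fake_model(self.history)  # the whole history is re-sent every time
        self.history.append({"role": "assistant", "content": reply})
        return reply


session = ChatSession()
first = session.send("Hello")
second = session.send("Do you remember me?")
print(first)   # I can see 1 user message(s).
print(second)  # I can see 2 user message(s).
```

Start a new `ChatSession` and the count goes back to one: nothing carries over unless the app sends it again.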

Prompt Engineering

The skill of crafting clear, specific instructions to get the best possible output from an AI model. Not just "what you ask" but "how you ask it."

🔹 Example: Instead of "Write about AI," a prompt engineer writes: "You are a technology journalist writing for Dawn newspaper. Write a 600-word article explaining how Pakistani banks are using AI agents for fraud detection. Include one real example. Use simple language accessible to a non-technical business reader."

NLP (Natural Language Processing)

The branch of AI dealing with understanding, interpreting, and generating human language, the foundation that makes LLMs possible.

🔹 Example: When you type a search query in broken English and Google still understands what you meant, that's NLP at work.

Copilot

An AI assistant integrated into a software environment (like a code editor) that works alongside you to boost productivity, suggesting, auto-completing, and reviewing as you work.

🔹 Example: GitHub Copilot suggests code as you type. It's like having a knowledgeable colleague looking over your shoulder, finishing your sentences.

4. Knowledge, Retrieval, and Context

These terms describe how AI agents access and use external knowledge for better, more accurate answers.

RAG (Retrieval-Augmented Generation)

A technique where an AI first retrieves relevant information from external documents or databases, then uses that information to generate a more accurate response.

💡 Analogy: Taking an open-book exam. Instead of relying only on memorized (possibly wrong) knowledge, you look up specific facts in your reference material before writing your answer. RAG gives AI its own reference library.

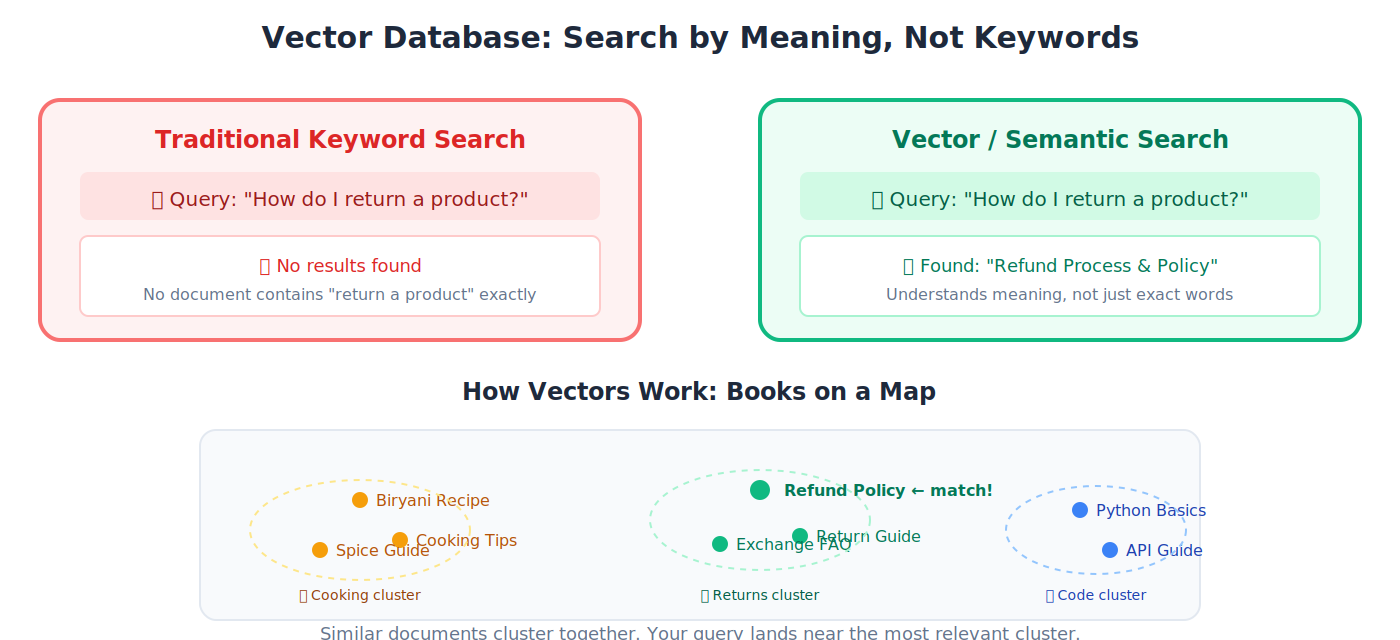
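The retrieve-then-generate flow can be sketched without any AI library at all. In this toy version the documents are invented, the retriever simply counts shared words (real systems typically use embeddings), and the final prompt is printed rather than sent to a model.

```python
import re

# Invented documents standing in for a company knowledge base.
DOCUMENTS = [
    "Laptops carry a two-year warranty covering hardware defects.",
    "Orders ship within three business days.",
    "Annual leave requests must be submitted two weeks in advance.",
]


def words(text: str) -> set[str]:
    """Lowercase the text and split it into words, dropping punctuation."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, documents: list[str], top_k: int = 1) -> list[str]:
    """Toy retriever: rank documents by how many words they share with the question."""
    question_words = words(question)
    ranked = sorted(documents, key=lambda doc: len(question_words & words(doc)), reverse=True)
    return ranked[:top_k]


def build_prompt(question: str, documents: list[str]) -> str:
    """Step 1: retrieve. Step 2: put what was found in front of the model."""
    context = "\n".join(retrieve(question, documents))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


prompt = build_prompt("What is the warranty on laptops?", DOCUMENTS)
print(prompt)
```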

Embedding

Converting text into numerical coordinates so a computer can measure how similar different pieces of text are, capturing meaning, not just keywords.

💡 Analogy: Imagine placing every book in a library on a giant map where similar books cluster together. Cookbooks sit near each other, far from physics textbooks. Embeddings create this "similarity map" in mathematical space.

Vector

A list of numbers representing a piece of text in mathematical space. When text is converted to an embedding, the result is a vector.

🔹 Example: The word "cricket" might become [0.8, 0.3, 0.7, 0.1, ...]: a long list of numbers that captures both the sport and the insect meanings, distinguished by surrounding context.

Vector Database

A specialized database for storing and quickly searching vectors, finding similar content by meaning rather than exact keyword match.

🔹 Example: You store 10,000 company documents as vectors. When someone asks "What's our return policy?" the vector database finds the most relevant documents instantly, even if none of them contain the exact phrase "return policy."

💡 Analogy: A traditional database searches by exact keywords (like searching a phonebook by name). A vector database searches by meaning (like asking a librarian "find me books similar to this one").
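Searching by meaning boils down to comparing vectors. The sketch below uses cosine similarity, a common way to measure how closely two vectors point in the same direction. The three-number vectors are hand-made stand-ins for real embeddings (which have hundreds or thousands of numbers), and `STORE` and `search` are hypothetical names.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """1.0 means the vectors point the same way; near 0 means unrelated."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hand-made vectors standing in for real document embeddings.
STORE = {
    "Refund Process": [0.9, 0.1, 0.0],
    "Annual Leave Policy": [0.0, 0.2, 0.9],
    "Office Wi-Fi Setup": [0.1, 0.9, 0.1],
}


def search(query_vector: list[float], store: dict[str, list[float]], top_k: int = 1) -> list[str]:
    """Return the titles whose vectors are most similar to the query vector."""
    ranked = sorted(store, key=lambda title: cosine_similarity(query_vector, store[title]), reverse=True)
    return ranked[:top_k]


query = [0.8, 0.2, 0.1]  # pretend embedding of "What's our return policy?"
print(search(query, STORE))  # ['Refund Process']
```

Notice that the query never has to contain the word "refund": the match comes from the numbers being close, not from shared keywords.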

Semantic Search

Searching by meaning rather than exact keywords. "How do I return a product?" matches a document titled "Refund Process" even though the words are completely different.

🔹 Example: An employee searches "how to take time off" in the company knowledge base. Semantic search finds the document titled "Annual Leave Policy and Procedures," even though none of the search words appear in the title. Traditional keyword search would find nothing.

Retrieval

Fetching relevant information from a data source (database, document collection, the web) for an AI to use in generating a response.

🔹 Example: A customer asks your support agent "What's your warranty on laptops?" The agent retrieves the warranty policy document from your knowledge base, reads the relevant section, and generates an accurate answer based on your actual policy, not a guess.

Reranking

After retrieving multiple results, re-ordering them by relevance so the most useful result appears first: a quality filter after the initial search.
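Here is a small Python sketch of the idea. The scoring function `overlap_score` is a deliberately simple stand-in invented for this example (it counts shared words); production rerankers typically use a trained model to judge relevance.

```python
def overlap_score(query, text):
    """Hypothetical relevance score: fraction of query words found in the text."""
    query_words = set(query.lower().split())
    text_words = set(text.lower().split())
    return len(query_words & text_words) / len(query_words)

def rerank(query, candidates):
    """Re-order candidates so the highest-scoring one comes first."""
    return sorted(
        candidates,
        key=lambda text: overlap_score(query, text),
        reverse=True,
    )

# Results in the order a first-pass search happened to return them.
candidates = [
    "Laptop bags and accessories catalog",
    "Shipping times for laptop orders",
    "Warranty policy for laptop purchases",
]
query = "laptop warranty policy"

reranked = rerank(query, candidates)
print(reranked[0])  # Warranty policy for laptop purchases
```

The first search is fast but rough; the reranking pass is slower but more careful, so it only looks at the handful of results that survived the first pass.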

Chunking / Chunk

Breaking a large document into smaller pieces so they can be stored and searched individually.

🔹 Example: A 200-page HR manual is split into paragraph-sized chunks. When someone asks about leave policy, the system retrieves only the 3-4 most relevant paragraphs, not the entire manual.
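A minimal Python sketch of paragraph-based chunking follows. The function name `chunk_by_paragraph` and the sample manual text are invented for illustration, and splitting on blank lines is only one of several possible chunking strategies.

```python
def chunk_by_paragraph(document):
    """Split text on blank lines and drop any empty pieces."""
    return [part.strip() for part in document.split("\n\n") if part.strip()]

# Hypothetical three-paragraph excerpt standing in for a long manual.
manual = """Annual leave: employees receive paid leave each year.

Sick leave: notify your manager before your shift starts.

Expenses: submit receipts within 30 days."""

chunks = chunk_by_paragraph(manual)
for number, chunk in enumerate(chunks, start=1):
    print(number, chunk)
```

Each chunk can then be converted to a vector and stored on its own, so a question about leave retrieves only the leave paragraph.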

Knowledge Base

An organized collection of information (documents, FAQs, manuals, policies) that an AI can search and reference.

🔹 Example: A company's internal wiki containing product docs, HR policies, and training materials, structured so an AI agent can find answers instantly.

Grounding Data

The specific factual data connected to an AI model to ensure accurate, fact-based responses rather than hallucinated guesses.

MCP (Model Context Protocol)

An open standard (created by Anthropic, now governed by the Linux Foundation) that lets any AI agent connect to any external tool using a universal protocol: search, databases, email, calendars, file systems. MCP is the protocol for agents calling tools. For the separate protocol family that handles agents paying for those tools, see Section 11: ACP, AP2, x402, and MPP.

💡 Analogy: Before USB, every phone had a different charger. USB became the universal connector. MCP is the "USB standard" for AI agents: one protocol that lets any agent plug into any tool. Build your agent once, connect it to everything.

Connector

A specific integration linking an AI agent to an external service using MCP or another protocol.

🔹 Example: A "Gmail connector" lets an AI agent read, search, and send emails. A "Google Drive connector" lets it read and create documents.

System Integration

Connecting different software systems so they share data and work together seamlessly: the "plumbing" behind any enterprise agent deployment.

🔹 Example: Your Digital FTE needs to read customer data from Salesforce, check inventory in SAP, process payments through JazzCash, and send confirmations via email. System integration connects all four systems so the agent can work across them in a single workflow.

5. Agentic AI Concepts

The heart of this book: AI systems that don't just answer questions, but take action.

Agent (or AI Agent)

An AI system that can independently perceive its environment, make decisions, and take actions to achieve a goal, without a human guiding every step.

🔹 Example: A chatbot just answers questions. An AI agent receives a goal like "find me the cheapest Karachi-to-Dubai flight next Friday" and then searches airlines, compares prices, checks your calendar, and books the ticket, all on its own.

💡 Analogy: A chatbot is a librarian who answers questions from behind a desk. An agent is a personal assistant who takes your request and goes out into the world to get things done.

Agentic AI

The category of AI focused on building agents that plan, reason, act, and adapt autonomously. This is the frontier of AI in 2026.

General Agent

An AI agent used through natural language for a wide range of tasks. It isn't built for one specific job; it's a versatile "Swiss Army knife" that can help with coding, writing, research, file management, and more.

🔹 Example: Claude Code is a general agent: you can ask it to organize files, write an API, analyze a spreadsheet, or debug a Python error. It adapts to whatever you need, using natural language instructions.

💡 Analogy: A general agent is like a highly capable executive assistant. You don't hire them for one task; you give them different assignments each day, and they figure out how to get each one done.

Autonomy

The degree to which an AI agent can operate independently without human approval at every step.

💡 Analogy: A junior employee who needs permission for every email has low autonomy. A senior director who makes decisions independently has high autonomy. Agents exist on this same spectrum: some need human approval for every action; others operate with full independence within defined boundaries.

Reasoning

An agent's ability to think through a problem logically: analyzing information, weighing options, and drawing conclusions before acting.

🔹 Example: You ask an agent: "Should we launch in Lahore or Islamabad first?" A non-reasoning agent might just pick one. A reasoning agent analyzes: "Lahore has 2x the population, but Islamabad has higher per-capita income. Your product targets professionals, so Islamabad's demographics are a better fit. I recommend Islamabad first, then Lahore in month 3."

Acting

When an agent actually does something in the real world: sending an email, writing a file, querying an API, placing an order, booking an appointment.

Planning

An agent's ability to break a complex goal into a sequence of steps and determine the order to execute them.

🔹 Example: You tell an agent: "Prepare a market analysis report on Pakistani cement exports." The agent plans: (1) search for export data, (2) gather competitor info, (3) analyze trends, (4) write the report, (5) format and export as PDF.
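The plan from this example can be sketched in Python as an ordered list that the agent works through one step at a time. The `run_step` function is a hypothetical placeholder; a real agent would call tools or a model at that point.

```python
# The five steps from the example, in the order the agent would run them.
plan = [
    "search for export data",
    "gather competitor info",
    "analyze trends",
    "write the report",
    "format and export as PDF",
]

def run_step(step):
    """Hypothetical executor: a real agent would do actual work here."""
    return f"finished: {step}"

completed = []
for number, step in enumerate(plan, start=1):
    completed.append(run_step(step))
    print(f"Step {number}: {completed[-1]}")
```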

Task Decomposition

Breaking a large, complex task into smaller, manageable subtasks that can be solved individually.

💡 Analogy: "Plan a wedding" is overwhelming as one task. Decomposed: find a venue, choose a caterer, design invitations, arrange flowers, hire a photographer. Each subtask is solvable. AI agents decompose complex goals the same way.

Orchestration

Coordinating multiple agents or tools to work together, managing the flow of information between them.

💡 Analogy: A cricket team captain doesn't bowl, bat, and field all at once. They position fielders, set bowling rotations, and adjust strategy based on the match situation. Agent orchestration works similarly: coordinating specialists toward a shared goal.

Multi-Agent System

A system where multiple AI agents collaborate (each handling different parts of a task) to accomplish something none could do alone.

🔹 Example: One agent researches competitor pricing, another drafts the analysis, a third formats the slides, and a fourth prepares speaker notes. They work as a team.

Supervisor Agent

An agent whose job is to coordinate and manage other agents: distributing tasks, monitoring progress, and collecting results.

💡 Analogy: A construction site foreman. They don't lay bricks or wire outlets. They assign specialists to each task, check quality, and make sure everything comes together correctly.
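As a rough sketch, a supervisor can be modeled in Python as a function that routes each task to the right specialist and collects the results. The two specialist functions and the `SPECIALISTS` table are invented for this example; in a real system each specialist would be its own AI agent.

```python
def research_agent(task):
    """Hypothetical specialist that gathers information."""
    return f"research notes on {task}"

def writing_agent(task):
    """Hypothetical specialist that drafts text."""
    return f"draft about {task}"

# The supervisor's directory of who handles what.
SPECIALISTS = {"research": research_agent, "write": writing_agent}

def supervisor(assignments):
    """Send each (role, task) pair to the matching specialist and collect results."""
    results = []
    for role, task in assignments:
        worker = SPECIALISTS[role]
        results.append(worker(task))
    return results

report = supervisor([
    ("research", "competitor pricing"),
    ("write", "pricing analysis"),
])
print(report)
```

Like the foreman, the supervisor does none of the specialist work itself. It only decides who does what and gathers the output.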

Handoff

When one agent passes a task (and its context) to another agent, like a relay runner passing the baton to the next.

Tool Use / Function Calling

An agent's ability to use external tools (searching the web, querying databases, sending emails, running code) rather than just generating text from memory.

💡 Analogy: A person answering questions only from memory vs. a person who can pick up a phone, open a laptop, and look things up. Tool use gives the agent access to the world beyond its training data.
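The Python sketch below shows the basic mechanics under simplified assumptions: the model asks for a tool by name, and the program looks that tool up and runs it. The tool functions, the `TOOLS` table, and the shape of the request dictionary are all hypothetical; each AI provider defines its own request format.

```python
def get_weather(city):
    """Hypothetical tool: returns canned text instead of calling a real service."""
    return f"Sunny in {city}"

def add_numbers(a, b):
    """Hypothetical tool: simple arithmetic."""
    return a + b

# Registry of the tools the agent is allowed to use.
TOOLS = {"get_weather": get_weather, "add_numbers": add_numbers}

def run_tool_call(call):
    """Look up the requested tool by name and call it with the given arguments."""
    tool = TOOLS.get(call["name"])
    if tool is None:
        return f"Unknown tool: {call['name']}"
    return tool(**call["arguments"])

# A tool request in the shape a model might produce (structure assumed here).
print(run_tool_call({"name": "add_numbers", "arguments": {"a": 2, "b": 3}}))  # 5
print(run_tool_call({"name": "get_weather", "arguments": {"city": "Karachi"}}))
```

The model never runs code itself. It only names the tool and the arguments, and the surrounding program does the actual work and hands back the result.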

State

The current condition or data of a system at any given moment. "Maintaining state" means remembering where things stand in an ongoing process.

🔹 Example: You're filling a 10-page NADRA form online and you're on page 7. The "state" includes everything you've entered on pages 1-6 plus which page you're currently on.
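The form example can be sketched in Python as a small object that holds the state. The class name `FormState` and its fields are assumptions made for this illustration, not part of any real form system.

```python
from dataclasses import dataclass, field

@dataclass
class FormState:
    """Everything needed to resume the form where the user left off."""
    current_page: int = 1
    answers: dict = field(default_factory=dict)

    def submit_page(self, page_answers):
        """Save this page's answers and move to the next page."""
        self.answers[self.current_page] = page_answers
        self.current_page += 1

state = FormState()
for page in range(1, 7):
    state.submit_page({"completed": True})  # placeholder answers

print(state.current_page)     # 7
print(sorted(state.answers))  # [1, 2, 3, 4, 5, 6]
```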

Memory (Agent Memory)

Mechanisms that let an agent remember information across interactions: previous conversations, user preferences, or learned facts.

💡 Analogy: State is short-term memory (what's happening right now in this conversation). Memory is long-term memory (what happened across past conversations). Without memory, every interaction starts from zero.

Session

A single continuous interaction between a user and an AI system. Starting a new chat = starting a new session.

Reflection

When an agent reviews its own output, identifies mistakes or weaknesses, and tries again with improvements.

💡 Analogy: A writer who finishes a draft, re-reads it, notices weak arguments, and revises before submitting. The agent does this automatically.
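A minimal Python sketch of a reflection loop follows. The `critique` and `revise` functions are simple hypothetical stand-ins that only check punctuation and spacing; a real agent would typically ask a model to review and rewrite its own output.

```python
def critique(draft):
    """Hypothetical reviewer: return a list of problems found in the draft."""
    problems = []
    if draft != draft.strip():
        problems.append("extra whitespace")
    if not draft.strip().endswith("."):
        problems.append("missing final period")
    return problems

def revise(draft, problems):
    """Fix each problem the critique reported."""
    if "extra whitespace" in problems:
        draft = draft.strip()
    if "missing final period" in problems:
        draft = draft + "."
    return draft

def reflect(draft, max_rounds=3):
    """Review and revise until no problems remain or the rounds run out."""
    for _ in range(max_rounds):
        problems = critique(draft)
        if not problems:
            break
        draft = revise(draft, problems)
    return draft

print(reflect("  Sales rose 12% this quarter "))  # Sales rose 12% this quarter.
```

The `max_rounds` limit matters: without it, an agent that can never satisfy its own critique would loop forever.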

Retry / Fallback

Retry: Attempting the same action again when it fails (maybe the server was temporarily unavailable). Fallback: Switching to an alternative approach when the primary one keeps failing.

🔹 Example: Agent tries to fetch data from a website. Site is down (retry: try again in 30 seconds). Still down after 3 retries (fallback: try a different data source for the same information).
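The same pattern can be sketched in Python. All function names here are hypothetical, and `wait_seconds` defaults to 0 so the example runs instantly; the scenario above would use 30.

```python
import time

def fetch_with_fallback(primary, backup, retries=3, wait_seconds=0):
    """Try primary up to `retries` times, then switch to backup."""
    for attempt in range(1, retries + 1):
        try:
            return primary()
        except ConnectionError:
            if attempt < retries:
                time.sleep(wait_seconds)  # pause before the next retry
    return backup()

attempts = []

def down_site():
    """Hypothetical primary source that always fails."""
    attempts.append("try")
    raise ConnectionError("site is down")

def backup_source():
    """Hypothetical alternative source for the same information."""
    return "data from backup source"

result = fetch_with_fallback(down_site, backup_source)
print(len(attempts), result)  # 3 data from backup source
```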

Guardrails

Safety constraints preventing an agent from taking harmful, inappropriate, or unauthorized actions. The financial version of guardrails (spending limits, vendor allowlists, audit triggers) is the authority envelope. See Section 11.

🔹 Example: A financial agent has a guardrail preventing transactions above Rs. 5,000,000 without human approval. A customer service agent has a guardrail preventing it from making promises about refunds it can't guarantee.

💡 Analogy: Guardrails on the Motorway keep cars from going off the road. AI guardrails keep agents from going off-limits.
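The financial guardrail from the example can be sketched in a few lines of Python. The function name `check_transaction` and its return messages are assumptions for illustration.

```python
APPROVAL_LIMIT_RS = 5_000_000  # limit taken from the example above

def check_transaction(amount_rs, human_approved=False):
    """Block any transaction above the limit unless a human has approved it."""
    if amount_rs > APPROVAL_LIMIT_RS and not human_approved:
        return "blocked: needs human approval"
    return "allowed"

print(check_transaction(250_000))                         # allowed
print(check_transaction(6_000_000))                       # blocked: needs human approval
print(check_transaction(6_000_000, human_approved=True))  # allowed
```

The check runs before the action, in ordinary code the agent cannot talk its way around. That is what separates a guardrail from a polite instruction in a prompt.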

HITL (Human in the Loop)

A design pattern where a human reviews, approves, or intervenes at critical points in an agent's workflow.

🔹 Example: An agent drafts a client email, but it's not sent until a human reads and approves it. The agent does 80% of the work; the human provides the remaining 20%: verification.
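Here is a minimal Python sketch of an approval gate. In a real system the approval would come from a person clicking a button; in this example a hypothetical function, `cautious_reviewer`, stands in for the human so the code can run on its own.

```python
def send_with_approval(draft, approve):
    """Send the draft only if the reviewer's approve() returns True."""
    if approve(draft):
        return f"SENT: {draft}"
    return "HELD: draft not approved"

def cautious_reviewer(draft):
    """Hypothetical stand-in for a human: rejects drafts that promise a refund."""
    return "refund" not in draft.lower()

print(send_with_approval("Your order ships Monday.", cautious_reviewer))
print(send_with_approval("We guarantee a full refund.", cautious_reviewer))
```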

Reliability

How consistently an agent produces correct, expected results. A reliable agent gets it right 99 out of 100 times, not 60.

🔹 Example: A reliable invoice-processing agent correctly extracts vendor name, amount, due date, and tax from 99% of invoices, across different formats, languages, and layouts. An unreliable one gets confused by unusual layouts and misreads amounts 20% of the time. That is the difference between a sellable product and a liability.

Verifiability

The ability to check and confirm that an agent's output is correct: that its code passes tests, its numbers add up, and its references exist.

Auditability

The ability to trace back through every decision and action an agent took, understanding exactly what it did and why.

💡 Analogy: A bank statement traces every transaction. An audit trail for an AI agent traces every decision, tool call, and output, all critical for compliance and debugging.
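An audit trail can be as simple as a list of timestamped records. The Python sketch below assumes a hypothetical `record` helper and made-up entries; real systems usually write these records to durable storage rather than keeping them in memory.

```python
from datetime import datetime, timezone

audit_log = []

def record(action, detail, reason):
    """Append one timestamped entry describing what the agent did and why."""
    audit_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "action": action,
        "detail": detail,
        "reason": reason,
    })

record("tool_call", "searched knowledge base for laptop warranty",
       "customer asked about warranty")
record("output", "replied with warranty summary",
       "policy document found")

for entry in audit_log:
    print(entry["time"], entry["action"], entry["detail"], "because", entry["reason"])
```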

Workflow

A defined sequence of steps an agent follows to complete a task from start to finish.

💡 Analogy: A workflow is like a recipe: step-by-step instructions that, followed correctly, produce a predictable result.

6. Programming and Software Terms

You don't need to be a programmer, but you'll encounter these throughout.

Python

The most popular programming language in AI: readable, versatile, and the primary language in this book. Nearly every AI framework supports Python first.

💡 Why Python? Python reads almost like English. A line such as `if age > 18: print("Adult")` is understandable even if you've never coded. This readability is why the AI world chose Python, and why this book teaches it. You don't need to know Python before starting; Part 4 teaches you from scratch.

TypeScript

A typed superset of JavaScript used for web applications and realtime interfaces. Covered in Part 9 of this book.

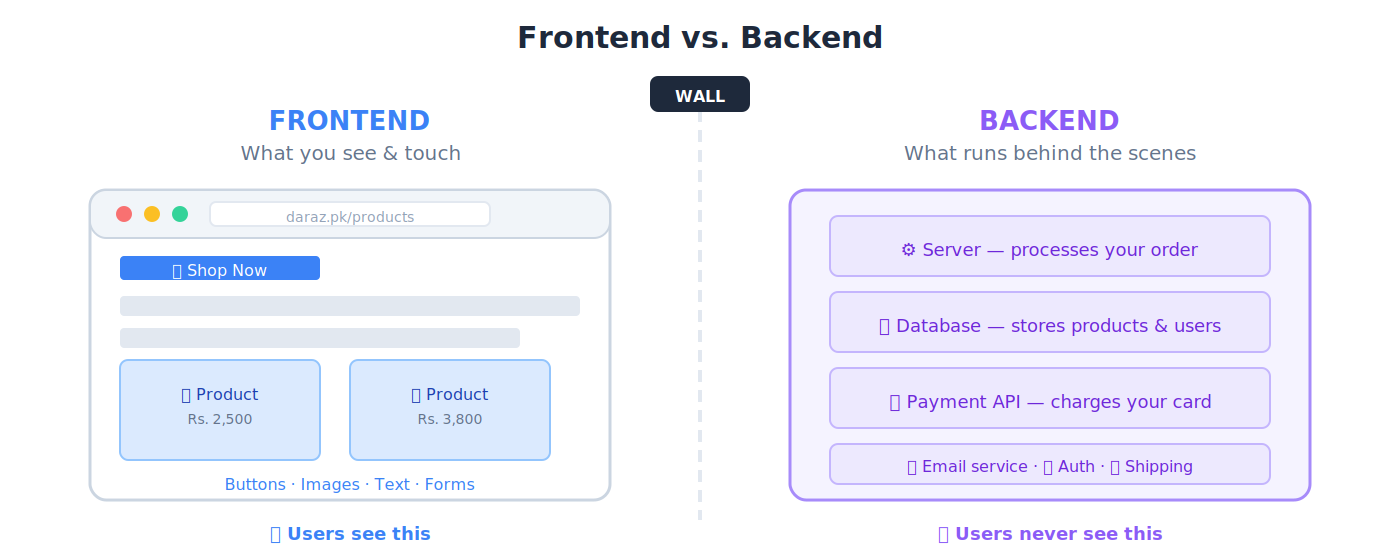

Frontend

The part of an application users see and interact with: buttons, menus, text, images on screen.

🔹 Example: When you use Daraz.pk, the product images, search bar, shopping cart, and checkout page are the frontend.

Backend

The part running behind the scenes (servers, databases, business logic) that users never see directly.

🔹 Example: When you click "Place Order" on Daraz, the backend processes your payment, checks inventory, notifies the seller, and schedules delivery.

Full-Stack

A developer or application that handles both frontend and backend.

API (Application Programming Interface)

A set of rules allowing different software programs to communicate with each other. APIs are how agents interact with the outside world.

💡 Analogy: A restaurant menu is like an API. You (the customer) look at the menu (API documentation), place an order (make a request), and the kitchen (server) prepares your meal (sends a response). You don't need to know how the kitchen works; you just use the menu.

SDK (Software Development Kit)

A pre-built toolkit for developing applications on a specific platform.

💡 Analogy: An SDK is like a LEGO set: pre-shaped pieces with instructions so you can build specific things quickly, instead of carving every piece from raw wood.

CLI (Command-Line Interface)

A text-based way of interacting with a computer by typing commands instead of clicking buttons.

🔹 Example: Instead of dragging a file to a folder, you type `mv report.pdf documents/`. Claude Code runs entirely through the CLI.

HTTP / HTTPS

The communication protocol of the web. Every website visit, every API call uses HTTP (or its secure version, HTTPS).