Part 4: Programming in the AI Era

If AI can write code, why should you learn programming?

It is the most common question in 2026. And the answer is counterintuitive: programming has become more important, not less -- but what programming means has fundamentally changed.

Traditional Python education teaches bottom-up: syntax first, verification last. The book Python Crash Course teaches features through projects, with testing arriving in Chapter 11. Learning Python devotes 1,270 pages to the language in depth, with object-oriented programming (OOP) starting on page 687. Both assume the bottleneck is producing code -- typing functions, loops, and classes from a blank page.

AI eliminated that bottleneck. Claude Code generates hundreds of lines of working code in seconds. The mechanical act of writing code is no longer the human's job. But someone must still define what the code should do, and someone must verify that it does it correctly. The AI handles the middle. You handle everything that matters.

Part 3 used Claude Cowork to deploy agents without writing code. Part 4 switches to Claude Code -- your AI coding agent for the rest of the book. Every chapter, every exercise, and every project iteration uses Claude Code. Part 4 applies the Spec-Driven Development methodology from Chapter 16 to Python. The core teaching model is simple:

- INPUT: You write specifications -- descriptions of what the code should do, using type labels and checks (tests). Then you prompt Claude Code to generate the implementation.

- OUTPUT: You verify the result -- you run automated tools to prove the generated code is correct. You never accept output on faith.

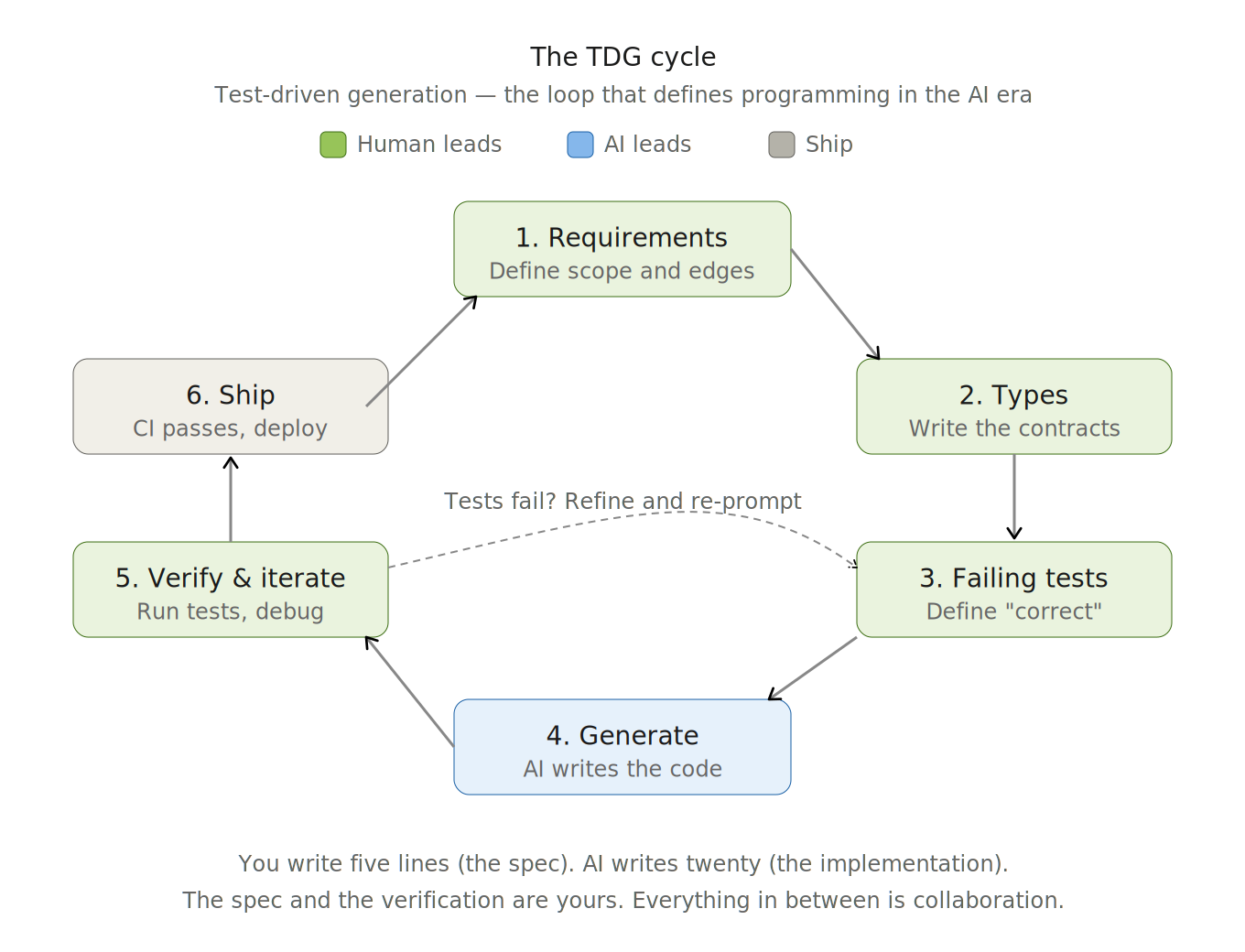

This workflow -- specify first, generate second, verify third -- is called Test-Driven Generation (TDG), the Python-specific form of Spec-Driven Development (SDD).

GitClear's 2025 analysis of 211 million lines of code from Google, Microsoft, Meta, and enterprise repositories found that code duplication quadrupled after widespread AI adoption, while refactoring dropped from 25% to under 10% of changes. Code was generated fast but revised just as fast: 7.9% of newly added lines required changes within two weeks, up from 5.5% before AI tools. Separately, Qodo's State of AI Code Quality report found that 76% of developers using AI assistants fall into what researchers call the "red zone" -- frequent hallucinations paired with low confidence in shipping. The teams that escaped this pattern shared one trait: they used AI for testing and review, not just generation, and their confidence in code quality jumped from 27% to 61%. Speed without verification produces churn. Speed with verification produces software.

This part inverts the traditional order. You learn to read before you write. You learn types before syntax. You learn testing before building. And you learn it all through a single method that defines programming in the AI era: Test-Driven Generation (TDG).

Before You Begin

Part 4 assumes no programming experience -- you do not need to have written code before. But it does build on skills from earlier parts of the book. If any of these are new to you, don't worry -- here's where to go first:

- Using a terminal -- opening a terminal, navigating directories, running commands → Part 2, Chapter 22

- Driving Claude Code -- writing clear prompts, evaluating responses, iterating → practiced throughout Parts 1 and 2

- Spec-Driven Development (SDD) -- writing specifications before code, the four-phase SDD workflow → Chapter 16 (required prerequisite)

- Version control basics -- `git add`, `git commit`, `git push` → Chapter 23

- Building something with Claude Code -- directing Claude Code to create a working project → Part 2 projects

If you have never written a line of code, you are exactly who Phase 1 is designed for. Chapter 44 walks you through every installation step with exact commands and expected output. Chapter 45 teaches you to read Python from scratch -- no prior syntax knowledge required. You will not be asked to write code until you can read it confidently. The course meets you where you are.

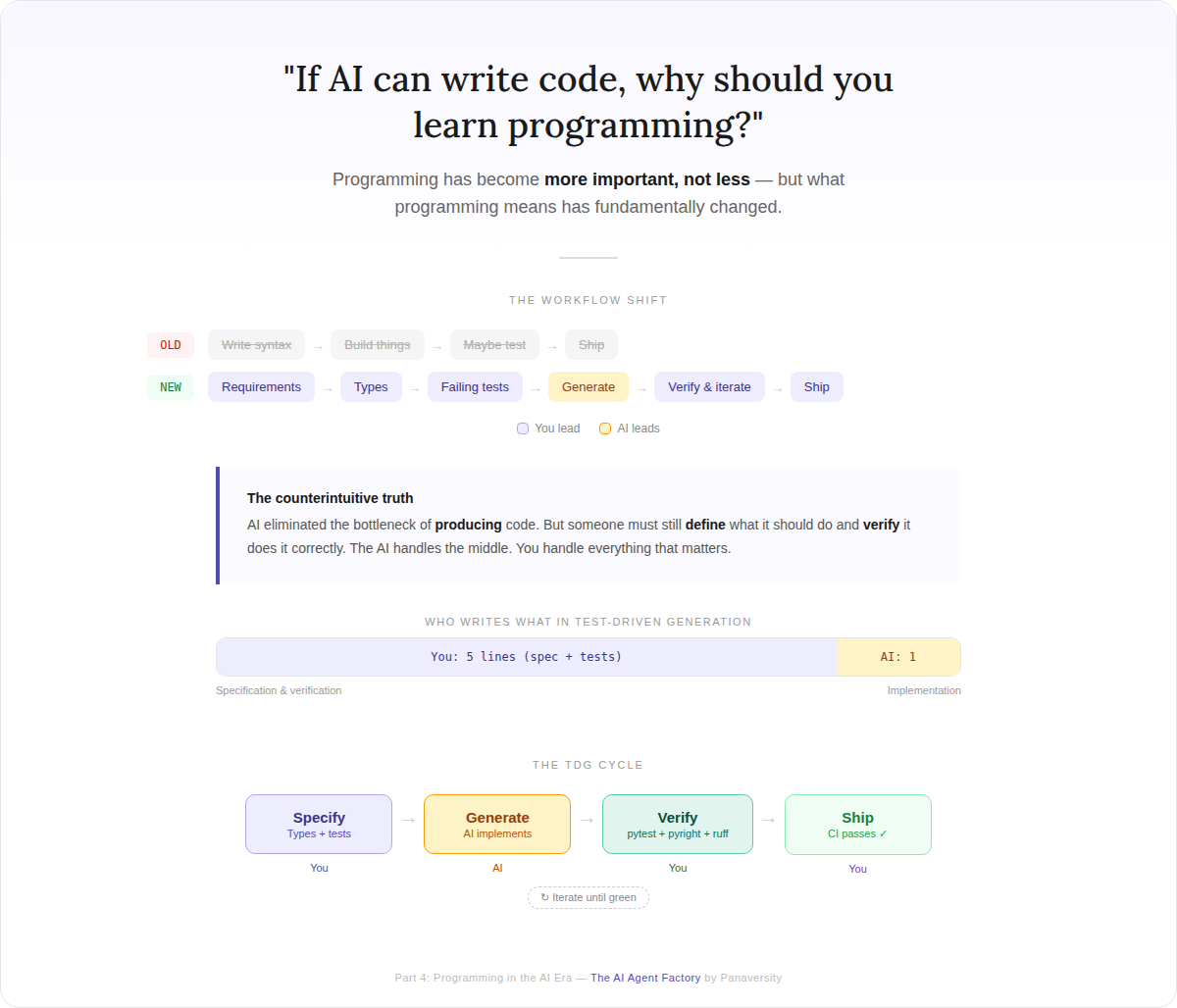

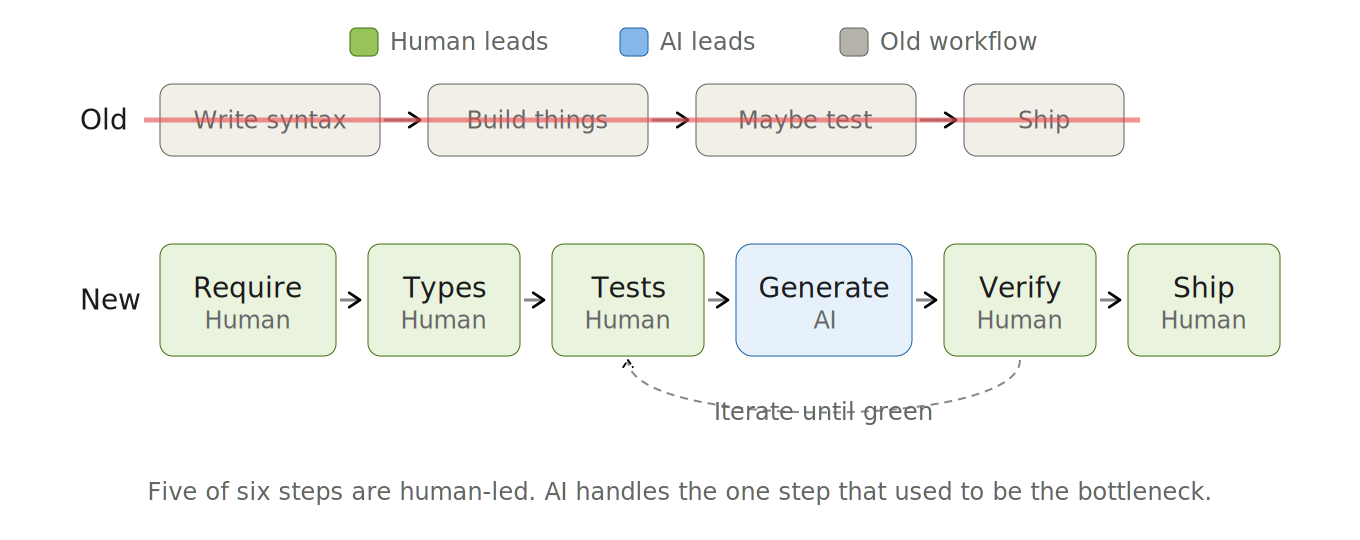

The New Workflow

OLD: Write syntax → Build things → Maybe test → Ship

NEW: Requirements → Types → Test Criteria & Failing Tests → Generate → Verify & Iterate → Ship

This workflow is the programmer's version of the 10-80-10 rule from the thesis: humans own the first 10% -- defining intent, writing type contracts, and specifying correctness criteria as failing tests; AI handles the middle 80% -- generating the implementation; humans own the final 10% -- verifying behavior, diagnosing failures, and deciding when it ships.

These six steps are not sequential phases you hand off and forget. They are a loop -- and AI is present throughout. What changes across the steps is who is driving. Here is the full loop at a glance -- if some terms are unfamiliar, the note box below the table explains each one:

| Step | What happens | Who leads | AI role |
|---|---|---|---|
| Requirements | Decide what you're building -- what it does, what it accepts, what it returns | Human | Assists: spots gaps, challenges assumptions |
| Types | Describe your data and functions precisely -- labels that tell AI the shape of your code | Human | Assists: suggests structures, validates design |
| Test Criteria & Failing Tests | Write checks that define "correct" -- they fail because nothing is built yet | Human | Assists: suggests cases you missed |
| Generate | AI writes the code to pass your checks | AI | Leads: produces full implementation |
| Verify & Iterate | Run your checks, read failures, debug, refine, repeat until everything passes | Human | Assists: explains errors, refines output |
| Ship | Save your work, automated pipeline verifies, deploy | Human | Assists: security review, changelog |

The key insight: you never start from a blank page, and you never accept output blindly. You start with a requirement and end with a passing test suite. Everything in between is a collaboration -- but the intent, the correctness criteria, and the final verification are yours.

Why do humans write the tests?

In TDG, tests are not verification -- they are specification. When you write `assert total_with_tax(100.0, 0.15) == 115.0`, you are not checking code that already exists. You are declaring what correct means before any implementation exists. That declaration is the requirement. If you delegate it to AI, you have delegated the requirement -- and you are now verifying AI-generated code against AI-generated expectations. You have no independent signal. The test must come from a human mind that understands the domain, because the test is the only artifact in the entire cycle that defines ground truth. AI can suggest edge cases you missed. AI can help you write the test syntax. But the decision about what correct looks like is yours -- that is the 10% that makes the other 90% trustworthy.

Some of these terms may be unfamiliar. Here is what they mean in plain English:

- Types are labels that describe what kind of data something is -- text, a whole number, a decimal, true/false. You will learn these in Chapter 45.

- A test is a short piece of code that checks whether another piece of code does what you expect. Think of it as a checklist: "If I give it 100 and 15%, I should get 115."

- A failing test is a test you write before the code exists. It fails because there is nothing to check yet. Then AI writes the code to make it pass. That is the core idea of TDG.

- pytest is the tool that runs your tests automatically and tells you which passed and which failed.

You do not need to memorize any of this now. Each term gets its own lesson with step-by-step explanations.

What "Writing Code" Means Now

In the old model, writing code meant typing implementation -- functions, loops, conditionals -- from scratch. That skill still has value, but it is no longer the primary bottleneck or the primary skill.

In the new model, writing code means three things:

- Describing what you want precisely. You write a clear description of what the code should accept and return -- its inputs, outputs, and data types. The more precise your description, the better AI's output.

- Defining what "correct" means before AI writes anything. You write checks (tests) that specify the expected behavior: "If I give it 100 and 15%, I should get 115." These checks become the requirement document AI implements against.

- Verifying output critically. AI optimizes for plausibility, not correctness. Your checks are the only reliable signal. When they fail, you diagnose why -- you do not re-prompt blindly. When they pass, you review for edge cases the checks may have missed.
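The last of these points is easier to see with a concrete case. In the sketch below, `total_with_tax_plausible` is an invented stand-in for AI output, not something Claude Code actually produced: it looks reasonable and passes the 100-and-15% check (plus a zero-price check), yet it ignores its `tax_rate` input. The extra check with a 20% rate is an assumption added here to expose the gap.

```python
def total_with_tax_plausible(price: float, tax_rate: float) -> float:
    """Hypothetical AI output: looks right, but hardcodes a 15% rate."""
    return round(price * 1.15, 2)  # bug: tax_rate is never used


# These two checks pass, so the bug stays hidden.
assert total_with_tax_plausible(100.0, 0.15) == 115.0
assert total_with_tax_plausible(0.0, 0.15) == 0.0

# A check with a different rate exposes it: 100 at 20% should be 120.
result = total_with_tax_plausible(100.0, 0.20)
print(result == 120.0)  # False -- the function still applies 15%
```

Passing checks only prove what the checks cover. Reviewing for missed cases means asking which inputs your checks never exercised, then adding a check for them.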

This is Test-Driven Generation (TDG) -- the method that defines programming in the AI era. Here is what the cycle looks like in plain English:

- You tell the computer: "I need a calculation that takes a price and a tax rate and gives me the total."

- You write two checks: "If the price is 100 and tax is 15%, the answer should be 115" and "If the price is 0, the answer should be 0."

- You ask AI to write the actual calculation.

- You run your checks. If they pass, the calculation is correct. If they fail, you debug and iterate.

That is all TDG is -- describe what you want, write checks, let AI do the math, verify the answer. You will learn the syntax piece by piece starting in Chapter 45. By the time you reach Your First TDG Cycle, you will see this cycle in real Python code and every line will make sense.
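As a preview only, the four steps above might look like this in Python. Treat it as a minimal sketch rather than the book's code: the name `total_with_tax` comes from the example earlier in this part, while the function body and the rounding to two decimal places are assumptions standing in for whatever Claude Code would generate.

```python
# Step 1: describe what you want -- a function signature with type labels.
# Step 3: the body is the part AI would generate (assumed stand-in here).
def total_with_tax(price: float, tax_rate: float) -> float:
    """Return the price plus tax, rounded to two decimal places."""
    return round(price + price * tax_rate, 2)


# Step 2: the checks, written by you before the body above exists.
def test_price_100_tax_15_percent() -> None:
    # "If the price is 100 and tax is 15%, the answer should be 115."
    assert total_with_tax(100.0, 0.15) == 115.0


def test_price_zero() -> None:
    # "If the price is 0, the answer should be 0."
    assert total_with_tax(0.0, 0.15) == 0.0


# Step 4: run the checks. pytest would find the test_ functions for you;
# calling them directly also works and raises AssertionError on failure.
test_price_100_tax_15_percent()
test_price_zero()
print("all checks passed")
```

You do not need to follow every line yet. The point is the order: the signature and the two checks come from you, and only the function body comes from AI.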

If this reminds you of Test-Driven Development (TDD), you are right -- TDG is TDD with AI in the generation step. The difference: in TDD, you write the failing test and then write the implementation yourself. In TDG, you write the failing test and AI writes the implementation. Your job shifts from typing code to specifying precisely enough that AI gets it right on the first pass -- and verifying that it did.

What You Need to Be Able to Do This

TDG requires a skill that the old model treated as optional: reading code fluently.

You cannot write good type specifications if you cannot recognize good types when you see them. You cannot write good tests if you cannot trace what code does. You cannot verify AI output if you cannot read it critically.

This is why Part 4 teaches you to read before it teaches you to specify. Not as a workflow step -- you do not read code each time you build a feature -- but as a foundational capability that makes every other step possible. A surgeon does not study anatomy during an operation. They studied it before ever entering the operating room. Reading code fluently is your pre-operative training.

How Every Chapter Is Structured

Every Python feature in Part 4 follows a five-step progression that builds from reading to full TDG:

1. See it -- AI generates code containing the feature

2. Read it -- The lesson explains what it does and why

3. Predict it -- "What will this output?" exercises build your mental model

4. Test it -- You define expected behavior with pytest and verify with AI assistance

5. Build it -- You specify types and tests with AI assistance, prompt AI to implement, and verify the output

Steps 1--3 build your reading fluency. Steps 4--5 are the TDG cycle. By the end of Part 4, steps 4--5 feel as natural as steps 1--3 do now.

Notice that you see and read before you are asked to do anything. This is deliberate. You will not be thrown into writing tests or specifying types without first understanding what they look like and how they work. Every new concept is shown to you, explained, and practiced through prediction exercises before you use it yourself.
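A "Predict it" exercise might look like the sketch below. The snippet is illustrative rather than taken from a later chapter, and the variable names are invented. Read it, write down what you expect it to print, and only then run it.

```python
# Predict: what does this print? Decide before you run it.
prices: list[float] = [10.0, 20.0, 30.0]
total: float = 0.0

for price in prices:
    if price > 15.0:  # only prices above 15 are counted
        total = total + price

print(total)
```

Tracing by hand: 10.0 is skipped, then 20.0 and 30.0 are added, so the program prints 50.0. If your prediction differed, the gap between what you expected and what happened is exactly what the exercise is meant to surface.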

Your SmartNotes Project For Part 4

SmartNotes is a Personal AI Knowledge Base -- your own note-taking tool that understands what you wrote. You save notes, tag and categorize them, search by meaning (not just keywords), and ask AI to summarize or connect ideas across notes. By the end of Phase 8, SmartNotes is a complete application with a command-line tool, a web API, a database, AI-powered search, and an automated pipeline that verifies every change -- a portfolio-grade project you built yourself.

You do not build nine throwaway exercises. You build SmartNotes once and grow it across Phases 1 through 8. Each phase adds a layer using the SDD workflow: you write the specification (types + tests), prompt Claude Code to generate the implementation, and verify the output. The project is the vehicle; TDG is the method. Phase 9 is different -- you build a completely new project from scratch to prove you can do it without scaffolding.

| Phase | What You Add to SmartNotes | What You Learn |
|---|---|---|
| 1 | Read and annotate a pre-built prototype | Reading code, setting up tools, your first code review |
| 2 | Data structures for notes, tags, and collections | Describing your data precisely so AI builds the right thing |
| 3 | Decision logic + 30 automated checks that prove it works | Writing tests before code exists |
| 4 | Find and fix planted bugs, then do a full cycle solo | Debugging and working independently |
| 5 | Organize code into objects with real behavior | Designing systems, not just scripts |
| 6 | Database storage, file import/export, project organization | Building production-grade features |
| 7 | A command-line tool + a web API with AI integration | Shipping tools other people can use |
| 8 | Automated pipeline that verifies every change + security audit | Making sure nothing breaks and nothing is vulnerable |

Each phase produces a working version of SmartNotes. By the end of Phase 8, you have a polished, portfolio-grade project that demonstrates every skill you have learned. Then Phase 9 proves you can do it again -- on a brand-new project, from scratch, without guidance.
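To give a feel for what "describing your data precisely" in Phase 2 could mean, here is a minimal sketch using a Python dataclass. The `Note` class, its field names, and the `has_tag` helper are all hypothetical; the actual SmartNotes design is developed in later chapters and may look quite different.

```python
from dataclasses import dataclass, field


@dataclass
class Note:
    """Hypothetical shape of a single note (not the book's actual design)."""
    title: str                                     # text
    body: str                                      # text
    tags: list[str] = field(default_factory=list)  # zero or more labels
    pinned: bool = False                           # true/false flag

    def has_tag(self, tag: str) -> bool:
        """Return True if the note carries the given tag (case-insensitive)."""
        return tag.lower() in (t.lower() for t in self.tags)


note = Note(title="TDG", body="Specify, generate, verify.", tags=["Python", "testing"])
print(note.has_tag("python"))  # True
print(note.pinned)             # False
```

Each field carries a type label, so both a human reader and an AI agent can see the shape of the data before any behavior is written.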

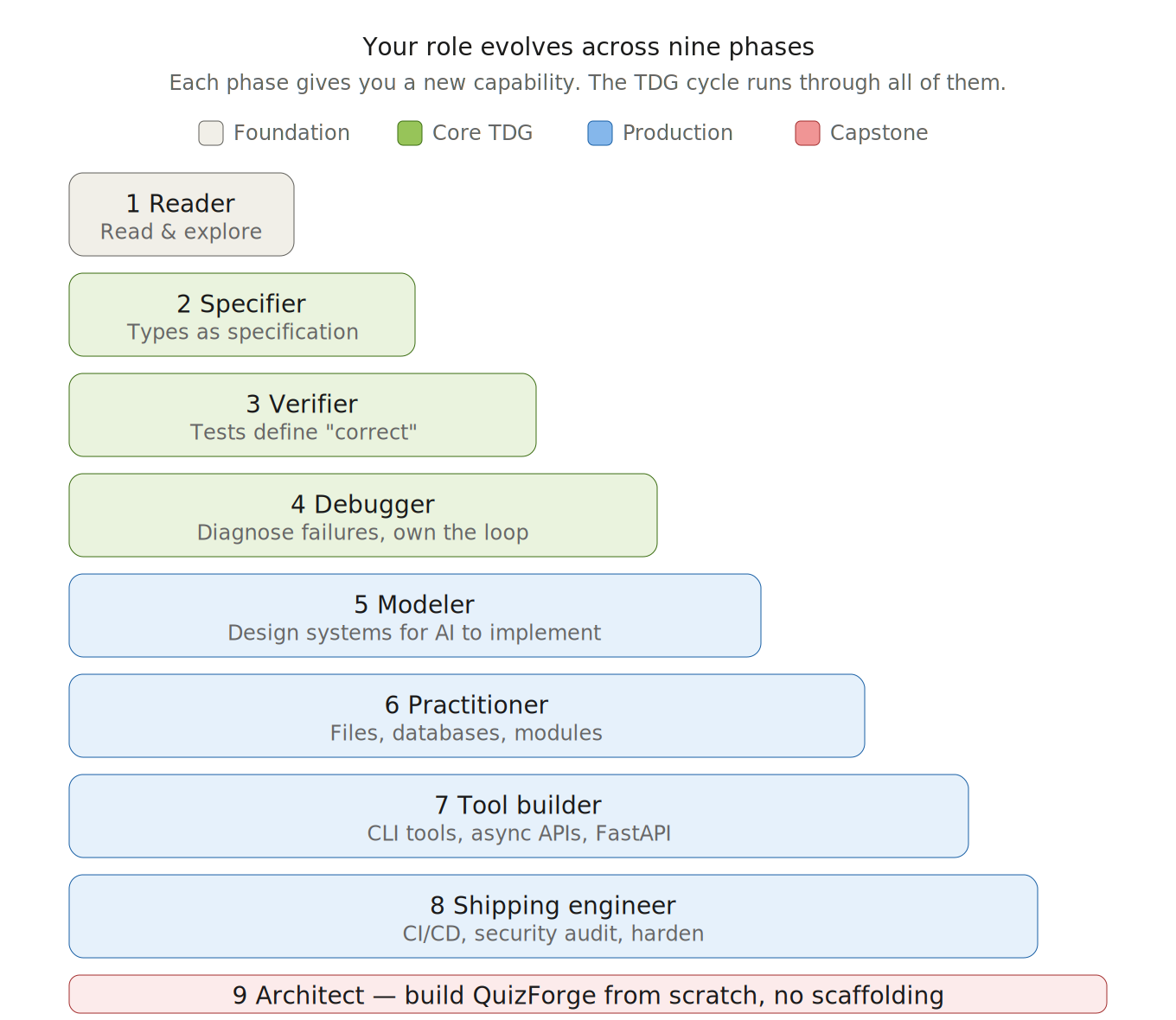

The Nine Phases

Part 4 is organized into nine phases. Each phase gives you a new capability, and your role evolves from passive reader to full system architect. The TDG cycle runs through every phase -- what changes is how much of it you own and how deeply you can specify.

Each phase takes roughly 1-2 weeks at a few hours per day. The full Part 4 is designed for 3-5 months of steady practice. Some phases (1 and 4) are shorter; others (5 and 6) are longer because they cover more ground. Go at your own pace -- building a strong foundation matters more than speed.

| Phase | Title | Your Role | Chapters |
|---|---|---|---|
| 1 | The Workbench | Reader | Ch 42-46 |
| 2 | Specify with Types | Specifier | Ch 47-49 |
| 3 | Tests as Specification | Verifier | Ch 50-55 |
| 4 | Debug & Master | Debugger | Ch 56-57 |
| 5 | The Python Object Model | Modeler | Ch 58-61 |
| 6 | Real-World Python | Practitioner | Ch 62-64 |
| 7 | CLI & Concurrency | Tool Builder | Ch 65-67 |
| 8 | Production Systems | Shipping Engineer | Ch 68-69 |
| 9 | Capstone | Architect | Ch 70-71 |

Deliverables: SDD specification documents, type definitions, object model diagram, passing test suites, AI-generated and human-verified implementation, security audit, green CI pipeline, and a deployed QuizForge application (the independent Phase 9 capstone project) with CLI, API, and AI features. You finish Part 4 with two portfolio-grade projects -- SmartNotes (guided) and QuizForge (independent) -- proving you can drive the complete TDG cycle at production scale.

What You Will Be Able To Do

By the end of Part 4, you will be able to:

- Read AI-generated code critically -- understand what it does, predict its output, and catch mistakes before they reach your project

- Tell AI exactly what to build -- write precise descriptions using Python's type system so AI generates what you actually want

- Prove code is correct -- write automated checks that define correct behavior and catch failures before users do

- Debug when things go wrong -- read error messages, find the root cause, and fix bugs instead of blindly re-prompting AI

- Drive the full cycle independently -- go from "I need this feature" to working, verified code without hand-holding

- Design systems, not just scripts -- organize code into objects and modules that model real domains and scale cleanly

- Build real tools people can use -- command-line applications, web APIs, and database-backed projects

- Ship with confidence -- automated pipelines that verify every change, plus security reviews that catch what AI misses

- Know when NOT to use AI -- recognize when manual coding is faster and when AI output needs human judgment

- Architect complete applications -- combine all skills to specify, build, test, secure, and ship a production-grade project

What's Next

After completing Part 4, continue to Part 6: Building Agent Factories, where you apply your Python skills and axiom-grounded thinking to build production AI agents with SDKs such as the OpenAI Agents SDK, Google ADK, and the Anthropic SDK. The async patterns you mastered in Phase 7, the typed interfaces you designed in Phase 5, the security review skills from Phase 8, and the testing discipline you built in Phase 3 feed directly into agent development.

The transformation of software development is underway. You are not just learning a language. You are learning to direct and verify the AI systems that write it. SmartNotes and QuizForge are the proof that you can.

Key Terms (60-Second Glossary)

Refer back to this table whenever a term feels unfamiliar. You do not need to memorize anything now -- each term gets its own lesson with step-by-step explanation.

📖 Glossary

| Term | Plain English |
|---|---|
| Python | A programming language -- the one you are learning in this part |
| Type | A label that says what kind of data something is: text, whole number, decimal, or true/false |
| Type annotation | A note in code that declares a variable's type, like `age: int = 25` (the `: int` part is the annotation) |
| Variable | A named container that holds a value -- like a labeled jar |
| Function | A reusable block of code with a name. You give it inputs, it gives you an output |
| Function signature | The first line of a function that declares its name, inputs, and output type -- the contract |
| Test | A short piece of code that checks whether another piece of code does what you expect |
| pytest | The tool that runs your tests automatically and reports which passed and which failed |
| Pyright | A tool that checks your type annotations and catches type mismatches before you run the code |
| Ruff | A tool that checks code style and formatting -- like a spell-checker for code |
| uv | The package manager that installs Python and your project's tools |
| Git | A tool that tracks every change you make to your code, so you can undo mistakes and collaborate |
| SDD | Spec-Driven Development -- write the specification first, then let AI generate the implementation (Chapter 16) |
| TDG | Test-Driven Generation -- SDD applied to Python: your specification is types + tests, Claude Code generates, you verify |
| PRIMM | Predict, Run, Investigate, Modify, Make -- a method for learning to read code that starts with predicting what it does before running it |
| Claude Code | Your primary AI coding agent throughout Part 4 -- generates, explains, and reviews code based on your specifications |
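Several of the glossary terms can be seen together in a few lines. The sketch below is illustrative only: apart from `age: int = 25`, which is the glossary's own example, the names are invented for this preview.

```python
# Variable with a type annotation: the ": int" part is the annotation.
age: int = 25


# Function signature: name, typed inputs, and output type -- the contract.
def years_until(target_age: int, current_age: int) -> int:
    """Function: a named, reusable block that takes inputs and returns an output."""
    return target_age - current_age


# Test: a short piece of code that checks the function does what you expect.
def test_years_until() -> None:
    assert years_until(30, age) == 5


test_years_until()  # pytest would run this for you and report pass or fail
```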

Let's begin.