From Founder Bottleneck to Owner Delegate: Scaling an AI-Native Company Past Its Owner's Attention

15 Concepts and two lab tracks. The "half-day" in the title is the simulated track: about 2 to 3 hours of conceptual reading plus 2 to 3 hours of simulated lab with mock endpoints. The full-implementation track is a separate 1-day sprint to 2-day workshop: about 3 hours of reading plus 6 to 10 hours of hands-on lab. The full track does not modify Paperclip's codebase; it registers your Identic AI as a Paperclip agent, builds the signing-and-verification layer in your own integration skill, and exercises the full cryptographic round-trip against real Paperclip approval routes. Pick the track before Decision 1; see Part 4 for details.

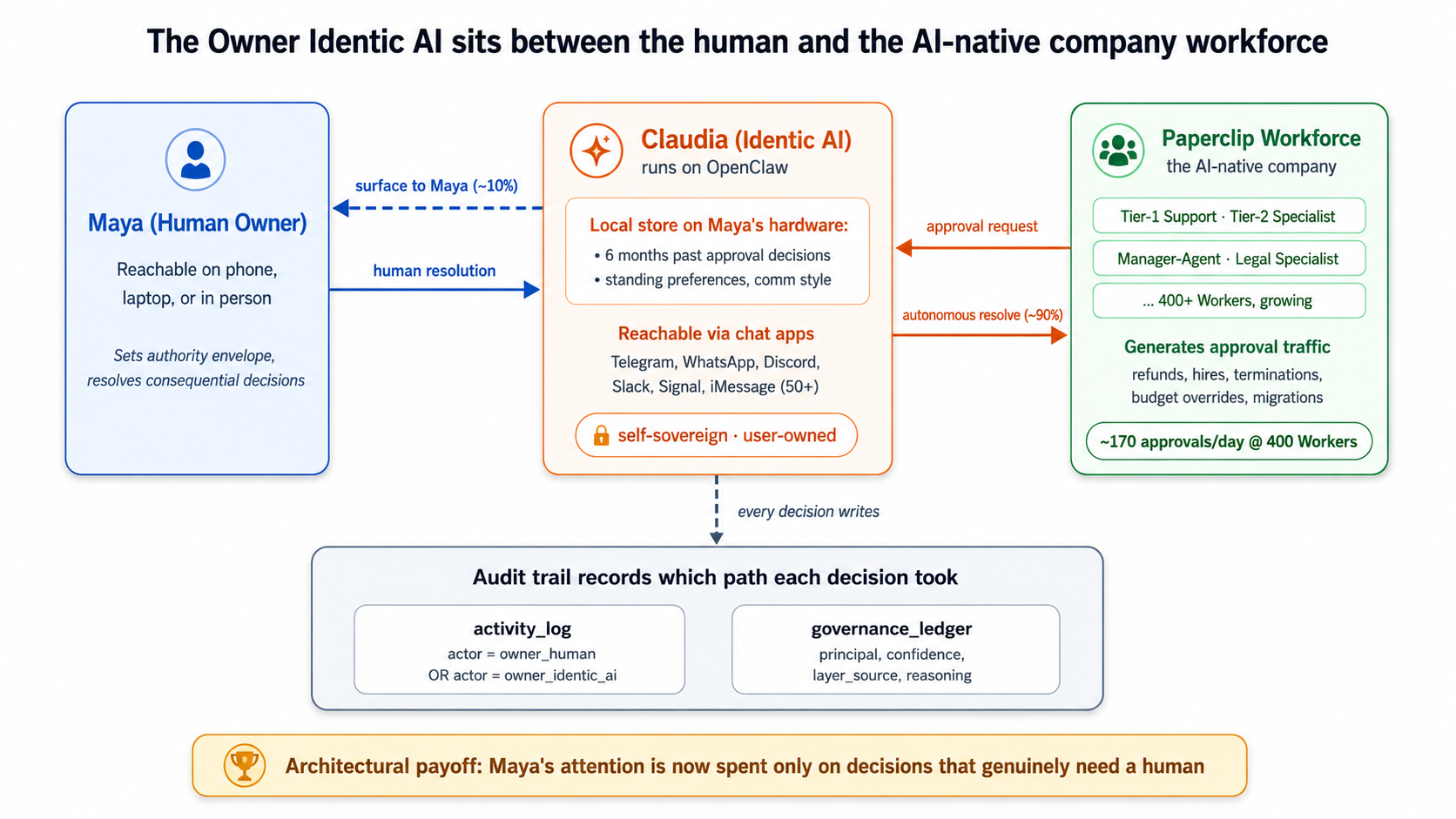

A continuation crash course. This is Course Eight of nine in the agentic-coding track, and it is the deep operationalization of Invariant 2 of the Agent Factory thesis: every human needs a delegate. Courses Five, Six, and Seven taught you to build, govern, and grow an AI-native company's hired Workers. What those three courses left unresolved is what happens when the workforce gets large enough that the human owner can no longer read every approval thread. Course Eight names this as the architecture's last bottleneck and teaches the trust-delegation pattern that removes it: how the owner's delegate (her Identic AI, Don Tapscott's term for a personal AI that carries its user's identity, preferences, and authority) takes routine governance traffic on her behalf, applies her judgment, and surfaces only the decisions that genuinely need her.

The single insight that makes everything else click: an AI-native company stops scaling at the owner's attention, not at the hiring API; the Owner Identic AI is the primitive that removes the owner as the bottleneck without removing the owner from control. Courses Five through Seven let you hire a thousand Workers. Nothing in those courses prevents the owner from drowning in approval threads when she does. Every Concept in Course Eight either expands what the delegate decides autonomously, or sharpens the seam where a decision must come back to the human. Both are the architecture.

The Agent Factory thesis names seven structural rules an AI-native company must obey. Invariant 2 says each human needs a personal AI agent, a "delegate," that holds their context, their judgment, and their authority on their behalf. Here is the architect's framing sentence, the one this entire course is built around:

"A founder can hire ten Workers and read every approval; a founder cannot hire a thousand Workers and read every approval. Without an Identic AI that acts on the owner's behalf, applying the owner's known judgment to routine decisions and surfacing only the ones that aren't routine, every previous invariant in the AI-native company caps out at the owner's attention. The owner's Identic AI is not a productivity tool. It is the only architectural answer to the question of how an AI-native company scales past its founder."

The thesis specifies OpenClaw as the delegate it ships. This course teaches how to configure OpenClaw for one specific use of the delegate: as the company owner's governance delegate, the agent that holds the owner's authority envelope and brokers approval traffic on her behalf. The course extends the thesis without contradicting it: the thesis defines the delegate; the course teaches what the delegate has to do, cryptographically and operationally, to safely absorb a workforce's worth of approval traffic.

Courses Three through Seven operationalized other invariants in depth: Three covered Invariant 4 (engine choice, the runtime each agent runs on), Four covered Invariant 5 (system of record via MCP), Five covered Invariant 7 (the nervous system with Inngest), Six covered Invariant 3 (the management layer with Paperclip), and Seven covered Invariant 6 (hiring as a callable capability). Course Eight completes Invariant 2 with the same depth. Course Nine then adds the cross-cutting discipline, eval-driven development, that turns every Worker, every hire, and every delegated decision into something measurably trustworthy in production.

Courses Five through Seven taught you to use Paperclip as the management layer, Inngest as the operational envelope, and Claude Managed Agents as the worked-example runtime for hired Workers. Course Eight introduces OpenClaw (openclaw.ai, github.com/openclaw/openclaw, docs.openclaw.ai) as the runtime for the owner's delegate, the same OpenClaw the Agent Factory thesis names in Invariant 2.

OpenClaw is an open-source personal AI assistant that runs on the user's local machine (Mac, Windows, or Linux), is reachable through chat apps the user already uses, and is built around persistent memory, user data sovereignty, and skill extensibility. It exposes 50+ integrations, including 15+ chat channels (WhatsApp, Telegram, Discord, Slack, Signal, iMessage, and more). Concept 4 walks through what OpenClaw actually is, why the thesis ships it as the delegate, and what alternatives would look like if you wanted to swap the runtime.

The delegated governance primitive Course Eight teaches, how an owner's Identic AI signs requests to the company's Paperclip workforce, is not a shipped integration between OpenClaw and Paperclip. It is an architectural pattern Course Eight teaches you to assemble using OpenClaw's skills system and Paperclip's verified-identity primitives. Both products ship the building blocks; the course wires them together.

- OpenClaw is fast-moving. The project is open source under an MIT license, which is a real continuity guarantee, but it is still adding skills, integrations, and primitives weekly, and its institutional position has shifted more than once in its first six months. The course teaches against the stable surface of openclaw.ai and docs.openclaw.ai as of May 2026; specific dates, version numbers, and integration counts should be verified against the official site before being treated as authoritative. Treat anything not on those URLs as in motion.

- The owner's judgment-learning loop, how the Identic AI learns the owner's values from their past approval decisions, is at the frontier of what's shipped today. Course Eight teaches the architectural commitments and the practical patterns that work in May 2026, and explicitly names which parts are open research (Concepts 13 through 15).

The four claims in order:

- An AI-native company stops scaling at the owner's attention, not at the hiring API. Courses Five through Seven let you hire a thousand Workers; nothing in those courses prevents the owner from drowning in approval threads when she does.

- The architectural answer is to give the owner an Identic AI, a personal AI delegate that lives on the owner's hardware, knows her judgment patterns, and acts on her behalf for routine decisions while surfacing the consequential ones.

- OpenClaw is the credible shipped runtime for this in May 2026. It is open source, user-owned, reachable through the owner's existing chat apps, and built around persistent memory of the user. The course teaches how to configure it as a governance delegate, not as a general-purpose assistant.

- The trust-delegation primitive, the architectural pattern that lets the human owner and the owner's Identic AI both act with authority while remaining distinguishable in the audit log, is the central technical move Course Eight teaches. It is what makes delegated governance safe rather than reckless.
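One way to picture the last claim is a signed decision record that names the principal who acted. The sketch below is illustrative only: it uses Ed25519 signing from Node's built-in `node:crypto` module, and every type name, field name, and the key registry itself are assumptions made for this example, not Paperclip's or OpenClaw's actual API.

```typescript
import { generateKeyPairSync, sign, verify, KeyObject } from "node:crypto";

// Hypothetical principal labels; a real audit log may name these differently.
type Principal = "owner_human" | "owner_identic_ai";

interface Decision {
  approvalId: string;
  verdict: "approve" | "decline";
  principal: Principal;
}

interface SignedDecision {
  decision: Decision;
  signatureB64: string;
}

// Serialize fields in a fixed order so signer and verifier see identical bytes.
function toBytes(d: Decision): Buffer {
  return Buffer.from(JSON.stringify([d.approvalId, d.verdict, d.principal]), "utf8");
}

function signDecision(d: Decision, privateKey: KeyObject): SignedDecision {
  // Ed25519 takes no separate digest algorithm, hence the null first argument.
  const signature = sign(null, toBytes(d), privateKey);
  return { decision: d, signatureB64: signature.toString("base64") };
}

// The verifier looks up the public key registered for the claimed principal.
function verifyDecision(s: SignedDecision, registry: Record<Principal, KeyObject>): boolean {
  const publicKey = registry[s.decision.principal];
  return verify(null, toBytes(s.decision), publicKey, Buffer.from(s.signatureB64, "base64"));
}

// Two separate key pairs: one for the owner herself, one for her delegate.
const ownerKeys = generateKeyPairSync("ed25519");
const delegateKeys = generateKeyPairSync("ed25519");
const registry: Record<Principal, KeyObject> = {
  owner_human: ownerKeys.publicKey,
  owner_identic_ai: delegateKeys.publicKey,
};

const signed = signDecision(
  { approvalId: "apr_001", verdict: "approve", principal: "owner_identic_ai" },
  delegateKeys.privateKey,
);
console.log(verifyDecision(signed, registry)); // true

// A delegate-signed record relabeled as the owner's does not verify.
const forged: SignedDecision = {
  ...signed,
  decision: { ...signed.decision, principal: "owner_human" },
};
console.log(verifyDecision(forged, registry)); // false
```

Because the principal label is part of the signed bytes and each principal has its own key, both can be authorized while the record still shows which one acted.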

In Course Seven, we built a small AI company that could hire a fourth AI Worker (the Legal Specialist) under approval from the human owner. That works when the company has four Workers. When the company has four hundred Workers, the human owner can't read every approval anymore; she would be reading approvals all day. This course teaches the owner how to have her own personal AI assistant (using an open-source tool called OpenClaw that runs on her laptop) that reads the approvals on her behalf, makes the easy decisions itself using patterns it has learned from her past decisions, and only wakes her up when something genuinely needs her judgment. The course also teaches the company's management system how to tell the difference between the owner herself clicking "approve" and the owner's personal AI clicking "approve" on her behalf, because both are authorized, but the audit trail has to distinguish them.

The architectural payoff: the owner's attention is now spent only on decisions that genuinely benefit from a human's judgment.

Courses Five through Seven recap: where things were left

If you've just finished Course Seven, skim and move on. If you're picking this up cold or it's been a while, the four pieces of context below are load-bearing for the rest of Course Eight.

From Course Five (the operational envelope): the company's Workers run inside Inngest's durable-execution wrapper, which gives them crash-safe runs, retry-on-failure, structured logging to two specific tables (activity_log and cost_events), and the step.wait_for_event durable-pause primitive. That Inngest primitive lets a Worker pause for hours or days without consuming compute during the wait. Paperclip's approvals, introduced in Course Six, are a separate mechanism: an approval is a decision record, not a paused Worker. Approving one does not auto-resume anything; continuing the work is an explicit next step. What Course Eight changes is the governance decision: the routine approval that used to wait for the owner's attention is now fielded first by the owner's Identic AI, and only the consequential ones reach the owner herself.

From Course Six (the management plane): the company runs on Paperclip, an open-source management layer (docs.paperclip.ing) that gives the workforce an org chart, authority envelopes (rules bounding what each Worker can do), approval gates (refunds over $500 require human approval), and a shared system of record. The Workers in the worked example are Tier-1 Support, Tier-2 Specialist, and Manager-Agent. Course Eight extends Paperclip once more, adding the delegated-approval recognition path.

From Course Seven (the hiring API): Paperclip exposes hiring as a callable capability. The Manager-Agent can detect a capability gap and propose a new hire, which routes through the same approval gate. Course Seven also introduces the talent ledger (an SQL-queryable audit stream of every hire, eval, and retirement) and the auto-approval policy primitive, which auto-approves routine hires that meet envelope, budget, and eval-pack thresholds. The company hired a fourth Worker, the Legal Specialist, running on Claude Managed Agents via Paperclip's http adapter.
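The three checks the auto-approval policy applies (envelope, budget, eval pack) can be pictured as a small pure function. This is a minimal sketch under stated assumptions: the `HireProposal` and `AutoApprovalPolicy` shapes, the field names, and the dollar figures are hypothetical and are not Paperclip's actual schema.

```typescript
// Hypothetical shape of a hire proposal drafted by the Manager-Agent.
interface HireProposal {
  role: string;
  extendsEnvelope: boolean; // true if the hire needs authority no Worker has today
  monthlyBudgetUsd: number;
  evalPackPassed: boolean;
}

// Hypothetical policy: a single budget ceiling for routine hires.
interface AutoApprovalPolicy {
  maxMonthlyBudgetUsd: number;
}

interface PolicyResult {
  autoApproved: boolean;
  blockers: string[]; // why the proposal must go to a human, if it must
}

function evaluateHire(p: HireProposal, policy: AutoApprovalPolicy): PolicyResult {
  const blockers: string[] = [];
  if (p.extendsEnvelope) blockers.push("envelope extension requires the owner");
  if (p.monthlyBudgetUsd > policy.maxMonthlyBudgetUsd) blockers.push("budget above auto-approval ceiling");
  if (!p.evalPackPassed) blockers.push("eval pack did not pass");
  return { autoApproved: blockers.length === 0, blockers };
}

// Illustrative values only.
const policy: AutoApprovalPolicy = { maxMonthlyBudgetUsd: 250 };
const routine = evaluateHire(
  { role: "Tier-1 Support (burst)", extendsEnvelope: false, monthlyBudgetUsd: 200, evalPackPassed: true },
  policy,
);
const extension = evaluateHire(
  { role: "Legal Specialist", extendsEnvelope: true, monthlyBudgetUsd: 200, evalPackPassed: true },
  policy,
);
console.log(routine.autoApproved, extension.blockers);
```

Anything that returns a non-empty `blockers` list is what still lands at the owner's keyboard.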

What's left at the human owner's keyboard after Course Seven: every approval that doesn't meet the auto-approval thresholds. Every envelope-extension hire. Every refund over the auto-approval ceiling. Every termination. Every CMA migration. Every standing-policy edit. This is the bottleneck Course Eight removes. Three terms recur throughout the course: Worker (an AI agent the company hired), envelope (the bounds on what a Worker is allowed to do), and Claude Managed Agents / CMA (the hosted-agent runtime Course Seven used for the worked example).

If any of the four feels shaky, the linked Course Five, Six, and Seven docs go all the way back to first principles.

Where this fits: cheat sheet

The 15 Concepts and 7 Decisions of Course Eight, at a glance:

| # | Concept | Part | One-line description |

|---|---|---|---|

| 1 | An AI-native company stops scaling at the owner's attention | Why | The math from Course Seven: about 5 to 10 approvals a day at 10 Workers; hundreds at 1,000. The owner becomes the bottleneck unless something acts on her behalf. |

| 2 | What Identic AI means: Tapscott's framing made concrete | Why | Five properties: personalized, value-reflecting, extension-of-self, self-sovereign, persistent memory. Distinguishes Identic AI from any other "AI assistant." |

| 3 | Why the owner specifically: not the workforce, not the customer | Why | Other Identic AI use cases exist (customer-side, employee-side), but the load-bearing case for the AI-native company is the owner's. The course teaches one well, not three poorly. |

| 4 | OpenClaw: what it actually is | Architecture | Verified from openclaw.ai. Local machine runtime, chat-app reachability, persistent memory, open source, user-owned data. |

| 5 | Persistent memory and the owner's local context | Architecture | Where the owner's accumulated judgment lives on her filesystem. The session primitive from docs.openclaw.ai. What persists, what doesn't. |

| 6 | Chat apps as the interface layer | Architecture | Why OpenClaw reaches the owner through WhatsApp, Telegram, and Discord rather than a web app. The owner is already in chat; the Identic AI lives where the owner is. |

| 7 | The trust-delegation problem | Governance | Two authorized principals (the owner-human and the owner's-Identic-AI). The Manager-Agent has to verify which one is acting and what authority that one carries. |

| 8 | Signed delegation from local credentials | Governance | What OpenClaw can sign with locally. What the Paperclip management layer verifies. Passkeys, hardware-backed keys, and the failure modes when the owner's machine is stolen. |

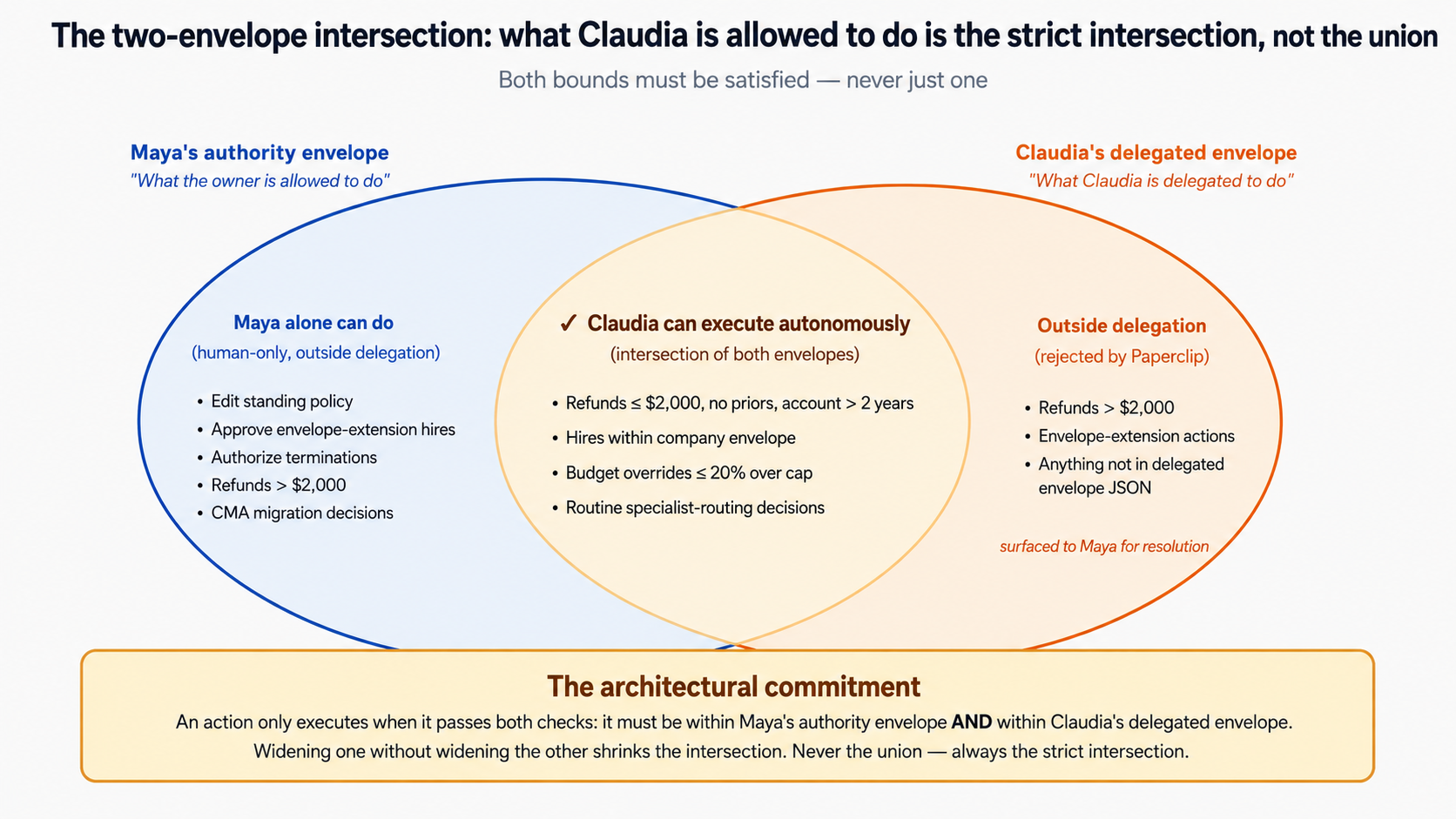

| 9 | The two-envelope intersection | Governance | The owner's authority envelope ("I can approve anything") meets the Identic AI's delegated envelope ("I can approve routine decisions up to these ceilings"). Their intersection is what executes. |

| Part 4 | The 7 lab Decisions | Lab | Install OpenClaw, onboard with the owner's context, build a Paperclip-integration skill, wire delegated approvals, demonstrate end-to-end, handle the owner-overrides case, test the device-switch and stolen-laptop cases. |

| 10 | What the Identic AI learns over six months | Audit | The owner's accumulated decision patterns. The judgment-learning loop. How OpenClaw's session system stores this. What makes a pattern teachable vs. what stays at the owner's level. |

| 11 | The governance ledger: the Identic AI's audit stream | Audit | A parallel audit log of what the Identic AI decided on the owner's behalf. The owner reads it weekly the same way the board reads Course Seven's talent ledger. |

| 12 | When the Identic AI's judgment and the owner's diverge | Audit | What happens when the Identic AI auto-approves something the owner would have declined. The recalibration loop. Why this is a healthy signal, not a failure. |

| 13 | Self-sovereign memory: where accumulated judgment lives long-term | Open | Three architectural options. What ships in May 2026. What Tapscott calls "reinventing the AI stack." Honest about what isn't solved. |

| 14 | Value alignment beyond pattern-matching | Open | The frontier: how an Identic AI learns the owner's values (the rules under the patterns), not just the patterns themselves. Research preview, not curriculum-ready. |

| 15 | What's next: the Identic AI economy and the eval discipline | Forward | The architectural completion at the end of Course Eight; Course Nine adds the eval discipline that makes the architecture measurably trustworthy. |

Are you ready for this course?

Five-item checklist. If any feels shaky, the linked refreshers below get you there.

- You completed Courses Five through Seven, or have built the equivalent: an Inngest-wrapped Worker, a Paperclip management layer with the approval primitive, and a working hiring API. Course Eight assumes the company-side architecture exists. If it doesn't, build it first.

- You can read TypeScript and shell scripts, even if you can't write them fluently. The lab uses TypeScript for the Paperclip-integration skill and shell for OpenClaw setup. Your AI assistant (Claude Code or OpenCode) types both; you brief, review, and approve. The briefing pattern from Courses Three through Seven continues unchanged.

- You have a Mac, Linux, or Windows machine you can install OpenClaw on. OpenClaw's one-line installer (`curl -fsSL https://openclaw.ai/install.sh | bash`) needs admin rights on first run for Homebrew on macOS. The lab depends on having a real running OpenClaw instance; there is no cloud-only shortcut for Course Eight.

- You have at least one chat app you can use for the lab: WhatsApp, Telegram, Discord, Slack, Signal, or iMessage. The course defaults to Telegram in examples because the OpenClaw docs do, but the choice is yours.

- You're comfortable with the idea of an AI making decisions on your behalf, even if you're skeptical about how far it should go. The course's central technical move is delegated authority; if you can't get past "the AI is approving things in my name," the lab won't land. Concept 12 addresses the failure cases directly; skim it before deciding.

If any of the five feel shaky, start with the linked refreshers before continuing. The course is dense; the prereqs make it feel light.

Course Eight closes the architectural side of the Agent Factory track; Course Nine adds the discipline of eval-driven development that turns the architecture into measurably trustworthy production behavior. If the five prerequisites above sound unfamiliar, work backwards: Course Seven: From Fixed to Dynamic Workforce is the direct prerequisite (the hiring API and the management plane Course Eight extends). Before that: Course Six: From One Worker to a Workforce (Paperclip plus the management plane), Course Five: From Digital FTE to Production Worker (Inngest plus the operational envelope), Course Three: Build AI Agents (the agent loop), and the PRIMM-AI+ chapter if you're new to AI-assisted coding entirely. Course Eight references Course Six and Seven concepts every page or two; coming in cold is harder than completing the on-ramp.

If you can't do the on-ramp right now but want to follow the concepts, you can fake the prerequisites with less than the full stack: read the Paperclip Quickstart and Approvals page; read the OpenClaw Getting Started; skim PRIMM-AI+ Lesson 1 for the prediction-then-run rhythm the course uses in every Concept. With those substitutes, you can follow Parts 1 through 3 and Parts 5 through 6 conceptually. The Part 4 lab requires both a working Paperclip install (from Course Seven) and a working OpenClaw install (from this course); there are no shortcuts.

Glossary: 26 terms a beginner can reference

Course Eight uses vocabulary from across the Agent Factory track plus several new terms specific to the Identic AI architecture. Terms grouped by what they describe.

People and roles

- Owner / Founder / CEO: the human principal who owns the AI-native company. Courses Six and Seven called this "the board" when the decision was governance-level; Course Eight uses "owner" to emphasize that we're talking about an individual human with an Identic AI, not a multi-person board.

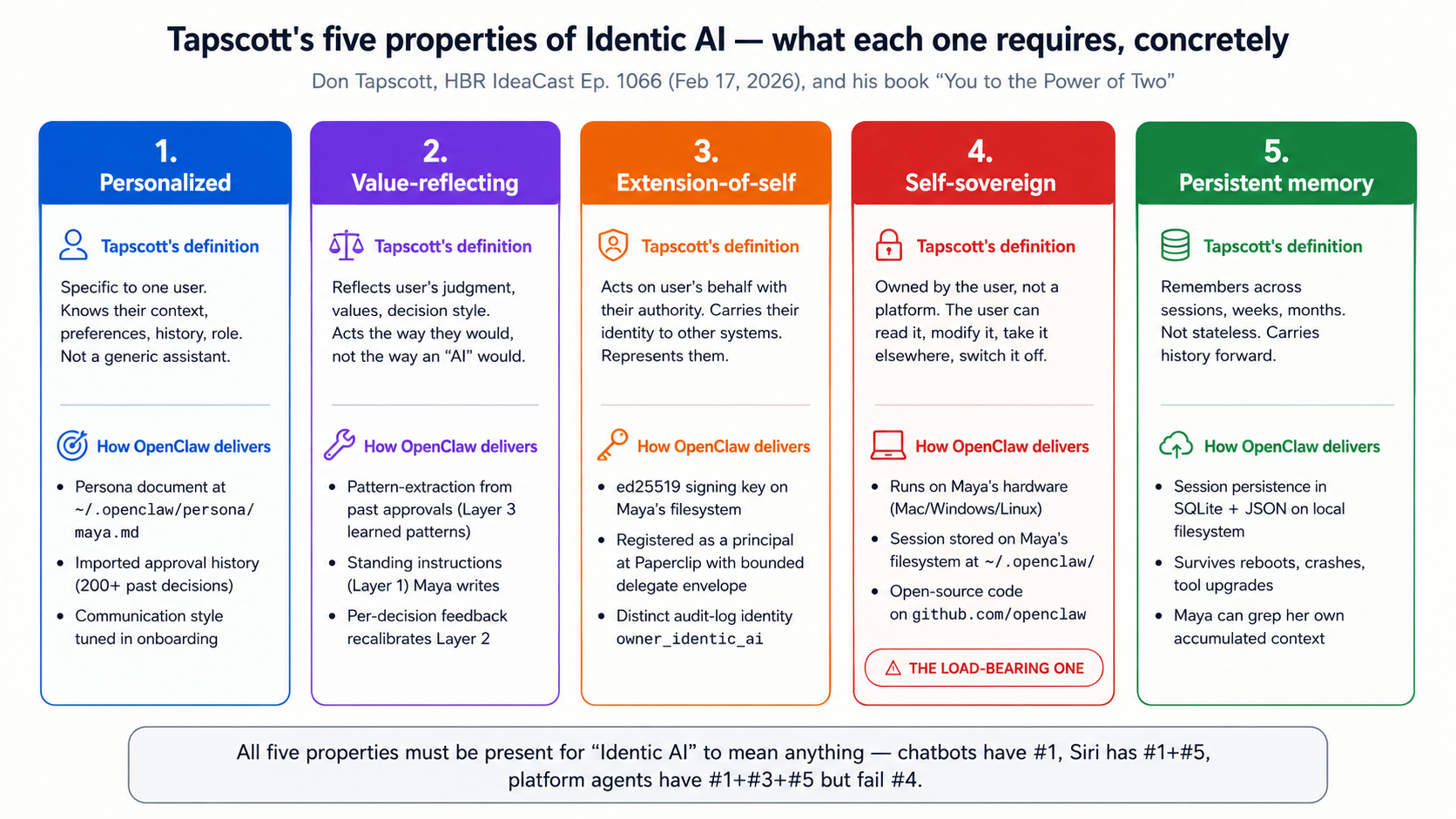

- Identic AI: Don Tapscott's term (HBR IdeaCast Ep. 1066, Feb 17, 2026, and his book You to the Power of Two) for a personalized AI that reflects its user's values, persists their context, and acts as an extension of them. Five properties: personalized, value-reflecting, extension-of-self, self-sovereign, persistent memory.

- Maya: Course Eight's worked-example owner. Founder and CEO of the customer-support company built across Courses Five through Seven. The course follows her through the lab.

OpenClaw primitives

- OpenClaw: open-source personal AI assistant (openclaw.ai). Runs on the user's local machine; reachable through chat apps; persistent memory; full system access; skills and plugins. Course Eight's worked-example runtime for Maya's Identic AI.

- Skill: OpenClaw's extensibility primitive. A skill is a unit of capability (read Gmail, control Spotify, talk to Paperclip). Skills can be written by the user, contributed by the community (ClawHub), or written by OpenClaw itself. Note: same word as Paperclip's skill primitive but a distinct concept; Paperclip skills install onto Workers, OpenClaw skills install onto the user's local OpenClaw.

- Session: OpenClaw's persistent-memory primitive (docs.openclaw.ai/concepts/session). The unit of accumulated context for a user across time, across devices, across chat apps. What makes OpenClaw an Identic AI rather than a stateless assistant.

- Onboard: OpenClaw's setup process. `openclaw onboard` walks the user through persona setup, model selection, chat-app integration, and initial skill installation.

- Companion App: OpenClaw's macOS menubar app (beta as of May 2026). An alternative to chat-app interaction; useful when the owner is at her desk rather than on her phone.

Course Eight's architectural concepts

- Owner Identic AI: the specific configuration of an Identic AI that acts as a governance delegate for an AI-native company owner. The course's central technical artifact. Maya's OpenClaw, configured per Course Eight's pattern, is her Owner Identic AI.

- Delegated governance: the load-bearing primitive of Course Eight. The pattern by which Maya's Owner Identic AI receives approval requests from the Paperclip management layer, applies Maya's known judgment to the routine ones, and surfaces only the consequential ones to Maya herself.

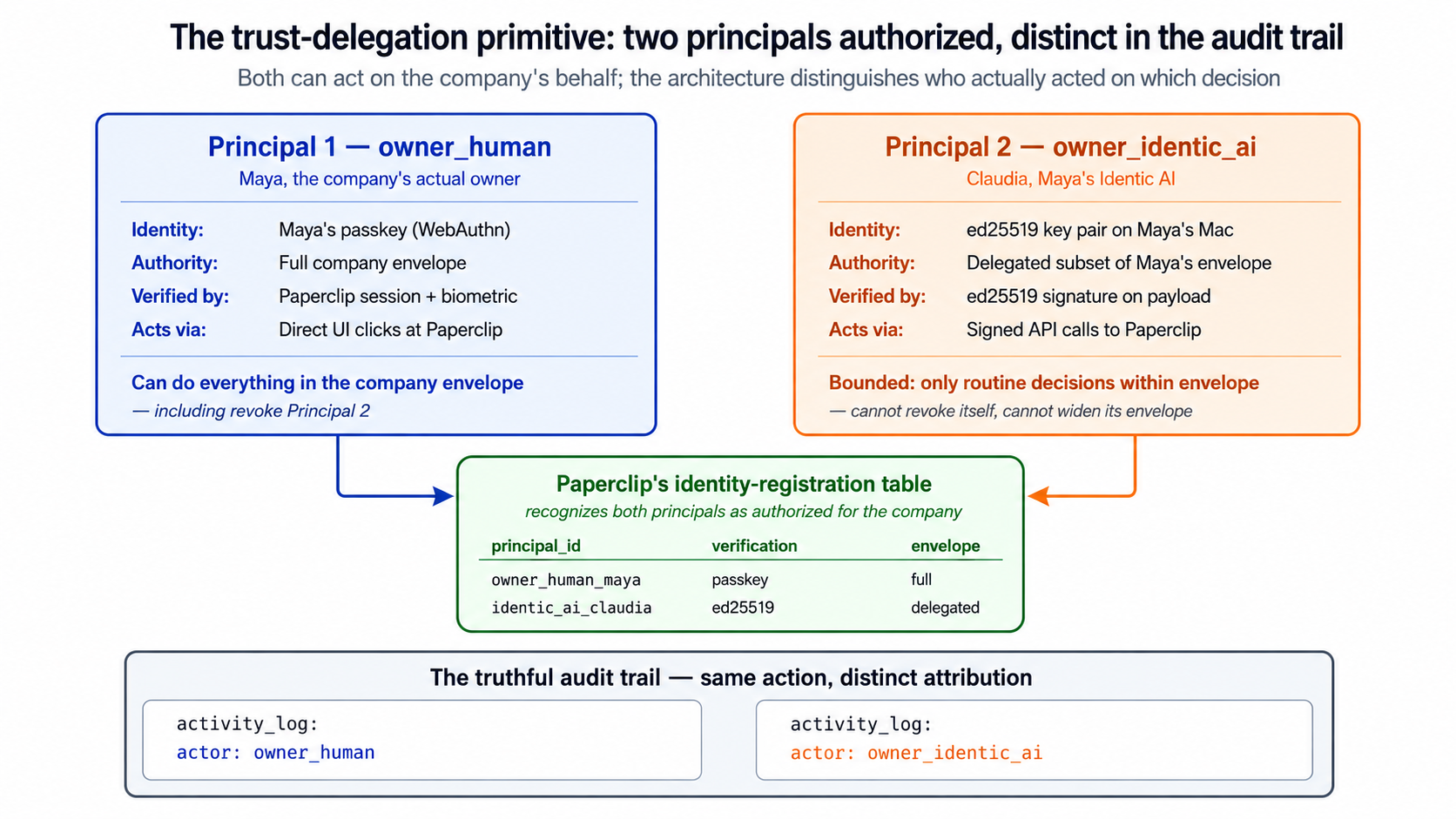

- Trust delegation: the verification mechanism that lets the Paperclip management layer distinguish between Maya-the-human and Maya's-Identic-AI when an approval click arrives. Both are authorized; the audit trail records which one acted.

- Owner-authority envelope: Maya's personal authority envelope (analogous to Course Six's authority envelope concept). What Maya herself can approve. Distinct from the Identic AI's delegated envelope.

- Identic AI's delegated envelope: the subset of Maya's authority that her Identic AI is allowed to exercise on her behalf. Set by Maya, recorded in the governance ledger, narrower than Maya's full authority by design.

- Governance ledger: Course Eight's parallel to Course Seven's talent ledger. The append-only audit stream of every decision Maya's Identic AI made on her behalf. Maya reads it weekly.

- Judgment-learning loop: how Maya's Identic AI accumulates a model of her decisions over time. Concept 10 covers the mechanics; Concept 14 covers the frontier of value alignment (the open research problem of going from patterns to values).

- Recalibration: the loop by which Maya corrects her Identic AI when its judgment diverges from hers. Concept 12 covers this. Recalibration events are themselves recorded in the governance ledger.
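The relationship between the owner-authority envelope and the delegated envelope can be sketched as an intersection. The code below is a minimal illustration under stated assumptions: an envelope is modeled as a map from decision category to a dollar ceiling, and the category names and ceilings are hypothetical, not values from Paperclip or from the lab.

```typescript
// Hypothetical model: category -> maximum USD the principal may approve.
// A category that is absent from the map is not approvable at all.
type Envelope = Record<string, number>;

// What actually executes is bounded by BOTH envelopes: a category must appear
// in each, and the lower of the two ceilings applies.
function intersectEnvelopes(owner: Envelope, delegated: Envelope): Envelope {
  const effective: Envelope = {};
  for (const category of Object.keys(delegated)) {
    if (!(category in owner)) continue;
    effective[category] = Math.min(owner[category], delegated[category]);
  }
  return effective;
}

function delegateMayApprove(effective: Envelope, category: string, amountUsd: number): boolean {
  return category in effective && amountUsd <= effective[category];
}

// Illustrative values: the owner can approve anything in these categories;
// the delegate is limited to routine refunds and small hires.
const ownerEnvelope: Envelope = {
  refund: Number.POSITIVE_INFINITY,
  hire: Number.POSITIVE_INFINITY,
  termination: Number.POSITIVE_INFINITY,
};
const delegatedEnvelope: Envelope = { refund: 1000, hire: 500 };

const effective = intersectEnvelopes(ownerEnvelope, delegatedEnvelope);
console.log(delegateMayApprove(effective, "refund", 750));      // true
console.log(delegateMayApprove(effective, "refund", 5000));     // false: above the delegated ceiling
console.log(delegateMayApprove(effective, "termination", 0));   // false: never delegated
```

Taking the minimum per category keeps the delegated envelope narrower than the owner's by construction, even if someone misconfigures the delegate with a ceiling the owner herself does not have.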

From Courses Three through Seven (referenced, defined more fully there)

- Worker: a single AI agent doing work for the company. Course Six defined this; Course Eight uses it throughout.

- Manager-Agent: the Worker that orchestrates other Workers. Course Six and Seven's protagonist; Course Eight's interlocutor for Maya's Identic AI.

- Paperclip: the management layer Course Six introduced and Course Seven extended. Course Eight extends it once more, adding the delegated-approval recognition path.

- Authority envelope: Course Six's mechanism for bounding what a Worker can do. The Course Eight analog is the owner-authority envelope plus delegated envelope split.

- Activity log: the append-only audit stream of every workforce action. Distinct from Course Eight's governance ledger (which is the Identic AI's audit stream).

- Approval: Course Six and Seven's governance gate. In Paperclip, an approval is a decision record, not a paused process: a board member or a registered agent records a decision on it. (`step.wait_for_event` is Inngest's durable-pause primitive from Course Five, a separate mechanism; Paperclip approvals are not wired to it.) Course Eight changes who fields the routine ones: Maya's Identic AI before Maya herself.

Thesis-level

- AI-native company: Course Six and Seven's thesis-level term for a company whose work is done primarily by AI Workers under human governance. Course Eight argues that the human governance part of this definition is incoherent at scale without an Owner Identic AI.

- Invariant 2 / the delegate: Invariant 2 of the Agent Factory thesis: every human needs a delegate. A personal agent that holds the user's identity, context, and authority envelope, and brokers all downstream work on the user's behalf. The thesis names OpenClaw as the delegate; Course Eight teaches how to configure that delegate as a governance delegate for an AI-native company owner.

- Self-sovereign: Tapscott's commitment that an Identic AI should be owned by the user, not a platform. Course Eight inherits this commitment; OpenClaw is the shipped product that operationalizes it.

Part 1: Why the owner is the bottleneck

The thesis of Courses Five through Seven was that an AI-native company can scale its workforce indefinitely. The hiring API is callable. The approval primitive is reusable. The talent ledger is queryable. Nothing in the architecture stops the company from hiring its tenth Worker, its hundredth, its thousandth.

But something does cap the architecture, and Course Seven left it implicit. Every consequential decision still routes to the human owner's attention. A company that can hire 1,000 Workers but expects one human to read 1,000 Workers' worth of approval threads is not scaling. It is moving the bottleneck from "recruiting" to "owner attention." Part 1 makes this argument concrete, then introduces the architectural response: the owner's Identic AI. Three Concepts.

Concept 1: An AI-native company stops scaling at the owner's attention, not at the hiring API

This is the heaviest concept in the course because everything else rests on it. If the argument here doesn't land, the rest of Course Eight reads like forward-looking speculation about personal AI. If the argument does land, the rest of Course Eight reads like solving a concrete problem the previous three courses created.

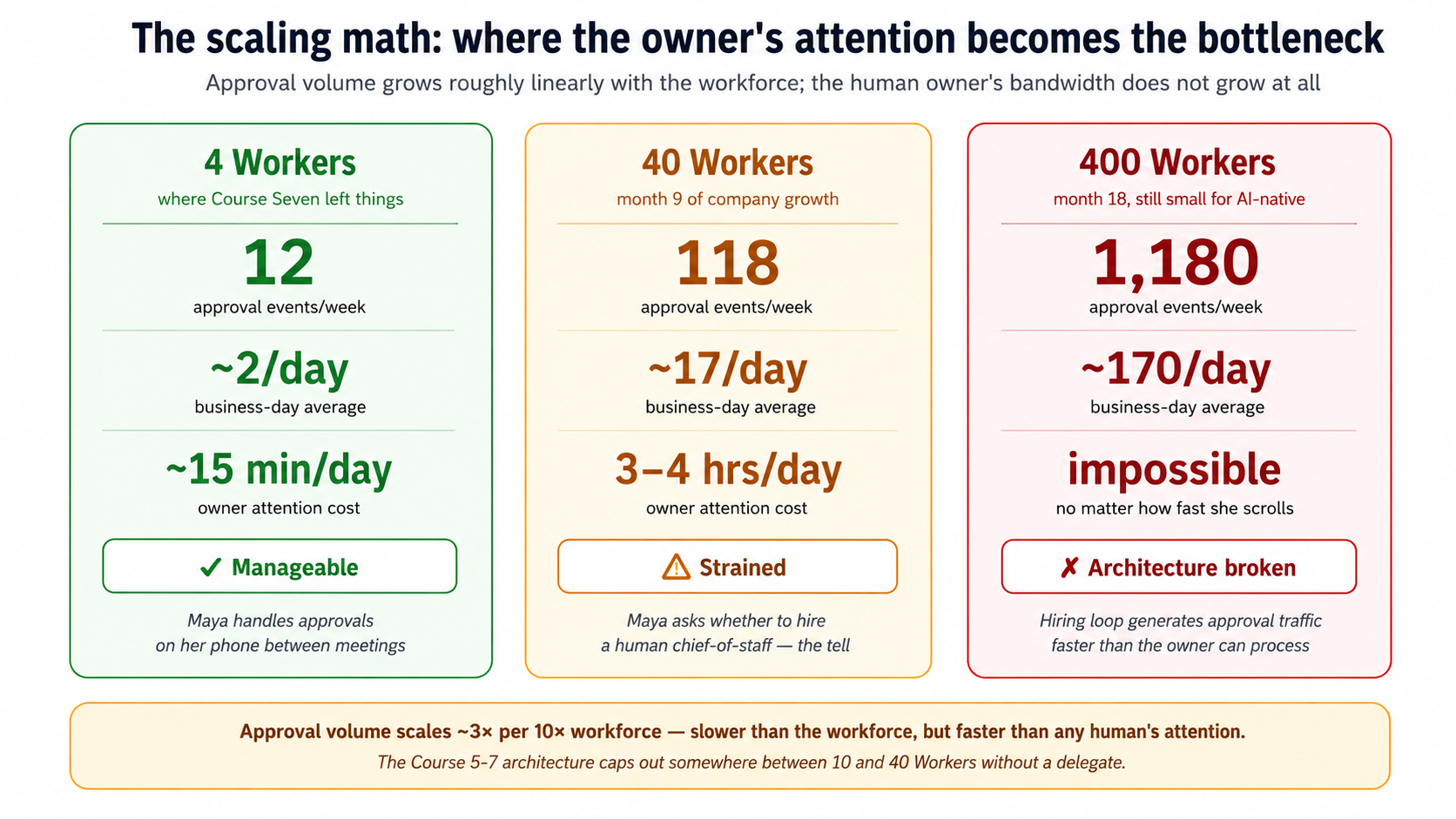

The argument is structural, but the easiest way to see it is to put numbers on it. We'll use Course Seven's actual worked example: the customer-support company built across Courses Five through Seven, which by the end of Course Seven has four Workers (Tier-1 Support, Tier-2 Specialist, Manager-Agent, and the Legal Specialist just hired in Course Seven's Decision 4). Maya, the company's founder and CEO, is the human owner. By the end of Course Seven, every consequential approval still pings Maya's phone.

The math at four Workers. Let's count what hits Maya's phone in a typical week with the Course Seven workforce, using realistic rates from the operational examples in Courses Six and Seven.

| Approval source | Per Worker per week | At 4 Workers |
|---|---|---|
| Refund > envelope ceiling (Course Six's refund_max=$500) | ~2 | ~8 |
| Envelope-extension hire (Course Seven's Concept 8: auto-approval can't bypass) | ~0.1 | ~0.4 |
| Termination decision | ~0.05 | ~0.2 |
| Budget override (Worker hit its monthly ceiling) | ~0.25 | ~1 |
| CMA migration or substrate change | ~0.05 | ~0.2 |
| Standing-policy edit (new auto-approval rule) | ~0.5 | ~2 |
| Total per week | ~3 | ~12 |

Twelve approval events per week. About two per business day. Maya can handle this on her phone between meetings. The owner-attention cost is real but manageable. This is the regime Course Seven's architecture was designed for.

The math at forty Workers. Now suppose Maya's company has grown over six months. The Manager-Agent has detected eight more capability gaps and hired Workers for each: a Billing Specialist, a Refund Analyst, an Onboarding Worker, a Churn-Risk Worker, three more Tier-2 Specialists for different product lines, and a Senior Legal Reviewer. The auto-approval policy from Concept 9 of Course Seven covers most Tier-1 burst hires. So far so good. But each Worker generates its own stream of consequential approvals.

| At 40 Workers | Per week |
|---|---|
| Refunds > envelope ceiling | ~80 |
| Envelope-extension hires | ~4 |
| Termination decisions | ~2 |
| Budget overrides | ~10 |
| CMA migrations / substrate changes | ~2 |
| Standing-policy edits | ~20 |
| Total per week | ~118 |

About 17 per day, seven days a week (closer to 24 if she only clears the queue on business days). Maya is now spending three to four hours daily reading approval threads. This is the regime where Maya starts asking whether she should hire a human chief-of-staff to triage the queue. That's a tell. The architecture is starting to fail the original thesis: Maya is being asked to add humans to scale the workforce.

The math at four hundred Workers. Suppose Maya keeps growing. By month 18, the company runs 400 Workers, still small for an AI-native company, but well past the size where the architecture from Courses Five through Seven was stress-tested.

| At 400 Workers | Per week |
|---|---|
| Refunds > envelope ceiling | ~800 |
| Envelope-extension hires | ~40 |
| Termination decisions | ~20 |
| Budget overrides | ~100 |
| CMA migrations / substrate changes | ~20 |
| Standing-policy edits | ~200 |
| Total per week | ~1,180 |

About 170 approval events per day, every day of the week (about 236 if they all land on business days). Maya cannot read 170 approval threads per day, no matter how fast she scrolls. The architecture has stopped working. The hiring loop is now generating more approval traffic than the owner can process. Maya has hit the owner-attention bottleneck.

The scaling math gives the bottleneck a number: an AI-native company hits its owner-attention ceiling somewhere between 10 and 40 Workers, well before any other scaling constraint binds.
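The three tables above are the same per-Worker rates multiplied by headcount, which a few lines of TypeScript make explicit. The rates are copied from the four-Worker table; the `dailyCapacity` parameter in the second function is an assumption introduced here for illustration (how many approvals one person can actually read in a day), not a figure from the course.

```typescript
// Approvals per Worker per week, from the four-Worker table.
const weeklyRatePerWorker: Record<string, number> = {
  refundOverCeiling: 2,
  envelopeExtensionHire: 0.1,
  termination: 0.05,
  budgetOverride: 0.25,
  cmaMigration: 0.05,
  standingPolicyEdit: 0.5,
};

// Weekly approval volume grows linearly with headcount.
function weeklyApprovals(workers: number): number {
  const perWorker = Object.values(weeklyRatePerWorker).reduce((sum, rate) => sum + rate, 0);
  return perWorker * workers;
}

// Largest workforce one reviewer can keep up with, given an assumed daily capacity.
function maxGovernableWorkers(dailyCapacity: number, daysPerWeek: number = 7): number {
  return Math.floor((dailyCapacity * daysPerWeek) / weeklyApprovals(1));
}

for (const workers of [4, 40, 400]) {
  console.log(`${workers} Workers: ~${Math.round(weeklyApprovals(workers))} approvals per week`);
}
// 4 Workers: ~12 approvals per week
// 40 Workers: ~118 approvals per week
// 400 Workers: ~1180 approvals per week
```

Because volume is linear in headcount while one person's reading capacity is fixed, any assumed `dailyCapacity` produces a hard ceiling; changing the assumption moves the ceiling but does not remove it.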

What are the architectural responses available to her? There are exactly three, and the course needs you to internalize that two of them are wrong before the third one (the Identic AI) reads as the answer rather than as a novelty.

Wrong response A: auto-approve more aggressively. Maya could expand the auto-approval policy from Concept 9 of Course Seven to cover more categories. Currently the policy auto-approves Tier-1 burst hires under a $250/month envelope; she could raise the ceiling to $1,000/month, or include refund decisions up to $2,000. This would reduce the queue. It would also abandon the safety property that the entire seven-invariant thesis is built on. The reason Course Seven kept the envelope-extension check outside the auto-approval surface (Concept 8) is that any authority a Worker has that no Worker had before is a decision the human has to consciously make. Auto-approving more aggressively reverses that commitment. It says: we can't be bothered to govern at scale, so we'll declare that scale doesn't need governance. That's the AI-native equivalent of an unreviewed pull-request culture. It works until it doesn't.

Wrong response B: add humans to the approval pool. Maya could hire a human chief-of-staff to triage approvals, or appoint two co-founders as additional approvers. This would reduce the per-person queue. It would also reintroduce the org-chart hierarchy that AI-native companies were supposed to flatten. Course Six's whole architectural argument was that the Manager-Agent absorbs the middle-management coordination layer; if Maya now adds a human management layer above the Manager-Agent to handle approvals, she has rebuilt the company shape Courses Five through Seven spent three courses removing. Worse: each human she adds has the same scaling ceiling she does. Two co-founders process about 30 approvals per day instead of 17. Three handle about 50. The architecture still caps out at about 10 times the workforce per human; it just caps out at a slightly higher number. You cannot scale an AI-native company by adding humans to its governance loop. If you could, it wouldn't be AI-native.

Right response: the owner's Identic AI. A personal AI delegate, running on Maya's hardware, that has learned Maya's approval patterns from her past 200 decisions. When a new approval request arrives, the Identic AI either resolves it autonomously (when Maya's own pattern is clear and the request is routine) or surfaces it to Maya (when the request is novel, consequential, or outside the patterns the Identic AI has confidently learned). Maya's attention is now spent only on the decisions that genuinely benefit from a human's judgment, and the workforce can grow without re-creating the bottleneck.

Notice what this response does not do. It does not auto-approve under a policy Maya wrote once and forgot about. It applies Maya's judgment, which the Identic AI has accumulated from watching Maya decide. It does not delegate the authority: Maya remains the principal; the Identic AI acts on her behalf, with her explicit consent, and every action is recorded in an audit stream Maya reviews. The owner remains in the loop; the owner's attention does not remain in the loop on routine traffic.

This is the architectural primitive Invariant 2 of the thesis names: the delegate. The thesis says it abstractly ("every human needs a delegate that holds their context, represents their judgment, carries their authority envelope, and brokers all downstream work on their behalf"). Course Eight operationalizes it concretely for the owner of an AI-native company. Without it, the previous six operationalized invariants (engines, system of record, nervous system, management layer, hiring API) cap out at a workforce of a few dozen. With it, the architecture scales to the size of the workforce, not the size of the owner's calendar.

You're Maya. Your company has 80 Workers at month nine. The auto-approval policy from Concept 9 of Course Seven covers Tier-1 burst hires. The Manager-Agent's gap-detection signals fire on a new pattern: an unexpected volume of Spanish-language customer questions over three weeks. The Manager-Agent drafts a hire proposal for a Spanish-Language Tier-2 Specialist. The proposed authority envelope is identical to the existing English-language Tier-2 (no envelope-extension check needed). The proposed budget is $800/month, well within Course Seven's auto-approval ceiling. The eval pack passes.

Two predictions, written separately on paper before you read on:

- Under Course Seven's auto-approval policy (Concept 9 of Course Seven, which only checks envelope, budget, and eval-pack thresholds): does this hire get auto-approved without involving Maya? Predict yes or no.

- Under what Course Eight will teach (the Owner Identic AI applying Maya's accumulated judgment): does Maya's Identic AI auto-approve this, or surface it to Maya? Predict surface or auto-approve.

If your two predictions are the same, you haven't yet seen the distinction Course Eight is built on. If they're different, you've seen it. Either is fine; read on.

Answers:

- Yes, Course Seven's auto-approval policy fires. The hire fits the policy's stated criteria: known envelope, eval pack passes, within budget ceiling. The policy has no way to know this hire is different from a routine burst-capacity hire.

- The Owner Identic AI surfaces it to Maya, not because Course Seven's policy is wrong, but because the Identic AI encodes a class of judgment Course Seven's policy cannot. Spanish-language support is a strategic expansion of Maya's market, not just additional capacity. Maya might want to think about whether the company is ready to commit to bilingual support contractually, whether the privacy policy and terms of service need Spanish translations, whether this hire signals a broader product direction.

The distinction is rule vs. judgment. A rule can be encoded once and applied uniformly. A judgment is learned from the owner's past decisions and applied per-situation. Course Seven's policy is a rule; the Owner Identic AI is a judgment-applier. Both are valuable: the rule handles 90% of routine cases at zero owner-attention cost, the judgment handles the remaining 10% that genuinely benefit from a human-trained pattern. This is what the course will teach you to build.
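The rule-versus-judgment split can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Course Seven's actual policy or any OpenClaw API: `HireProposal`, `rule_auto_approves`, `delegate_decides`, the tag-based novelty check, and the $1,000 ceiling are all hypothetical, chosen only so the scenario's $800 proposal falls under the ceiling as the scenario describes. A real delegate would learn from Maya's decisions rather than compare tags.

```python
from dataclasses import dataclass, field


@dataclass
class HireProposal:
    role: str
    monthly_budget: float
    envelope_extends_authority: bool  # True if the hire needs authority no Worker has had
    eval_pack_passed: bool
    tags: set[str] = field(default_factory=set)  # hypothetical descriptors of the hire


def rule_auto_approves(p: HireProposal, budget_ceiling: float) -> bool:
    """Rule: checks only envelope, budget, and eval pack, uniformly for every hire."""
    return (
        not p.envelope_extends_authority
        and p.monthly_budget <= budget_ceiling
        and p.eval_pack_passed
    )


def delegate_decides(p: HireProposal, budget_ceiling: float, seen_tags: set[str]) -> str:
    """Judgment stand-in: surface anything unlike what the owner has decided before."""
    if not rule_auto_approves(p, budget_ceiling):
        return "surface"
    novel = p.tags - seen_tags  # descriptors absent from the owner's past decisions
    return "surface" if novel else "auto-approve"


# Tags drawn from Maya's past decisions (illustrative values only).
maya_seen = {"tier-1", "tier-2", "burst-capacity", "english-support"}

spanish_hire = HireProposal(
    role="Spanish-Language Tier-2 Specialist",
    monthly_budget=800,
    envelope_extends_authority=False,
    eval_pack_passed=True,
    tags={"tier-2", "spanish-support"},
)

print(rule_auto_approves(spanish_hire, budget_ceiling=1_000))      # True
print(delegate_decides(spanish_hire, 1_000, seen_tags=maya_seen))  # surface
```

The two functions agree on routine hires and diverge only when the proposal carries something the owner has not decided on before, which is the 10% the judgment layer exists for.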

Bottom line: Concept 1's math gives the workforce-vs-attention bottleneck a number. The AI-native company hits its owner-attention ceiling somewhere between 10 and 40 Workers, well before any other scaling constraint. The delegate primitive Invariant 2 names is what removes the cap, not by reducing the number of decisions, but by routing routine ones through the owner's known judgment instead of the owner's actual attention. Two architectural responses are wrong (auto-approve more aggressively; add humans to the loop) and only one is right (the delegate). The math is the case for why.

Concept 2: What Identic AI means: Tapscott's framing made concrete

The architectural primitive that solves the bottleneck is Identic AI, as defined by Don Tapscott in his HBR IdeaCast interview (Episode 1066, February 17, 2026) and his book You to the Power of Two: Redefining Human Potential in the Age of Identic AI. Course Eight inherits Tapscott's vocabulary because it's the cleanest available framing for what we're building, and because adopting it ties the course to a current management-discourse conversation rather than coining yet another term.

Tapscott's definition, verbatim from the transcript:

"the rise of intelligent companions that really learn who we are, and they reflect our values and ultimately operate as extensions of ourselves... a subset of agentic AI, we call them identic AI"

Five properties he names, each of which Course Eight will return to:

- Personalized: a single individual's agent, not a workforce agent. Maya's Identic AI is Maya's. There is no shared instance, no team account, no organizational tier. The unit is one human, one Identic AI.

- Reflects user's values and judgment: trained on the user's documents, decisions, communications, accumulated context. The Identic AI is not a generic AI assistant configured with the user's name; it is an AI that has learned the user over time.

- Extension of self: meant to feel like extended cognition, not a tool the user invokes. In Tapscott's framing: "it's becoming a part of the human experience." In Maya's case: she does not "log in to her Identic AI" the way she logs in to Paperclip. The Identic AI is reachable in her chat apps, on her phone, in her menu bar; it acts when she acts; it knows what she's working on.

- Persistent memory: accumulates knowledge of the user continuously. Tapscott's example from the transcript: "For digital Don, for example, I've input about 500 documents, everything I could find that I've written my speeches, my PowerPoints, my books and articles and interviews and all kinds of stuff like that. And it's learning about me and how I view things and how I think about things."

- Self-sovereign: user-owned, not platform-owned. Tapscott's emphasis throughout the transcript: "identic AI needs to be self-sovereign. We need to own our own superintelligence." The accumulated context, the learned patterns, the judgment model: these belong to the user. They are not held hostage by a platform. They survive the user changing devices, changing employers, changing chat-app preferences.

All five properties must be present together; the fifth, self-sovereign, is the one most easily abandoned and the one that determines the runtime choice in Concept 4.

These five properties are the discriminating definition. They distinguish Identic AI from:

- General-purpose AI assistants (which lack persistent memory and don't reflect the user's specific judgment)

- Workforce agents (which are owned by a company, not by an individual; Courses 5-7's Workers are workforce agents)

- Customer-side AI agents (which serve a user but are typically platform-owned and platform-hosted; they fail the self-sovereign property)

- Personal assistants without learning (a chat-app bot that schedules meetings is helpful but doesn't accumulate the user's judgment over time)
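One way to hold the discriminating definition in mind is as a checklist in which all five properties must pass. The sketch below is illustrative only: `AgentProfile` and `missing_properties` are hypothetical names, and reducing each property to a boolean is a simplification of the prose above.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class AgentProfile:
    personalized: bool
    reflects_values: bool
    extension_of_self: bool
    persistent_memory: bool
    self_sovereign: bool


def missing_properties(profile: AgentProfile) -> list[str]:
    """Return the names of the properties the agent lacks (empty list = Identic AI)."""
    return [f.name for f in fields(profile) if not getattr(profile, f.name)]


# A platform-hosted customer-side agent. Only the self-sovereign failure comes
# from the text; the other fields are assumed True for the sake of the example.
platform_agent = AgentProfile(True, True, True, True, self_sovereign=False)

# An agent that passes every check.
owner_delegate = AgentProfile(True, True, True, True, True)

print(missing_properties(platform_agent))  # ['self_sovereign']
print(missing_properties(owner_delegate))  # []
```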

The discriminator most readers under-weight is self-sovereignty. Tapscott returns to it repeatedly because, in his view, the AI industry's default trajectory is toward platform-owned Identic AI and against user-owned Identic AI. From the transcript: "The biggest question for me is who's going to own digital Adi? Mark Zuckerberg? Google? This is an extension of you and your intelligence. And if they own it, that's a big problem." If Maya's Identic AI is hosted by a platform that can read her judgment patterns, modify them, downrank them, or revoke her access to them, then her Identic AI is not actually acting on her behalf. It is acting on the platform's behalf with Maya's data. Course Eight commits to the self-sovereign property as a non-negotiable architectural property, and that commitment is what determines the runtime choice in Concept 4 (OpenClaw, because it runs on Maya's hardware and stores her context on her filesystem, not as an opt-in, as the default architecture).

How Identic AI maps onto Course Eight's specific use case. Course Eight is not teaching general-purpose Identic AI. It is teaching one specific configuration: the Owner Identic AI, an Identic AI configured to act as a governance delegate for the owner of an AI-native company. Other valid Identic AI configurations exist (a personal Identic AI that runs your household, manages your calendar, drafts your email; Tapscott's "digital Don" example fits this) and Course Eight will return to them briefly in Concept 15 as the broader Identic AI economy the architecture enables. But the course's load-bearing example is Maya's Identic AI in its capacity as her governance delegate. That capacity is what operationalizes Invariant 2 for the AI-native company, and that's the capacity Course Eight teaches.

The four lower-numbered properties (personalized, value-reflecting, extension-of-self, persistent memory) are operational requirements; the fifth (self-sovereign) is an architectural commitment. Without the fifth, the other four still work, but they work against the owner rather than for her. Course Eight teaches all five, and treats the fifth as the load-bearing one.

Bottom line: Identic AI, in Don Tapscott's framing, is a personalized AI that reflects its user's values, persists their context across time, acts as an extension of them, and remains owned by the user, not by a platform. Five properties; the fifth (self-sovereign) is the one most easily abandoned and the one the course refuses to compromise on. Owner Identic AI is the specific configuration Course Eight teaches: an Identic AI configured to act as the AI-native company owner's governance delegate.

Concept 3: Why the owner specifically: not the workforce, not the customer

A reasonable reader at this point can ask: if Identic AI is so important, why is the course only teaching the owner's? Why not teach customer-side Identic AI, or employee-side Identic AI, or peer-to-peer Identic AI interactions, or the Identic AI economy more broadly? The answer is that Course Eight makes a deliberate scope choice, and the reasoning matters.

There are at least four categories of Identic AI use case relevant to an AI-native company:

| Use case | Whose Identic AI | What it does | Maturity in May 2026 |
|---|---|---|---|
| Owner / governance delegate | The owner of an AI-native company | Pre-filters approval traffic, applies owner's judgment, surfaces consequential decisions | Shipped (OpenClaw plus the pattern Course Eight teaches) |
| Customer-side personal AI | An individual customer | Interacts with companies on the customer's behalf, holds the customer's context, makes purchases | Partially shipped (OpenClaw exists; companies don't yet accept signed customer-Identic-AI requests as a normal interaction channel) |
| Employee-side delegate | An employee of an AI-native company | Drafts emails, prepares for meetings, manages the employee's own work delegated by the workforce | Partially shipped (OpenClaw can do this; integration with company workflows varies) |
| Peer-to-peer / Identic AI economy | Two individuals' Identic AIs interacting directly | Negotiate deals, schedule, coordinate without humans in the loop | Speculative; Tapscott's "infinite number of vice presidents" end-state |

Course Eight teaches the first one (the owner's) and does not teach the others. Three reasons:

First, the load-bearing argument from Concept 1 is about the owner specifically. The scaling-impossibility math doesn't apply to customers or employees in the same way. A customer with no Identic AI is mildly inconvenienced; the company workforce can still serve them through traditional channels. An employee without an Identic AI works the same way employees always have; productivity is lower than it could be but the company functions. Only the owner's bottleneck stops the company from scaling. The course's claim, that the Owner Identic AI is what operationalizes Invariant 2 for an AI-native company, is true specifically because the owner's case is the one that makes the architecture incoherent without it. The other cases are valuable but not load-bearing.

Second, the customer-side Identic AI use case isn't fully shipped yet, and the architecture has open gaps Course Eight cannot honestly close. Tapscott's transcript gestures at the customer-side use case ("a doctor that's been to every medical school in the world, your tutor that's literally a know-it-all") but the trust-delegation between a stranger's Identic AI and a company's workforce is a harder open problem than the owner's case. With Maya, both the owner-authority envelope and the Identic AI's delegated envelope are configured by Maya herself; the trust model is one-party. With an arbitrary customer, the company has to verify identity, authority, and authorization across an untrusted boundary; the trust model is multi-party with no shared root. Courses 5-7 didn't teach that level of cross-party trust either; teaching it in Course Eight would require introducing primitives the rest of the track doesn't depend on. Honest pedagogy: teach the load-bearing case well; gesture at the broader case as the open frontier.

Third, a course that tries to teach all four cases teaches none of them well. The track's pattern across Courses 3-7 is to teach one thing deeply, with a complete worked example, and to gesture at adjacent cases in sidebars and the forward-look section. Course Six taught the workforce, not the broader AI-native organization. Course Seven taught the hiring API, not the full lifecycle including offboarding to outside counsel. Course Eight teaches the Owner Identic AI, not the broader Identic AI economy. Concept 15 returns to the broader picture as the closing forward-look, naming the customer-side and employee-side and peer-to-peer cases as the next architectural frontiers. But the lab, the worked example, the 15 Concepts of teaching: all about Maya's case.

A useful test for whether Course Eight made the right scope choice: if a reader finishes Course Eight and successfully sets up Maya's Owner Identic AI in their own AI-native company, the architecture works at scale. The owner's attention is no longer the bottleneck. The seven invariants from Courses 3-7 are now complete. If the same reader wants to extend Owner Identic AI patterns to other use cases (a customer-side Identic AI in their product, an employee-side delegate for their team), they have the architectural tools to do so, and Course Eight names where each extension is shipped vs. open. The course delivers the load-bearing case completely and names the rest honestly. That's the pedagogical commitment.

Course Eight teaches the Owner Identic AI and not the other three use cases. Each option below is a real argument someone could make for that scope choice. Predict which one is the course's actual reasoning, before you read on. The point of the prediction is that more than one of these is defensible; you have to pick the one that matches the course's central argument specifically.

(a) The owner's case is the only one mature enough to ship: customer-side and peer-to-peer Identic AI need cross-party trust primitives that don't exist in May 2026, so the course teaches what's buildable today.

(b) The owner's case is the load-bearing one: Concept 1's scaling math shows that without the Owner Identic AI, Courses 5-7's architecture itself is incoherent past a few dozen Workers. The other cases are valuable but the company still functions without them.

(c) The owner's case is the pedagogically simplest: it's a one-party trust model (Maya configures both envelopes herself), so it's the cleanest place to teach the trust-delegation primitive before the harder cases.

(d) The owner's case has the clearest commercial demand: AI-native company owners are the buyers who will pay for a governance delegate first, so the course teaches to the market.

Answer: (b). All four contain something true, which is why this is a real prediction and not a reading check. (a) is true (Concept 3 says exactly this about customer-side maturity) but it's a supporting reason for the scope choice, not the central one. (c) is true (Concept 3 names the one-party trust model as a teaching advantage) but again it's a benefit, not the load-bearing reason. (d) may well be true but the course makes no commercial-demand argument. The course's actual reasoning is (b): the owner's bottleneck is the one whose absence breaks the architecture. Concept 1's math is the case. The customer-side, employee-side, and peer-to-peer cases are valid Identic AI configurations, but the company functions without them; only the owner's case makes Courses 5-7 incoherent at scale. Course Eight teaches the load-bearing case completely; Concept 15 names the others as the open frontier.

Bottom line: Course Eight teaches one specific Identic AI configuration, the Owner Identic AI, because that's the use case whose absence makes Courses 5-7's architecture incoherent at scale. Other Identic AI cases (customer-side, employee-side, peer-to-peer) are valuable but not load-bearing for the AI-native company's scaling argument. The course delivers the load-bearing case completely and names the rest honestly in Concept 15.

Part 2: The OpenClaw runtime

Part 1 named the problem and committed to a scope. Part 2 introduces the runtime, OpenClaw, and walks through what it actually is, where it lives, and how Maya talks to it. Three Concepts: what OpenClaw is, verified from the official source (Concept 4); where Maya's accumulated context lives on her filesystem (Concept 5); and why OpenClaw chose chat apps over a web app as the interface layer (Concept 6).

Concept 4: OpenClaw, what it actually is

OpenClaw is the runtime Course Eight teaches against. Before any of the architectural patterns in Concepts 7 through 15 make sense, you need a clean mental model of what OpenClaw actually is, verified from openclaw.ai and docs.openclaw.ai, not from pattern-matching against earlier personal-AI products.

The verified facts, drawn directly from the official site and docs:

- Runs on the user's local machine. Mac, Windows, or Linux. The installer is `curl -fsSL https://openclaw.ai/install.sh | bash` or `npm i -g openclaw`. After install, `openclaw onboard` walks the setup.

- Open source. github.com/openclaw/openclaw. MIT-licensed. The user can read, fork, modify, and self-host.

- Reachable through chat apps the user already uses. OpenClaw lists 50+ integrations, including 15+ chat channels (WhatsApp, Telegram, Discord, Slack, Signal, iMessage, and more) at openclaw.ai/integrations. The user does not install a new app; they message OpenClaw in their existing chat app.

- Persistent memory. The site's headline phrase: "Remembers you and becomes uniquely yours. Your preferences, your context, your AI." The `session` primitive (docs.openclaw.ai/concepts/session) is the unit of accumulated context.

- Full system access on the user's machine. Read and write files, run shell commands, execute scripts (docs.openclaw.ai/bash). Full access or sandboxed: the user's choice.

- Browser control. Can browse the web, fill forms, extract data from any site (docs.openclaw.ai/browser).

- Skills and plugins. Extensible with community skills (clawhub.ai) or user-built ones (docs.openclaw.ai/skills). OpenClaw can even write its own.

- Model-agnostic. Anthropic, OpenAI, or local models. The user picks during onboarding.

- Companion app. A macOS menubar app (beta in May 2026) for desktop access alongside the chat-app interface.

Institutional signals worth noting. OpenClaw's most load-bearing signal is structural rather than reputational: the project is open source under an MIT license. That license is a continuity guarantee, because the codebase at github.com/openclaw/openclaw can be forked and self-hosted by anyone, regardless of what happens to the project's stewards. Beyond the license, the project has a very large GitHub following (hundreds of thousands of stars), and it has major-vendor sponsorship; as of May 2026 the sponsor list includes OpenAI, GitHub, NVIDIA, Vercel, and Convex, though a sponsor list is the kind of fact that shifts, so treat it as an as-of-May-2026 example rather than a load-bearing claim. The founder, Peter Steinberger, joined OpenAI in early 2026 while the project continues as an open-source effort. Specific counts and sponsor details should be verified at openclaw.ai and the project's GitHub before being quoted authoritatively.

Why these signals matter for Course Eight. Course Eight's central architectural commitment is self-sovereignty: Maya's accumulated judgment is hers, not a platform's. The risk with any open-source personal-AI project is that it shuts down, gets acquired into a closed-source product, or pivots its data-ownership model. The signal that pushes hardest against that risk is the MIT license itself. Institutional backing (the major sponsor list, the founder's hire by OpenAI) indicates the project has resources and an industry stake, but sponsorship and hiring can both change. Open source is the durability guarantee that survives a change of stewards: even if the project's direction shifts, the codebase can be forked and self-hosted, and Maya's runtime can continue. None of these signals is a proof of long-term durability. Together, with the MIT license as the anchor, they make OpenClaw the most credible self-sovereign Identic AI runtime to build a curriculum against in May 2026.

The mental model: how OpenClaw differs from a chatbot. A reader coming to OpenClaw from ChatGPT or Claude.ai has a mental model of "an AI in a chat window I open when I want to ask something." That model is wrong for an Identic AI in four specific ways:

| Mental model | Chatbot | Owner Identic AI (OpenClaw) |
|---|---|---|
| When does the AI act? | Only when the user explicitly opens the app and types | Continuously, in the background; proactively when the user has standing instructions; via heartbeat checks |
| Where does the AI live? | In a tab the user opens | In the user's chat apps already (WhatsApp, Telegram) and in the user's filesystem |
| What does the AI remember? | Within-conversation context that's discarded between sessions | Persistent session across all interactions, all devices, all time |
| Who initiates? | The user, every time | Either party: the user can ask, and the AI can also surface things proactively |

The fourth row is the most important and the easiest to miss. In a chatbot, the user is always the initiator. In an Identic AI, the AI is also an initiator. Claudia can send Maya a Telegram message at any moment ("a customer's refund request just arrived; based on our patterns, this is one I'd auto-approve. OK to proceed?"). This bidirectional initiation is what makes an Identic AI useful for governance delegation. A chatbot Maya has to open and consult cannot pre-filter approval threads; an Identic AI that messages Maya when it needs to can.
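A minimal sketch of what AI-side initiation might look like in a roll-your-own loop: a periodic heartbeat checks a queue and messages the owner without being asked. Everything here is hypothetical (`heartbeat_tick`, the request dictionaries, and the `send` callback standing in for a chat-app bot); it is not OpenClaw's heartbeat mechanism.

```python
from typing import Callable


def heartbeat_tick(pending: list[dict], send: Callable[[str], None]) -> int:
    """One heartbeat: message the owner about each request not yet raised with her.

    Returns the number of messages sent on this tick.
    """
    sent = 0
    for req in pending:
        if req.get("notified"):
            continue  # already raised on an earlier tick
        send(f"{req['summary']} Suggested action: {req['suggestion']}. OK to proceed?")
        req["notified"] = True
        sent += 1
    return sent


outbox: list[str] = []  # stand-in for messages delivered to Maya's chat app
queue = [{"summary": "A customer's refund request just arrived.", "suggestion": "auto-approve"}]

heartbeat_tick(queue, outbox.append)  # the AI initiates; Maya did not ask
heartbeat_tick(queue, outbox.append)  # a second tick sends nothing new
print(outbox)
```

The owner never opens an app in this flow; the message arrives wherever `send` delivers it.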

Concretely, what a first session looks like. A user who runs `curl -fsSL https://openclaw.ai/install.sh | bash` and then `openclaw onboard` walks through approximately this sequence (per the docs at docs.openclaw.ai/getting-started):

- Pick a model. Anthropic Claude, OpenAI GPT, or a local model. (For Course Eight, we'll use Claude opus-4-7 as the default.)

- Name your OpenClaw. Users name their Identic AI, "Claudia," "Jarvis," "Brosef," and pick a persona tone. The naming makes the Identic AI feel like an entity rather than a service: once it has a name, users tend to refer to their OpenClaw by name in conversation.

- Pick a primary chat app. Telegram (most common, easiest bot setup), WhatsApp, Discord, Signal, iMessage, or Slack. The user creates a bot in their chat app (for example, via `@BotFather` on Telegram) and pastes the token into OpenClaw.

- Initial skill installation. OpenClaw asks what the user wants their Identic AI to be good at: calendar management, email, code, governance, all of the above. Selected skills install from clawhub.ai (the community skills marketplace). For Course Eight's purposes, none of the default skills handle Paperclip integration; we'll write that one in Decision 3.

- A first conversation. The user opens their chat app, finds their OpenClaw bot, types "hello." OpenClaw responds in the chosen persona, and from that moment on, the Identic AI is reachable in the user's normal communication flow. No separate app to open.

The whole onboard takes 5 to 10 minutes. The architectural commitments Course Eight depends on (local filesystem storage, persistent session, chat-app reachability) are baked into the install rather than configured per-user.

Why OpenClaw as Maya's runtime, not an alternative:

| Alternative | Why not for Course Eight |
|---|---|
| Claude Agent SDK + custom Identic AI | You can build it, but you assemble persistence, chat-app integration, skill management, and onboarding yourself. OpenClaw ships all of those. |
| OpenAI Agents SDK + custom Identic AI | Same problem, plus the architecture is closer to the cloud-workforce shape than to a personal-AI shape. |
| A hosted SaaS personal AI (for example, a hypothetical "Claude for Personal") | Fails the self-sovereign property. Maya's accumulated judgment lives on a platform that can read, modify, or revoke it. Tapscott's central commitment is violated. |
| Roll-your-own using Inngest + a custom front-end | Possible, but you spend the course teaching plumbing rather than teaching Identic AI. The course's value is in the architectural patterns, not the runtime mechanics. |

OpenClaw fits the requirements on each axis: it ships, it's open source, it stores the user's context on the user's filesystem by default, and it reaches the user through chat apps they're already in. For Course Eight's worked example, it's the runtime the thesis names. For your real deployment, the architectural patterns transfer to other runtimes; OpenClaw is the example, not the requirement.

If you can't use OpenClaw: transfer guidance. Compliance constraints, model-provider restrictions, or build-vs-buy preferences may put OpenClaw out of reach. The architectural patterns in Course Eight still apply; you'll just operationalize them on a different runtime. Here's how the load-bearing OpenClaw primitives map to what you'd build yourself:

| OpenClaw primitive | What you need to provide | Suggested substrate |
|---|---|---|
| The agent loop (Concept 4) | A model-calling loop with tool execution | Claude Agent SDK or OpenAI Agents SDK with a long-running daemon process |
| Local-machine runtime (Concept 4) | A process that survives reboots and stays running on the owner's hardware | `systemd` on Linux, `launchd` on macOS, a Windows service, or a small Docker container the owner runs locally |
| The `session` primitive (Concept 5) | Persistent context storage on the owner's filesystem | A directory the daemon reads and writes; structured JSON or SQLite is enough; whatever schema you pick is yours |
| Chat-app reachability (Concept 6) | A way for the owner to message the agent and get responses | A single chat-app bot (Telegram bots are simplest; the BotFather flow takes about 5 minutes). The 50+ integrations are OpenClaw's value-add, not a requirement of the architecture |
| Skills and plugins system | An extension mechanism for capabilities like "talk to Paperclip" | Hand-written Python or TypeScript functions registered as tools in your Agent SDK |
| The signing key (Concept 8) | An ed25519 key pair stored on the owner's filesystem | Standard library code: `crypto.subtle` in browser, `crypto` in Node, `cryptography` in Python |

What changes versus Course Eight as written: you write more glue code (skill manifests, daemon supervision, chat-app integration). What stays the same: the trust-delegation primitive (Concepts 7 through 9), the governance ledger schema (Concept 11), the two-envelope intersection, the recalibration loop. Build effort to assemble a Course-Eight-equivalent on the Claude Agent SDK: roughly a weekend for an experienced engineer. The discipline is in the patterns, not the runtime.
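For the `session` primitive in the mapping above, a roll-your-own store can be very small. The sketch below assumes one JSON Lines file per record kind inside a single directory; the `SessionStore` class, the file layout, and the field names are illustrative choices for a custom runtime, not OpenClaw's on-disk format.

```python
import json
import tempfile
import time
from pathlib import Path


class SessionStore:
    """Append-only, human-readable context store on the owner's filesystem."""

    def __init__(self, root: Path) -> None:
        self.root = root
        self.root.mkdir(parents=True, exist_ok=True)

    def append(self, kind: str, payload: dict) -> None:
        """Add one record (a decision, a preference, ...) to <root>/<kind>.jsonl."""
        record = {"ts": time.time(), **payload}
        with (self.root / f"{kind}.jsonl").open("a", encoding="utf-8") as fh:
            fh.write(json.dumps(record) + "\n")

    def load(self, kind: str) -> list[dict]:
        """Read back every record of one kind, oldest first."""
        path = self.root / f"{kind}.jsonl"
        if not path.exists():
            return []
        with path.open(encoding="utf-8") as fh:
            return [json.loads(line) for line in fh if line.strip()]


# Demo against a throwaway directory; a real daemon would use a fixed path.
store = SessionStore(Path(tempfile.mkdtemp()) / "session")
store.append("decisions", {"request": "refund", "outcome": "approved"})
store.append("decisions", {"request": "hire", "outcome": "surfaced"})
print([d["outcome"] for d in store.load("decisions")])  # ['approved', 'surfaced']
```

Plain text files keep the store inspectable with ordinary tools, which is the point of the self-sovereign commitment; SQLite would be an equally valid choice if query speed matters more than readability.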

Bottom line: OpenClaw is the open-source, user-owned personal AI runtime Course Eight teaches against. It runs on the owner's local machine, stores context on the owner's filesystem, and is reachable through the chat apps the owner already uses. As of May 2026, it is the credible shipped operationalization of Tapscott's self-sovereign Identic AI commitment: open source under an MIT license, with a large GitHub following and major-vendor sponsorship. The course's patterns transfer to other runtimes, but OpenClaw is the worked example.

Concept 5: Persistent memory and the owner's local context

The single property that turns OpenClaw from "another AI chatbot" into "Maya's Identic AI" is persistent memory. Without it, Maya would have to re-explain her judgment to OpenClaw on every interaction; the value of an Identic AI is precisely that it doesn't forget. Concept 5 walks through how OpenClaw's persistent memory works, where Maya's accumulated context actually lives, and what the architecture implies for Course Eight's central use case.

The session primitive. OpenClaw organizes persistent context around what its docs call the session: the unit of accumulated context for a user across time, across devices, across chat apps. Three things to know about the session model:

- Storage is local. Maya's session lives on Maya's hardware, in her filesystem, in her home directory under the OpenClaw config path. It is not synced to a cloud account by default. There is no "OpenClaw Cloud" that stores Maya's context. (Maya can opt in to syncing for multi-device use; Concept 13 walks the tradeoffs.)

- Storage is human-readable. The session is structured data on Maya's disk that she can inspect, edit, version with `git`, encrypt, back up, or destroy at her discretion. Tapscott's self-sovereignty commitment is operationalized here as a filesystem property: Maya owns the files; the files are Maya's context.

- Storage accumulates. Every interaction adds to the session. Maya messages OpenClaw on Telegram about a refund decision; that decision joins the session. She approves a hire over coffee through the menubar app; that approval joins the session. Six months in, the session contains hundreds of Maya's recorded decisions, preferences, communication patterns, and explicit instructions.

What gets persisted, concretely. OpenClaw's session captures more than just the chat transcript. From the docs:

| Persisted | What it is | Why it matters for Owner Identic AI |
|---|---|---|
| Conversation history | The literal exchange of messages between Maya and OpenClaw across chat apps | The raw record. Maya can re-read what she said and what OpenClaw did. |
| User preferences | Explicit statements Maya makes ("I prefer morning meetings"; "Don't approve anything over $5,000 without me") | Standing instructions the Identic AI applies thereafter |
| Skills installed and configured | Which OpenClaw skills Maya has installed; their configurations | The Identic AI's capability surface, including the Paperclip-integration skill Course Eight builds |
| Persona | Maya's named identity for OpenClaw (for example, "Maya's Lobster" or "Claudia") and the persona OpenClaw projects back | Continuity of identity across chats and devices |
| Activity log | What OpenClaw did and when: every skill invocation, every external API call, every decision | The audit trail that becomes Course Eight's governance ledger (Concept 11) |
| Derived patterns | The Identic AI's accumulated model of Maya's judgment, learned over time | The judgment-learning loop the course is centrally about |

What is not treated as identity-relevant by default: ephemeral environmental state (which chat app Maya happened to use for a given message, the precise timestamp at millisecond resolution, and similar). These details are recorded but not surfaced as part of the identity-relevant session. The distinction matters because Concept 13's question, what travels with Maya across devices and employers, is exactly the question of which subset of the session is identity-relevant.

Why filesystem-local storage is the load-bearing architectural commitment. This is the part of the OpenClaw architecture most easily missed. Many AI products advertise "persistent memory" while quietly meaning "we store your memory in our cloud, and you trust us with it." OpenClaw's choice, Maya's filesystem by default, is qualitatively different. It means:

- Maya can read her own context with `cat ~/.openclaw/session/*.json` (or whatever the path is at the time of writing; consult docs.openclaw.ai/concepts/session for the canonical layout).

- Maya can back up her context with the same tools she uses for any other files (Time Machine, `rsync`, Git, encrypted external drives).

- Maya can move her context to a new device by moving the files.

- Maya can destroy her context by deleting the files. There is no "delete request" to file with a vendor.

- Maya can encrypt her context at rest with her existing disk-encryption tools.

- Maya's context is not held hostage by any platform. If OpenClaw the project shut down tomorrow, Maya's accumulated context would still exist on her disk; she could read it, parse it, and load it into a different runtime.

This is the property that satisfies Tapscott's self-sovereignty commitment. It is also the property that makes Maya's Identic AI survive her changing her chat app, her model provider, even (with effort) her runtime. The session is Maya's; the runtime is configurable.
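To make the "she could read it, parse it, and load it into a different runtime" claim concrete, here is a minimal sketch that summarizes a directory of JSON files using only the Python standard library. It assumes nothing about OpenClaw's actual session schema: the function name `summarize_session` is hypothetical, the directory is passed in by the caller, and the only assumption is that the session is stored as `.json` files, as the `cat` example above suggests.

```python
import json
from pathlib import Path


def summarize_session(session_dir: Path) -> dict[str, int]:
    """Map each JSON file name in session_dir to its number of top-level entries."""
    summary: dict[str, int] = {}
    for path in sorted(session_dir.glob("*.json")):
        data = json.loads(path.read_text(encoding="utf-8"))
        # Lists and dicts have a length; any other JSON value counts as one entry.
        summary[path.name] = len(data) if isinstance(data, (list, dict)) else 1
    return summary
```

The point is less the summary itself than the dependency list: no vendor SDK and no network call, only files Maya already owns.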

Maya has been using OpenClaw for six months. Her session directory on her Mac is about 240 MB. She switches employers: she sells her current AI-native company and starts a new one. Which of the following parts of her session should she expect to carry forward to her new company, and which should not?

Items: (a) her communication style and tone preferences, (b) her past approval decisions on Workers at the old company, (c) the Paperclip-integration skill's configuration pointing at the old company's API endpoint, (d) her standing instruction "always escalate envelope-extension hires", (e) the activity log of every action OpenClaw took at the old company.

Predict for each: travels with Maya / stays with the old company / it's complicated. Then read on.

Answer: (a) travels. Communication style is a personal pattern, not company property. (b) it's complicated. The patterns derived from those decisions ("Maya tends to approve hires with envelope extensions when X but not Y") travel; the decisions themselves (which were about specific Workers and specific issues at the old company) stay, as a matter of confidentiality. (c) stays. That skill's configuration is pointed at the old company's endpoint; the new company has different endpoints, possibly different auth. (The skill itself can travel as a recipe and be reconfigured.) (d) travels. That's a standing personal instruction; it applies anywhere. (e) stays. The activity log records actions taken at the old company against the old company's systems; it's audit data the old company has rights to, not data Maya owns.

This is the Harper Carroll seam from Course Seven's Concept 13, applied to Maya's session: patterns travel, specific records don't. The filesystem layout of the OpenClaw session makes this distinction enforceable. Maya can package and migrate the personal-patterns subset and leave the company-records subset behind.
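The "patterns travel, specific records don't" rule can be sketched as a small partition step. The category names below are hypothetical labels for items (a) through (e), not OpenClaw's real session layout, and the fail-closed default for unknown categories is a design assumption of this sketch rather than documented behavior.

```python
from enum import Enum


class Portability(Enum):
    TRAVELS = "travels with Maya"
    STAYS = "stays with the old company"


# Hypothetical category names; the assignments mirror the answer above.
PORTABILITY = {
    "communication_style": Portability.TRAVELS,    # (a)
    "derived_patterns": Portability.TRAVELS,       # (b), the patterns half
    "approval_decisions": Portability.STAYS,       # (b), the records half
    "skill_endpoint_config": Portability.STAYS,    # (c)
    "standing_instructions": Portability.TRAVELS,  # (d)
    "activity_log": Portability.STAYS,             # (e)
}


def split_session(
    session: dict[str, object],
) -> tuple[dict[str, object], dict[str, object]]:
    """Return (portable, company_bound) subsets of a session keyed by category."""
    portable: dict[str, object] = {}
    company_bound: dict[str, object] = {}
    for category, payload in session.items():
        # Unknown categories stay behind: when unsure, protect confidentiality.
        rule = PORTABILITY.get(category, Portability.STAYS)
        target = portable if rule is Portability.TRAVELS else company_bound
        target[category] = payload
    return portable, company_bound
```

Defaulting unknown categories to the company-bound side means a forgotten mapping leaks nothing; the cost is that Maya may have to reclassify an item by hand before it migrates.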

Bottom line: Maya's Identic AI is Maya's session, a structured local store of her conversation history, preferences, skills, persona, activity log, and derived patterns. It lives on her filesystem, in human-readable files she owns. This is what makes the architecture self-sovereign and what makes Maya's accumulated judgment survive every change of device, employer, or chat app. The session is Maya's; everything else is configuration.

Concept 6: Chat apps as the interface layer

The non-obvious architectural choice OpenClaw makes is that it does not have its own user interface for the user-to-AI conversation. There is no OpenClaw web app, no OpenClaw mobile app for chatting. The user talks to OpenClaw through chat apps the user already uses: WhatsApp, Telegram, Discord, Slack, Signal, iMessage. Concept 6 walks through why this is the right architectural choice for an Identic AI and what it implies for the Course Eight worked example.

Why not a dedicated app. The default move for an AI product is to ship a chat UI of its own: a web app, a mobile app, both. OpenClaw deliberately doesn't. The reasoning, drawn from the project's design choices, is that an Identic AI should live where the user already lives, not where the product wants the user to live. The user is already in their chat apps all day: group threads with their team, DMs with their family, WhatsApp with their partner. Putting the Identic AI in those apps means:

- The user doesn't context-switch to talk to it.

- The user can include the Identic AI in group chats with other humans (Maya can add her OpenClaw to a board-discussion Slack channel; her Identic AI participates as a peer).

- The user's existing notification system handles attention routing (Maya's phone buzzes for OpenClaw the same way it buzzes for messages from her team).

- The user has no separate app to remember to open.

- The Identic AI inherits all the conveniences of the chat apps: voice messages, file attachments, group threads, search history.

The chat-channel integrations are an architectural commitment, not a feature list. OpenClaw lists 50+ integrations, including 15+ chat channels (WhatsApp, Telegram, Discord, Slack, Signal, iMessage, and more) at openclaw.ai/integrations. The chat-channel set is the part that matters here. The user picks their preferred chat app or apps during `openclaw onboard`; OpenClaw integrates with those; the user starts messaging. There is no learning curve for the chat interface because the user already knows how their chat app works.

What this means for Maya. In the Course Eight worked example, Maya configures OpenClaw to be reachable through Telegram. (We pick Telegram because the OpenClaw docs use it as the default example, and because Telegram has clean bot semantics. Maya could equally use WhatsApp or Signal.) Maya names her OpenClaw "Claudia" during onboarding. From then on:

- When Maya wants to message her Identic AI, she opens Telegram, finds the chat with Claudia, and types. Same gesture as messaging a teammate.

- When her Identic AI wants to surface something to her (an approval that needs her judgment, a weekly governance-ledger summary), it sends her a Telegram message. Same notification flow as any other message.

- When Maya is at her desk, she can use OpenClaw's macOS menubar app instead of switching to Telegram. The session is shared.

- When Maya travels and uses her phone, the same Claudia is there. The session syncs across her devices (with the architectural caveats from Concept 13 about how that sync works).

The implication for the trust-delegation problem. This is subtle and worth noting before Part 3 walks into it. Because OpenClaw lives in chat apps the user already trusts, the user-to-OpenClaw trust is inherited from the chat app's own auth. Maya is already logged in to Telegram as Maya; she is already the recipient of messages sent to her Telegram account; OpenClaw doesn't need to re-authenticate her at the user-to-AI boundary. The trust-delegation problem in Course Eight is therefore not "how does OpenClaw know it's Maya", which is solved by Telegram's own auth, but "how does the Paperclip management layer know that an approval request originating from Maya's OpenClaw is genuinely Maya-authorized." That's Concept 7's problem.

Paste this into your AI coding assistant:

"OpenClaw reaches the user through chat apps as its primary interface: WhatsApp, Telegram, Discord, Slack, Signal, iMessage, and more. The architectural commitment is that the Identic AI lives where the user already lives, not in a separate app. From the user's perspective, this is a clear win: zero context switching, existing notifications, group chats. From a security perspective, list three things the user should verify about their chosen chat app before treating their OpenClaw conversation as trustworthy for governance decisions. For example: is the chat encrypted end-to-end? What happens if the chat-app provider is compelled to hand over messages? What is the recovery story if the user's chat-app account is compromised?"

What you're learning: the chat-app interface inherits trust from the chat app, and that trust has real properties Maya should verify. Telegram's end-to-end encryption is opt-in (only for "Secret Chats"); WhatsApp is end-to-end by default; iMessage is end-to-end within Apple's ecosystem. Each choice has implications for whether the Paperclip integration is treating chat-app messages as cryptographic evidence of Maya's intent (it should not) or as a convenience channel (it should). The signed-delegation primitive in Concept 8 is what makes governance decisions cryptographically grounded regardless of which chat app routes them.

Bottom line: OpenClaw's chat-app-first interface architecture is a deliberate choice: the Identic AI lives where the owner already lives, not in a separate app. For Maya, this means Telegram (or WhatsApp, or any of the supported chat channels) becomes her interface to her Owner Identic AI. The architectural implication for trust delegation is that user-to-OpenClaw auth is inherited from the chat app; the load-bearing trust problem is on the OpenClaw-to-Paperclip boundary, which Concept 7 takes up.

Part 3: Trust delegation and governance

Parts 1-2 named the problem (Maya can't read approvals at scale), the architectural primitive that solves it (Identic AI), and the runtime that operationalizes it (OpenClaw). Part 3 takes up the load-bearing technical move of Course Eight: how the company's Paperclip management layer can safely accept approval decisions from Maya's Identic AI without abandoning the safety property the entire seven-invariant thesis depends on. Three Concepts.

Concept 7: The trust-delegation problem

When Maya logs into Paperclip and clicks "approve" on a hire proposal, the Manager-Agent records that the human owner approved. When Maya's Identic AI ("Claudia") clicks "approve" on a routine hire proposal at 3 AM while Maya is asleep, the Manager-Agent records that something approved. The question Concept 7 takes up is what should be different about these two cases and what should be the same.

The naive answers are both wrong:

Naive answer A: treat them the same. "Claudia is authorized; her click is Maya's click; record it as Maya-approved." This collapses the distinction and produces an audit trail that lies. Six months later, when Maya reviews how a particular decision was made, she cannot tell from the activity log whether she made it herself or her Identic AI made it on her behalf. The information needed for recalibration (Concept 12), namely the answer to "did my Identic AI handle this well, or do I need to correct it?", is gone. Truthful auditing requires distinguishing the two principals.

Naive answer B: always require the human. "Claudia can't approve anything; she can only message Maya the approval requests, and Maya clicks 'approve' herself." This is the architecture without an Identic AI; we have not made progress. The whole point of Part 1's argument is that Maya can't be in the loop on every routine approval. An Identic AI that can only relay messages is not solving the scaling problem.

The correct answer is structurally a three-part move:

- The Identic AI has its own identity in the system. Maya's OpenClaw, configured as Claudia, has a distinct identity that Paperclip recognizes: Claudia is registered as a Paperclip agent, with her own key. Claudia is not a user account; she is a delegated agent of Maya's. This is a new principal type the previous courses didn't need.

- The Identic AI's authority is a subset of Maya's authority, set by Maya, with clear limits. Maya can approve anything in the owner-authority envelope; Claudia can approve a configured subset (Concept 9 walks the intersection). The subset is recorded: Maya knows what Claudia is allowed to do; Claudia knows; Paperclip stores it.

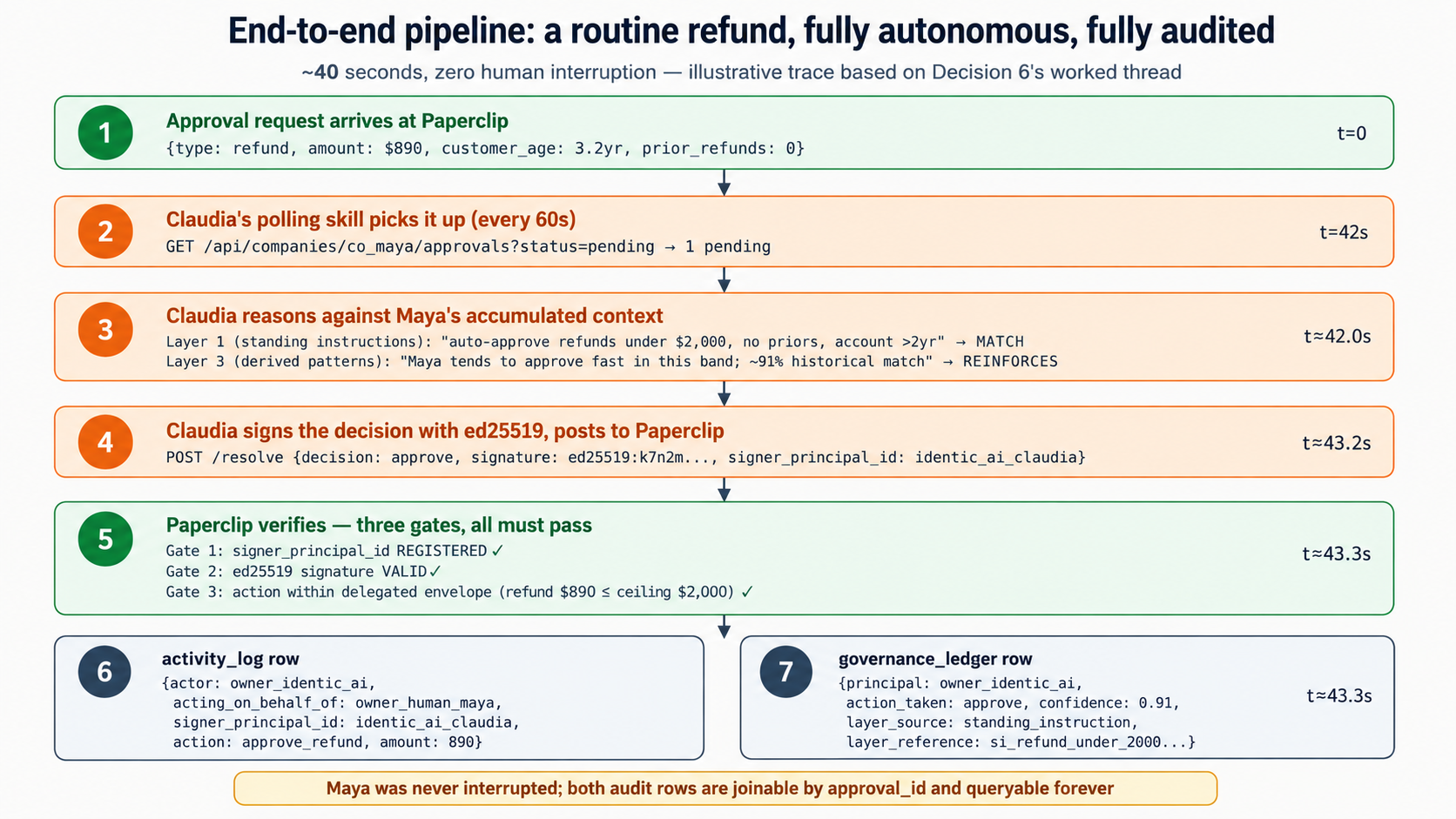

- The audit trail records which principal acted, and you have to be honest about where that record lives. Here is the part that is easy to get wrong, so the course states it plainly up front. Against real Paperclip 2026.513.0, the approval routes are board-scoped: an approve, reject, or request-revision call is recorded as a board action, so Paperclip's own `activity_log` writes `actor_type='user'` whether Maya clicked it or Claudia drove it. Paperclip does not natively distinguish owner-human from owner-identic-ai on an approval. So the two-principal distinction lives in the course's own `governance_ledger` table: every decision Claudia makes writes a `governance_ledger` row carrying `principal='owner_identic_ai'`, her attestation, and her reasoning. A Maya-resolved approval has no such row. Join the two tables on the approval id and the distinction is fully recoverable. (The simulated-track mock takes a shortcut: it implements native `actor: owner_identic_ai` attribution directly, purely as a teaching simplification. Real Paperclip does not, and the full-implementation track is honest about that throughout Part 4.)

This three-part move is what makes delegated governance safe rather than reckless. The principle holds in both tracks: two principals, one human, distinct audit truth. What differs is the mechanism: native attribution in the mock, the governance_ledger against real Paperclip. Maya delegates, but doesn't disappear: the audit record makes her always recoverable.
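The join that recovers the two-principal distinction can be sketched with an in-memory SQLite database. Only the table names `activity_log` and `governance_ledger`, the `actor_type='user'` value, and `principal='owner_identic_ai'` come from the text above; every other column name, the sample rows, and the `owner_human` label for approvals without a ledger row are assumptions made for illustration, not Paperclip's real schema.

```python
import sqlite3


def build_demo_db() -> sqlite3.Connection:
    """Create two toy tables with assumed columns and two sample approvals."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE activity_log (approval_id TEXT, action TEXT, actor_type TEXT);
        CREATE TABLE governance_ledger (
            approval_id TEXT, principal TEXT, attestation TEXT, reasoning TEXT
        );
        """
    )
    # Both approvals look identical in activity_log: actor_type is 'user'.
    conn.executemany(
        "INSERT INTO activity_log VALUES (?, ?, ?)",
        [("apr-1", "approve", "user"), ("apr-2", "approve", "user")],
    )
    # Only the decision Claudia made has a governance_ledger row.
    conn.execute(
        "INSERT INTO governance_ledger VALUES (?, ?, ?, ?)",
        ("apr-2", "owner_identic_ai", "sig-placeholder", "routine hire within envelope"),
    )
    return conn


def principals_by_approval(conn: sqlite3.Connection) -> dict[str, str]:
    """Left-join the tables; a missing ledger row means Maya resolved it herself."""
    rows = conn.execute(
        """
        SELECT a.approval_id, COALESCE(g.principal, 'owner_human')
        FROM activity_log AS a
        LEFT JOIN governance_ledger AS g ON g.approval_id = a.approval_id
        """
    ).fetchall()
    return dict(rows)
```

Calling `principals_by_approval(build_demo_db())` attributes `apr-1` to the human owner and `apr-2` to the Identic AI, even though `activity_log` alone cannot tell them apart. The left join matters: an inner join would silently drop every approval Maya resolved herself.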