Claude Code and OpenCode: A 45-Minute Crash Course

15 Concepts, 80% of Real Use

A practical crash course. No filler, no upsell. By the end you'll know what to use, when to use it, and where to look when you need more, in either tool.

The single insight that makes everything else click: agentic coding is a context-management problem wearing the costume of a coding tool. Almost every "advanced technique" reduces to the same thing: getting the right information into the model at the right time, and keeping the wrong information out. Read each section through that lens.

Claude Code and OpenCode are two implementations of the same idea, with mostly the same vocabulary and slightly different keybinds.

Two tools, not one. On purpose. The discipline here has to outlive any single tool. Pricing changes, access restrictions, model preferences, and strategic shifts shouldn't strand the lessons you've learned. Showing each concept in both tools is also the strongest test of whether the concept is real: if a technique only works in Claude Code, it's a Claude Code trick; if it works in both (same shape, different keybinds), it's a piece of how agentic coding actually behaves. Reach for Claude Code when frontier model performance is the constraint; reach for OpenCode when flexibility, cost control, or openness is. The skills you build either way are the same. That's the point, not a compromise.

Current as of April 2026. Both tools ship fast: config schemas, command names, and install commands change. If you don't have the tools yet, installation instructions are in each tool's official docs (Claude Code, OpenCode). When in doubt about anything else, those docs are also where to start.

Assumed background: you're comfortable on a command line, you've used git, and you've read or written JSON config before. You don't need prior experience with LLM coding agents. That's what this is for. If you're brand new to MCP, hooks, or the protocol layer between LLMs and external tools, you'll pick those up from context as we go.

There's a complete worked example in Part 6: one realistic task end-to-end, run twice, once in each tool. If you learn better from watching than from reading definitions, jump there first and come back.

Part 1: Foundations

These first three concepts apply identically in both tools. Where commands or keybinds differ, the difference is called out inline.

1. What these tools actually are

Most people's mental model is "a chatbot that knows code." That's wrong, and the wrongness is the problem.

A chatbot answers questions. Claude Code and OpenCode take actions. They read your files, edit them, run commands on your machine, hit the network, and chain all of that together until a task is done. You give them a brief, they work, you review.

The mindset shift: stop typing questions, start writing briefs. "How do I add auth?" is a chatbot prompt. "Add email/password auth to this Express app using bcrypt and JWT, store users in the existing Postgres users table, write tests, don't touch the OAuth code" is an agentic-coding prompt. The second one will work; the first one will produce a wandering monologue.

The two tools differ in defaults but not in fundamentals. Claude Code is Anthropic's, ships with Claude models, polished out of the box. OpenCode is open-source, model-agnostic (Claude, GPT, Gemini, local), and configurable down to the tool-level permission. Pick one based on your model preferences and how much config control you want; the rest of this crash course works in both.

Don't have either installed yet? Grab the canonical one-liner before you read any further; the sections after this assume you can press a key and see something happen. Auth, IDE plugins, and troubleshooting live on the official docs.

# macOS / Linux / WSL — recommended (auto-updates)

curl -fsSL https://claude.ai/install.sh | bash

# Windows PowerShell

irm https://claude.ai/install.ps1 | iex

# macOS Homebrew (no auto-update — run `brew upgrade claude-code` periodically)

brew install --cask claude-code

# npm fallback

npm install -g @anthropic-ai/claude-code

Full reference: docs.claude.com/claude-code.

2. Plan mode (the most underused feature)

The implementations differ slightly but the idea is identical: before letting the model write anything, force it to produce a written plan you can review.

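In Claude Code, press Shift+Tab to cycle through permission modes: the first press puts you in auto-accept, the second in plan mode. In plan mode, the model can read but cannot write: no file edits, no shell commands. In OpenCode, press Tab to switch from the Build agent to the Plan agent, whose default permissions make file edits and shell commands wait for your explicit approval.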

Either way, the move feels like a slowdown, but it's a speedup for two reasons:

- It catches misunderstandings before they cost you. The plan is a contract. If the model misread the brief, you see it in the plan, not in a 200-line diff you have to revert.

- It produces better code on the second pass. A pre-written plan compresses the relevant context (file paths, function names, intended approach) into a clean artifact that the implementation phase can lean on. Without a plan, the model is reasoning and writing simultaneously, and reasoning loses.

Rule of thumb: anything more than a 10-minute change goes through plan mode first. For larger features, ask the model to save the plan to a markdown file (docs/plans/feature-x.md) so you can resume it later or feed it to a fresh session.

3. Permissions discipline

Both tools ask for approval before taking action. Both let you skip approvals globally: Claude Code has --dangerously-skip-permissions, OpenCode lets you set "permission": "allow". Don't, at least not at first.

The pattern that works: start manual for a few sessions, notice which actions are safe enough to auto-approve, then write that down in config.

.claude/settings.json:

{
  "permissions": {
    "allow": [
      "Read",
      "Edit",
      "Write",
      "Bash(npm test)",
      "Bash(npm run lint)",
      "Bash(npm run build)",
      "Bash(git status)",
      "Bash(git diff *)",
      "Bash(git log *)"
    ],
    "deny": ["Bash(rm -rf *)", "Bash(npm publish *)", "Bash(git push *)"]
  }
}
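To make the allow/deny split concrete, here is a toy model of how a rule list like this could be evaluated. It is a sketch, not either tool's actual matcher: the helper names (`parseRule`, `decide`) are hypothetical, and it assumes that `*` matches any run of characters, that a bare tool name such as `Read` covers every call to that tool, and that a deny match wins over an allow match.

```javascript
// Toy model only: parseRule and decide are hypothetical helpers, not part of
// Claude Code or OpenCode. Assumes "*" matches any characters and deny beats allow.
function parseRule(rule) {
  const m = /^(\w+)(?:\((.*)\))?$/.exec(rule);
  if (!m) throw new Error(`Unrecognized rule: ${rule}`);
  const [, tool, pattern] = m;
  // A bare tool name ("Read") matches every input for that tool.
  if (pattern === undefined) return { tool, test: () => true };
  // Escape regex metacharacters, then turn each "*" into ".*".
  const source = pattern
    .split("*")
    .map((part) => part.replace(/[.+?^${}()|[\]\\]/g, "\\$&"))
    .join(".*");
  const regex = new RegExp(`^${source}$`);
  return { tool, test: (input) => regex.test(input) };
}

function decide(permissions, tool, input) {
  const matches = (rules) =>
    rules.map(parseRule).some((r) => r.tool === tool && r.test(input));
  if (matches(permissions.deny)) return "deny";
  if (matches(permissions.allow)) return "allow";
  return "ask"; // anything unlisted still prompts for approval
}

const permissions = {
  allow: ["Read", "Bash(npm test)", "Bash(git diff *)"],
  deny: ["Bash(rm -rf *)", "Bash(git push *)"],
};

console.log(decide(permissions, "Bash", "git diff HEAD~1")); // allow
console.log(decide(permissions, "Bash", "git push origin main")); // deny
console.log(decide(permissions, "Bash", "curl example.com")); // ask
```

The useful property to notice is the third case: anything you have not written down still falls back to a manual prompt, which is why growing the list slowly is safe.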

Then install a notification helper. The pattern that makes agentic coding feel fast isn't faster execution: it's the ability to walk away. Set off a long task, switch to other work, come back when you're pinged. If you run multiple sessions in parallel (a real thing, and a real productivity unlock), notifications are how you keep track of which one needs you.

Claude Code has community helpers like cc-notify. OpenCode's desktop app sends system notifications natively; for the TUI, write a plugin that subscribes to session.idle and shells out to osascript (macOS) or notify-send (Linux).
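Whichever route you take, the notification itself is a single shell-out. As a sketch, the hypothetical helper below (`notifyArgv` is an illustrative name, not part of either tool) builds the argument list for osascript or notify-send; how you wire it to a hook or a session.idle subscription depends on your setup.

```javascript
// Hypothetical helper: builds the command (as an argv array) that shows a
// desktop notification on macOS or Linux. Returns null on other platforms.
function notifyArgv(platform, title, message) {
  if (platform === "darwin") {
    // AppleScript string literals need backslashes and double quotes escaped.
    const esc = (s) => s.replace(/\\/g, "\\\\").replace(/"/g, '\\"');
    return [
      "osascript",
      "-e",
      `display notification "${esc(message)}" with title "${esc(title)}"`,
    ];
  }
  if (platform === "linux") {
    return ["notify-send", title, message];
  }
  return null;
}

console.log(notifyArgv(process.platform, "opencode", "Session is idle"));
```

Passing the returned array to your runtime's process API (for example Node's `child_process.spawn(argv[0], argv.slice(1))`) rather than building a shell string sidesteps shell quoting entirely.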

Part 2: Context management

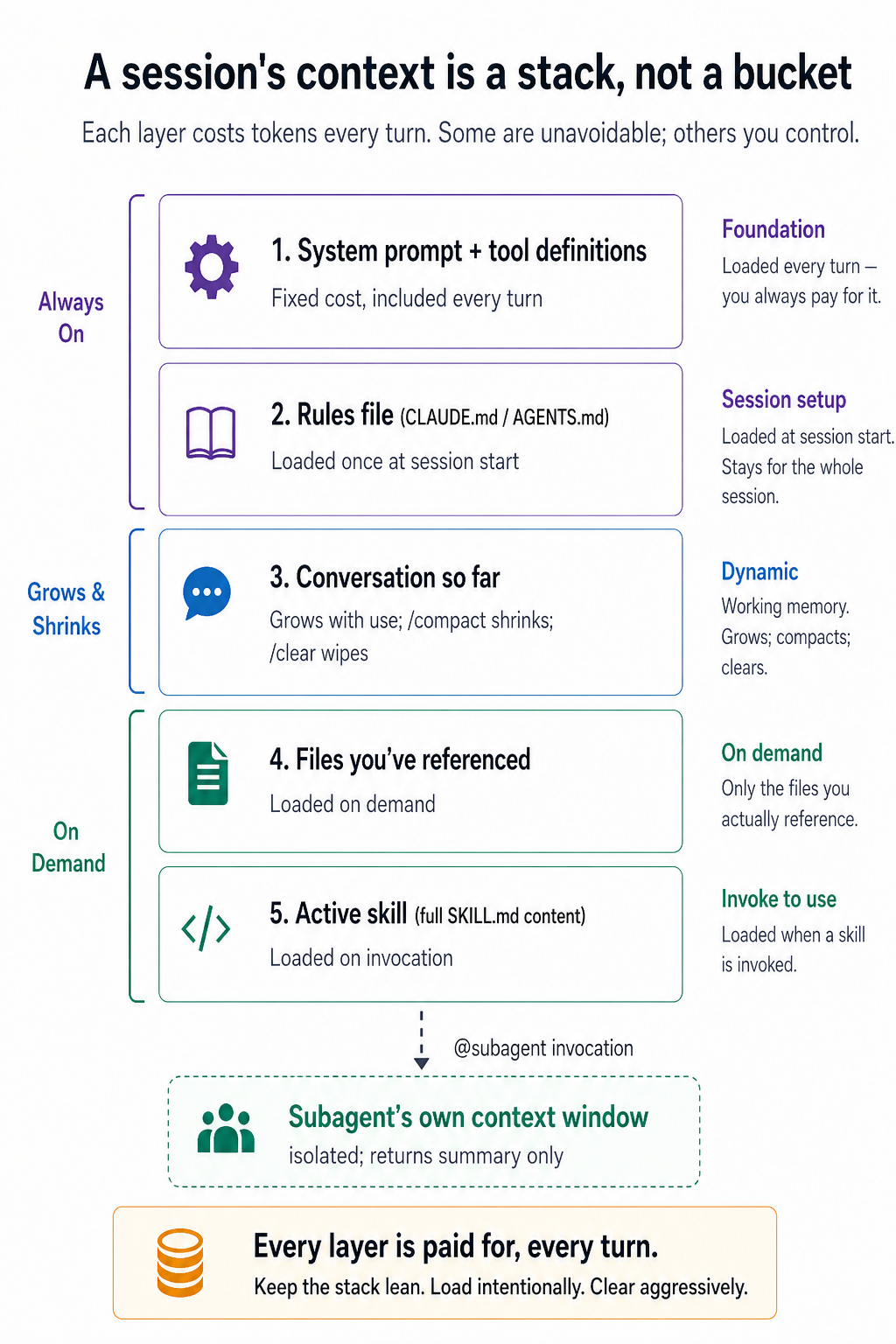

This is the most important section in the crash course. If you only internalize one part, make it this one. Everything here applies identically in both tools; only the command names occasionally differ.

4. Context rot is real

The context window is the amount of text the model can hold at once. Modern models have huge windows: hundreds of thousands of tokens, sometimes a million. That sounds limitless. It isn't.

Two things to understand:

Real usage fills the window faster than you'd think. A few code files, a stack trace, some docs you pasted in, and a 30-turn conversation will eat tens of thousands of tokens before you notice. Your laptop doesn't show a fuel gauge.

Recall degrades long before the window is full. Studies (and your own experience, if you pay attention) show that as token counts climb, the model gets noticeably worse at remembering specific details from earlier in the context. The model doesn't forget exactly. It just stops weighting that information correctly. Symptoms: it ignores a constraint you stated 20 messages ago, repeats work it already did, hallucinates a function signature it had right earlier.

There's also a financial dimension. Every message re-bills the entire context window: a 50K-token conversation pinged twenty times during a debug session adds up to a million input tokens, 50K billed on each turn. On per-token APIs (most OpenCode setups, raw Anthropic API) that's wallet damage; on subscription tiers (Claude Code Pro/Max) it shows up as rate-limit hits. Either way, bloated context drains your budget right when you most need to keep working.
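The arithmetic is easy to check. A minimal sketch, assuming every turn resends the full context as input and ignoring any caching discounts a provider may apply; the function name and the 2,000-tokens-per-turn growth figure are illustrative:

```javascript
// Total input tokens billed across a session when each turn resends the whole context.
function cumulativeInputTokens(startTokens, tokensAddedPerTurn, turns) {
  let total = 0;
  let context = startTokens;
  for (let i = 0; i < turns; i++) {
    total += context; // the full context is sent as input on this turn
    context += tokensAddedPerTurn; // the new exchange is appended for the next turn
  }
  return total;
}

console.log(cumulativeInputTokens(50_000, 0, 20)); // 1000000
console.log(cumulativeInputTokens(50_000, 2_000, 20)); // 1380000
```

The second line is the more realistic one: because the conversation keeps growing, the bill grows faster than the turn count.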

The takeaway is unromantic but practical: less context, used deliberately, beats more context dumped in hope.

5. /clear and /compact

Two commands, two situations.

/clear: wipe the conversation and start fresh. Use it when switching to an unrelated task. Old context is irrelevant noise that will only confuse the new task.

- Claude Code: /clear

- OpenCode: /new (also aliased as /clear)

/compact: summarize the conversation, keep the summary, discard the rest. Use it when you're deep in a single long task and the context is getting bloated, but you can't lose the thread.

- Both tools: /compact (in OpenCode, also aliased as /summarize)

You can guide what to keep in the compact: /compact keep the API contract decisions and current file paths.

These are not interchangeable. /clear is "new conversation." /compact is "same conversation, less baggage." Picking the wrong one either loses important context or keeps useless context.

6. Resume sessions

Conversations are saved. Both tools let you resume: same idea, different command.

- Claude Code: run claude --resume and pick a session from the list.

- OpenCode: run /sessions (also aliased as /resume) inside the TUI to switch between saved sessions.

Two patterns where this matters:

- Pick up tomorrow. Long features rarely fit in one sitting. Resume lets you stop without losing the model's working memory of the project.

- Run multiple branches. Resume any session, not just the last. You can have one session deep on a backend task and another working on the frontend, and switch between them.

If you have a saved plan file (docs/plans/feature-x.md), even a fresh session can be brought up to speed in one message: "read docs/plans/feature-x.md and continue from step 4." Resume is faster, but the plan file is the safety net.

Conversation rewind exists in both, but they differ on file changes. In Claude Code, press Esc twice (double-tap Esc) to open a list of your previous user messages; pick one and the session forks from that point, dropping everything that came after. This rewinds the conversation but does NOT revert file edits the model already made on disk. OpenCode goes one step further: /undo rewinds the last user message and reverts the file changes from that turn (it uses git under the hood, so your project must be a git repo). /redo reverses the last /undo. If you find yourself in a deep hole after a bad prompt and want both the conversation and your files rolled back in one move, OpenCode's /undo is the genuine bonus; if you only need to redirect the conversation while keeping current file state, Claude Code's Esc Esc is faster.

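Quick decision rule for the three commands:

- Switching to an unrelated task: /clear (in OpenCode, /new).

- Same long task, bloated context: /compact.

- Returning to earlier work: resume the session (claude --resume in Claude Code, /sessions in OpenCode).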

Diagnostic: when context goes bad

Context rot has visible symptoms. Watch for any of these:

- The model starts apologizing repeatedly without making progress.

- It rewrites the same block of code with no meaningful changes.

- It references variables, files, or functions that don't exist.

- It contradicts a constraint you stated earlier in the same session.

- Each response gets longer, vaguer, and more deferential.

When you see this, stop typing. The instinct to send "just one more clarifying prompt" is wrong; it only adds more polluted context to a session that's already polluted. The right move is to reset and re-brief. Run /compact if the conversation has a useful core to preserve, /clear (or OpenCode's /new) if you should genuinely start over. Five minutes of resetting beats an hour of arguing with a model whose context is poisoned.

Part 3: The rules file

7. CLAUDE.md / AGENTS.md, done well

Both tools load a project-root markdown file into context at the start of every session. It's the closest thing to a project-level system prompt.

Claude Code uses CLAUDE.md. OpenCode uses AGENTS.md. For migrators, OpenCode also reads CLAUDE.md as a fallback when no AGENTS.md is present; if both files exist, AGENTS.md wins and CLAUDE.md is ignored. If you've already maintained a CLAUDE.md, OpenCode will pick it up unless you set OPENCODE_DISABLE_CLAUDE_CODE=1 to opt out entirely. For partial disable, OPENCODE_DISABLE_CLAUDE_CODE_PROMPT=1 skips only the rules-file fallback and OPENCODE_DISABLE_CLAUDE_CODE_SKILLS=1 skips only the skills fallback. This is the single biggest portability win between the two tools.

The mistake nearly everyone makes is treating this file like documentation, stuffing in architecture overviews, full coding standards, and every quirk of the codebase. The result is a 20,000-token file that eats your context budget on every task, including tasks where 90% of it is irrelevant. Remember from Part 2: anything in this file is paid for on every single message, whether or not it's relevant.

The right model is table of contents, not encyclopedia. Aim for under ~2,500 tokens. Reference deeper context that loads on demand:

CLAUDE.md. The @filename syntax auto-loads referenced files when relevant:

# Project: my-app

## Stack

Next.js 14, TypeScript, Postgres, Drizzle ORM.

## Commands

- `npm run dev`: start local server

- `npm test`: run vitest

- `npm run db:migrate`: apply migrations

## Critical rules

- Never edit files in `src/generated/`. They're rebuilt by codegen.

- All API routes use the auth middleware in `src/lib/auth.ts`.

- See @docs/conventions.md for naming and folder rules.

- See @docs/db-schema.md for table structure.

For each line you're tempted to add, ask: "if I delete this, will the model make a mistake it wouldn't otherwise make?" If no, delete it. Don't tell the model to use TypeScript: it can see the .ts files. Tell it the things it can't infer: the codegen folder, the auth pattern, the things that have bitten you before.

This file should grow from real failures, not from imagined ones. Commit it to git. Let your team add to it.

If you're starting from scratch, run /init in either tool. The model scans your project and drafts the file. Then ruthlessly delete the parts you don't need.
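To keep the file near the ~2,500-token target, a rough size check helps. The sketch below assumes about four characters per token, a common rule of thumb for English text rather than a real tokenizer, and `estimateTokens` and `checkRulesFile` are hypothetical names:

```javascript
// Rough heuristic, not a tokenizer: assumes ~4 characters per token.
const CHARS_PER_TOKEN = 4;

function estimateTokens(text) {
  return Math.ceil(text.length / CHARS_PER_TOKEN);
}

// Compares a rules file's estimated size with a token budget (default ~2,500).
function checkRulesFile(text, budget = 2500) {
  const tokens = estimateTokens(text);
  return { tokens, budget, overBudget: tokens > budget };
}

const sample =
  "# Project: my-app\n\n## Stack\nNext.js 14, TypeScript, Postgres, Drizzle ORM.\n";
console.log(checkRulesFile(sample));
```

In practice you would pass in the contents of your CLAUDE.md or AGENTS.md instead of the sample string. Treat the result as a smoke alarm, not a measurement: real token counts vary by model.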

Part 4: Personalizing your tool

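There are four extension types in both tools, and the decision tree is identical:

- You type the same instructions more than twice: slash command.

- The model should pull in packaged expertise on its own when a task matches: skill.

- Something must be true 100% of the time: hook (Claude Code) or plugin (OpenCode).

- The task involves a lot of reading that won't matter to the final answer: subagent.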

Every one of these is a context-management tool. Custom commands and skills inject the right context on demand. Hooks and plugins enforce rules without spending tokens on the model "remembering" them. Subagents quarantine context so it doesn't leak into your main thread. Same problem, different shapes.

8. Slash commands

A slash command is a saved prompt with a memorable shortcut. Both tools support them, with very similar shapes.

In Claude Code, commands are markdown files in .claude/commands/ (project) or ~/.claude/commands/ (personal). Example .claude/commands/review.md:

Review the current diff for:

1. Bugs and edge cases

2. Test coverage gaps

3. Naming and readability

4. Adherence to @docs/conventions.md

Be specific. Quote the lines you're commenting on.

Both invoke as /review. Both support $ARGUMENTS (and OpenCode adds positional $1, $2, etc.). OpenCode also supports !`shell command` to inject command output into the prompt and agent: plan / subtask: true frontmatter to route the command to a specific agent or run it in a subagent. This is useful for keeping review workflows out of your main context.

The unifying test in both tools: if you find yourself typing the same instructions more than twice, it should be a command.

Note (Claude Code, 2026): As of 2026, slash commands and skills have been unified. Files in .claude/commands/ still work and will appear as slash commands, but new work is increasingly going into .claude/skills/ because skills support more (auto-invocation, subagent execution, file-path filters). Treat the two as a continuum.

9. Skills

A skill is a custom command's bigger sibling. Same idea, packaged expertise, but with three meaningful upgrades that are essentially identical between the two tools:

- Auto-invocation. The model reads skill descriptions at the start of every session and invokes the right skill when a task matches.

- Progressive disclosure. A skill has a SKILL.md file (the entry point) and can reference other files in the same folder. Only SKILL.md loads up front; the rest loads on demand. This is the context-management principle made into a file format.

- Frontmatter controls. YAML at the top of SKILL.md configures behavior.

The file format converged. A skill written for one tool largely works in the other.

.claude/skills/extract-transcript/SKILL.md:

---

name: extract-transcript

description: Extract a clean transcript from a YouTube video URL. Use when the user provides a YouTube link and asks for the transcript, captions, or text of the video.

---

# Extract YouTube transcript

1. Take the URL from the user's message.

2. Run `yt-dlp --skip-download --write-auto-sub --sub-format vtt "$URL"`.

3. Convert the VTT to plain text: strip timestamps, deduplicate overlapping captions.

4. Save to `transcripts/{video-id}.txt`.

For formatting conventions (paragraph breaks, speaker labels), see `references/style.md`.

The OpenCode version of this file is byte-identical. OpenCode also reads ~/.claude/skills/ and .claude/skills/ as fallbacks, so any skill you've already written for Claude Code works in OpenCode without changes.

The description is the most important field: it's what the model uses to decide whether the skill applies. Vague descriptions ("helps with videos") fire on everything; specific ones ("Use when the user provides a YouTube link...") fire only when relevant.

Keep SKILL.md itself short: under ~200 lines is a good rule. Push depth into reference files in the same directory and link to them. That way a quick task only pays for the overview; a deep task can pull in the references it needs.

Don't write skills from scratch. Claude Code ships a skill-creator skill that scaffolds new skills correctly. Because OpenCode reads ~/.claude/skills/ as a fallback, that same skill-creator is loaded by OpenCode too: install it once and both tools can invoke it. (Note: file discovery is shared, but skill bodies that reference Claude Code-specific tools may need light edits to run cleanly in OpenCode. For new agents, which are a separate concept in OpenCode, use opencode agent create for an interactive agent scaffolder.)

One more pattern: chain small skills, don't build monoliths. A "weekly content digest" skill that researches, writes, formats, and reviews in one go is harder to maintain and worse at each step than four separate skills (research, draft, format, review) that hand off to each other. The handoff is usually filesystem-mediated: research writes its output to tmp/research.md, then draft is instructed to read from it; draft writes to tmp/draft.md, and so on. You can also just prompt the model to run them in sequence ("use the research skill, then draft a post from the output"), but the file-based pipeline is more durable across /clear and easier to debug. Either way, each skill stays focused on its own step. The reusability is nice, but the real win is per-step context isolation: the format skill doesn't need to load the research notes, and the research skill doesn't need to know about formatting conventions. Same principle as subagents, applied at a smaller granularity.

10. Hooks (Claude Code) / Plugins (OpenCode)

Skills are probabilistic: the model decides whether to invoke them. Hooks and plugins are deterministic: they fire on specific events, every time, no model judgment involved.

This is where the two tools' implementations diverge most sharply. Same idea, different machinery.

Hooks are shell commands attached to lifecycle events in .claude/settings.json. The big events: SessionStart, UserPromptSubmit, PreToolUse (exit code 2 blocks), PostToolUse. Each hook receives the tool call as JSON on stdin. Example: block rm -rf no matter how the model is feeling (this version assumes jq is installed):

{
  "hooks": {
    "PreToolUse": [
      {
        "matcher": "Bash",
        "hooks": [
          {
            "type": "command",
            "command": "if jq -r '.tool_input.command' | grep -q 'rm -rf'; then echo 'Blocked dangerous command' >&2; exit 2; fi"
          }
        ]
      }
    ]
  }
}

The trade is real: OpenCode plugins are more capable; Claude Code hooks are simpler.

Anyone who can write bash can write a Claude Code hook in five minutes and ship it to a repo without a Node toolchain. OpenCode plugins need Bun or Node, npm dependencies, and TypeScript or JavaScript fluency. For a one-line check ("block rm -rf"), shell wins on cost. For anything that needs structured logging, async work, or multiple event subscriptions, the JS/TS module is genuinely cleaner. Pick the tool whose constraints match what you're building.

A useful pattern from people running these tools on production codebases: don't block at write time, block at commit time. Let the model finish its work (interrupting mid-edit confuses it) and run a hook/plugin on the commit step that checks tests pass, types check, formatter is happy. If something fails, force the model back into a fix loop.

Use hooks/plugins for the things you need to be true 100% of the time. Use skills for the things you'd like the model to remember most of the time.

11. Subagents

A subagent is an isolated agent instance with its own context window. You delegate a task to it; it works in private; it returns a summary. Its file searches, log dumps, and exploratory reads never touch your main thread.

Why this matters, in context-management terms: the most context-poisoning thing you can do is "explore the codebase to find where X happens." That kind of task pulls dozens of files into context, most of which you don't need. Doing it in a subagent means your main session sees only the conclusion ("X happens in src/services/billing.ts:142"), not the search.

Both tools ship with a read-only Explore subagent (among others; OpenCode also ships a General subagent for broader delegated work) that handles codebase exploration without polluting your main thread. In Claude Code, plan mode auto-delegates to Explore: you'll mostly use it without thinking about it, and the model itself can even self-enter plan mode when asked. In OpenCode, the Plan agent can auto-invoke subagents the same way (subagent delegation is a normal primary-agent capability), or you can call it explicitly with @explore; entering Plan mode itself, however, is a deliberate user action via Tab.

For custom subagents:

.claude/agents/doc-fetcher.md:

---

name: doc-fetcher

description: Fetches and summarizes external library documentation. Use when the user references a library and we need to understand its current API.

tools: WebFetch, Read, Write

---

You are a documentation researcher. Given a library name and a topic, fetch the official docs, extract only the API surface relevant to the topic, and write a focused summary to `tmp/docs-{library}.md`. Don't paste full pages: extract the patterns and signatures we need.

Invocation differs more in style than in capability: both tools auto-invoke subagents based on description and both support explicit invocation. OpenCode surfaces @subagent-name as a first-class invocation in autocomplete, which makes the explicit form more reachable; Claude Code leans more on description-based auto-pick. Same primitives, slightly different defaults in how often you'll reach for which.

The general rule, identical in both: if a task involves a lot of reading that won't be relevant to the final answer, it belongs in a subagent.

Part 5: Connecting to the world

12. MCP, used honestly

MCP (Model Context Protocol) is a standardized way to expose external tools (Slack, Notion, your database, GitHub, whatever) to the agent. Both Claude Code and OpenCode support MCP using the same protocol. A server written for one works in the other.

Configuration shape differs cosmetically:

In Claude Code, servers are typically configured via the CLI (claude mcp add ...) or in a project-level .mcp.json file.

The pitch is real in both tools: connecting the agent to your tools means it can act on your work, not just talk about it. Reading a Linear ticket, posting a Slack update, querying production read-replicas: all reasonable.

The honest qualification, which applies in both tools: MCP is not always the right answer, and some experienced users have moved away from heavy MCP use in favor of simple CLIs. The reasoning: an MCP server abstracts the underlying tool into a fixed set of operations, and every operation pays a context cost up front in tool descriptions. A CLI is just a CLI: the agent can read its --help, compose flags, pipe outputs, and improvise. For stateless tools (GitHub, AWS, Jira), gh, aws, and jira CLIs are often more flexible than their MCP equivalents.

OpenCode's docs explicitly call this out: "MCP servers add to your context, so you want to be careful with which ones you enable. Certain MCP servers, like the GitHub MCP server, tend to add a lot of tokens and can easily exceed the context limit."

A useful working model: use MCP for stateful or auth-heavy services where the protocol layer is genuinely doing work (Playwright is the canonical example: managing a browser session is hard). Use CLIs for everything else. Don't install ten MCP servers because they exist; install one when there's a real reason.

Part 6: A complete worked example, twice

This is the section the rest of the crash course points at. One realistic task, every concept, both tools. Run once in Claude Code, then once in OpenCode. Watch the differences and notice how few of them matter.

Setup (shared). You have an Express + TypeScript API. You want to add rate limiting on the public endpoints: 60 requests per minute per IP for unauthenticated traffic, using your existing Redis instance. Tests must pass; the existing session middleware must keep working.

6A. The Claude Code version

Step 1: Bootstrap CLAUDE.md. Open Claude Code, run /init. The draft is fine but bloated; you delete most of it down to:

# api-service

## Stack

Express 4, TypeScript, Redis (existing instance, see src/redis.ts).

## Commands

- `npm test`: vitest, hits a real Redis on port 6380

- `npm run lint`: eslint

- `npm run build`: tsc

## Critical rules

- All middleware goes in `src/middleware/`. Routes wire it in `src/app.ts`.

- The Redis client in `src/redis.ts` is shared. Don't create a second one.

- Tests assume Redis db 1; production uses db 0. Don't hardcode db numbers.

Step 2: Plan mode. Shift+Tab twice. Brief:

> Add rate limiting to public API endpoints. 60 requests per minute per
> IP for unauthenticated requests. Use the existing Redis instance.
> Don't break the session middleware. Include standard rate-limit
> response headers.

Claude explores via the built-in Explore subagent. Your main conversation stays clean. After a minute it returns a plan: middleware in src/middleware/rate-limit.ts, applied to four identified public routes, returning 429 with the right headers, with tests covering under/at/over limit and X-Forwarded-For extraction.

You read it. The IP extraction is a security footgun (trusting X-Forwarded-For regardless of proxy config), and the plan doesn't say what happens when Redis is down. You push back:

> Two changes: (1) Only trust X-Forwarded-For if `app.set('trust proxy', ...)`
> is configured. Fall back to req.ip otherwise. (2) When Redis is unavailable,
> fail open with a logged warning.

Claude updates the plan. You ask it to save the final plan to docs/plans/rate-limit.md.

Step 3: Implement. Approve the plan. Shift+Tab to exit plan mode. Claude installs the dep, writes the middleware, edits src/app.ts to wire it up, writes the tests. Edits and writes go through silently because they're in your allow list.
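For orientation, the core of such a middleware could look like the sketch below. It is not the code Claude wrote in this session: it swaps Redis for an in-memory Map so it runs standalone, uses a fixed one-minute window, and the names (`createLimiter`, `clientIp`) and the `RateLimit-*` header names are assumptions for illustration.

```javascript
// Sketch only: an in-memory fixed-window limiter standing in for the Redis-backed one.
// Old buckets are never evicted here; with Redis, key expiry would handle that.
function createLimiter({ limit = 60, windowMs = 60_000, now = Date.now, store = new Map() } = {}) {
  return function check(key) {
    try {
      const windowStart = Math.floor(now() / windowMs) * windowMs;
      const bucket = `${key}:${windowStart}`;
      const count = (store.get(bucket) ?? 0) + 1;
      store.set(bucket, count);
      return {
        allowed: count <= limit,
        headers: {
          "RateLimit-Limit": String(limit),
          "RateLimit-Remaining": String(Math.max(0, limit - count)),
          "RateLimit-Reset": String(Math.ceil((windowStart + windowMs - now()) / 1000)),
        },
      };
    } catch (err) {
      // Fail open: if the store is unavailable, log a warning and let the request through.
      console.warn("rate limiter store unavailable, failing open:", err.message);
      return { allowed: true, headers: {} };
    }
  };
}

// Only trust X-Forwarded-For when the app is configured to sit behind a proxy.
function clientIp(req, trustProxy) {
  const forwarded = req.headers["x-forwarded-for"];
  if (trustProxy && typeof forwarded === "string") {
    return forwarded.split(",")[0].trim();
  }
  return req.ip;
}

// Demo with a frozen clock so all three calls land in the same window.
const check = createLimiter({ limit: 2, now: () => 1_000 });
const ip = clientIp({ ip: "10.0.0.1", headers: { "x-forwarded-for": "203.0.113.7" } }, false);
console.log(ip, check(ip).allowed, check(ip).allowed, check(ip).allowed);
// prints: 10.0.0.1 true true false
```

In the real middleware, the Map would give way to calls on the shared Redis client, and a disallowed result would become a 429 response carrying the same headers.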

Step 4: Compact mid-task. The conversation is at maybe 40K tokens after the exploration and writing phases. You're not done (tests still need to pass), but the early exploration is irrelevant now.

> /compact keep the rate-limit implementation decisions, the file paths
> we touched, and the failing-open-on-redis-down requirement

Conversation continues at maybe 8K tokens. Recall improves immediately.

Step 5: Hooks catch the failing tests. Claude runs npm test: eleven pass, two fail because Redis state is leaking between tests. Your PreToolUse hook on Bash watches for git commit, runs the test suite first, and exits with code 2 when anything fails (as written, it assumes jq is installed):

{
  "hooks": {
    "PreToolUse": [
      {
        "matcher": "Bash",
        "hooks": [
          {
            "type": "command",
            "command": "if jq -r '.tool_input.command' | grep -q '^git commit'; then npm test --silent || { echo 'Tests failing: fix before committing' >&2; exit 2; }; fi"
          }
        ]
      }
    ]
  }
}

Claude tries to commit, hook blocks it, Claude reads the error, adds await redis.flushDb() to test setup, runs tests again, all pass. Hook lets the commit through. You did nothing for this loop.

Step 6: Subagent for the docs lookup. You realize the 429 response body should follow your team's standard error envelope, but you can't remember the exact field names. You also want to verify the rate-limiter-flexible API. You delegate the second one: "Use the doc-fetcher agent to look up rate-limiter-flexible's consume() API."

The subagent fetches the docs, writes a focused summary to tmp/docs-rate-limiter.md. Your main conversation sees three lines, not three pages.

Step 7: Save it as a skill. This is the third API in two months you've added rate limiting to.

> Create a skill at ~/.claude/skills/add-rate-limiting/SKILL.md based on
> what we just did. Capture: plan first, fail open on Redis errors,
> trust-proxy check for X-Forwarded-For, standard rate-limit headers,
> tests including IP extraction.

Claude generates it. Next project, you'll type "add rate limiting" and the model will pull this in automatically.

6B. The same example in OpenCode: what changes

Run the same task in OpenCode and most of it is byte-identical: same /init and trim, same plan iteration, same /compact mid-task, same self-correcting test loop, same skill saved at the end. Four steps actually differ. Everything else is decoration.

Step 2: Plan mode is an agent, not a state. Press Tab to switch from the Build agent to the Plan agent. Same exploration via the built-in Explore subagent (you can also invoke it explicitly with @explore), same plan iteration, same save to docs/plans/rate-limit.md. Architecturally cleaner: plan mode in OpenCode is an agent whose default permissions set edit and bash to ask, meaning writes need your explicit approval rather than being blocked outright. (You can tighten to deny in opencode.json if you want behavior closer to Claude Code's plan mode.)

Step 5: The hook becomes a plugin. Same idea, block git commit if tests fail, written as a JavaScript module subscribing to tool.execute.before:

// .opencode/plugins/test-before-commit.js
// Blocks `git commit` whenever the test suite fails.
export const TestBeforeCommitPlugin = async ({ $ }) => {
  return {
    // Runs before every tool call; throwing here aborts the call.
    "tool.execute.before": async (input, output) => {
      if (
        input.tool === "bash" &&
        output.args.command?.startsWith("git commit")
      ) {
        // nothrow() lets us inspect the exit code instead of throwing on failure.
        const result = await $`npm test --silent`.nothrow();
        if (result.exitCode !== 0) {
          throw new Error("Tests failing: fix before committing");
        }
      }
    },
  };
};

The expressiveness gain is real: plugins can shell out via Bun's $, manipulate args, log structured events, and subscribe to richer events than shell hooks can practically reach. Hooks can do the same things with enough shell, but plugins make the intent readable.

Step 6: Subagent invocation is more visible. Both tools auto-invoke subagents by description match, and both support explicit invocation. The difference is idiomatic: OpenCode surfaces @subagent-name as a first-class invocation method (it appears in autocomplete), so in practice you'll type @doc-fetcher look up rate-limiter-flexible's consume() API more often than you'd reach for an equivalent in Claude Code. Same primitives underneath; OpenCode's UI just makes the explicit form more reachable.

Step 7: The skill file is byte-identical. Save to ~/.config/opencode/skills/add-rate-limiting/SKILL.md. The frontmatter and content are the same format Claude Code uses. This skill works in Claude Code unchanged if you ever switch back, and a Claude Code skill works in OpenCode without porting. The migration story made literal: not "easy port," actually "no port."

What to notice

The eight context decisions that got made are the same in both runs. The keybinds, command names, and config syntaxes differ. The thinking doesn't.

That's the proof of the thesis. The concepts are the tool; the configs are decoration. If you learn to think about context this way, you can switch agentic coding tools whenever it suits you, and bring everything you know with you.

Part 7: Where to run it, and how to grow

13. Terminal, IDE, or desktop?

Both tools run in several places.

Claude Code: terminal, VS Code / JetBrains plugins, the Claude desktop app, and cloud-hosted variants for async work.

OpenCode: terminal (TUI is the flagship), desktop app (in beta on macOS/Windows/Linux), IDE extension via ACP support, web interface via the SDK, plus GitHub and GitLab integrations.

Pick based on what you're doing. For greenfield development with lots of file editing, IDE plugins are hard to beat: you watch the diff happen. For long-running tasks where you want notifications and parallel sessions, the terminal is fine. For non-coding work and scheduled jobs, the desktop apps are more pleasant.

The strong recommendation, in either tool: start in the terminal or an IDE plugin. Once you understand what's happening underneath (which files the model reads, which commands it runs, which decisions it makes), every other interface becomes a thin wrapper you can read through. If you start in a heavily abstracted UI, you'll struggle to debug when things go sideways, because you won't know what can go sideways.

14. Build a personal context library, slowly

Once you have a few projects using either tool, you'll notice you're writing similar things into every rules file: your code style, your commit conventions, your testing philosophy. Stop copy-pasting.

Put the shared parts in your home config and reference them from each project's rules file:

~/.claude/:

    # CLAUDE.md
    @~/.claude/style/typescript.md
    @~/.claude/style/commits.md

    ## Project-specific
    [only the things unique to this project]

Same idea works for skills. A commit-message skill or code-review skill in ~/.claude/skills/ (which OpenCode also reads) or ~/.config/opencode/skills/ is available everywhere automatically.

The discipline: don't pre-build this. It's tempting to spend a Saturday designing the perfect personal setup. Resist. Every entry should come from a real failure: a moment where the model got something wrong, or you typed the same thing for the third time. Build from regret, not from imagination. The library that grows from real friction is small and useful; the one designed in advance is large and ignored.
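
One way to keep the library small is to check now and then what a project's rules file actually pulls in. The sketch below lists the @-references in a rules file. listImports is a hypothetical helper, and it assumes each reference sits on its own line starting with @, as in the example above; references written inline in a sentence are not detected.

```javascript
// Hypothetical helper: returns the paths referenced via lines that
// start with "@" in a rules file (CLAUDE.md-style imports).
function listImports(rulesText) {
  return rulesText
    .split("\n")
    .map((line) => line.trim())
    .filter((line) => line.startsWith("@") && line.length > 1)
    .map((line) => line.slice(1));
}

const rules = [
  "# CLAUDE.md",
  "@~/.claude/style/typescript.md",
  "@~/.claude/style/commits.md",
  "## Project-specific",
].join("\n");

// Print one referenced path per line.
for (const ref of listImports(rules)) console.log(ref);
```

If that list keeps growing without a matching failure behind each entry, the library is probably being designed rather than earned.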

15. Memory beyond the basics

Built-in resume + a well-tended rules file covers most people most of the time. If you need more (search across past conversations, persistent project notes, knowledge that survives /clear), you have options:

- A notes/ folder in the project, with the agent writing structured notes after major work. Cheap, durable, greppable. Surprisingly effective. Works in both tools without any setup.

- A memory MCP server. Several exist; they give the agent an explicit save/recall API. Works in both tools.

- Vector search over your conversation history for "have I solved this before" queries. Tool-specific implementations exist; check the ecosystem pages.

Pick based on what you actually want to remember. If it's "decisions and gotchas for this codebase," a notes/ folder is enough. If it's "everything I've ever told the model across all projects," you want something heavier. Don't install infrastructure you don't have a problem for.
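
For the notes/ folder option, what keeps it greppable is a consistent shape for each entry. The sketch below shows one possible shape. formatNote and its fields (date, title, decisions, gotchas) are hypothetical, chosen to match the "decisions and gotchas" case, and the sample entry is illustrative only.

```javascript
// Hypothetical note format: one dated heading per entry, with optional
// "Decisions" and "Gotchas" sections rendered as bullet lists.
function formatNote({ date, title, decisions = [], gotchas = [] }) {
  const bullets = (items) => items.map((item) => `- ${item}`).join("\n");
  const sections = [`## ${date}: ${title}`];
  if (decisions.length > 0) sections.push(`### Decisions\n${bullets(decisions)}`);
  if (gotchas.length > 0) sections.push(`### Gotchas\n${bullets(gotchas)}`);
  return sections.join("\n\n") + "\n";
}

// Illustrative entry; the content is made up for the example.
console.log(
  formatNote({
    date: "2026-04-01",
    title: "Rate limiting",
    decisions: ["Use rate-limiter-flexible for request throttling"],
    gotchas: ["git commit is blocked while tests fail"],
  })
);
```

You could paste a template like this into the rules file so the agent writes entries in the same shape every time; a search for "### Gotchas" across notes/ then returns every recorded pitfall.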

How to actually get good at this

Reading this crash course does not make you good at agentic coding. Using it does, and the path looks like this:

You start manual. You feel the friction: every approval prompt, every "wait, why doesn't it know X." That friction is the curriculum. Each piece of friction maps to one of the fifteen concepts above:

- "Why does it keep forgetting the auth pattern?" → rules file is missing or bloated.

- "Why did it just delete my migrations folder?" → permissions weren't tight enough.

- "Why is it so slow after an hour?" → context rot; you needed /compact.

- "Why am I typing the same thing every Monday?" → that's a skill.

- "Why did my tests pass locally but break in CI?" → that's a hook or plugin.

- "Why does exploring the codebase always pollute my conversation?" → that's a subagent.

Build the response to each problem when you hit it, not before. Your rules file should be ten lines, then twelve, then twenty: each line earned by a mistake it now prevents. Your skills folder should have one skill before it has ten. Your hooks/plugins should exist because something broke without them, not because someone said hooks were powerful.

The 80/20 isn't memorizing fifteen concepts. It's noticing which one a given problem belongs to, fast enough that you reach for the right tool. That noticing is the skill, and it only comes from time spent watching agentic coding tools succeed and fail on real work.

The portability dividend. Once you've built that noticing for one tool, it transfers. The friction-to-concept map above is identical in Claude Code and OpenCode. Pick one to start, learn its specific config, and the moment you decide to switch (for cost reasons, model preference, license, or a new tool that doesn't exist yet) your knowledge comes with you. The configs change. The thinking doesn't.

Start with one project. Use plan mode for everything non-trivial. Watch your context. The rest builds itself.

Quick reference

The 15 concepts in one line each

- Tools take actions, not just answers. Write briefs, not questions.

- Plan mode (Shift+Tab in CC, Tab to Plan agent in OC). Read-only investigation before any writes.

- Permissions discipline. Start manual, codify allow/deny over time. Never auto-approve rm -rf.

- Context rot is real. Recall degrades long before the window fills, and every turn re-bills the whole context.

- /clear vs /compact. New task vs same task lighter. Pick wrong and you lose the wrong thing.

- Resume sessions. Pick up where you left off without re-explaining; pair with saved plan files.

- CLAUDE.md / AGENTS.md is a table of contents. Under ~2,500 tokens, references load on demand.

- Slash commands. Saved prompts you trigger by name when you keep typing the same thing.

- Skills. Saved expertise the model auto-invokes by description; progressive disclosure via SKILL.md plus references.

- Hooks (CC) / Plugins (OC). Deterministic guardrails on lifecycle events. Not optional for production.

- Subagents. Isolated context windows for noisy reads (codebase exploration, doc fetching).

- MCP, used carefully. Standard protocol for external tools; CLIs often beat MCP for stateless services.

- Where to run. Start in terminal or IDE plugin so you can see what the agent is doing.

- Personal context library, slowly. Share configs across projects; build from regret, not imagination.

- Memory beyond basics. Built-in resume + good rules file is enough for most. notes/ folder if you need more.

Command quick-ref

| Want to... | Claude Code | OpenCode |
|---|---|---|
| Initialize project rules file | /init | /init |
| Enter plan mode | Shift+Tab (twice) | Tab (cycle to Plan agent) |
| Wipe conversation, start fresh | /clear | /new (or /clear) |
| Summarize and continue | /compact | /compact (or /summarize) |
| Resume a saved session | claude --resume | /sessions (or /resume) |
| Jump back to a previous message | Esc Esc | /undo (also reverts files) |
| Undo last message + file changes | (not bundled) | /undo (needs git) |
| Redo (reverse the last /undo) | (not bundled) | /redo |
| Share session | /share | /share |

File location quick-ref

| What | Claude Code | OpenCode |
|---|---|---|
| Project rules | CLAUDE.md | AGENTS.md (also reads CLAUDE.md as fallback) |
| Project permissions | .claude/settings.json | opencode.json |
| Project commands | .claude/commands/*.md | .opencode/commands/*.md |
| Project skills | .claude/skills/<name>/SKILL.md | .opencode/skills/<name>/SKILL.md (also reads .claude/skills/) |
| Project hooks/plugins | .claude/settings.json (hooks block) | .opencode/plugins/*.{js,ts} |
| Project subagents | .claude/agents/*.md | .opencode/agents/*.md |
| Personal/global rules | ~/.claude/CLAUDE.md | ~/.config/opencode/AGENTS.md |
| Personal commands/skills/agents | ~/.claude/{commands,skills,agents}/ | ~/.config/opencode/{commands,skills,agents}/ |
| MCP servers | .mcp.json (mcpServers block) | opencode.json (mcp block) |

Extension type decision tree

    Need to do the same thing repeatedly, manually?
      → Slash command (CC) / Custom command (OC)

    Want the model to apply expertise automatically when a task matches?
      → Skill

    Need something to happen every single time, no model judgment?
      → Hook (CC) / Plugin (OC)

    Need a chunk of work done in isolation so it doesn't pollute main context?
      → Subagent

When something feels wrong

    Model apologizing without progress, rewriting the same code,
    hallucinating variables, contradicting earlier constraints?
      → Context is poisoned. Stop typing. Run /compact or /clear.
        Don't try to fix it with another prompt.


Quiz: 50 Questions on the 15 Concepts

A comprehensive bank covering every concept in the crash course. Each session shows a fresh batch of 18 questions, so retakes give you new material. Pick your tool above to ground the answers in your toolchain.