From Digital FTE to Production Worker: A 90-Minute Crash Course

15 Concepts, ~80% of Real Use - Durable Execution, Triggers, Flow Control

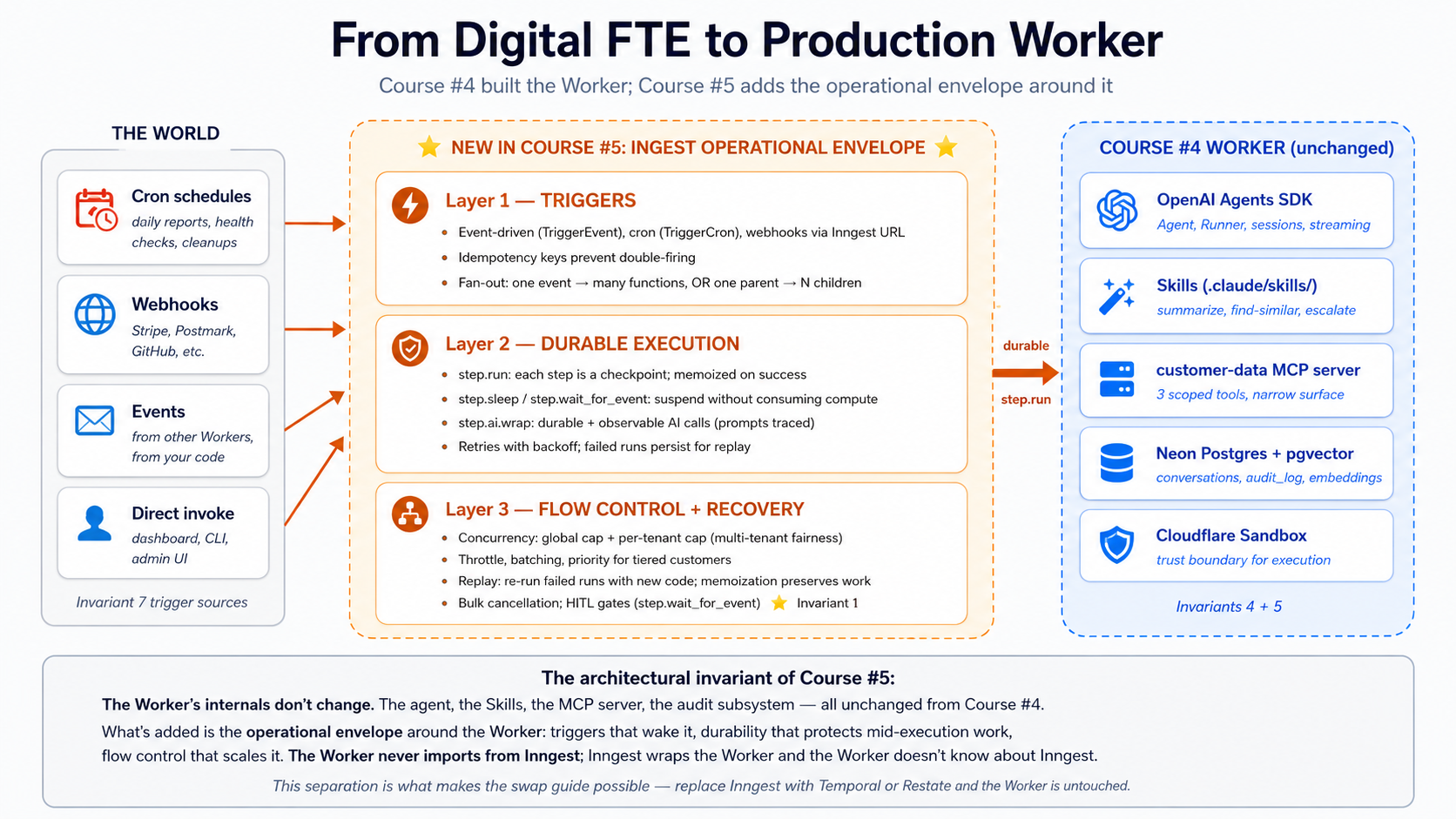

A continuation crash course. This is course #5 in the agentic-coding track. The previous course, From Agent to Digital FTE, ended with a customer-support Worker: same OpenAI Agents SDK foundation, three portable Skills, a Neon Postgres system of record, and a custom MCP server. That Worker runs only when you call it. You open Claude Code or OpenCode, you type, the agent responds. A real Production Worker has no human typing at the prompt.

The single insight that makes everything else click: turning a Digital FTE into a Production Worker is one architectural addition: a durable execution engine that lets the world call the Worker (instead of you), survives crashes mid-flight, and rate-limits itself at scale. Inngest is the durable-execution platform we use; the patterns transfer one-to-one to Temporal, Restate, or Dapr Agents, but Inngest's hosted Hobby tier gives the friendliest on-ramp: free, no credit card, one-command dev server, a dashboard you can poke at while you code.

In plain English: the Digital FTE from Course #4 is a function you call. The Production Worker this course builds is a function the world calls, through scheduled cron jobs, through webhooks from your inbox and billing system, through events fired by other Workers. When it runs, it runs durably: a crash halfway through a six-step refund flow does not lose the first three steps' work; the Worker resumes from where it broke. And when 500 customers email at once, the Worker handles them at a controlled rate that does not blow your OpenAI rate limit or your Postgres connection pool. None of that machinery is yours to build; your code stays just functions decorated with @inngest.create_function.

Day AI, the CRM for AI-native companies, calls Inngest "the nervous system" of their product. Two founding engineers describe it the same way, independently. Their stack uses every primitive this course teaches: durable LLM workflows, wait-for-event coordination, replay on failure, debounce + throttle + concurrency, and multi-tenant fairness so one organization's spike does not slow everyone else. The framing is not curriculum branding; it is the production language of an AI-native company in the market.

A single agent crash mid-workflow is annoying. A workforce of fifty agents handling customer-facing work without a nervous-system substrate is impossible: you adopt a platform that gives it to you, or spend six months building a worse version yourself. Four properties make durable execution uniquely important for agents:

- Each step costs real money. Naive retry after a crash re-pays for steps that already succeeded; step memoization (Concept 7) pays once.

- Workflows compound failure. A six-step agent at 95% per-step reliability has a 26% chance of failing somewhere. Step memoization plus targeted retries lift overall reliability to ~99.7%.

- Side effects are real-world. Agents email customers, charge cards, post to Slack. Step memoization plus provider-level idempotency keys make these safe.

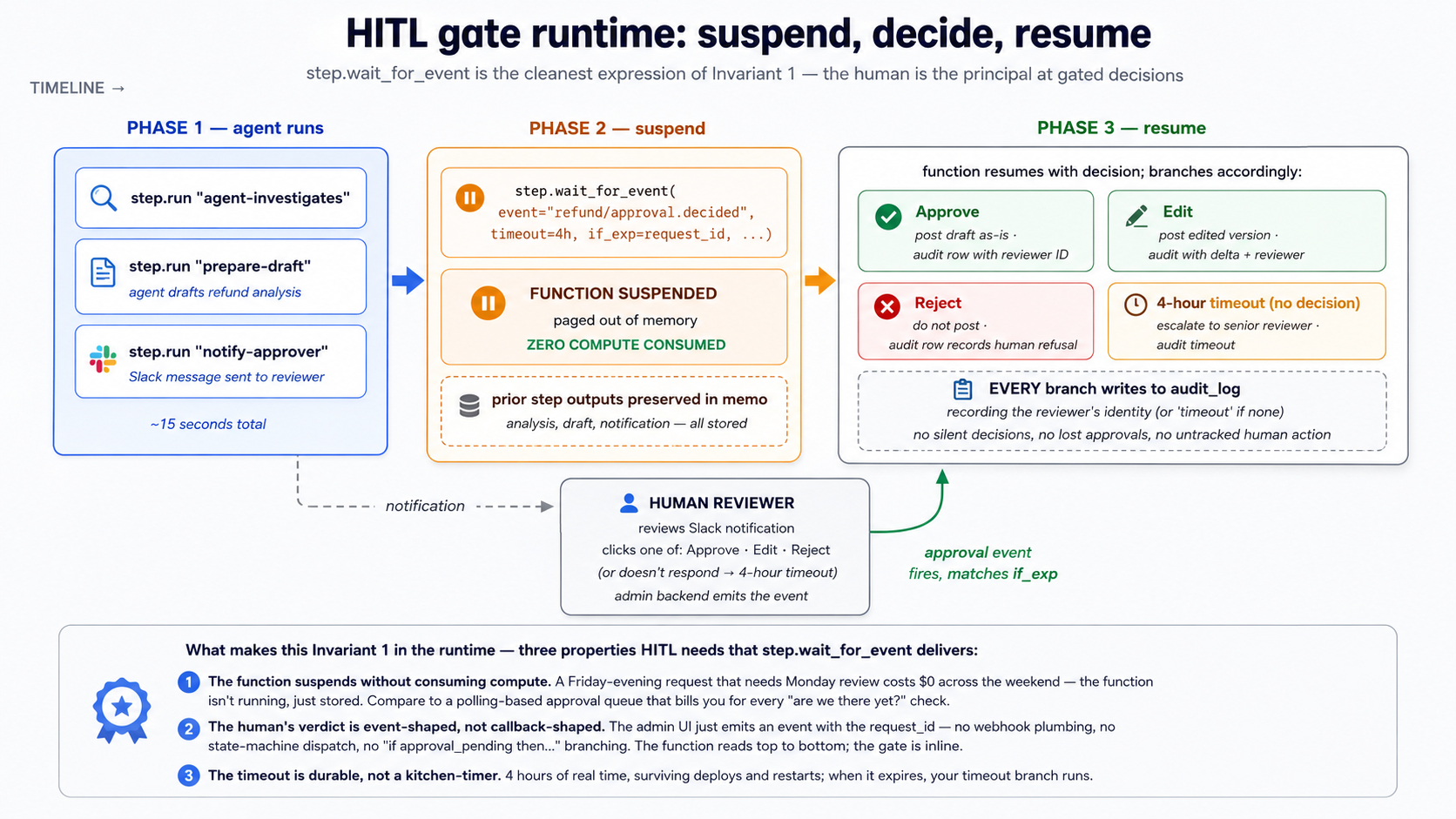

- Agents need human approval at high-stakes moments. Without

step.wait_for_event(Concept 15), you build an approval queue yourself: database table, polling, timeout handling, audit trail. That is a project, not a feature.

Start here: the architectural placement and the 15-concept cheat sheet

Where this course sits in the architecture. The Agent Factory thesis describes Seven Invariants any production agent system must satisfy. Courses #3 and #4 covered Invariants 4 (engine) and 5 (system of record). This course covers two more, plus a piece of Invariant 1:

- Invariant 7: The world calls the system. Triggers (schedules, webhooks, inbound API calls, events from other Workers) wake the Worker. Inngest is one realization.

- Invariant 1, in part: The human is the principal. Approval gates are where authored intent re-enters the runtime.

step.wait_for_eventis the cleanest expression on any platform: the agent suspends, a human emits the awaited event, the agent resumes. - Durable execution as a thesis-implicit invariant. Audit answers "what happened?"; durability answers "do it again from where it broke." Replayable, retriable, resumable after failure.

The 15 concepts, at a glance. A failure in production almost always traces to one of three root causes: a trigger that did not fire (or fired twice), an execution that broke and lost state, or a flow-control gap that let one customer's traffic starve everyone else. The 15 concepts map onto those three layers. This is the first-pass version: concept plus a one-line gist. The full diagnostic table (with the question each concept answers) is in the Quick reference at the end, where you will reach for it during a build.

| # | Concept | One-line gist |

|---|---|---|

| Triggers | how the world calls the Worker | |

| 1 | Events vs requests | A request is sync and someone waits; an event is async and the world has moved on. |

| 2 | Cron triggers | A schedule wakes the function. One line: TriggerCron(cron="0 9 * * *"). |

| 3 | Webhook triggers | An inbound HTTP payload becomes a named event; your function reacts to the name. |

| 4 | Idempotency and event semantics | Event IDs and step names make a duplicate event (or retry) a no-op. |

| 5 | Fan-out and sub-agent delegation | One event, N subscribing functions; or one parent firing N child events. |

| Durable execution | keeping the Worker correct when something breaks | |

| 6 | step.run and the durable function model | Each step.run is a checkpoint; the function can crash between steps and resume. |

| 7 | Memoization, the mechanic underneath | Completed steps return stored output instead of re-executing. |

| 8 | step.sleep and step.wait_for_event | Both suspend the function durably, for a duration or for an event. |

| 9 | Retries, error handling, dead-letter | Automatic backoff retries; after N tries the failed run persists for replay. |

| 10 | step.run for AI calls in Python | Wrap OpenAI calls in step.run; step.ai.infer offloads inference (step.ai.wrap is TypeScript-only). |

| Flow control | keeping the Worker healthy under load | |

| 11 | Concurrency and throttling | concurrency caps active runs; throttle caps starts-per-second. |

| 12 | Priority and fairness | Priority orders the queue; per-key concurrency gives each tenant a fair share. |

| 13 | Batching | Accumulate events into one batched function call for cheap bulk work. |

| 14 | Replay and bulk cancellation | Replay failed runs with new code; bulk-cancel runs you no longer want. |

| 15 | HITL gates with step.wait_for_event | The function suspends until a human approves, then resumes with the decision. |

Once you have this mapping, the rest of the document is mostly mechanics. A failure in production traces to one of: a trigger that did not match (event name typo, schedule did not fire), a step that broke without memoization (so retry restarts the whole flow), a flow-control gap that did not cap concurrency (so one customer drowned the others), or a HITL gate that timed out waiting (so the escalation never happened). The diagnostic table in the Quick reference tells you which.

Audience. This is the third intermediate-to-advanced crash course in the agentic-coding track. You need to have completed Courses #3 and #4 (or be comfortable with everything they taught), because this course extends the customer-support Worker from Course #4's Part 4 worked example. The OpenAI Agents SDK, sessions, streaming, function tools, sandboxing, Skills, Neon Postgres with pgvector, MCP servers, audit logging: all assumed.

Prerequisites. This page assumes five things.

- You have completed From Agent to Digital FTE. Non-negotiable. We pick up where Course #4 left off: same

chat-agent/project, same Skills, samecustomer-dataMCP server.- You have the Agentic Coding Crash Course discipline. Plan mode, rules files, slash commands, the read-first-then-write workflow.

- You have done at least one PRIMM-AI+ cycle. The Predict prompts in this course assume the rhythm.

- You have Node.js 20+ available, even if your agent is Python. The Inngest dev server is distributed as a Node CLI (

npx inngest-cli@latest dev).- You have a working mental model of "event-driven" vs "request/response." If "the world fires an event and zero, one, or many functions react to it" reads as familiar, you are calibrated. If not, Concept 1 gives you the shape.

How to read this page on first pass (click to expand)

- Expand on first read: anything labeled "What you'll see," "Sample run," "Expected output," "Verify." Runnable behavior to check predictions against.

- Skip on first read: long file listings in Part 4's worked example. The narrative above each block tells you what changed; you only need the file contents when you actually build.

- Optional throughout: the "Try with AI" blocks. Extension prompts for Claude Code or OpenCode connected to the Inngest dev-server MCP.

The goal of first pass is to internalize the three-layer model: triggers wake the Worker, durable execution keeps it correct, flow control keeps it healthy. Second pass with your hands on the keyboard is where you build.

Glossary: terms you'll meet (click to expand)

Each term is explained in context where it first appears; this list is a quick reference for terms most likely to trip a first-pass reader.

- Production Worker: A Digital FTE with an operational envelope: triggers that wake it, durable execution that survives failures, flow control that scales it under load.

- Event: A named, immutable message describing that something happened. Example:

{"name": "customer/email.received", "data": {"customer_id": "..."}}. The trigger surface. - Inngest function: A Python function decorated with

@inngest_client.create_function, declaring triggers and steps. The unit of durable work. - Step: A unit of work inside an Inngest function wrapped in

ctx.step.run(),ctx.step.sleep(),ctx.step.wait_for_event(), orctx.step.ai.infer(). Each step is independently retried and memoized. - Memoization: When a function crashes and restarts, Inngest re-runs the function code from the top but returns stored outputs for any

step.runwhose result is already cached. The function catches up to where it broke without redoing work. - Flow control: Per-function policies:

concurrency(max active runs),throttle(max starts per second),priority(queue order),batch_events(accumulate before invoking). - HITL (Human In The Loop): A function pauses to wait for human approval or input before continuing.

step.wait_for_eventis the primitive. - Replay: Re-running a failed function from where it broke, with the new code after a bug fix.

- Dev server: Inngest's local dev environment via

npx inngest-cli@latest dev. Dashboard athttp://127.0.0.1:8288; MCP endpoint at/mcp.

Current as of May 14, 2026. Verified against inngest-py 0.5.18 (released March 11, 2026), Inngest CLI v1+, and the Inngest Python quick start. The durable-execution architecture this course teaches does not change when the SDK does; the SDK is this year's interface to that architecture.

The Course #3 and #4 stack is the foundation of this course, not a stepping stone we move past. Your Part 4 Worker still uses Agent, Runner, function_tool, Skills from .claude/skills/, the customer-data MCP server, and the six-table audit-aware schema. What changes: those primitives now run inside Inngest functions that wrap each agent invocation in step.run() for durability, declare event/cron triggers, and apply concurrency and throttling policies. The Worker's internals do not change. The Worker's operational envelope does.

This is a Python-first course like its predecessors, using inngest-py, Inngest's Python SDK. The Inngest dev server itself is language-agnostic; it works with the official Python SDK identically to how it works with TypeScript or Go.

The dual-tool pattern continues. Sections that diverge between Claude Code and OpenCode have a switcher; pick one and the page syncs across visits.

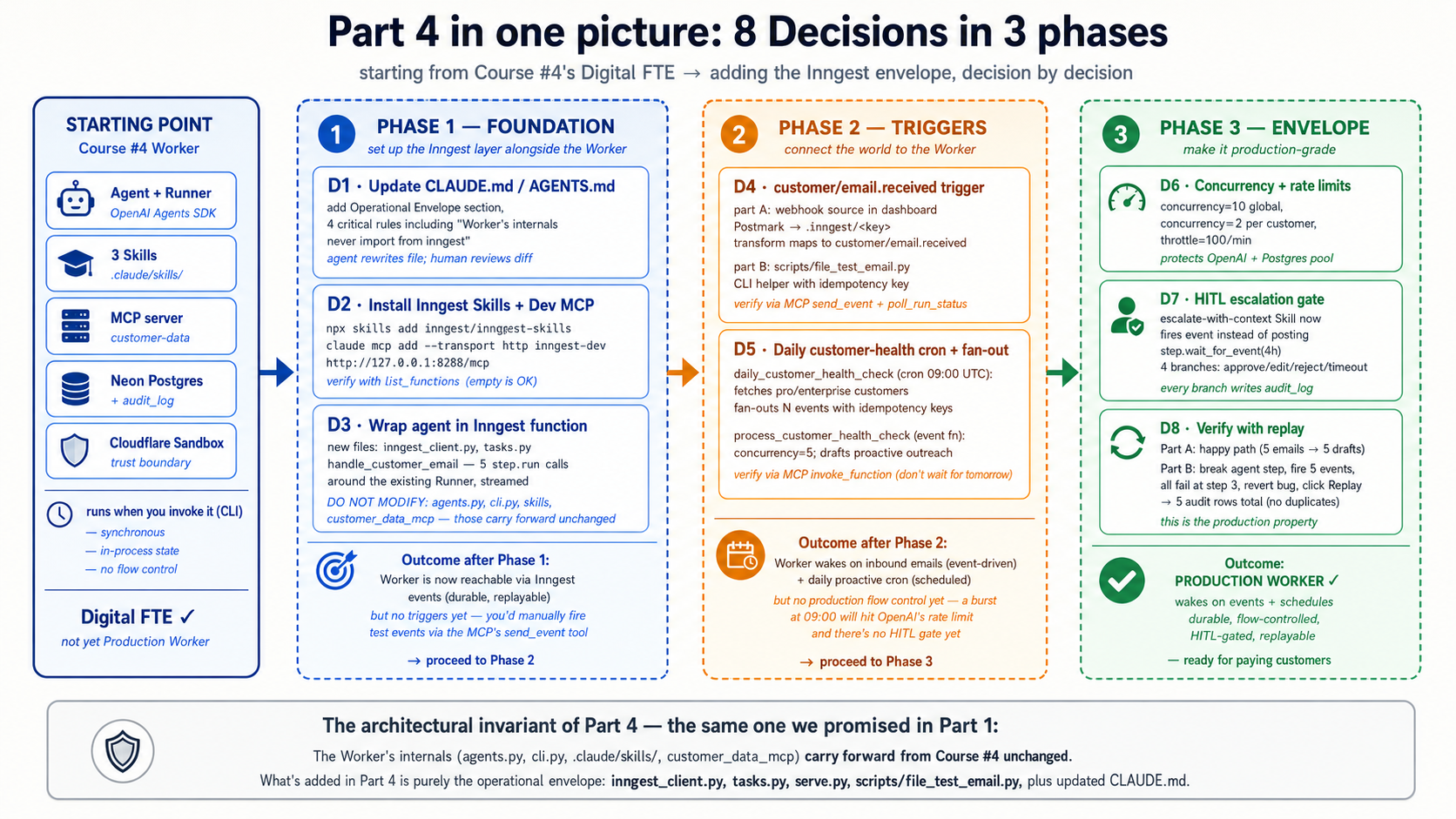

There is a complete worked example in Part 4: the customer-support Worker from Course #4 wrapped in an Inngest layer, with event triggers, cron health checks, HITL escalation gates, concurrency limits, and full replay support. Eight build decisions, same shape as Courses #3 and #4. If you learn better from doing than reading definitions, skim Parts 1-3 and jump to Part 4.

Architecture in one line. Engine = OpenAI Agents SDK + Cloudflare Sandbox (Course #3). Capability + Truth + Connector = Skills + Neon Postgres + MCP (Course #4). Operational Envelope = Inngest's triggers + durable execution + flow control (Course #5, this one). The Worker's internals are unchanged from Course #4; what is new is the layer above it that lets the world wake it, lets failures not lose state, and lets one Worker serve a workforce's worth of traffic. If only one sentence of this whole document sticks, that is the one.

The fifteen-minute quick win: see durability with your own eyes

Before you read the 15 concepts that explain why this architecture works, build the smallest possible version that actually works. Two files, four uv and npx commands, one shell session. By the end of this section you will have:

- one Inngest function with one

step.runand onestep.sleep - the Inngest dev server running locally with a dashboard at

http://127.0.0.1:8288 - a successful run you triggered from the dashboard

- a failed run you replayed after fixing the bug, watching the completed steps return from memo without re-executing

This is not the Part 4 worked example; that is the full Production Worker, eight Decisions, hundreds of lines. This is one screen. If you only have one sitting, do this, then come back for the concepts when you want to know why each piece was shaped the way it was.

Step 1. Create a fresh project directory and install the SDK plus a tiny web framework. (You can swap fastapi for any ASGI framework Inngest supports; FastAPI is the simplest.)

mkdir hello-inngest && cd hello-inngest

uv init

uv add inngest "fastapi[standard]"

Step 2. Write one file with one durable function. Save as hello.py:

# hello.py

import logging

from datetime import timedelta

import inngest

import inngest.fast_api

from fastapi import FastAPI

inngest_client = inngest.Inngest(

app_id="hello-inngest",

logger=logging.getLogger("uvicorn"),

is_production=False,

)

@inngest_client.create_function(

fn_id="greet-customer",

trigger=inngest.TriggerEvent(event="demo/greet"),

)

async def greet_customer(ctx: inngest.Context) -> dict[str, str]:

name = ctx.event.data.get("name", "friend")

greeting = await ctx.step.run("compose-greeting", lambda: f"Hello, {name}!")

await ctx.step.sleep("wait-fifteen-seconds", timedelta(seconds=15))

farewell = await ctx.step.run("compose-farewell", lambda: f"Goodbye, {name}.")

return {"greeting": greeting, "farewell": farewell}

app = FastAPI()

inngest.fast_api.serve(app, inngest_client, [greet_customer])

Three things to notice. The function shape is plain Python: an async def decorated with create_function. The two ctx.step.run calls wrap operations that should be memoized. The ctx.step.sleep between them suspends the function durably (the process can crash, restart, or be redeployed during the sleep; the run resumes at the next line when the timer fires).

Step 3. Start the function host in one terminal.

uv run uvicorn hello:app --reload --port 8000

You should see uvicorn report Started server process and Application startup complete. The function host is now listening at http://127.0.0.1:8000/api/inngest.

Step 4. In a second terminal, start the Inngest dev server.

npx inngest-cli@latest dev

The dev server prints a banner and opens a dashboard at http://127.0.0.1:8288. It auto-discovers the function host you started in Step 3.

Step 5. Open http://127.0.0.1:8288 in a browser. Click Functions in the sidebar; you should see greet-customer listed. Click Events in the sidebar, then Send event. Paste this payload and click Send:

{

"name": "demo/greet",

"data": { "name": "Sara" }

}

Step 6. Click Runs in the sidebar. You will see one run for greet-customer with status Running and a step labeled compose-greeting marked complete. Click into the run to see the step trace.

Step 7. Watch the wait-fifteen-seconds step. The dashboard shows it in a Sleeping state with the resume time. Nothing in your code is running. The uvicorn terminal is idle. After fifteen seconds the run resumes, compose-farewell completes, and the run status flips to Completed. Open the Output panel to see the returned dict.

Step 8. Now break it on purpose. In hello.py, add a small helper above greet_customer and have the step call it:

def fail_on_purpose() -> str:

raise RuntimeError("forced failure")

# ...inside greet_customer, replace the compose-farewell step:

farewell = await ctx.step.run("compose-farewell", fail_on_purpose)

Save the file; uvicorn auto-reloads. Send the same demo/greet event again from the dashboard. Watch the run: compose-greeting completes, wait-fifteen-seconds sleeps and resumes, compose-farewell retries with backoff (Inngest defaults to four attempts), then the run lands in Failed state with the RuntimeError visible in the step trace.

Now fix the bug: revert compose-farewell to the original lambda: f"Goodbye, {name}.". Save. In the dashboard, click the failed run, then click Replay. Watch the replay: compose-greeting completes in milliseconds (memo hit, no re-execution), wait-fifteen-seconds completes in milliseconds (memo hit), compose-farewell executes for real with the new code and succeeds. The run completes.

You just ran a durable function, watched a step sleep without consuming compute, broke it, fixed it, and replayed it. The next 90 minutes scale this up: real triggers (cron, webhook, fan-out), real durability (the agent invocation wrapped in step.run), real flow control (concurrency, throttle, priority), and the HITL gate that turns "the agent might mess this up" into "the agent drafts, a human approves, the action issues."

If something did not work, the most common Quick Win failures are: (1) the dev server cannot reach the function host (check that uvicorn is running on port 8000); (2) is_production=False is missing from the client constructor (without it, the SDK requires a signing key); (3) the function does not appear in the dashboard (uvicorn did not auto-reload; restart it manually); (4) a run hangs with no error and no progress (a de-synced host produces silent stalls; restart both the function host and the dev server together, and run one function host against one dev server). Four problems, four fixes, then keep going.

Part 1: Triggers, how the world calls the Worker

The Course #4 Worker runs when you call it. A real Production Worker runs when the world fires events: a customer emails, a webhook arrives, a cron fires at 09:00 daily, another Worker hands off work. Part 1's five concepts establish the event-driven mental model, the three trigger surfaces (cron, webhook, event), the semantics that prevent double-processing, and the fan-out patterns that let one event wake many Workers.

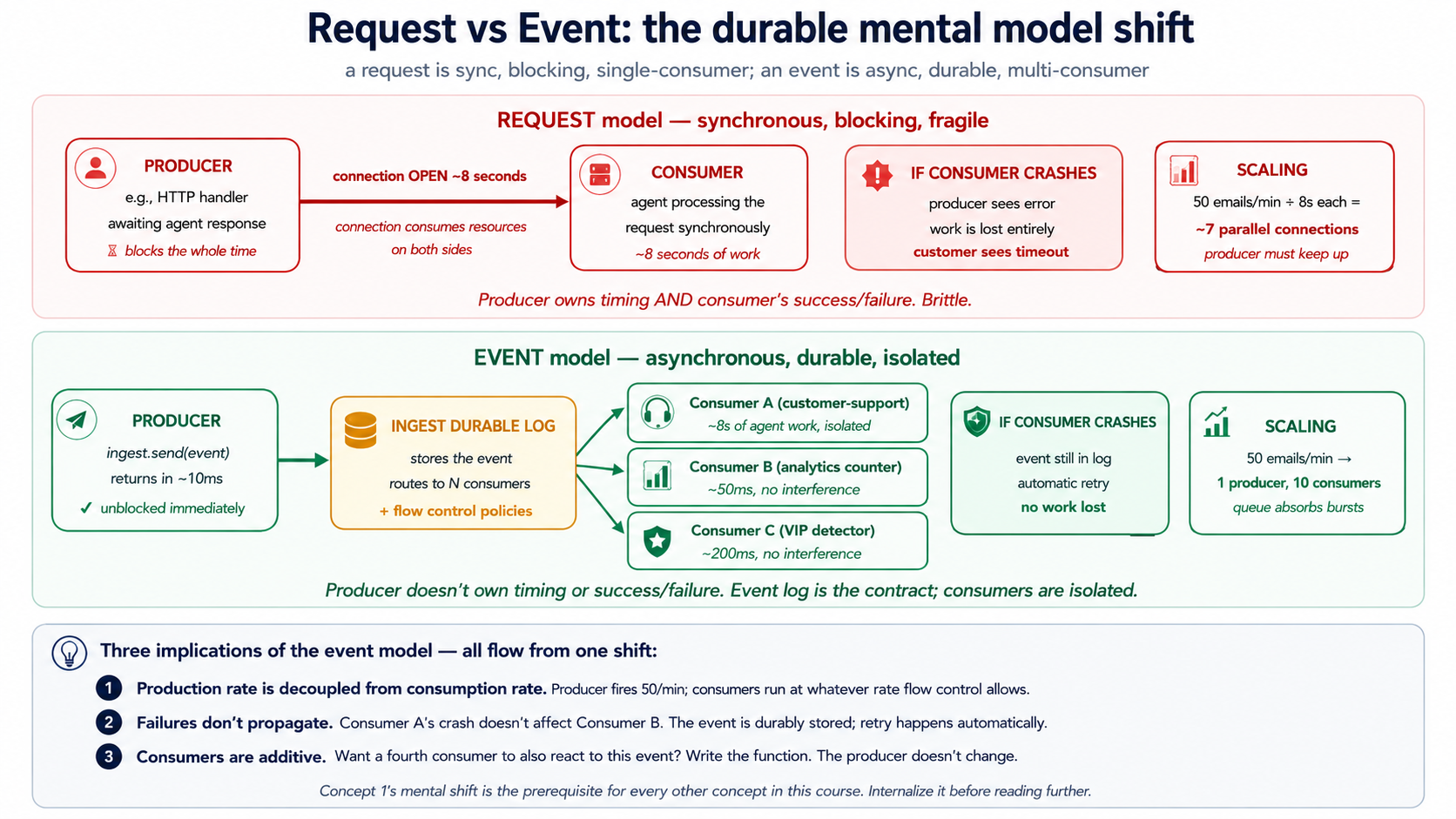

Concept 1: Events vs requests, the durable mental model shift

A request is a synchronous conversation. Someone calls; you handle; you return; they continue. A connection stays open; a human or service is waiting. If you crash, the caller gets an error. The Course #4 chat agent is a request: you typed, it streamed back, the conversation belonged to your terminal session.

An event is an asynchronous message. Something happened in the world (a customer signed up, an email arrived, a payment cleared), and the originator emits a named record of that fact. Zero, one, or many functions react to the event independently. No connection stays open. The originator does not know who is listening, does not wait for results, and is not blocked. The world has moved on.

# A request: I'm here, waiting, blocking

result = await agent.handle_customer_message(text=user_input)

print(result) # I unblock when the agent finishes

# An event: I fire-and-forget

await inngest_client.send(events=[

inngest.Event(

name="customer/email.received",

data={"customer_id": "c-4429", "body": email_body, "subject": subject},

),

])

# I return immediately. Somewhere else, one or more Inngest

# functions react to this event on their own schedule.

The shift sounds small. It is not. Once you think in events, durability and scale fall out almost for free, because:

- The producer cannot be slowed by the consumer (the email-receiver does not wait for the agent to finish drafting a reply).

- The consumer can crash and restart without losing the work (the event is durably stored; Inngest re-delivers it).

- New consumers can be added without changing producers (a second function, say an analytics counter, can subscribe to

customer/email.receivedwithout the email-receiver knowing). - Backpressure becomes a flow-control policy, not a code change (Inngest caps concurrency; the producer keeps firing; events queue).

The whole rest of this course is implications of this single mental shift.

PRIMM, Predict. Your customer-support Worker takes 8 seconds to respond to an email: three seconds for the agent's reasoning, four seconds for two MCP tool calls, one second for the database write. At peak load you receive 50 emails per minute. If you use the request model (the email parser blocks until the agent finishes), how many parallel HTTP connections to your email parser does that imply? If you use the event model (the email parser fires an event and returns immediately), how many? Confidence 1-5.

The answer: request model needs about 7 concurrent parsers (50/min × 8 seconds = ~6.7 parallel handlers, plus headroom). Event model needs one parser (it fires the event and returns in ~10ms; the event queue absorbs the 50/min spike; Inngest functions consume the queue at whatever concurrency you allow). The event model decouples production rate from consumption rate. That is not just a scaling fact; it is an architectural one. The event becomes a durable boundary between "what happened in the world" and "what the Worker does about it." Crash the consumer mid-processing and the event is still there to retry. Add three more consumer types and the producer does not notice. Events are how you stop owning the timing of work.

Try with AI

Walk me through three scenarios. For each, classify it as REQUEST-MODEL

or EVENT-MODEL, and explain which one fits better:

A) A user clicks "Submit refund request" in the support portal and

expects to see "Refund issued: $30" within 2 seconds.

B) A nightly cron job at 02:00 runs a customer-health-check across

all 5,000 customers and writes a report to Slack.

C) A customer sends an email to support@; we want a draft response

ready within 60 seconds for the on-call agent to review and send.

For each, name (a) what the human's expectation of timing is and

(b) what failure looks like if the model crashes mid-execution.

Concept 2: Cron triggers, work that runs because time passed

The simplest trigger is the clock. Many things a Production Worker does are not reactions to outside events; they are scheduled work: daily health reports, weekly cleanups, hourly recalculations. Inngest's cron trigger is one line of code.

import inngest

@inngest_client.create_function(

fn_id="daily-customer-health-check",

trigger=inngest.TriggerCron(cron="0 9 * * *"), # 09:00 every day, UTC

)

async def daily_health_check(ctx: inngest.Context) -> dict[str, int]:

"""Run a customer-health pass for every Pro/Enterprise customer."""

customers = await ctx.step.run("fetch-pro-customers", fetch_pro_customer_ids)

# fan out: one event per customer, one Worker run per event

await ctx.step.run("fan-out", fan_out_per_customer_events, customers)

return {"customers_scheduled": len(customers)}

Three things to notice:

-

The schedule is just standard cron syntax.

0 9 * * *is 09:00 UTC every day;*/15 * * * *is every 15 minutes;0 9 * * 1is Mondays at 09:00. Inngest evaluates the cron in UTC; if you need a different timezone, that is a function parameter, not a different concept. -

The function still uses

ctx.step.run. Cron-triggered or event-triggered, the function shape is identical. Steps work the same. Durability works the same. Flow control works the same. The trigger is just how the function starts. -

The cron output is a regular Inngest function run. It shows up in the dashboard, has a run ID, has a trace, supports replay. If your Monday-morning cron run fails at step 3, Tuesday's cron will run normally and Monday's failure stays available for replay after you fix the bug.

What happens if your service is down when the cron fires? This is the question that separates real schedulers from kitchen-timer schedulers. Inngest's cron runs are durably recorded the moment the schedule fires; if your function endpoint is unreachable, Inngest retries with backoff until it succeeds or hits the retry ceiling. The cron fired at 09:00 does not "miss" because your deploy was rolling at 09:00; the run waits, you finish your deploy, the run completes. Cron triggers in development have one quirk worth knowing about: the local dev server only fires crons while it is running. Production runs them on Inngest's infrastructure, which is always running.

Quick check. Three claims. Mark each True or False. (a) If a cron function takes 45 minutes to run and is scheduled every 15 minutes, three concurrent instances will be running at any given time. (b) You can use

step.sleepinside a cron-triggered function to spread work across the day. (c) A cron-triggered function can also be invoked manually from the dashboard for testing.

Answers: (a) Depends on concurrency policy: by default Inngest will queue the overlapping runs; if you set concurrency=1 they serialize; if you set concurrency=10 they parallelize. The default is sane. (b) True, and it is a common pattern for "spread daily work across hours to smooth load." (c) True: the Inngest dashboard lets you invoke any function on demand for testing, regardless of its trigger.

Try with AI

With my AI coding assistant connected to the Inngest dev server MCP,

write a cron-triggered Inngest function in Python that:

1. Runs every Monday at 09:00 UTC.

2. Queries the audit_log table for all conversations resolved in the

prior week (status='resolved' in that window).

3. Computes per-agent metrics: total conversations resolved, average

resolution time, count of escalations, count of refunds issued.

4. Returns the metrics as a JSON object.

After you write the function, use the MCP's `invoke_function` tool to

test it manually (instead of waiting for Monday). Confirm the audit

SQL is correct by using `grep_docs` to search Inngest's docs for

"step.run" examples.

Concept 3: Webhook triggers, when the outside world calls in

The second trigger surface is HTTP. An external system (Stripe, your email provider, a customer-portal form, a GitHub webhook) wants to call your Worker. Without Inngest, you would have to: stand up an HTTPS endpoint, parse the payload, validate the source, write to a queue, write a worker consuming from the queue, handle retries, handle idempotency, ship telemetry. Each one is a week of infrastructure work.

With Inngest, the endpoint is provided. You configure a webhook in the Inngest dashboard with a URL like https://inn.gs/e/<your-key>, point Stripe (or whatever) at that URL, and the webhook payload becomes an event in your event stream. Any function with a matching event-name trigger now fires.

@inngest_client.create_function(

fn_id="handle-stripe-refund-failed",

trigger=inngest.TriggerEvent(event="stripe/charge.refund.failed"),

)

async def on_refund_failed(ctx: inngest.Context) -> dict[str, str]:

"""Triggered by Stripe webhook → Inngest event → this function."""

charge_id = ctx.event.data["charge_id"]

customer_id = ctx.event.data["customer_id"]

# Look up which support ticket originated this refund

ticket = await ctx.step.run(

"find-ticket-for-refund", lookup_ticket_by_charge, charge_id,

)

# Wake the customer-support Worker with the full context

await ctx.step.run(

"notify-support-agent",

notify_support_agent_of_refund_failure,

ticket_id=ticket["id"], charge_id=charge_id,

)

return {"ticket": ticket["id"], "action": "notified"}

The flow: Stripe fails to refund a charge → Stripe POSTs to the Inngest webhook URL → Inngest creates an event named stripe/charge.refund.failed → the function above (matching that event name) fires → the function uses steps to look up the ticket and notify the support agent. None of the HTTP plumbing is yours to write. No endpoint, no parser, no queue, no consumer.

Two adjacent patterns worth naming:

- Generic JSON webhooks. If the source is not a known vendor, you point any JSON-emitting service at the same kind of endpoint and pick the event name. Slash-namespaced names (

vendor/event.subtype) are the convention; nothing enforces it, but the dashboard sorts cleanly when you follow it. - Webhook transforms. If the incoming payload does not match the shape you want, Inngest lets you define a "transform" function that runs server-side at receipt time and reshapes the event before it enters your event stream. This keeps your function code clean of provider-specific fields.

PRIMM, Predict. A Stripe webhook fires

stripe/charge.refund.failedat the exact same millisecond as your customer-support Worker is also callinginngest_client.sendto emit a different event namedcustomer/refund.investigation_needed. Both events arrive in the system simultaneously; the function above triggers on the Stripe event only. Will the function run once or twice? Confidence 1-5.

The answer: once. The function is registered to trigger on stripe/charge.refund.failed only; the customer/refund.investigation_needed event has a different name and matches a different function (or no function, if you have not written one). An event's name is its routing key. Two events with different names never accidentally trigger the same function, even if they arrive at the same instant. This is one reason naming discipline matters: a typo in an event name (customer/email_received vs customer/email.received) means the function never fires, and the symptom is silent. Inngest's dashboard helps catch this: unmatched events appear in a separate stream you can audit.

Try with AI

I need to handle three webhook sources for my customer-support Worker:

A) Stripe: refund failed, charge disputed

B) Postmark (email service): bounced email, complaint

C) My internal admin UI: manual "investigate this ticket" button

For each, decide:

1. What event names you'd use (vendor/event.subtype format).

2. Whether the function reacting to it should run synchronously (the

caller is waiting) or asynchronously (fire and continue).

3. Whether you'd write a webhook transform to reshape the payload, or

consume it raw.

Then write the Inngest function for the Stripe refund-failed case in

Python, using the MCP's grep_docs to find the current syntax for

TriggerEvent and the dev-server MCP's send_event tool to test it.

Concept 4: Idempotency and event semantics, the same event firing twice

Webhooks are not exactly-once. They are at-least-once: the sender retries if it does not get an acknowledgment. Networks drop packets, services restart, your endpoint times out and the sender retries even though you actually succeeded. Without idempotency, every webhook system eventually double-bills, double-emails, or double-refunds someone. This is not a theoretical concern; it is the most common production bug in event systems.

Two layers of defense, both built into Inngest.

Layer 1: Event ID seeds at the source. When you send an event yourself (rather than receiving it from a webhook), you can attach an idempotency key:

await inngest_client.send(events=[

inngest.Event(

name="customer/refund.requested",

data={"order_id": "o-4429", "amount_cents": 5000},

id=f"refund-request-{order_id}-{request_timestamp}", # idempotency key

),

])

If a second event with the same id is sent within the dedup window (24 hours by default), Inngest drops the duplicate. Same logical event, same id, only one function run.

Layer 2: Step-level idempotency. Inside a function, each step.run is identified by its name. If a function crashes between step 3 and step 4, the retry re-runs the function code from the top, but for steps 1, 2, and 3, Inngest returns the stored outputs without re-executing the step body. Step 4 runs normally for the first time. This is what makes a function "durable": the side effects of completed steps do not re-happen on retry.

@inngest_client.create_function(

fn_id="issue-customer-refund",

trigger=inngest.TriggerEvent(event="customer/refund.requested"),

)

async def issue_refund(ctx: inngest.Context) -> dict[str, str]:

# Step 1: look up the order. If the function retries, this returns

# the SAME order data it computed the first time, from Inngest's memo.

order = await ctx.step.run(

"lookup-order", lookup_order_by_id, ctx.event.data["order_id"],

)

# Step 2: call Stripe. If the function retries AFTER this step

# succeeded, the Stripe call does NOT happen again. The refund is

# issued exactly once even if the function runs three times.

refund = await ctx.step.run(

"issue-stripe-refund", call_stripe_refund_api,

charge_id=order["stripe_charge_id"],

amount=ctx.event.data["amount_cents"],

)

# Step 3: write the audit row. Same property: runs at most once.

await ctx.step.run(

"audit-refund", write_audit_refund_issued,

order_id=order["id"], refund=refund,

)

return {"refund_id": refund["id"]}

If this function crashes during step 3, the retry re-enters step 1 (gets cached order data, no DB call), re-enters step 2 (gets cached refund data, no Stripe call), runs step 3 for real, returns. The customer's card is charged once, even if the function ran three times. This is the killer feature. It is what makes Inngest qualitatively different from a queue with a retry loop.

Inngest's memoization gives you exactly-once step completion from the function's perspective: once step.run records a step as successful, it will not re-execute. But there is a narrow window. If your step's body calls Stripe (the side effect happens on Stripe's servers), then crashes before Inngest records the result, the retry will re-call Stripe. From Inngest's perspective the step "did not complete." From Stripe's perspective the charge already happened. The production-grade pattern is Inngest step memoization plus provider-level idempotency keys: Stripe's Idempotency-Key header, Postmark's MessageID reuse, your own MCP server's idempotency contract. Treat step.run and provider idempotency keys as complementary, not substitutes: step.run keeps your function's internal logic exactly-once; the provider's idempotency key keeps the external side effect exactly-once.

Quick check. True or false. (a)

step.runmakes the step idempotent only if the function inside is also idempotent. (b) An event with a duplicate ID outside the dedup window will be treated as a new event. (c) Ifstep.runfails mid-execution (the step's code throws an exception), Inngest stores the failure and retries the step on the next attempt without re-running prior steps.

Answers: (a) False: step.run makes the step's invocation idempotent (it will run at most once on success), but if the function inside is non-idempotent (like calling Stripe), the at-most-once guarantee is exactly what you want. The whole point is that you do not have to make Stripe-calling idempotent yourself. (b) True: Inngest's dedup window is 24 hours by default; events with the same ID after that window are treated as new. (c) True: failure replay is itself memoized; Inngest knows step 3 failed at attempt 1 and retries just step 3 on attempt 2. Prior successful steps do not re-execute.

Try with AI

Here are three scenarios. For each, decide: idempotency PROBLEM or

NO PROBLEM, and if it's a problem, what's the fix:

A) Stripe sends the same charge.refund.failed webhook three times

in 90 seconds (because their first two attempts timed out at

your endpoint). Your function emails the customer.

B) A customer clicks "Issue refund" three times because the page

was slow. Your function calls Stripe and writes audit_log.

C) Your nightly cron at 09:00 sends a customer-health-check event

to each Pro customer. If two crons fire at the same time (a deploy

bug), what happens?

For each problem case, propose ONE specific fix: event ID seed

inside the function, idempotency key in inngest_client.send, or

function-level deduplication on the trigger.

Concept 5: Fan-out and sub-agent delegation, one event many Workers

Often a single event needs to trigger work in many places. The Stripe charge.refund.failed event might need to: notify the support agent, write to audit, update the customer's risk score, alert finance ops, post to Slack. Five reactions, all independent, all from one event.

The Inngest pattern: subscribe many functions to the same event. No fan-out code; just multiple @inngest_client.create_function decorators with the same TriggerEvent. Each function runs independently, has its own retries, has its own step trace, fails independently of the others.

@inngest_client.create_function(

fn_id="refund-failed-notify-support",

trigger=inngest.TriggerEvent(event="stripe/charge.refund.failed"),

)

async def notify_support(ctx: inngest.Context) -> dict[str, str]:

# ... runs the customer-support Worker to draft a response ...

return {"status": "drafted"}

@inngest_client.create_function(

fn_id="refund-failed-update-risk-score",

trigger=inngest.TriggerEvent(event="stripe/charge.refund.failed"),

)

async def update_risk_score(ctx: inngest.Context) -> dict[str, float]:

# ... runs the risk-scoring Worker ...

return {"new_risk_score": 0.42}

@inngest_client.create_function(

fn_id="refund-failed-post-slack",

trigger=inngest.TriggerEvent(event="stripe/charge.refund.failed"),

)

async def post_to_slack(ctx: inngest.Context) -> None:

# ... posts a Slack notification ...

return None

One Stripe webhook arrives. Inngest creates one event. Three functions fire, each in its own run. If post_to_slack fails because Slack is down, the other two are unaffected and complete normally. The failed run sits in the dashboard for replay once Slack recovers. This is the core of multi-Worker coordination, and it is the architectural pattern your future manager layer (a later course) will compose at scale.

The other fan-out pattern: parent-fires-N-children. Sometimes the fan-out is dynamic. Your daily cron needs to fire a customer-health event for each Pro customer, which might be 500 or 5,000 depending on the week. The parent function sends N events:

from datetime import date

async def fan_out_per_customer_events(

customers: list[str],

) -> int:

events = [

inngest.Event(

name="customer/health_check.requested",

data={"customer_id": cid},

id=f"daily-health-{cid}-{date.today().isoformat()}", # idempotency

)

for cid in customers

]

await inngest_client.send(events=events)

return len(events)

5,000 events get sent in a single send call. 5,000 function runs fire, each with its own customer_id, each isolated, each independently retryable. Flow control (Concept 11) caps how many run concurrently so you do not melt your downstream APIs. The cron function returns in seconds; the fan-out runs at whatever rate Inngest's flow-control policies allow.

Sub-agent delegation is a special case of fan-out. Inside a Worker run, you can call await inngest_client.send(...) to delegate sub-tasks to other Worker types. The parent does not wait for the children unless it explicitly uses step.invoke to run them synchronously and collect their results.

PRIMM, Predict. You have three functions all triggered by

customer/email.received: the customer-support agent that drafts a reply (15 seconds), an analytics counter (50ms), and a "VIP detector" that checks if the customer is high-value (200ms). When an email arrives, what does the user-visible latency look like for each? Three options: (a) all three add up to ~15 seconds; (b) all three run in parallel, total latency is ~15 seconds (the slowest); (c) each runs independently with no shared latency at all. Confidence 1-5.

The answer: (c). Each function is its own run, in its own process slot. The customer-support agent does not block the analytics counter; the VIP detector does not block the agent. From the outside, the latency for any particular function is just that function's own time. No function ever waits on a sibling function. This is why fan-out scales: the consumers are isolated. If the agent crashes, the analytics counter is unaffected.

Try with AI

Design the fan-out architecture for these three scenarios. For each,

sketch the event names and the functions that subscribe:

A) New customer signs up. Need to: send welcome email, create

Stripe customer, post to Slack #new-customers, write to

audit_log, schedule a 7-day follow-up.

B) Customer support email arrives. Need to: draft a reply (agent),

detect sentiment, check if VIP, update customer's "last contact"

timestamp, attach to the right ticket thread.

C) Daily cron at 09:00 needs to run customer-health-check on

~5,000 Pro customers. Each check takes ~30 seconds. We want

the whole batch to complete by 11:00 (a 2-hour window).

For each, decide: how many event types, how many subscriber

functions, what the idempotency story is, and one specific failure

mode this design protects against.

Part 2: Durable execution, what happens when something breaks

Triggers wake the Worker. Durable execution makes the Worker survive what comes next. The Course #4 Worker calls an agent, the agent calls three tools, the tools call Postgres and Stripe and OpenAI: six external calls in a single conversation, any of which can fail. Without durability, a single transient failure mid-conversation restarts the whole flow from the top. Durability is the property that says: when something fails mid-execution, the work already completed stays completed, and execution resumes from where it broke. Inngest delivers this with one primitive (step.run) and a memoization mechanic underneath. Part 2 explains both, plus the time-based variants (step.sleep, step.wait_for_event), the retry semantics, and the step.ai primitives.

First-pass compression note. If you are scanning, the load-bearing concepts are 6 (

step.run) and 7 (memoization). Concepts 8-10 build on them. Read 6 and 7 carefully; the rest will read fast once you have those two in your head.

Concept 6: step.run and the durable function model

A normal Python function runs once, top to bottom. If it crashes halfway through, you start over from the top. If it makes three API calls before crashing, the next attempt makes those three calls again, and pays for them, and possibly double-charges someone, again.

An Inngest function is durable. Each operation you want to be checkpointed gets wrapped in step.run(name, fn, ...). The function still runs top to bottom on each attempt, but steps that have already completed return their stored outputs instead of re-executing. The function "catches up" to where it broke, then continues forward.

@inngest_client.create_function(

fn_id="customer-support-conversation",

trigger=inngest.TriggerEvent(event="customer/email.received"),

)

async def handle_email(ctx: inngest.Context) -> dict[str, str]:

customer_id = ctx.event.data["customer_id"]

# Step 1: load the customer record (one DB call)

customer = await ctx.step.run(

"load-customer", load_customer_by_id, customer_id,

)

# Step 2: load the conversation thread (one DB call)

thread = await ctx.step.run(

"load-thread", load_thread_for_customer, customer_id,

)

# Step 3: run the OpenAI Agents SDK agent (the Course Four Worker)

response = await ctx.step.run(

"run-agent",

run_customer_support_agent,

customer=customer,

thread=thread,

email_body=ctx.event.data["body"],

)

# Step 4: write the draft reply to the database

await ctx.step.run(

"save-draft-reply", save_reply,

customer_id=customer_id, text=response.draft,

)

# Step 5: notify the on-call human reviewer via Slack

await ctx.step.run(

"notify-reviewer", post_slack_for_review, response=response,

)

return {"status": "drafted", "reviewer_notified": True}

Five steps. Each one is independently checkpointed.

What durability buys you here, in three failure scenarios:

-

Scenario A: the agent step throws a timeout. Without

step.runwrapping the agent call, the next retry of this function reloads the customer, reloads the thread, and reruns the agent from scratch, paying for OpenAI tokens twice for work the agent already partially did. Withstep.run, the customer and thread loads are memoized (steps 1-2 do not re-execute); only step 3 retries. Inngest's automatic retries handle transient OpenAI errors without your code knowing. -

Scenario B: the function process gets killed between step 3 and step 4 (a deploy rolled out, a node restarted, the container OOMed). Without durability, the agent's response is lost and the customer's email goes unanswered until someone notices. With durability, the function resumes after the restart: steps 1, 2, 3 return their stored outputs in milliseconds, step 4 runs for real, step 5 runs for real, the customer gets the drafted reply.

-

Scenario C: Slack returns a 503 on step 5. Without

step.run, you would either lose the work or write retry-and-backoff logic by hand for the Slack call specifically. Withstep.run, Inngest retries step 5 with exponential backoff until Slack recovers; meanwhile steps 1-4 stay completed and will not re-execute. The draft reply is already in the database; the notification is the only thing pending.

You do not write any retry loops, any "did I already do this" checks, any state machines. The state machine is the sequence of step.run calls. Each step is a node; each transition is durable.

The one rule of step.run. The function passed to step.run should be deterministic given its inputs: calling it twice with the same arguments should produce the same result. That is automatic for pure functions; it is automatic for idempotent API calls (Stripe's idempotency_key, your own MCP server tools); it requires care for things like "generate a random ID" or "call an LLM with default temperature" (a retry could produce different output than the original attempt, which sometimes matters). When the operation is not deterministic, you make it deterministic: pass a seed, pre-generate the random value outside the step, or accept that the retry may differ from the original (often fine for an agent response).

Quick check. True or false. (a) The function body re-executes from the top on every retry, including all the imports and variable assignments outside

step.runcalls. (b) If a step takes 30 seconds to complete, and the function crashes 25 seconds in, the retry continues that step from second 25. (c)step.runoutputs are stored in Inngest's infrastructure, not in your application.

Answers: (a) True, and this is why you keep the work inside step.run. Code outside step.run re-runs on every retry; code inside runs once per attempt and is memoized on success. (b) False: step.run is the atomic unit; if a step is interrupted, the retry re-runs the entire step. If your step is so long it cannot be allowed to restart, you break it into smaller steps. (c) True: the step output store is part of Inngest, not your DB. This is why you can replay runs even after your database schema has changed.

The build-agents crash course Decision 4 documents an openai-agents==0.17.2 streamed-path SDK bug with DeepSeek's reasoning models on tool-calling turns: a spurious empty assistant message between the tool_calls message and the tool result, which DeepSeek's strict parser rejects. If your Course Four Worker streams DeepSeek with @function_tool, apply that course's OpenAI-fallback resolution before wrapping Runner.run_streamed in step.run below.

Try with AI

With my AI coding assistant connected to the Inngest dev server MCP,

re-shape my Course Four customer-support Worker into an Inngest

durable function. Take the existing Runner.run_streamed invocation

that processes a customer email and wrap each of these inside its

own step.run:

1. Load the customer from the customer-data MCP server

2. Load the related conversation thread

3. Run the agent (the OpenAI Agents SDK Runner)

4. Persist the draft reply

5. Notify the on-call reviewer in Slack

Use grep_docs to find the current Python SDK syntax. Use

invoke_function to test it with a synthetic email payload. Then

deliberately raise an exception in step 4 and use get_run_status

to confirm steps 1-3 don't re-execute on retry.

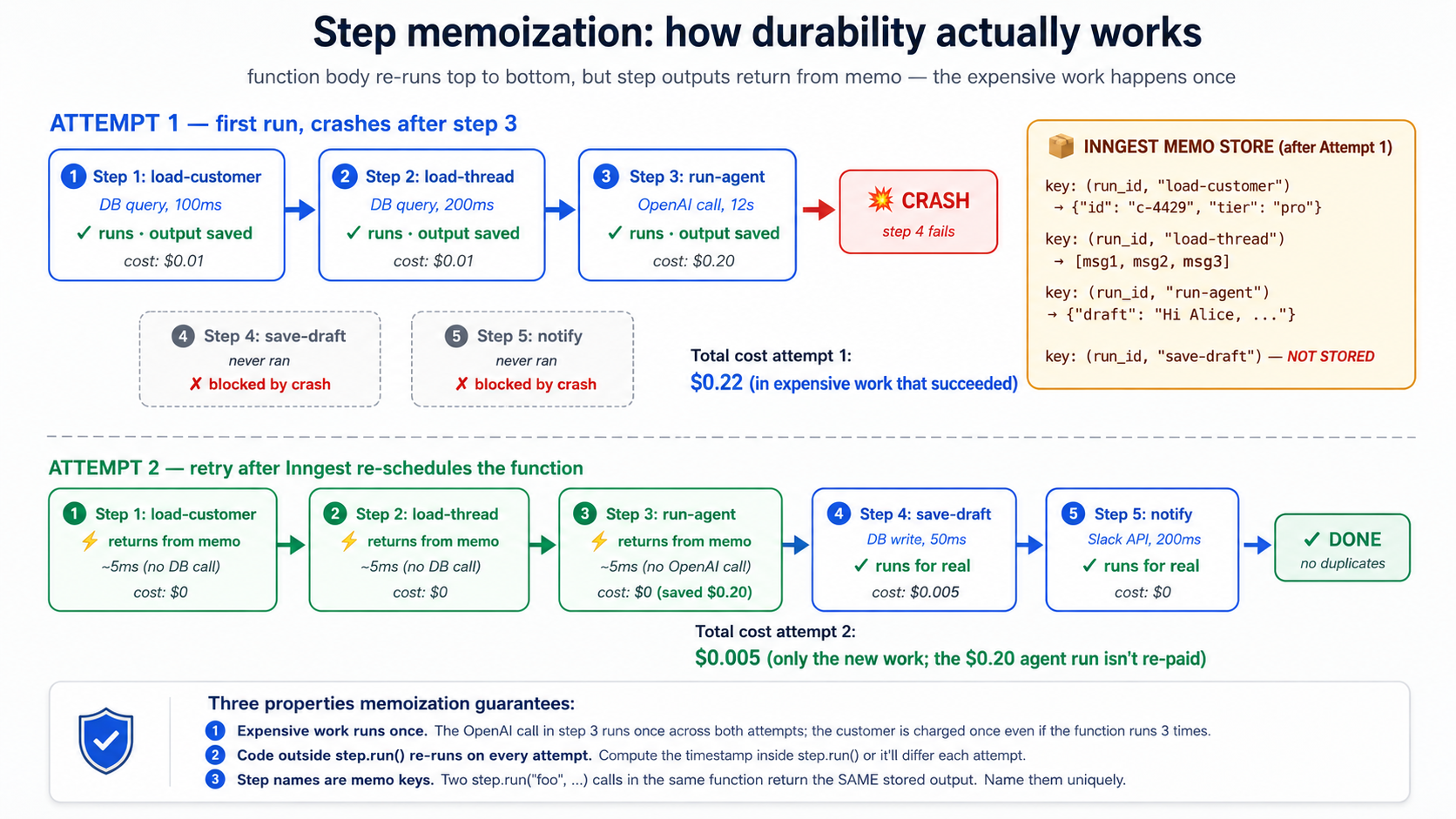

Concept 7: Memoization, the mechanic underneath resumability

Concept 6 said "steps that have already completed return their stored outputs instead of re-executing." That mechanism is memoization and it is worth understanding the mechanic, because every other Inngest primitive uses it.

When you call await ctx.step.run("load-customer", load_customer_by_id, "c-4429"), three things happen on the first attempt:

- Inngest checks its memo store: "is there a stored result for step

load-customerin this run?" There is not. - The function

load_customer_by_id("c-4429")runs. It returns{"id": "c-4429", "tier": "pro", ...}. - Inngest writes that result into the memo store, keyed by

(run_id, step_name="load-customer"). Then it returns the result to your code.

If the function crashes after step 3 and Inngest retries, on the second attempt the function body re-runs from the top. When execution reaches the same line, three different things happen:

- Inngest checks its memo store: "is there a stored result for step

load-customerin this run?" Yes, it was stored on attempt 1. - The function

load_customer_by_id("c-4429")does not run. The DB call does not happen. - Inngest returns the stored result to your code in milliseconds.

This is why retries are cheap: the expensive work is already cached. It is why durability is correct: the expensive work does not happen twice. And it is why the "function body re-runs top to bottom" is fine despite sounding wasteful: the work inside steps does not actually re-run; only the orchestration code between steps does.

The implication that surprises new users. Code outside step.run runs on every attempt. If you do this:

async def handle_email(ctx: inngest.Context) -> dict[str, str]:

# ANTI-PATTERN: this runs on every retry. Don't do this.

expensive_thing: dict = await fetch_expensive_data(ctx.event.data["id"])

await ctx.step.run("do-something", do_something_with, expensive_thing)

return {"status": "done"}

fetch_expensive_data runs on every retry. If it costs $0.10 a call and the function retries 5 times, you just spent $0.50 fetching the same data five times. The fix is to wrap the expensive thing in its own step:

async def handle_email(ctx: inngest.Context) -> dict[str, str]:

expensive_thing: dict = await ctx.step.run(

"fetch-expensive-data", fetch_expensive_data, ctx.event.data["id"],

)

await ctx.step.run("do-something", do_something_with, expensive_thing)

return {"status": "done"}

Now fetch_expensive_data is memoized; retries do not pay for it again.

The step name is the memo key. This is why step names must be unique within a function. If you have two step.run("load-customer", ...) calls in the same function, Inngest will return the first one's stored output for both calls. That is almost never what you want. If you have a loop that calls a step N times, name them uniquely (step.run(f"load-customer-{i}", ...)) so each iteration has its own memo slot.

PRIMM, Predict. Your function has three steps. Step 1 (

load-customer) costs $0.01 in DB calls and takes 100ms. Step 2 (run-agent) costs $0.20 in OpenAI tokens and takes 12 seconds. Step 3 (save-draft) costs $0.005 in DB calls and takes 50ms. Step 2 fails 30% of the time due to OpenAI rate limits; Inngest retries with backoff. What is the cost difference between (a) wrapping all three instep.runand (b) wrapping only step 2 instep.run? Confidence 1-5.

The answer: with (a), a single retry costs you the cost of step 2 only ($0.20). The customer and the save-draft are memoized; they do not re-execute. With (b), every retry costs you steps 1 and 3 plus step 2: $0.215 per retry. Over a thousand emails with a 30% retry rate, that is a difference of about $4.50 in pure waste, plus the operational complexity of figuring out what got partially written when step 3 ran twice. Wrap everything you do not want re-executed in step.run. It is not optional once you understand the mechanic.

Try with AI

With my AI coding assistant: review the Inngest function we built

in Concept 6's Try-with-AI and identify any code BETWEEN step.run

calls that should be wrapped in its own step but isn't. Common

candidates:

- Computed values (timestamps, IDs, formatting) that we want to be

stable across retries

- Calls to logging or metrics services

- Reads from Redis, environment variables, secret managers

Then propose a refactor that moves each of these into its own step

with a meaningful name. For each, explain whether the side effect

is one you want to happen once (use step.run) or every retry

(leave it outside).

Concept 8: step.sleep and step.wait_for_event, durability through time

Some work has to wait. A welcome-email pipeline sends an email immediately, then waits three days, then sends a follow-up. A refund-investigation needs to wait for a human to approve. A trial-conversion flow watches for "user upgraded to paid" within 7 days and sends a different email depending on what it sees.

In a normal Python function, "wait three days" means hold a process open for three days. That is untenable: your process restarts, your hosting bills you for 72 hours of idle compute, your timer gets lost. In Inngest, "wait three days" is one line:

from datetime import timedelta

@inngest_client.create_function(

fn_id="trial-welcome-series",

trigger=inngest.TriggerEvent(event="user/trial.started"),

)

async def welcome_series(ctx: inngest.Context) -> dict[str, str]:

user_id = ctx.event.data["user_id"]

await ctx.step.run("send-welcome-email", send_welcome_email, user_id)

# Wait three days. The function gets paged out of memory. Nothing

# is consuming compute. Three days later, Inngest pages it back in

# and resumes execution at the next line.

await ctx.step.sleep("wait-three-days", timedelta(days=3))

await ctx.step.run("send-followup", send_followup_email, user_id)

return {"status": "completed"}

step.sleep is durable. The function suspends; Inngest stores the resume time; nothing consumes compute while you wait; the function resumes at the right time, with all prior step outputs still memoized. step.sleep (and step.sleep_until) can wait up to one year on paid plans, up to seven days on the free Hobby plan (Inngest usage limits). The seven-day Hobby ceiling is wide enough for every sleep this course uses.

The more powerful sibling is step.wait_for_event. Instead of waiting for time, wait for another event. The function suspends until a matching event arrives, or until a timeout you set expires. This is what makes Inngest the cleanest expression of HITL (Concept 15) and inter-agent coordination patterns:

@inngest_client.create_function(

fn_id="refund-with-approval",

trigger=inngest.TriggerEvent(event="customer/refund.requested"),

)

async def refund_with_approval(ctx: inngest.Context) -> dict[str, str]:

request = ctx.event.data

request_id = request["request_id"]

# If amount is over $500, require approval before issuing

if request["amount_cents"] >= 50_000:

# Notify a human via Slack/email/whatever

await ctx.step.run("notify-approver", notify_human_approver, request)

# Wait for an approval event. Up to 24 hours; expires otherwise.

approval = await ctx.step.wait_for_event(

"wait-for-approval",

event="refund/approval.decided",

timeout=timedelta(hours=24),

if_exp=f"async.data.request_id == '{request_id}'",

)

if approval is None or not approval.data.get("approved"):

return {"status": "rejected_or_timeout"}

# Either it was under $500, or it was approved

refund = await ctx.step.run(

"issue-stripe-refund", call_stripe_refund_api, request,

)

return {"status": "issued", "refund_id": refund["id"]}

What is happening:

- The function reaches

wait_for_event. It suspends. Zero compute consumed. - A human looks at the Slack notification, clicks "Approve" in your admin UI, your UI calls

inngest_client.send(events=[Event(name="refund/approval.decided", data={"request_id": "...", "approved": True})]). - Inngest matches the event to the waiting function (the

if_expensures only events for this request_id match) and resumes the function with the event as theapprovalreturn value. - The function continues to the refund step. The Stripe refund happens after the human approved.

step.sleep and step.wait_for_event are timeouts you do not pay for. The function looks synchronous in your code ("wait three days, then send the email"), but the runtime semantics are async and durable. This is one of the two things Inngest is famous for (durable retries being the other). Without it, the alternative is a queue plus a state machine plus a database plus a poller, and you would write a thousand lines instead of three.

Quick check. Three claims. Mark each True or False. (a) If

step.sleepis set for 30 days and your service is redeployed five times in those 30 days, the sleep continues uninterrupted on a paid plan. (b) Ifstep.wait_for_eventtimes out, the function raises an exception. (c) Twostep.wait_for_eventcalls in the same function can wait for the same event simultaneously.

Answers: (a) True on a paid plan: sleeps are stored in Inngest's infrastructure, not in your service's memory, so redeploys do not lose them. Note the tier ceiling: a 30-day sleep is fine on a paid plan but exceeds the free Hobby plan's seven-day sleep cap. (b) False: on timeout, wait_for_event returns None. Your code checks for it and decides what to do (rejection, escalation, default-approval, whatever the policy is). (c) True, but suspicious: both will fire when a matching event arrives. If the two wait_for_event calls have different if_exp filters, this is fine. If they are identical, you are probably looking at a refactor opportunity.

Try with AI

Build a delayed-investigation flow with my AI coding assistant.

Specification:

1. Triggered by event 'customer/refund.failed'.

2. Immediately notify the on-call human via Slack with the refund

details and a "Investigate" button.

3. Wait for the human to click the button (which fires

'customer/refund.investigation_started') for up to 4 hours.

4. If the click arrives in time: run the agent to draft an

investigation summary.

5. If 4 hours pass without a click: escalate to a senior reviewer

by firing 'customer/refund.escalated'.

Use the dev-server MCP's send_event tool to simulate the

human-click event during testing. Use get_run_status to inspect

how the suspended function shows up in the dashboard. Before

writing, use list_docs to scan the Inngest documentation tree

for the right page on wait_for_event semantics, then

read_doc on the page you find to get the exact syntax for

the if_exp filter expression.

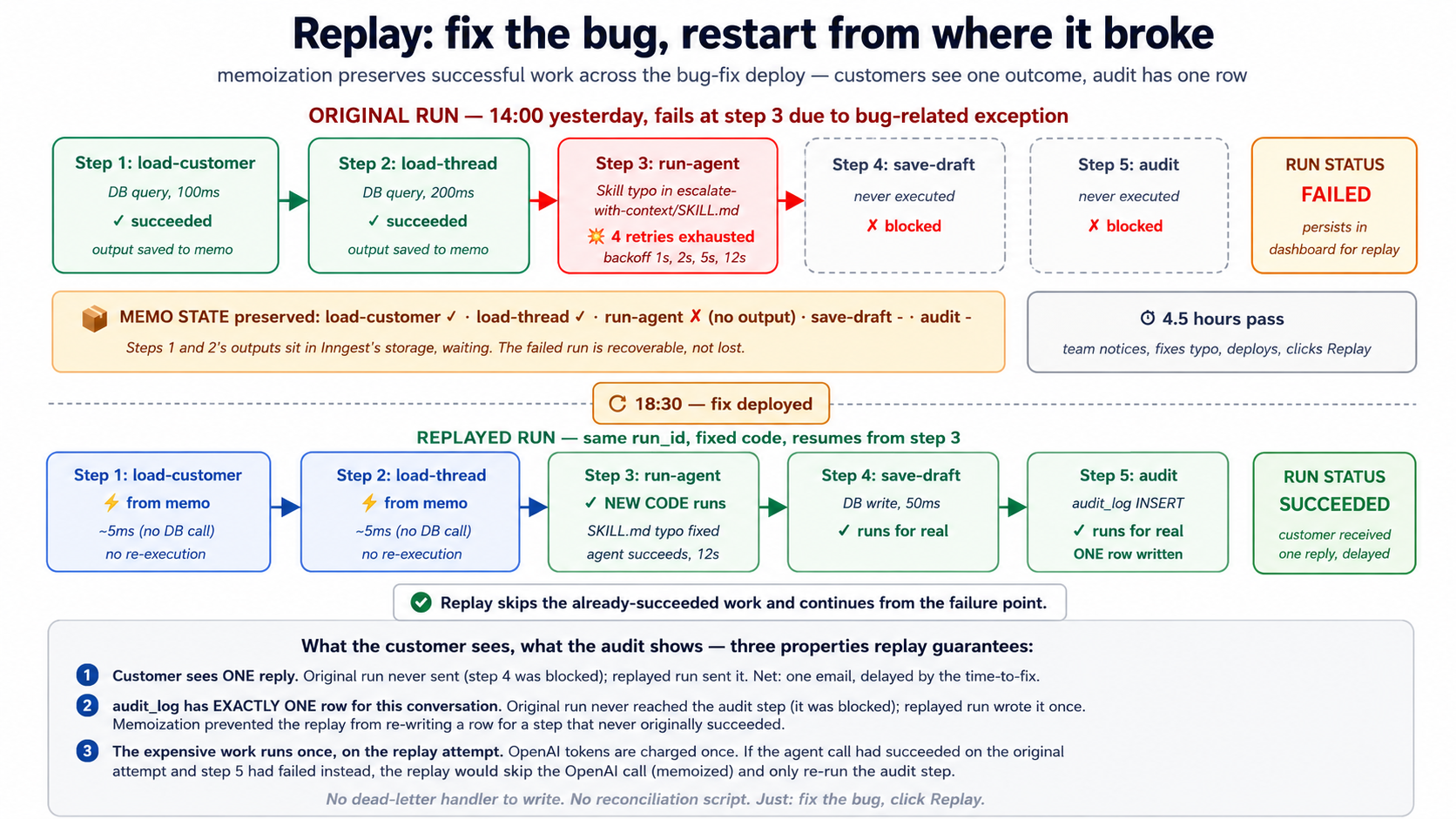

Concept 9: Retries, error handling, dead-letter

By default, Inngest retries failed steps. The defaults are sensible: ~4 retries with exponential backoff, ranging from a few seconds to a few minutes between attempts. After the final retry fails, the run enters a failed state and stays there for inspection and (optionally) replay. You can tune this per function: retries=10, retries=0 (do not retry at all), specific exception types that should not be retried.

@inngest_client.create_function(

fn_id="charge-customer",

trigger=inngest.TriggerEvent(event="order/checkout.completed"),

retries=2, # only retry twice; this involves Stripe; don't keep hammering

)

async def charge_customer(ctx: inngest.Context) -> dict[str, str]:

try:

charge = await ctx.step.run(

"call-stripe", call_stripe_charge, ctx.event.data,

)

return {"status": "charged", "charge_id": charge["id"]}

except StripeCardDeclinedError as e:

# A declined card is not a transient failure. Don't retry.

# Mark the order as failed in our database and emit an event

# for the dunning flow.

await ctx.step.run(

"mark-failed", mark_order_failed,

ctx.event.data["order_id"], reason=str(e),

)

await ctx.step.run(

"emit-dunning-event", emit_dunning, ctx.event.data["order_id"],

)

return {"status": "card_declined"}

Three patterns matter.

Pattern 1: Transient vs permanent failures. Inngest retries everything by default, but some errors are not transient. A card-declined error from Stripe will be declined again on retry. A 401-unauthorized from your downstream API will not become a 200 just because you wait. Your function should catch these specifically and handle them: write to your DB, emit a downstream event, return cleanly, so they do not waste retry budget on hopeless attempts. Inngest's NonRetriableError explicitly tells Inngest to skip retries for a thrown exception.

Pattern 2: Step-level vs function-level errors. A step that throws is retried. After step-level retries are exhausted, the function fails. Sometimes you want a function to survive a failing step: log the failure, mark the work as "partial," continue. Wrap the step.run in try/except. The step still gets its retries; if all retries fail, the exception propagates to your catch block, where you can decide what to do.

Pattern 3: Dead-letter and replay. When a function fully fails, it does not disappear. It enters the Inngest dashboard's "failed runs" view, with the full trace, all step outputs, the exception, and a Replay button. After you ship a bug fix, you can replay the failed runs: they resume from where they broke, with the fix in place. This is the "dead-letter queue" pattern from traditional queues, except you do not write the dead-letter handler. You just fix the bug and replay.

PRIMM, Predict. Your function calls Stripe in step 2 and your customer-data MCP server in step 4. Stripe returns 503 (service unavailable, transient) on the first attempt of step 2. Step 2 retries 4 times with exponential backoff (~1s, ~2s, ~5s, ~12s); on the 4th retry, Stripe is back, the charge succeeds. Now step 4 runs, and the customer-data MCP server is down with a 500. Does Inngest retry the whole function, or just step 4? How many times? Confidence 1-5.

The answer: just step 4, and it gets its own retry budget. Steps do not share retries. Step 2's four retries are independent of step 4's. Inngest will retry step 4 (default ~4 times) and if the MCP server comes back, step 4 completes, and the function succeeds. The Stripe charge from step 2 is not re-issued, because step 2's output was memoized after its successful retry. The customer is charged exactly once even though the function spent 20 seconds across retries.

Try with AI

With my AI coding assistant: extend the customer-support Worker

function from Concept 6 with explicit retry and failure handling.

Specification:

1. The OpenAI Agents SDK call should retry 3 times on transient

failures (rate limit, timeout), but NOT retry on a content-policy

refusal from the model.

2. The Slack notification should retry up to 10 times (Slack is

often flaky; don't lose the notification).

3. The Postgres write should retry once; if it fails again, log the

failure and continue (don't fail the whole function over a

transient DB blip).

For each step, decide what's transient vs permanent and structure

the try/except accordingly. Use grep_docs to find the Python SDK's

NonRetriableError equivalent.

Concept 10: step.run for AI calls in Python (step.ai.wrap is TypeScript-only)

Concepts 6-9 work for any side-effecting code: DB writes, API calls, file writes, agent invocations. Inngest also ships AI-specific step primitives that handle the patterns LLM calls are prone to: rate-limit retries, observability into prompts and responses, and (optionally) inference proxying that reduces serverless compute costs.

Important Python-vs-TypeScript note up front. Inngest's

step.aimodule has two methods, and they have different language support.step.ai.infer()is available in both TypeScript and Python (Python SDK v0.5+): it offloads inference to Inngest's infrastructure and traces the call.step.ai.wrap()is TypeScript only: there is no Python equivalent today. For Python projects (like this course's Worker), the correct pattern for wrapping an OpenAI Agents SDK call isctx.step.run(...), which already gives you full durability, retries, and observability of the wrapped step's inputs and outputs. You just do not get the LLM-specific prompt/response telemetry that the TypeScriptstep.ai.wrapadds. (Verified against the AI Inference docs as of May 2026.)

step.run for OpenAI calls in Python (the recommended pattern). Your function makes the OpenAI call inside ctx.step.run("name", fn, ...). Inngest traces the inputs and outputs of the step (the arguments you passed and what was returned), retries on transient failures, and memoizes the result so retries of later steps do not re-pay the OpenAI cost. The prompt and response are recorded as the step's input/output in the dashboard:

from openai import AsyncOpenAI

oai = AsyncOpenAI()

async def call_openai_summary(thread_text: str) -> str:

"""A normal async function. Inngest doesn't care that this is an LLM call."""

response = await oai.chat.completions.create(

model="gpt-4o",

messages=[

{"role": "system", "content": "Summarize this support thread in 3 sentences."},

{"role": "user", "content": thread_text},

],

)

return response.choices[0].message.content

@inngest_client.create_function(

fn_id="summarize-customer-thread",

trigger=inngest.TriggerEvent(event="customer/thread.summary_requested"),

)

async def summarize_thread(ctx: inngest.Context) -> dict[str, str]:

thread: list = await ctx.step.run(

"load-thread", load_thread, ctx.event.data["thread_id"],

)

# The OpenAI call is wrapped in step.run. Inngest sees this as a step:

# the inputs (formatted thread text) are recorded, the output (summary

# string) is recorded, the call is memoized on success, and retries are

# automatic on transient failures.

summary: str = await ctx.step.run(

"openai-summary", call_openai_summary, format_thread(thread),

)

return {"summary": summary}

In the dashboard, this run shows the function's step trace (load-thread followed by openai-summary) with the inputs and outputs of each step. If OpenAI returned a 429 (rate limited), Inngest retries openai-summary with backoff automatically: same memoization semantics as Concept 7, so retries do not double-bill the prior load-thread step. What you do not get (compared to TypeScript's step.ai.wrap): automatic LLM-specific telemetry like token counts, model name, and provider-specific traces broken out in the dashboard's AI view. For most Python production workloads, the standard step trace plus your own OpenAI client telemetry (for example, the OpenAI Agents SDK's tracing) covers this gap.

Because step.run records each step's inputs and outputs to Inngest's observability store, the content you pass through a step is stored and visible in the dashboard. If your prompt includes PII (names, emails, addresses), secrets (API keys, internal tokens), contractual or financial data, or regulated content (HIPAA, GDPR-scoped data, PCI), do not pass the raw content into the step body. Redact, hash, summarize, or pass a reference (a customer_id and ticket_id, not the full ticket text) and reload the sensitive content inside the step body from your authoritative store, where retention and access controls are yours to configure. The same discipline applies to the OpenAI Agents SDK's own tracing if you enable it. Treat step traces as you would treat any production log: useful by default, regulated by policy.

step.ai.infer: a niche tool for serverless cost reduction (Python-supported). You will rarely reach for this; step.run is the default for every AI call in this course. step.ai.infer exists for one specific situation: instead of calling OpenAI from your function process, you ask Inngest's infrastructure to make the call, so while the request is in flight your function process can deallocate. On serverless platforms (Vercel, Cloudflare Workers, AWS Lambda) that bill for in-flight time, this saves compute cost during the wait. For long-running inferences (Deep Research, large embedding batches) the savings are real. For sub-second calls, it adds latency without much benefit. The one shape, so the Quick reference decision-tree has a concrete referent:

import os

from inngest.experimental.ai.openai import Adapter as OpenAIAdapter

@inngest_client.create_function(

fn_id="long-research-call",

trigger=inngest.TriggerEvent(event="customer/research.requested"),

)

async def long_research(ctx: inngest.Context) -> dict[str, str]:

response = await ctx.step.ai.infer(

"call-openai",

adapter=OpenAIAdapter(

auth_key=os.environ["OPENAI_API_KEY"],

model="gpt-4o",

),

body={

"messages": [

{"role": "user", "content": ctx.event.data["prompt"]},

],

},

)

return {"response": response["choices"][0]["message"]["content"]}

Two details that trip people up. The keyword is adapter=, not model=: you pass an Adapter instance imported from inngest.experimental.ai.<provider> (adapters ship for openai, anthropic, gemini, grok, and deepseek). And the inngest.experimental.ai namespace is flagged experimental in inngest-py 0.5.18, so pin your SDK version if you depend on it. The return value is a plain dict, so the response["choices"][0]["message"]["content"] subscript above is correct. The function's compute time is roughly the time between firing the request and processing the response, not the OpenAI call itself; on serverless, this can shave seconds off your billable time per invocation.

Quick check. True or false. (a) In Python,

ctx.step.run("name", call_openai, ...)makes the OpenAI call durable, retried on transient failures, and memoized on success. (b)step.ai.inferis a hard requirement for using Inngest with the OpenAI Agents SDK in Python. (c) Replacingstep.runwithstep.ai.inferin the example above would always make the function cheaper to run.

Answers: (a) True: this is the recommended Python pattern. The OpenAI call goes inside the step body; Inngest treats the whole step as the unit of work. (b) False: step.run is enough for most cases. step.ai.infer is an optimization for serverless compute cost, not a requirement. The OpenAI Agents SDK integration in the Worked Example uses plain step.run. (c) False: step.ai.infer saves money only when (i) you are on a serverless platform that bills for in-flight time AND (ii) the call is long enough that the request-offload savings dominate the added orchestration overhead. For sub-second calls on always-on servers, plain step.run wins.

See the same caveat from earlier in this course: if your Course Four Worker streams DeepSeek with @function_tool, the openai-agents==0.17.2 streamed-path SDK bug documented in build-agents Decision 4 applies to Version A below. Apply that course's OpenAI-fallback resolution before wrapping Runner.run_streamed in step.run.

Try with AI

With my AI coding assistant: take the Course Four customer-support

agent invocation and produce TWO versions of the Inngest function

that calls it:

Version A: Wrap the Runner.run_streamed call in step.run (the

recommended Python pattern: durable, retried on transient failures,

memoized; you get the standard step trace).

Version B: For comparison, write a SEPARATE small Inngest function

that calls a single OpenAI completion via step.ai.infer (the

Python-supported step.ai primitive that offloads inference to

Inngest's infrastructure to save serverless compute cost).

For each version, explain (a) what the dashboard trace shows for a

successful run, (b) what happens when the OpenAI call hits a 429

rate limit, (c) whether the Course Four SQLiteSession state gets

corrupted by a mid-run crash, and (d) on which kind of deployment

(always-on server vs serverless) Version B's offload saves real money.

Part 3: Flow control and recovery, production scale

Flow control is the third layer: it keeps the Worker healthy under load. Concurrency stops the Worker from melting downstream systems. Throttling keeps you off rate-limit walls. Priority and fairness prevent one chatty customer from starving everyone. Batching turns "10,000 events at midnight" into "100 manageable function runs." Replay turns "yesterday's bug cost us 200 failed interactions" into "we fixed it; 200 conversations resumed." HITL gates suspend the agent until a human approves. Part 3's five concepts give you the production policies that turn a working Worker into one you can put in front of paying customers.

Concept 11: Concurrency and throttling

Concurrency is the maximum number of runs of a function that can execute simultaneously. Throttling is the maximum number of runs that can start per unit of time. Both are configured per function with one line each. Both are the most common production gap when teams move from prototype to scale.

from datetime import timedelta

@inngest_client.create_function(

fn_id="customer-support-conversation",

trigger=inngest.TriggerEvent(event="customer/email.received"),

concurrency=[inngest.Concurrency(limit=10)],

throttle=inngest.Throttle(limit=100, period=timedelta(minutes=1)),

)

async def handle_email(ctx: inngest.Context) -> dict[str, str]:

...

concurrency=10 says: at most 10 of these functions are running at any moment. The 11th event waits in queue until one of the 10 finishes. throttle=100/minute says: at most 100 new runs start per minute. The 101st event waits even if there is concurrency headroom.

Why both matter in practice. Concurrency protects downstream systems: if your customer-support Worker talks to OpenAI and Postgres, having 1,000 concurrent runs means 1,000 simultaneous OpenAI calls and 1,000 simultaneous Postgres connections. You will exhaust your OpenAI rate limit, exhaust your connection pool, or both. Throttle protects against bursts: if 500 customer emails arrive at 9:00am sharp, you do not want 500 functions starting in the same second; throttle smooths the start rate.

Per-key concurrency. A single concurrency limit applies to the function globally. A more interesting pattern is per-key concurrency: limit by some property of the event.

@inngest_client.create_function(

fn_id="customer-support-conversation",

trigger=inngest.TriggerEvent(event="customer/email.received"),

concurrency=[

inngest.Concurrency(limit=10), # global cap

inngest.Concurrency(limit=2, key="event.data.customer_id"), # per-customer cap

],

)

async def handle_email(ctx: inngest.Context) -> dict[str, str]:

...

This says: at most 10 functions running globally, AND at most 2 per customer at a time. If a single customer sends 100 emails in a minute, only 2 of their emails are processed simultaneously; the other 98 queue behind. Meanwhile, other customers' emails flow normally; they are not blocked by the chatty customer. This is multi-tenant fairness in two lines of code. Concept 12 develops the pattern further.

Quick check. Three claims, True or False. (a) If you set

concurrency=10and 1,000 events arrive at once, 990 of them are dropped. (b) Throttling and concurrency limits both reduce total throughput. (c) Per-key concurrency requires a key that is deterministic from the event data.

Answers: (a) False: events are not dropped; they queue. Inngest's queue is durable; the 990 events wait until concurrency slots open up. (b) False. Throttling caps start-rate; concurrency caps in-flight runs. Neither drops work; both shape when work executes. Throughput over a long window is unchanged if your average load is below the limits. Throughput over a peak is shaped: bursts are absorbed by the queue. (c) True: the key expression is evaluated on the event data; it has to produce a stable string for the same logical scope (customer_id is fine; current_timestamp is not).

Try with AI