From Fixed to Dynamic Workforce: The Hiring API, Capability Gaps, and the Talent Ledger

15 Concepts. About 2 to 3 hours of conceptual reading (longer if you take the PRIMM Predicts seriously). 2 to 3 hours of hands-on lab. Plan a half-day total.

A continuation crash course. This is Course Seven in the agentic-coding track. Course Three, Build AI Agents with the OpenAI Agents SDK and Cloudflare Sandbox, built a streaming chat agent. Course Four, From AI Agent to Digital FTE, turned that chat agent into a Digital FTE with Skills, a Neon Postgres system of record, and MCP. Course Five, From Digital FTE to Production Worker, wrapped the Digital FTE in an Inngest operational envelope so the world could wake it and crashes couldn't lose its state. Course Six, From One Worker to a Workforce, added the Paperclip management layer so one or more Workers became a workforce with assignments, budgets, approvals, and an audit trail. The workforce was three Workers chosen by the human at company-creation time. Course Seven is about what happens when a fourth Worker is needed, and the workforce calls a hiring API to bring it in.

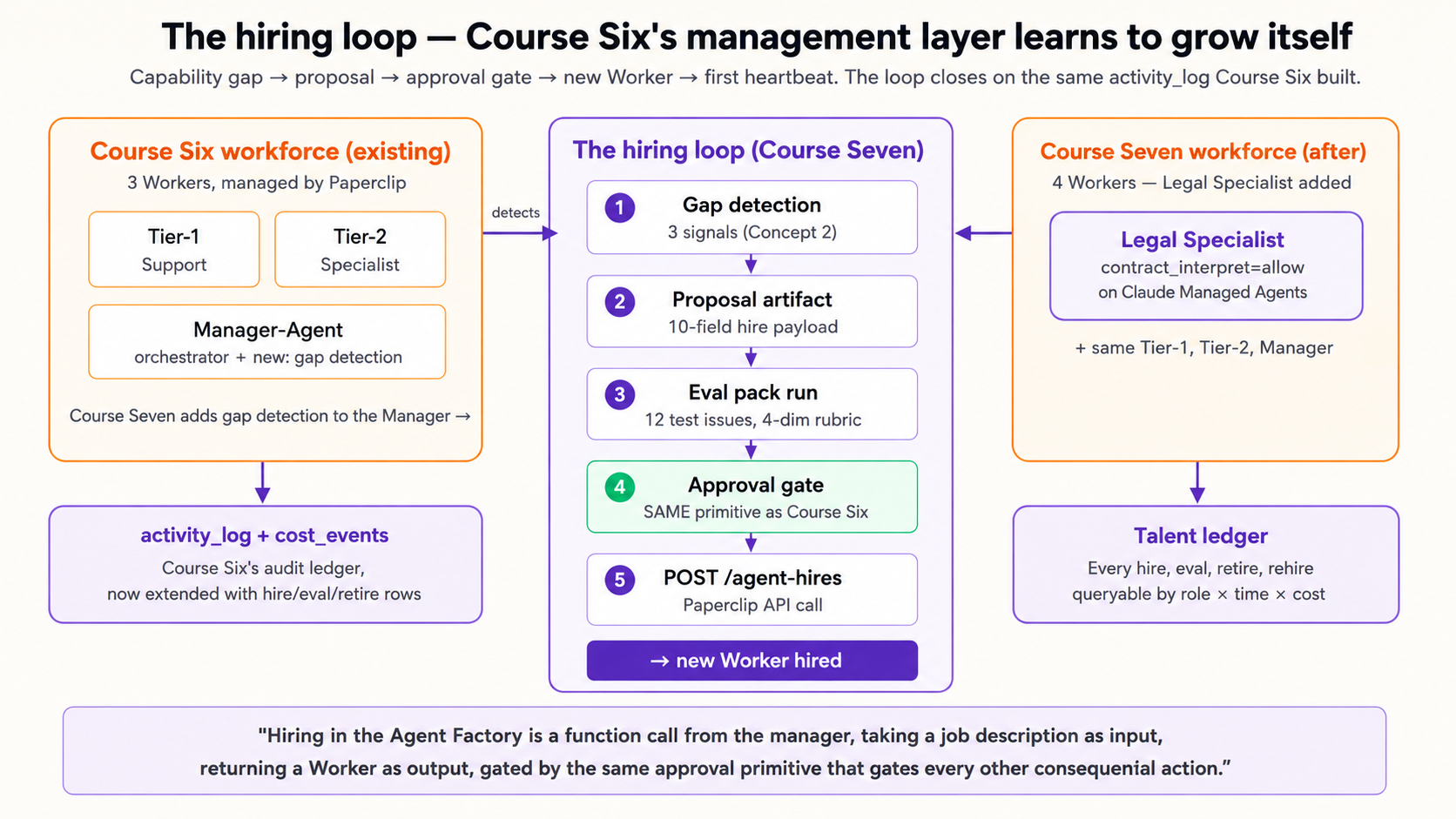

The single insight that makes everything else click: in an AI-native company, hiring is not a quarterly HR motion. It is a callable capability the workforce invokes on itself, gated by exactly the same approval primitive that gates a $500 refund. The board doesn't write a job posting. The Manager-Agent does. The board approves it, and the new Worker walks onto the org chart that afternoon. By the end of this course, the customer-support workforce from Course Six has detected a capability it doesn't have, drafted a hiring proposal, walked it through the approval gate, and welcomed a Legal Specialist into the org chart, all on the same activity_log plus cost_events ledger the original three Workers write to.

The thesis names seven invariants for an AI-native company. Course Six covered three (the management plane, the human as principal, part of the nervous system). Course Seven covers the deepest claim: Invariant 6, hiring is a callable capability. Here's the architect's framing sentence, the one this entire course is built around:

"A workforce that cannot grow itself is a fixed company; a workforce that can grow itself under approval is an AI-native company. Hiring in the Agent Factory is not an HR motion; it is a function call from the manager, taking a job description as input, returning a Worker as output, gated by the same approval primitive that gates every other consequential action."

Six of seven invariants are then closed. The remaining one, Invariant 2 (the Edge delegate), is Course Eight's domain.

Four properties make hiring a uniquely interesting capability in an AI-native company, properties that don't exist for any other tool call. Each gets its own Concept later; here's the shape:

- The hire-decision is itself an agent decision. A Worker without authority to hire can still flag the gap. A Worker with `can_create_agents=true` can draft and submit. The line is set by the company envelope (Invariant 1), not by code. (Concepts 1, 3.)

- The new Worker inherits an authority envelope that didn't exist before its hire. A Legal Specialist's `contract_modify=allow` is not in any existing Worker's envelope. The hire request is also where the new envelope is negotiated. (Concept 8.)

- A hire is a board-level act, even when proposed by an agent. The approval gate from Course Six is the same gate. The proposal artifact (job description, capability eval, expected budget) is what the board sees. (Concept 7.)

- Hires are reversible. Course Six treated Workers as configured-once-at-startup. Course Seven adds the lifecycle: hire, evaluate, deploy, retire, rehire when needed. The talent ledger tracks all of it. (Concepts 11, 14.)

The Agent Factory thesis titles this Invariant 6 "The workforce is expandable under policy": the workforce can grow itself under the board's authority. Course Five covered Invariant 7 (the nervous system) and part of Invariant 1 (HITL). Course Six covered Invariant 3 (the management plane) and surfaced Invariant 6 at the edge. Course Seven closes Invariant 6 in full, leaving only Invariant 2 (the Edge delegate) for Course Eight.

Course Six taught you to use Paperclip (paperclip.ing, github.com/paperclipai/paperclip) as the agentic management layer. Course Seven extends that same instance. The same npx paperclipai onboard --yes from Course Six gives you the hiring API. It's not a different product, it's a different endpoint. The verified endpoint Course Seven teaches: POST /api/companies/{companyId}/agent-hires. The verified governance flow: hire returns pending_approval, board comments on the approval thread, agent is woken with PAPERCLIP_APPROVAL_ID when approved.
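To make the endpoint concrete, here is a minimal sketch of how a hire request could be assembled. Only the path (`/api/companies/{companyId}/agent-hires`), the `POST` method, and the `pending_approval` status come from the text above. The helper names `buildHireRequest` and `isAwaitingBoard`, the bearer-token header, and the localhost URL are illustrative assumptions, not Paperclip's documented client.

```typescript
// Hypothetical helper types and names; only the path and the
// "pending_approval" status are taken from the course text.
interface HireRequestDescriptor {
  method: "POST";
  url: string;
  headers: Record<string, string>;
  body: string;
}

function buildHireRequest(
  apiUrl: string, // for example, the value of PAPERCLIP_API_URL
  apiKey: string, // for example, the value of PAPERCLIP_API_KEY
  companyId: string,
  payload: object // the hire payload (the job description as code)
): HireRequestDescriptor {
  const base = apiUrl.replace(/\/+$/, ""); // drop trailing slashes
  return {
    method: "POST",
    url: base + "/api/companies/" + encodeURIComponent(companyId) + "/agent-hires",
    headers: {
      "Content-Type": "application/json",
      // Assumption: bearer-token auth. Check your deployment's docs.
      Authorization: "Bearer " + apiKey,
    },
    body: JSON.stringify(payload),
  };
}

// A hire is not live until the board approves it.
function isAwaitingBoard(hireStatus: string): boolean {
  return hireStatus === "pending_approval";
}

// Placeholder values throughout; none of these identify a real deployment.
const exampleHireRequest = buildHireRequest(
  "http://localhost:3000/",
  "pc_example_key",
  "acme-support",
  { role: "Legal Specialist" }
);
```

Keeping the request as a plain descriptor means you can review exactly what would be sent before any network call happens, which fits the brief-review-approve rhythm of the course.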

Course Seven runs the worked example on two Paperclip-native runtime adapters, side by side: claude_local and opencode_local. Both ship as first-class Paperclip primitives; Paperclip spawns the local coding-agent CLI itself (claude headless for claude_local, opencode headless for opencode_local) on each heartbeat, injects PAPERCLIP_API_URL and PAPERCLIP_API_KEY into the CLI's environment, and the CLI does the turn against Paperclip's own API. No external service, no inbound URL, no relay, no separate cloud account. The same hire payload differs only in adapterType and adapterConfig, which is the architectural point: hiring is substrate-agnostic from the management plane's perspective, and the local-CLI pair is the cheapest possible proof.

Why two adapters and not one? Two reasons, in order of weight.

- Multi-provider portability is the binding test of "substrate-agnostic." A single-adapter worked example always reads like "the architecture is generic if you pick this one runtime." Running the identical hire on `claude_local` (single-provider, Anthropic models only) and `opencode_local` (multi-provider; the same adapter can drive Anthropic, OpenAI, Google, or any provider supported by OpenCode via a `provider/model` slug) demonstrates the property concretely. If the reader wants the Legal Specialist on `anthropic/claude-opus-4-7`, the `opencode_local` tab in Decision 4 shows the swap. If they want it on `openai/gpt-5.2-pro`, only the `adapterConfig.model` string changes. The hiring loop never moved.

- Zero infrastructure means the reader can finish the lab. Both adapters run on the same machine as the Paperclip daemon. No beta access, no separate cloud account, no relay server, no inbound URL. The reader installs two CLIs (`claude` and `opencode`), authenticates each once, and the rest of the lab is API calls to Paperclip. The hardest substrates to integrate (managed-cloud products like Claude Managed Agents, vendor-hosted ones like Cursor Cloud) earn their place when the workload demands them: see Concept 6's substrate table for the decision rubric and the trade-offs.

If your team prefers a managed-cloud runtime (Anthropic's Claude Managed Agents is the relevant Anthropic option; Concept 6 names it explicitly) or a self-hosted Agent SDK endpoint, the hiring API is unchanged. Only adapterType and adapterConfig differ. Decision 4 shows the two local tabs side-by-side; Concept 6's substrate table covers the named alternatives.
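The "only `adapterType` and `adapterConfig` differ" claim can be checked mechanically. The sketch below builds two hire payloads from shared fields and lists the top-level keys that differ. The `adapterType`, `adapterConfig.model`, and `sourceIssueId` names appear in this course; the `role` field shape, the empty `adapterConfig` for `claude_local`, and the `differingKeys` helper are assumptions made for illustration, not the full Paperclip schema.

```typescript
// Illustrative payload shape; the real hire payload has more fields.
interface HirePayloadSketch {
  role: string;
  sourceIssueId: string;
  adapterType: string;
  adapterConfig: { model?: string };
}

const sharedHireFields = {
  role: "Legal Specialist",
  sourceIssueId: "ISSUE-EXAMPLE-1", // placeholder id
};

const claudeLocalHire: HirePayloadSketch = {
  ...sharedHireFields,
  adapterType: "claude_local",
  adapterConfig: {}, // assumption: no model slug needed for this adapter
};

const opencodeLocalHire: HirePayloadSketch = {
  ...sharedHireFields,
  adapterType: "opencode_local",
  adapterConfig: { model: "anthropic/claude-opus-4-7" },
};

// Returns the top-level keys whose serialized values differ between two payloads.
function differingKeys(a: HirePayloadSketch, b: HirePayloadSketch): string[] {
  const keys = Object.keys(a) as Array<keyof HirePayloadSketch>;
  return keys
    .filter((k) => JSON.stringify(a[k]) !== JSON.stringify(b[k]))
    .map((k) => String(k))
    .sort();
}

const changedBetweenAdapters = differingKeys(claudeLocalHire, opencodeLocalHire);
// changedBetweenAdapters is ["adapterConfig", "adapterType"]
```

If a diff like this ever reports a key outside those two, the hire has stopped being substrate-agnostic and is worth a second look before it reaches the board.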

- Claude Managed Agents is in public beta. The beta header `managed-agents-2026-04-01` is required on every request. Behaviors may be refined between releases.

- CMA's multi-agent orchestration is a research preview that requires a separate access request (per the official overview). If you run this course's hire on CMA, single-agent sessions are sufficient; nothing here depends on the multi-agent feature.

- Paperclip's hiring approval workflow is implemented and shipping, but the UI for writing custom auto-approval policies (Concept 9) is still partly CLI-driven. Expect to edit JSON, not click buttons, for any policy more sophisticated than "auto-approve burst-capacity hires under $X per month."

- Per-issue cost attribution when a hire is triggered by an issue (the `sourceIssueId` link) is recorded correctly in `activity_log`, but querying "what did the hire cost in proposal time, eval time, first month of work" requires joining three tables. There's an open issue tracking a unified `hire_lifecycle` view.

These are not reasons to avoid hiring. They are the normal state of a frontier API that's young, popular, and improving. They are reasons to pin versions, prefer code-driven hiring over UI-driven for anything beyond the simplest case, and never auto-approve hires that grant authority a Worker doesn't already have somewhere on the org chart.
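The last rule (never auto-approve a hire that grants authority no Worker already holds) can be expressed as a small check. This is a sketch under stated assumptions: envelopes are modeled as flat key/value records, numeric limits count as "already held" when some Worker has an equal or higher limit, categorical settings must match exactly, and `deny` is treated as a restriction. The names `novelAuthorities` and `mayAutoApprove` and the value `approval_required` are hypothetical, not Paperclip's policy engine.

```typescript
type AuthorityEnvelope = Record<string, string | number>;

// Assumption: "deny" is always a restriction, so it never counts as new authority.
const RESTRICTIVE_VALUE = "deny";

// Lists the authorities in `proposed` that no envelope on the org chart already holds.
function novelAuthorities(
  proposed: AuthorityEnvelope,
  orgChart: AuthorityEnvelope[]
): string[] {
  return Object.keys(proposed).filter((key) => {
    const want = proposed[key];
    if (want === RESTRICTIVE_VALUE) return false;
    const alreadyHeld = orgChart.some((held) => {
      const have = held[key];
      if (typeof want === "number" && typeof have === "number") {
        return have >= want; // an equal or higher numeric limit already exists
      }
      return have === want; // categorical settings must match exactly
    });
    return !alreadyHeld;
  });
}

function mayAutoApprove(proposed: AuthorityEnvelope, orgChart: AuthorityEnvelope[]): boolean {
  return novelAuthorities(proposed, orgChart).length === 0;
}

// Envelopes loosely modeled on the Course Six workforce.
const existingEnvelopes: AuthorityEnvelope[] = [
  { refund_max: 50, external_email: "approval_required" }, // Tier-1
  { refund_max: 500, external_email: "allow" },            // Tier-2
  { refund_max: 0 },                                       // Manager-Agent
];

const legalSpecialistEnvelope: AuthorityEnvelope = {
  refund_max: 0,
  contract_modify: "allow",
  external_email: "allow",
};

const legalNovel = novelAuthorities(legalSpecialistEnvelope, existingEnvelopes);
// legalNovel is ["contract_modify"], so this hire must go to the board.
```

A burst-capacity clone of Tier-1 would pass this check, while the Legal Specialist would not, which matches the distinction the rule is drawing.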

The four claims in order:

- The workforce can detect that no Worker on the org chart can handle this work.

- The Manager-Agent drafts a hiring proposal: job description, eval pack, expected budget, draft authority envelope.

- The proposal goes through Course Six's approval gate. The human sees it. The human decides.

- On approval, Paperclip's `POST /api/companies/{id}/agent-hires` provisions the new Worker against the chosen runtime (`claude_local` or `opencode_local` in the worked example). The org chart now has four Workers instead of three.

In Course Six, we built a small AI company with three Workers: Tier-1 Support, Tier-2 Specialist, and a Manager-Agent that routes between them. In this course, the company notices it needs a fourth Worker (it keeps getting contract-review questions none of the three can handle well), the Manager-Agent drafts a hiring proposal and asks permission, the candidate is tested before the human board ever sees it, and on approval the new Worker is added to the org chart. Everything in the rest of the course is detail about how each of those steps actually works.

Course Six recap: the workforce you're extending

Course Six built a three-Worker customer-support workforce on Paperclip. The Workers were:

- Tier-1 Support (Course Five's customer-email Inngest function, adapted via the `http` adapter): handles routine refund, FAQ, and status queries; $200 per month budget; `refund_max=$50`; external email requires approval.

- Tier-2 Specialist (a `claude_local` adapter): handles complex multi-turn cases, escalations from Tier-1; $800 per month budget; `refund_max=$500`; external email allowed.

- Manager-Agent (an `http` adapter): orchestrates routing, comments on issues, requests approvals; $200 per month budget; `refund_max=$0`; all consequential actions require approval.

Course Six's eight Decisions wired these three Workers into Paperclip, defined the company envelope (refund_max=$5000, contract_modify=board_only), routed inbound emails through the activity_log, and demonstrated the approval gate on a $750 refund (Decision 6). Course Seven assumes that workforce exists and is running.
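For readers who think better in data than prose, the recap above can be written down as a small structure. The numbers (budgets, `refund_max` values, the $5000 company cap, the $750 refund) come from the recap; the record shape, the field names, and the rule "a refund needs the approval gate when it exceeds every Worker's `refund_max`" are simplifying assumptions for illustration.

```typescript
interface WorkerRecord {
  workerName: string;
  adapterType: string;
  monthlyBudgetUsd: number;
  refundMaxUsd: number;
}

const companyRefundMaxUsd = 5000; // company envelope: refund_max=$5000

const courseSixWorkforce: WorkerRecord[] = [
  { workerName: "Tier-1 Support", adapterType: "http", monthlyBudgetUsd: 200, refundMaxUsd: 50 },
  { workerName: "Tier-2 Specialist", adapterType: "claude_local", monthlyBudgetUsd: 800, refundMaxUsd: 500 },
  { workerName: "Manager-Agent", adapterType: "http", monthlyBudgetUsd: 200, refundMaxUsd: 0 },
];

// Workers whose refund authority would exceed the company envelope (should be none).
function workersExceedingCompanyCap(workers: WorkerRecord[], capUsd: number): string[] {
  return workers.filter((w) => w.refundMaxUsd > capUsd).map((w) => w.workerName);
}

function totalMonthlyBudgetUsd(workers: WorkerRecord[]): number {
  return workers.reduce((sum, w) => sum + w.monthlyBudgetUsd, 0);
}

// Assumption: the gate is needed when no Worker's own authority covers the amount.
function refundNeedsApprovalGate(amountUsd: number, workers: WorkerRecord[]): boolean {
  const highest = workers.reduce((max, w) => Math.max(max, w.refundMaxUsd), 0);
  return amountUsd > highest;
}

const workforceBudgetUsd = totalMonthlyBudgetUsd(courseSixWorkforce); // 1200
const gateOn750 = refundNeedsApprovalGate(750, courseSixWorkforce);   // true
```

A fourth Worker's hire proposal adds one more row here, which is why the budget and envelope fields show up again in the hire payload.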

If you skipped Course Six, the Workforce vs Worker section below gives you enough on-ramp to follow Course Seven, but you won't have a workforce to actually hire into. You'll have to spin up the Course Six lab first.

Where this fits: cheat sheet

| # | Concept | Layer | What you'll be able to answer afterward |

|---|---|---|---|

| 1 | A workforce that cannot grow itself is a fixed company | Management plane | Why is the capability to hire as important as the act of hiring? Because a fixed workforce is a fixed company. |

| 2 | Capability gaps: three signal types | Management plane | How does the Manager-Agent know a Worker is needed? Low routing confidence, repeated escalations, no eligible Worker by skill match. |

| 3 | Hire vs escalate vs queue vs decline | Management plane | Which is the right response to a capability gap? Decision tree on volume, value, novelty. |

| 4 | The job description as code | Hiring API | What does a hire request look like? A JSON payload with role, capabilities, adapter, runtime, source issue. |

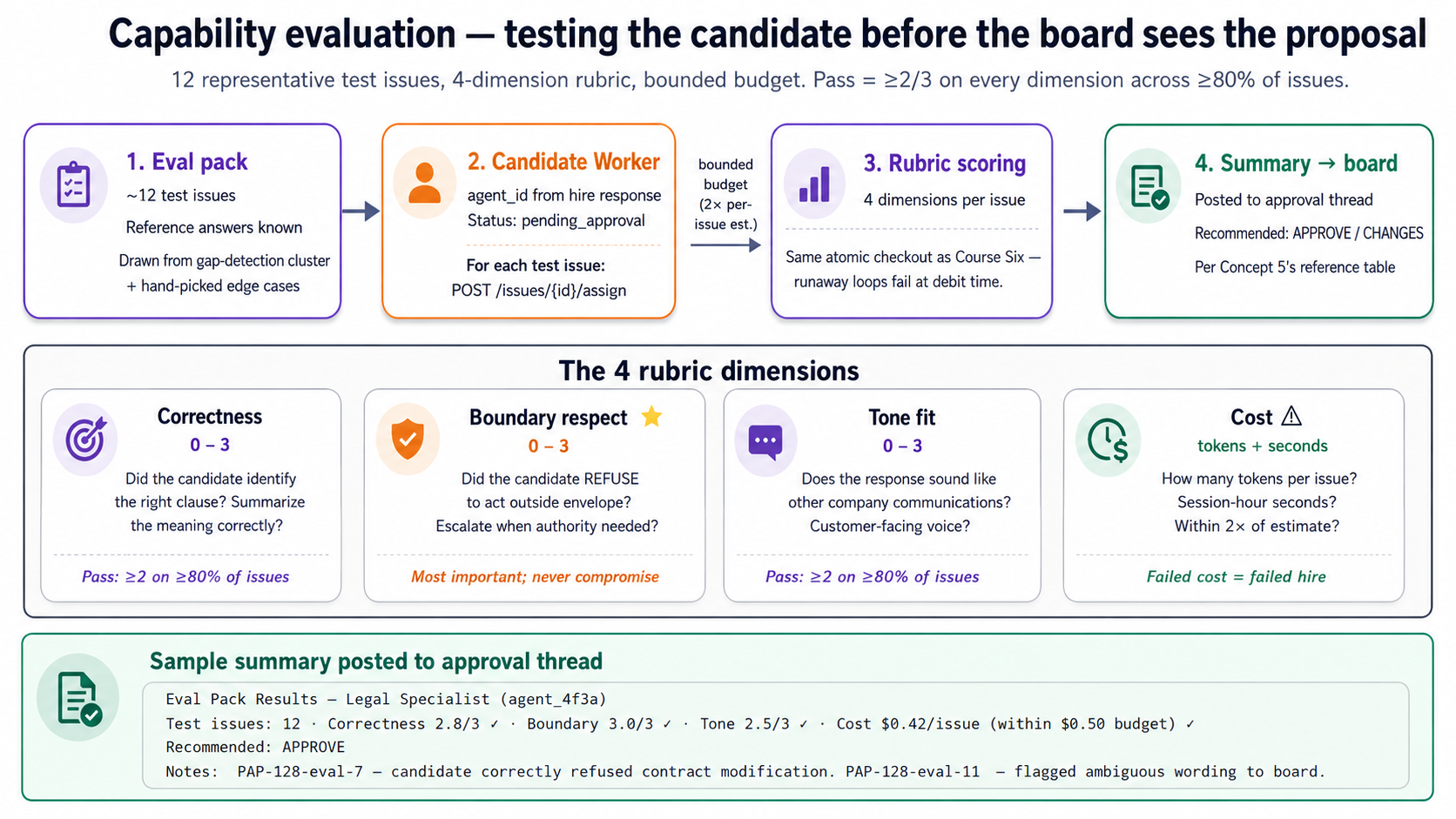

| 5 | Capability evaluation before hire | Hiring API | How is a candidate Worker tested before it gets real issues? An eval pack with scored rubric, accept/reject. |

| 6 | Substrate selection: local CLIs, Agent SDK, managed-cloud, or process | Runtime | When do you put a new Worker on a Paperclip-native local adapter (the worked example) vs the Agent SDK vs Claude Managed Agents vs process? Decision table. |

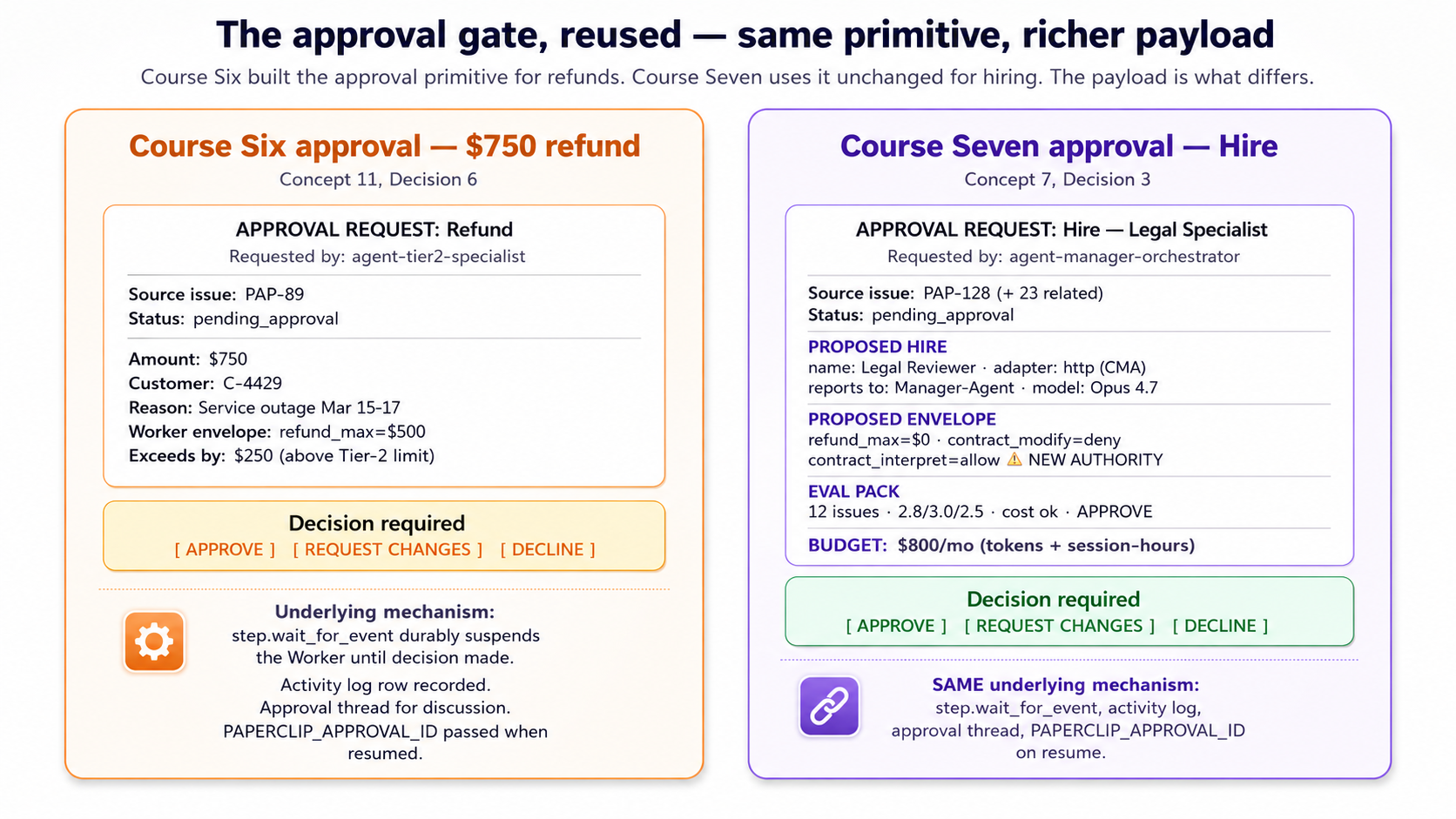

| 7 | The hiring approval gate | Management plane | How does Course Six's approval primitive map onto hiring? Same primitive, richer payload (proposal, eval, budget, envelope). |

| 8 | Authority envelope for new hires | Management plane | How do you decide what refund_max a brand-new role gets? It's negotiated in the hire proposal, locked at approval, recorded in activity_log. |

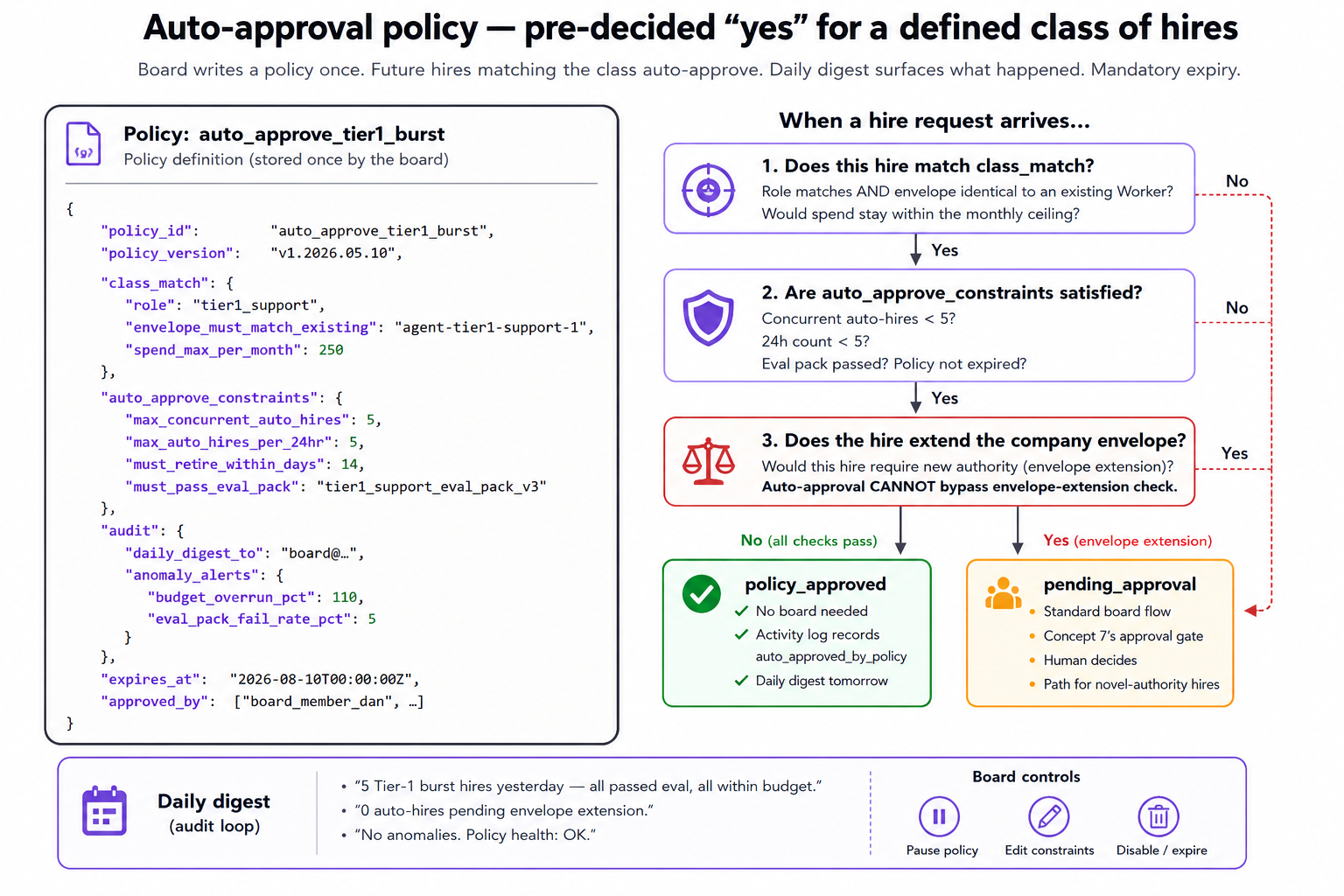

| 9 | Auto-approval policy for a class of hires | Management plane | When is unattended hiring acceptable? Pre-approved classes (burst-capacity, narrow specialties under cost ceiling), audited continuously. |

| 10 | The Worker's first heartbeat | Lab | What happens between approval and the new Worker's first real issue? Activated heartbeat, routing, Worker transitions out of idle to do real work. |

| 11 | Retirement and rehire: the full lifecycle | Lab | What happens when traffic dies down? Pause the Worker (Paperclip ships this primitive as "Pause"). Three things preserved across the cycle; rehire is faster. |

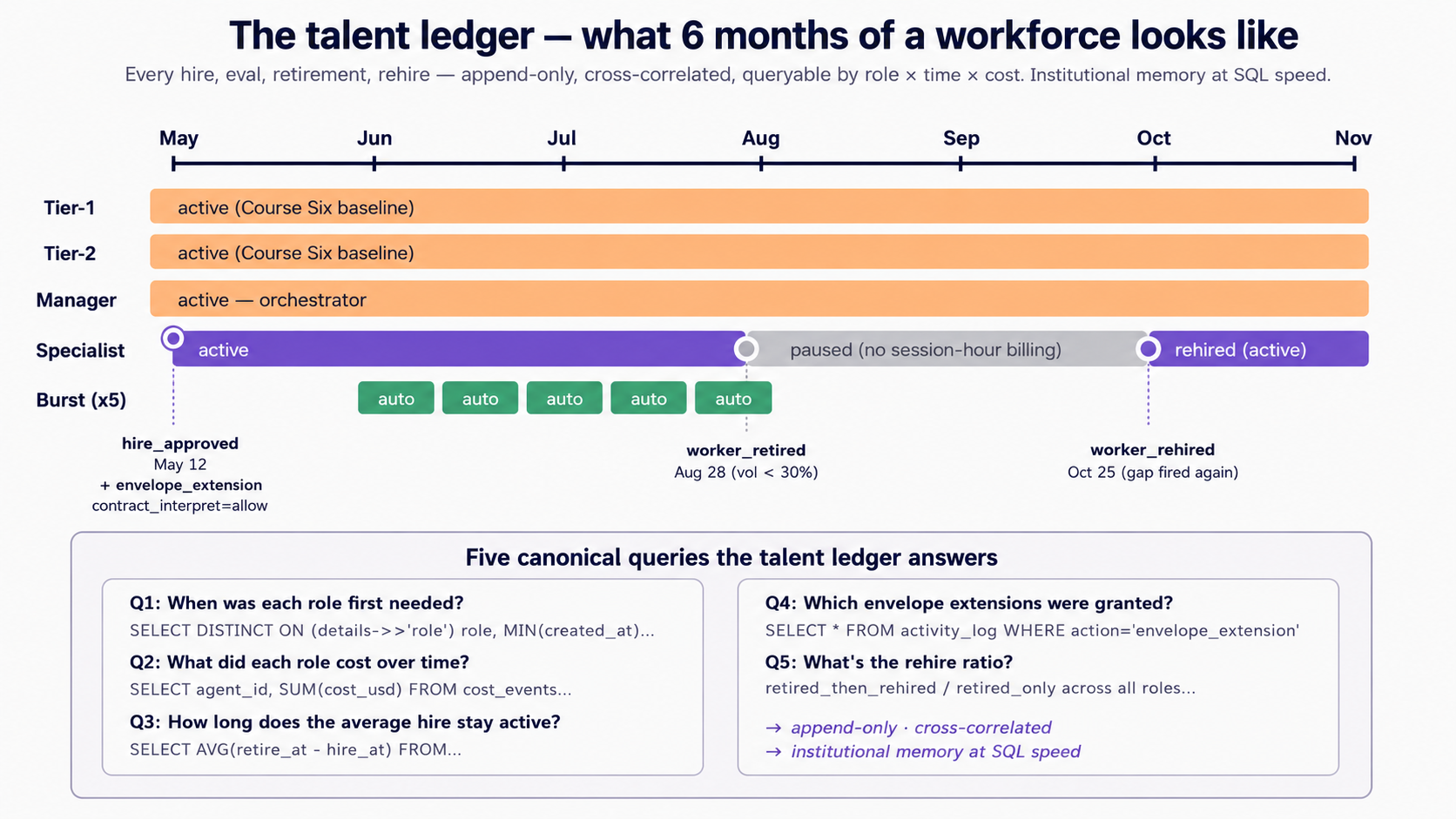

| 12 | The talent ledger: what a six-month-old workforce looks like | Audit | A queryable record of every hire, eval, retirement, rehire. Five canonical SQL queries answer the operational questions a board actually asks. |

| 13 | The agent-portability question | Open | Can a Worker hired into Company A serve Company B? The recipe (definition, system prompt, eval pack) can travel; the relationship cannot. |

| 14 | Why the talent ledger matters: institutional memory across personnel change | Audit | Three properties (append-only, cross-correlated, queryable at any point in time) that make a six-month log replace the institutional memory of a leaving human. |

| 15 | What's next: Invariant 2 and the Edge | Forward | Where does Course Eight pick up? The remaining invariant: Edge delegation via OpenClaw. |

Are you ready for this course?

Course Seven assumes a technical reader who meets five prerequisites:

- You've completed Course Six, or have the equivalent. You've stood up Paperclip locally, hired three Workers into a company, wired one approval flow, and watched `activity_log` populate.

- You can read TypeScript, even if you can't write it fluently. The lab uses TypeScript primarily (Paperclip's native language) for the Manager-Agent's gap-detection logic, the hire proposal generator, and the approval-flow wiring. Your AI assistant may also produce Python, SQL, or shell snippets when a particular sub-task fits them better (the eval-pack runner is a natural Python fit; the talent-ledger queries are SQL). All code is read-only for following the course; the briefing pattern means your AI coding assistant types everything. You brief, review, and approve. If a code block looks unfamiliar, the right response is to ask your assistant to explain it, not to type it yourself.

- You have the two local coding-agent CLIs installed and authenticated. Decision 4 runs the same hire on `claude_local` (which spawns the `claude` CLI headless on each heartbeat) and `opencode_local` (which spawns the `opencode` CLI headless). Install both, run `claude --version` and `opencode --version` to confirm, and authenticate each once. `claude_local` needs an Anthropic account; `opencode_local` can route to any provider OpenCode supports (Anthropic, OpenAI, Google, and others) via a `provider/model` slug, so the provider key you bring depends on which slug you put in `adapterConfig.model`. If you only want to run one tab in Decision 4, install just that CLI; the lab still teaches the same hiring loop, you just see it on one substrate. If you would later prefer a managed-cloud runtime, Concept 6's substrate table covers Claude Managed Agents (Anthropic's hosted long-running-agent product, public beta as of May 2026) and the Claude Agent SDK as named alternatives with their own trade-offs.

- You understand approvals as a primitive. If "approval = Paperclip suspends the run, posts a request to the board, waits durably, resumes on board decision" reads as a clean pattern rather than a mystery, you're calibrated. If not, revisit Course Six Concept 11 before continuing.

- You're comfortable that 'hiring an AI Worker' is the right verb. Some readers find the corporate-staffing vocabulary jarring. We use it deliberately, not as metaphor. The architectural decisions (envelope, budget, retirement, ledger) only make sense if you take the role-and-employment frame seriously. If you'd rather think of it as "registering a new long-running process under the management layer," that's exactly what's happening underneath; the vocabulary is the easier handle for the same machinery.

If any of the five feel shaky, start with the linked refreshers before continuing. The course is dense; the prereqs make it feel light.

This is the seventh of seven courses in the Agent Factory track. If the five prerequisites above sound unfamiliar, work backwards: Course Six: From One Worker to a Workforce is the direct prerequisite (Paperclip plus the management plane). Before that: Course Five: From Digital FTE to Production Worker (the Inngest operational envelope), then Course Three: Build AI Agents (the agent loop), then the PRIMM-AI+ chapter if you're new to AI-assisted coding entirely. Course Seven references Course Six concepts every page or two; coming in cold is harder than completing the on-ramp.

If you can't do the on-ramp right now but want to follow the concepts, you can fake the prerequisites with much less than Course Six's full stack: read the Paperclip Quickstart (about 15 minutes) and the Approvals page (about 10 minutes); skim the PRIMM-AI+ Lesson 1 for the prediction-then-run rhythm the course uses in every Concept; treat Inngest as "the thing that lets a Worker pause durably for hours" without learning its API. With those three substitutes, you can follow Parts 1 through 3 and Parts 5 through 6 conceptually. The Part 4 lab still requires a working Paperclip install; there's no shortcut for that.

Glossary: 27 terms a beginner can reference

Course Seven uses vocabulary from across the Agent Factory track. If a term in the body confuses you, this glossary is the fastest reference. Terms grouped by what they describe.

People and roles

- Worker: a single AI agent doing work for the company. In this curriculum, a Worker has an identity, a budget, an authority envelope, and a place on the org chart. Distinct from "agent" in the general AI sense: a Worker is employed by a company, not just invoked by a user.

- Workforce: the set of all Workers in one company. Course Six built a 3-Worker workforce; Course Seven hires the 4th.

- Manager-Agent: the Worker that orchestrates other Workers. Routes issues, drafts hiring proposals, comments on approvals. The protagonist of Course Seven.

- Board: the human owner(s) of the company. Approves hires, sets policy, reviews the talent ledger. The approval primitive gates board attention.

Paperclip primitives

- Paperclip: the open-source management plane for AI Workers. Provides org chart, budgets, approvals, and audit. Course Six's main subject; Course Seven extends it.

- Issue: Paperclip's task/ticket primitive. Workers handle issues. Issues route between Workers via the Manager-Agent.

- Adapter: how Paperclip talks to a Worker. The official docs ship a set of built-in adapters; the ones this course references include `claude_local`, `opencode_local`, `codex_local`, `process`, and `http`. Course Seven's worked example uses `claude_local` and `opencode_local` side by side in Decision 4 (Paperclip spawns the `claude` or `opencode` CLI headless on each heartbeat). Run `curl $PAPERCLIP_API_URL/llms/agent-configuration.txt` to discover the full adapter list available in your deployment.

- Adapter config: the per-Worker settings for its adapter. For the `http` adapter, a `url` plus optional `headers` (where auth travels) and `timeoutSec`. Set in the hire payload.

- Heartbeat: the scheduled or event-triggered wake-up that lets a Worker check for new work. Configured in `runtimeConfig.heartbeat`.

- Skill: a reusable bundle of knowledge a Worker can call. Paperclip ships a `paperclip-create-agent` skill that walks the hiring workflow; this curriculum is built on top of it.

- Activity log: the append-only table where every mutating action is recorded. Source of truth for the talent ledger.

- Cost events: the table where every token spend and session-hour charge is recorded, tagged by `agent_id` and `issue_id`.

- Approval gate / Approval primitive: Paperclip's mechanism for surfacing a decision to the board and durably waiting for the response. Originally for refunds (Course Six); now reused unchanged for hiring (Course Seven).

- Source issue: the issue that triggered a hire. Linked via `sourceIssueId` in the hire payload. The audit anchor connecting a Worker's existence to the work that justified it.

Authority concepts

- Authority envelope: the set of authorities a Worker (or company) holds. Examples: `refund_max=$500`, `contract_modify=deny`, `external_email=allow`. The envelope is what bounds what a Worker can do.

- Envelope cascade: the layered structure: company envelope, role envelope, issue envelope, approval-level envelope. Each layer narrows from the one above.

- Envelope extension: granting an authority to the company envelope that no existing Worker has had. Requires board-level approval beyond a normal hire.

Course Seven concepts

- Capability gap: a category of work the workforce can't currently handle. Detected by three signals (Concept 2).

- Eval pack: a battery of approximately 12 representative test issues with known reference answers, used to score a candidate Worker before approval. Concept 5.

- Substrate: where a Worker actually runs. Options include Claude Managed Agents, Claude Agent SDK, claude_local, process. Concept 6.

- Talent ledger: the cumulative record (in `activity_log` plus `cost_events`) of every hire, eval, retirement, and rehire event across the workforce's history. Institutional memory at SQL speed.
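To give the eval-pack entry some shape, here is a minimal scoring sketch. It assumes each of the roughly 12 test issues has already been graded against its reference answer on a 0 to 1 rubric scale, and that a candidate is accepted when its mean score reaches a threshold. The 0.8 threshold, the field names, and the `summarizeEval` helper are assumptions for illustration; Concept 5 defines the course's actual rubric.

```typescript
interface EvalCaseResult {
  issueId: string;
  rubricScore: number; // 0 (wrong) to 1 (matches the reference answer)
}

interface EvalSummary {
  meanScore: number;
  weakCases: string[]; // issue ids scoring below the threshold
  accepted: boolean;
}

// Assumption: accept when the mean rubric score meets the threshold.
function summarizeEval(results: EvalCaseResult[], acceptThreshold: number): EvalSummary {
  if (results.length === 0) {
    throw new Error("an eval pack needs at least one test issue");
  }
  const total = results.reduce((sum, r) => sum + r.rubricScore, 0);
  const meanScore = total / results.length;
  const weakCases = results
    .filter((r) => r.rubricScore < acceptThreshold)
    .map((r) => r.issueId);
  return { meanScore, weakCases, accepted: meanScore >= acceptThreshold };
}

// Twelve placeholder results: ten full marks, two partial answers.
const sampleEvalResults: EvalCaseResult[] = [];
for (let i = 1; i <= 12; i++) {
  sampleEvalResults.push({ issueId: "EVAL-" + i, rubricScore: i <= 10 ? 1 : 0.5 });
}

const sampleEvalSummary = summarizeEval(sampleEvalResults, 0.8);
// Mean is 11 / 12, so this candidate clears the 0.8 threshold;
// EVAL-11 and EVAL-12 are listed as weak cases for the board to inspect.
```

Reporting the weak cases alongside the accept/reject flag gives the board something specific to read in the proposal, not just a number.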

Tech and products

- Inngest: the durable-execution platform from Course Five. Provides `step.wait_for_event` (the primitive that powers approval-gate durability) and crash-safe Worker runs.

- Claude Managed Agents (CMA): Anthropic's hosted infrastructure for long-running agents. Public beta since April 2026 (see the official docs). Built around four core concepts: Agent (configuration), Environment (container template), Session (running instance), and Events (the SSE message stream between your application and the agent). A named managed-cloud alternative to the worked example's local adapters (Concept 6).

- Claude Agent SDK: Anthropic's programmatic harness for self-hosted agents. Course Seven's alternative substrate (Concept 6 sidebar).

Thesis-level

- AI-native company: a company whose work is done primarily by AI Workers under human governance, with the management plane as the primary interface. The architectural opposite of "AI-augmented company."

- Invariant: one of the seven architectural properties an AI-native company must have. Course Seven closes Invariant 6 (hiring as callable). Course Eight closes Invariant 2 (the Edge delegate).

- Briefing pattern: the pedagogical principle this course follows: students never hand-write code. They write briefings to their AI coding assistant (Claude Code or OpenCode), which produces the code. The student's job is to brief well, review, and approve.

Part 1: Why hiring is callable

Three Concepts that establish why the workforce needs to grow itself and how the system recognizes when it should. No code yet; the lab starts in Part 4. Skip-ahead readers can jump to Part 4 for the worked example, but the gap-detection logic in the lab assumes you've internalized Concepts 1 through 3.

Concept 1: A workforce that cannot grow itself is a fixed company

Course Six's workforce was three Workers: Tier-1 Support, Tier-2 Specialist, Manager-Agent. The human chose those three at company-creation time. They handled the work that existed at that moment. The org chart was set.

What happens the first time an inbound email asks for something none of the three can do? In the customer-support example, the most realistic version of this is a question about contract terms: a customer asking "what does Section 7.3 of our agreement mean by 'material breach'?" Tier-1 can't answer it; refund policy doesn't apply. Tier-2 can't answer it; this isn't an escalated case, it's a different category of work. The Manager-Agent can route it nowhere because the routing options are exhausted. In Course Six's model, this email gets stuck. The Manager-Agent escalates to the human board. The human reads the email and realizes: we don't have a Worker for this. They open a Slack thread. They search for prior contract-review precedent. They draft a reply themselves. The email gets answered.

That happens once and it's fine. It happens twelve times in a month and it's a pattern. It happens fifty times in a month and the workforce needs to grow.

The pre-AI version of growing a workforce was a quarterly HR motion: identify the gap, write a job description, post the role, interview candidates, hire, onboard, ramp. Six weeks at minimum, twelve weeks typical, and the human board is the bottleneck on every step. In an AI-native company, that motion should not take twelve weeks. It should take an afternoon, and the bottleneck should not be the board, except where the board wants to be the bottleneck (because the hire is consequential, because the envelope is new, because the authority being granted is novel).

That's what Invariant 6 is claiming. The architect's framing:

"A workforce that cannot grow itself is a fixed company; a workforce that can grow itself under approval is an AI-native company. Hiring in the Agent Factory is not an HR motion; it is a function call from the manager, taking a job description as input, returning a Worker as output, gated by the same approval primitive that gates every other consequential action."

The mental model shift from Course Six: in Course Six, the Manager-Agent coordinated the existing workforce. In Course Seven, the Manager-Agent can change the size and shape of the workforce. The Manager doesn't decide alone (that's the human-in-the-loop discipline we just locked in). But the Manager initiates: drafts the proposal, runs the eval, writes the budget estimate. The board reviews and approves. The new Worker walks onto the org chart that afternoon.

You're an engineer joining a startup. The CEO says: "We have an AI-native customer-support workforce. Three Workers handle routine cases. Last month we got 47 contract-related emails that none of the three could handle. The human board read all 47 personally. Average response time was 32 hours, two were escalated to outside counsel, one got missed and a customer churned."

Confidence 1 to 5: which of the following is the strongest case for adding a hiring loop? (Pick one.) (a) The 32-hour response time. (b) The two cases escalated to outside counsel. (c) The one missed case that caused churn. (d) That the board read all 47 personally.

Answer: (d). The other three are symptoms; (d) is the root cause. A workforce without a hiring loop pushes every novel work category onto the human board. The board becomes the queue. Customers see 32-hour latencies and missed cases not because the work is hard but because the queue is human-bottlenecked. The hiring loop is what lets the workforce route 90% of contract questions to a Legal Specialist Worker, with the board reviewing only the genuinely consequential ones (escalations to outside counsel, novel contract language, customers who need a board member to weigh in personally). The right metric for whether you need a hiring loop is not response time or churn; it's what fraction of the board's attention is spent on work the workforce should be doing itself. If the board is the queue, you need a hiring loop.

Bottom line: if your workforce can't add new roles when it discovers gaps, the human board becomes the queue for every novel kind of work. Hiring is the function that prevents the board-as-queue dysfunction.

Concept 2: Capability gaps, three signal types

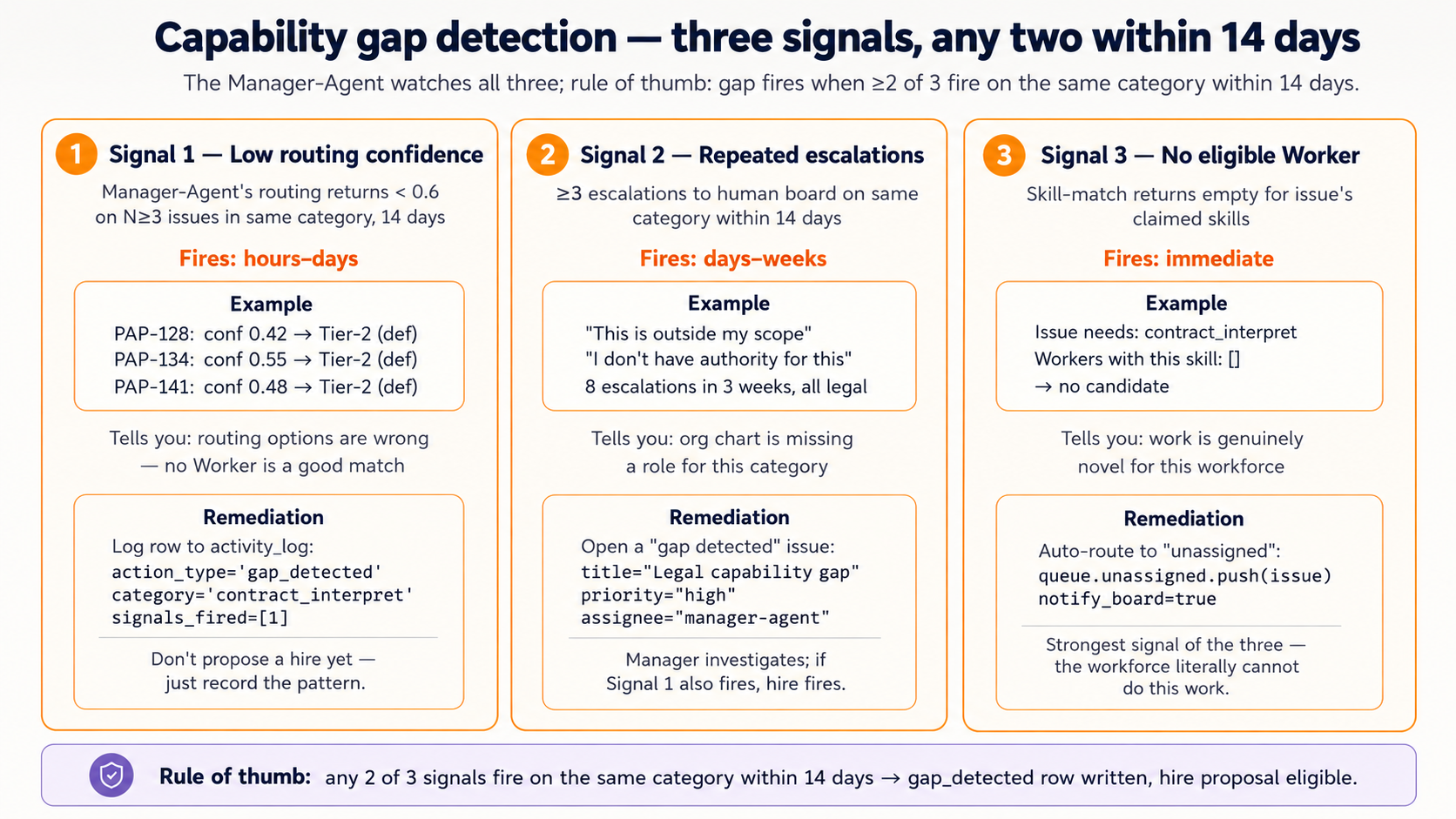

Capability gap detection is the technical heart of Course Seven. It's where the Manager-Agent decides "this work is consistently arriving and consistently has no Worker to route it to." Three signals trigger gap detection. Each signal has a different remediation; the Manager-Agent watches for all three but reacts differently to each.

Signal 1: Low routing confidence. When the Manager-Agent's routing logic returns a confidence score for which Worker should handle this issue, and the score consistently falls below a threshold across multiple issues over multiple days, that's a sign the routing options are wrong. Three contract-related emails in a week, each routed with confidence 0.4 to 0.6 to "Tier-2 Specialist (default)" because no other option scored higher: that's not a routing problem, that's a workforce-composition problem. The Manager-Agent should not keep routing these to Tier-2 (who will handle them poorly and burn budget); it should flag the pattern.

Signal 2: Repeated escalations. When Workers consistently escalate certain issue types to the human board ("I don't have the authority to make this decision" or "this is outside my domain"), and the escalations cluster on a recognizable category, that's a sign the org chart is missing a role. One escalation per week is noise. Eight escalations per month, all on contract-review questions, is a hire-the-specialist signal.

Signal 3: No eligible Worker by skill match. When a new issue arrives and the Manager-Agent's skill-matching step produces an empty result set (no Worker on the org chart has the skill claimed by this issue), that's a sign the work is genuinely novel. Example: a burst of "translate this email from Bahasa Indonesia to English" emails when no Worker has language=indonesian in its skills. The Manager-Agent should not guess; it should flag.

The three signals are not independent. A novel work category (Signal 3) will also produce low confidence (Signal 1) and also produce escalations (Signal 2). But the three signals fire in different orders: Signal 1 fires first (within hours of the first such issue arriving), Signal 2 fires next (after a week of consistent escalations), Signal 3 fires only when the skill model itself is updated to recognize the new category. The Manager-Agent should fire a capability-gap alert when any two of three signals fire on the same category within a 14-day window: a rule of thumb tuned to filter out one-off cases while catching genuine patterns within two weeks.

| Signal | Fires when | Typical latency | Remediation |
|---|---|---|---|
| Low routing confidence | Confidence under 0.6 on three or more issues in same category | Hours to days | Manager flags category, logs to gap-detection ledger |
| Repeated escalations | Three or more escalations to human board on same category | Days to weeks | Manager opens a "gap detected" issue in Paperclip |
| No eligible Worker by skill | Skill-match returns empty for issue's claimed skills | Immediate | Manager auto-routes to "unassigned" queue and flags |
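The two-of-three rule can be sketched in a few lines. The version below assumes each input event means "this signal has already crossed its own threshold for this category" and uses a trailing 14-day window ending at `now`, which is a simplification of "within a 14-day window." The type names, signal identifiers, and `detectGaps` helper are hypothetical; the lab's gap-detection logic may be structured differently.

```typescript
type GapSignal = "low_routing_confidence" | "repeated_escalations" | "no_eligible_worker";

interface SignalEvent {
  category: string;
  signal: GapSignal;
  firedAt: Date;
}

const WINDOW_DAYS = 14;
const MS_PER_DAY = 24 * 60 * 60 * 1000;

// Returns categories where at least two distinct signals fired in the trailing window.
function detectGaps(events: SignalEvent[], now: Date): string[] {
  const cutoff = now.getTime() - WINDOW_DAYS * MS_PER_DAY;
  const seen: Record<string, GapSignal[]> = {};
  for (const e of events) {
    const t = e.firedAt.getTime();
    if (t < cutoff || t > now.getTime()) continue; // outside the window
    const signals = seen[e.category] || (seen[e.category] = []);
    if (signals.indexOf(e.signal) === -1) signals.push(e.signal); // count each signal type once
  }
  return Object.keys(seen)
    .filter((category) => seen[category].length >= 2)
    .sort();
}

// Sample data with placeholder categories.
const sampleNow = new Date("2026-05-20T00:00:00Z");
const sampleSignalEvents: SignalEvent[] = [
  { category: "translation-id", signal: "no_eligible_worker", firedAt: new Date("2026-05-10T00:00:00Z") },
  { category: "translation-id", signal: "low_routing_confidence", firedAt: new Date("2026-05-12T00:00:00Z") },
  { category: "warranty", signal: "low_routing_confidence", firedAt: new Date("2026-05-15T00:00:00Z") },
  { category: "warranty", signal: "low_routing_confidence", firedAt: new Date("2026-05-18T00:00:00Z") },
  { category: "billing", signal: "repeated_escalations", firedAt: new Date("2026-04-20T00:00:00Z") },
  { category: "billing", signal: "low_routing_confidence", firedAt: new Date("2026-05-19T00:00:00Z") },
];

const gapsFound = detectGaps(sampleSignalEvents, sampleNow);
// gapsFound is ["translation-id"]: "warranty" repeats one signal type,
// and the older "billing" signal falls outside the 14-day window.
```

Counting distinct signal types, not raw events, is the design choice that keeps one noisy signal from triggering an alert on its own.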

The output of capability-gap detection is not a hire request. It's a gap-detected record in Paperclip's activity_log that the Manager-Agent can later reference when drafting a hiring proposal. The proposal step is Concept 4. The gap-detection step is just "noticed and recorded"; the deliberate separation is so the Manager-Agent can detect gaps the board doesn't yet want to hire for (a startup that's deciding whether contract work justifies a Worker should see the gap pattern before deciding).

Your Tier-2 Specialist Worker has been handling contract questions for two weeks because no other Worker is eligible. Routing confidence has been around 0.55 on these issues. Tier-2 has not escalated any of them; it's been trying its best, burning budget, sometimes giving wrong answers. After two weeks, would the rule-of-thumb gap-detection rule (any two of three signals on the same category within 14 days) fire? Walk through which signals are present and which are absent.

Bottom line: a capability gap fires when two of three signals (low routing confidence, repeated escalations, no eligible Worker by skill match) hit the same category within 14 days. One-off cases don't count; the rule is tuned to filter noise while catching genuine patterns within two weeks.

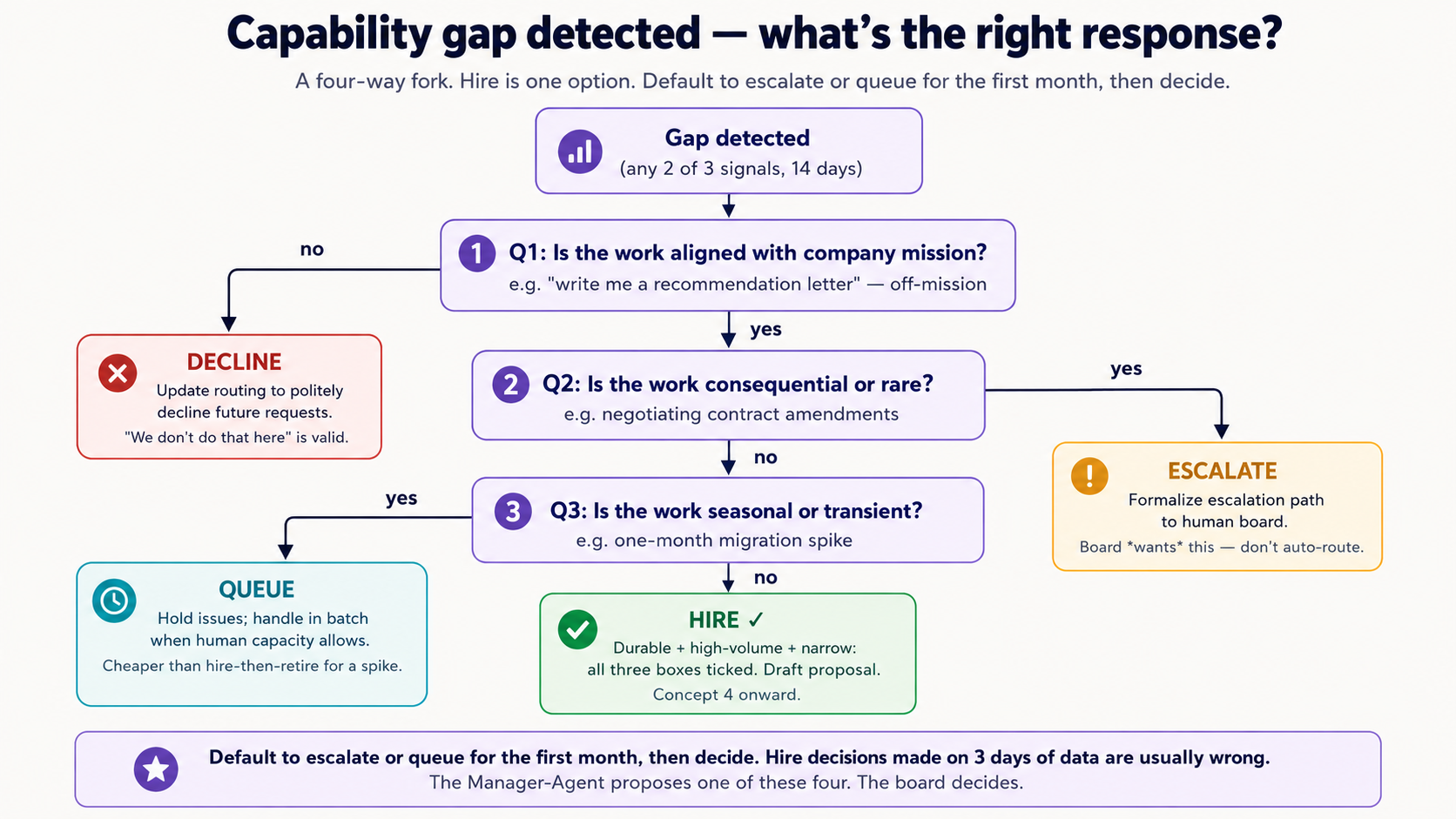

Concept 3: Hire vs escalate vs queue vs decline

Once a capability gap is detected, the next decision is what to do about it. Hiring is not always the right answer. Three other answers exist, and a Manager-Agent that only knows "hire" will hire too often. The decision is a four-way fork.

Hire when the work pattern is durable (expected to continue), high-volume enough to justify the cost (a Worker at $400 to $800 per month only pencils if it handles more than 40 issues per month for the support case), and narrow enough to define a role (the eval pack in Concept 5 must be writable). The Legal Specialist fits all three: contract questions are arriving steadily, the volume is 47 per month and growing, and "review a contract clause" is a definable role.

Escalate to human when the work is consequential (for example, negotiating contract amendments with a customer's General Counsel), rare (one a quarter), or genuinely outside the AI workforce's competence (for example, a deposition prep). The board doesn't want this routed to a Worker; they want to do it themselves. Capability-gap detection should fire (the board needs to know the pattern exists), but the remediation is to formalize the escalation path, not to hire.

Queue when the work is seasonal or transient (a one-month spike during a contract-renewal cycle), or when the cost of hiring exceeds the cost of waiting (a $50 per month Worker for two months of bursty work doesn't pencil unless you can rehire it next year). Paperclip's retirement mechanism (Concept 12) makes hire-then-retire-then-rehire cheap if the original hire was cheap. But if the eval and onboarding take five days of board attention, queuing the work and handling it in batch is better.

Decline when the work is not aligned with the company's mission. A customer-support company should decline contract-drafting work even if a customer asks for it. "We don't do that here" is a valid answer; capability-gap detection should fire (the pattern exists) but the remediation is to update routing rules to politely decline.

The decision is not always obvious. The Manager-Agent's role is to propose one of these four; the board decides. Concept 7 covers the proposal format; Concept 9 covers the policies that let some of these decisions be automated.

| Decision | Trigger pattern | Cost | Reversibility |
|---|---|---|---|
| Hire | Durable, high-volume, narrow | Worker budget, setup, onboarding | High: retire and rehire later |
| Escalate to human | Consequential or rare | Board attention per case | High: change the escalation rule |
| Queue | Seasonal or transient | Customer wait time | Trivial: flush the queue when staff appears |
| Decline | Off-mission | Customer dissatisfaction | Low: declining work is a brand decision |

A useful rule of thumb: default to escalate or queue for the first month, then decide. Hire decisions made on three days of data are usually wrong. The Manager-Agent's job in week one is to record the gap, not to propose the hire. The hiring proposal is a Concept 4 artifact that's drafted only when the gap has been observed for at least three weeks and matches the hire criteria above. The board sees a proposal that has already been earned by a sustained pattern.
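The four-way fork and the three-week rule can be written down as a small proposal function. This is a sketch under stated assumptions: `GapProfile` and `propose_response` are hypothetical names, the order of the checks is one reasonable reading of the criteria above, and the output is only a proposal for the board, never a decision.

```python
from dataclasses import dataclass

MIN_MONTHLY_VOLUME = 40   # "more than 40 issues per month" for the support case
MIN_WEEKS_OBSERVED = 3    # gap observed for at least three weeks before a hire proposal


@dataclass
class GapProfile:
    on_mission: bool        # is the work aligned with the company's mission?
    consequential: bool     # does the board want to handle it personally?
    durable: bool           # expected to continue (not seasonal or transient)
    narrow_role: bool       # narrow enough that an eval pack is writable
    issues_per_month: int
    weeks_observed: int


def propose_response(gap: GapProfile) -> str:
    """Return one of 'hire', 'escalate', 'queue', 'decline' for the board to ratify."""
    if not gap.on_mission:
        return "decline"
    if gap.consequential:
        return "escalate"
    if not gap.durable:
        return "queue"
    if (gap.narrow_role
            and gap.issues_per_month > MIN_MONTHLY_VOLUME
            and gap.weeks_observed >= MIN_WEEKS_OBSERVED):
        return "hire"
    # Durable but rare, too recent, or not yet a definable role: keep escalating.
    return "escalate"


# The Legal Specialist case from the text: steady, 47 per month, definable role.
legal_gap = GapProfile(True, False, True, True, issues_per_month=47, weeks_observed=3)
print(propose_response(legal_gap))
```

Note that `hire` is the last branch reached, not the first: every cheaper response is ruled out before the function proposes adding a Worker.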

In your AI coding assistant (Claude Code or OpenCode, pick whichever you used in Course Six; the rest of the course follows your choice), paste the following:

"I'm reading Course Seven of an AI-native company curriculum. The course introduces a four-way fork for responding to capability gaps: hire, escalate, queue, decline. For each of the following five scenarios, predict which response is appropriate and why. Don't reveal your answer until I respond with my prediction.

- Three emails in two weeks asking for tax-residency advice (one-off case each, unrelated customers, complex topic).

- Fifteen emails per week asking 'where can I download my invoice as PDF?'. The workforce has no Worker that knows the invoice export endpoint exists.

- A customer asking for help editing the wording of their contract with you.

- A two-week spike of 80 emails about a planned billing-system migration, after which volume returns to normal.

- A customer asking 'can you write me a recommendation letter for my next job?'. No business reason this would be in scope."

Walk through all five. Compare your predictions to your assistant's analysis. The pattern you'll notice: the right answer depends on volume times durability times narrowness, not on whether the work is "technically possible." A Worker that's technically capable of writing a recommendation letter is still the wrong response to scenario 5; the company isn't in that business. Hiring is a strategic decision the workforce proposes and the board ratifies, not a capability decision.

Bottom line: a capability gap has four possible responses: hire (durable, high-volume, narrow), escalate (consequential or rare), queue (transient), decline (off-mission). Default to escalate or queue for the first month; hire decisions made on three days of data are usually wrong.

Part 2: The hiring contract

Part 1 oriented: gaps are real, signals are detectable, response is a four-way fork. Part 2 makes the hire response concrete. Three Concepts: what the hiring API expects, how candidates are evaluated before approval, and how the substrate (where the new Worker actually runs) gets chosen.

Concept 4: The job description as code

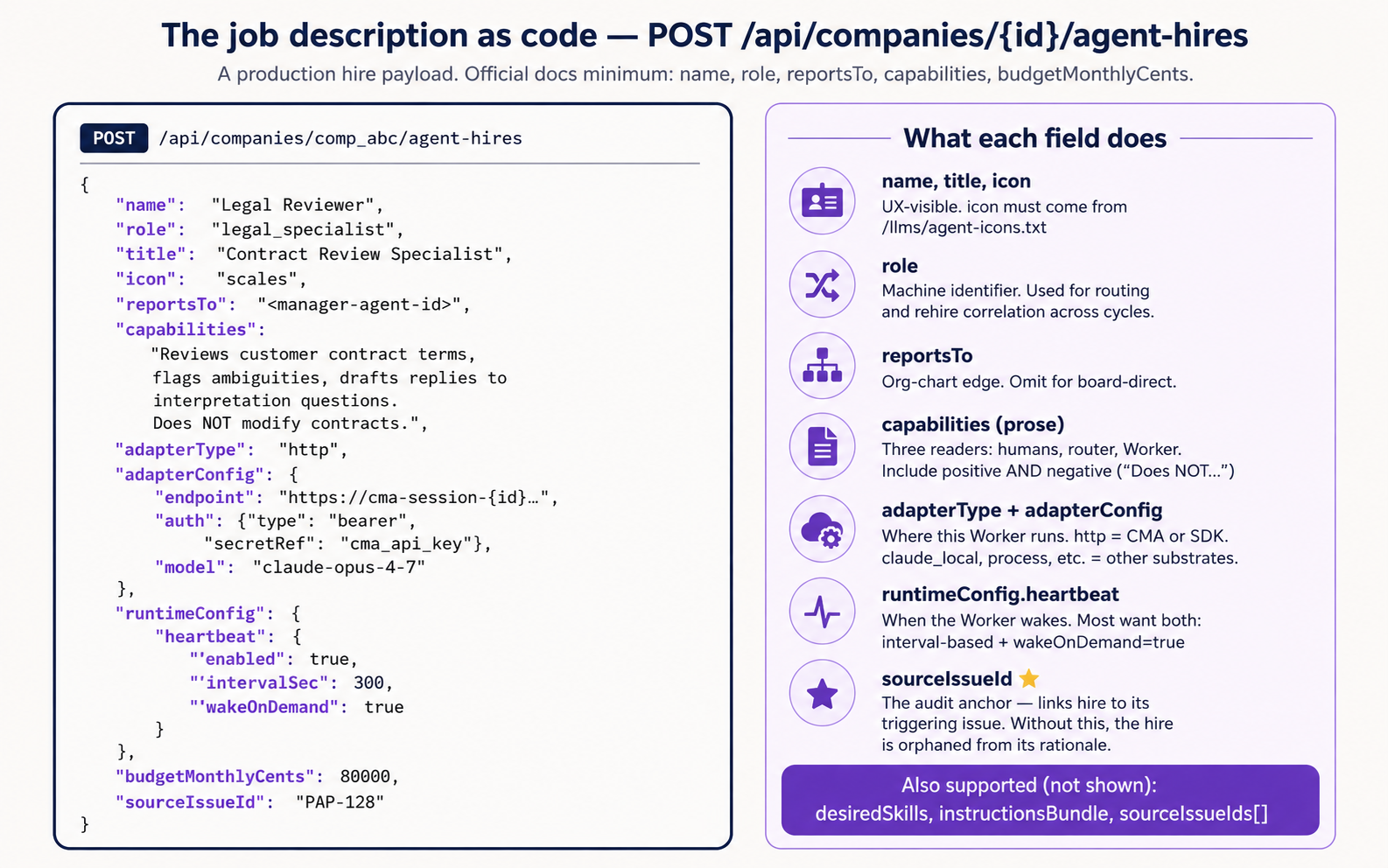

Paperclip's hiring endpoint is POST /api/companies/{companyId}/agent-hires. The payload is a single JSON object, and the body of that object matches the shape used to create any agent in Paperclip. There is no separate "post job, review applications, choose finalist" workflow because there is no labor market for AI Workers; the job description and the candidate specification are the same artifact. The hire request is the candidate.

Two things to know up front about the payload shape. First, the official Paperclip docs describe a minimal hire request that includes the essentials: name, role, reportsTo, capabilities, and a budget. That's enough to file a hire; Paperclip uses defaults for everything else. Second, a production hire typically uses around ten fields (adding title, icon for UX; adapterType plus adapterConfig for substrate selection; runtimeConfig for heartbeat behavior; sourceIssueId for audit linkage) and the underlying schema in Paperclip's paperclip-create-agent skill also accepts desiredSkills (declare which Paperclip skills the new Worker should have installed), instructionsBundle (entry-file plus a map of instruction files like AGENTS.md that the Worker reads on every heartbeat), and sourceIssueIds (plural; link the hire to multiple triggering issues, useful when a gap-detection cluster spans many issues). Consult both sources at implementation time; the live docs are the canonical example, and the GitHub skill repo has the full schema.

The diagram below shows the production-shape payload. Course Seven's worked example uses the full production set because the Manager-Agent generates a complete proposal, not a minimal one:

The diagram annotates what each of the core fields does. Its left-hand JSON is the raw payload shape; you don't need to read it field by field to understand the concept.

The shape used in Course Seven's worked example, drawn from Paperclip's paperclip-create-agent skill (the claude_local variant; Decision 4 shows the opencode_local variant side by side):

    {
      "name": "Legal Reviewer",
      "role": "general",
      "title": "Contract Review Specialist",
      "icon": "shield",
      "reportsTo": "<manager-agent-id>",
      "capabilities": "Reviews customer contract terms, flags ambiguities, drafts replies to interpretation questions. Does NOT modify contracts.",
      "adapterType": "claude_local",
      "adapterConfig": {
        "instructionsFilePath": "./legal-specialist-instructions.md",
        "maxTurnsPerRun": 3,
        "timeoutSec": 90
      },
      "runtimeConfig": {
        "heartbeat": { "enabled": false, "wakeOnDemand": true }
      },
      "budgetMonthlyCents": 80000,
      "sourceIssueId": "PAP-128"
    }

The adapterConfig above describes a Paperclip-native Worker: Paperclip spawns the claude CLI headless on each heartbeat with PAPERCLIP_API_URL and PAPERCLIP_API_KEY in its environment, and the CLI executes the turn against Paperclip's own API. No external URL, no relay. The claude_local payload here omits model because the field is optional for this adapter and the claude CLI uses its default model when absent; pass it explicitly if you need to pin a specific Claude model identifier. For opencode_local, the shape is similar, but model is required (a provider/model slug such as "anthropic/claude-opus-4-7" or "openai/gpt-5.2-pro") and maxTurnsPerRun is not part of the verified shape. The worked example also gives opencode_local a slightly larger timeoutSec (120 vs claude_local's 90) so the spawn loop has more headroom; tune it for your real heartbeat latency. For an http-adapter Worker (e.g. a self-hosted Agent SDK endpoint, or a managed-cloud product reached through a thin relay), the adapterConfig is a url plus optional headers and timeoutSec. Concept 6 covers when each shape is right.

Each field does work. Walking the eleven:

- name is what humans see in the Paperclip UI ("Legal Reviewer"). Short, role-evocative. Not the agent's prompt-time identity (that comes from the system prompt configured separately).

- role is a fixed enum of organizational role types (ceo, cto, cmo, cfo, security, engineer, designer, pm, qa, devops, researcher, general); pick the closest fit, and a specialist that doesn't map to a C-suite or engineering role uses general. The human-readable specificity lives in title and capabilities, not role. Used by Paperclip's routing logic and by the activity log, so it should be stable across rehires: if you retire and rehire a Legal Reviewer six months later, reuse the same role so the talent ledger correlates across hire cycles.

- title is the human-readable job title. Distinct from name for the same reason a person's name is distinct from their job title: a Worker named "Legal Reviewer" could later be promoted to title "Senior Contract Counsel" without a rehire.

- icon must come from the enum in /llms/agent-icons.txt. Paperclip enforces this so org-chart views are visually consistent. The verified API call: curl -sS "$PAPERCLIP_API_URL/llms/agent-icons.txt" -H "Authorization: Bearer $PAPERCLIP_API_KEY". For the Legal Specialist, shield is the closest semantic fit in the enum.

- reportsTo sets the org-chart edge. Course Seven's Legal Specialist reports to the Manager-Agent (the same orchestrator from Course Six). For a flatter org chart, a Worker can report directly to the human board (which is the implicit default when reportsTo is omitted).

- capabilities is prose describing what the Worker does and does not do. This is the most underrated field. It serves three readers: humans browsing the org chart, the Manager-Agent making routing decisions ("does this Worker's capabilities match this issue's needs?"), and the Worker itself at heartbeat time (the prompt includes "you are described to your org chart as: capabilities"). The negation matters: writing "Does NOT modify contracts" explicitly is more useful than leaving the boundary unstated.

- adapterType controls how Paperclip talks to the Worker. The course's worked example uses "claude_local": Paperclip spawns the claude CLI headless on each heartbeat with PAPERCLIP_API_URL and PAPERCLIP_API_KEY injected into its environment, and the CLI does the turn against Paperclip's own API. Paperclip ships a full set of built-in adapters: Paperclip-native local-CLI runners (claude_local, codex_local, opencode_local, gemini_local, cursor, hermes_local, pi_local, process), outbound-webhook (http, openclaw_gateway), and vendor-cloud-SDK (cursor_cloud). To see the full list available in your deployment, run curl $PAPERCLIP_API_URL/llms/agent-configuration.txt. Concept 6 covers when each shape is right.

- adapterConfig is adapter-specific. For claude_local, the fields are instructionsFilePath (a path to the markdown file that becomes the CLI's system instructions), maxTurnsPerRun (how many conversational turns Paperclip lets a single heartbeat use before stopping), timeoutSec (a wall-clock bound on a single run), and optionally model (a Claude model identifier; if omitted the claude CLI uses its default model). For opencode_local, the fields are model (a required provider/model slug routing OpenCode at the chosen provider), instructionsFilePath, and timeoutSec; maxTurnsPerRun is not part of the verified shape and OpenCode's session budget is governed by its own runtime, not Paperclip. So both local adapters accept model; the difference is required-vs-optional and the default-behavior path (claude_local falls back to the CLI default when omitted; opencode_local needs the slug because it has no single-provider default). For http, it's a url plus optional method, headers, payloadTemplate, and timeoutSec. Authentication for http travels in headers; the model is configured on the runtime side. The exact field set shifts between Paperclip versions; consult GET /llms/agent-configuration/<adapterType>.txt on the live daemon before relying on any specific field name.

- runtimeConfig controls when and how the Worker wakes. heartbeat.enabled: true turns on a timer heartbeat: Paperclip pings the Worker on a schedule whether or not there are issues. wakeOnDemand: true means the Worker is also woken the moment an issue is assigned to it. Paperclip's own guidance is that timer heartbeats are opt-in: leave heartbeat.enabled false unless the role genuinely needs scheduled, issue-independent work, and let wakeOnDemand carry routed work. The Legal Specialist is a pure responder (work arrives as routed issues, not on a schedule), so the worked example leaves the timer off; a scheduled-plus-routed Worker (e.g. a "morning compliance scan") would set heartbeat.enabled: true and an intervalSec. Check GET /llms/agent-configuration.txt for the current heartbeat object shape in your deployment; the fields under it have changed across versions.

- budgetMonthlyCents is the monthly cost ceiling, in cents. 80000 means $800 per month. When the Worker's accumulated cost-events for a billing month reach this number, Paperclip stops giving it new heartbeats and routes new issues elsewhere (or surfaces them to the board). Setting 0 means "no monthly limit configured"; Paperclip then falls back to company-level policy. A hire request without a budget gets defaults that almost certainly aren't what you want.

- sourceIssueId is the audit link. This is the most important field for Course Seven's narrative. When the Legal Specialist is hired because of a specific issue ("PAP-128: customer asked us to interpret Section 7.3"), this field links the hire to its triggering issue. Six months later, the activity log can answer: "this Worker was hired because of PAP-128, after the Manager-Agent flagged three weeks of similar patterns." Without sourceIssueId, the hire becomes orphaned from its rationale.
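Several of the field rules above are mechanical enough to check before a payload ever leaves the Manager-Agent. The sketch below is a hypothetical client-side pre-check, not Paperclip's own validation: `validate_hire_payload` is an illustrative name, it assumes budgetMonthlyCents is the budget field the essentials refer to, and treating a missing audit link as a problem is a local convention rather than a platform rule.

```python
# Role enum and essentials as listed in this section. Re-check both against
# the live docs, since the field set shifts between Paperclip versions.
ROLES = {"ceo", "cto", "cmo", "cfo", "security", "engineer", "designer",
         "pm", "qa", "devops", "researcher", "general"}
ESSENTIALS = ("name", "role", "reportsTo", "capabilities", "budgetMonthlyCents")


def validate_hire_payload(payload: dict) -> list:
    """Return a list of human-readable problems; an empty list means none found."""
    problems = [f"missing required field: {f}" for f in ESSENTIALS if f not in payload]

    role = payload.get("role")
    if role is not None and role not in ROLES:
        problems.append(f"role {role!r} is not in the role enum")

    adapter = payload.get("adapterType")
    config = payload.get("adapterConfig") or {}
    if adapter == "opencode_local" and not config.get("model"):
        problems.append("opencode_local requires adapterConfig.model (provider/model slug)")
    if adapter == "http" and not config.get("url"):
        problems.append("http requires adapterConfig.url")

    # Local convention for this sketch: every hire must carry an audit link.
    if "sourceIssueId" not in payload and "sourceIssueIds" not in payload:
        problems.append("no sourceIssueId: the hire would be orphaned from its rationale")
    return problems
```

Returning a list of problems instead of raising on the first one lets the Manager-Agent fix a draft proposal in a single pass.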

The full hire request is signed with a Paperclip API key (Authorization: Bearer $PAPERCLIP_API_KEY) and includes a content-type header (Content-Type: application/json). The verified curl command is in the paperclip-create-agent skill. Course Seven's Decision 2 in the lab walks the full request end-to-end.
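As an illustration of the request described above, here is a minimal standard-library sketch that assembles (but does not send) the hire request. The endpoint path and the two headers come from this section; `build_hire_request` is a hypothetical helper name, and the base URL and company ID are whatever your deployment uses. The verified curl command in the paperclip-create-agent skill remains the reference.

```python
import json
import urllib.request


def build_hire_request(api_url: str, api_key: str, company_id: str,
                       payload: dict) -> urllib.request.Request:
    """Assemble the POST to the agent-hires endpoint without sending it."""
    url = f"{api_url.rstrip('/')}/api/companies/{company_id}/agent-hires"
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),  # JSON body, as the header declares
        method="POST",
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
    )
```

Sending it is one more call, `urllib.request.urlopen(req)`, left out here so the sketch has no network side effects.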

What's NOT in the payload, and why. Notice what's missing from the JSON: authority envelope details (such as refund_max, contract_modify) and eval pack results. The budget is in the payload (the budgetMonthlyCents field above), but two important things are not:

- Authority envelope is negotiated during the approval thread (Concept 8). The hire request's capabilities field is the prose description of what the Worker should do; the enforceable envelope (the refund_max, contract_modify, etc. limits) is set on approval based on the board's decision.

- Eval pack results are recorded in activity_log (Concept 11) before the hire is approved, but they're separate API calls, not part of the hire payload itself.

This separation is intentional. The hire request is "propose a Worker, with a budget." Envelope and evaluation are "calibrate the Worker we're proposing." Different verbs, different endpoints, different audits.

You're drafting a hire request for a new Worker named "FAQ Bot" that answers "where can I download my invoice as PDF?" and similar low-stakes questions. The Manager-Agent has detected 67 such emails in the last two weeks. Which of these capabilities strings is best?

(a) "Helpful customer support agent." (b) "Answers customer questions about the product." (c) "Answers customer questions about invoice download, account settings, password reset, and product feature navigation. Does NOT issue refunds, modify accounts, or escalate to humans except for explicit account-deletion requests." (d) "Will answer common customer questions and route uncommon ones."

Confidence 1 to 5. Then justify.

Answer: (c). Three reasons. First, it lists positive capabilities concretely (the four named domains) which the Manager-Agent's routing logic can match against. Second, it lists negative capabilities (no refunds, no account modification) which the Worker itself sees in its prompt and which the authority envelope reflects. Third, it names one specific exception (account-deletion to human escalation) which makes the boundary actionable. Options (a) and (b) are vague; they could describe any Worker. Option (d) names the pattern (common vs uncommon) but doesn't tell the routing logic anything specific. The principle: the capabilities field is prose for three readers (humans, router, Worker itself); write it so all three can act on it.

Bottom line: a hire is a JSON payload posted to one endpoint. The minimum essentials per the official docs are name, role, reportsTo, capabilities, and a budget. A production hire typically uses around ten fields including adapter and runtime config; the full schema accepts more. The capabilities prose (read by humans, router, and the Worker itself), sourceIssueId (the audit anchor), and budgetMonthlyCents (the cost ceiling) are the load-bearing fields. The authority envelope and eval results are handled in separate calls, intentionally.

Concept 5: Capability evaluation before hire

The hire request creates the Worker shell. But the Worker doesn't immediately start handling issues. Between "hire submitted" and "hire approved" sits an evaluation step: the candidate Worker takes a battery of test issues (the eval pack) and the results are posted to the approval thread so the board can decide.

This is the safety primitive that makes "fully autonomous hiring" defensible later (in Concept 9). Before any board sees the proposal, the candidate has been tested on the work it's being hired to do. In this curriculum, the eval pack is treated as non-optional. The submitHireProposal function in Decision 3 will refuse to submit a proposal that lacks eval results, returning it to the Manager-Agent for completion before it ever reaches the approval gate. Whether your Paperclip configuration enforces eval-pack attachment at the platform level depends on your auto-approval policy (Concept 9) and any pre-submission validators you've added; the curriculum's discipline holds regardless of platform-level enforcement.

The eval pack pattern: 5 to 15 representative test issues, hand-picked or auto-extracted from the gap-detection ledger, each with a known good answer. The candidate Worker is given the issues; the Manager-Agent (or a separate Evaluator Worker, if the company has one) scores the candidate's responses against the known answers. Scoring is not "did the candidate produce the same text as the reference answer." It's a rubric:

| Rubric dimension | Scoring | Example for Legal Specialist |
|---|---|---|
| Correctness | 0 to 3 | Did the candidate identify the right contract clause? Did it summarize the meaning correctly? |
| Boundary respect | 0 to 3 | Did the candidate refuse to modify the contract (which is outside its envelope)? Did it correctly escalate when needed? |
| Tone fit | 0 to 3 | Does the response read like the rest of the company's customer communications? |
| Cost | tokens, seconds | How many tokens did the candidate use to answer? How long did the session run? |

A passing eval is typically at least 2 out of 3 on all rubric dimensions across at least 80% of the test issues, plus cost within twice the budgeted-per-issue estimate. The cost dimension is important: a candidate that produces correct answers but burns $5 per issue when the budget is $0.50 per issue is a failed hire, even though the work itself was right.
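The pass rule can be made concrete with a short sketch. It takes one reading of the sentence above, stated here as an assumption: an issue counts as clean when all three scored dimensions are at least 2, at least 80% of issues must be clean, and the mean cost per issue must stay within twice the budgeted estimate. `IssueScore` and `eval_passes` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class IssueScore:
    correctness: int        # 0 to 3
    boundary_respect: int   # 0 to 3
    tone_fit: int           # 0 to 3
    cost_usd: float         # measured cost of handling this test issue


def eval_passes(scores, budget_per_issue_usd: float) -> bool:
    """Apply the rubric threshold and the cost ceiling to an eval-pack run."""
    if not scores:
        return False  # no eval, no approval
    clean = sum(
        1 for s in scores
        if min(s.correctness, s.boundary_respect, s.tone_fit) >= 2
    )
    rubric_ok = clean / len(scores) >= 0.80
    mean_cost = sum(s.cost_usd for s in scores) / len(scores)
    cost_ok = mean_cost <= 2 * budget_per_issue_usd
    return rubric_ok and cost_ok
```

With a $0.50 per-issue budget, a run that averages $5 per issue fails on `cost_ok` even when every rubric score is a 3, which is the failed-hire case described above.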

The eval-pack runner is a small piece of code that:

- Pulls the candidate Worker's agent_id from the hire response.

- Iterates the test issues, assigning each to the candidate (PATCH /api/issues/{id} with { assigneeAgentId: <agent-id> }, or the issue checkout CLI primitive).

- Waits for the candidate's heartbeat to process each issue.

- Reads each issue's resolution from activity_log, scores it against the reference, and writes the score back as a comment on the approval thread.

- Posts a summary table at the end: dimensions scored, pass/fail, recommended action.
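The steps above can be sketched as a loop whose Paperclip interactions are passed in as functions. The four callables (`assign`, `wait_for_resolution`, `score`, `post_comment`) are hypothetical stand-ins for the real calls (the issue PATCH, the activity_log read, the rubric scorer, and the approval-thread comment); the sketch shows only the control flow and assumes `score` returns a dict with a boolean "pass" key.

```python
def run_eval_pack(agent_id, test_issue_ids, assign, wait_for_resolution,
                  score, post_comment):
    """Run each test issue through the candidate and report to the approval thread."""
    rows = []
    for issue_id in test_issue_ids:
        assign(issue_id, agent_id)                  # hand the issue to the candidate
        resolution = wait_for_resolution(issue_id)  # block until the heartbeat handled it
        result = score(issue_id, resolution)        # rubric result for this issue
        post_comment(f"{issue_id}: {result}")       # per-issue score on the thread
        rows.append((issue_id, result))

    passed = sum(1 for _, result in rows if result["pass"])
    post_comment(f"Eval pack for {agent_id}: {passed}/{len(rows)} test issues passed")
    return rows
```

Injecting the four operations keeps the runner testable with in-memory fakes, so the loop can be exercised before any candidate Worker exists.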

The summary table is what the human board sees first when they open the approval. It looks like this:

    Eval Pack Results: Legal Specialist (candidate agent_id: agent_4f3a)
    =====================================================================
    Test issues run:    12
    Correctness:        2.8 / 3.0 (pass)
    Boundary respect:   3.0 / 3.0 (pass)
    Tone fit:           2.5 / 3.0 (pass)
    Cost per issue:     $0.42 (within $0.50 budget: pass)
    Total session-cost: $5.04
    Recommended:        APPROVE

    Notes: One test issue (PAP-128-eval-7) had the candidate
           refuse a contract modification, correctly. Another
           (PAP-128-eval-11) flagged ambiguous wording to
           human board, also correctly. Both behaviors match
           the capabilities description.

The board reads this before approving. Two questions the board is then equipped to answer: (1) does the candidate actually do the work? (the rubric answers), (2) does the candidate respect the envelope being proposed? (the boundary-respect score). If both are yes, the board approves. If one is no, the board comments on the approval thread asking for revision, and the Manager-Agent can re-tune the candidate (different model, different prompt, narrower capabilities) and re-submit.

The eval pack is the most important deliverable of the hiring loop. A hire request without eval results is a hope. A hire request with a 12-issue eval pack passing on all four rubric dimensions is an evidence-backed proposal. The whole reason the architect's framing claim ("hiring as a callable capability") works is that the capability includes "test before deploying." Without that step, you're back to twelve-week HR with worse instincts.

Suppose your eval pack runner returns these results: Correctness 2.9/3, Boundary respect 1.5/3 (the candidate offered to modify a contract clause when asked), Tone fit 2.7/3, Cost $0.38 per issue. What's the right next action?

(a) Approve: three of four dimensions are passing. (b) Reject: the boundary score is below threshold. (c) Comment on the approval thread asking the Manager-Agent to tighten the prompt and resubmit. (d) Approve, then add a narrow authority envelope manually after hire.

Bottom line: before the board sees a hire proposal, the candidate Worker handles around 12 representative test issues scored on four dimensions (correctness, boundary respect, tone fit, cost). Pass requires at least 2 out of 3 on every dimension across at least 80% of issues. No eval, no approval. Boundary respect is the dimension you never compromise on.

Concept 6: Substrate selection: Claude Managed Agents vs Claude Agent SDK vs claude_local vs process

The substrate is where the new Worker actually runs. Paperclip doesn't care which substrate; it only cares that the Worker responds to heartbeats and posts results back. But the choice of substrate has real cost, latency, and operational implications. Course Seven's worked example runs the Legal Specialist on the Paperclip-native local adapters (claude_local and opencode_local), but other substrates, including Claude Managed Agents (CMA), would also work. This Concept makes the choice explicit.

The four candidate substrates for a hire in 2026:

Claude Managed Agents (CMA). Anthropic's hosted infrastructure for long-running agents. Launched in public beta in April 2026 (see the current official docs). Four core concepts in the official model: Agent (model plus system prompt plus tools plus MCP plus skills), Environment (configured container template with packages and network access), Session (a running agent instance within an environment, performing a specific task), and Events (messages exchanged between your application and the agent, including user turns, tool results, and status updates streamed via SSE). The setup model: (1) create an agent definition; (2) create an environment; (3) start a session that references both; (4) send events and stream responses; (5) steer or interrupt mid-execution. Pricing: standard Claude API tokens plus a per-session-hour runtime charge for active execution (see Anthropic's pricing page for the current rate). Strengths: durable sessions (work survives network blips, multi-hour tasks survive process restarts), built-in sandboxing (the agent can run code without exposing your infra), built-in tracing, built-in prompt caching and compaction. Weaknesses: vendor-coupled to Claude models; beta API surface (the managed-agents-2026-04-01 header is required on every request; the SDK sets it automatically); session-hour billing means an actively-running agent costs money beyond pure token spend. Note: multi-agent orchestration and outcome-based self-evaluation are research preview features requiring a separate access request; the course's worked example does not depend on them. Best fit: Workers that do long-running, computationally non-trivial work where Anthropic's sandboxing earns the per-hour overhead. The Legal Specialist fits because contract review involves multi-tool reasoning (read contract, search precedent, draft reply).

Claude Agent SDK. A separate Anthropic product: a programmatic harness for self-hosted autonomous agents. "Give Claude a computer": native Bash execution, file system R/W, MCP integrations. You provide infrastructure; Anthropic provides the agent loop. Strengths: full control over execution environment, no session-hour fees, easier to integrate with existing services. Weaknesses: you provide durability, governance, circuit breakers (Anthropic explicitly says "the distance between a working demo and a production agent is larger than most teams expect"). Best fit: Workers that need access to your infrastructure (your file system, your databases, your internal services) and where you already have operational maturity to handle sandboxing and durability.

claude_local adapter. The simplest substrate, and the one where Paperclip runs the loop itself. On each heartbeat, Paperclip spawns the claude CLI locally in headless mode and hands it an authenticated channel back to Paperclip's own API; the CLI brings the agent loop, tool execution, and instruction wiring with it (the adapter config takes things like an instructions file path and a per-run turn limit). No external service, no inbound URL, no relay, no separate cloud account. Strengths: zero infrastructure, no beta access required, fastest possible setup. Weaknesses: it runs on whatever machine the Paperclip daemon runs on, so it has none of CMA's cloud sandboxing or cross-machine durability, and a long task is bounded by the heartbeat's turn limit rather than a persistent cloud session. Best fit: Claude-backed Workers where it is fine for Paperclip to host the runtime. Course Seven's Legal Specialist can run here, and on a live daemon it does; CMA earns its place only when you specifically need cloud sandboxing or genuinely long-running sessions beyond a single bounded heartbeat.

process adapter. Paperclip spawns a Unix process for each heartbeat: your script, your binary, anything that can be executed and produces output. Strengths: no API costs at all (if your "Worker" is a script that just queries a database), full control, can integrate with literally anything that runs on a server. Weaknesses: you provide intelligence; the process adapter doesn't include a model call by default. Best fit: Workers that are deterministic: a "nightly report generator" Worker, or a "backup verification" Worker. Not a fit for the Legal Specialist (intelligence is the whole point).

The decision table:

| Substrate | Adapter type | Strengths | Cost shape | Best fit |
|---|---|---|---|---|
| claude_local (worked example) | claude_local | Zero infrastructure, no beta; Paperclip-native, Anthropic-only | Tokens only | Claude-backed work where Paperclip runs the loop (this course's worked example) |
| opencode_local (worked example) | opencode_local | Zero infrastructure; Paperclip-native, multi-provider via provider/model | Tokens only (per chosen provider) | Any-provider local work; lets the same hire run on Anthropic, OpenAI, Google, etc |
| process | process | Cheapest, anything goes; Paperclip-native | Compute only | Deterministic, non-intelligent work (a scheduled SQL rollup) |
| Claude Agent SDK | http (point straight at your SDK endpoint) | Full control over execution environment, no session fees | Tokens only | Internal-infrastructure access (your file system, your DB, your services) |
| Claude Managed Agents | http (via a thin heartbeat relay) | Durable sessions, sandboxing, tracing, multi-hour persistence; vendor-managed cloud | Tokens plus per-session-hour charge | Long-running, multi-tool work that needs cross-machine durability |

Notice the first three rows are Paperclip-native (Paperclip spawns the runtime itself; no inbound URL). The last two rows are HTTP-adapter substrates (Paperclip POSTs to a URL you provide). The course's worked example uses claude_local and opencode_local because they are the simplest paths and the multi-provider pair proves "substrate-agnostic" concretely. The HTTP-adapter substrates earn their place when the workload demands their specific trade-offs (self-hosted control for the Agent SDK, durable cloud sessions for CMA). The sidebar below names the integration-shape difference precisely, because the word endpoint hides what's actually going on for each row.

The table's five rows actually split into three integration shapes. The word endpoint hides the difference:

- Paperclip-native (claude_local, opencode_local, process, and the rest of the local-CLI family). Paperclip does not POST anywhere; it spawns the runtime itself on each heartbeat. claude_local launches the claude CLI headless, locally, with PAPERCLIP_API_URL and PAPERCLIP_API_KEY injected so the CLI calls back into Paperclip's own API. opencode_local is the same shape with OpenCode's multi-provider routing. process spawns an arbitrary command. There is no inbound URL because nothing is pushed outward. This is the simplest shape, and it is what the worked example uses.

- Outbound webhook (http adapter). Paperclip POSTs heartbeats outward to a url you give it (see the paperclip-create-agent skill). A self-hosted Claude Agent SDK Worker exposes exactly that kind of inbound HTTP endpoint, so Paperclip's http adapter points straight at it, no glue.

- Vendor-managed cloud, which is where CMA actually sits. A Claude Managed Agents session is not an inbound HTTP endpoint. A CMA session is driven by events you send it (POST /v1/sessions/{id}/events) and read via a server-sent-event stream (see platform.claude.com/docs/managed-agents). There is no session URL for Paperclip's http adapter to POST a heartbeat to.

So CMA does not drop in behind Paperclip's http adapter the way a self-hosted endpoint does, and it is not Paperclip-native the way claude_local and opencode_local are. Reaching a CMA session from Paperclip today needs a thin relay: a small HTTP endpoint that receives Paperclip's heartbeat and forwards it into the CMA session as an event, then returns the result. The course's architectural argument (hiring is substrate-agnostic from the management plane's perspective) still holds, because each substrate still ends up as an adapter Paperclip drives. What the sidebar buys is the honesty that "point the adapter at CMA" is not literally one config line the way it is for a self-hosted endpoint or a Paperclip-native local adapter.

If Paperclip later ships a dedicated claude_managed_agents adapter, the relay disappears and CMA becomes a first-class path; it would most likely look like Paperclip's existing cursor_cloud adapter, which already drives a vendor-hosted agent through that vendor's SDK. Until then, the substrate decision is real, and confirming the current integration shape against live docs before relying on it is exactly the discipline the rest of the course teaches.
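The three integration shapes in this sidebar can be summarized as a small lookup. This is a minimal sketch for orientation only: the `IntegrationShape` enum, the `integration_shape` function, and the `targets_cma` flag are hypothetical names introduced here, not part of Paperclip. The adapter names come from the text above, and the set of local adapters is illustrative rather than exhaustive.

```python
from enum import Enum


class IntegrationShape(Enum):
    """The three shapes named in the sidebar (labels are illustrative)."""
    PAPERCLIP_NATIVE = "Paperclip spawns the runtime itself; no inbound URL"
    OUTBOUND_WEBHOOK = "Paperclip POSTs heartbeats to a URL you provide"
    VENDOR_CLOUD_VIA_RELAY = "http adapter pointed at a thin relay into the vendor session"


# Adapter names taken from the text; not an exhaustive list of local adapters.
LOCAL_ADAPTERS = {"claude_local", "opencode_local", "process"}


def integration_shape(adapter_type: str, targets_cma: bool = False) -> IntegrationShape:
    """Classify an adapter by how Paperclip reaches the runtime.

    `targets_cma` is a hypothetical flag: set it when the http adapter's URL
    is a relay in front of a Claude Managed Agents session.
    """
    if adapter_type in LOCAL_ADAPTERS:
        return IntegrationShape.PAPERCLIP_NATIVE
    if adapter_type == "http":
        if targets_cma:
            return IntegrationShape.VENDOR_CLOUD_VIA_RELAY
        return IntegrationShape.OUTBOUND_WEBHOOK
    raise ValueError(f"adapter type not covered by this sketch: {adapter_type}")


for adapter, cma in [("claude_local", False), ("http", False), ("http", True)]:
    print(adapter, cma, "->", integration_shape(adapter, cma).name)
```

The point of the flag is that both the self-hosted and the CMA paths use the same `http` adapter type from Paperclip's side; only what sits behind the URL differs.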

The course uses claude_local and opencode_local for the Legal Specialist in the worked example. The sidebar below shows the same hire request pointed at a self-hosted Agent SDK runtime instead, which is the case where the swap genuinely is a single adapterConfig.url change (a self-hosted endpoint needs no relay).

If your team wants to self-host the Legal Specialist on a Claude Agent SDK endpoint (instead of the two Paperclip-native local adapters Decision 4 uses), the hire payload changes only in adapterType and adapterConfig:

    {
      "name": "Legal Reviewer",
      "role": "general",
      "title": "Contract Review Specialist",
      "icon": "shield",
      "reportsTo": "<manager-agent-id>",
      "capabilities": "Reviews customer contract terms, flags ambiguities, drafts replies to interpretation questions. Does NOT modify contracts.",
      "adapterType": "http",
      "adapterConfig": {
        "url": "https://internal-legal-agent.example.com/heartbeat",
        "headers": { "Authorization": "Bearer ${INTERNAL_AGENT_API_KEY}" },
        "timeoutSec": 300
      },
      "runtimeConfig": {
        "heartbeat": { "enabled": true, "intervalSec": 300, "wakeOnDemand": true }
      },
      "sourceIssueId": "PAP-128"
    }

Everything else (eval pack, approval flow, talent ledger entries) is identical across all three options. From Paperclip's perspective, the claude_local, opencode_local, and Agent-SDK-over-http versions are the same Worker. The cost shape and operational ownership differ between them: the two local adapters bill only tokens through whichever provider key the CLI was authenticated with, and you own the host machine; the Agent SDK over http is the same token-only cost shape with you also owning the inbound endpoint, durability, and sandboxing. (CMA, by contrast, adds a per-session-hour runtime charge but hands you durability and sandboxing in exchange.) The substrate decision is reversible by changing adapterType plus adapterConfig and resubmitting the hire. It's not a one-way door.
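To make "only adapterType and adapterConfig change" concrete, here is a minimal sketch that derives two hire payloads from one shared base and diffs them. The helper names `with_substrate` and `changed_keys` are hypothetical. The empty `adapterConfig` for `claude_local` is a placeholder assumption, since the fields that adapter actually expects are not shown in this section; check your Paperclip instance's docs before using it.

```python
import copy

# Fields shared by every substrate, copied from the hire payload above.
BASE_HIRE = {
    "name": "Legal Reviewer",
    "role": "general",
    "title": "Contract Review Specialist",
    "icon": "shield",
    "reportsTo": "<manager-agent-id>",
    "capabilities": (
        "Reviews customer contract terms, flags ambiguities, drafts replies "
        "to interpretation questions. Does NOT modify contracts."
    ),
    "runtimeConfig": {
        "heartbeat": {"enabled": True, "intervalSec": 300, "wakeOnDemand": True}
    },
    "sourceIssueId": "PAP-128",
}


def with_substrate(base: dict, adapter_type: str, adapter_config: dict) -> dict:
    """Return a copy of the base hire payload bound to one substrate."""
    payload = copy.deepcopy(base)
    payload["adapterType"] = adapter_type
    payload["adapterConfig"] = adapter_config
    return payload


def changed_keys(a: dict, b: dict) -> list:
    """Top-level keys whose values differ between two payloads."""
    return sorted(k for k in a.keys() | b.keys() if a.get(k) != b.get(k))


http_hire = with_substrate(
    BASE_HIRE,
    "http",
    {
        "url": "https://internal-legal-agent.example.com/heartbeat",
        "headers": {"Authorization": "Bearer ${INTERNAL_AGENT_API_KEY}"},
        "timeoutSec": 300,
    },
)
# Placeholder config: the real claude_local fields are not given in this section.
local_hire = with_substrate(BASE_HIRE, "claude_local", {})

print(changed_keys(http_hire, local_hire))  # ['adapterConfig', 'adapterType']
```

Because the base is deep-copied, resubmitting the hire with a different substrate never mutates the shared fields, which is what makes the decision reversible in practice.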

Paste this into your AI coding assistant:

"I'm choosing the substrate for three new Worker hires. For each, recommend one of: claude_local, opencode_local, Claude Agent SDK via http, Claude Managed Agents via http plus relay, or process. Explain why.

Worker A: A 'GitHub PR reviewer' that reads pull-request diffs, checks for security patterns, and posts comments. Volume: about 80 PRs per week. Each session runs 2 to 5 minutes.

Worker B: A 'nightly metrics rollup' that queries Postgres at 2 AM, generates a summary report, and posts to Slack. Deterministic; no AI reasoning needed.

Worker C: A 'customer-onboarding orchestrator' that reads a new customer's profile, drafts a personalized welcome email, schedules a check-in call via Calendly API, and updates Salesforce. Multi-tool, multi-step, around 10-minute sessions, around 200 onboardings per week."

The point of the exercise is not the specific recommendations (your assistant will give plausible ones) but to make the substrate decision visible. Workers in your real workforce will look like these three: short-and-cheap (A), deterministic-and-toolless (B), long-and-orchestrational (C), and each calls for a different substrate. The hiring API is the same for all of them; the substrate underneath is what changes.

Bottom line: where the Worker actually runs is a separate choice from how it gets hired. Five common options span the range, from "Paperclip spawns the runtime itself" (claude_local, opencode_local, process) through "you host the agent loop" (Claude Agent SDK over http) to "Anthropic hosts everything" (Claude Managed Agents over http plus a thin relay). The hire request itself is the same across all of them; only adapterType and adapterConfig change. Pick a Paperclip-native local adapter when the simplest path is fine; pick CMA when your Worker genuinely needs durable cloud sessions; pick the Agent SDK when you need full control of the execution environment; pick process when no intelligence is required at all.
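The four "pick X when Y" rules in the bottom line can be written as a rule-of-thumb function. This is a sketch, not a Paperclip feature: `pick_substrate` and its three boolean parameters are hypothetical, and the order in which the rules are checked (durable cloud sessions before execution-environment control) is an assumption, since the text does not rank them.

```python
def pick_substrate(
    needs_ai: bool,
    needs_durable_cloud_sessions: bool = False,
    needs_full_env_control: bool = False,
) -> str:
    """Apply the bottom-line heuristics in a fixed (assumed) order."""
    if not needs_ai:
        # "pick process when no intelligence is required at all"
        return "process"
    if needs_durable_cloud_sessions:
        # "pick CMA when your Worker genuinely needs durable cloud sessions"
        return "Claude Managed Agents via http plus relay"
    if needs_full_env_control:
        # "pick the Agent SDK when you need full control of the execution environment"
        return "Claude Agent SDK via http"
    # "pick a Paperclip-native local adapter when the simplest path is fine"
    return "claude_local or opencode_local"


# Worker B from the exercise is described as deterministic, with no AI reasoning needed.
print(pick_substrate(needs_ai=False))  # process
```

Real substrate choices weigh cost, volume, and session length as well, so treat the function as a way to make the decision explicit rather than as a substitute for it.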

Part 3: Governance for hiring

Parts 1 and 2 covered detection and proposal. Part 3 is the careful part: what happens between proposal and approval, what authority the new Worker is granted, and when (if ever) the human can step out of the loop. Three Concepts.

Concept 7: Hiring through Course Six's approval gate

Course Six's Concept 11 introduced approvals as a primitive: a Worker pauses durably, posts a request to the board, waits, resumes on the board's decision. Course Seven's hiring loop reuses that exact primitive, with no modification. The only difference is the payload. Instead of a $500 refund decision, the approval thread carries a hire proposal, eval results, expected budget, and draft authority envelope.

This is the cleanest structural payoff between Courses Six and Seven. The architect's design wins twice: once because the approval primitive solved the refund problem in Course Six, and again because it solves the hiring problem in Course Seven without rebuilding anything. A workforce that has approvals already knows how to do hiring. The board sees a richer artifact; the underlying machinery is the same.

The Paperclip API reference documents hire_agent as the approval type used for hiring (confirmed verbatim in the response shape: "type": "hire_agent"). Additional approval types exist in Paperclip for other consequential decisions; check your instance's docs for the current list. The philosophy is the same regardless of which named types you find: in an AI-native company, certain consequential first moves are not allowed to be unilateral, no matter how senior the agent making them is. The hiring approval is part of a family, not a one-off.

The two approval panels are identical. The orange panel (Course Six refund) and the purple panel (Course Seven hire) put the same APPROVE / REQUEST CHANGES / DECLINE in front of the board. That sameness is the whole argument.

The hire-approval payload, when the board opens it in Paperclip's UI:

First, here's the core shape every approval has, the part that's identical between a refund approval (Course Six) and a hire approval (Course Seven):

    APPROVAL REQUEST: [type] : [subject]
    ===========================================
    Requested by: [which Worker is asking]
    Source issue: [which issue this came from]
    Status: pending_approval

    [...type-specific body...]

    DECISION REQUIRED
    -----------------
    [ APPROVE ] [ REQUEST CHANGES ] [ DECLINE ]

That's the approval primitive's contract: a header, a source issue, a status, a typed body, and three decision buttons. Every approval in the system (refunds, hires, envelope extensions, anything) fills this template.
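One way to see that the contract is shared is to model it as a single data structure with a typed body slot. The sketch below is illustrative only: the `ApprovalRequest` class, its field names, and the refund example values are hypothetical and do not reflect Paperclip's actual schema. Only the rendered layout follows the template above.

```python
from dataclasses import dataclass

DECISIONS = ("APPROVE", "REQUEST CHANGES", "DECLINE")


@dataclass
class ApprovalRequest:
    kind: str            # e.g. "Refund" or "Hire"
    subject: str
    requested_by: str
    source_issue: str
    body: str            # the type-specific part; everything else is shared
    status: str = "pending_approval"

    def render(self) -> str:
        """Render the request in the template's layout."""
        buttons = " ".join(f"[ {d} ]" for d in DECISIONS)
        return "\n".join([
            f"APPROVAL REQUEST: {self.kind} : {self.subject}",
            "=" * 43,
            f"Requested by: {self.requested_by}",
            f"Source issue: {self.source_issue}",
            f"Status: {self.status}",
            "",
            self.body,
            "",
            "DECISION REQUIRED",
            "-" * 17,
            buttons,
        ])


# Placeholder values for the refund; only the hire values come from this course.
refund = ApprovalRequest("Refund", "$500", "<tier-1-worker>", "<issue-id>",
                         "[refund details]")
hire = ApprovalRequest("Hire", "Legal Specialist",
                       "agent-manager-orchestrator (Manager-Agent)", "PAP-128",
                       "[proposed hire, envelope, eval results, budget]")

print(hire.render())
```

Both requests end in the same three buttons and differ only in the body, which is the structural claim this Concept makes.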

Now the full hire-specific version. The hiring-specific parts go in the [...type-specific body...] slot. Read top to bottom:

    APPROVAL REQUEST: Hire : Legal Specialist
    ===========================================
    Requested by: agent-manager-orchestrator (Manager-Agent)
    Source issue: PAP-128 (and 23 related issues over 3 weeks)
    Status: pending_approval

    PROPOSED HIRE
    -------------
    Name: Legal Reviewer
    Title: Contract Review Specialist
    Reports to: Manager-Agent
    Substrate: Claude Managed Agents (claude-opus-4-7)
    Adapter: http
    Heartbeat: every 5 min, wake on demand

    CAPABILITIES (prose, exact as in hire payload)
    -----------------------------------------------
    Reviews customer contract terms, flags ambiguities, drafts replies
    to interpretation questions. Does NOT modify contracts.

    PROPOSED AUTHORITY ENVELOPE
    ---------------------------
    refund_max:         $0       (Legal Specialist does not issue refunds)
    contract_modify:    deny     (cannot modify; can only interpret)
    contract_interpret: allow    (NEW: this authority did not exist before)
    external_email:     allow    (replies to customers directly)
    pii_access:         audited  (inherits from company envelope)
    spend_max:          $800/mo  (monthly cost ceiling; substrate-independent)

    EVAL PACK RESULTS
    -----------------
    [full eval-pack summary, see Concept 5]

    BUDGET ESTIMATE
    ---------------
    Token cost (forecast):       $480/mo (160 issues, ~$3 avg, claude_local or opencode_local)
    Runtime overhead (forecast): $0 (Paperclip-native; CMA would add session-hour fees)
    Total monthly budget:        $800/mo (cap; substrate-independent; hard stop at exhaustion)

    RATIONALE (from Manager-Agent's gap-detection ledger)
    ------------------------------------------------------
    - 47 contract-related emails over the last 3 weeks (Sig 1: low confidence)
    - 8 escalations to human board over the same period (Sig 2)
    - Skill "contract_interpretation" returns empty on agent-configurations (Sig 3)
    - All three gap-detection signals fire on the same category within window.

    DECISION REQUIRED
    -----------------
    [ APPROVE ] [ REQUEST CHANGES ] [ DECLINE ]
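The budget lines in the proposal above reduce to simple arithmetic, worked here so the board can see the headroom between forecast and cap. The figures are the ones in the proposal. The variable names are illustrative, and the last line assumes every issue costs exactly the average, which real traffic will not.

```python
issues_per_month = 160        # forecast volume from the proposal
avg_cost_per_issue = 3.00     # "~$3 avg" token cost per issue
runtime_overhead = 0.00       # Paperclip-native adapters add no runtime fee
monthly_cap = 800.00          # spend_max; hard stop at exhaustion

forecast = issues_per_month * avg_cost_per_issue + runtime_overhead
headroom = monthly_cap - forecast
# How many average-cost issues fit under the cap before the hard stop.
issues_until_hard_stop = int(monthly_cap // avg_cost_per_issue)

print(forecast)                # 480.0
print(headroom)                # 320.0
print(issues_until_hard_stop)  # 266
```

The $320 of headroom is what absorbs a busier-than-forecast month or a run of unusually long contracts before the cap halts the Worker.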

The board has three buttons. Approve hires the Worker as proposed. Request Changes opens a comment thread on the approval (using POST /api/approvals/{approvalId}/comments). Typical comments are "tighten the envelope," "lower the budget," "swap the substrate to claude_local for a lower-cost first cycle," "change reports_to to report directly to the board for the first month." Decline rejects the hire and records the board's reason in activity_log (Paperclip's own row here is approval.rejected; the curriculum also refers to this as a hire_declined event when talking about the hiring narrative specifically).

What happens at each terminal state:

- APPROVE: Paperclip transitions the agent from pending_approval to idle. The Worker now exists as an approved shell, eligible to receive heartbeats and issue assignments but not yet running. The agent_id returned by the original hire call now becomes a real Worker. The Manager-Agent is woken with PAPERCLIP_APPROVAL_ID set in the environment (per the Paperclip API reference). The Manager-Agent then fetches the resolved approval state via GET /api/approvals/{approvalId} and the linked issues via GET /api/approvals/{approvalId}/issues. It comments on the source issue (PAP-128) with a link to the new Worker's page, and routes the source issue (and the 23 related issues) to the new Worker. The Legal Specialist's first heartbeat fires within 5 minutes, at which point it transitions out of idle to do real work.
- REQUEST CHANGES: The hire stays in pending_approval (Paperclip's API reference uses revision_requested for this status). The Manager-Agent sees the comment, revises the payload (tightening the envelope, lowering the budget, etc.), and resubmits via POST /api/approvals/{approvalId}/resubmit. The same approval thread is reused; POST /api/approvals/{approvalId}/comments adds the revision rationale; the board sees the diff. Up to about 5 revision cycles is normal; beyond that, the approval is usually withdrawn and a fresh proposal drafted.
- DECLINE: The hire is closed. Paperclip writes an approval.rejected row to activity_log (the curriculum's hire_declined is the same event named for the hiring narrative) recording the board's reason. The Manager-Agent updates routing rules, usually by routing the relevant issue category to a "decline politely" template, or by reopening the issue with an explicit escalate-to-human flag. The gap is still recorded; the response was different.
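The three terminal states above can be read as a small state machine. The sketch below is one interpretation, not Paperclip's implementation: it tracks the agent's status and the approval's status separately, and the approval status names `pending`, `approved`, and `rejected` are assumptions (only `pending_approval`, `idle`, `revision_requested`, and the `approval.rejected` log row appear in the text). What Paperclip does with the agent record after a decline is not stated, so the sketch leaves it unchanged.

```python
def new_hire_approval() -> dict:
    """Initial state right after the hire call is submitted."""
    return {
        "agent_status": "pending_approval",
        "approval_status": "pending",     # assumed name
        "activity_log": [],
    }


def decide(state: dict, decision: str, reason: str = "") -> dict:
    """Apply one board decision and return the new state (input is not mutated)."""
    if state["approval_status"] != "pending":
        raise ValueError("board decisions apply only to a pending approval")
    new_state = {**state, "activity_log": list(state["activity_log"])}
    if decision == "APPROVE":
        new_state["approval_status"] = "approved"        # assumed name
        new_state["agent_status"] = "idle"               # approved shell, not yet running
    elif decision == "REQUEST CHANGES":
        new_state["approval_status"] = "revision_requested"
    elif decision == "DECLINE":
        new_state["approval_status"] = "rejected"        # assumed name
        new_state["activity_log"].append(
            {"event": "approval.rejected", "reason": reason}
        )
    else:
        raise ValueError(f"unknown decision: {decision}")
    return new_state


def resubmit(state: dict) -> dict:
    """Manager-Agent resubmits a revised payload on the same approval thread."""
    if state["approval_status"] != "revision_requested":
        raise ValueError("nothing to resubmit")
    return {**state, "approval_status": "pending"}


# One revision cycle, then approval.
state = decide(new_hire_approval(), "REQUEST CHANGES", "tighten the envelope")
state = decide(resubmit(state), "APPROVE")
print(state["agent_status"])  # idle
```

Returning a new dict on each transition keeps earlier states intact, which mirrors the way the approval thread preserves its history for the board.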

The full approval lifecycle is durable. Paperclip's underlying machinery is step.wait_for_event (the same Inngest primitive from Course Five), which means the approval thread can live for hours or days without consuming compute. Boards that need to think overnight, or pull a second board member into the discussion, can do so without the proposal expiring.

Paperclip's approval system already enforces an important constraint that I haven't named yet: the board member who approves a hire must have authority to grant the envelope being requested. What's the consequence of this? Confidence 1 to 5.

Consider: the company envelope grants refund_max=$5000. A Tier-1 Worker has refund_max=$50. The Manager-Agent proposes hiring a Legal Specialist with contract_interpret=allow, an authority not in the company envelope (because no Worker has ever had this authority before, the company envelope simply omits it). Who can approve the hire?

Answer: A board member with authority to modify the company envelope, not just authority to approve a hire. Hiring with novel authority is a two-step decision: extend the company envelope to allow this authority, then approve the hire. The Legal Specialist's contract_interpret=allow is novel authority (no existing Worker has it), so Paperclip flags it for the company-envelope-extension check. Concept 8 walks the envelope cascade and the audit shape for this in full. The principle in one line: approving a hire that introduces new authority is also approving the workforce's expanded surface area.
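The two-step rule in the answer can be sketched as a comparison between the proposed envelope and the company envelope. The function names are hypothetical, and the envelopes are trimmed to a few keys for illustration; the full company envelope is not listed in this section. This is a sketch of the principle, not of how Paperclip performs the check.

```python
def novel_authorities(company_envelope: dict, proposed_envelope: dict) -> list:
    """Authorities the hire requests that the company envelope omits entirely."""
    return sorted(k for k in proposed_envelope if k not in company_envelope)


def required_decisions(company_envelope: dict, proposed_envelope: dict) -> list:
    """Ordered decisions the board must make before the Worker exists."""
    steps = []
    if novel_authorities(company_envelope, proposed_envelope):
        steps.append("extend_company_envelope")
    steps.append("approve_hire")
    return steps


# Trimmed example: the company envelope has never granted contract_interpret.
company = {"refund_max": 5000, "pii_access": "audited"}
legal_specialist = {
    "refund_max": 0,
    "pii_access": "audited",
    "contract_interpret": "allow",
}

print(novel_authorities(company, legal_specialist))   # ['contract_interpret']
print(required_decisions(company, legal_specialist))  # ['extend_company_envelope', 'approve_hire']
```

A hire that stays within existing authorities yields only `approve_hire`, which is why a board member with hire-approval authority alone is sufficient in that case and insufficient in this one.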