The PRIMM Framework

James is new to programming. He has spent his career in a non-technical role, but his company is shifting toward AI-driven workflows and he needs to understand code, not just use tools that generate it. His mentor is Emma, a senior engineer who has spent years building backend systems and has a reputation for turning confused beginners into confident developers.

On his first day of learning, Emma shows James an AI coding assistant. She types a prompt, and fifty lines of Python appear in ten seconds. A program that reads data, checks whether it is correct, cleans it up, and gives back the result -- all working, all ready to run.

"That's amazing," James says. "So the AI writes the code and I just use it?"

Emma points at line twelve. "What does that line do?"

James stares at it. He recognizes some of the words -- for, in, if -- but cannot explain what the line accomplishes. The AI generated fifty lines of working code and he understands none of them.

"Speed means nothing without comprehension," Emma says. "If you cannot read the code your AI produces, you cannot verify it, debug it, or adapt it. You are not programming. You are copying."

"Why not just ask AI to explain code I don't understand?" James asks.

"Try it," Emma says. James asks AI to explain a line of code. It gives a correct explanation. Then Emma asks him to predict what happens if one condition changes. He cannot answer. Reading an explanation is not the same as understanding the code.

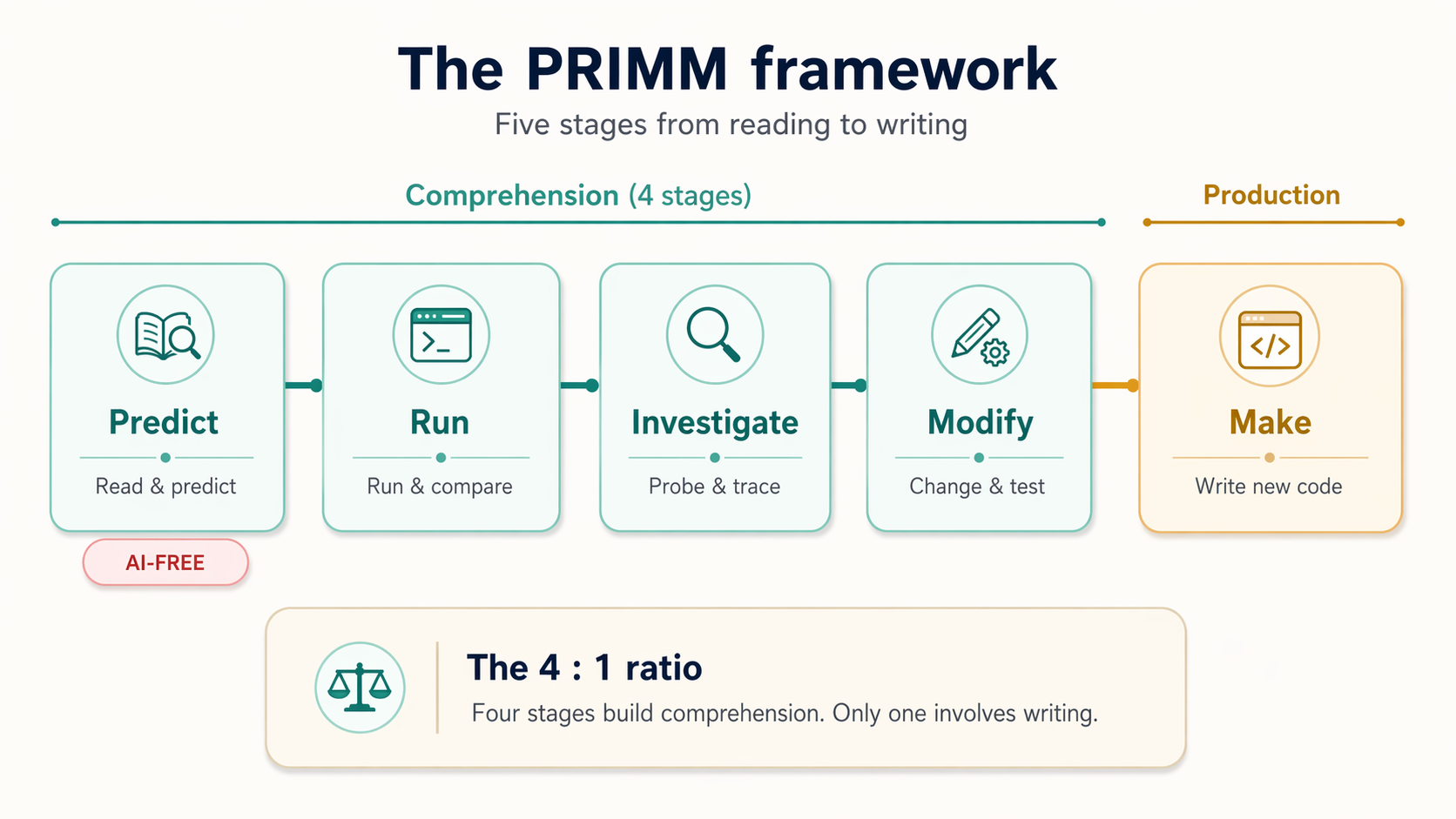

This lesson introduces the framework that solves this problem: PRIMM -- Predict, Run, Investigate, Modify, Make. It was created by computing education researchers Sue Sentance and Jane Waite in 2017 and tested with 493 students across 13 schools in England. The result: students who learned with PRIMM outperformed students who learned without it. The core idea is simple: you learn programming the same way you learn a spoken language. You read it before you write it. Since 2017, PRIMM has been adopted in schools across multiple countries.

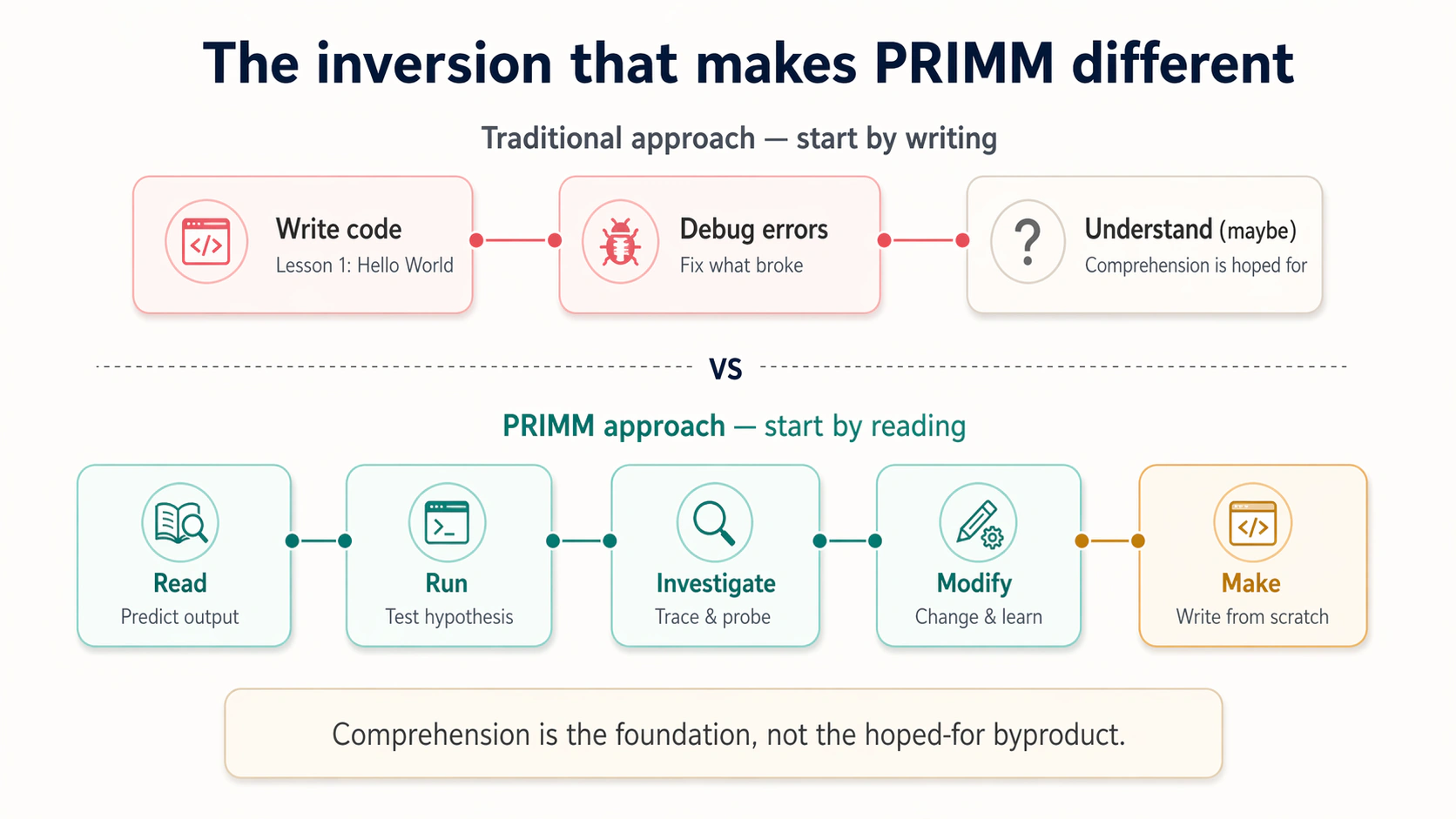

Most programming courses start at the end: write a Hello World program, write a program that adds numbers. PRIMM inverts this. It starts with reading and works toward writing. The five stages are:

- Predict -- Read code and predict what it will do before running it

- Run -- Execute the code and compare the actual output to your prediction

- Investigate -- Probe the code: trace variables, test edge cases, ask questions

- Modify -- Change the code to alter its behavior in targeted ways

- Make -- Write a new program that applies what you learned

The Five Stages in Action

Emma pulls up a short Python program on her screen. "Let me show you what this looks like in practice. I'll walk you through all five stages with one program, and you'll do the thinking, not me."

About the code below: You have not learned Python yet. That is the point. You are seeing what the PRIMM process looks like with real code. Focus on the process, not the syntax. When you encounter Python in Chapter 45, you will already know how to approach it.

Here are four things you need to know to follow this lesson. A variable is a named box that stores a value: name = "Sarah" creates a box called name and puts the text Sarah inside it. Text inside quotes (like "Sarah") is called a string. The + sign joins strings together end-to-end. And print() displays the result on your screen. You will learn all of this properly in later chapters. Right now, we are using simple code just to show you how the PRIMM-AI+ method works.
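To see those four ideas working together, here is a minimal sketch. The names city, label, and line and their values are made up for illustration; they are not part of the program you will predict in Stage 1.

```python
# A variable is a named box. The text in quotes is a string.
city = "Lagos"
label = "City: "

# The + sign joins the two strings end-to-end, in the order written.
line = label + city

# print() displays the joined text on screen.
print(line)
```

Notice that the space after the colon is inside the quotes. The + sign adds nothing of its own.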

Stage 1: Predict [AI-FREE]

Read the following program. Do not run it. Do not ask your AI assistant. Stop and predict what it will print.

name = "Sarah"

greeting = "Welcome"

message = greeting + ", " + name + "!"

print(message)

Write down your prediction. Be specific: what exact text will appear on screen?

Stuck? Here is a plain-English walkthrough.

Read the program line by line, top to bottom:

- name = "Sarah" stores the text Sarah in a box called name.

- greeting = "Welcome" stores the text Welcome in a box called greeting.

- message = greeting + ", " + name + "!" glues four pieces of text together in order: whatever is in greeting, then a comma and a space, then whatever is in name, then an exclamation mark. The + sign joins text end-to-end.

- print(message) displays the final joined text on screen.

Now that you know what each line does, write down the exact output you expect.

Rate your confidence from 1 (complete guess) to 5 (certain). Write that down too. After you run the code, you will compare your confidence to what actually happened. Over time, this teaches you to know when you really understand something versus when you are guessing.

Do not scroll past this point until you have both a prediction and a confidence score.

Stage 2: Run

James writes his prediction on a sticky note: Welcome, Sarah! with a confidence score of 4. He is fairly sure, but the comma placement makes him hesitate. Time to find out.

Here is the actual output:

Output:

Welcome, Sarah!

Compare your prediction to the actual output. Check each detail: the comma, the space, the exclamation mark.

Got it wrong? Good. That tells you exactly which part you misunderstood. Figure out why, and you just learned more than someone who guessed right.

Now compare your confidence score to your result:

| Your Confidence | Prediction Right | Prediction Wrong |
|---|---|---|
| High (4-5) | You understand it | You thought you understood it. Find the gap. |
| Medium (3) | Solid, but test yourself again | Normal. Find the specific part you misread. |
| Low (1-2) | You know more than you think | Expected. The prediction still showed you something. |
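If it helps to see the table as logic, here is one possible sketch. The function name calibration_feedback is hypothetical, and the code uses Python features (functions, if/elif, dictionaries) that come much later, so treat it as something to read rather than something to write.

```python
# Hypothetical helper: maps a 1-5 confidence score and a right/wrong
# result to the matching cell of the confidence table.
def calibration_feedback(confidence, prediction_right):
    if confidence not in (1, 2, 3, 4, 5):
        raise ValueError("confidence must be a whole number from 1 to 5")

    # Pick the row of the table.
    if confidence >= 4:
        band = "high"
    elif confidence == 3:
        band = "medium"
    else:
        band = "low"

    # Pick the column (right or wrong) within that row.
    feedback = {
        ("high", True): "You understand it",
        ("high", False): "You thought you understood it. Find the gap.",
        ("medium", True): "Solid, but test yourself again",
        ("medium", False): "Normal. Find the specific part you misread.",
        ("low", True): "You know more than you think",
        ("low", False): "Expected. The prediction still showed you something.",
    }
    return feedback[(band, prediction_right)]


# James rated himself 4 and predicted correctly.
print(calibration_feedback(4, True))
```

The confidence score chooses the row and the result chooses the column, which is exactly how you read the table by hand.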

Stage 3: Investigate

James got the output right. "I predicted it correctly. I understand this. Let's move on."

"Not so fast," Emma says. "What if name were empty?"

James pauses. He changes name = "Sarah" to name = "" and traces through the code:

| Variable | Value |
|---|---|
| name | "" (empty) |
| greeting | "Welcome" |
| message | "Welcome" + ", " + "" + "!" |
| Output | Welcome, ! |

The comma and exclamation mark still show up, but there is no name between them. The + operator does not know what makes sense. It glues text together exactly as you write it.
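Here is James's experiment as a runnable sketch. It is the same program with exactly one change, so you can confirm the trace yourself.

```python
name = ""  # the one change: an empty string instead of "Sarah"
greeting = "Welcome"
message = greeting + ", " + name + "!"
print(message)  # prints: Welcome, !
```

Nothing fails and Python reports no error. The program is still valid; it is only the result that looks odd.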

"Getting the right answer is not the same as understanding the machinery," James says. That is what Investigation is for: changing one thing at a time and watching what happens.

This is where your AI coding assistant becomes genuinely useful, not to generate code, but to answer questions about code you are reading:

I am reading a Python program with these lines:

name = "Sarah"

greeting = "Welcome"

message = greeting + ", " + name + "!"

print(message)

Question: What would happen if I swapped the order and wrote

name + ", " + greeting instead of greeting + ", " + name?

What would the output look like?

Your AI assistant will explain that the output would become Sarah, Welcome! because + joins text in the order you write it. But here is the critical rule of investigation:

Verify every AI explanation by running the code yourself. The AI might be wrong. It might be right but imprecise. The only way to know is to run the experiment. Investigation is not about getting answers -- it is about building the habit of questioning and verifying.
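One way to run that check, assuming the swap is made on the message line and everything else stays the same:

```python
name = "Sarah"
greeting = "Welcome"

# Swapped order: name comes first, then greeting.
message = name + ", " + greeting + "!"

# The claim to verify: this should print Sarah, Welcome!
print(message)
```

If the output matches what the AI said, you have confirmed the explanation. If it does not, you have found something worth investigating.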

Stage 4: Modify

"Now change something," Emma says. "Don't ask AI. Don't ask me. Open the code and change it yourself."

James hesitates. "What if I break it?"

"Then you'll learn more than if you hadn't tried."

Modification requires understanding where to change code and what the change will do. Each task below demands a little more comprehension than the last.

Change the name. Replace "Sarah" with your own name. Predict what the output will be, then run it. This is the simplest modification -- you change one value and the rest follows.

Change the greeting. Replace "Welcome" with "Hello". Predict the new output. Now you are changing a different piece and watching how it flows through to the final message.

Add a second line of output. After the existing print(message), add a new line that prints just the name by itself. You need to figure out where to place the new line and what to write.

Each modification is small, but each one forces you to understand a different aspect of the program. You cannot change the greeting without understanding which variable feeds into message. You cannot add a second print without understanding the order in which lines execute.
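Try the tasks yourself first. When you want something to compare against, here is one possible result with the second and third modifications applied. The name is left as Sarah because the first task asks for your own.

```python
name = "Sarah"
greeting = "Hello"  # modification 2: a different greeting
message = greeting + ", " + name + "!"
print(message)
print(name)  # modification 3: runs after the first print, so it appears second
```

Predict both lines of output before you run it.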

Stage 5: Make

James managed all three modifications. Now he is ready to build something on his own. The goal: a project badge that prints Sarah - Team Lead.

- Describe what you want to build. Write one sentence before you touch code: "Given a name and a role, print them on one line separated by a dash."

- Try it yourself. Use what you learned from the greeting program. You need two variables, one combined variable, and a print statement. If something breaks (like using - instead of +), that mistake teaches you more than getting it right on the first try.

- Ask AI for specific help when stuck. Good: "How do I join two strings with a dash in Python?" Bad: "Write me a badge program."

- Predict before you run. Before executing your new program, write down what you expect. Then run it and compare. You are now using the same Predict-Run cycle on your own code.
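Attempt the badge on your own before reading further. The sketch below shows one possible version, including the mistake mentioned in the steps above. The variable names role and badge are assumptions, and try/except is a later-chapter feature used here only so the program keeps running after the error.

```python
name = "Sarah"
role = "Team Lead"

# The mistake: using - between two strings. Python cannot subtract text,
# so it raises a TypeError instead of producing a badge.
try:
    badge = name - role
except TypeError as error:
    print("Python refused:", error)

# One possible fix: join with + and put the dash inside its own string.
badge = name + " - " + role
print(badge)
```

The dash in the final output is ordinary text inside quotes. The only operator doing any work is +.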

The Make stage completes the cycle. You started by reading someone else's code. You end by writing your own. Every stage in between built the comprehension that makes writing possible.

Why This Matters in the AI Era

James just completed his first full PRIMM cycle on a four-line program. It took twenty minutes. He could have asked AI to write the same program in ten seconds. "Is this really worth the time?" he asks.

Emma scrolls back to the fifty lines of AI-generated code from the beginning of the lesson. James looks at line twelve. He still cannot explain it. Then he looks at the greeting program, four lines he traced, modified, and rebuilt from scratch. He knows every line.

"Twenty minutes on four lines, and I actually understand them," he says. "Ten seconds on fifty lines, and I understand nothing. Yeah. It's worth the time."

AI can generate code in seconds, but it cannot give you understanding. Four of five PRIMM stages (Predict, Run, Investigate, Modify) build comprehension. Only the last one (Make) involves writing from scratch. You are starting with the skill that matters most in the AI era: the ability to read code and know whether it is correct.

Try With AI

Prompt 1: Explore the Prediction Process

I am learning the PRIMM framework for reading code. Here is a short

Python program:

first = "Agent"

second = "Factory"

result = first + " " + second

print(result)

Before you tell me the answer, ask me what I think the output will be.

After I give my prediction, show me the actual output and explain

any differences. Then ask me one investigation question about the code.

What you are learning: The Predict-Run-Investigate cycle with AI as a structured learning partner. The prompt asks AI to quiz you rather than give you answers -- this keeps you in the active learning role that PRIMM requires.

Prompt 2: Investigate an Edge Case

I am practicing the Investigate stage of PRIMM. Here is a program:

name = "Sarah"

greeting = "Welcome"

message = greeting + ", " + name + "!"

print(message)

I want to investigate what happens when things change. Walk me through

these scenarios one at a time, asking me to predict before revealing

the answer each time:

1. What if name is an empty string ""?

2. What if I swap greeting and name in the message line?

3. What if I write Name (capital N) instead of name on the message line?

For each one, explain WHY the output is what it is.

What you are learning: Systematic investigation through edge-case exploration. Each scenario tests a different assumption about how the code works, building your mental model through concrete experiments rather than abstract rules.
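To verify the AI's answer to scenario 3 yourself, here is a minimal sketch. It assumes only the message line is changed. The try/except wrapper is not part of the original program; it is there so you can see the error as a single printed line.

```python
name = "Sarah"
greeting = "Welcome"

try:
    # Name (capital N) is a different box from name, and nothing
    # has been stored in it.
    message = greeting + ", " + Name + "!"
except NameError as error:
    message = None
    print("NameError:", error)
```

Python treats uppercase and lowercase letters as different, so Name and name are two separate variables.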

Prompt 3: Predict a New Program

I am learning the PRIMM framework. Generate a short Python program

(4-6 lines) that stores a city name and a temperature, then prints

a weather report. Do not use type hints. Do not explain the code.

After I write my prediction, show me the actual output and explain

any differences. Then ask me two investigation questions.

What you are learning: Applying the Predict-Run-Investigate cycle to a program you have never seen before. This tests whether you can transfer the skills from the greeting program to a new context with different variables.

James leans back from his screen. "Five stages. Predict, Run, Investigate, Modify, Make. Four of them are about understanding. Only the last one is about writing." He pauses. "That's like onboarding a new warehouse team. You don't hand someone a forklift on day one. You walk the floor with them, let them observe the flow, quiz them on safety protocols, have them shadow a veteran. The actual driving comes last."

"And 493 students across 13 schools confirmed that sequence works," Emma says. "Not a theory. Tested."

"The confidence scoring is what gets me, though. Forcing yourself to commit to a number before you see the answer." He taps his notebook. "I rated myself a 4 on that greeting program. Got it right, but only because it was simple. I bet my calibration falls apart on anything harder."

"Mine did, for years." Emma looks at her screen for a moment. "Early in my career I reviewed a pagination function, told the team it looked correct, moved on. It dropped every eleventh result. I was confident. I was wrong. That's why I trust the predict-first habit now."

"So I've got the method," James says. "But I did this whole lesson without AI. I have a coding assistant sitting right there. When do I actually get to use it?"

Emma smiles. "Next lesson. You'll learn exactly when to bring AI in, when to keep it out, and how to tell whether it's helping you learn or just doing your thinking for you. Same five stages, but with boundaries, checkpoints, and rules for your AI partner."