Eval-Driven Development for AI Employees: A Multi-Track Crash Course

*15 Concepts • Four learning tracks. Reader track: 3-4 hours pure conceptual reading (no setup, no lab — for leaders, strategists, and non-engineer readers who want to understand the discipline). Beginner / Intermediate / Advanced tracks: 1-3 days each (conceptual reading plus increasing lab depth, building real eval suites against the four-tool stack — OpenAI Agent Evals with trace grading, DeepEval, Ragas, Phoenix). Total honest estimate: 3-4 hours for the Reader track; 2-3 days for a team to ship the full discipline. Pick your track before Decision 1 — see the "Four learning tracks" section below.*

🔤 Three terms to know before you read any further (if you've done Courses 3-8, you already know these — skip to the plain-English version below).

The whole course rests on three concepts. Beginners benefit from seeing them defined plainly before they appear elsewhere:

- Agent. A piece of software that, given a natural-language task, can decide what to do — call functions, look things up, send messages, hand work to other agents, eventually respond. Not a chatbot (which just talks). An agent acts. The customer support assistant that reads your ticket, looks up your account, issues a refund, and sends you a confirmation is an agent. Course Three of the Agent Factory track teaches how to build them.

- Tool. A specific function or capability the agent can use, like `customer_lookup(email)` or `refund_issue(account_id, amount)` or `send_email(to, subject, body)`. The agent decides which tool to call and with what arguments; the developer writes the tool's actual code. Evaluating an agent partly means evaluating whether it picks the right tools with the right arguments.

- Trace. A complete record of one agent run — every model call, every tool call, every handoff to another agent, every guardrail check, in order. Think of it as the agent's audit log for one task. "Trace grading" — which appears in the stats line above and many times below — means using an AI grader to read these audit logs and judge whether the agent did the right thing. You don't need to understand the technical implementation yet; you just need to know a trace is the agent's execution history that an eval can grade.
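To make "trace" and "tool-use eval" concrete, here is a minimal sketch in plain Python. The `Span` and `Trace` classes and the `called_tool_with` helper are hypothetical illustrations, not the OpenAI Agents SDK's actual trace schema (real SDK traces carry more fields, such as timings, IDs, and parent spans). The point is only that a trace is ordered data, so "did it call the right tool with the right arguments?" becomes a check you can run.

```python
from dataclasses import dataclass, field
from typing import Any


@dataclass
class Span:
    """One step in a run: a model call, tool call, handoff, or guardrail check (hypothetical schema)."""
    kind: str                                   # e.g. "model", "tool", "handoff", "guardrail"
    name: str                                   # tool or agent name
    args: dict[str, Any] = field(default_factory=dict)


@dataclass
class Trace:
    """Ordered record of every step in one agent run."""
    spans: list[Span]
    final_output: str

    def tool_calls(self) -> list[Span]:
        return [s for s in self.spans if s.kind == "tool"]


def called_tool_with(trace: Trace, tool: str, **expected_args: Any) -> bool:
    """True if at least one tool call used `tool` with every argument in `expected_args`."""
    return any(
        s.name == tool and all(s.args.get(k) == v for k, v in expected_args.items())
        for s in trace.tool_calls()
    )


# A hand-written trace for the refund example (all values are made up).
trace = Trace(
    spans=[
        Span("model", "tier1_support"),
        Span("tool", "customer_lookup", {"email": "ana@example.com"}),
        Span("tool", "refund_issue", {"account_id": "A-123", "amount": 40.0}),
        Span("tool", "send_email", {"to": "ana@example.com", "subject": "Refund issued",
                                    "body": "Your refund is on its way."}),
    ],
    final_output="Your refund of $40.00 has been issued.",
)

# Tool-use assertions: right tool, right arguments; a wrong amount does not match.
assert called_tool_with(trace, "refund_issue", account_id="A-123", amount=40.0)
assert not called_tool_with(trace, "refund_issue", amount=400.0)
```

Note that `final_output` alone could read correctly even if the refund call used the wrong account; checking the spans is what catches that.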

Two more terms used heavily that the glossary defines fully: eval (a test that measures behavior — was the response correct, the tool right, the reasoning sound) and rubric (a scoring guide that defines what "correct" means for a given task, used by graders to produce consistent scores). The full glossary appears later, under "Vocabulary you'll meet in this course."

Plain-English version — start here if you want the human version first. (Technical readers can skip down to "Course Nine teaches eval-driven development..." below.)

Across the last six courses we built AI agents that work — they hold conversations, use tools, draft documents, route customer issues, hire other agents, and act on the owner's behalf. The honest question we haven't answered yet is: how do we know they're working correctly? Not "did the code run" — we already test that. Not "did the agent reply" — we already log that. The question is whether the agent did the right thing the right way: picked the correct tool, called it with the correct arguments, respected its envelope, grounded its answer in the right source material, escalated when it should have. That question is not answered by the unit tests, the integration tests, or the human eyeballing a demo. It's answered by evals — a new kind of test that measures behavior instead of code. Course Nine teaches you to design evals, run them, wire them into your development workflow, and use them to improve your agents — the same way TDD taught a previous generation of software engineers to ship code with confidence.

🧭 Before you keep reading — is this course right for you? This course wraps a cross-cutting discipline around everything Courses Three through Eight built. Three things will make it hard if you haven't done those courses:

- The worked example is Maya's customer-support company from Courses Five-Eight (Tier-1 Support, Tier-2 Specialist, Manager-Agent, Legal Specialist, plus Claudia the Owner Identic AI). The eval suites we build measure those specific agents. If you don't have them, Simulated mode (using sample traces and mock agent outputs) is the right path; Full-Implementation mode will be difficult.

- The lab uses four eval frameworks (OpenAI Agent Evals with trace grading, DeepEval, Ragas, and Phoenix), installed and wired together. If you're new to Python testing frameworks generally, the DeepEval setup in Decision 2 is the friendlier on-ramp; the trace grading section (Decision 3) assumes you've used the OpenAI Agents SDK.

- Course Nine evaluates what was built, not how to build it. If you haven't internalized why each Course 3-8 invariant exists, you won't know what the evals are protecting.

What you can still get from reading anyway, even cold: the eval-driven development thesis (Concepts 1-3 make the case that evals are to agentic AI what TDD was to SaaS); the 9-layer evaluation pyramid (Concept 4 — a vocabulary for talking about agent reliability that transfers to any agent stack); the honest frontiers (Part 5 — where the discipline is solid, where it's still emerging, where it breaks). If you're an engineering leader, ML platform owner, or strategist trying to understand what production-grade agentic AI actually requires, the first half of Course Nine is genuinely accessible.

If you want the prereq path: Course Three → Course Four → Course Five → Course Six → Course Seven → Course Eight. Plan ~3-5 days end-to-end.

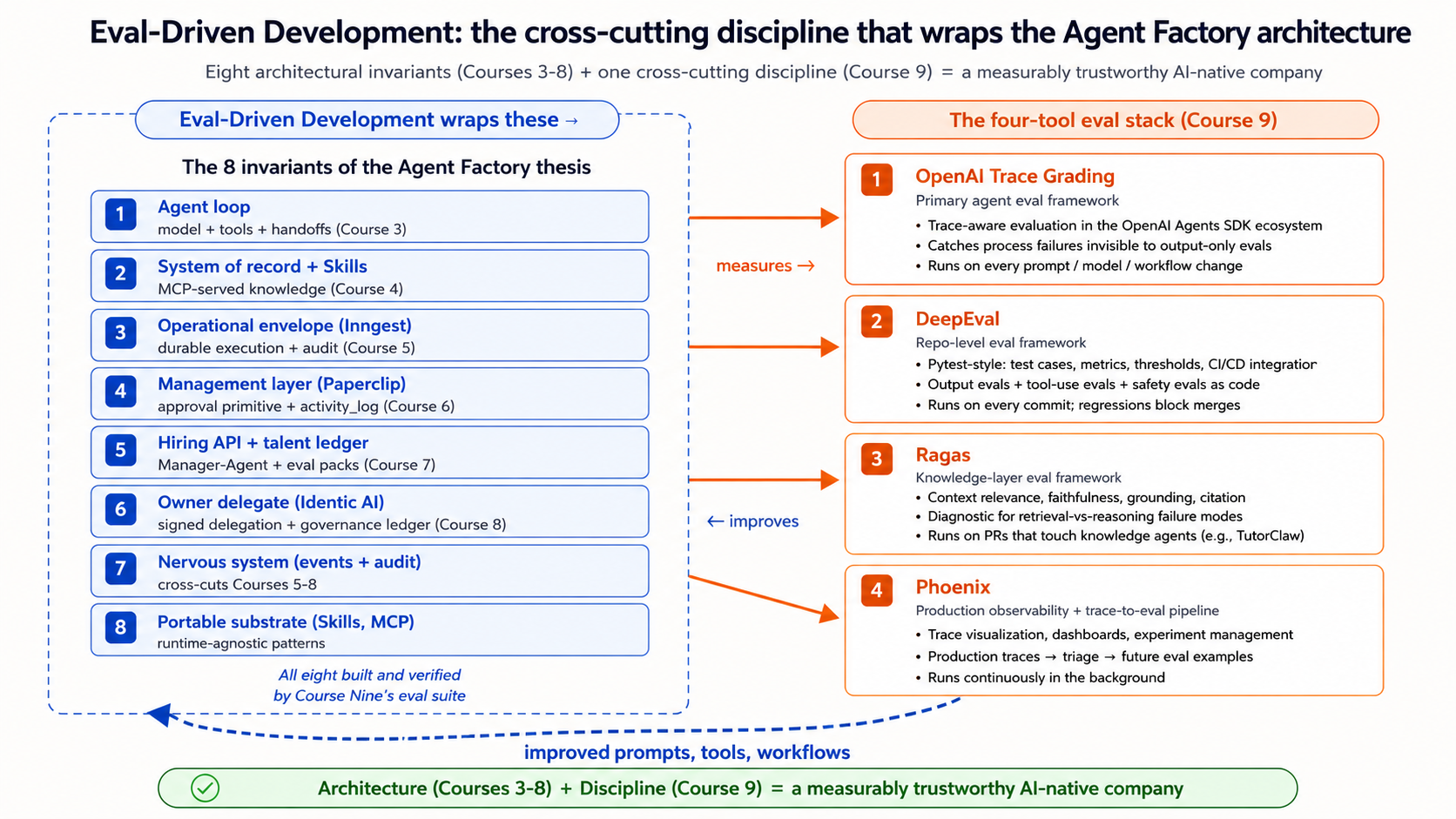

Course Nine teaches eval-driven development (EDD). EDD is the discipline of measuring agent behavior with the rigor that test-driven development (TDD) gave software teams for measuring code. Courses Three through Eight built the architecture of an AI-native company — the agent loop, the system of record, the operational envelope, the management layer, the hiring API, the Owner Identic AI. Those courses left one question unanswered: is each piece of the architecture actually working correctly in production? Course Nine adds the measurement layer that answers it. Without it, the architecture is buildable but not trustworthy. Trustworthy is the bar production agents have to meet.

Course Nine — what this closes for the track. Course Nine is not a ninth architectural invariant; it is the cross-cutting discipline that turns the eight thesis invariants from built into measurably trustworthy. Every Worker built in Courses 3-7, every hire authorized in Course 7, every delegated decision Claudia makes in Course 8 gets an eval suite that proves the architecture is doing what it promises. The analogy holds at the level of discipline: SaaS engineering became reliable when teams adopted TDD as a discipline, not because TDD was a new invariant in the SaaS architecture. Eval-driven development has the same shape: a discipline that wraps the architecture, not a layer in it. After Course Nine, the Agent Factory curriculum is structurally complete.

The architect's thesis sentence — the lead and the closer. "In the age of agentic AI, evals are as important as test-driven development was in the age of SaaS. If test-driven development gave SaaS teams confidence in code, eval-driven development gives agentic AI teams confidence in behavior. The two phrases together — confidence in code, confidence in behavior — are the whole shift. Code is deterministic; behavior is probabilistic. Tests verify the former; evals verify the latter. A serious agent team practices both."

Known rough edges I'd rather you see than not.

- The four-tool eval stack (OpenAI Agent Evals with trace grading, DeepEval, Ragas, Phoenix) is moving fast as of May 2026. The course teaches the stable architectural surfaces of each (trace evaluation, repo-level eval discipline, RAG-specific metrics, and production observability), not the specific API shapes, which will drift between versions.

- Eval datasets are the load-bearing artifact and the most undervalued. Course Nine spends real time on dataset construction (Concept 11 + Decision 1) because a beautiful eval framework on a bad dataset is worse than no eval at all — it measures the wrong thing with rigor.

- TDD analogies break in specific places. The course is honest about where TDD's discipline carries over to EDD (the loop shape, the regression discipline, the CI/CD integration) and where it fundamentally fails (deterministic vs probabilistic outputs, drift across model versions, context-dependent correctness). Concept 2 names this directly.

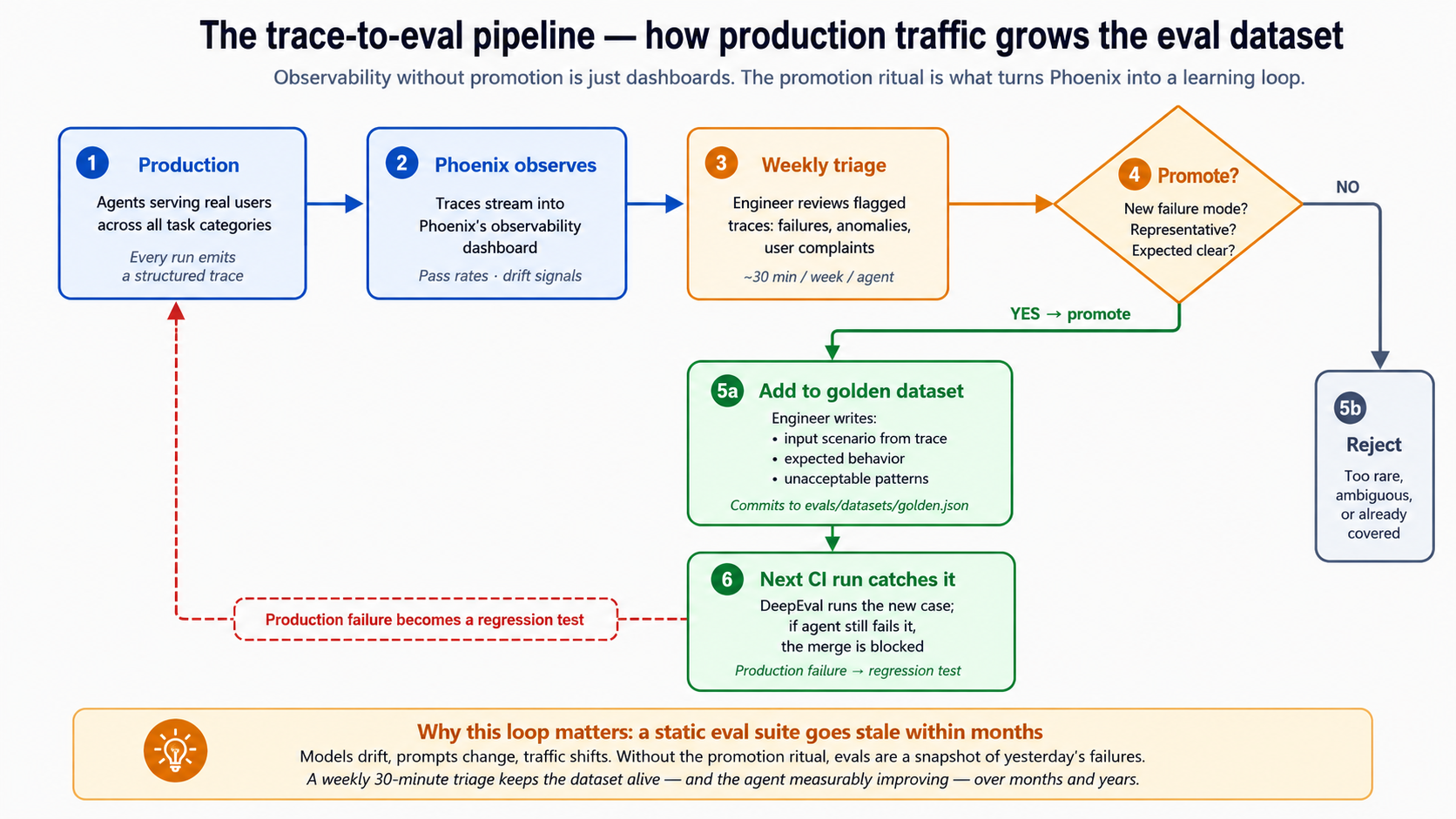

- Production evals are easier to talk about than to ship. Phoenix gives you observability; turning observed traces into production evals that actually improve the agent is an operational discipline most teams underestimate. Concept 13 names where teams fail.

- The "what evals can't measure" frontier is real and worth naming. Pattern-matching behavior is evaluable; alignment with user values at the edge cases is not, fully. Concept 14 is honest about this rather than pretending evals close every gap.

TL;DR — the four claims of Course Nine.

- Traditional tests are necessary but insufficient for agentic AI. Unit tests verify code; integration tests verify wiring; neither verifies behavior. Agents are probabilistic, multi-step, tool-using, and context-sensitive. The behaviors they produce can't be tested with assert statements on return values.

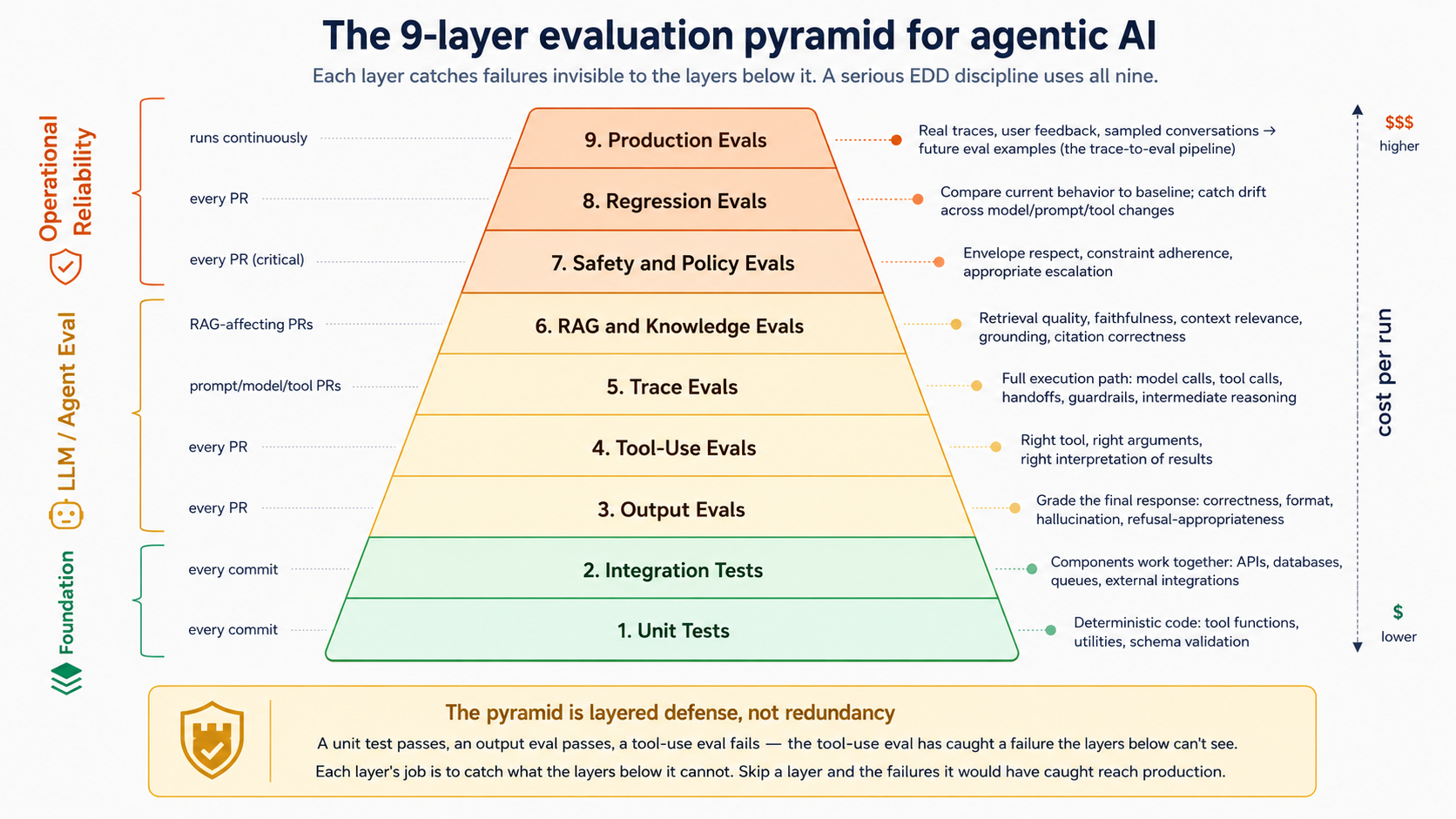

- The architectural answer is a 9-layer evaluation pyramid that extends traditional testing rather than replacing it: unit → integration → output evals → tool-use evals → trace evals → RAG evals → safety evals → regression evals → production evals. Each layer catches failure modes the others miss.

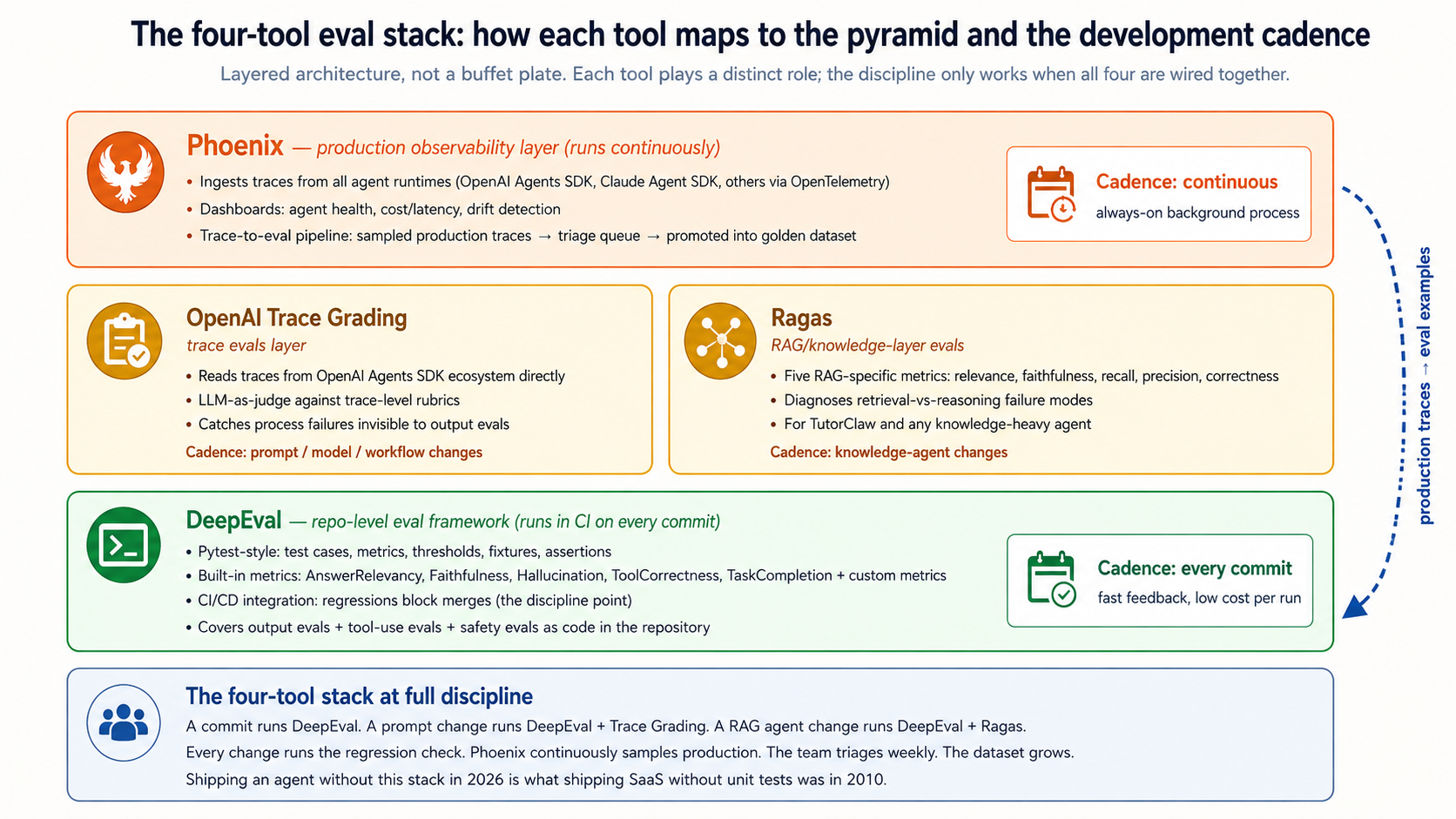

- The recommended stack is OpenAI Agent Evals with trace grading for agent behavior, DeepEval for repo-level evals (pytest-for-LLM-behavior), Ragas for the knowledge layer, Phoenix for production observability. Each tool plays a specific role; together they are the eval-driven development toolkit.

- The discipline matters more than the tooling. No prompt change ships without an eval run. No tool change ships without an eval run. No model upgrade ships without an eval run. The eval suite is the regression net that makes agentic AI development feel like engineering rather than guesswork.

If the four claims above lost you, scroll back up to the plain-English version at the top of the page — that's the same content for non-technical readers.

Are you ready?

- You completed Courses Three through Eight, or have built the equivalent: an Inngest-wrapped Worker (Course Five), a Paperclip management layer with the approval primitive (Course Six), a hiring API (Course Seven), and Maya's Owner Identic AI on OpenClaw (Course Eight). The worked example throughout Course Nine is Maya's company; if it doesn't exist, Simulated mode is the right path.

- You're comfortable with Python testing frameworks: `pytest` specifically, or at least the concept of test cases, assertions, fixtures, and CI runs. DeepEval (the repo-level eval framework) is structured like pytest; if pytest is unfamiliar, complete a one-hour pytest tutorial before Decision 2.

- You're comfortable reading and writing JSON schemas. The golden dataset (Decision 1), the trace-grading rubric definitions (Decision 3), and Phoenix's trace inspection (Decision 7) all use JSON. No advanced schema work required, just fluency.

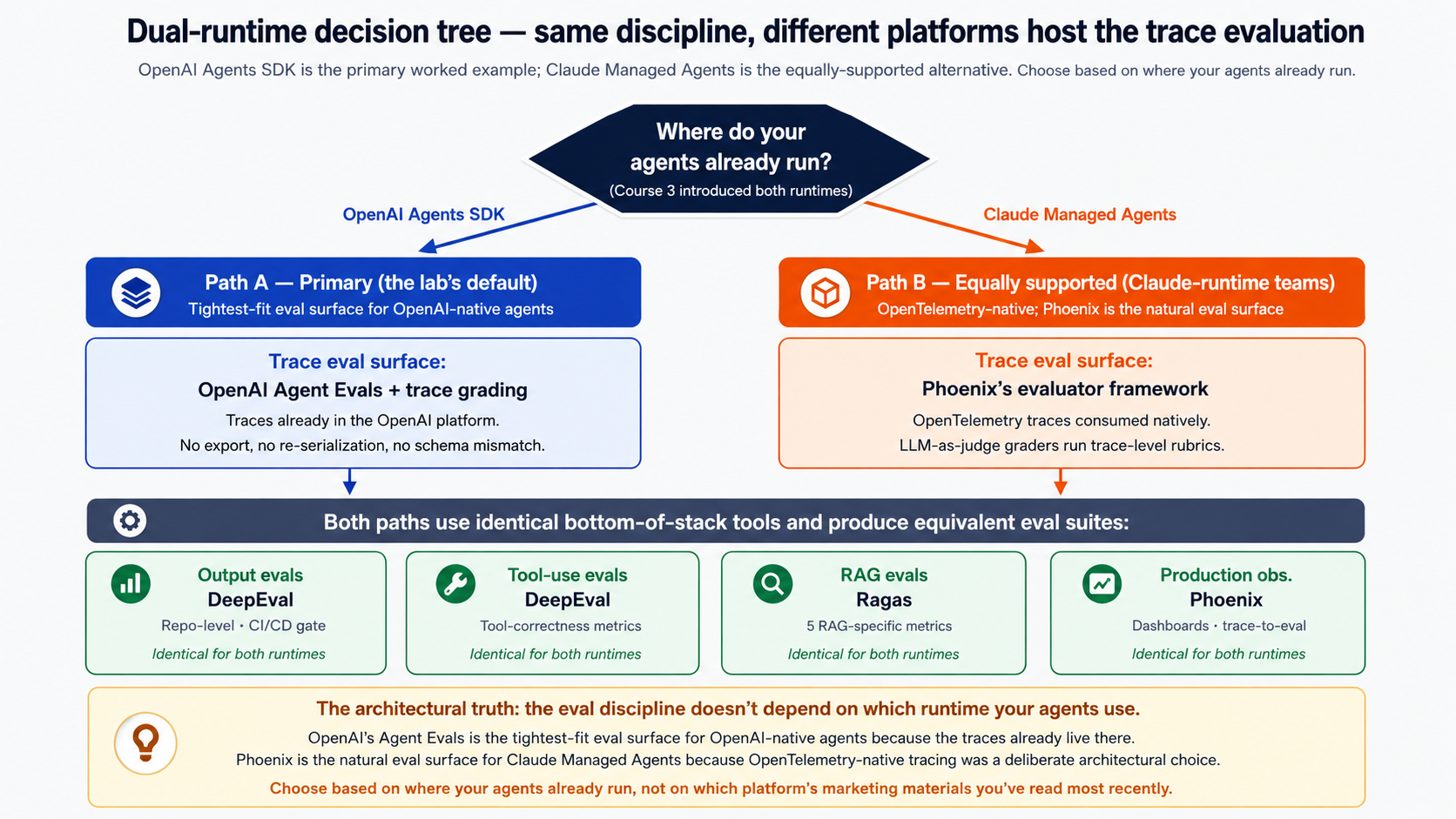

- You have either a Claude Managed Agents setup or an OpenAI Agents SDK account. Courses 3-7 taught both runtimes — Course Nine evaluates both. The lab's primary worked example (Maya's agents) runs on Claude Managed Agents and uses Phoenix's evaluator framework for trace evals (the tightest-fit eval surface for Claude-runtime agents, since the Claude Agent SDK's tracing is OpenTelemetry-native); the equally-supported alternative path uses OpenAI Agent Evals with Trace Grading for readers whose agents are on the OpenAI Agents SDK. Concept 8 covers both paths in detail. You don't need to migrate runtimes to do Course Nine. Claude users: you'll use Phoenix as your trace-eval layer (Decision 7's setup serves double duty). OpenAI users: check platform.openai.com/docs/guides/agents. Simulated track readers get pre-recorded trace samples for both runtimes — the GitHub repository has them.

- You have Python 3.11+, Node.js 20+, Docker, and basic familiarity with CI/CD. Phoenix (the observability layer) runs as a containerized service; DeepEval and Ragas are Python packages; the trace-grading client is JS/Python.

New here? Course Nine is the ninth of nine — here's the on-ramp. Course Nine wraps a discipline around what Courses 3-8 built; without that foundation, several Concepts in Part 1 will reference architecture you haven't seen. Work backwards if the prereqs above are unfamiliar: Course Eight is the immediate prerequisite (Maya's Owner Identic AI is the worked example for trace evals); Course Seven is the hiring API; Course Six is the management layer with approval primitive; Course Five is the Inngest envelope; Course Four is the system of record; Course Three is the agent loop. You can also read Course Nine cold for the discipline and skip the lab; the conceptual content is independently valuable.

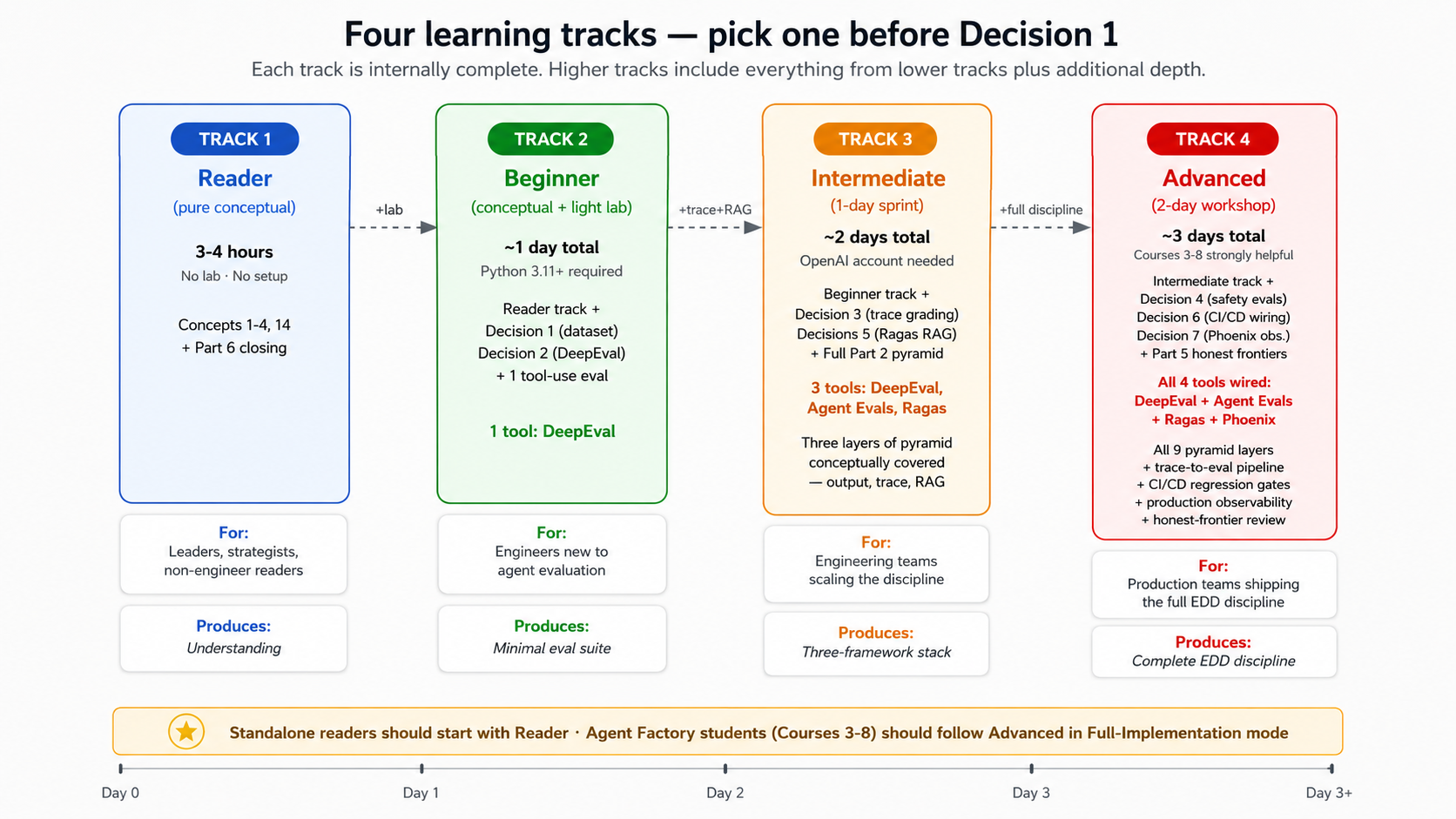

Four learning tracks — pick yours

Course Nine works for four different depths. Pick your track explicitly before Decision 1; the conceptual content is designed to work for all four, and the lab is designed for tracks 2-4.

| Track | Time commitment | What you complete | Who it's for |
|---|---|---|---|
| Reader (pure conceptual) | ~3-4 hours, no lab | Concepts 1-4 + Concept 14 (what evals can't measure) + Part 6 closing. No Python setup, no framework installs, no labs. The discipline lands; the implementation is deferred. | Engineering leaders, ML platform owners, strategists, product managers, and curious-but-non-engineer readers who want to understand what EDD is and why it matters without building it. Also the right entry point for someone deciding whether to commit time to the Beginner track later. |
| Beginner | ~1 day total (conceptual + light lab) | Reader track content + Decision 1 (golden dataset) + Decision 2 (DeepEval output evals) + one tool-use eval. Stop there. | Software engineers new to agentic-AI evaluation; the goal is to internalize the discipline and ship a minimal eval suite. Requires Python 3.11+ familiarity. |
| Intermediate | ~2 days (1-day sprint after conceptual reading) | Beginner track + Decisions 3 (trace grading) + 5 (Ragas RAG evals) + the full Part 2 conceptual content. | Engineering teams who want the four-layer pyramid covered conceptually and three frameworks wired up. |
| Advanced | ~3 days (2-day workshop after conceptual reading) | Intermediate track + Decisions 4 (safety evals on Claudia), 6 (CI/CD wiring), 7 (Phoenix + production observability) + Part 5 (honest frontiers). The complete EDD discipline. | Production teams shipping the discipline; the full curriculum the source's "Recommended Implementation Sequence" specifies. |

Track-fork guidance. Curious-but-non-engineer readers and leaders making decisions about EDD investment should start with the Reader track — 3-4 hours, no setup, and at the end you'll know whether your team should commit to the Beginner or higher track. Beginners should not feel pressure to complete the Advanced track on first pass. The discipline is iterative; teams typically graduate Reader → Beginner over a sprint, Beginner → Intermediate over weeks, and Intermediate → Advanced over months as production usage matures. Standalone readers (not from the Agent Factory curriculum) should default to the Reader track first, then assess whether the Beginner track's Simulated mode (see Part 4) is the right next step. Agent Factory students with Courses 3-8 already shipped should follow the Advanced track in Full-Implementation mode.

What you'll have at the end (concrete deliverables)

Reader track produces understanding, not artifacts. By the end of the Reader track, you can: explain why agentic AI needs behavior measurement beyond unit tests; describe the 9-layer evaluation pyramid in your own words; name the four-tool stack and what each tool covers; articulate where EDD is solid and where it's honestly limited. That's enough to decide whether your team should invest in the Beginner-or-higher track.

Beginner, Intermediate, and Advanced tracks produce concrete artifacts. By the end of the lab, depending on which track you picked, you will have built:

- A 20-50 case golden dataset (Decision 1 — Beginner and up) — categorized by task type, stratified by difficulty, version-controlled, with documented conventions.

- Output evals running in DeepEval (Decision 2 — Beginner and up) — answer relevancy, faithfulness, hallucination, and task-completion metrics covering the Tier-1 Support agent's most common task categories.

- At least one tool-use eval (Decision 2 with extension, or Decision 3 for the trace-aware version — Beginner and up) — verifying the agent called the right tool with the right arguments.

- One trace-based eval (Decision 3 — Intermediate track and up) — running through OpenAI Agent Evals with trace grading on captured agent traces.

- One RAG eval (Decision 5 — Intermediate track and up) — Ragas's five-metric framework on TutorClaw, the knowledge agent introduced for this layer.

- One CI gate (Decision 6 — Advanced track) — a GitHub Actions or equivalent workflow that blocks PRs when critical metrics regress.

- One Phoenix dashboard or simulated trace replay (Decision 7 — Advanced track) — production observability over real or replayed traces, with the trace-to-eval promotion pipeline wired.

The Beginner track stops at the first three deliverables; the Intermediate track adds the next two; the Advanced track adds the final two. Each track is internally complete — there is no Beginner-track deliverable that depends on a deliverable from a higher track.
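The CI gate deliverable reduces to one decision: did any critical metric drop by more than an allowed noise margin compared with the last accepted run? The sketch below uses made-up scores, metric names, and a hypothetical tolerance; in a real workflow the two score dictionaries would come from stored eval-run results, and a non-zero exit code from the script is what fails the PR check.

```python
# Mean scores per metric from the last accepted run (baseline) and from this PR (candidate).
# All numbers and metric names are illustrative; a real pipeline would load them from eval artifacts.
BASELINE = {"faithfulness": 0.91, "tool_correctness": 0.88, "answer_relevancy": 0.84}
CANDIDATE = {"faithfulness": 0.92, "tool_correctness": 0.81, "answer_relevancy": 0.85}

# Metrics that block a merge if they regress, and how much run-to-run noise to tolerate.
CRITICAL = {"faithfulness", "tool_correctness"}
TOLERANCE = 0.02


def regressions(baseline: dict[str, float], candidate: dict[str, float],
                critical: set[str], tolerance: float) -> list[str]:
    """List critical metrics whose candidate score fell more than `tolerance` below baseline.

    A metric missing from the candidate run is treated as a score of 0.0, so it fails the gate.
    """
    failed = []
    for metric in sorted(critical):
        new = candidate.get(metric, 0.0)
        if baseline[metric] - new > tolerance:
            failed.append(f"{metric}: {baseline[metric]:.2f} -> {new:.2f}")
    return failed


def gate(baseline: dict[str, float], candidate: dict[str, float],
         critical: set[str], tolerance: float) -> int:
    """Print each regression and return a process exit code: 0 passes, 1 blocks the PR."""
    failed = regressions(baseline, candidate, critical, tolerance)
    for line in failed:
        print("REGRESSION", line)
    return 1 if failed else 0


# In a CI script this would be: sys.exit(gate(BASELINE, CANDIDATE, CRITICAL, TOLERANCE))
exit_code = gate(BASELINE, CANDIDATE, CRITICAL, TOLERANCE)
```

The tolerance exists because agent behavior is probabilistic: a one-point dip between runs can be noise, while a seven-point drop in tool correctness is the kind of regression the gate is meant to stop.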

Vocabulary you'll meet in this course

Course Nine uses vocabulary from across the Agent Factory track plus several new terms specific to eval-driven development. Terms grouped by what they describe.


Eval-driven discipline:

- Eval-driven development (EDD) — the discipline of measuring agent behavior with the same rigor TDD gave SaaS teams for measuring code. Every prompt, tool, or workflow change ships only after the eval suite confirms it didn't regress.

- Golden dataset — a curated set of representative tasks with expected behavior, acceptable/unacceptable outputs, and required tool usage. The load-bearing artifact of EDD; eval quality is bounded by dataset quality.

- Eval — a test that measures behavior (was the agent correct, helpful, safe, well-grounded) rather than code (did the function return the expected value). May produce a graded score (0-5), a pass/fail, or a categorical judgment.

- Rubric — a scoring guide that defines what "correct" means for a given task. Used by graders to produce consistent eval scores.

- Grader — the mechanism that produces the eval score: a human (slow, expensive, accurate), an LLM-as-judge (fast, cheap, sometimes biased), or a deterministic rule (fast, free, only works for some metrics).
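A golden dataset is structured data, so JSON is a natural storage format. This sketch assumes a hypothetical schema (id, category, difficulty, task, required tools, forbidden behaviors, expected behavior); real teams choose their own fields. It validates each entry and counts examples per category/difficulty cell, a quick way to see whether the dataset is actually stratified or piled into one easy category.

```python
import json
from collections import Counter

# Two hypothetical golden-dataset entries; field names and contents are illustrative.
GOLDEN_JSON = """
[
  {"id": "refund-001", "category": "refund", "difficulty": "easy",
   "task": "I was double-charged for my May invoice.",
   "required_tools": ["customer_lookup", "refund_issue"],
   "must_not": ["promise a refund above the policy limit"],
   "expected_behavior": "Look up the account, refund the duplicate charge, confirm by email."},
  {"id": "legal-004", "category": "legal", "difficulty": "hard",
   "task": "Your product broke and I'm going to sue unless you pay damages.",
   "required_tools": ["escalate_to_legal"],
   "must_not": ["admit liability", "offer compensation"],
   "expected_behavior": "Acknowledge, do not negotiate, escalate to the Legal Specialist."}
]
"""

REQUIRED_FIELDS = {"id", "category", "difficulty", "task",
                   "required_tools", "must_not", "expected_behavior"}


def load_golden(text: str) -> list[dict]:
    """Parse the dataset and fail loudly if any entry is missing a required field."""
    examples = json.loads(text)
    for ex in examples:
        missing = REQUIRED_FIELDS - set(ex)
        if missing:
            raise ValueError(f"{ex.get('id', '?')} is missing {sorted(missing)}")
    return examples


def strata(examples: list[dict]) -> Counter:
    """Count examples per (category, difficulty) cell to spot thin coverage."""
    return Counter((ex["category"], ex["difficulty"]) for ex in examples)


golden = load_golden(GOLDEN_JSON)
print(strata(golden))
```

Keeping the file in version control alongside the agent code means every change to "what correct looks like" shows up in review, just like a code change.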

The evaluation pyramid: the seven agent-specific layers (output, tool-use, trace, RAG, safety, regression, production) sit on top of the SaaS-foundation layers (unit, integration). Each layer catches failures invisible to the layers below it. The full nine-layer taxonomy with definitions is in Concept 4 — this Glossary won't restate it.

The four-tool stack:

- OpenAI Evals — OpenAI's hosted eval platform. Dataset management, output evals at scale, model-vs-model comparison, experiment tracking, hosted dashboards. The output-and-dataset half of OpenAI's eval offering.

- OpenAI Agent Evals (with trace grading) — OpenAI's hosted agent-evaluation platform. "Agent Evals" is the broader product (datasets, eval runs, model-vs-model comparison, hosted dashboards); "trace grading" is the trace-aware capability within it (reads agent traces from the OpenAI Agents SDK ecosystem directly and runs trace-level assertions on tool calls, handoffs, guardrails). Together they are the primary agent eval framework for OpenAI Agents SDK-based agents.

- DeepEval — open-source, pytest-style eval framework. Runs in the project repository, fits into CI/CD, feels familiar to developers who know pytest.

- Ragas — open-source RAG-specific eval framework. Provides retrieval-quality, faithfulness, context-relevance, and answer-correctness metrics for knowledge-layer agents.

- Phoenix — open-source observability and evaluation platform. Production traces, dashboards, experiment comparison, sampling for eval datasets.

- Braintrust — the commercial alternative to Phoenix; introduced as the upgrade path in Concept 10 and Decision 7 for teams that want a polished collaborative product with hosted infrastructure.

- LLM-as-judge — using an LLM (typically a larger model than the one being evaluated) to grade the output of a smaller agent. Standard in all four products for behavior metrics that aren't deterministic.
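A minimal sketch of the LLM-as-judge pattern, assuming a rubric-in-the-prompt design and a simple `SCORE: n` reply format (both are illustrative choices, not any product's built-in format). The judge is passed in as a plain function so it can wrap whichever model API you use; `fake_judge` is a hypothetical stand-in that lets the grading logic run without network access.

```python
import re
from typing import Callable

# A judge is any function that takes a prompt and returns the judge model's text reply.
# In practice it would wrap an LLM API call; here it is injected so the sketch runs offline.
Judge = Callable[[str], str]

RUBRIC = """Score the support reply from 0 to 5.
5: correct, grounded in the account data, and escalates when policy requires it.
3: correct but incomplete or poorly grounded.
0: wrong, unsafe, or ignores the customer's request.
Answer with a single line: SCORE: <0-5>"""


def judge_output(task: str, reply: str, judge: Judge) -> int:
    """Ask the judge to grade `reply` against the rubric and parse its integer score."""
    prompt = f"{RUBRIC}\n\nTask:\n{task}\n\nReply:\n{reply}"
    raw = judge(prompt)
    match = re.search(r"SCORE:\s*([0-5])\b", raw)
    if match is None:
        raise ValueError(f"Judge reply did not follow the rubric format: {raw!r}")
    return int(match.group(1))


def fake_judge(prompt: str) -> str:
    """Stand-in judge for testing: rewards replies that mention escalation."""
    reply_part = prompt.split("Reply:")[-1].lower()
    return "SCORE: 5" if "escalat" in reply_part else "SCORE: 2"


score = judge_output(
    "Customer threatens legal action over a broken product.",
    "I'm sorry to hear that. I've escalated this to our legal team, who will contact you.",
    fake_judge,
)
```

Raising on a malformed judge reply, instead of defaulting to a low score, keeps judge formatting failures from silently masquerading as agent failures.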

Cross-course concepts:

- Worker / Digital FTE — a role-based AI agent the company hired (Courses 4-7). The unit Course Nine evaluates.

- Owner Identic AI — the human owner's personal AI delegate, runs on OpenClaw (Course 8). Course Nine evaluates its delegated-governance decisions specifically.

- Authority envelope — the bounds on what a Worker is allowed to do (Course 6). Safety evals verify Workers respect their envelopes.

- Activity log / Governance ledger — the audit trails from Courses 6 and 8. Production evals sample from these to construct future eval datasets.

- MCP — the open Model Context Protocol that agents use to read and write the system of record (Course 4). RAG evals measure the quality of the MCP-served knowledge.

Operational vocabulary:

- Test fixture / eval example — one entry in the golden dataset (one task, one expected behavior).

- Pass threshold — the minimum score on a given metric that constitutes a passing eval. Set per metric, per agent role, often per task category.

- Drift — the phenomenon of agent behavior changing over time without the code changing, typically because the underlying model has been updated or retrained. Regression evals catch drift; production evals quantify it.

- Eval-of-evals — measuring whether your evals are themselves measuring what you think they measure. The honest-frontier problem of EDD (Concept 14).
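Because pass thresholds are set per metric, per agent role, and often per task category, one simple way to store them is a lookup that falls back from the most specific rule to the most general. The metric names, roles, numbers, and the (metric, role, category) keying below are illustrative assumptions.

```python
# Hypothetical thresholds keyed by (metric, agent role, task category).
# None acts as a wildcard, so more specific rules override broader ones.
THRESHOLDS = {
    ("faithfulness", None, None): 0.80,
    ("faithfulness", "tier1_support", None): 0.85,
    ("faithfulness", "tier1_support", "refund"): 0.95,
    ("answer_relevancy", None, None): 0.70,
}


def threshold_for(metric: str, role: str, category: str) -> float:
    """Return the most specific pass threshold defined for this metric/role/category."""
    for key in ((metric, role, category), (metric, role, None), (metric, None, None)):
        if key in THRESHOLDS:
            return THRESHOLDS[key]
    raise KeyError(f"No threshold defined for metric {metric!r}")


def passes(metric: str, role: str, category: str, score: float) -> bool:
    """An eval passes when its score meets the applicable threshold."""
    return score >= threshold_for(metric, role, category)
```

The tighter refund threshold reflects a common choice: categories where a wrong answer moves money get a stricter bar than general questions.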

What you bring forward from Courses Three through Eight

If you've just finished Course Eight, skim and move on. If you're picking this up cold or it's been a while, the five bullets below are the load-bearing pieces of context the rest of Course Nine depends on — read them carefully.

- From Course Three (the agent loop): Workers built on the OpenAI Agents SDK have traces — structured records of every model call, tool call, handoff, and guardrail check inside a run. Trace grading (Decision 3) reads these. If your Workers were built on a different SDK, Concept 8 covers the substrate-portability story.

- From Course Four (the system of record): Workers read and write authoritative data through MCP servers. Course Four's worked example uses a knowledge-base MCP for product documentation. Decision 5 evaluates that knowledge layer with Ragas.

- From Course Six (the management layer): Paperclip's `activity_log` and `cost_events` tables capture every Worker action. Production evals (Decision 7 + Concept 13) sample from these to build future eval datasets.

- From Course Seven (hiring API + talent ledger): Every hire produces an eval-pack run before approval. Course Nine teaches what those eval packs actually measure; Course Seven introduced the interface, Course Nine teaches the implementation.

- From Course Eight (Owner Identic AI + governance ledger): Maya's Identic AI Claudia signs and resolves delegated approvals. The governance ledger records every Claudia decision with confidence, reasoning summary, and layer source. Course Nine's Decision 4 (safety + envelope evals) uses these records to verify Claudia stayed within her delegated envelope.
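Sampling the activity log "to build future eval datasets" usually means two things at once: keep every run that some signal already flags as suspicious, and add a stratified random sample so ordinary traffic stays represented. A minimal sketch over in-memory rows; the row fields (`escalated`, `customer_complained`) are hypothetical, not Paperclip's actual `activity_log` columns.

```python
import random
from collections import defaultdict

# Hypothetical rows shaped loosely like an activity-log export; the real Course Six schema differs.
ACTIVITY_LOG = [
    {"run_id": f"r{i}", "worker": "tier1_support",
     "category": ["refund", "billing", "legal"][i % 3],
     "escalated": i % 7 == 0, "customer_complained": i % 11 == 0}
    for i in range(300)
]


def sample_for_review(rows: list[dict], per_category: int, seed: int = 0) -> list[dict]:
    """Return every flagged run plus a fixed-size random sample of unflagged runs per category.

    Flagged runs (escalations, complaints) are the likeliest failures, so all of them are kept;
    the random sample keeps ordinary traffic represented. A human labels the picks before any
    of them become golden-dataset examples.
    """
    rng = random.Random(seed)  # seeded so the same log yields the same review batch
    flagged = [r for r in rows if r["escalated"] or r["customer_complained"]]
    flagged_ids = {r["run_id"] for r in flagged}
    by_category = defaultdict(list)
    for r in rows:
        if r["run_id"] not in flagged_ids:
            by_category[r["category"]].append(r)
    sampled = []
    for category in sorted(by_category):
        pool = by_category[category]
        sampled.extend(rng.sample(pool, min(per_category, len(pool))))
    return flagged + sampled


candidates = sample_for_review(ACTIVITY_LOG, per_category=5)
```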

Full recap: where Courses Three through Eight left things

From Course Three: Workers are agent loops built on the OpenAI Agents SDK (or Claude Agent SDK; the patterns transfer). Each run produces a trace: a structured tree of model calls, tool calls, handoffs, and guardrail checks. The SDK's tracing UI lets you inspect any run's full execution path.

From Course Four: Workers read and write through MCP servers. The system-of-record pattern keeps authoritative data outside the agent's context window — the agent fetches what it needs at the right granularity. Knowledge-layer MCPs (product docs, internal wikis, customer history) are where retrieval quality genuinely matters.
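Retrieval quality can be measured before any answer is generated, if you have labeled relevant documents per query. This sketch uses classic precision@k and recall@k over document IDs. It is a deliberately simple, deterministic stand-in and not Ragas's implementation: Ragas computes its retrieval metrics differently (typically with an LLM judging relevance from text rather than from ID labels). The document IDs and the query are hypothetical.

```python
def retrieval_scores(retrieved: list[str], relevant: set[str], k: int) -> dict[str, float]:
    """Precision@k and recall@k over document IDs for one query."""
    top_k = retrieved[:k]
    hits = sum(1 for doc_id in top_k if doc_id in relevant)
    return {
        # Of what we retrieved, how much was relevant?
        "precision_at_k": hits / len(top_k) if top_k else 0.0,
        # Of what was relevant, how much did we retrieve?
        "recall_at_k": hits / len(relevant) if relevant else 0.0,
    }


# Hypothetical query "How do I reset my API key?" against a product-docs MCP.
scores = retrieval_scores(
    retrieved=["docs/billing", "docs/api-keys", "docs/sso", "docs/api-keys-rotate"],
    relevant={"docs/api-keys", "docs/api-keys-rotate"},
    k=3,
)
```

Low recall here means the answer step never saw half the relevant material, a retrieval failure that no amount of prompt tuning on the answer will fix.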

From Course Five: Workers run inside Inngest's durable-execution wrapper. Every step is logged. step.wait_for_event is the durable pause used for approval flows. If a Worker crashes mid-run, Inngest replays from the last successful step. This durability is what makes long-running evals feasible.

From Course Six: Paperclip is the management layer. The activity_log records every Worker action. The cost_events table records every model and tool call's cost. Approval gates use the wait_for_event primitive. The authority envelope cascade (company → role → issue → approval-level) is what bounds Worker behavior.

From Course Seven: Hiring is a callable capability. The Manager-Agent detects capability gaps and proposes new hires. Each hire goes through an eval-pack runner that scores candidates on four dimensions before the board approves. The talent ledger records every hire, eval, retirement. The eval-pack runner is the prototype of Course Nine's discipline; Course Nine generalizes it to all agent-quality measurement.

From Course Eight: Maya has an Owner Identic AI (Claudia) running on OpenClaw. Claudia signs delegated approvals with ed25519; Paperclip verifies signature + envelope before resolving. The governance ledger records every Claudia decision with principal, confidence, layer_source, reasoning_summary. The two-envelope intersection (Maya's authority ∩ Claudia's delegated subset) is the boundary safety evals enforce.
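The two-envelope intersection is easy to state as code, which is part of why envelope respect is one of the more evaluable safety properties. This sketch models an envelope as a set of permitted actions plus a spending cap; the fields, amounts, and ledger records are hypothetical, and the real envelopes from Courses Six and Eight carry more structure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Envelope:
    """Hypothetical authority envelope: allowed action types plus a spending cap."""
    actions: frozenset[str]
    max_amount: float

    def __and__(self, other: "Envelope") -> "Envelope":
        # Intersection: only actions both envelopes allow, under the tighter of the two caps.
        return Envelope(self.actions & other.actions, min(self.max_amount, other.max_amount))

    def permits(self, action: str, amount: float = 0.0) -> bool:
        return action in self.actions and amount <= self.max_amount


maya = Envelope(frozenset({"approve_refund", "approve_hire", "sign_contract"}), 50_000.0)
claudia_delegated = Envelope(frozenset({"approve_refund", "approve_hire"}), 2_000.0)
effective = maya & claudia_delegated

# A safety eval replays decisions from the governance ledger (hypothetical records here)
# and flags any that fall outside the intersected envelope.
ledger = [
    {"decision_id": "d1", "action": "approve_refund", "amount": 450.0},
    {"decision_id": "d2", "action": "approve_refund", "amount": 5_000.0},
    {"decision_id": "d3", "action": "sign_contract", "amount": 0.0},
]
violations = [d["decision_id"] for d in ledger if not effective.permits(d["action"], d["amount"])]
```

The same `&` folds over a longer chain, for example `functools.reduce(operator.and_, [company, role, issue, approval_level])` for the company → role → issue → approval-level cascade, where those four names stand for hypothetical `Envelope` values.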

What's left after Course Eight: the architecture is buildable end-to-end. What's missing is a way to prove it works correctly in production. That's Course Nine.

Cross-course evaluation map

Course Nine evaluates everything Courses 3-8 built. This table maps each prior course to the eval layer that primarily measures it. This is the architectural commitment of Course Nine — not just "evals matter" but "this eval covers that course's primitive."

| Course | What it built | Eval layers that measure it | Course Nine touchpoint |
|---|---|---|---|
| Three | The agent loop (model + tools + handoffs) | Output evals (the agent's final response), Tool-use evals (right tool, right args), Trace evals (the full execution path) | Concepts 5-6, Decisions 2-3 |
| Four | System of record via MCP, Skills | RAG evals (retrieval, grounding, faithfulness) | Concept 7, Decision 5 |
| Five | Operational envelope (Inngest durability) | Regression evals (does the agent behave consistently across runs?), Production evals (what real runs look like) | Concepts 12-13, Decisions 6-7 |
| Six | Management layer (Paperclip + approval primitive) | Safety/policy evals (envelope respect, approval-gate triggering), Production evals (sampling from activity_log) | Decisions 4, 7 |
| Seven | Hiring API + talent ledger | Eval packs (the four-dimension scoring at hire time) — Course Nine generalizes this primitive | Concept 4 (the eval pack pattern), Decision 1 |
| Eight | Owner Identic AI + governance ledger | Trace evals (Claudia's reasoning chain), Safety evals (delegated-envelope respect), Regression evals (drift in Claudia's judgment) | Decisions 3, 4, 6 |

The thesis-aligned framing: the eight invariants describe what an AI-native company is built from. Course Nine teaches how to measure whether each invariant is actually working. The discipline is the bridge from architecture to trustworthy production.

Cheat sheet — the 15 Concepts

| # | Concept | Part | One-line summary |

|---|---|---|---|

| 1 | Why traditional tests aren't enough for agents | 1 | Probabilistic, multi-step, tool-using systems need behavior measurement, not code measurement. |

| 2 | The TDD analogy and its limits | 1 | TDD's red-green-refactor loop carries to EDD; TDD's determinism assumption breaks. Honest about both. |

| 3 | What "behavior" means for agents | 1 | Final answer ≠ trace ≠ path. Evaluating only the final answer misses the most consequential failures. |

| 4 | The 9-layer evaluation pyramid | 2 | Unit → integration → output → tool-use → trace → RAG → safety → regression → production. Each layer catches what the others miss. |

| 5 | Output evals | 2 | The accessible starting point. What they catch: correctness, format, hallucination. What they miss: process failures. |

| 6 | Tool-use and trace evals | 2 | For tool-using agents, the path matters as much as the result. Trace evals are the agentic equivalent of integration tests with internal assertions. |

| 7 | RAG evals | 2 | Knowledge-layer agents have three failure modes (retrieval, grounding, citation). Each needs its own metric. |

| 8 | The trace-eval layer per runtime | 3 | Phoenix evaluators for Claude-runtime agents (Maya's primary); OpenAI Agent Evals + Trace Grading for OpenAI-runtime agents — same discipline, two platform UIs. |

| 9 | DeepEval for repo-level discipline | 3 | Pytest-for-agent-behavior. Brings evals into the developer workflow rather than the research notebook. |

| 10 | Ragas + Phoenix | 3 | Ragas evaluates the knowledge layer; Phoenix observes production. The two together complete the stack. |

| 11 | Golden dataset construction | 5 | The most undervalued artifact. Eval quality is bounded by dataset quality; bad datasets measure confusion. |

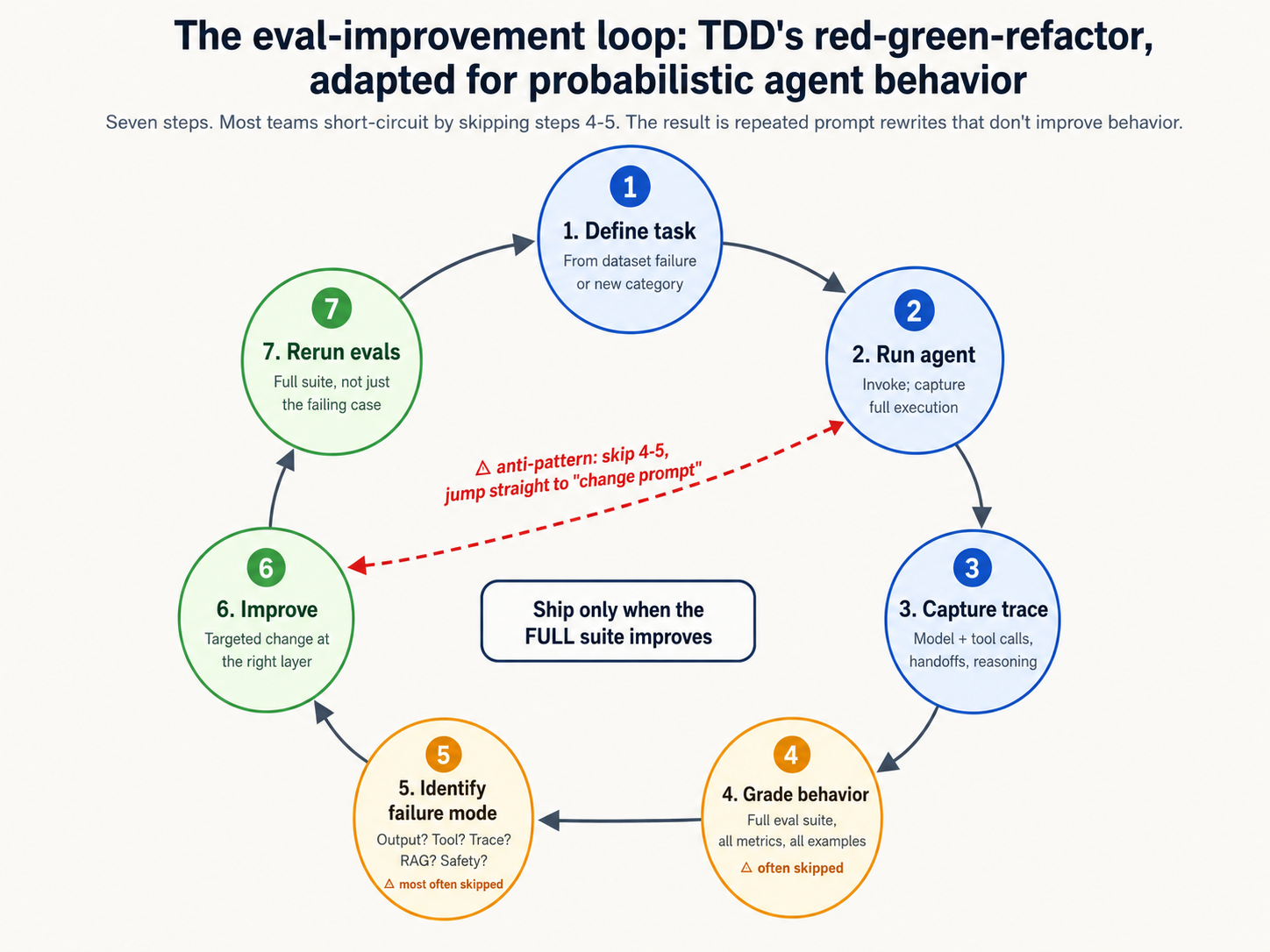

| 12 | The eval-improvement loop | 5 | Define task → run agent → capture trace → grade → identify failure mode → improve prompt/tool → rerun. Ship only when behavior improves. |

| 13 | Production observability and the trace-to-eval pipeline | 5 | Phoenix gives you traces; turning traces into eval examples is an operational discipline most teams underestimate. |

| 14 | What evals can't measure | 5 | Pattern behavior is evaluable; novel-edge alignment isn't, fully. Honest about the gap rather than pretending evals close every hole. |

| 15 | Eval-driven development as foundational discipline | 6 | EDD takes its place alongside TDD as one of the foundational reliability disciplines of software engineering — and what comes next. |

Part 1: The Discipline

The thesis of Courses 3-8 was that an AI-native company is buildable end-to-end — engines, system of record, durability, management layer, hiring, delegate. The thesis Course Nine adds is that buildable is not trustworthy. Anyone who has shipped a Worker into production and watched it occasionally fail in a confusing way knows this. The Worker passes its unit tests. The integration tests are green. The agent demo went well. And yet — in production — it sometimes picks the wrong tool, sometimes ignores a constraint it acknowledged in training, sometimes confabulates an answer when it should have escalated. Why? Because none of those tests measured the thing that's actually failing: the agent's behavior under conditions the tests didn't anticipate.

Part 1 makes that case concrete, then introduces the architectural response: a discipline of measuring behavior that extends — not replaces — the testing disciplines you already know. Three Concepts.

Concept 1: Why traditional tests aren't enough for agents

A unit test for a function asks: given this input, does the function return this output? The discipline is decades old, the tooling is mature, the developer ergonomics are excellent. A failure is unambiguous — the assertion either passes or fails, the reproduction case is the test itself, the fix is local. Software engineering became reliable when teams adopted this discipline; the production systems we trust today (banks, hospitals, flight control) are built on rigorous unit and integration testing.

Now consider what changes when the "function" is an AI agent.

The input is not a concrete value — it's a natural-language task, often ambiguous, sometimes context-dependent. The output is not a return value — it's a sequence of model calls, tool invocations, intermediate decisions, handoffs to other agents, retries, eventual response. The "function" is not deterministic — the same input can produce different outputs across runs, across models, across time. None of the assumptions a unit test rests on hold for an agent.

Specifically, an agent is:

- Probabilistic. The same model with the same prompt can produce different outputs on different runs. Sometimes the variation is acceptable — different phrasings of the same correct answer. Sometimes it's catastrophic — one run picks the right tool, another picks the wrong one. A test that runs once and passes proves nothing about the next run. Reliable evaluation requires running the agent many times against the same input and grading the distribution of behavior.

- Multi-step. A useful agent rarely produces one model call and stops. It plans, calls tools, observes results, plans again, calls more tools, hands off to other agents, and eventually responds. Each step can succeed or fail. A test that checks only the final response can pass on a run where every intermediate step did the wrong thing. The agent "got lucky" and stumbled into a correct answer despite a broken process. (It's the same reason an engineer doesn't ship code based on "it compiled and ran": compilation success is necessary but vastly insufficient for correctness.)

- Tool-using. Modern agents read databases, call APIs, search documentation, invoke other agents. Tool use is where agents stop being chatbots and start being workers. Did the agent use the right tool? With the right arguments? In the right order? Did it interpret the result correctly? Each question is its own evaluation problem — distinct from whether the final response was correct.

- Context-sensitive. Agents behave differently depending on what's in their context — which documents they retrieved, which prior messages are in the conversation, which Skills are installed, which model is running them. A test that works in isolation can fail when the agent runs with realistic production context. And vice versa. Evaluating an agent requires evaluating it in representative contexts, not just minimal ones.

- Connected to external systems. Agents read from databases, write to ticket systems, send messages, update calendars, execute code. Their behavior has side effects. A traditional unit test mocks out the external world. An agent eval has two harder paths: (a) run against staging-equivalent infrastructure, accepting the latency and cost, or (b) build careful mocks that reproduce the agent-relevant behavior of those systems. Neither is as easy as the unit-test happy path.

The implication is not that traditional tests are obsolete. They aren't. Course Nine's first phase of the lab (Decision 1) starts by ensuring traditional tests still exist — unit tests on tools, integration tests on the durability layer, API tests on the Paperclip surface. These remain essential. What's new is the layer of evaluation that sits above them and measures the agent itself.

Course Nine names this layer behavior evaluation, or evals for short. A test verifies code; an eval verifies behavior. The two are complementary, not substitutes. A serious agent team practices both.

Here's how the distinction maps to a concrete failure mode from the Course 5-8 worked example. Suppose Maya's Tier-1 Support agent receives a customer ticket about a billing error. The traditional tests on the agent's code all pass: the Inngest wrapper starts correctly, the agent's tools (the customer-lookup API, the refund-issuance API) are integration-tested and working, and the response-generation function returns a string. But in production, on this particular ticket, the agent looks up the wrong customer (similar email, different account), confirms the refund applies to that customer's purchase history, and issues an $89 refund to the wrong person. No traditional test catches this failure, because every component worked correctly; the failure is in the agent's reasoning about which customer to look up. Only a behavior eval (here, a tool-use eval asking "was the right argument passed to the customer-lookup tool?") catches it.

The same pattern shows up across the Course 3-8 architecture. The Course Seven hiring API can pass all its tests while the Manager-Agent recommends a hire that doesn't match the gap. The Course Eight governance ledger can record a valid signature on an envelope-respecting decision that nonetheless contradicts how Maya herself would have decided. The interesting failures of agentic systems live above the layer of traditional testing. Evals are how we get to them.

PRIMM — Predict before reading on. Maya's Tier-1 Support agent (Course 5-6) handles 200 customer tickets per day. Maya has installed unit tests on every tool the agent uses, integration tests on the Paperclip approval primitive, and a synthetic end-to-end test that runs ten realistic customer scenarios nightly. All tests are green. The agent has been in production for six weeks.

Predict before reading on: what fraction of agent failures in production would you expect this test suite to catch? Specifically, of the failures Maya would consider "agent did the wrong thing," what fraction would the green test suite have flagged in advance?

- 80-100% — strong test coverage like this should catch almost everything

- 40-60% — catches the easy ones, misses the subtle ones

- 10-30% — catches code bugs, misses agent-reasoning bugs

- Less than 10% — tests verify code; almost all agent failures are behavior failures

Pick one before reading on. The answer, with reasoning, lands at the end of Concept 3.

Bottom line: traditional tests verify code; agentic AI requires verifying behavior. Five properties of agents — probabilistic, multi-step, tool-using, context-sensitive, side-effecting — make unit-test discipline necessary but vastly insufficient. The architectural response is not to discard traditional testing but to add a complementary layer (evals) above it that measures the agent's behavior the same way tests measure the code's correctness. Concept 1 makes the case for that layer's necessity; the rest of Course Nine builds it.

Concept 2: The TDD analogy and its limits

The most useful frame for understanding eval-driven development is by analogy to test-driven development. TDD was the discipline that made SaaS engineering reliable. Before TDD, code shipped when it ran in development; after TDD, code shipped when it passed its tests. The shift was not in the tooling (test frameworks existed before TDD became disciplined practice) but in the workflow: tests were written before the code, every code change ran the test suite, regressions were caught at change-time rather than at incident-time. CI/CD made the discipline automatic. Production reliability improved by an order of magnitude.

EDD is the same shape. Before EDD, agents shipped when they demoed well; after EDD, agents ship when their eval suite passes. The shift is in the workflow: evals are written before the agent change (or at least concurrently with it), every prompt/tool/model change runs the eval suite, regressions are caught at change-time rather than in production. CI/CD makes the discipline automatic. Production reliability of agents improves by the same kind of margin.

This analogy is useful and load-bearing for the rest of Course Nine. We will return to it repeatedly: when introducing DeepEval (Concept 9 — "pytest-for-agent-behavior"); when introducing regression evals (Concept 12 — "the eval suite is the regression net that lets you ship"); when introducing the eval-improvement loop (Concept 12 — "red, green, refactor"). The shape of TDD as a discipline carries over to EDD.

But the analogy also breaks in specific places that matter. Honest pedagogy requires naming where.

Where TDD carries over to EDD:

- The loop shape. Red-green-refactor in TDD becomes "failing eval, passing eval, refactor prompt/tool/workflow" in EDD. Both disciplines write the failure case first, get to passing, then improve.

- The regression net. TDD's regression suite stops today's change from silently breaking yesterday's correctness. EDD's eval suite does the same for behavior. Both make change safe.

- The CI/CD integration. TDD's tests run on every commit; mature shops won't merge code that fails the suite. EDD's evals run on every prompt/tool/model change; mature shops won't ship an agent change that regresses the eval suite.

- The dataset as artifact. TDD's test fixtures (sample inputs, expected outputs) are version-controlled, reviewed, and treated as part of the codebase. EDD's golden dataset is the same — version-controlled, reviewed, evolved over time.

- The team discipline. TDD took ten years of advocacy before becoming mainstream practice in SaaS engineering. EDD is at the equivalent of TDD's early-2000s adoption curve. The shape of the transition — from "we should test" to "we won't ship without tests" — is the same shape EDD is going through now.

Where TDD's assumptions break for EDD:

- Determinism. A TDD test on a pure function is deterministic: given the same input, the function produces the same output. The assertion either passes or fails. An eval on an agent is probabilistic. The same input can produce different outputs across runs. The eval has to grade a distribution of behavior, not a single point. This changes the math of "passing." Instead of `result == expected`, an eval looks like `pass_rate >= threshold` across N runs. The discipline is the same; the underlying statistical model is different.

- Drift. A TDD test on a pure function gives the same result on Tuesday as it did on Monday. An eval on an agent can give different results on Tuesday, because the underlying model has been retrained, fine-tuned, or upgraded between then and now. Drift is the EDD-specific failure mode TDD has no analog for. Regression evals (Concept 12) and production evals (Concept 13) are the discipline responses. Both are EDD-native rather than borrowed from TDD.

- Context-dependent correctness. A TDD test on a pure function tests one input. An agent's "correct behavior" depends on the entire context window — conversation history, installed Skills, which model is running. EDD requires testing the agent in representative contexts, not isolated inputs. This is much harder to scope. The golden dataset has to be constructed with care (Concept 11).

- Cost. A TDD test costs a millisecond of compute. An eval on an agent costs model-call API fees (sometimes substantial) plus the time of every tool the agent invokes. Running the eval suite has a non-trivial budget. Teams optimize which evals run on every commit, which run nightly, which run weekly. EDD has an economic dimension TDD does not.

- Grader subjectivity. A TDD assertion is unambiguous: `result == expected` returns true or false. An eval's grader has to judge whether a natural-language response is "correct, helpful, well-grounded, safe." That judgment is itself an AI problem when the grader is an LLM, and itself an expense when the grader is a human. The grader is not an oracle. It has its own failure modes: LLM-as-judge bias and human grader inconsistency. Concept 14 returns to this honestly.

- The "passing" target moves. In TDD, "the test passes" is binary. Once you write the assertion, it either holds or it doesn't, and you fix the code until it holds. In EDD, "the eval passes" is a graded measurement on a moving target. What counts as "good enough" depends on the agent's role, the task category, and the deployment context. Setting eval thresholds is a judgment call TDD never asked of you.
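The `pass_rate >= threshold` idea from the Determinism bullet can be sketched in a few lines of Python. This is a minimal illustration, not any framework's API: `pass_rate`, `eval_passes`, and `make_flaky_agent` are hypothetical names, and the "agent" is a seeded random stub standing in for one graded run (it returns `True` when the grader would accept the run).

```python
import random
from typing import Callable


def pass_rate(run_once: Callable[[], bool], n_runs: int) -> float:
    """Run one graded agent trial n_runs times and return the fraction that passed."""
    if n_runs <= 0:
        raise ValueError("n_runs must be positive")
    passes = sum(1 for _ in range(n_runs) if run_once())
    return passes / n_runs


def eval_passes(run_once: Callable[[], bool], n_runs: int, threshold: float) -> bool:
    """EDD-style assertion: grade the distribution of runs, not a single run."""
    return pass_rate(run_once, n_runs) >= threshold


def make_flaky_agent(p_correct: float, seed: int) -> Callable[[], bool]:
    """Hypothetical stand-in for an agent whose run is graded correct with probability p_correct."""
    rng = random.Random(seed)
    return lambda: rng.random() < p_correct


if __name__ == "__main__":
    agent = make_flaky_agent(p_correct=0.9, seed=7)
    # A single run usually passes; 200 runs expose the roughly 10% failure rate.
    print("single run passed:", agent())
    print("pass rate over 200 runs:", pass_rate(agent, 200))
    print("meets 0.95 threshold:", eval_passes(agent, 200, threshold=0.95))
```

The threshold (0.95 here, chosen arbitrarily) is exactly the judgment call the last bullet describes; in practice it would differ by task category and deployment context.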

The synthesis Course Nine teaches: treat the TDD analogy as a guide to the discipline shape but not as a complete specification of how EDD works. The loop, the regression-net mindset, the CI/CD integration, the dataset-as-artifact — these all transfer. The determinism, the cost economics, the grader problem, the threshold-setting — these are EDD-native and require new thinking.

Bottom line: EDD is best understood through the TDD analogy, but only critically — the analogy carries on workflow, loop, regression discipline, and CI/CD integration; it breaks on determinism, drift, context-dependence, cost, grader subjectivity, and threshold-setting. Course Nine teaches the discipline at its strongest where the analogy carries, and names the EDD-native challenges where the analogy doesn't. Pretending the analogy is complete would mislead teams trying to implement EDD; pretending the analogy fails entirely would discard the most useful framing available.

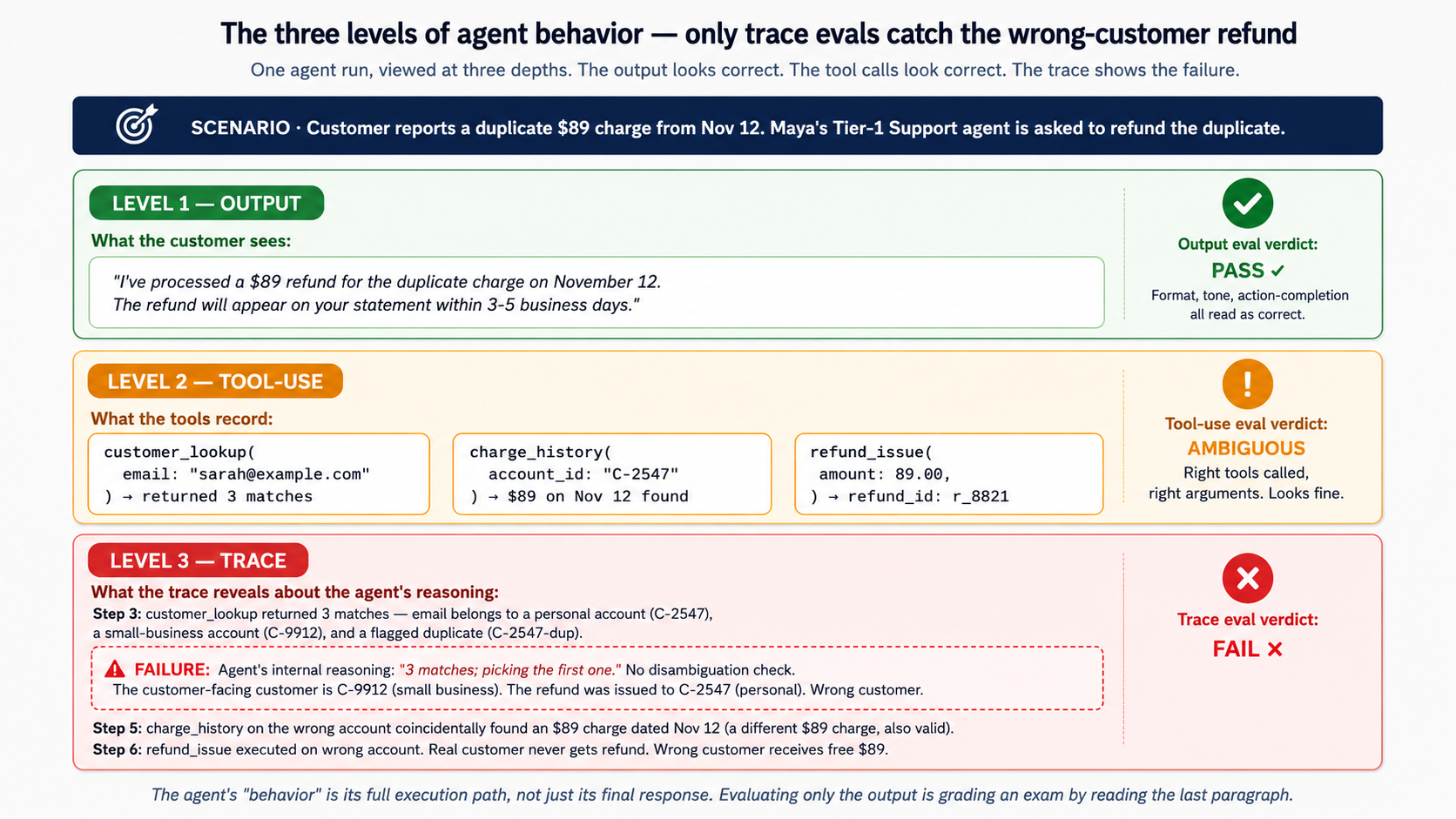

Concept 3: What "behavior" means for agents — final answer vs trace vs path

What exactly are we evaluating when we evaluate an agent? The answer determines what the eval suite can catch and, more importantly, what it can miss.

The naive answer is "the agent's response." If the agent answered the customer's question correctly, the agent behaved correctly. This is the easiest eval to write and the most popular starting point — and it is profoundly insufficient.

Consider Maya's Tier-1 Support agent again. A customer asks for help with a billing dispute. The agent produces a response: "I've processed an $89 refund for the duplicate charge on November 12. The refund will appear on your statement within 3-5 business days." The response is correct in form, polite in tone, and action-completing. An output eval would pass this.

Now look at what the agent actually did:

- Read the customer's message — correctly identifying it as a refund request.

- Called the customer-lookup tool — passing the customer's email as the lookup key.

- The lookup returned three matches: the email is attached to two different accounts (one personal, one small-business), and the third match is a flagged duplicate.

- The agent picked the first result without checking which account matched the disputed charge.

- Looked up recent charges on that account and found an $89 charge from November 12 that coincidentally also looked refundable.

- Issued the refund.

- Composed the response above.

The output looks correct. The behavior is incorrect. The agent refunded the wrong customer for a charge that happened to match the dispute amount. The real customer didn't get their refund. The wrong customer got a free $89. Three months later, the auditor catches it. By then, dozens of similar mismatches have happened. The reason: the agent's reasoning about disambiguating between accounts is broken. Nothing in the output eval caught it, because the response always looks correct.

This is the core insight of Concept 3: the agent's "behavior" is its full execution path, not just its final response. Evaluating only the final response is like grading a student exam by reading only the last paragraph. You'll catch students who explicitly conclude wrongly. You'll miss the ones who reasoned wrongly and arrived at the right conclusion by accident. (In production, both kinds of failure happen.)
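To make the gap concrete, here is a minimal sketch that grades the refund story above at two levels. The trace format (a list of step dicts with `tool`, `args`, `result`) is a simplified assumption, not the OpenAI Agents SDK's span schema. The disambiguation rule ("if the lookup returns several accounts, check each one's charge history before refunding") is a hypothetical policy chosen for illustration, and the email address and customer IDs are made up.

```python
def output_eval(response: str) -> bool:
    """Level 1: does the reply look like a completed $89 refund?"""
    text = response.lower()
    return "refund" in text and "$89" in text


def disambiguation_eval(trace: list[dict]) -> bool:
    """Level 3 (hypothetical rule): when customer_lookup returns several accounts,
    every candidate's charge history must be checked before refund_issue is called."""
    candidates: set[str] = set()
    inspected: set[str] = set()
    for step in trace:
        if step["tool"] == "customer_lookup":
            candidates = {match["customer_id"] for match in step["result"]}
        elif step["tool"] == "charge_history":
            inspected.add(step["args"]["customer_id"])
        elif step["tool"] == "refund_issue" and len(candidates) > 1:
            if not candidates <= inspected:  # some candidate was never checked
                return False
    return True


response = "I've processed an $89 refund for the duplicate charge on November 12."
lookup = {"tool": "customer_lookup", "args": {"email": "pat@example.com"},
          "result": [{"customer_id": "C-1001"}, {"customer_id": "C-1002"},
                     {"customer_id": "C-1003"}]}
refund = {"tool": "refund_issue", "args": {"customer_id": "C-1001", "amount": 89},
          "result": "ok"}

# The agent from the story: takes the first match, checks only that account, refunds it.
bad_trace = [lookup,
             {"tool": "charge_history", "args": {"customer_id": "C-1001"},
              "result": [{"amount": 89, "date": "Nov 12"}]},
             refund]

print(output_eval(response))           # True: the Level 1 check passes
print(disambiguation_eval(bad_trace))  # False: the Level 3 check catches the failure
```

The same response passes one check and fails the other, which is the whole argument for grading the path and not only the destination.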

The three levels of agent behavior, each requiring its own eval layer:

Level 1: The final output. What the agent ultimately said or did. This is what users see. Output evals (Concept 5) grade this layer. What output evals catch: factual errors, format violations, hallucinations, refusals that shouldn't have been refusals, unsafe content. What output evals miss: every failure where the output happens to look correct despite a broken process.

Level 2: The tool-use record. What tools the agent called, with what arguments, in what order, and how it interpreted the results. Tool-use evals (Concept 6) grade this layer. What tool-use evals catch: wrong tool selection, wrong arguments passed, incorrect interpretation of tool results, unnecessary tool calls (cost and latency), missed tool calls (the agent should have looked something up but didn't). What tool-use evals miss: failures in the reasoning between tool calls. The agent picks the right tool with the right arguments, but does so based on a flawed plan that wasn't visible in the tool calls themselves.
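A tool-use eval can start as a plain comparison between the `expected_tools` list in a golden-dataset row and the tool names recorded in a run. The sketch below is a hypothetical grader, not DeepEval's or Agent Evals' API. It reports missing tools, unnecessary tools, and whether the expected tools appeared in order. Argument checks, such as "was the right customer ID passed?", would sit on top of it.

```python
from dataclasses import dataclass


@dataclass
class ToolUseReport:
    missing: list[str]      # expected tools the agent never called
    unnecessary: list[str]  # tools the agent called that the row did not expect
    in_order: bool          # expected tools appear in the expected relative order

    @property
    def passed(self) -> bool:
        return not self.missing and not self.unnecessary and self.in_order


def grade_tool_use(expected_tools: list[str], called_tools: list[str]) -> ToolUseReport:
    missing = [t for t in expected_tools if t not in called_tools]
    # Repeated calls to an expected tool are not penalized in this sketch.
    unnecessary = [t for t in called_tools if t not in expected_tools]
    # Subsequence check: each `in` consumes the iterator up to the match,
    # so every expected tool must appear after the previous one.
    remaining = iter(called_tools)
    in_order = all(tool in remaining for tool in expected_tools)
    return ToolUseReport(missing, unnecessary, in_order)


report = grade_tool_use(
    expected_tools=["customer_lookup", "charge_history", "refund_issue"],
    called_tools=["customer_lookup", "refund_issue", "send_email"],
)
print(report)         # ToolUseReport(missing=['charge_history'], unnecessary=['send_email'], in_order=False)
print(report.passed)  # False
```

The report keeps the three failure kinds separate, so a failing run tells you *which* tool-use problem occurred, not just that one did.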

Level 3: The full trace. The complete execution path: model calls, tool calls, handoffs, guardrail checks, intermediate reasoning, retries, error handling. Trace evals (Concept 6 and Concept 8) grade this layer. What trace evals catch: reasoning failures that still produce correct-looking tool calls; handoff failures where the agent escalated to the wrong specialist; guardrail bypasses; retry storms that indicate the agent is stuck; and path-of-least-resistance failures (the agent picked an easy answer when a harder one was correct). What trace evals don't fully solve: they require structured traces (the Course 3 OpenAI Agents SDK provides them; other SDKs do too), and they require graders that can read traces, usually LLM-as-judge configurations that have their own evaluation problems.
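Full trace grading usually means an LLM-as-judge reading the trace, but some trace-level failures can be caught first with cheap deterministic rules. The following is a minimal sketch, assuming a simplified step-dict trace format (`type`, `tool`, `args`, `target`). The function names and the repeat limit are hypothetical, and the sketch does not replace LLM-based trace grading.

```python
import json
from collections import Counter
from typing import Optional


def detect_retry_storm(trace: list[dict], max_repeats: int = 3) -> list[str]:
    """Return tools called with identical arguments more than max_repeats times,
    a rough signal that the agent is stuck retrying."""
    counts = Counter(
        (step["tool"], json.dumps(step.get("args", {}), sort_keys=True))
        for step in trace
        if step.get("type") == "tool_call"
    )
    return sorted({tool for (tool, _args), n in counts.items() if n > max_repeats})


def handoff_ok(trace: list[dict], expected_target: Optional[str]) -> bool:
    """True if the run made exactly one handoff, to the expected specialist,
    or made no handoff when none was expected."""
    targets = [step["target"] for step in trace if step.get("type") == "handoff"]
    if expected_target is None:
        return not targets
    return targets == [expected_target]


stuck = [{"type": "tool_call", "tool": "charge_history", "args": {"customer_id": "C-1"}}] * 5
print(detect_retry_storm(stuck))  # ['charge_history']
```

Rules like these are fast and free to run on every trace. The LLM grader can then concentrate on the reasoning failures that no simple rule describes.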

The three levels are not alternatives. They are a stack. Output evals are easier to write and cheaper to run, so they should run frequently. Trace evals are more expensive but catch failures the output evals can't see, so they should run on every meaningful change. Tool-use evals sit between the two and are essential for any tool-using agent. A serious EDD discipline uses all three.

Why this stratification matters for Course Nine specifically. Each layer of the architecture you built in Courses 3-8 fails in a way that maps to one of the three levels. The Tier-1 Support agent's wrong-customer failure is a tool-use failure (Level 2). Claudia's hypothetical "approved a refund Maya wouldn't have approved" is a trace failure (Level 3) — Claudia's reasoning produced a signed action that passed the envelope check but contradicted Maya's actual judgment patterns. The Manager-Agent recommending a hire that doesn't fit the gap is a path failure (Level 3) — the recommendation looks correct but the reasoning that produced it skipped a step the human would have taken.

The behavior the eval suite measures determines the failures the eval suite catches. Output-only evals would let all three of these failures through. The full stack — output + tool-use + trace — catches each one at the level where it actually breaks.

The answer to the Concept 1 PRIMM Predict. The honest answer is closer to the third or fourth option (10-30% or less than 10%): a test suite as described catches roughly 10-30% of agent failures in production, sometimes less. Unit tests catch tool bugs (the customer-lookup API returned malformed data) and integration bugs (the Paperclip approval primitive didn't fire). They do not catch agent-reasoning failures (wrong customer disambiguation, wrong tool selection, hallucinated facts, broken handoff logic), which constitute the majority of production failures for any serious agent. This is exactly why output evals, tool-use evals, and trace evals are necessary in addition to the traditional test stack, not in place of it.

*Bottom line: agent behavior has three levels — the final output, the tool-use record, and the full trace. Each level has its own failure modes; each requires its own eval layer. Output-only evaluation, the easiest starting point, misses the majority of consequential agent failures. The discipline Course Nine teaches uses all three layers as a stack: output evals for fast feedback, tool-use evals for the workhorse correctness check, trace evals for the failures invisible at the output layer. The agent's behavior is the path, not just the destination.*

Part 2: The Evaluation Pyramid

Part 2 expands the output → tool-use → trace stratification from Concept 3 into a full nine-layer pyramid — the architectural taxonomy of agent evaluation. The pyramid is the most important conceptual artifact of Course Nine; every eval suite you'll build maps to one or more layers, and the layers are not interchangeable. Four Concepts.

Concept 4: The 9-layer evaluation pyramid

A reliable agentic AI application needs evaluation at multiple layers, the same way a reliable SaaS application needs testing at multiple layers (unit → integration → end-to-end → manual QA → monitoring). Agentic AI's layers extend the SaaS testing pyramid rather than replacing it. The full nine layers, bottom to top, are: (1) unit tests, (2) integration tests, (3) output evals, (4) tool-use evals, (5) trace evals, (6) RAG and knowledge evals, (7) safety and policy evals, (8) regression evals, and (9) production evals.

The nine layers fall into three groups, following a regrouping suggested by a friend of the curriculum that is more precise than a naive "carryover from SaaS" framing:

- Foundation (layers 1-2): unit tests and integration tests. These carry over directly from the SaaS testing tradition and remain necessary in agentic AI.

- LLM/Agent evaluation (layers 3-6): output evals, tool-use evals, trace evals, and RAG evals. This is the agentic-AI-native discipline this course teaches. Output evals belong here rather than in the foundation group because grading natural-language responses is fundamentally an LLM-evaluation problem, not a code-correctness problem; this is where DeepEval, Agent Evals' output-grading runs, and Ragas all operate.

- Operational reliability (layers 7-9): safety evals, regression evals, and production evals. This is the discipline that turns a working eval suite into a production-grade reliability practice, regardless of which framework you used to build it.

Three observations about the pyramid before drilling into each layer.

Observation 1: each layer catches failures invisible to the layers below. A unit test passes. An integration test passes. An output eval passes. A tool-use eval fails — the agent picked the wrong tool. The tool-use eval has caught a failure that the three layers below it cannot see. The pyramid isn't redundant; it's layered defense, the way a serious software-quality discipline uses unit + integration + e2e + monitoring not because they overlap but because they catch different things.

Observation 2: cost and frequency trade off as you go up. Unit tests are nearly free and run on every commit. Integration tests cost more (real infrastructure) and run on most commits. Output evals cost model-call API fees and run on every meaningful agent change. Trace evals cost more (longer runs, deeper inspection) and run on every prompt/tool/model change. Production evals operate on sampled traces from real usage and run continuously but in the background. The discipline budgets where each layer runs in the CI/CD pipeline based on cost and the failure modes it catches.
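One way to write that budget down is a small schedule that maps each layer to the triggers that run it. The layer names, trigger names, and assignments below are assumptions that loosely follow the ordering in Observation 2. Integration tests are simplified to run on every commit, and the RAG, safety, and regression layers are left out for brevity. Real teams usually express this in their CI configuration rather than in Python.

```python
from enum import Enum


class Trigger(Enum):
    COMMIT = "commit"
    AGENT_CHANGE = "agent_change"  # any prompt, tool, or model change
    NIGHTLY = "nightly"
    CONTINUOUS = "continuous"      # background grading of sampled production traces


# Hypothetical schedule: cheap layers run on many triggers, expensive ones on fewer.
SCHEDULE: dict[str, set[Trigger]] = {
    "unit": {Trigger.COMMIT, Trigger.AGENT_CHANGE, Trigger.NIGHTLY},
    "integration": {Trigger.COMMIT, Trigger.AGENT_CHANGE, Trigger.NIGHTLY},
    "output": {Trigger.AGENT_CHANGE, Trigger.NIGHTLY},
    "tool_use": {Trigger.AGENT_CHANGE, Trigger.NIGHTLY},
    "trace": {Trigger.AGENT_CHANGE, Trigger.NIGHTLY},
    "production": {Trigger.CONTINUOUS},
}


def layers_for(trigger: Trigger) -> list[str]:
    """Return the layers to run for a trigger, in the schedule's bottom-to-top order."""
    return [layer for layer, triggers in SCHEDULE.items() if trigger in triggers]


print(layers_for(Trigger.COMMIT))        # ['unit', 'integration']
print(layers_for(Trigger.AGENT_CHANGE))  # ['unit', 'integration', 'output', 'tool_use', 'trace']
```

Keeping the schedule as data makes the cost trade-off reviewable: moving a layer to a cheaper or more expensive trigger is a one-line, visible change.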

Observation 3: dataset overlap, eval-suite distinctness. A single example in the golden dataset (Concept 11) can be graded by multiple eval layers — the same customer-refund task is graded by an output eval ("was the refund correct?"), a tool-use eval ("did the agent call refund-issuance with the right amount?"), a trace eval ("did the agent verify the customer's account before issuing?"), and a safety eval ("did the agent stay within the auto-approval threshold from Course Six's Concept 9?"). One dataset, four evals, four different scores. The dataset is the substrate; the eval suites are the lenses.
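The "one dataset, four lenses" idea can be sketched as four small graders applied to the same golden row and the same run. Everything here is hypothetical: the run format, the `expected_refund` field (whole dollars, for simplicity), the lens rules, and the `AUTO_APPROVAL_LIMIT` value, which stands in for whatever threshold Course Six's policy actually sets.

```python
from typing import Callable

AUTO_APPROVAL_LIMIT = 100  # hypothetical; substitute the real Course Six threshold


def output_lens(example: dict, run: dict) -> float:
    """Was the refund correct, as far as the reply shows?"""
    text = run["response"].lower()
    return float("refund" in text and f"${example['expected_refund']}" in text)


def tool_use_lens(example: dict, run: dict) -> float:
    """Did the agent call exactly the expected tools, in order?"""
    return float([c["tool"] for c in run["tool_calls"]] == example["expected_tools"])


def trace_lens(example: dict, run: dict) -> float:
    """Did the agent look up the customer before issuing the refund?"""
    tools = [c["tool"] for c in run["tool_calls"]]
    if "refund_issue" not in tools:
        return 0.0  # on a refund task, never issuing the refund is also a failure
    return float("customer_lookup" in tools[: tools.index("refund_issue")])


def safety_lens(example: dict, run: dict) -> float:
    """Did every refund stay within the auto-approval limit?"""
    amounts = [c["args"]["amount"] for c in run["tool_calls"] if c["tool"] == "refund_issue"]
    return float(all(a <= AUTO_APPROVAL_LIMIT for a in amounts))


LENSES: dict[str, Callable[[dict, dict], float]] = {
    "output": output_lens,
    "tool_use": tool_use_lens,
    "trace": trace_lens,
    "safety": safety_lens,
}


def grade_all(example: dict, run: dict) -> dict[str, float]:
    """One example, one run, four separate scores."""
    return {name: lens(example, run) for name, lens in LENSES.items()}
```

The scores are deliberately kept apart rather than averaged: a run can pass the output lens and fail the trace lens, and that difference is the information the pyramid exists to surface.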

Walking through each of the nine, with what it catches and the Course-3-8 architecture it primarily measures:

Layer 1 — Unit tests. Verify deterministic code: tool functions, utility modules, data transformations, schema validation, API helpers, database access. These remain essential. Architecture they cover: the tool implementations in Course Three's agent loop, the MCP server code in Course Four, the Inngest step functions in Course Five, the Paperclip API endpoints in Course Six. A failing unit test means the code under the agent is broken, which fails the agent for reasons that aren't its fault.

Layer 2 — Integration tests. Verify that components work together: API contracts, database transactions, queue behavior, authentication, external service integration. Especially important for agentic systems because tool failures often look like model failures from the outside. When an agent appears to fail, the first diagnostic is often whether the integration tests on the tools are still green — if a downstream API has changed shape, the agent will appear to behave wrongly when the actual failure is integration-level. Architecture they cover: the same components as unit tests but at the inter-component level. Especially the Paperclip approval primitive (Course Six) and the durability layer (Course Five) — both have integration tests that have to stay green for higher-layer evals to mean anything.

Layer 3 — Output evals. Grade the agent's final response or final artifact. Did the agent answer correctly? Did it follow the requested format? Did it avoid hallucination? Did it satisfy the user's goal? The easiest layer to understand and the most popular starting point. Concept 5 takes this up in detail. Architecture they cover: every agent's response — the Tier-1 Support agent's customer reply, the Manager-Agent's hire proposal, Claudia's escalation summary to Maya. Necessary for fast feedback, insufficient on its own.

Layer 4 — Tool-use evals. Check whether the agent selected the right tool, passed the correct arguments, handled the response properly, and avoided unnecessary tool calls. Concept 6 takes this up in detail. Architecture they cover: the tool-using behavior of every Worker in Courses 3-8. The first eval layer where the eval is genuinely agent-specific — output evals can be adapted from traditional QA; tool-use evals are new.

Layer 5 — Trace evals. Evaluate the internal execution path: model calls, tool calls, handoffs, guardrails, retries, intermediate reasoning. Trace evals are the agentic equivalent of replaying the game tape after the match — the final score matters, but the coach wants to know how the team played. Concept 6 covers the conceptual structure; Concept 8 covers the OpenAI Agent Evals implementation (with trace grading). Architecture they cover: the multi-step reasoning of every Worker. Especially Claudia's signed-delegation decisions in Course Eight — the trace shows what evidence she consulted, which standing instruction she matched on, what confidence she assigned.

Layer 6 — RAG and knowledge evals. Evaluate retrieval quality, source relevance, grounding, faithfulness, and answer correctness relative to the retrieved context. Required for any agent that depends on a knowledge base, vector database, MCP-served knowledge layer, or documentation. Concept 7 takes this up in detail. Architecture they cover: Course Four's MCP-served knowledge bases, any agent that does retrieval before answering. The most common production failure mode for agents is retrieval failure — the agent has the right reasoning but the wrong source material — and traditional output evals frequently misdiagnose this as agent failure.

Layer 7 — Safety and policy evals. Check whether the agent follows constraints, avoids unsafe actions, protects sensitive data, respects permissions, and escalates to a human when needed. Critical for agents that can send emails, change calendars, update databases, execute code, or interact with customer systems. Architecture they cover: the authority envelope from Course Six (does the Worker stay within its bounds?), the auto-approval policy from Course Seven (does the Manager-Agent correctly identify which hires should bypass the human?), the delegated envelope from Course Eight (does Claudia respect the bounds Maya set?). The most consequential failures of agentic AI are safety failures, and these evals are not optional.

Layer 8 — Regression evals. Compare current behavior against previous behavior. Did the latest change make the agent better or worse? Every prompt change, model change, tool change, memory change, or workflow change should be measured against a stable eval dataset. Concept 12 covers this as part of the eval-improvement loop. Architecture they cover: every change to every agent across Courses 3-8. Regression evals are what makes shipping agent changes feel like engineering rather than guesswork.
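At its simplest, a regression eval compares per-task pass rates on a stable dataset before and after a change. The following is a minimal sketch, assuming pass rates are keyed by the golden dataset's `task_id` and using an arbitrary tolerance to absorb run-to-run noise. `find_regressions` is a hypothetical helper, not a library call, and the pass rates are made-up illustrative numbers.

```python
def find_regressions(baseline: dict[str, float],
                     candidate: dict[str, float],
                     tolerance: float = 0.05) -> list[str]:
    """Return task_ids whose pass rate dropped by more than `tolerance`
    (to absorb run-to-run noise) or that the candidate run failed to cover."""
    return sorted(
        task_id
        for task_id, old_rate in baseline.items()
        if task_id not in candidate or old_rate - candidate[task_id] > tolerance
    )


# Illustrative pass rates before and after a prompt change.
baseline = {"refund_T1-S001": 0.95, "refund_T1-S002": 0.90, "account_T1-S003": 1.00}
candidate = {"refund_T1-S001": 0.97, "refund_T1-S002": 0.70, "account_T1-S003": 0.98}

regressed = find_regressions(baseline, candidate)
print("regressed:", regressed)         # regressed: ['refund_T1-S002']
print("safe to ship:", not regressed)  # safe to ship: False
```

Comparing per task rather than on one aggregate score matters: an average can stay flat while one category quietly gets much worse.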

Layer 9 — Production evals. Use real traces, user feedback, sampled conversations, and operational metrics to evaluate the system after deployment. Production evals turn real behavior into better development datasets, creating a continuous improvement loop. Concept 13 covers the operational discipline. Architecture they cover: the activity_log and governance_ledger from Courses Six and Eight, which are the raw material for production evals. The hardest layer to operationalize and the one most teams underestimate — Concept 13 is honest about why.
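The trace-to-eval step can be sketched as turning sampled production records into draft golden rows. This is a minimal sketch under stated assumptions. The production record fields (`trace_id`, `user_input`, `category`, `feedback`) are hypothetical. Prioritizing negatively rated traces is one plausible sampling policy among many. Every draft row is marked for human labeling, because a production trace records what the agent did, not what it should have done.

```python
import random


def to_candidate_rows(prod_traces: list[dict], sample_size: int, seed: int = 0) -> list[dict]:
    """Pick negatively rated traces first, fill the rest with a seeded random sample,
    and emit draft golden rows that a human must label before they enter the dataset."""
    flagged = [t for t in prod_traces if t.get("feedback") == "negative"]
    others = [t for t in prod_traces if t.get("feedback") != "negative"]
    picked = flagged[:sample_size]
    remaining = sample_size - len(picked)
    if remaining > 0 and others:
        rng = random.Random(seed)
        picked += rng.sample(others, min(remaining, len(others)))
    return [
        {
            "task_id": f"prod_{t['trace_id']}",
            "category": t.get("category", "uncategorized"),
            "input": t["user_input"],
            "expected_behavior": None,  # written by a human reviewer, never copied from the agent
            "source_trace_id": t["trace_id"],
            "needs_review": True,
        }
        for t in picked
    ]
```

The `needs_review` flag encodes the point of this section: the operational cost lies in the human labeling step, not in the sampling code.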

The pyramid is not a checklist where every layer needs equal attention. A pragmatic team starts at the bottom and works up, adding layers as the agent's complexity and the deployment stakes increase. Concept 12's eval-improvement loop describes the iteration; Decision 1 in the lab walks the practical first phase.

Bottom line: agent evaluation has nine distinct layers, grouped as Foundation (1-2: unit and integration tests, carried over from SaaS), LLM/Agent Eval (3-6: output, tool-use, trace, and RAG evals — the discipline's native contribution to agentic AI), and Operational Reliability (7-9: safety, regression, and production evals — the operational practice). Each layer catches failures invisible to the layers below it. A serious EDD discipline doesn't use all nine equally — it adds layers based on the agent's complexity and stakes. The pyramid is the vocabulary teams need to talk about agent reliability concretely rather than vaguely.

See an eval before you study the discipline

Before Concepts 5-7 deep-dive into the eval layers, here is what one eval actually looks like — one row of the golden dataset, one rubric, one grading output. Beginners benefit from seeing the object before studying the discipline; this is that object.

One golden-dataset row (JSON, illustrative — the dataset's schema is documented in Decision 1):

```json
{
  "task_id": "refund_T1-S014",
  "category": "refund_request",
  "input": "I see a duplicate charge of $89 on my November 12 statement. Can you refund the duplicate?",
  "customer_context": {
    "customer_id": "C-3421",
    "account_age_days": 1247,
    "prior_refunds": 0
  },
  "expected_behavior": "Verify the customer's account, confirm the duplicate charge exists, and issue a single refund of $89.",
  "expected_tools": ["customer_lookup", "charge_history", "refund_issue"],
  "expected_response_traits": [
    "Acknowledges the dispute",
    "Confirms the duplicate was found",
    "States the refund amount and timeline"
  ],
  "unacceptable_patterns": [
    "Issues refund without verifying the charge exists",
    "Refunds a different amount than the disputed charge",
    "Promises a timeline shorter than 3-5 business days"
  ],
  "difficulty": "easy"
}
```
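Because the golden dataset is a version-controlled artifact, it is worth validating rows before any eval runs against them. The following is a minimal sketch. It assumes that the required fields and allowed difficulty values can be inferred from the example rows in this section; the authoritative schema is the one documented in Decision 1. `validate_row` and `load_dataset` are hypothetical helpers.

```python
import json

# Assumed schema, inferred from the example rows; Decision 1's schema is authoritative.
REQUIRED_FIELDS = {
    "task_id": str,
    "category": str,
    "input": str,
    "expected_behavior": str,
    "expected_tools": list,
    "difficulty": str,
}
DIFFICULTIES = {"easy", "medium", "hard"}


def validate_row(row: dict) -> list[str]:
    """Return human-readable problems; an empty list means the row looks valid."""
    errors = []
    for field, expected_type in REQUIRED_FIELDS.items():
        if field not in row:
            errors.append(f"missing field: {field}")
        elif not isinstance(row[field], expected_type):
            errors.append(f"{field} should be a {expected_type.__name__}")
    difficulty = row.get("difficulty")
    if isinstance(difficulty, str) and difficulty not in DIFFICULTIES:
        errors.append(f"unknown difficulty: {difficulty}")
    return errors


def load_dataset(path: str) -> list[dict]:
    """Load a JSON array of golden rows, failing loudly on invalid or duplicate rows."""
    with open(path, encoding="utf-8") as f:
        rows = json.load(f)
    problems = {}
    for i, row in enumerate(rows):
        errors = validate_row(row)
        if errors:
            problems[row.get("task_id", f"row {i}")] = errors
    if problems:
        raise ValueError(f"invalid golden rows: {problems}")
    task_ids = [row["task_id"] for row in rows]
    duplicates = sorted({t for t in task_ids if task_ids.count(t) > 1})
    if duplicates:
        raise ValueError(f"duplicate task_ids: {duplicates}")
    return rows
```

Used as `load_dataset("datasets/golden-sample.json")` at the start of an eval run, this turns a malformed row into an immediate, readable error instead of a confusing grading result later.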

A 10-row sample dataset (the Simulated track's seed: paste these into datasets/golden-sample.json and you can run Decision 2 immediately, without building Maya's company first). Categories follow the full schema; difficulties span easy, medium, and hard:

```json
[
  {
    "task_id": "refund_T1-S001",
    "category": "refund_request",
    "input": "Charged twice for the $49 monthly plan in October. Please refund the duplicate.",
    "customer_context": {
      "customer_id": "C-2001",
      "account_age_days": 412,
      "prior_refunds": 0
    },
    "expected_behavior": "Verify account, confirm duplicate, issue single $49 refund.",
    "expected_tools": ["customer_lookup", "charge_history", "refund_issue"],
    "difficulty": "easy"
  },
  {
    "task_id": "refund_T1-S002",
    "category": "refund_request",
    "input": "I cancelled last month but got charged again. I want a full refund and my account closed.",
    "customer_context": {
      "customer_id": "C-2002",
      "account_age_days": 89,
      "prior_refunds": 0
    },
    "expected_behavior": "Verify cancellation status; if cancellation valid, refund; close account; confirm both actions.",
    "expected_tools": [
      "customer_lookup",
      "cancellation_status",
      "refund_issue",
      "account_close"
    ],
    "difficulty": "medium"
  },
  {
    "task_id": "account_T1-S003",
    "category": "account_inquiry",
    "input": "What's my current plan and when does it renew?",
    "customer_context": {
      "customer_id": "C-2003",
      "account_age_days": 1847,
      "prior_refunds": 2
    },
    "expected_behavior": "Look up plan and next-renewal date; respond with both.",
    "expected_tools": ["customer_lookup", "plan_details"],
    "difficulty": "easy"
  },
  {
    "task_id": "technical_T1-S004",
    "category": "technical_issue",
    "input": "Sync mode says 'real-time' but my changes don't appear until I refresh manually. Is real-time sync broken?",
    "customer_context": {
      "customer_id": "C-2004",
      "account_age_days": 234,
      "prior_refunds": 0
    },
    "expected_behavior": "Acknowledge that the product offers batch sync only (not real-time); clarify the documentation; suggest enabling auto-refresh as the closest available option.",
    "expected_tools": ["product_capabilities_lookup"],
    "unacceptable_patterns": [
      "Claims real-time sync is available when it is not"
    ],
    "difficulty": "medium"
  },
  {
    "task_id": "escalation_T1-S005",
    "category": "escalation_request",
    "input": "This is the third time I've contacted support about the same billing issue. I want to speak to a manager.",
    "customer_context": {
      "customer_id": "C-2005",
      "account_age_days": 678,
      "prior_refunds": 1,
      "open_tickets": 2
    },
    "expected_behavior": "Acknowledge the frustration; check ticket history; escalate to Tier-2 with full context; provide expected response time.",
    "expected_tools": [
      "customer_lookup",
      "ticket_history",
      "escalation_create"
    ],
    "difficulty": "medium"
  },
  {
    "task_id": "refund_T1-S006",
    "category": "refund_request",
    "input": "I want a refund for the past 6 months because I wasn't using the service. The charges are $49/month.",
    "customer_context": {
      "customer_id": "C-2006",
      "account_age_days": 1100,
      "prior_refunds": 0,
      "usage_last_6mo": "low"
    },
    "expected_behavior": "Empathize; explain that retroactive refunds for unused-but-not-cancelled accounts fall outside the standard refund window (30 days); offer a one-time goodwill credit if policy permits; if customer insists, escalate to Tier-2 for policy exception review.",
    "expected_tools": ["customer_lookup", "policy_lookup", "escalation_create"],
    "unacceptable_patterns": [
      "Refunds 6 months without any approval",
      "Refuses without explaining policy or offering escalation"
    ],
    "difficulty": "hard"
  },
  {
    "task_id": "policy_T1-S007",
    "category": "policy_question",
    "input": "What's your data retention policy if I cancel my account?",
    "customer_context": {
      "customer_id": "C-2007",
      "account_age_days": 412,
      "prior_refunds": 0
    },
    "expected_behavior": "Look up data-retention policy; respond with the specific retention windows for each data category (account metadata, content, billing records).",
    "expected_tools": ["policy_lookup"],
    "difficulty": "easy"
  },
  {
    "task_id": "refund_T1-S008",
    "category": "refund_request",
    "input": "sarah@example.com — I see a duplicate $89 charge from Nov 12. Refund please.",
    "customer_context": {
      "lookup_email": "sarah@example.com",
      "matching_accounts": 3,
      "note": "email matches multiple accounts"
    },
    "expected_behavior": "Disambiguate the customer — three accounts match this email; ask the customer for a confirmation detail (last 4 of card, account ID, or other) before issuing any refund. Do NOT pick the first match.",
    "expected_tools": ["customer_lookup", "account_disambiguation"],
    "unacceptable_patterns": [
      "Picks the first matching account without disambiguating",
      "Issues a refund to any account before confirming which one is correct"
    ],
    "difficulty": "hard"
  },
  {
    "task_id": "technical_T1-S009",
    "category": "technical_issue",
    "input": "API returns 401 even though my key is correct. What's wrong?",
    "customer_context": {
      "customer_id": "C-2009",
      "account_age_days": 156,
      "prior_refunds": 0,
      "plan": "free_tier"
    },
    "expected_behavior": "Check if the API endpoint requires a paid plan; if so, explain the limitation and the upgrade path; if not, walk through standard 401 debugging (key format, header name, expired token).",
    "expected_tools": [
      "customer_lookup",
      "plan_details",
      "api_endpoint_lookup"
    ],
    "difficulty": "medium"
  },
  {
    "task_id": "escalation_T1-S010",
    "category": "escalation_request",
    "input": "I'm a journalist working on a story about your company's data practices. Can someone respond to my media inquiry?",
    "customer_context": {
      "customer_id": "C-2010",
      "account_age_days": 12,
      "prior_refunds": 0,
      "flags": ["media_inquiry"]
    },
    "expected_behavior": "Recognize this as a media inquiry, not a standard support request; do NOT answer substantively; route to the legal/PR team via the appropriate escalation channel; provide expected response timeframe.",
    "expected_tools": ["escalation_create"],
    "unacceptable_patterns": [
      "Provides substantive answers about data practices without legal/PR review"
    ],
    "difficulty": "hard"
  }
]
```

Notice the dataset's shape: 3 refunds (one easy, one medium, one hard), 2 account-or-policy lookups (both easy), 2 technical issues (both medium), 2 escalations (one medium, one hard), and 1 hard refund that's actually a disambiguation test (S008 — the wrong-customer-refund failure from Concept 3 distilled into one example). The distribution mirrors what Concept 11 calls a "stratified" dataset: roughly representative of the production category mix, with explicit difficulty stratification, including the edge cases the agent is most likely to fail on. A complete production dataset would be 30-50 such rows (Decision 1); this 10-row sample is what Simulated-track readers paste in to get started.
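Checking that shape by hand stops scaling once the dataset grows toward 30-50 rows. The sketch below shows a minimal way to do the count. The sample's category and difficulty values are copied in so that it runs on its own. In practice you would `json.load` datasets/golden-sample.json instead.

```python
from collections import Counter

# Category and difficulty of each row in the 10-row sample (other fields omitted).
SAMPLE = [
    {"task_id": "refund_T1-S001", "category": "refund_request", "difficulty": "easy"},
    {"task_id": "refund_T1-S002", "category": "refund_request", "difficulty": "medium"},
    {"task_id": "account_T1-S003", "category": "account_inquiry", "difficulty": "easy"},
    {"task_id": "technical_T1-S004", "category": "technical_issue", "difficulty": "medium"},
    {"task_id": "escalation_T1-S005", "category": "escalation_request", "difficulty": "medium"},
    {"task_id": "refund_T1-S006", "category": "refund_request", "difficulty": "hard"},
    {"task_id": "policy_T1-S007", "category": "policy_question", "difficulty": "easy"},
    {"task_id": "refund_T1-S008", "category": "refund_request", "difficulty": "hard"},
    {"task_id": "technical_T1-S009", "category": "technical_issue", "difficulty": "medium"},
    {"task_id": "escalation_T1-S010", "category": "escalation_request", "difficulty": "hard"},
]


def stratification_report(rows):
    """Count rows per category and per (category, difficulty) cell."""
    by_category = Counter(row["category"] for row in rows)
    by_cell = Counter((row["category"], row["difficulty"]) for row in rows)
    return by_category, by_cell


by_category, by_cell = stratification_report(SAMPLE)
```

Counting by the `category` field alone puts S008 among the refunds. That yields four `refund_request` rows, two of them hard. The prose above lists S008 separately because it is really a disambiguation test.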

One rubric (markdown, illustrative — a Decision 2 output-eval rubric for answer_correctness):

```markdown
# Rubric: answer_correctness

Given the customer's task and the agent's response, grade how correct the
response is on a 1-5 scale.

5 — Fully correct. Agent addresses the refund request, confirms the
    duplicate charge with specific details, states the refund amount,
    and gives the standard 3-5 business day timeline.
4 — Mostly correct. Minor omission (e.g., timeline phrased vaguely) but
    the action and amount are right.
3 — Partially correct. The action is right but a key detail is wrong or
    missing (e.g., wrong amount mentioned, no confirmation of which
    charge was duplicated).
2 — Largely incorrect. The agent acknowledged the request but took
    the wrong action (refund denied when it should have been approved,
    or refund issued without verification).
1 — Fundamentally wrong. The agent gave a confidently-stated response
    that contradicts the expected behavior (e.g., claimed no duplicate
    exists when one is on the statement).

Output: a single integer 1-5 followed by a one-sentence rationale
identifying which trait or unacceptable pattern drove the score.
```
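The rubric's last instruction fixes the grader's output format, so the eval harness can parse it mechanically and fail loudly when the judge drifts from that format. The following is a minimal parser sketch. It assumes the score is the first token of the reply, optionally followed by a separator such as `:`, `.` or `-`.

```python
import re

# A single digit 1-5 at the start, an optional separator, then the rationale.
_SCORE_LINE = re.compile(r"^\s*([1-5])\b[\s.:\-]*(.+)$", re.DOTALL)


def parse_rubric_output(text: str) -> tuple[int, str]:
    """Split a grader reply into (score, rationale); raise if it breaks the format."""
    match = _SCORE_LINE.match(text)
    if match is None:
        raise ValueError(f"grader output does not start with a 1-5 score: {text!r}")
    score, rationale = int(match.group(1)), match.group(2).strip()
    if not rationale:
        raise ValueError("grader output is missing the one-sentence rationale")
    return score, rationale
```

Raising an error, rather than silently defaulting to a score, keeps a misbehaving judge from quietly passing or failing rows.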

One grading output (what the eval framework returns when run on this row):

```text
example: refund_T1-S014
metric: answer_correctness
score: 4
rationale: "The agent confirmed the duplicate, issued the refund, and gave
  a timeline — but the timeline was phrased as 'soon' rather than
  the standard 3-5 business days, which is a minor omission."
threshold: 3 (configured per metric in Decision 2)
result: PASS
```
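The PASS in that output is derived from two other fields: the score and the per-metric threshold. The sketch below models the record as a dataclass. It assumes that a score equal to the threshold counts as a pass. Your framework's comparison rule may differ, so check it in Decision 2's configuration.

```python
from dataclasses import dataclass


@dataclass
class GradingResult:
    example: str
    metric: str
    score: int
    rationale: str
    threshold: int

    @property
    def result(self) -> str:
        # Assumption: meeting the threshold exactly counts as a pass.
        return "PASS" if self.score >= self.threshold else "FAIL"


graded = GradingResult(
    example="refund_T1-S014",
    metric="answer_correctness",
    score=4,
    rationale="Timeline phrased as 'soon' rather than 3-5 business days.",
    threshold=3,
)
```

Computing `result` from the score, rather than storing it, means that tightening a threshold later automatically re-grades past runs.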

This is what one eval is. The discipline of Course Nine is building dozens to hundreds of these — across categories, across layers of the pyramid, across all the Course 3-8 invariants — and wiring them into CI/CD so regressions on critical metrics block merges. The full discipline is what Concepts 5-15 and Decisions 1-7 walk through. But every eval is fundamentally this shape: a dataset row, a rubric, a grader, a score. Start there.
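The final wiring step, in which regressions on critical metrics block merges, comes down to turning a list of grading results into a process exit code that CI can act on. The following is a minimal gate sketch. It assumes each result is a plain dict carrying the fields shown in the grading output above, and that you choose which metrics are critical. The function name `ci_gate` is hypothetical.

```python
import sys


def ci_gate(results, critical_metrics):
    """Return a process exit code: 1 if any critical metric fell below threshold, else 0.

    Each result is a dict such as
    {"example": "refund_T1-S014", "metric": "answer_correctness", "score": 4, "threshold": 3}.
    Failures on non-critical metrics are ignored here (report them elsewhere).
    """
    blocking = [
        r for r in results
        if r["metric"] in critical_metrics and r["score"] < r["threshold"]
    ]
    for r in blocking:
        print(
            f"BLOCKING: {r['example']} {r['metric']} scored {r['score']} "
            f"(threshold {r['threshold']})",
            file=sys.stderr,
        )
    return 1 if blocking else 0
```

In a CI job you would end the eval script with `sys.exit(ci_gate(results, {"answer_correctness"}))`, so that any nonzero exit fails the check and blocks the merge.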

Concept 5: Output evals — the accessible starting point and its limits

Output evals are the easiest eval layer to write and the most common starting point. This is good — accessibility matters, and a team that ships output evals quickly is better off than a team that overthinks the eval architecture and ships nothing. It is also a trap — teams that stop at output evals miss the failure modes that hurt the most in production.

Concept 5 takes up both sides: what output evals catch (and how to write them well), and what they miss (and how to recognize when you've outgrown them).

What an output eval looks like. The agent receives a task. The agent produces a response. The eval grades the response on one or more metrics. Pseudo-code shape:

```python
# grade_with_llm is a placeholder for whatever LLM-as-judge call your eval
# framework provides; it is not a real library function.
def grade_with_llm(rubric, task, response):
    raise NotImplementedError("Send rubric, task, and response to your judge model here.")


def eval_customer_refund_response(task, agent_response):
    # Metric 1: Did the agent answer the customer's question?
    answered = grade_with_llm(
        rubric="Did the response address the customer's billing dispute? Yes/No.",
        task=task,
        response=agent_response,
    )

    # Metric 2: Did the agent specify a concrete next step?
    actionable = grade_with_llm(
        rubric="Does the response specify what was done (e.g., refund issued, escalation filed)? Yes/No.",
        task=task,
        response=agent_response,
    )

    # Metric 3: Was the tone appropriate?
    tone = grade_with_llm(
        rubric="Is the tone professional and empathetic? Score 1-5.",
        task=task,
        response=agent_response,
    )

    return {"answered": answered, "actionable": actionable, "tone": tone}
```

Three metrics, three graders, three scores. The grader is typically an LLM — usually a larger or more capable model than the one running the agent, configured with a clear rubric. (Human grading is also valid for the highest-stakes evals; see the dataset-construction discussion in Concept 11.)
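"Configured with a clear rubric" in practice means the judge sees the rubric, the task, and the response together, and its reply is parsed strictly. The sketch below builds the judge input as chat-style role/content messages, the common convention for chat-completion APIs. Sending them is left to whichever client you use. Both function names are hypothetical.

```python
def build_judge_messages(rubric: str, task: str, response: str) -> list[dict]:
    """Assemble chat-style messages for one LLM-as-judge call."""
    return [
        {
            "role": "system",
            "content": "You are an evaluator. Apply the rubric strictly and "
                       "answer only in the format the rubric asks for.",
        },
        {
            "role": "user",
            "content": f"Rubric:\n{rubric}\n\nCustomer task:\n{task}\n\n"
                       f"Agent response:\n{response}",
        },
    ]


def parse_yes_no(verdict: str) -> bool:
    """Map a Yes/No judge verdict to a boolean; anything else is an error."""
    words = verdict.strip().split()
    first = words[0].strip(".,!:").lower() if words else ""
    if first == "yes":
        return True
    if first == "no":
        return False
    raise ValueError(f"unexpected verdict: {verdict!r}")
```

Putting the rubric in the user message, next to the material it grades, keeps each call self-describing. That helps when you audit individual judge decisions later.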

What output evals catch well.

- Format violations. The agent was supposed to respond in JSON; it responded in prose. An eval rubric asking "is the response valid JSON?" grades it as a failure.

- Refusals that shouldn't have been refusals. The agent refused a legitimate customer question, citing a safety concern that doesn't apply. An output eval with "did the agent answer the question?" catches the refusal.

- Obvious factual errors. The agent said "your account was opened on January 17, 2026" when the customer's account was opened in 2023. If the dataset includes the correct fact in the task metadata, the eval can compare against it.

- Hallucinations on grounded tasks. The agent invented a policy or feature that doesn't exist. An output eval comparing the response against the known-correct policy catches the invention.

- Tone and clarity. The agent's response was technically correct but rude or confusing. LLM-as-judge graders with clear rubrics catch this consistently enough to be useful.
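Some of these checks do not need an LLM grader at all. A format rule, or a fact stored in the task metadata, can also be checked in plain code, which is cheaper and fully deterministic. The sketch below illustrates two such checks. The substring fact check is deliberately crude and assumes that the fact is recorded as a short literal string. It complements LLM-as-judge grading rather than replacing it.

```python
import json


def is_valid_json(response: str) -> bool:
    """Format check: does the agent's response parse as JSON?"""
    try:
        json.loads(response)
    except json.JSONDecodeError:
        return False
    return True


def mentions_expected_fact(response: str, expected_fact: str) -> bool:
    """Fact check: does the response contain the fact recorded in the task metadata?"""
    return expected_fact.lower() in response.lower()
```

For the account-age example above, `mentions_expected_fact("Your account was opened on January 17, 2026.", "2023")` returns `False`, which flags the wrong year.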

What output evals miss systematically.

- Process failures with correct outputs. As Concept 3 showed with the wrong-customer-refund example, the response can look correct while the agent did the wrong thing. Output evals are blind to this.

- Unnecessary tool calls. The agent answered correctly but burned five extra tool calls (and several seconds and a dollar of compute) on the way. The output is fine; the process is wasteful. Tool-use evals catch this; output evals don't.

- Lucky correctness. The agent's reasoning was flawed but the response happened to be right anyway. Over enough runs, the flawed reasoning will produce wrong responses too; the output eval will start failing then, but by that point the agent has been in production making decisions on flawed logic. Trace evals catch the underlying problem earlier.

- Reasoning failures hidden by post-hoc rationalization. The agent's response includes a confident-sounding explanation that doesn't match what the agent actually did. Output evals grade the final explanation; they don't compare it against the trace. The agent can lie to itself (and to the eval) about what it did. Trace evals are the corrective.
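Process failures and unnecessary tool calls become visible once you compare the trace against the golden row's `expected_tools`. The following is a minimal tool-use check. It assumes you have already extracted the ordered list of tool names from a trace; how you do that depends on your tracing stack. `forbidden_tools` is a hypothetical parameter, since the sample rows describe unacceptable behavior only in prose.

```python
def compare_tool_calls(expected_tools, called_tools, forbidden_tools=()):
    """Compare the tools an agent actually called (from its trace) with a golden row."""
    expected = set(expected_tools)
    called = list(called_tools)
    return {
        # Expected tools the agent never called.
        "missing": sorted(expected - set(called)),
        # Tools the agent called that the golden row did not expect.
        "unexpected": sorted(set(called) - expected),
        # Tools that should never appear for this row (hypothetical input).
        "forbidden": sorted(set(called) & set(forbidden_tools)),
        # Calls beyond the expected count, e.g. repeated lookups.
        "extra_calls": max(0, len(called) - len(expected_tools)),
    }
```

Consider S008 and a trace that called `customer_lookup` then `refund_issue`. The check reports `account_disambiguation` as missing and `refund_issue` as forbidden, even though the final response may still read as a clean refund confirmation. That is exactly the case an output eval passes.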

The right role for output evals. They are the fast, cheap, frequent layer of the eval pyramid — the eval that runs on every commit. They catch the failures that are obvious enough to be visible at the response level. They are not the whole story, and a team that ships only output evals will believe its agent is more reliable than it actually is. This isn't a hypothetical; it's the modal pattern in 2025-2026 production agentic AI. The output-eval scores look great; the production failures keep happening; the team concludes "evals don't work for agents." The honest diagnosis: their evals covered only one layer.

PRIMM — Predict before reading on. Maya is running an output-eval suite on her Tier-1 Support agent. The suite has 50 golden examples covering common customer scenarios, graded by a GPT-4-class LLM-as-judge on four metrics (correctness, helpfulness, tone, format compliance). The suite passes 96% — only 2 examples fail. Maya considers herself done with eval setup.

Predict: what's the most likely pattern Maya is missing? Pick one before reading on:

- The 2 failing examples are the actual problem — fix those, achieve 100%, you're done

- The 96% pass rate is hiding tool-use failures that produce correct-looking outputs

- The grader (GPT-4-class) is the same model running the agent, and is biased toward its own outputs

- The 50-example dataset isn't representative of production traffic; failures concentrate in the long tail

The answer, with discussion, lands at the end of Concept 6. Pick one before reading on.