The Agent Factory Thesis: Plain-English Version


Who is this version for? This version is written for two kinds of readers: people who are new to technology and business, and people who do not speak English as their first language. The English in this version is kept simple. Sentences are short. Every important word is explained the first time it is used. The original thesis is also available for readers who already know the words and want the deeper version. Both versions say the same thing. They just take different paths to get there.

Three ways to read this document

The 10-minute path — Read only the next section, "Start Here: The Whole Thesis in 2 Pages." That alone gives you the complete argument.

The 30-minute path — Read Sections 1, 5, 9, 13, 15, and 17. You get the core ideas plus a worked example.

The full path — Read every section in order. About 60 to 90 minutes. Best if you want depth, evidence, and stories.

All three paths end at the same place. Pick the one that fits your time.

Start Here: The Whole Thesis in 2 Pages

If you only have ten minutes, read this section. It contains the whole argument. Everything after this is the same idea, just slower and with more examples.

The big change

For the last twenty years, technology companies sold you software. You logged in. You did the work yourself. You paid every month for access.

That model is no longer the only one. A new model is rising beside it. New companies are being built where AI is the worker, not just the tool. You no longer buy a tool from these companies. You hire their AI workforce to do a job for you.

Think about the difference. Microsoft sells you Word, and you write the document. The new model sells you the finished document — written by an AI worker, checked for quality, delivered to you. You paid for the result, not for the tool.

Three words to learn first

- AI Worker (also called Digital FTE — Full-Time Employee made of software): An AI built to do one specific job, like a human employee does. Customer support, bookkeeping, sales — each one is a role-shaped AI.

- AI-Native Company: A company where most of the workers are AI. What it sells is whatever those workers produce.

- The Agent Factory: The method for building AI workers and the companies they staff. Not a product you buy — a practice you learn.

How the work splits

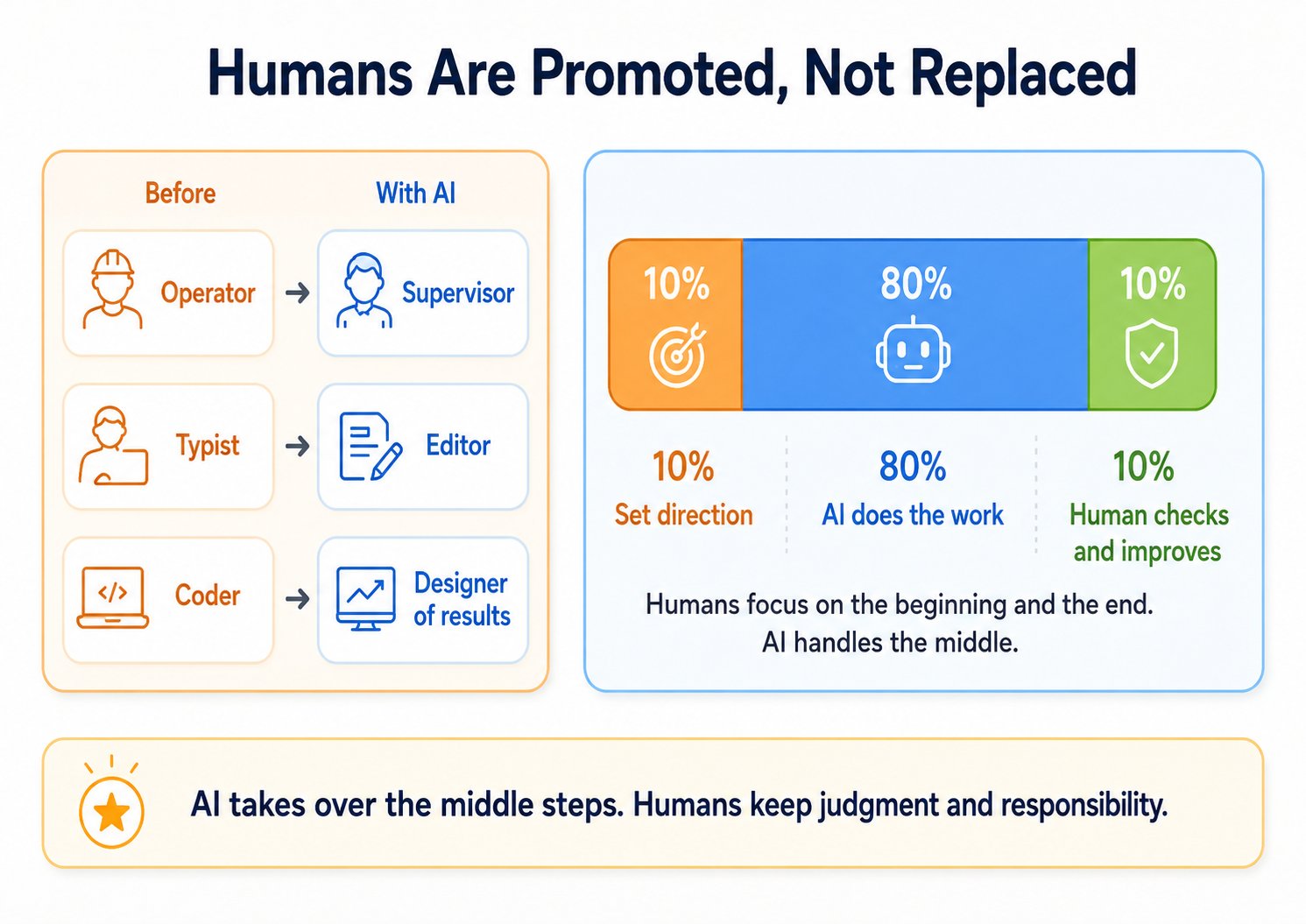

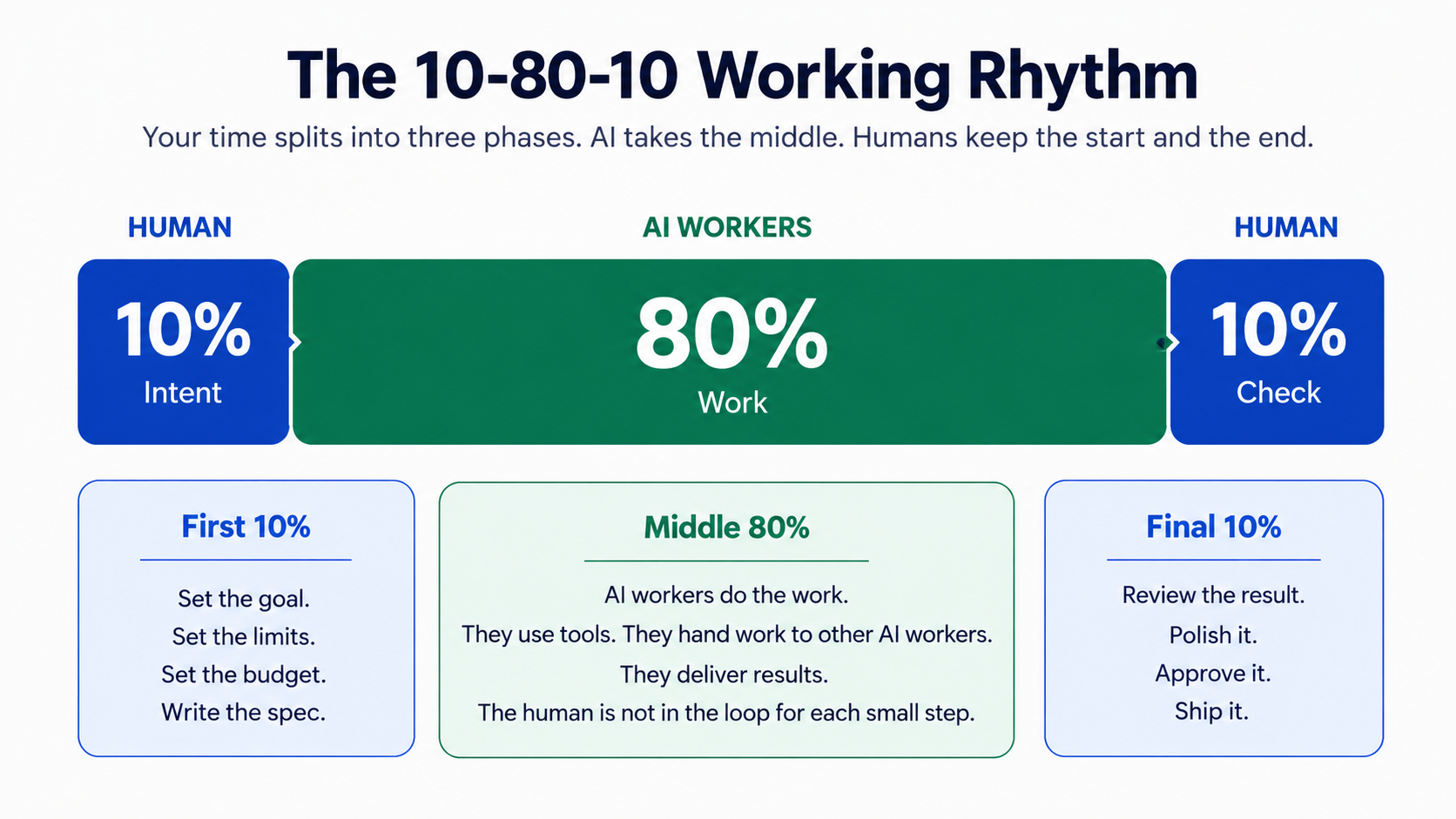

Humans set direction. AI does the work. Humans check results.

This is the 10-80-10 rhythm:

- First 10% — a human writes a clear plan (goal, limits, budget)

- Middle 80% — AI workers do the actual work

- Final 10% — a human checks and approves

Three things stay with humans and never move to AI: intent (knowing what you want), verification (knowing whether you got it), and outcome ownership (being responsible for the result).

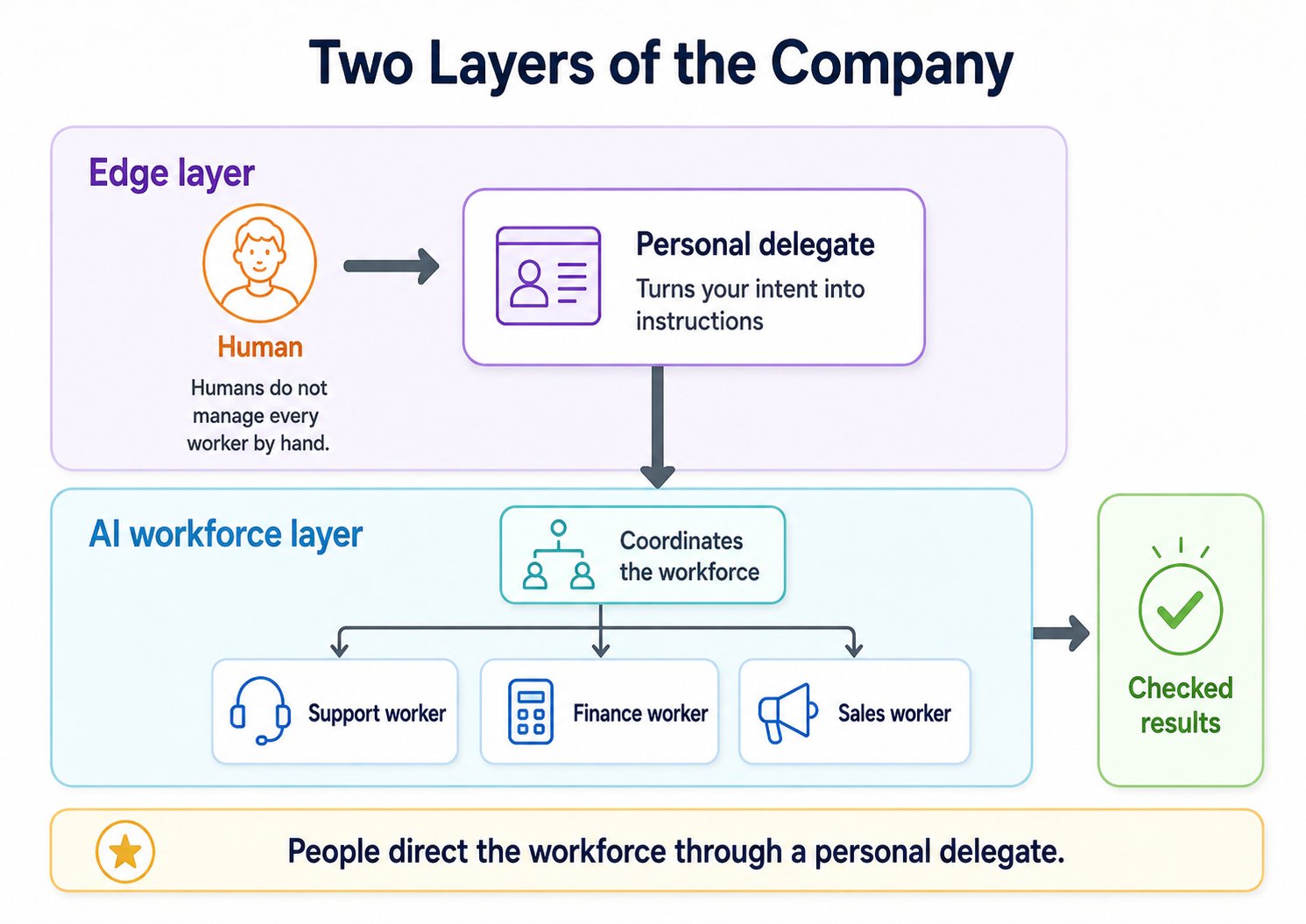

Two layers of the future company

A human cannot manage twenty AI workers by hand. So the company has two layers:

- Edge Layer — Each human has a personal AI agent (a delegate) that knows them and acts on their behalf.

- AI Workforce Layer — Specialist AI workers do the actual jobs, coordinated by a management layer.

You talk to your delegate. Your delegate talks to the workforce. Results come back to you.

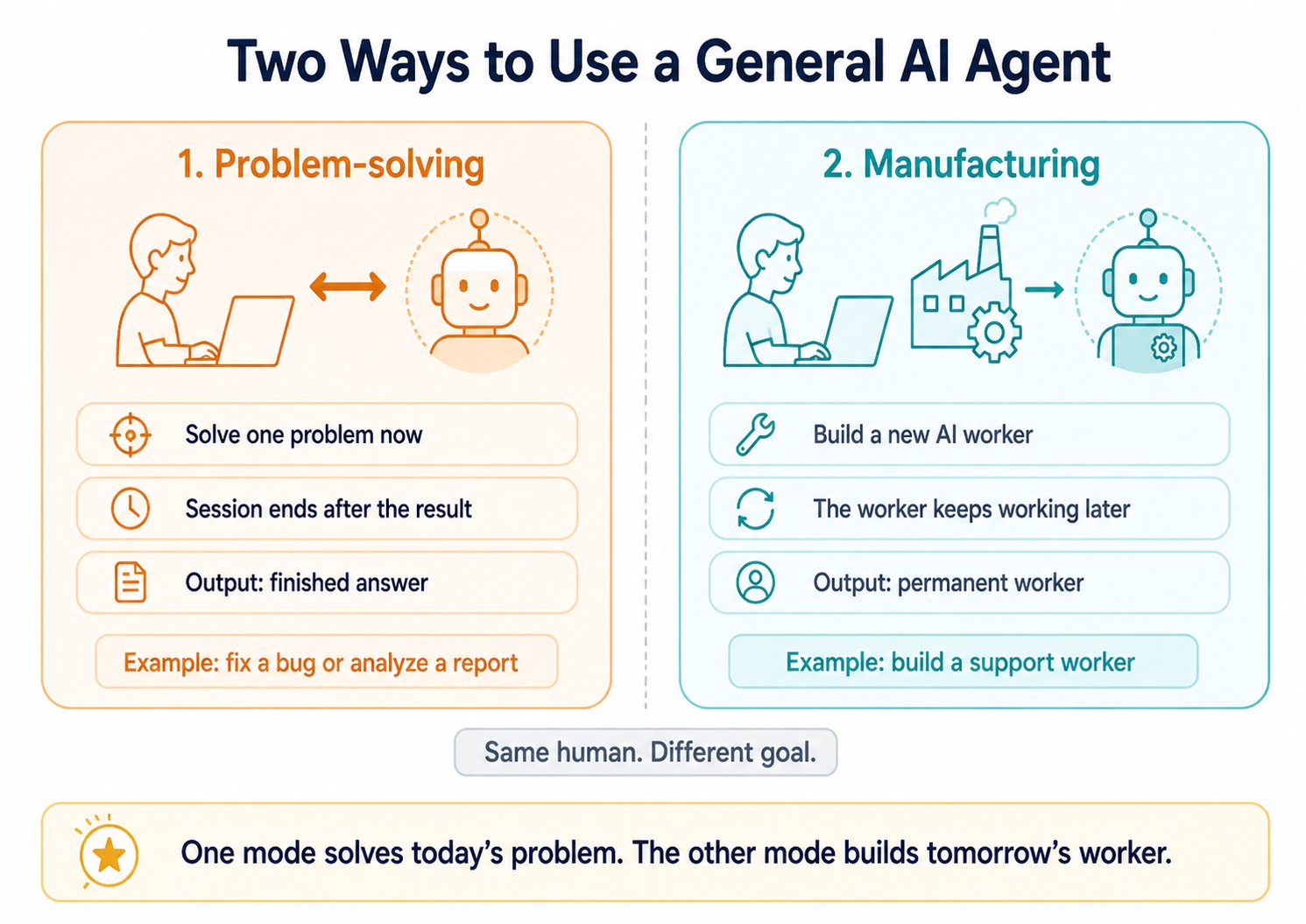

Two ways to use a powerful AI tool

When you sit down with a general AI tool, you are doing one of two things:

- Problem-solving — You have a problem now, you want a finished answer, the session ends. Fix a bug. Analyze a report.

- Manufacturing — You are building a new AI worker that will keep running long after this session. The output is not an answer. It is a permanent worker that will produce answers from now on.

One mode solves today's problem. The other mode builds tomorrow's worker.

Seven rules that do not change

The architecture of any AI-Native Company obeys seven rules:

- Human is in charge. Every action traces back to a human who set the direction.

- Every human has a delegate. One AI agent represents you and acts on your behalf.

- The workforce has a management layer. It hires workers, assigns work, controls budgets.

- Each worker uses the right engine. Reliable engines for important work. Cheap engines for routine work.

- Every worker uses a system of record. AI workers read from and write to the company's official memory.

- The workforce can grow under rules. When a gap appears, the system hires a new worker — within the limits the human set.

- The company runs on a nervous system. Events flow between workers automatically, are not lost when something crashes, and are paced so no worker gets flooded.

The rules do not change. The specific products that fill them today (OpenClaw, Paperclip, Inngest, and others) will change — and that is fine. Tools change. Rules stay.
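Rule 4 can be pictured as a small routing decision. The sketch below is hypothetical: the engine names `reliable-engine` and `cheap-engine`, the `Task` fields, and the "touches money or customers" test are assumptions made up for this example. They are not part of OpenClaw, Paperclip, Inngest, or any other product named above.

```python
from dataclasses import dataclass

# Hypothetical engine names; a real system would name specific AI models here.
RELIABLE_ENGINE = "reliable-engine"
CHEAP_ENGINE = "cheap-engine"


@dataclass
class Task:
    description: str
    touches_money_or_customers: bool  # assumption: one simple flag for "important work"


def choose_engine(task: Task) -> str:
    """Rule 4 sketch: important work gets the reliable engine, routine work the cheap one."""
    if task.touches_money_or_customers:
        return RELIABLE_ENGINE
    return CHEAP_ENGINE


print(choose_engine(Task("Refund a customer", True)))      # reliable-engine
print(choose_engine(Task("Tag an internal note", False)))  # cheap-engine
```

The rule stays the same even if the engines behind those two names are swapped for newer ones.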

Why this is real, not a forecast

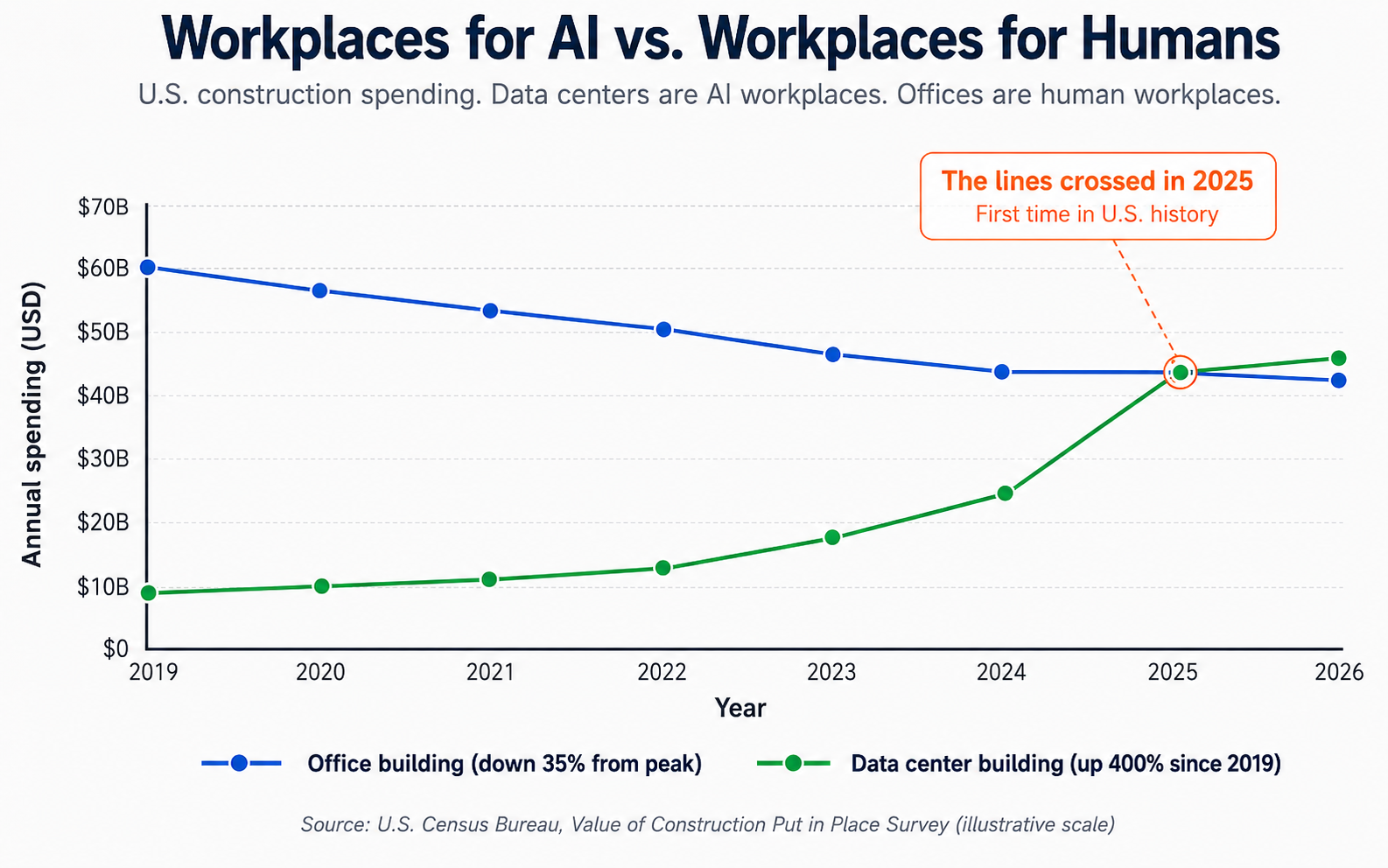

This is not a 2030 prediction. As of 2026:

- AI agents can already pay for things on their own using four open payment standards (ACP, AP2, x402, MPP).

- Some companies with only a handful of human employees are reporting a billion dollars per year in revenue, with workforces that are almost entirely AI.

- For the first time in U.S. history, more money is being spent building workplaces for AI workers (data centers) than for human workers (offices).

- Cursor, an AI coding company, reported that 35% of the changes added to its own product were made by AI agents working on their own, with humans only setting the problem and reviewing the result.

What this means for you

- If you are a developer, you are promoted from coder to designer of results. You do not write every line. You direct AI workers to build whole products.

- If you run a business, you are moving from buying tools to hiring workforces. The unit of pricing shifts from "per seat" to "per result."

- If you are studying, the most valuable skill is no longer being a fast typist. It is writing clear specs, picking the right system of record, and verifying quality.

Five parts to remember

If you remember nothing else from this whole document, remember these five parts of an AI-Native Company:

- A human decides what should happen.

- A personal agent (the delegate) represents the human and carries their authority.

- A management layer assigns work to AI workers and controls budgets.

- AI workers do the actual work, each one shaped like a job.

- A system of record stores the truth — what the company really knows.

Everything else in this book is detail on top of these five parts. If you ever feel lost, come back to this list.

Where to go from here

If this summary makes sense and you want the depth, here is what each key section adds:

- Section 1 — The big picture: what an AI-Native Company is and what it sells

- Section 5 — The four vocabulary words that recur everywhere

- Section 9 — How the Production Engine works (the heart of the architecture)

- Section 13 — The Two-Layer Model

- Section 15 — The Seven Rules in detail

- Section 17 — A worked example: build a support-ticket AI worker in 5 steps

These six sections are the 30-minute path. Or read every section in order — each idea builds on the last.

That is the whole thesis. Everything after this is the same idea, more slowly.

Glossary at a Glance

Here are the most important words in this book. Each one is explained again, in more detail, when it first appears. If you get lost later, come back here.

- AI Worker (or Digital FTE) — An AI system built to do one specific job, like a customer support rep or a financial analyst. FTE means Full-Time Employee.

- AI-Native Company — A company where most of the workers are AI, not human. Also called the Agentic Enterprise.

- Agent Factory — The method for building AI-Native Companies. Not a product. A way of working.

- Delegate — Your personal AI agent. It knows you and acts for you. Also called identic AI or a personal agent.

- System of Record — The company's official memory. The place that holds the truth: customers, orders, money, contracts. AI workers read from it and write to it.

- MCP — A universal connector for AI. Stands for Model Context Protocol. Like USB, but for AI. It lets any AI worker connect to any tool or data source through one shared standard.

- Spec — A clear, written instruction that tells an AI worker what to do. Not a casual chat message — a careful written plan.

- Skill — A small, portable package that teaches an AI worker how to do one specific thing well.

- Invariant — A rule that does not change. A structural requirement of the design.

- Reference Implementation — The specific product we use today to follow a rule. Can be replaced tomorrow without breaking the rule.

- Engagement — A single session where a human works with a general AI agent. Two modes: problem-solving (solve a thing now, the session ends) and manufacturing (build a permanent AI worker for the company).

📚 Teaching Aid

A slideshow version of this thesis is also available. Some readers learn better from slides than from prose. The same ideas, with visuals: View the Full Presentation on Google Slides

1. A New Kind of Company is Being Born

Think about how technology companies make money today.

Microsoft sells you Word. Salesforce sells you a tool to track customers. Zoom sells you video calls. You pay them every month. You log in. You do the work yourself. The software is a tool. It is useful, but only if a human presses the buttons.

This way of selling software is called SaaS. You say it like the word "sass." It stands for Software-as-a-Service. You do not own the software. You rent it. SaaS has been the main business model in technology for the last twenty years.

That model is no longer the only one. A new model is rising beside it.

In the AI era, the most valuable companies will not sell you tools. They will build AI workers and rent the workers to you.

Let's slow down and explain.

An AI worker is an AI system built to do one specific job — the same kind of job a human employee does. Some examples:

- An AI worker that handles customer support. It reads questions, checks customer accounts, writes replies, and sends hard cases to a human.

- An AI worker that does bookkeeping. It sorts expenses, checks accounts, and prepares monthly reports.

- An AI worker that does sales work, legal contract review, or data analysis.

Each one is an AI shaped like a job. It takes instructions, uses tools, and finishes work.

In this book, we also call these AI workers Digital FTEs. FTE stands for Full-Time Employee — the way companies count permanent staff. One FTE means one full-time job. So a Digital FTE is simply "a full-time employee made of software instead of a person" — working 24/7, taking on a whole role, not just helping with a single task.

A company built around these AI workers is called an AI-Native Company. Inside this company, most of the workers are not human. They are AI. And what the company sells is whatever its AI workers produce: software, decisions, services, advice, transactions, finished work of any kind.

Here is the big change.

You do not buy a product from these companies. You hire their AI workforce to do a job for you. It is like hiring an accounting firm to close your books, or a law firm to draft a contract. You are not paying for a tool. You are paying for finished work.

And there is more to come. AI workers will soon become independent economic actors. This means they will:

- Buy services on their own.

- Pay for computing power they need.

- Buy data they need.

- Pay other AI workers to help them.

All of this without a human approving each small purchase.

This is bigger than a new kind of software. It is a new kind of company.

This book is about how to build one.

The Agent Factory is the method for building these companies. It is a set of rules, a design, and a discipline. You use it to design AI workers, put them to work, and run a business around them. The word factory is important. A real factory follows a clear, repeatable method to build cars or phones. The Agent Factory follows a clear, repeatable method to build AI workers. It is not a product you can buy. It is a way of working that you learn and use.

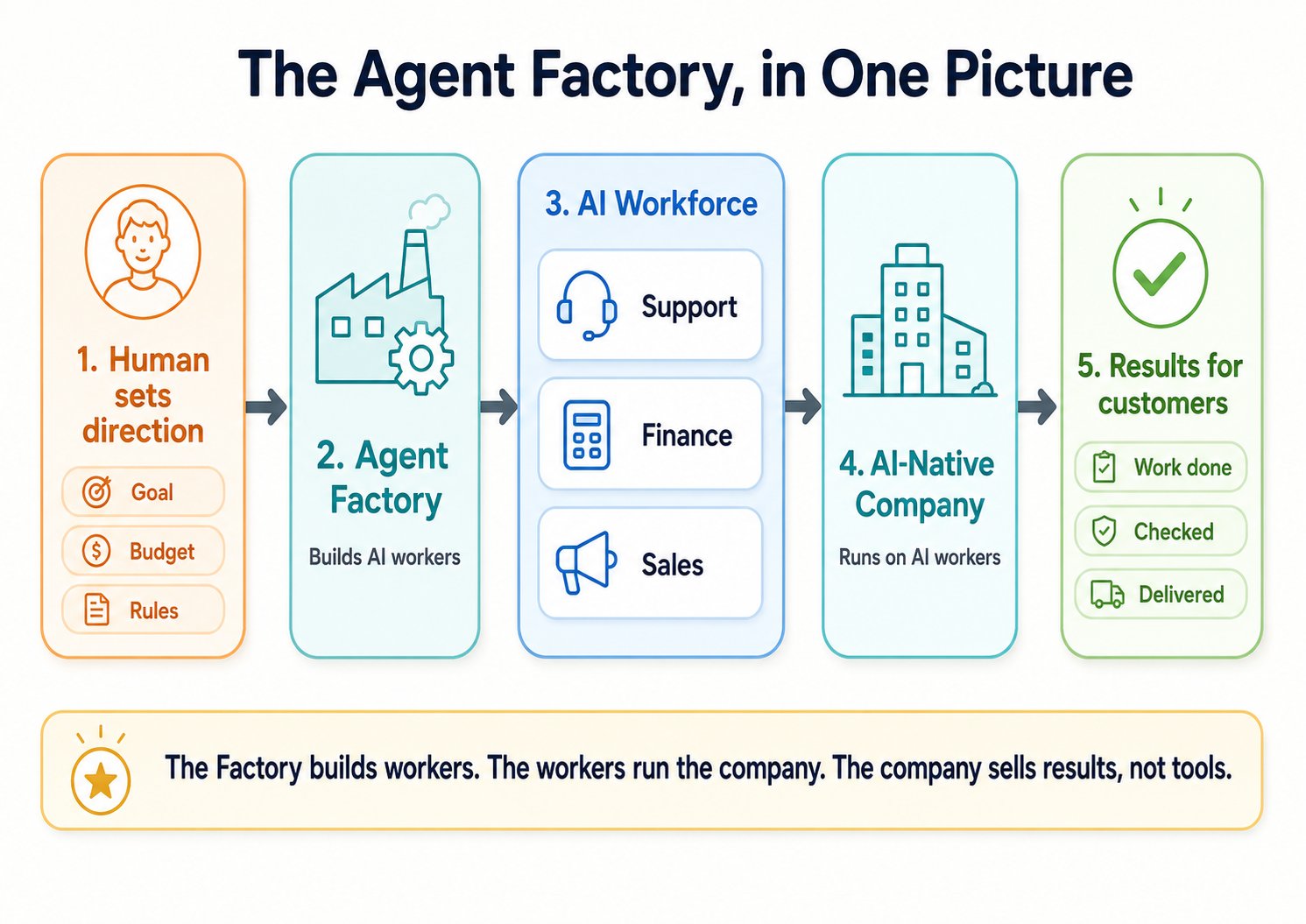

The complete picture in five stages. (1) A human sets the direction — goal, budget, rules. (2) The Agent Factory builds AI workers. (3) The workers staff different departments — Support, Finance, Sales. (4) Together they run the AI-Native Company. (5) The company delivers finished results to customers.

2. AI Can Already Pay For Things

When we say AI workers will become "independent economic actors," it may sound like science fiction. It is not. The basic systems that let AI agents pay for things are already working in 2026.

Here are four payment systems built so AI agents can buy and sell for humans. You do not need to remember the names. The point is: this is real today.

- ACP — Built by OpenAI (the company that makes ChatGPT) and Stripe (a large online payment company). When ChatGPT buys something for you inside a chat — for example, ordering a product you asked about — ACP handles the payment.

- AP2 — Google's version. More than 60 companies have agreed to use it. It works like a digital permission slip. The human signs a slip that says, "This AI agent can spend up to $500 on cloud services this month." The agent carries this slip when it tries to pay. The slip is signed with strong digital security, so no one can fake it.

- x402 — A payment standard built around cryptocurrency. It was first made by Coinbase. In early 2026, Stripe connected it to normal payment systems. Now crypto payments and card payments can use the same agent payment system.

- MPP — A system built for very small, repeated payments. Imagine an AI agent that streams a service and pays a fraction of a cent every second. With normal credit cards, the fees would eat the payment. MPP makes this kind of tiny payment possible.
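The "permission slip" idea behind AP2 can be sketched in a few lines. This is not AP2's real format. AP2 defines its own signed permission records; this sketch swaps in an HMAC from Python's standard library only to show the same idea: a slip cannot be changed without the signature check failing. The field names, the secret key, and the $500 limit are assumptions for illustration.

```python
import hashlib
import hmac
import json

# Assumption: a secret key shared by the human's wallet and the payment checker.
# Real systems would use public-key signatures, not a shared secret.
SECRET_KEY = b"demo-key-not-for-production"


def sign_slip(slip: dict) -> str:
    """Sign a permission slip. sort_keys keeps the JSON text stable."""
    payload = json.dumps(slip, sort_keys=True).encode()
    return hmac.new(SECRET_KEY, payload, hashlib.sha256).hexdigest()


def can_pay(slip: dict, signature: str, category: str,
            amount: float, spent_so_far: float) -> bool:
    """Allow a payment only if the slip is genuine and the spend stays inside it."""
    if not hmac.compare_digest(sign_slip(slip), signature):
        return False  # the slip was changed after the human signed it
    if category != slip["category"]:
        return False  # the slip does not cover this kind of purchase
    return spent_so_far + amount <= slip["monthly_limit_usd"]


slip = {"agent": "cloud-buyer-01", "category": "cloud services", "monthly_limit_usd": 500}
signature = sign_slip(slip)

print(can_pay(slip, signature, "cloud services", 120, 300))  # True: 420 is within 500
print(can_pay(slip, signature, "cloud services", 250, 300))  # False: 550 is over 500
forged = dict(slip, monthly_limit_usd=5000)                    # someone raises the limit
print(can_pay(forged, signature, "cloud services", 250, 300))  # False: signature no longer matches
```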

The plumbing is in place. What it changes is the shape of work itself.

3. The Shift From Tools to Results

SaaS sold subscriptions. You paid every month for access. Whether you used the tool well or badly, the seller got paid the same. The seller gave you access. You did the work.

The Agent Factory era sells results. Humans say what they want. AI agents do the work. Humans check whether the result is good.

The middle step — typing, clicking, connecting things, doing the work — is what AI takes over. What stays with humans is the work machines cannot do for us: knowing what we want, and knowing whether we got it.

Three things stay in human hands:

- Intent — knowing what you want, and saying it clearly.

- Verification — checking whether the AI's work is good and correct.

- Outcome ownership — being the person who is responsible for the result.

You cannot give these three things to AI. The judgment, the values, the standard of "good enough" — all of these have to come from a person. AI does the middle part.

4. Your Personal AI Helper

Here is a problem. If a company has many AI workers doing many things, no single human can guide all of them by hand. You cannot write instructions to each one all day. So how do you stay in charge?

The answer: you have your own personal AI agent — one that knows you — and you direct the workforce through it.

This personal AI agent has different names. We call it a delegate. Some people call it a personal agent. The business thinker Don Tapscott calls it identic AI. The word identic means "carrying identity." This agent carries your identity. It knows your judgment, your preferences, and your authority to make decisions. It is not a general assistant that anyone can use. It is your representative. It speaks for you. It makes choices that match your values. It hands work to the right AI workers for you.

Think of it like a second self that runs in parallel with you. It knows what you would say, what you care about, and what you would approve. When small things come up (a routine reply, a scheduling choice, a normal yes-or-no), it handles them the way you would. When something genuinely needs you, it stops and asks. It is less like a servant you give orders to and more like a light copy of you that keeps working while you are busy with something else.
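The "handle small things the way you would, ask about the rest" behavior described above can be sketched as a simple rule. The `Delegate` class, the list of routine kinds, and the spending limit are hypothetical; a real personal agent would use much richer judgment than two checks.

```python
from dataclasses import dataclass, field


@dataclass
class Delegate:
    """Hypothetical personal agent that acts for one human."""
    owner: str
    auto_approve_limit: float  # assumption: the most it may approve without asking
    routine_kinds: set[str] = field(default_factory=set)
    waiting_for_owner: list[str] = field(default_factory=list)

    def handle(self, kind: str, amount: float = 0.0) -> str:
        # Small, routine things: act the way the owner would.
        if kind in self.routine_kinds and amount <= self.auto_approve_limit:
            return "handled"
        # Anything else genuinely needs the human: stop and ask.
        self.waiting_for_owner.append(kind)
        return "asked owner"


ana = Delegate(owner="Ana", auto_approve_limit=100.0,
               routine_kinds={"scheduling", "routine_reply"})
print(ana.handle("scheduling"))            # handled
print(ana.handle("routine_reply", 250.0))  # asked owner (too expensive to decide alone)
print(ana.handle("sign_contract"))         # asked owner (not a routine kind)
```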

So the picture is this:

- The Agent Factory builds the AI workforce of a company.

- Identic AI (your personal agent) is how each human controls that workforce.

You set the direction. Your personal agent turns your direction into specific instructions. The AI workforce does the work. You check the results.

5. The Words You Need to Know

Before we go further, let's lock in four important words. They sound similar, but they mean different things. Mixing them up is the most common reason people get confused.

The Agent Factory is the method. It is a careful way of designing, building, and using AI workers. The Agent Factory is what you learn to use. It is not a product you buy. It is a practice you adopt — like adopting a new way of running a kitchen or a new way of running a team.

The AI-Native Company is the output. It is the running business that the Agent Factory produces. Most of its staff are AI workers. A management layer holds them together. Humans direct everything at the top and at the edges. The AI-Native Company is what you end up running. In this book, we also call it the Agentic Enterprise.

AI Workers are the workforce. They are the role-based AI agents inside the AI-Native Company. They get hired, given work, kept on the team, and retired when their role ends. We also call them Digital FTEs or Digital Workers. They are the real labor of the company.

The system of record is the foundation. It is the company's official memory. It is the place that holds the truth: customer records, money records, stock counts, contracts, support tickets, and all the other real facts of the business. The AI workers read from this place. They write back to this place. Without it, an AI worker is only talking. There is no place for its work to stick. (We will explain why this matters so much in a moment.)
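Here is a tiny sketch of what "reading from and writing to the system of record" means. An in-memory SQLite database stands in for the company's official memory. The `tickets` table, its columns, and the `support_worker_close` function are hypothetical, chosen only for illustration.

```python
import sqlite3


def make_system_of_record() -> sqlite3.Connection:
    """An in-memory database standing in for the company's official memory."""
    db = sqlite3.connect(":memory:")
    db.execute("CREATE TABLE tickets (id INTEGER PRIMARY KEY, question TEXT, status TEXT, reply TEXT)")
    db.execute("INSERT INTO tickets (question, status) VALUES (?, ?)", ("Where is my order?", "open"))
    db.commit()
    return db


def support_worker_close(db: sqlite3.Connection, ticket_id: int, reply: str) -> None:
    """Hypothetical AI worker step: read the ticket, then write the result back."""
    row = db.execute("SELECT status FROM tickets WHERE id = ?", (ticket_id,)).fetchone()
    if row is None or row[0] != "open":
        raise ValueError(f"ticket {ticket_id} is not open")
    db.execute("UPDATE tickets SET status = 'closed', reply = ? WHERE id = ?", (reply, ticket_id))
    db.commit()  # the work now "sticks": anyone who reads the record sees it


db = make_system_of_record()
support_worker_close(db, 1, "Your order ships tomorrow.")
print(db.execute("SELECT status, reply FROM tickets WHERE id = 1").fetchone())
# ('closed', 'Your order ships tomorrow.')
```

Because the worker checks the record before acting, a second attempt to close the same ticket fails instead of silently doing the work twice.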

Putting it together: The Factory builds the Company. The Company employs the Workers. The Workers do their work against the system of record.

There is one more pair of words to introduce here, because we will keep using them.

An engagement is a single session where a human works with a general AI agent. There are two types:

- Problem-solving engagement — You sit down with an AI agent to solve a problem. You get an answer. Example: a developer using an AI coding helper to fix a bug. The session starts, the work finishes, the session ends.

- Manufacturing engagement — You sit down with an AI agent to build a new AI worker. The new AI worker will keep working long after this session ends. Example: building a customer support AI that runs every day, answering tickets.

Same tools. Different goal. We will come back to this difference.

6. Rules That Never Change vs. Tools That Will Change

In the next sections we will describe the design of an AI-Native Company. As we do, you will see two kinds of statements. They look similar, but they mean very different things.

An invariant is a structural rule that must always be true. The word invariant is just a fancy word for "a rule that does not change." Think of it like a building rule that says, "Every building must have a strong wall to hold up the roof." The rule does not say which material the wall is made of. Brick, concrete, steel — any of them works. But something must hold up the roof.

A reference implementation is the specific product we use right now to follow that rule. It is today's best choice. Next year, a better choice may appear. When that happens, you swap in the new product, and the rest of your system keeps working — because your system was built around the rule, not the product.

In this book, when we name a product — for example, "we use OpenClaw as the personal agent" — that product is a reference implementation. The rule is "every human needs a personal agent." OpenClaw is one way to follow the rule. Next year, there may be a better personal agent. You can switch to it without breaking anything else.

This is important. Technology moves fast. The products you use today may not exist in two years. But the rules change much more slowly. The building stands even when the furniture changes.

When we name products, treat them as 2026's best choice. Not as the rule itself.

A simple guideline for reading this book: do not memorize the product names. Memorize the job each product performs. The job is what stays. The product is what changes.
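In code, the split between a rule and a reference implementation is usually written as an interface. In this hypothetical sketch, `PersonalAgent` plays the role of the rule, and the two classes stand in for "today's product" and "next year's product." None of these names come from a real product.

```python
from typing import Protocol


class PersonalAgent(Protocol):
    """The rule: every human needs something that can act on their behalf."""
    def act_for(self, owner: str, request: str) -> str: ...


class TodaysAgent:
    """Hypothetical stand-in for today's reference implementation."""
    def act_for(self, owner: str, request: str) -> str:
        return f"[today] {request} for {owner}"


class NextYearsAgent:
    """Hypothetical stand-in for a better product that appears later."""
    def act_for(self, owner: str, request: str) -> str:
        return f"[next year] {request} for {owner}"


def morning_routine(agent: PersonalAgent, owner: str) -> str:
    # The rest of the system depends only on the rule, never on a product name.
    return agent.act_for(owner, "summarize inbox")


print(morning_routine(TodaysAgent(), "Ana"))     # [today] summarize inbox for Ana
print(morning_routine(NextYearsAgent(), "Ana"))  # [next year] summarize inbox for Ana
```

Switching products changes only which object is passed in. `morning_routine` itself does not change.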

7. The Old World vs. The New World

Here is a side-by-side comparison of how things used to work and how they work in the Agent Factory era.

The shift in one picture. Old model: you rent software and do the work yourself. New model: you hire AI workers and they deliver finished results to you. The table below shows the full breakdown.

| Question | The SaaS Era (Tools) | The Agent Factory Era (Labor) |
|---|---|---|
| What is sold? | Software tools | AI workers |
| How is the price set? | Monthly fee, often per user ("per seat") | Per result delivered |
| Who does the work? | The human, using the tool | The AI, supervised by the human |
| Who buys the things the work needs? | Humans buy tools and services | AI agents buy compute, data, and services on their own |
| What is the human's job? | Operator: the one pressing buttons | Supervisor: the one setting direction and checking quality |
| How do tools connect? | One-by-one custom connections | A universal connector called MCP (explained below) |
| Focus | How the work is done | That the work is done, and done correctly |

The shift from "per seat" to "per result" is one of the biggest changes. Today, a company might pay $30 per month for each employee who uses a tool. In the new world, it pays for results instead: for example, $5 for each support ticket closed, $50 for each good sales lead, or $200 for each finished monthly close. What you pay for matches what you receive.
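The two pricing models can be compared with simple arithmetic. The $30 seat price and the $5 ticket price come from the example above. The team size and ticket counts below are made-up numbers for illustration.

```python
def per_seat_cost(seats: int, price_per_seat: float) -> float:
    """SaaS pricing: you pay for access, whether or not work gets done."""
    return seats * price_per_seat


def per_result_cost(results: int, price_per_result: float) -> float:
    """Result pricing: you pay only for finished work."""
    return results * price_per_result


# Assumed example: a 10-person support team, $30 per seat per month.
print(per_seat_cost(10, 30.0))    # 300.0, the same in a quiet month and a busy month

# Assumed example: $5 per closed ticket.
print(per_result_cost(40, 5.0))   # 200.0 in a quiet month (40 tickets closed)
print(per_result_cost(400, 5.0))  # 2000.0 in a busy month (400 tickets closed)
```

The two numbers do not buy the same thing. The per-seat fee buys only the tool, and the team still does the work. The per-result fee buys the finished work itself, so the bill rises and falls with the work delivered.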

8. The Three Layers: Intent, Engine, Result

The Agent Factory has three high-level layers.

- Intent — the plan. What do you want done? What are the goals? What are the limits? What is the budget? What is allowed?

- The Production Engine — the system that turns intent into a result. We will explain this in the next section.

- Result — the finished work, delivered to you, checked for correctness, and improved over time through feedback.

You write the intent. The engine produces the result. Humans check and improve. That is the loop.

9. How the Production Engine Works

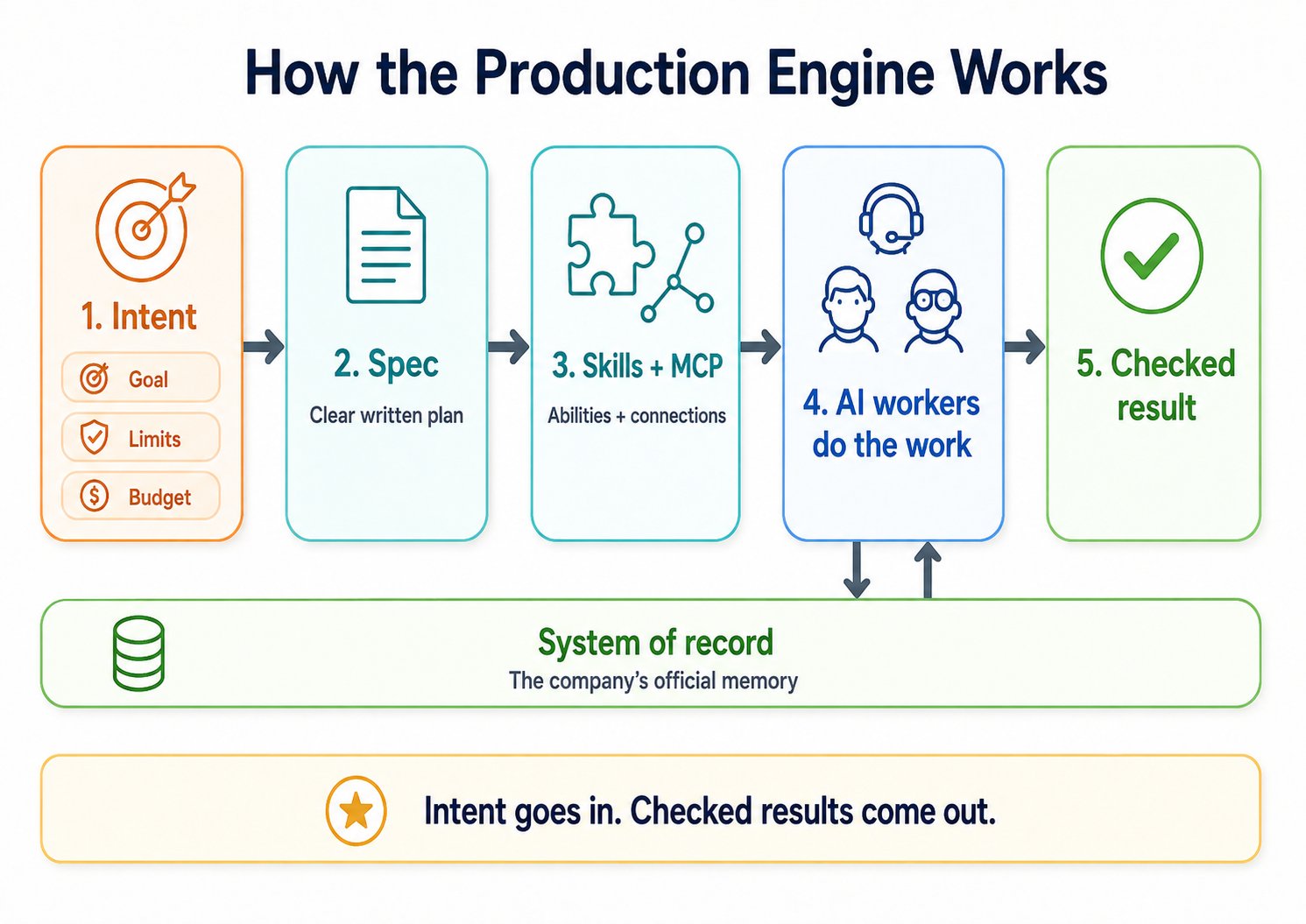

The production engine is the most important idea in this entire book. It is the system that takes what you want and turns it into what you get. Everything that happens between your instruction and the final result lives inside this engine.

It is not an app you download. It is not a single piece of software. It is a design. It is a plan, plus a set of rules, for building systems where AI workers are created, combined, and put to work.

The car factory example. Picture a car factory. Raw materials — steel, rubber, glass — come in at one end. The steel moves to the welding station. There, workers shape it into a frame. The frame moves to the paint station. There, it gets its color. Then it moves to the assembly station. There, the engine, seats, tires, and electronics are added. At the end of the line, a finished car rolls out. It is checked and ready to drive.

The Agent Factory works the same way. The only differences are:

- The raw material is your intent — what you want done.

- The stations are AI workers — each one handling a part of the job.

- The finished product is a checked result — the real answer, confirmed to be correct.

Four things power this factory.

Specs are written instructions. They tell AI workers what to do: the goal, the limits, the rules for success. A spec is not a casual chat message. It is a careful written plan, like the kind a project manager writes for a human team. Working this way, with a written spec at the start of every job, is called spec-driven development.
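A spec can be pictured as a form with required parts, in contrast to a one-line chat message. The field names below (`goal`, `limits`, `budget_usd`, `success_criteria`) are assumptions chosen to match the description above. They are not a standard spec format.

```python
from dataclasses import dataclass, field


@dataclass
class Spec:
    goal: str = ""
    limits: list[str] = field(default_factory=list)
    budget_usd: float = 0.0
    success_criteria: list[str] = field(default_factory=list)

    def missing_parts(self) -> list[str]:
        """List what an AI worker would still have to guess."""
        missing = []
        if not self.goal:
            missing.append("goal")
        if not self.limits:
            missing.append("limits")
        if self.budget_usd <= 0:
            missing.append("budget")
        if not self.success_criteria:
            missing.append("success criteria")
        return missing


chat_message = Spec(goal="make support better")
spec = Spec(
    goal="Reply to every open support ticket within 4 hours",
    limits=["No refunds above $100 without a human", "Never share customer data"],
    budget_usd=200.0,
    success_criteria=["Reply answers the question", "Tone matches the style guide"],
)
print(chat_message.missing_parts())  # ['limits', 'budget', 'success criteria']
print(spec.missing_parts())          # []
```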

Skills are the abilities each AI worker brings to the job. A skill is a small, portable package. It teaches an AI worker how to do one specific thing well. Examples: "how to write a professional email" or "how to read a sales contract." Skills follow an open standard called the Agent Skills format. Anthropic (the company that makes Claude AI) created the standard, and many other AI tools now support it too. Because skills follow a shared format, you can move them between different AI systems, like a USB stick that works in any laptop.
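In the Agent Skills format, a skill is a folder whose main file, `SKILL.md`, starts with a short header (YAML frontmatter) that includes a `name` and a `description`, followed by the instructions themselves. The sketch below reads that header with plain string handling instead of a YAML library, so it only handles simple `key: value` lines. The skill content is made up for illustration. Check the published specification for the full rules.

```python
SKILL_MD = """---
name: professional-email
description: Writes clear, polite business emails. Use when drafting emails to customers.
---
# Professional email

1. Start with a one-line summary of the point.
2. Keep paragraphs short.
3. End with a clear next step.
"""


def read_skill_header(text: str) -> dict[str, str]:
    """Read simple `key: value` lines between the opening and closing '---' lines."""
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        raise ValueError("SKILL.md should start with a '---' header")
    header = {}
    for line in lines[1:]:
        if line.strip() == "---":
            return header
        key, _, value = line.partition(":")  # split on the first colon only
        header[key.strip()] = value.strip()
    raise ValueError("header was never closed with '---'")


header = read_skill_header(SKILL_MD)
print(header["name"])  # professional-email
```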

Feedback loops are how the system learns. After each result is delivered, the result is reviewed. What we learn is fed back into the system. Mistakes are fixed. Good patterns are kept. The factory gets better over time.

MCP is the universal connector. The letters MCP stand for Model Context Protocol. The easiest way to understand MCP: think of it as USB, but for AI. Before USB, every device had its own special cable. Printers had one type. Mice had another. Cameras had a third. It was a mess. USB fixed this. Now every device uses the same cable. MCP does the same thing for AI. It lets any AI worker connect to any tool or any data source through one shared standard. Without MCP, every AI worker would need a custom connection for every tool. With MCP, every important data store can be reached by any AI worker that has permission.
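Under the hood, MCP messages use JSON-RPC 2.0. The sketch below builds the kind of request an AI worker sends to ask an MCP server to run a tool: the method `tools/call`, with the tool's `name` and `arguments`. The tool name `lookup_customer` and its argument are hypothetical. The sketch also does the arithmetic behind the USB comparison. With custom connections, every worker needs its own link to every tool. With a shared standard, each worker and each tool connects once.

```python
import json


def tool_call_request(request_id: int, tool_name: str, arguments: dict) -> str:
    """Build a JSON-RPC 2.0 request asking an MCP server to run one tool."""
    message = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


def custom_connections(workers: int, tools: int) -> int:
    """Without a shared standard: one custom link per (worker, tool) pair."""
    return workers * tools


def standard_connections(workers: int, tools: int) -> int:
    """With a shared standard: each worker and each tool implements it once."""
    return workers + tools


print(tool_call_request(1, "lookup_customer", {"customer_id": "C-1042"}))
print(custom_connections(20, 15))    # 300
print(standard_connections(20, 15))  # 35
```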

Together: Skills give AI workers their abilities. MCP gives them their connections. These two open standards are the floor of the factory.

Below everything sits the system of record — the company's official memory we talked about earlier. Every action by an AI worker either reads from this memory or writes to it. The system of record is the truth of the business.

The production engine in one picture. Intent (with goal, limits, budget) goes in on the left. It flows through a spec, then through skills and MCP, then through AI workers. A checked result comes out on the right. The whole engine sits on top of the system of record — the company's official memory.
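The flow in that picture can be sketched as a small loop. A worker drafts a result, a check compares it against the spec's success rules, anything that fails is fed back as a note for the next try, and only a checked result is written to the system of record. Everything here is a hypothetical stand-in: `draft_reply` plays an AI worker, the checks are simple Python functions, and a dictionary plays the system of record.

```python
from typing import Callable

Check = Callable[[str], bool]


def draft_reply(question: str, notes: list[str]) -> str:
    """Hypothetical AI worker. It improves when feedback notes are present."""
    reply = f"Answer to: {question}"
    if "add a next step" in notes:
        reply += " Next step: reply to this email if anything is unclear."
    return reply


def run_engine(question: str, checks: dict[str, Check], record: dict[str, str],
               max_tries: int = 3) -> bool:
    notes: list[str] = []
    for _ in range(max_tries):
        result = draft_reply(question, notes)
        failed = [name for name, check in checks.items() if not check(result)]
        if not failed:
            record[question] = result  # only checked work reaches the official memory
            return True
        notes.extend(failed)  # feedback loop: what failed becomes input for the next try
    return False  # give up and hand the case to a human


checks = {
    "add a next step": lambda text: "Next step:" in text,
    "stay short": lambda text: len(text) < 200,
}
system_of_record: dict[str, str] = {}
print(run_engine("Where is my order?", checks, system_of_record))  # True
print(system_of_record["Where is my order?"])
```

The first draft fails the "next step" check. That failure becomes a note, and the second draft passes both checks and is saved.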

10. AI Agents That Can Buy Things

We touched on this earlier. Now let's go deeper. This changes the economics.

Today's AI agents do tasks. Tomorrow's AI agents will take part in markets. The change is from "AI as a tool" to "AI as a buyer."

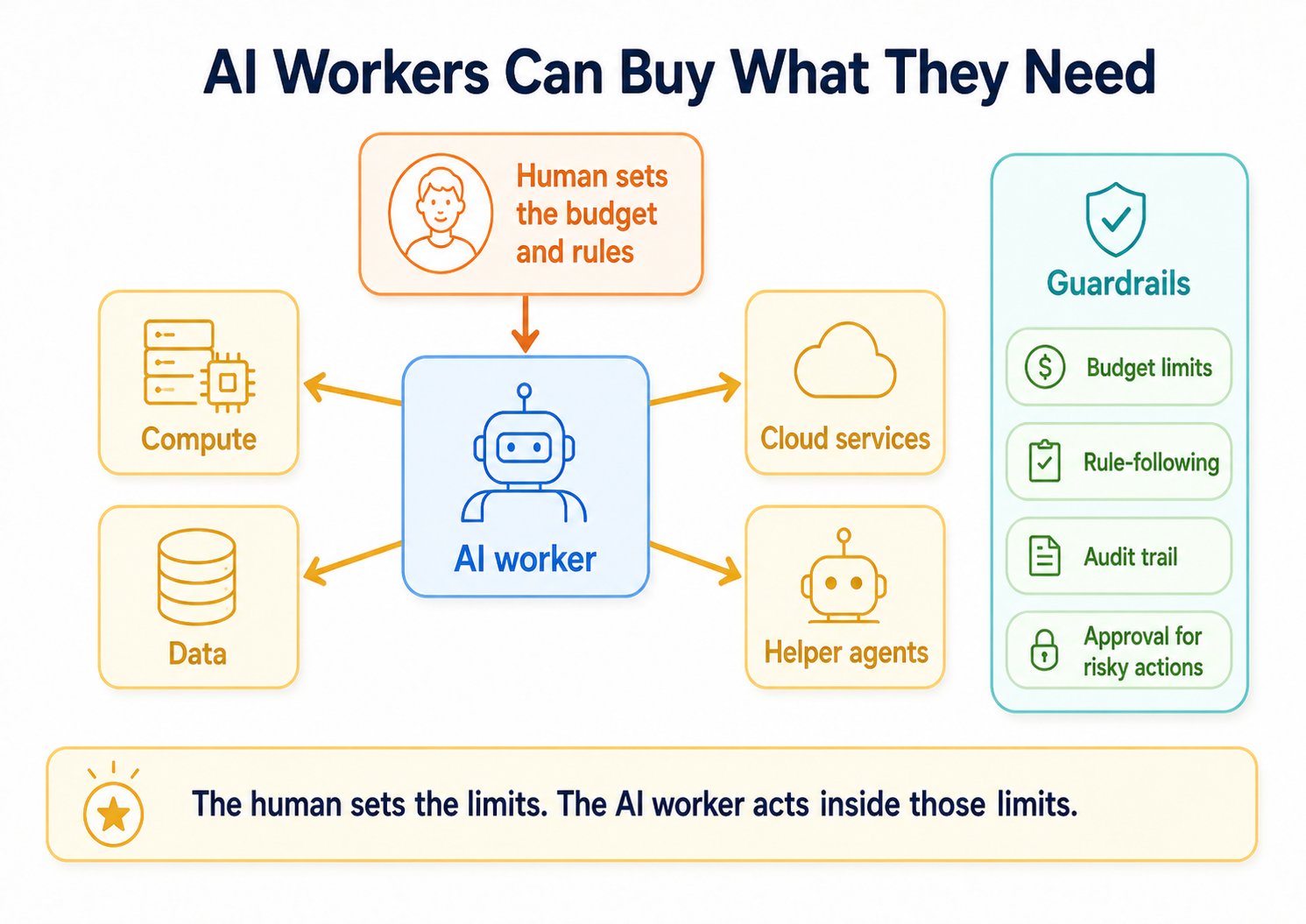

AI workers as economic actors. The AI worker can buy compute, data, cloud services, and even help from other AI workers — but only inside guardrails the human set: budget limits, rule-following, an audit trail of every action, and approval gates for risky decisions.

Picture an AI worker with this goal: "Reduce customer churn by 15%." (Churn means the rate at which customers leave a business.) To reach this goal, the AI worker might need to:

- Buy computing power to train a model that predicts which customers might leave.

- Pay for richer customer data from a data provider.

- Set up and pay for cloud services to run the solution.

All of this — inside a budget and a set of rules that the human supervisor set first.

Notice what the AI worker is not doing. It is not asking a human for approval on every small decision. The human set the budget and the rules. Inside those rules, the AI worker acts on its own.

The hard problem here is not ability. The AI can already do the work. The hard problem is trust — making sure the AI stays inside the rules. So the focus is moving to things like:

- Rule-following — making sure the agent stays inside the limits the human set.

- Audit trails — keeping a complete record of every choice and every transaction the agent made, so we can check later.

- Liability — figuring out who is legally responsible when something goes wrong.
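The guardrails in this section (a budget limit, an approval gate for risky spending, and an audit trail) can be sketched together. The `GuardedWallet` class, the $1,000 budget, and the $250 approval threshold are hypothetical numbers chosen for the example.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class GuardedWallet:
    budget_usd: float
    approval_needed_above_usd: float
    spent_usd: float = 0.0
    audit_trail: list[dict] = field(default_factory=list)

    def buy(self, item: str, price_usd: float, human_approved: bool = False) -> str:
        if self.spent_usd + price_usd > self.budget_usd:
            outcome = "blocked: over budget"
        elif price_usd > self.approval_needed_above_usd and not human_approved:
            outcome = "waiting: needs human approval"
        else:
            self.spent_usd += price_usd
            outcome = "bought"
        # Every attempt is recorded, including the ones that were refused.
        self.audit_trail.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "item": item,
            "price_usd": price_usd,
            "outcome": outcome,
        })
        return outcome


wallet = GuardedWallet(budget_usd=1000, approval_needed_above_usd=250)
print(wallet.buy("GPU hours for churn model", 200))               # bought
print(wallet.buy("Customer data set", 400))                       # waiting: needs human approval
print(wallet.buy("Customer data set", 400, human_approved=True))  # bought
print(wallet.buy("Extra cloud servers", 500))                     # blocked: over budget
print(len(wallet.audit_trail))                                    # 4
```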

When AI workers can buy things, the money side of an AI-Native Company changes deeply. The company does not just use resources that humans gave it. It finds its own resources. It looks for what it needs. It compares options. It buys in real time. The company becomes a system that takes care of its own needs.

The lesson for builders: design your AI agents and your systems for buying and selling from day one. Agents need budgets, not just permissions. They need contracts for results, not just access keys. The companies that get this right will capture the next wave of value — just like the companies that moved from subscriptions to result-based pricing are capturing this one.

11. Humans Are Not Replaced — They Are Promoted

A common fear: AI agents will replace people.

The evidence points the other way. For many kinds of tasks, a human working with AI does better than either one alone. The Agent Factory does not remove the human. It promotes them.

- From operator → to supervisor.

- From typist → to editor.

- From coder → to designer of results.

Humans are promoted, not replaced. The human moves up to a higher role. AI takes over the middle steps. The human keeps the start (direction) and the end (judgment and responsibility).

This changes what it means to be a "tech professional." A web developer or a mobile developer is not only someone who writes code in a specific language. They are a technology expert. They understand systems. They understand how data flows. They understand how apps connect to each other. They understand what users really need. In the Agent Factory era, this expertise becomes much more valuable. It is no longer spent writing buttons one at a time. It is spent designing, deploying, and supervising AI workers that deliver whole products.

The developer does not disappear. The developer does more.

Steve Jobs figured out the working rhythm for this many years ago, when he was leading humans. We can borrow it directly.

12. The 10-80-10 Working Rhythm

Steve Jobs followed what is now called the 10-80-10 rule:

- Spend the first 10% of your time setting the vision.

- Let your team do the work for the middle 80%.

- Come back for the final 10% to polish, improve, and approve.

The business teacher Dan Martell explains it the same way: 10% for ideas, 80% for doing the work, 10% for refining.

Jobs did not start out this way. Early in his career, he was famous for controlling every small detail. He once told the team exactly how every pixel of the Macintosh's calculator should look. Over time, he changed. He learned to trust good people with the middle 80%. Apple became one of the most valuable companies in the world partly because of that change.

Now replace "good people" with "AI workers," and you have the working rhythm of the Agent Factory.

| Step | Jobs at Apple | The Agent Factory |
|---|---|---|
| First 10%: Intent | Jobs sets the vision and the limits | The human writes the spec: goals, limits, budget, rules |
| Middle 80%: Work | Apple's teams build the product | AI workers do the work: use tools, hand work to other AI workers, deliver results |
| Final 10%: Check | Jobs polishes and says "ship it" | The human reviews, improves, and approves the checked result |

The 10-80-10 working rhythm. First 10% is human direction. Middle 80% is the AI worker. Final 10% is human checking.

In early 2026, the AI coding company Cursor reported that 35% of the changes added to its own product were made by AI agents working on their own on cloud computers. The human developers guided the agents by setting the problem and reviewing the finished work. They did not guide the code line by line. Cursor's CEO Michael Truell expects that most software development will look this way within a year.

The 10-80-10 rhythm is not a prediction anymore. It is a measurement of where the leading companies already work.

What the human checks is also changing. In the past, a developer reviewed code line by line. Now, AI agents work for hours on cloud computers. They return short videos of what they did, working previews you can click around in, and easy-to-read logs. You will not read code line by line anymore. You will watch a short video or click around in a preview to check the work. This matters: a human cannot read twelve sets of code changes at once. But a human can scan twelve previews at once. This is what makes it possible for many AI agents to work in parallel.

This rhythm is not only for coding. It works for every kind of professional job. The first 10% — clear thinking, setting context, careful prompting — is only for humans. The middle 80% — summarizing, generating, analyzing, formatting, drafting — is what AI takes over. The final 10% — judgment, refinement, quality control — is only for humans again.

You stop spending 80% of your time doing the work. You start spending 100% of your attention on the 20% that only a human can do — setting direction at the start, and making sure the quality is good at the end.

13. Two Layers: Personal and Workforce

We can now draw the full picture of the future company.

AI workers are how the work gets done. But humans do not talk to AI workers directly. That would be too hard to manage. Instead, humans talk to their personal AI agent (the delegate or identic AI from earlier). The personal agent turns human intent into instructions for the workforce.

This gives us a Two-Layer Model:

| Layer | What it contains | Who it serves | What it does |
|---|---|---|---|
| Edge Layer | Your personal AI agent (your delegate) | The individual human | Turns your intent into instructions, hands work to AI workers, makes choices for you |
| AI Workforce Layer | Role-based AI workers | The company | Does the tasks, runs the workflows, delivers the checked results |

The Two-Layer Model in motion. Intent flows down: you → your personal delegate → the workforce coordinator → AI workers. Results flow back up to you, already checked. You do not manage every worker by hand. You direct the workforce through your delegate.
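For readers who build software, the Two-Layer Model can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: each AI worker is modeled as a plain function, and the "check" is only a test that the result is not empty. The names `Delegate` and `Coordinator` are hypothetical; they are not the interface of OpenClaw, Paperclip, or any other product.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

# A hypothetical AI worker: takes a task description, returns a result.
Worker = Callable[[str], str]


@dataclass
class Coordinator:
    """Workforce layer: knows which worker handles which role."""
    workers: Dict[str, Worker] = field(default_factory=dict)

    def run(self, role: str, task: str) -> str:
        if role not in self.workers:
            raise KeyError(f"no worker for role {role!r}")
        return self.workers[role](task)


@dataclass
class Delegate:
    """Edge layer: turns one human intent into tasks and checks the results."""
    coordinator: Coordinator

    def handle(self, intent: str, plan: List[Tuple[str, str]]) -> List[str]:
        results = []
        for role, task in plan:
            result = self.coordinator.run(role, f"{intent}: {task}")
            if result.strip():          # a very simple check before passing it up
                results.append(result)
        return results


coordinator = Coordinator({
    "support": lambda task: f"[support] {task}",
    "bookkeeping": lambda task: f"[bookkeeping] {task}",
})
delegate = Delegate(coordinator)
print(delegate.handle("Close March", [("bookkeeping", "reconcile the bank account"),
                                       ("support", "answer refund tickets")]))
```

The human only ever talks to the `Delegate`. The workers never receive instructions from the human directly, and only checked results travel back up.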

Both layers are needed.

- Without an AI workforce behind it, a personal agent is just a chatbot with no one to give orders to.

- Without personal agents at the edge, the workforce sits idle, waiting for humans to write instructions one at a time. This is exactly the problem the Agent Factory was built to solve.

The Two-Layer Model completes the picture. An industrial-style workforce in the middle of the company. Human control at the edge. Clear written specs as the language between them.

14. The Two Modes of Using a General AI Agent

Earlier we said there are two kinds of engagements. Now we can place them correctly.

A general AI agent is a powerful AI tool that humans use directly. Some are made for engineers — examples are Claude Code and OpenCode. These are AI tools that engineers use in the terminal (the text-only screen developers work in). Some are made for non-engineers — examples are Claude Cowork and OpenWork. These are AI tools for knowledge workers like finance analysts, lawyers, marketers, and writers.

Humans use these general agents in two different modes.

| Mode | Who uses it, with what | What is delivered at the end | Governed by |
|---|---|---|---|
| Problem-solving engagement | Engineers use Claude Code or OpenCode. Domain experts (non-engineers) use Claude Cowork or OpenWork. | A finished result for the human | Seven Principles |
| Manufacturing engagement | Anyone, always with Claude Code or OpenCode | A new AI worker that joins the workforce | Seven Invariants |

Two modes, one core difference. Mode 1 is a conversation — you talk to the AI, you get a finished result, the session ends. Mode 2 is a production line — you use the AI to build a new AI worker that keeps running long after this session. One mode solves today's problem. The other mode builds tomorrow's worker.

Mode 1 — Problem-solving. A developer opens Claude Code and improves a piece of software. A finance analyst opens Claude Cowork and rebuilds the monthly financial close. The session starts. The work finishes. The session ends. No new permanent AI worker is built. The general agent itself does the work, just for that session. When the session is over, it is over.

Problem-solving engagements split by audience. Engineers reach for Claude Code or OpenCode — terminal-native tools tuned for code, infrastructure, and systems work. Domain experts reach for Claude Cowork or OpenWork — knowledge-work tools tuned for documents, spreadsheets, briefs, and reviews. Same engagement mode. Same rules. Two interface families.

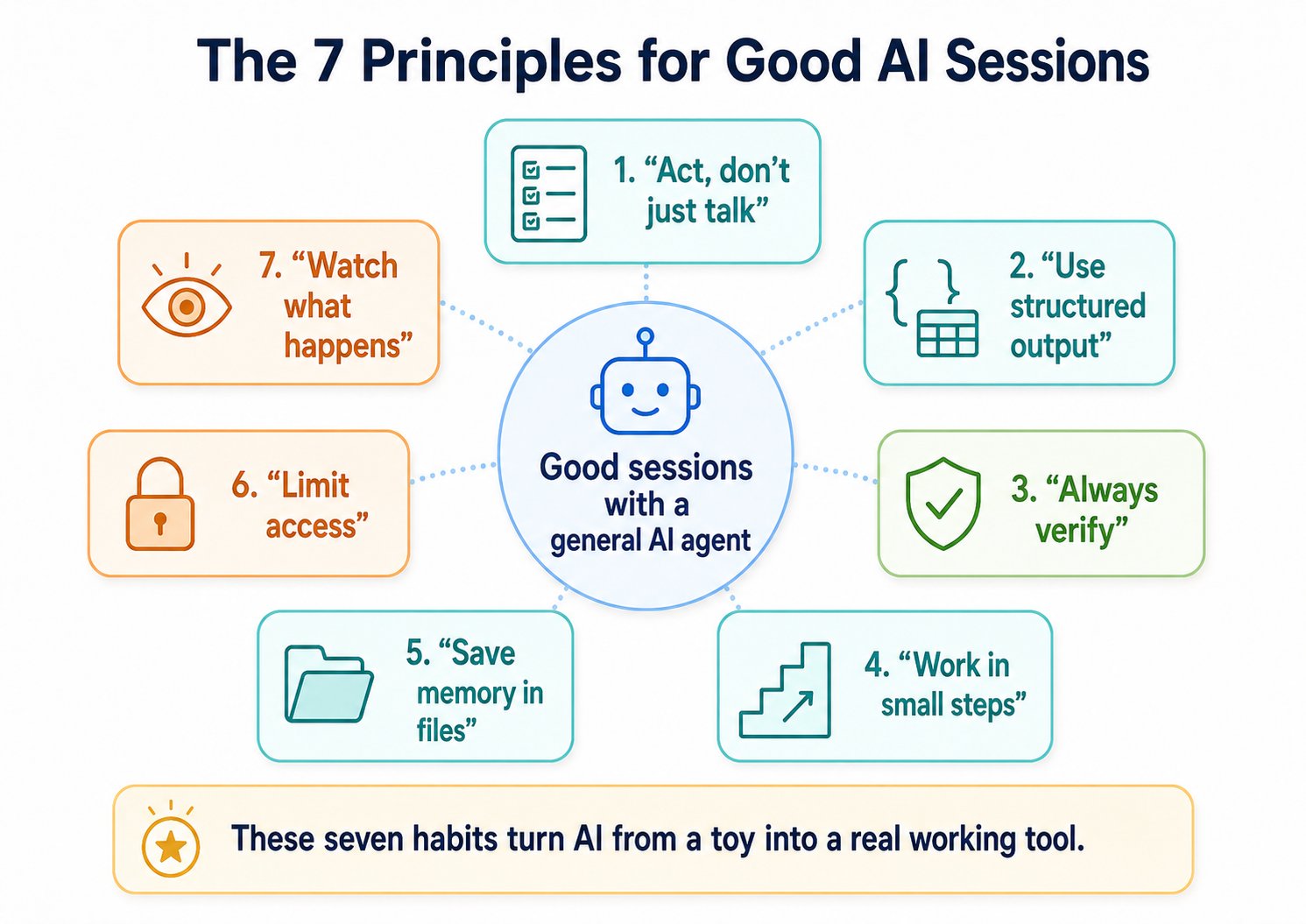

This mode is governed by the Seven Principles of General Agent Problem Solving. These are the seven rules of a good problem-solving session. They came from looking at thousands of sessions — successful ones and failed ones — across coding, contract review, financial models, hiring, and research work. The same seven rules apply to all four tools. Only the surface changes.

- Bash is the Key: let the AI act, not just talk. A general AI agent is most useful when it can really do things: run commands, read files, save work. (Bash is the text-based command system that lets a computer run commands directly.) Make sure your AI can run real commands. Do not let it only describe what it would do.

- Code as Universal Interface: use structure when precision matters. When you need a precise result, ask the AI for a structured format, such as a table, a list, a code block, a checklist, or a fixed outline. Do not ask for a paragraph. Structure makes the result sharper. It also makes mistakes easier to find.

- Verification as Core Step: always check the work. Every result needs a check. Run tests on code. Score memos against a clear standard. Have a second AI review the first AI's work. "It looks right" is not enough. Confident-looking output can still be wrong.

- Small, Reversible Decomposition: take small steps you can undo. Break work into small pieces. Save each piece before moving to the next. Never let the AI run for an hour without a save point. One big change can wipe out hours of work when something goes wrong.

- Persisting State in Files: files are the memory. The chat history is temporary. It disappears. Files are permanent. Anything worth remembering across sessions (decisions, plans, conventions, customer details) should be written to a file. The next session will read it.

- Constraints and Safety: give the AI only the access it needs. Start with limited access. Read-only at first. Approve-each-step at first. Watch how the AI behaves. Give it more access only after you have seen it work correctly inside tight limits. Do not give an AI broad access from day one.

- Observability: watch what the AI does. If you cannot see what the AI is doing, you cannot trust it. Every action should be visible, through logs, a step-by-step view, a short video, or a preview you can click around in. If you cannot watch the work, you cannot check it.
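Two of the principles above, small reversible steps and files as memory, can be sketched together in Python. This is a minimal illustration, assuming a plan is a list of named steps and that progress is saved to a JSON file called `progress.json` (a hypothetical name). Each finished step is written to the file right away, so a new session can read the file and continue instead of starting over.

```python
import json
import os
import tempfile
from typing import Callable, Dict, List, Tuple

Step = Tuple[str, Callable[[], str]]


def run_with_save_points(steps: List[Step], path: str) -> Dict[str, str]:
    """Run steps in order, saving after each one; skip steps already saved."""
    done: Dict[str, str] = {}
    if os.path.exists(path):
        with open(path) as f:
            done = json.load(f)                 # memory from an earlier session
    for name, action in steps:
        if name in done:
            continue                            # already finished: do not redo it
        done[name] = action()
        with open(path, "w") as f:
            json.dump(done, f)                  # save point after every small step
    return done


runs = {"outline": 0, "draft": 0}


def outline() -> str:
    runs["outline"] += 1
    return "outline written"


def draft() -> str:
    runs["draft"] += 1
    if runs["draft"] == 1:
        raise RuntimeError("session crashed")   # simulate a failure mid-plan
    return "draft written"


path = os.path.join(tempfile.mkdtemp(), "progress.json")
steps = [("outline", outline), ("draft", draft)]
try:
    run_with_save_points(steps, path)
except RuntimeError:
    pass                                        # the outline is already on disk
result = run_with_save_points(steps, path)      # a new session picks up the work
print(result, runs)
```

After the crash, the second session skips the outline (it is already in the file) and only redoes the draft.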

Together, these seven rules turn a powerful AI tool from a clever toy into something you can ship real work on. The first five rules — act, structure, verify, small steps, files as memory — are the daily discipline. The last two rules — constraints and observability — are how you keep the first five safe.

Seven habits for good sessions with a general AI agent. The first five are the daily working rules. The last two — limit access and watch what happens — keep the work safe.

Mode 2 — Manufacturing. This is when the goal is to build something that lasts — a new AI worker that will keep running long after this session is over. Manufacturing always uses engineering tools (Claude Code or OpenCode). It does not matter if the human is a finance analyst or a marketer. Building an AI worker is mostly a coding task. The same developer might use Claude Code to design, build, and deploy an AI worker that reviews code. The finance analyst, often working with an engineer, might use Claude Code to build an AI worker that does the monthly close every month. The output is not the answer to a problem. The output is the worker that will produce answers, again and again, from now on. This mode is governed by the Seven Invariants — the design rules of any AI-Native Company. We cover them in the next section.

Principles guide the session. Invariants guide the design. A problem-solving engagement is guided by principles because it produces a result that ends with the session — there is no lasting structure to obey. A manufacturing engagement is guided by invariants because its output must fit into a workforce that holds together across many sessions, many agents, and many product cycles.

In both modes, the 10-80-10 rhythm applies. Whether you are using AI to solve your problem or to build a worker that will solve it for you, your time still splits into intent, work, and checking.

15. The Seven Rules That Do Not Change (The Seven Invariants)

We now come to the heart of the book — the seven design rules that any AI-Native Company must follow, no matter which products are used to follow them.

Remember the difference. An invariant is a rule that must always be true. A reference implementation is the product we use in 2026 to follow that rule. The rule is the main idea. The product is one option.

Before we go through the seven rules, let's name the players. In an AI-Native Company:

- You are the leader and the owner. You set the direction.

- A delegate is your personal assistant — the one AI agent that knows your context and speaks for you.

- A management layer is the operating system of the company. It hires AI workers, gives them work, controls budgets, decides what each worker is allowed to do, keeps records, and retires workers when their role ends.

- AI workers are the staff who deliver the results.

- Runtime engines are the software each worker actually runs on. Different workers can run on different engines, depending on the job. Rule 4 below explains why.

- A nervous system carries messages between workers, keeps the company running even when there is a crash, and controls traffic so the workforce keeps working under heavy load.

Every rule below is about how this company runs. Every named product is one option that can be replaced.

Rule 1: The human is the principal.

The rule. Every action in the company starts with a human. The human is the principal — the one who sets the goal, gives the budget, draws the line of what the AI is allowed to do, and owns the result. No exceptions. This part is never given to an AI.

Why it must be there. Direction does not appear by itself. Judgment, values, the right to spend money, and responsibility for results — none of these can be given to a machine. A system that acts without a human in charge is not independent. It is unowned. And unowned systems make results that no one can answer for.

What goes wrong without it. No one is responsible. There is no one whose values the system follows. The budget belongs to no one. The result has no judge.

How it works today. Through written specs, approval points, declared budgets, and check steps. Any method that captures human intent, authority, and responsibility — and hands it down to the rest of the system — follows the rule.

Rule 2: Every human needs a delegate.

The rule. A human cannot personally direct dozens of AI workers by hand. Every human needs a personal AI agent. This agent holds their context, speaks for them, carries their authority, and gives work to the right places for them.

Why it must be there. One person cannot direct twenty AI workers at once. There are not enough hours. And humans type much more slowly than AI works. Without a delegate, the human is forced back into giving instructions by hand — which is exactly the problem the Agent Factory was built to solve.

What goes wrong without it. The human becomes the slow point. The AI workforce waits for instructions. The whole system slows down to human typing speed.

How it works today. OpenClaw is the personal agent we use in this book. Any personal agent that can hold your identity, your context, and your authority — and that can pass work to a management layer — follows the rule.

Rule 3: The workforce needs a management layer.

The rule. A group of AI workers is not a company. The workforce needs a layer that coordinates them. This layer is the operating system of the company. It hires workers. It assigns work. It controls budgets. It approves risky choices. It decides what each worker is allowed to do. It keeps records of who did what at what cost. It retires workers when their role ends.

Why it must be there. Coordination, responsibility, and cost control do not appear by themselves. They need a layer that knows who is doing what, what it costs, what is allowed, what was made, and what happened when something went wrong. AI workers only become controllable as a workforce when a single layer makes them visible — as units of ability, cost, speed, and result. And they only stay affordable when that same layer can shut them down when they are no longer needed.

What goes wrong without it. Workers do the same work twice. Budgets leak. The records break apart. Finance cannot answer what the workforce cost. Operations cannot answer what the workforce made. Retired workers keep running because no one is responsible for stopping them.

How it works today. Paperclip is the management layer we use. It is built as the operating system for an AI-Native Company. Any system that can hire, assign, govern, observe, and retire workers under correct authority follows the rule.
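To make Rule 3 concrete, here is a toy Python sketch of the jobs it gives the management layer: hire, assign, control the budget, keep records, and retire. It assumes each task has a known cost in dollars. The class and method names are hypothetical and are not Paperclip's real interface.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class ManagementLayer:
    budget: float                                          # dollars the human allowed
    spent: float = 0.0
    workers: Dict[str, str] = field(default_factory=dict)  # name -> role
    records: List[str] = field(default_factory=list)       # who did what, at what cost

    def hire(self, name: str, role: str) -> None:
        self.workers[name] = role
        self.records.append(f"hired {name} as {role}")

    def assign(self, name: str, task: str, cost: float) -> bool:
        if name not in self.workers:
            self.records.append(f"refused {task}: {name} is not on the team")
            return False
        if self.spent + cost > self.budget:
            self.records.append(f"refused {task}: over budget")
            return False
        self.spent += cost
        self.records.append(f"{name} did {task} for ${cost:.2f}")
        return True

    def retire(self, name: str) -> None:
        self.workers.pop(name, None)
        self.records.append(f"retired {name}")


mgmt = ManagementLayer(budget=1.00)
mgmt.hire("w1", "support")
print(mgmt.assign("w1", "ticket-1", 0.60))   # True
print(mgmt.assign("w1", "ticket-2", 0.60))   # False: would exceed the budget
mgmt.retire("w1")
print(mgmt.assign("w1", "ticket-3", 0.10))   # False: retired workers get no work
```

Every decision, including each refusal, lands in `records`, which is what lets Finance and Operations answer what the workforce cost and what it made.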

Rule 4: Each worker picks its own engine.

The rule. Every AI worker must run on some kind of engine — the software that actually runs its work. The engine is chosen per worker, not per company. Different workers can use different engines, matched to what each job actually needs.

Why it must be there. Some work is very important — it cannot fail silently or lose data. That work needs a strong, reliable engine. Other work is routine and can survive small failures. That work can use a cheaper, lighter engine. Forcing every worker onto one engine means you either pay too much for the reliability the job does not need, or you do not pay enough for the reliability the job does need. Both are bad.

What goes wrong without it. One engine means one set of trade-offs. The company either cannot afford its reliable workers or cannot trust its cheap ones.

How it works today. We use several engines depending on the job — Dapr Agents, Claude Managed Agents, OpenAI Agents SDK, Cursor SDK, and OpenClaw-native. The names do not matter for now. What matters is the rule: pick the engine to fit the job.
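Rule 4 can be pictured as a choice made once per worker. The Python sketch below is a minimal illustration, assuming each job is described by two yes/no questions. The tier names "durable", "standard", and "light" are hypothetical labels, not products; which real engine fills each tier is your decision.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Job:
    name: str
    must_not_fail_silently: bool   # losing work here would be costly
    high_volume: bool              # many small, routine tasks


def pick_engine(job: Job) -> str:
    """Choose a runtime tier per worker, not per company (hypothetical tiers)."""
    if job.must_not_fail_silently:
        return "durable"           # pay for reliability where it matters
    if job.high_volume:
        return "light"             # cheap engine for routine, retryable work
    return "standard"


jobs = [
    Job("monthly close", must_not_fail_silently=True, high_volume=False),
    Job("ticket tagging", must_not_fail_silently=False, high_volume=True),
    Job("meeting notes", must_not_fail_silently=False, high_volume=False),
]
for job in jobs:
    print(job.name, "->", pick_engine(job))
```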

Rule 5: Every worker runs against a system of record.

The rule. Engines are what each worker runs on. The system of record is what each worker runs against. Every AI worker reads from and writes to an official memory store. This is the company's lasting record of what it really knows: customers, orders, stock, contracts, money entries, support tickets, real facts of the business.

Why it must be there. An AI's short-term memory (called its context window) is temporary. It disappears when the session ends. A system of record is permanent. Without an official store of truth, AI agents start to invent facts, save the same transaction twice, lose work between sessions, and produce results that no one can check later. The system of record is what keeps real work separate from confident-sounding made-up answers. It also makes the workforce readable later — every action a worker takes leaves a record in a system that stays after the worker's session ends.

What goes wrong without it. Results drift away from real life. Two workers tell the same customer two different things because their short-term memories disagreed. Responsibility cannot be traced. The company becomes a maker of confident-sounding output with no real basis underneath.

How it works today. The company's existing databases and operational systems serve as the system of record — the CRM (a customer database), the ERP (the system that runs the operations of a company), the support ticket system, the data warehouse, the financial ledger. MCP (the universal connector we mentioned earlier) is how AI workers reach them. Any lasting, reachable, governed store that the workforce can read from and write to follows the rule.

Rule 6: The workforce can grow on its own, under rules.

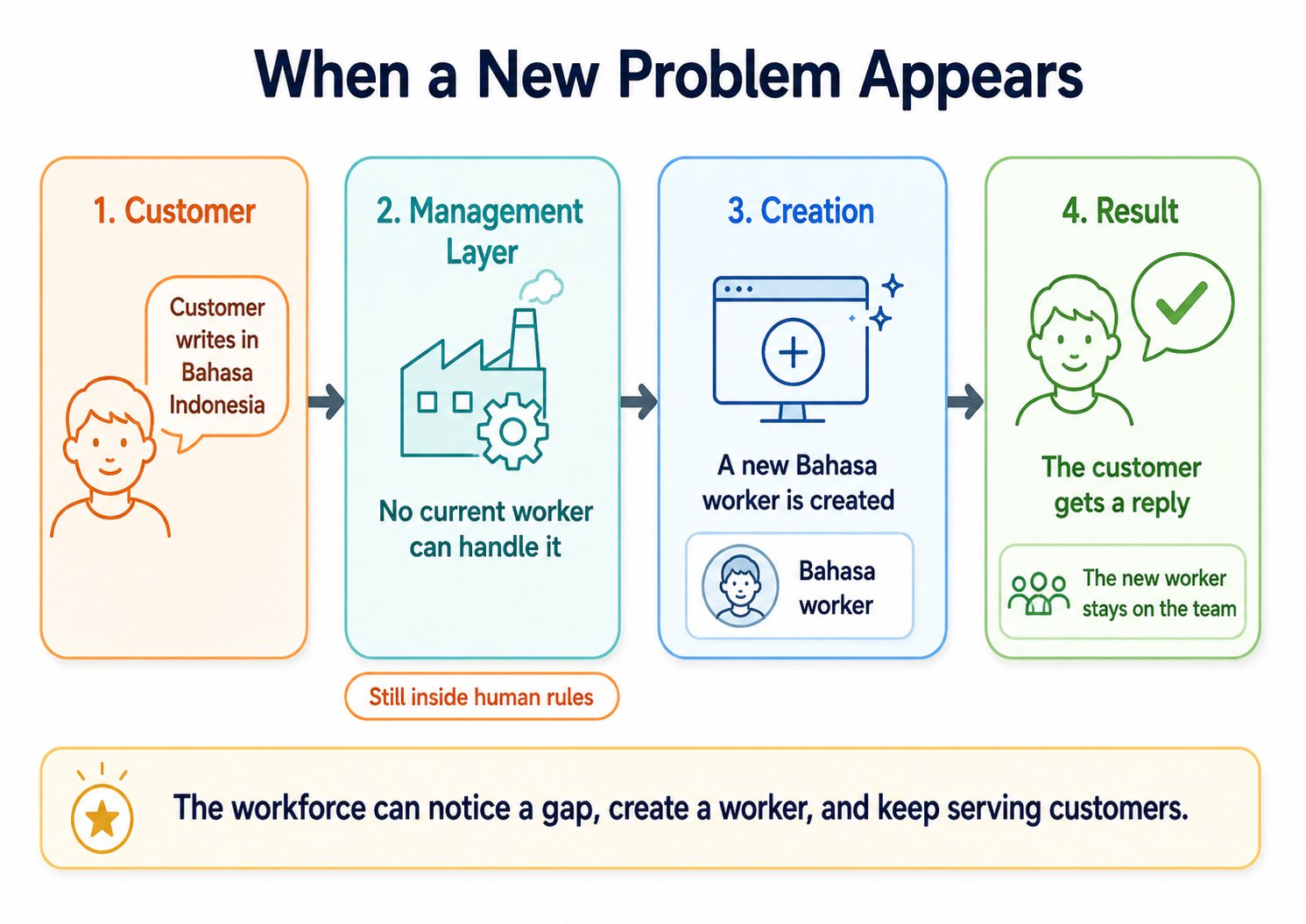

The rule. The management layer must be able to hire new AI workers automatically. When a gap appears — a customer writes in a language no current worker speaks, or a new kind of work appears that no current worker can do — an authorized agent should be able to write a spec for a new worker, set it up, register it with the management layer, and put it to work. All inside the rules the human set.

Why it must be there. A fixed list of workers cannot keep up with a changing problem. If every new gap needs a human to manually build a new worker, the system slows down. The workforce must be able to grow on its own — but always inside the limits the human set, never outside them.

What goes wrong without it. The list of workers is frozen. Every new kind of problem needs a human. The company can only grow as fast as its humans can manually build new workers.

How it works today. Claude Managed Agents is the technology we use for this. Any system that can create new AI workers and set up their environment at runtime, while staying inside the rules the human set, follows the rule.

Rule 7: The workforce runs on a nervous system.

The rule. Work arrives on its own. It flows between workers without a human routing each step. A scheduled time comes. A customer fills in a web form and a small message fires off. One worker finishes a task and passes it to the next. All of this is carried by a single event system — like the company's nervous system. It wakes workers when there is something for them to do. It survives crashes in the middle of work. It controls traffic so one busy customer does not block all the others.

Why it must be there. Four things must be true for the workforce to operate without a human at every step:

- External triggers. The system must wake up on its own — when a scheduled time arrives, when a webhook fires (a webhook is a small message sent from one system to another when something happens), or when a customer sends a request.

- Internal handoffs. Workers must be able to pass work to other workers without a human routing each handoff.

- Durability. Multi-step work must survive a crash in the middle. Why this matters: each step has a small chance of failing. When you chain six steps in a row, the small chances multiply together. If each step works 95% of the time, six steps in a row only work 95% × 95% × 95% × 95% × 95% × 95% ≈ 74% of the time. That means about one in every four runs would fail. With proper durability (where the system remembers what is already done and retries only the step that failed), the same task finishes about 99.7% of the time; the exact figure depends on how many retries the system allows. That is the difference between a workforce that delivers and one that quietly drops one in four jobs on the floor.

- Flow control. If one customer suddenly sends a thousand requests, the system must handle that spike without leaving the others waiting forever.
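The arithmetic in the durability point can be checked in a few lines of Python. The first number (about 74%) follows directly from the text. The second depends on something the text does not state: how many times a failed step is retried. This sketch assumes every attempt succeeds 95% of the time, independently of the others, which is a simplification.

```python
def chain_success(p_step: float, steps: int, attempts_per_step: int = 1) -> float:
    """Chance that every step in a chain succeeds.

    With durability, a failed step is retried on its own, so a step only
    fails if all of its attempts fail: 1 - (1 - p) ** attempts.
    """
    p_step_ok = 1 - (1 - p_step) ** attempts_per_step
    return p_step_ok ** steps


print(f"{chain_success(0.95, 6):.1%}")                        # 73.5%: no retries
print(f"{chain_success(0.95, 6, attempts_per_step=2):.1%}")   # 98.5%: one retry per step
print(f"{chain_success(0.95, 6, attempts_per_step=3):.1%}")   # 99.9%: two retries per step
```

The 99.7% figure in the text sits between the one-retry and two-retry results, so the exact number depends on the retry policy the system uses.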

What goes wrong without it. Without external triggers, the system only moves when a human prompts it — and the economics fall back to the economics of a chatbot. Without internal handoffs, workers cannot coordinate without a human in the middle. Without durability, reliability gets worse over multi-step runs. Without flow control, one busy customer blocks all the others.

How it works today. Inngest is the nervous system we use — one system that carries all four of these features at once. Day AI, a real company building an AI-native CRM (a customer database), describes its Inngest layer in exactly these terms. Founding engineer Erik Munson called it "the nervous system" of the product. That language comes from a real builder in a real market, not from a textbook.

16. The Whole Design in One Picture

Here is the seven-rule design, all in one table.

| Rule | What it requires | What we use in 2026 | What can replace it |
|---|---|---|---|
| Principal | A human who sets intent, budget, authority, responsibility | No product: always a human | Cannot be replaced |
| Delegate | A personal agent that holds your context and authority | OpenClaw | Any MCP-speaking personal agent |
| Management layer | The workforce operating system: hire, assign, govern, observe, retire | Paperclip | Any system that meets the management contract |
| Engine | A per-worker runtime that matches the job | Dapr / Claude Managed Agents / OpenAI SDK / Cursor / OpenClaw-native | Any runtime that meets the job's reliability needs |
| System of record | An official store the workforce reads and writes | Existing databases, workflows, MCP-exposed platforms | Any lasting, reachable, governed store |
| Workforce growth | The ability to hire new workers on demand, under rules | Claude Managed Agents | Any system that can create new agents at runtime |
| Nervous system | Events, durability, and traffic control under authority | Inngest (workforce); Claude Code Routines (for coding agents) | Any system carrying events with durability and flow control |

Seven rules. One chain. You can swap any product in the "What we use in 2026" column tomorrow, and the design still works, because the design was never the products. It was the rules.

The seven rules of an AI-Native Company, all in one view. These are the rules that do not change. The specific tools (OpenClaw, Paperclip, Dapr, Inngest) can be replaced — the rules stay.

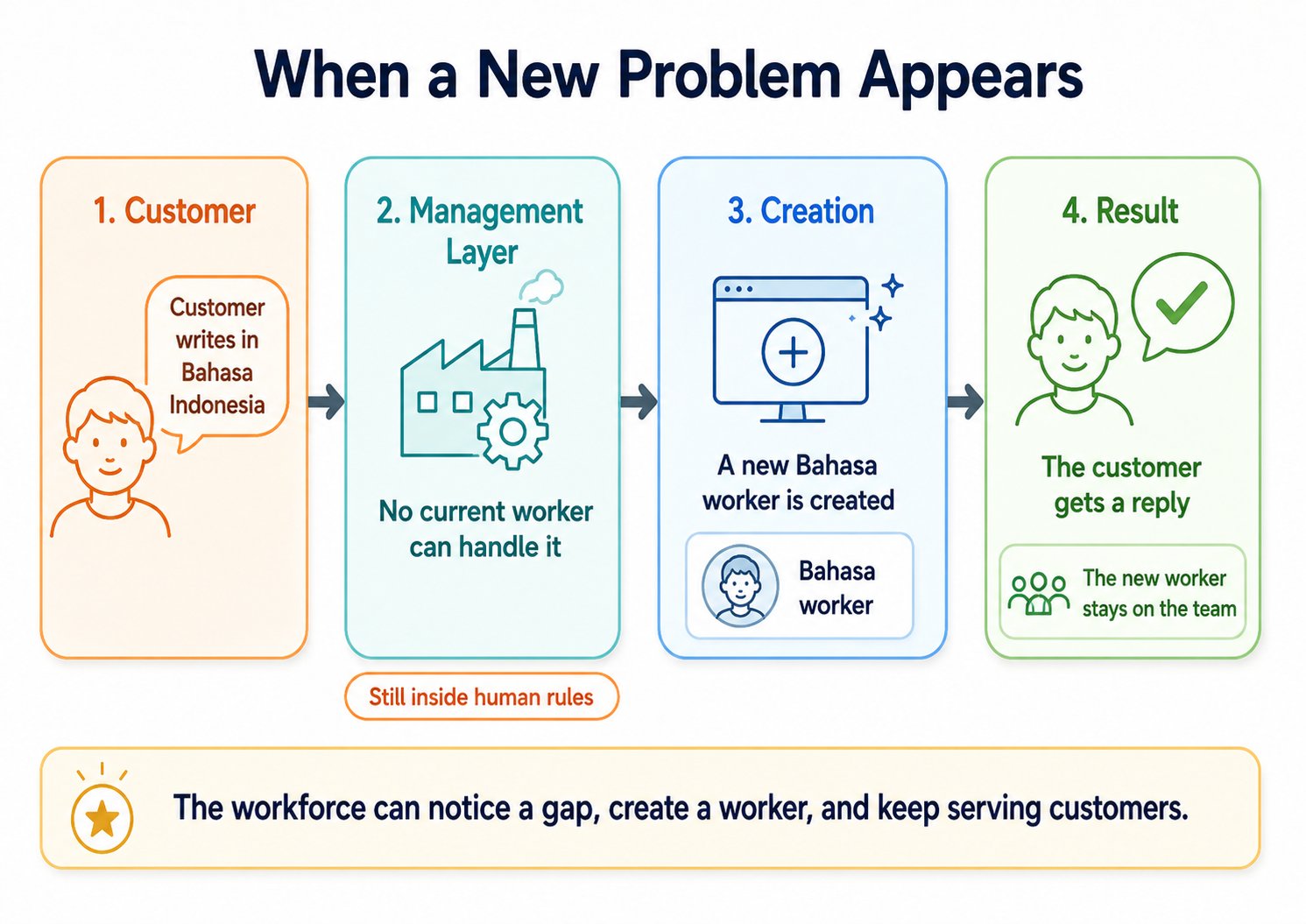

Here is how this looks in real life. Imagine a customer writes a support message in Bahasa Indonesia, and no current AI worker speaks that language. Here is what happens:

- The customer's message arrives through the nervous system (Inngest).

- The management layer (Paperclip) sees that no current worker speaks the language. There is a gap.

- Paperclip, acting inside the rules the human set, calls its own hiring system (Claude Managed Agents) to create a new Indonesian-speaking worker.

- The new worker reads the customer's history from the system of record, writes a reply, saves the conversation back to the system of record, and sends the reply.

- The reply reaches the customer through the same chain.

- The new worker stays on the team, ready for future Indonesian-speaking customers.

No human was woken up. The company handled a new situation, expanded its own workforce inside the rules, served the customer, and recorded the whole event for later checking. That is what the seven rules make possible.

A customer writes in a new language. The workforce notices the gap. A new worker is created — inside the rules the human set. The customer gets a reply. The new worker stays on the team for next time.
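The Indonesian-language example can be sketched as a routing function that hires a worker when it finds a gap. This is a minimal Python sketch, assuming languages are identified by short codes such as `"en"` and `"id"`, and that the human's rules are a simple allow-list of languages the workforce may add on its own. `hire_worker` is a hypothetical stand-in for a call to a hiring system such as Claude Managed Agents; it is not that product's API.

```python
from typing import Dict, List, Set

ALLOWED_NEW_LANGUAGES: Set[str] = {"id", "fr"}   # hypothetical rule set by the human


def hire_worker(language: str) -> str:
    """Hypothetical stand-in for a hiring system call."""
    return f"support-{language}"


def route(message_language: str, team: Dict[str, str], log: List[str]) -> str:
    """Return the worker for this language, hiring one if the rules allow it."""
    if message_language in team:
        return team[message_language]
    if message_language not in ALLOWED_NEW_LANGUAGES:
        log.append(f"gap in {message_language!r}: outside the rules, sent to a human")
        return "human"
    worker = hire_worker(message_language)
    team[message_language] = worker          # the new worker stays on the team
    log.append(f"gap in {message_language!r}: hired {worker}")
    return worker


team = {"en": "support-en"}
log: List[str] = []
print(route("id", team, log))   # support-id (newly hired)
print(route("id", team, log))   # support-id (already on the team)
print(route("de", team, log))   # human (not allowed by the rules)
```

The allow-list is what "inside the rules the human set" means here: a gap outside the rules goes to a person instead of triggering a hire.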

17. A Worked Example: Build a Support-Ticket AI Worker in 5 Steps

So far this book has been about ideas. Now let's make it concrete.

Imagine you run customer support for a small online store called Mehndi Mart (a fictional shop selling henna products to customers worldwide). You are drowning in support tickets — refund requests, shipping questions, product inquiries. You decide to build an AI worker to handle the first response on every ticket.

Here is how you would do it, using the seven rules from Section 15 and the seven principles from Section 14.

Step 1 — Write the spec (Rule 1: the human is in charge)

You open Claude Code or OpenCode (the engineering tool — see Section 14, Mode 2). You write a spec for the worker. The spec is just a written plan. It might look like this:

```text
WORKER: First-Response Support Agent for Mehndi Mart

GOAL:
- Read every incoming customer ticket
- Classify it: refund, shipping question, product question, or other
- Write a first draft reply
- Hand off to a human if the case is complex or the customer is angry

LIMITS:
- May not promise refunds above $100 without human approval
- May not commit to delivery dates not in the shipping system
- May not respond in any language the worker is not trained for

BUDGET:
- Maximum $200/month in compute costs
- Maximum 30 seconds per ticket

SUCCESS LOOKS LIKE:
- 80% of tickets get a useful first reply within 5 minutes
- Fewer than 5% of replies need human correction before sending
- All tickets are recorded in the support system of record
```

This spec captures your intent, your budget, and your limits. You are now the principal of this worker. You own its results.
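The same spec can also be written as structured data, which makes it easier for a management layer to check and enforce. This is a minimal Python sketch; the field names mirror the plain-text spec, but the `WorkerSpec` class itself is hypothetical, not a required format.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class WorkerSpec:
    name: str
    goals: List[str]
    refund_limit_usd: float          # refunds above this need a human
    monthly_budget_usd: float
    max_seconds_per_ticket: float
    target_reply_rate: float         # share of tickets answered within 5 minutes
    max_correction_rate: float       # share of replies a human must fix

    def problems(self) -> List[str]:
        """Return a list of obvious mistakes in the spec (empty if none)."""
        found = []
        if not self.goals:
            found.append("no goals")
        if self.monthly_budget_usd <= 0:
            found.append("budget must be positive")
        for label, rate in [("target_reply_rate", self.target_reply_rate),
                            ("max_correction_rate", self.max_correction_rate)]:
            if not 0 <= rate <= 1:
                found.append(f"{label} must be between 0 and 1")
        return found


spec = WorkerSpec(
    name="First-Response Support Agent for Mehndi Mart",
    goals=["read tickets", "classify", "draft reply", "hand off hard cases"],
    refund_limit_usd=100,
    monthly_budget_usd=200,
    max_seconds_per_ticket=30,
    target_reply_rate=0.80,
    max_correction_rate=0.05,
)
print(spec.problems())   # []
```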

Step 2 — Choose the system of record (Rule 5)

The worker must read from and write to your company's official memory. For a support worker, this means three places:

- Customer records (your CRM — for example, HubSpot or Salesforce)

- Order records (your e-commerce platform — for example, Shopify)

- Support history (your ticket system — for example, Zendesk or Freshdesk)

You connect these systems to your AI worker through MCP (the universal connector — like USB for AI, from Section 9). Each system is exposed as an MCP server. The worker can now query any of them when it has permission.

You decide: this worker can read from all three systems and write to the ticket system. It cannot write to customer or order records — those changes still require a human.
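The access decision in Step 2 is small enough to write down exactly. This is a minimal Python sketch, assuming the three systems are named `crm`, `orders`, and `tickets` (hypothetical labels for whichever products you use). Anything not listed is denied, which follows the principle of giving the AI only the access it needs.

```python
from typing import Dict, Set

# What this worker may do in each system. Anything missing is denied.
PERMISSIONS: Dict[str, Set[str]] = {
    "crm": {"read"},
    "orders": {"read"},
    "tickets": {"read", "write"},
}


def allowed(system: str, action: str) -> bool:
    return action in PERMISSIONS.get(system, set())


print(allowed("tickets", "write"))   # True
print(allowed("orders", "write"))    # False: order changes need a human
print(allowed("payments", "read"))   # False: not connected at all
```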

Step 3 — Attach the skills the worker needs

A skill is a small, portable package that teaches the worker how to do one specific thing well (Section 9). For this support worker, you attach skills like:

- "How to write a professional support reply" — tone, structure, signoff

- "How to classify a support ticket" — a list of ticket types and how to tell them apart

- "How to read an order record" — what fields matter, what they mean

- "When to escalate to a human" — triggers like anger, legal threats, complex refunds

Skills can be downloaded from a skills library or written by you and your team. They follow the open Agent Skills format, so they work in any AI system that supports that format.
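To make the "When to escalate to a human" skill concrete, here is a deliberately simple Python sketch of the kind of rule such a skill might describe. In a real worker, the AI model reads the skill's instructions and uses judgment; the keyword lists below are hypothetical stand-ins, and only the $100 limit comes from the Step 1 spec.

```python
from typing import Optional

ANGER_WORDS = ("furious", "unacceptable", "worst")     # hypothetical examples
LEGAL_WORDS = ("lawyer", "lawsuit", "chargeback")      # hypothetical examples
REFUND_LIMIT_USD = 100                                  # from the Step 1 spec


def should_escalate(text: str, refund_usd: Optional[float] = None) -> bool:
    """Return True if a human should take over this ticket."""
    lowered = text.lower()
    if any(word in lowered for word in ANGER_WORDS + LEGAL_WORDS):
        return True
    if refund_usd is not None and refund_usd > REFUND_LIMIT_USD:
        return True
    return False


print(should_escalate("Where is my order?"))                       # False
print(should_escalate("This is unacceptable, I want my money"))    # True
print(should_escalate("Please refund this item", refund_usd=140))  # True
```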

Step 4 — Pick the engine and set the guardrails (Rules 3, 4, 6)

You decide where the worker runs. For a support worker — where reliability matters but cost also matters — you pick a middle-tier engine. (You can change this later. Rule 4 says each worker picks its own engine.)

Then you set the guardrails through the management layer (Paperclip, or whatever management layer you use):

- Budget cap: $200/month, hard stop

- Approval gate: any refund over $100 requires a human "yes" before the reply is sent

- Audit trail: every ticket the worker touches is logged with the worker's actions, the cost, and the time taken

- Rate limit: maximum 50 tickets per minute (so a flood of messages does not exhaust the budget in five minutes)

The worker now exists inside a clear envelope of what it can and cannot do.
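The four guardrails can be sketched as one check that runs before each reply is sent. This is a minimal Python sketch, assuming the cost of each ticket is known and time is given in seconds. In practice the management layer enforces these limits; the `Guardrails` class is hypothetical. For simplicity, only replies that are actually sent count toward the budget and the rate limit.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Deque, List, Optional


@dataclass
class Guardrails:
    monthly_budget_usd: float = 200.0
    refund_approval_over_usd: float = 100.0
    max_tickets_per_minute: int = 50
    spent_usd: float = 0.0
    recent: Deque[float] = field(default_factory=deque)   # send times, in seconds
    audit: List[str] = field(default_factory=list)        # audit trail

    def check(self, ticket_id: str, now: float, cost_usd: float,
              refund_usd: Optional[float] = None) -> str:
        # Rate limit: forget sends older than 60 seconds, then count the rest.
        while self.recent and now - self.recent[0] >= 60:
            self.recent.popleft()
        if len(self.recent) >= self.max_tickets_per_minute:
            decision = "wait: rate limit"
        elif self.spent_usd + cost_usd > self.monthly_budget_usd:
            decision = "stop: budget cap"
        elif refund_usd is not None and refund_usd > self.refund_approval_over_usd:
            decision = "hold: needs human approval"
        else:
            decision = "send"
            self.spent_usd += cost_usd
            self.recent.append(now)
        self.audit.append(f"{ticket_id} t={now} cost={cost_usd} -> {decision}")
        return decision


g = Guardrails()
print(g.check("T1", now=0.0, cost_usd=0.02))                   # send
print(g.check("T2", now=1.0, cost_usd=0.02, refund_usd=150))   # hold: needs human approval
for i in range(49):
    g.check(f"B{i}", now=2.0, cost_usd=0.01)                   # fills the minute's quota
print(g.check("T3", now=3.0, cost_usd=0.01))                   # wait: rate limit
print(g.check("T4", now=61.0, cost_usd=0.01))                  # send (T1 has aged out)
```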

Step 5 — Verify and deploy (Rule 7 + the Seven Principles)

Before you let the worker handle real customers, you verify it. You run it on a sample of past tickets — say, 100 historical ones — and check the replies. Does it classify them correctly? Are the replies professional? Does it escalate the right cases?
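Checking the worker on past tickets comes down to comparing its answers with known answers. This is a minimal Python sketch, assuming each historical ticket already has a correct label and a correct escalate-or-not decision recorded by a human. It also assumes, as a simplification, that any wrong label or wrong escalation counts as a reply that needs human correction. The four sample tickets are made up for illustration; they are not real results.

```python
from typing import Dict, List

Result = Dict[str, object]


def score(results: List[Result]) -> Dict[str, float]:
    """Share of tickets where the worker matched the human's answer."""
    n = len(results)
    label_ok = sum(r["worker_label"] == r["true_label"] for r in results)
    escalation_ok = sum(r["worker_escalated"] == r["should_escalate"] for r in results)
    return {"label_accuracy": label_ok / n, "escalation_accuracy": escalation_ok / n}


def needs_correction_rate(results: List[Result]) -> float:
    """Share of tickets with any mistake (compare with the spec's 5% limit)."""
    bad = sum(r["worker_label"] != r["true_label"]
              or r["worker_escalated"] != r["should_escalate"] for r in results)
    return bad / len(results)


sample = [
    {"worker_label": "refund", "true_label": "refund",
     "worker_escalated": False, "should_escalate": False},
    {"worker_label": "shipping", "true_label": "shipping",
     "worker_escalated": True, "should_escalate": True},
    {"worker_label": "other", "true_label": "product",
     "worker_escalated": False, "should_escalate": False},
    {"worker_label": "refund", "true_label": "refund",
     "worker_escalated": False, "should_escalate": True},
]
print(score(sample))                         # {'label_accuracy': 0.75, 'escalation_accuracy': 0.75}
print(needs_correction_rate(sample) <= 0.05) # False: not ready to deploy yet
```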

You watch every action the worker takes — every file it opens, every step it runs, every decision it makes. This is observability: nothing the worker does is hidden from you. You catch mistakes. You adjust the spec. You retest.

Once you are satisfied, you deploy. The nervous system (Inngest, or whatever event system you use) starts feeding real tickets to the worker as they arrive. The worker handles them. A human reviews a small sample every day. Over time, you refine the spec and add skills, and the worker gets better.

What just happened

You wrote a spec. You connected a system of record. You attached skills. You set guardrails. You verified and deployed. You have just built a Digital FTE.

The worker now runs 24 hours a day, 7 days a week, inside the rules you set. When your business grows, you do not scale by hiring more humans to triage tickets — you scale by tightening the spec and adding skills. The humans on your team move up the value chain: complex cases, customer relationships, product feedback, strategy.

This is what the Agent Factory does. One worker at a time.

Your first-worker checklist

Before you ship any AI worker you build, run through this list. If every box is checked, you have a worker that satisfies the Seven Invariants and is ready to deploy.

- Spec written — clear goal, clear limits, clear budget, clear definition of success

- Principal named — a specific human owns this worker and is accountable for its results

- System of record chosen — the worker knows where to read company truth and where to write back

- MCP connections set — the worker can reach the systems it needs, and only those

- Skills attached — the abilities the worker needs are loaded as portable skill packages

- Engine picked — the runtime matches the job's reliability and cost needs

- Budget cap set — a hard limit on monthly spend, enforced by the management layer

- Audit trail enabled — every action is logged, inspectable, and replayable

- Approval gates set — risky actions require a human "yes" before they happen

- Verification plan ready — you know how you will check the worker's first 100 outputs

If you can tick every box, your worker is no longer a clever toy. It is a Digital FTE.
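The checklist can also be kept as data, so that nothing ships with an unticked box. This is a minimal Python sketch; the item names follow the list above, and `missing_items` is a hypothetical helper.

```python
from typing import List, Set

CHECKLIST = [
    "spec written", "principal named", "system of record chosen",
    "MCP connections set", "skills attached", "engine picked",
    "budget cap set", "audit trail enabled", "approval gates set",
    "verification plan ready",
]


def missing_items(ticked: Set[str]) -> List[str]:
    """Return the unticked items, in checklist order."""
    return [item for item in CHECKLIST if item not in ticked]


ticked = set(CHECKLIST) - {"audit trail enabled"}
print(missing_items(ticked))            # ['audit trail enabled']
print(missing_items(set(CHECKLIST)))    # []: ready to deploy
```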

18. What Stays the Same vs. What Will Change

Here is a table showing which parts of this design are permanent rules (left column — the main idea) and which parts are this year's specific tools (right column — 2026).

| Stable (the rule, the invariant) | Will change (the specific tool, the implementation) |
|---|---|
| Human principal with clear authority | Authoring tools, approval screens, spec formats |
| Personal delegate at the edge | Delegate products and what comes after them |
| Management layer with full workforce control | Management-layer products and what comes after them |
| Per-worker engine choice | SDKs, runtimes, platforms that run the agents |
| Official store the workforce runs against | Database engines, CRM/ERP/ticketing products, MCP server lists |
| Workforce that can grow under rules | Managed-agent APIs, setup systems |
| Events, durability, and flow under authority | Schedulers, webhook frameworks, durable-execution platforms |
| Spec-driven definition of work | Spec languages, formats, tools |
| Seven operator principles for using general agents | Specific agent products, command-line tools, prompt patterns |
| Result-based money model | Pricing units, contract formats |
| Agents as economic actors | Payment systems, liability frameworks |
| Observable, auditable runs | Tracing systems, log formats |
| Clean joints between layers, so vendor lock-in can shift without breaking the design | Which layer carries the lock-in: the AI model in 2024, the "harness" layer (the software around the AI) in 2026, the orchestrator next |
| Workforce that can be measured as cost, speed, result | Finance systems, ledger implementations |
| Abilities packaged as portable skills | Skill formats, registries, distribution platforms |

The left column is the main idea. The right column is 2026.

If you build to the left column, your company survives changes in the right column. If you build to the right column without understanding the left, you will have to rebuild every two years.

19. The Specific Tools We Use Today

For completeness, here is the list of products we use in 2026 to follow each rule. These are reference implementations. They will change. The rules will not. Remember: do not memorize the product names. Memorize the job each product performs.

- Delegate — OpenClaw (an open-source personal AI agent)

- Management layer — Paperclip (the AI-native company operating system; it exposes hire, assign, govern, observe, and retire as functions other systems can call)

- Engines — Dapr Agents, Claude Managed Agents, OpenAI Agents SDK, Cursor SDK, and OpenClaw-native (each suited to different job profiles)

- Skills — the Agent Skills format from agentskills.io (a portable folder format for packaging AI abilities)

- Nervous system — Inngest (the company's main event system) and Claude Code Routines (a specialist trigger for coding agents)

For Rule 6 (the workforce can grow on its own), we use Claude Managed Agents. The same technology that serves as one of the engine options also serves as the hiring system, because its ability to create new agents at runtime is exactly what makes "hiring as a callable function" possible.

Real-world support. This is not just a theory. In February 2026, Cursor's CEO described his company's shift from being an AI coding tool to being an "agent factory" in words very close to this book: groups of AI agents working as teammates, humans defining problems and reviewing finished work, and agents working on cloud computers in parallel instead of being guided line by line. In May 2026, The New Stack reported the same shift as an industry-wide pattern across Anthropic, OpenAI, Google, Microsoft, and Cursor.

The leading view in 2026 is clear: the AI model is becoming a commodity. (A commodity is a product that many companies make well, so the different versions become interchangeable, like sugar or wheat.) Many companies make good AI models now, and one model can be swapped for another. What is becoming valuable is the "harness": the software wrapper around the model that lets it act safely, reliably, and at scale. A senior Google leader openly said that the company no longer cares which AI coding tool developers choose. The model layer is no longer where the competition happens. The seams this book names, between human, delegate, management layer, engine, system of record, and nervous system, are now being built into real production systems at large scale.
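Here is a very small Python sketch of the harness idea. It assumes that a "model" is simply any function that takes a prompt and returns text. The names `harness`, `steady_model`, `make_flaky_model`, and `is_number` are hypothetical. Real harnesses do much more (tool permissions, tracing, cost limits). The sketch shows only the core point: the checking and retrying live in the wrapper, so any model can be plugged in.

```python
from typing import Callable

Model = Callable[[str], str]  # assumption: a model is any function from prompt to text


def harness(model: Model, prompt: str, check: Callable[[str], bool], retries: int = 3) -> str:
    """Call any model, accept only answers that pass the check, and retry otherwise."""
    for _ in range(retries):
        answer = model(prompt)
        if check(answer):
            return answer
    raise RuntimeError(f"no valid answer after {retries} attempts")


def steady_model(prompt: str) -> str:
    # Hypothetical model that always gives a usable answer.
    return "42"


def make_flaky_model() -> Model:
    # Hypothetical model whose first reply is empty and whose second reply is usable.
    replies = iter(["", "42"])
    return lambda prompt: next(replies)


def is_number(text: str) -> bool:
    # The check the harness applies to every answer before accepting it.
    return text.strip().isdigit()


if __name__ == "__main__":
    # The same harness works with either model: the model is the swappable part.
    print(harness(steady_model, "What is 6 times 7?", is_number))
    print(harness(make_flaky_model(), "What is 6 times 7?", is_number))
```

Both calls return the same checked answer. The harness caught the flaky model's empty first reply and asked again.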

The rules are not a forecast. They are a picture of where the leading edge already lives.

A small note on words. In this book, every part of the system is technically an agent: OpenClaw is an agent, Paperclip is an agent, the AI workers are agents. But only AI workers are the workforce. They are the ones that get hired, given work, kept on the team, and retired. OpenClaw and Paperclip are permanent parts of the company, not workforce. So when this book says AI Worker, it means workforce. When it says agent, it can mean either kind: a permanent part of the company or a member of the workforce.

20. The Workforce Opportunity

AI will break jobs apart into individual tasks. Some of those tasks will be done fully by machines. But breaking jobs apart also creates new combinations — new roles, new businesses, new markets that did not exist when work was locked inside fixed job titles.

The future workforce will need to build flexible skill sets instead of relying on fixed career paths. The professionals who learn to think with AI, who use AI tools every day, and who treat AI as a digital teammate will not just survive the change. They will do well in it.

The SaaS era made millions of jobs for developers, designers, and product managers. The Agent Factory era will make millions more — for agent designers, result architects, verification specialists, and domain experts who teach machines what "correct" looks like in their field.

It is also one of the largest worker training opportunities in history. The World Economic Forum estimates that by 2030, 59 out of every 100 workers in the world will need new training to keep up with new technologies and new ways of working.

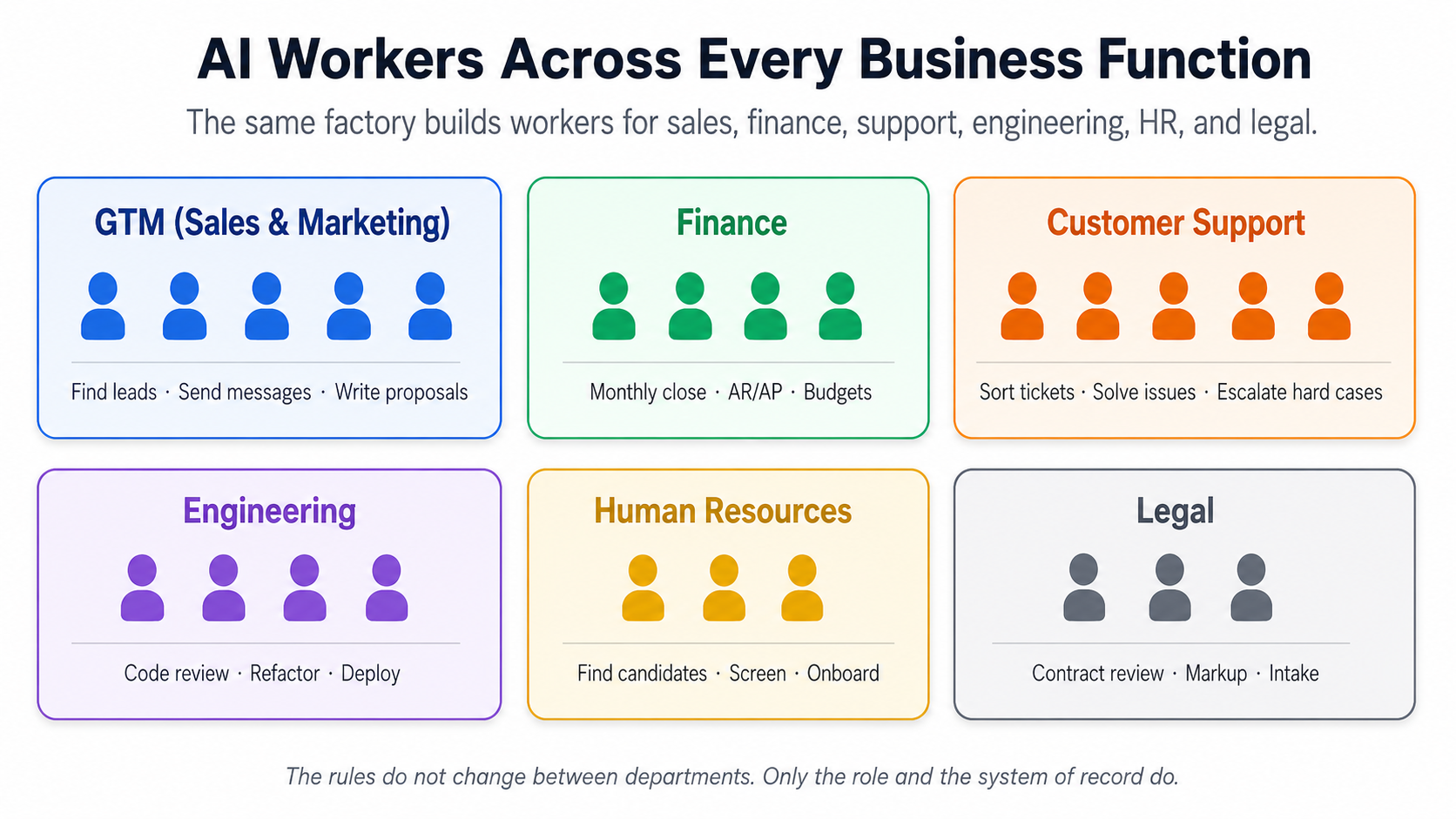

The workforce opportunity. The same Agent Factory builds specialist AI workers across every business function — sales, finance, support, engineering, HR, legal. The rules do not change between departments. Only the role and the system of record do.

The same factory makes specialist workers across every business function:

- In GTM (Go-To-Market: the combined sales, marketing, and revenue work that turns possible buyers into paying customers), a group of AI workers handles many tasks:

  - Finding new leads.

  - Sending outreach messages.

  - Keeping the customer database clean.

  - Analyzing the sales pipeline.

  - Writing proposals.

  - Customizing demos.

  Work that was done by hand in the SaaS era is now handled by AI workers and supervised by a human GTM lead.

- In Finance: monthly close (preparing the books at the end of each month), AR/AP (accounts receivable, the money owed to the company, and accounts payable, the money the company owes others), and FP&A (financial planning and analysis, the team that builds budgets and forecasts).

- In Customer Support: sorting tickets, solving issues, escalating hard cases.

- In Engineering: code review, refactoring (improving existing code), deployment.

- In Human Resources: finding candidates, screening them, onboarding new hires.

- In Legal: contract review, marking up changes, intake of new matters.

Each AI worker is hired through Paperclip, supervised by a human in the right department, and runs against that department's system of record — the CRM for sales, the general ledger for finance, the ticket system for support, the code storage for engineering. The rules do not change between departments. Only the role definitions and the systems of record do.
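The paragraph above describes one set of rules applied to every department, where only the role definition and the system of record change. A minimal Python sketch of that idea treats each department as data passed to a single hiring function. The `RoleDefinition` fields, the role names, and the supervisor titles (other than the GTM lead) are hypothetical examples, not Paperclip's actual configuration format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RoleDefinition:
    department: str
    role: str
    supervisor: str        # the human in the department who reviews this worker
    system_of_record: str  # where the worker reads and writes the truth


# Hypothetical role definitions: only this data changes between departments.
ROLES = [
    RoleDefinition("Sales", "pipeline analyst", "GTM lead", "CRM"),
    RoleDefinition("Finance", "month-end closer", "controller", "general ledger"),
    RoleDefinition("Support", "ticket triager", "support manager", "ticket system"),
    RoleDefinition("Engineering", "code reviewer", "engineering lead", "code storage"),
]


def hire(defn: RoleDefinition) -> str:
    # One hiring rule for every department; nothing here is department-specific.
    return (f"Hired {defn.role} for {defn.department}, supervised by "
            f"{defn.supervisor}, working against the {defn.system_of_record}.")


if __name__ == "__main__":
    for defn in ROLES:
        print(hire(defn))
```

Adding HR or Legal would mean adding one more `RoleDefinition` line. The `hire` function would not change.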

The opportunity is not smaller. It is wider. And it rewards those who adapt.

21. The Money is Already Being Spent

If you are not sure this change is real, look at where the money is going.

By January 2026, US data center construction had grown to $42 billion per year, while office construction had dropped 35% from its peak.

For the first time in history, America now spends more money building workplaces for AI workers (data centers) than for human workers (offices).

Data centers are using copper and electricity at an industrial scale. A single very large AI data center needs up to 50,000 tons of copper — about ten times what a normal data center needs. Meta, Google, Amazon, and Microsoft together plan to spend more than $600 billion on AI infrastructure in 2026 alone.

The factories of the AI age are not just an idea. They are being built right now.

U.S. construction spending. Office building (the workplace for human workers) is going down. Data center building (the workplace for AI workers) is going up. The two lines crossed in 2025. Source: U.S. Census Bureau, Value of Construction Put in Place Survey.

Winners will not be measured by how many software subscriptions they sold. They will be measured by the results they delivered.

22. Where This All Points

Before closing, it is worth marking where this thesis already stands. The AI-Native Company is no longer a future idea. By mid-2026, firms with single-digit human headcounts were reporting a billion dollars per year in revenue while running on AI-operated workforces. This category of company did not exist in any meaningful form three years before. Some specific companies will succeed. Others will fail. Some will face problems with regulators. But the category will stay. The thesis predicted the shape of the company. The company has arrived.

This thesis defends what the Agent Factory builds today and in the near future: software AI workers, organized into AI-Native Companies, working at the edges of human business. That is the scope this book has the evidence to defend.

But the design extends further. Three directions are worth naming.

Physical AI workers. The same factory design that builds software AI workers extends to physical ones: robots. A robot doing warehouse work. A vehicle that delivers packages on its own. A machine on a factory floor. Each of these is an AI worker under the same rules, hired through the same management layer, running on an engine that moves physical parts instead of making software calls. The rules do not change. The computer just gets a body. As physical AI grows up, the AI-Native Company's workforce will not be only digital. It will also include physical workers built by the same method, controlled by the same design, and held to the same rules.

Fully independent economic agents. The opening of this thesis named this direction. As AI workers gain lasting identities, payment systems, reputations, and the legal ability to enter into contracts, they stop being tools that their company uses. They become economic actors in their own right — buying services from other companies' AI workers, selling capacity to companies that need it, building up capital, entering into contracts without a human approving each transaction. The Agent Factory stays the same. What changes is how independent the workers built by it become. The questions this raises — legal status, responsibility, taxes, antitrust (laws that stop companies from becoming too powerful or hurting fair competition) — are not design questions, but they will become urgent ones, and the design must be ready for them.

AI workers moving between companies. Today, an AI worker is built by one company and works only for that company. As the manufacturing layer grows up, AI workers become portable — hired into one company, then transferred to another, or even working for several companies at the same time. The hiring system grows from inside-the-company to between-companies. Authority rules from different companies meet on the same AI worker, controlled by contract. The labor market for AI workers becomes a real market — with rates, reputations, specialties, and turnover. The Agent Factory ships the worker. The market routes it.

These three directions — physical workers, full independence, and worker mobility — are extensions of the design, not departures from it.

In Closing

The rules hold. The tools change. The thesis stands.

A new kind of company is being built — staffed by AI workers, coordinated by a management layer, directed by humans through personal agents. The method for building it is the Agent Factory. The company it produces is the AI-Native Company. The workers it employs are Digital FTEs. The truth they run against is the system of record. The rules they follow are the Seven Invariants. The products that follow those rules today will change. The rules will not.

If you are new to all of this, that is the whole thesis in one paragraph. The rest of this book is the design, the practice, and the work of building it.

Notes and Sources

- Don Tapscott, interview on HBR IdeaCast, "With Rise of Agents, We Are Entering the World of Identic AI", Harvard Business Review, February 17, 2026.

- World Economic Forum, Future of Jobs Report 2025, January 2025.

- Michael Truell, "The third era of AI software development", Cursor Blog, February 26, 2026.

- Matthew Burns, "Cursor's $60 billion bet is on the harness, not the model", The New Stack, May 1, 2026.

- Erik Munson, Founding Engineer, Day AI, quoted in "Day AI – Customer Story", Inngest, accessed May 2026.

- Jodie Cook, "The 2-Person $1 Billion Company Is The Real Business Goal — And How To Do It", Forbes, May 10, 2026.

This is the beginner-friendly version of the Agent Factory Thesis, in simple English. The original technical version is available at agentfactory.panaversity.org. Both versions argue for the same design and reach the same conclusions. This version uses simple words and short sentences. The original uses industry words and longer sentences. Read whichever fits where you are right now — and switch when you are ready.