From Agent to Digital FTE: A 45-Minute Crash Course

15 Concepts, 80% of Real Use - Skills, System of Record, and MCP

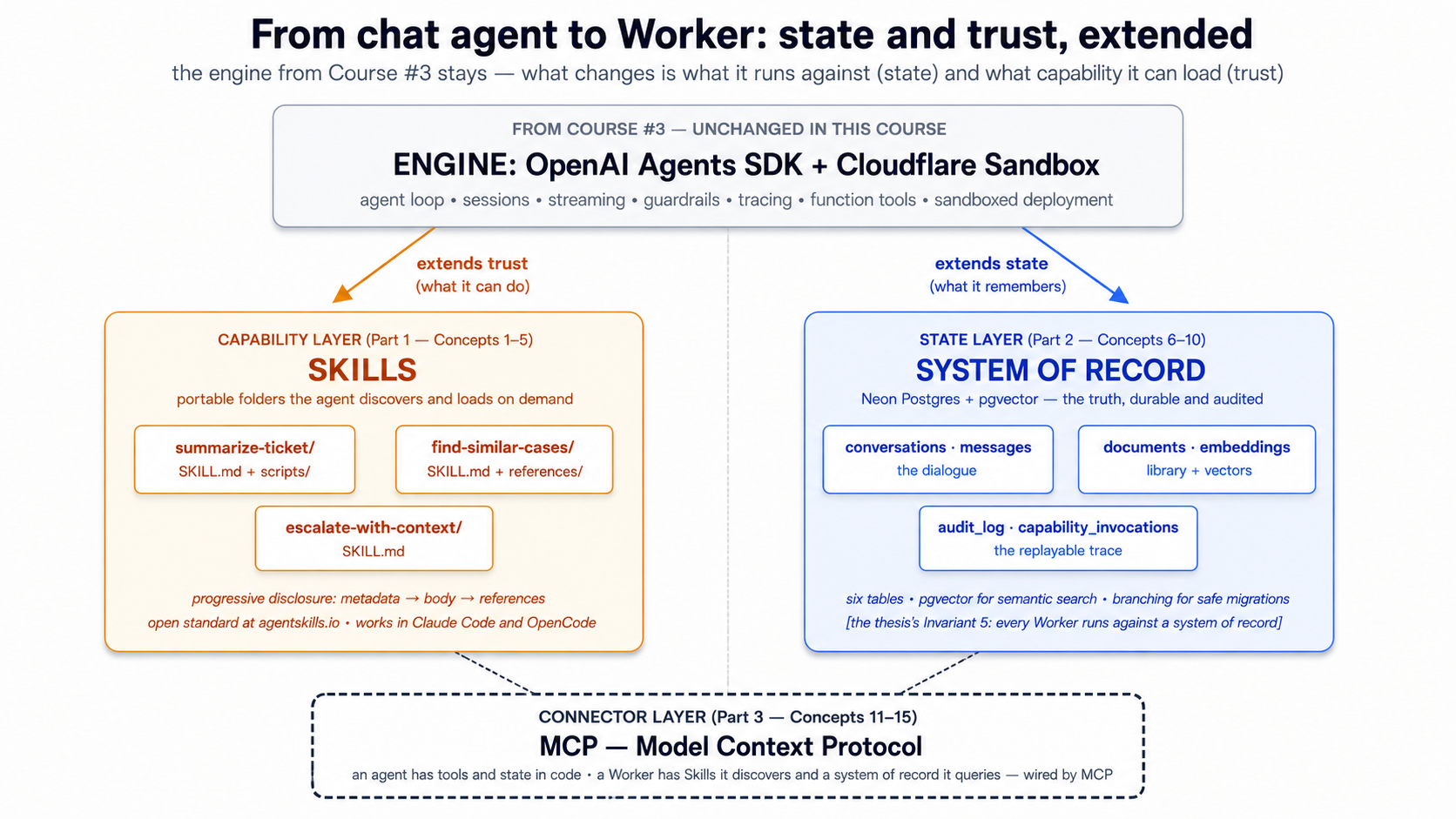

A continuation crash course. This is course #4 in the agentic-coding track. The previous course — Build AI Agents with the OpenAI Agents SDK and Cloudflare Sandbox — taught you to build a streaming chat agent with sessions, guardrails, and a sandboxed deployment. By the end of that course you had an agent that worked. By the end of this course you'll have a Worker — same OpenAI Agents SDK foundation, same Cloudflare Sandbox runtime, but with three additions that change what the agent is: portable Skills it loads on demand, a Neon Postgres system of record it reads from and writes to, and Model Context Protocol connections that wire the two together.

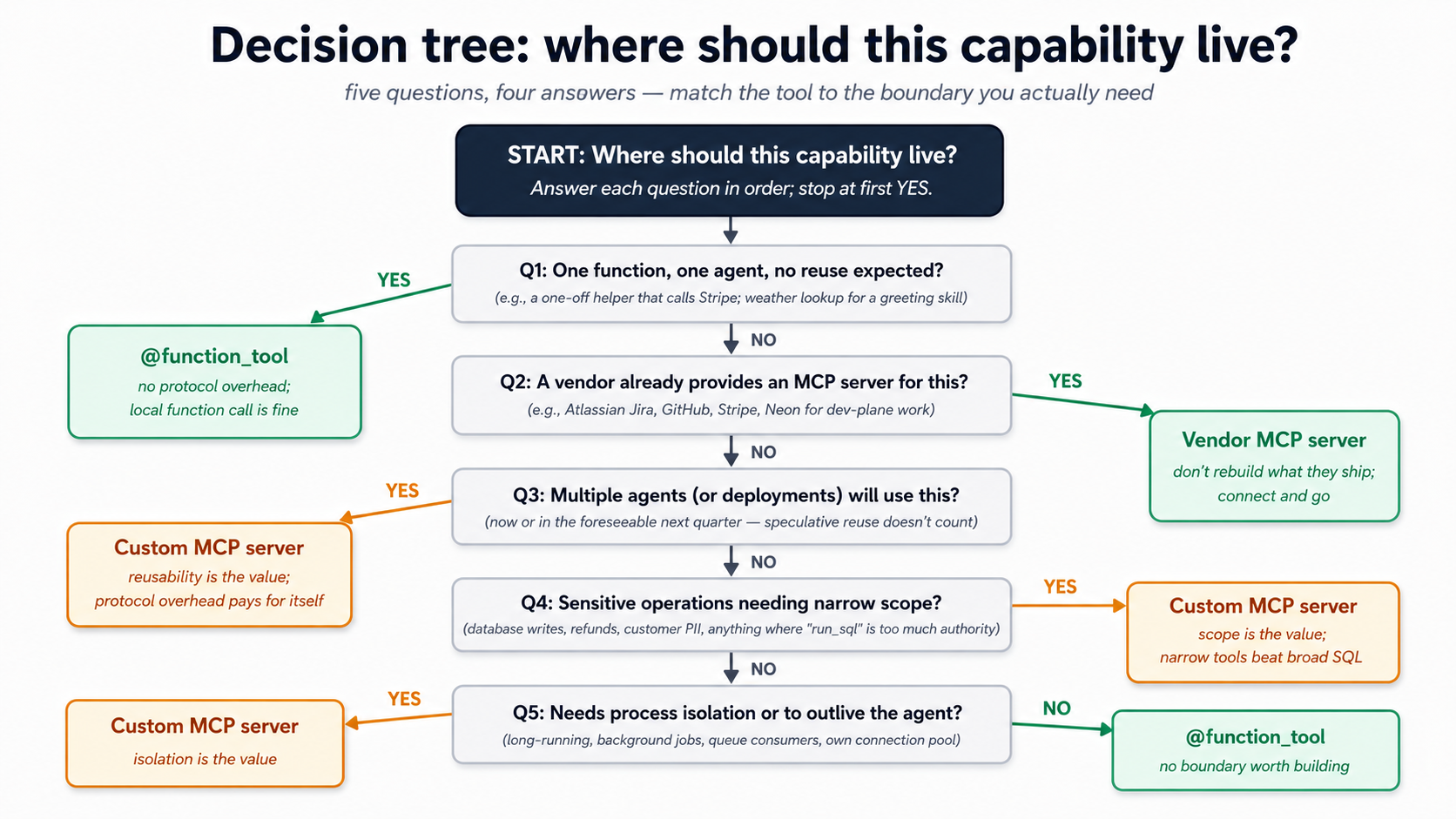

The single insight that makes everything else click: turning an agent into a Worker is two architectural decisions — where its capabilities live, and where its truth lives. Skills answer the first. MCP-connected systems of record answer the second. Everything in this crash course is one of those two answers or the wiring between them.

In plain English: a chat agent that lives in a single Python process has its capabilities baked into code and its memory baked into RAM. Restart the process and both disappear. A Worker — the architectural term our thesis uses for an agent that operates inside a company — has its capabilities packaged as Skills (portable folders the agent discovers on demand) and its memory packaged in a system of record (a durable database the agent reads from and writes to through the Model Context Protocol). The previous course built the engine. This course builds what the engine runs against.

Start here → the architectural placement and the 15-concept cheat sheet (open once, refer back)

Where this course sits in the architecture. The Agent Factory thesis describes Seven Invariants that any production agent system must satisfy. Course #3 covered the engine layer (Invariant 4): the OpenAI Agents SDK as the harness, Cloudflare Sandbox as the compute. This course covers two more invariants and one of the production engine's three pillars:

- Invariant 5: Every Worker runs against a system of record. "Engine is what each Worker runs on; system of record is what each Worker runs against. Every AI Worker reads from and writes to an authoritative store of state." Neon Postgres + pgvector is one concrete realization of this invariant. The thesis is clear: any durable, addressable, governed store the workforce can read and write satisfies the requirement. We use Neon because it's free to start, scales to zero, and ships an official MCP server. The architecture is invariant; the product is replaceable.

- The Skills capability layer. The thesis names "capability packaged as portable skills" as one of the architecture's stable invariants. Skills are the open standard (originally Anthropic, now ecosystem-wide at agentskills.io) that lets a Worker's capabilities live outside its code, in folders an agent discovers, loads, and executes on demand.

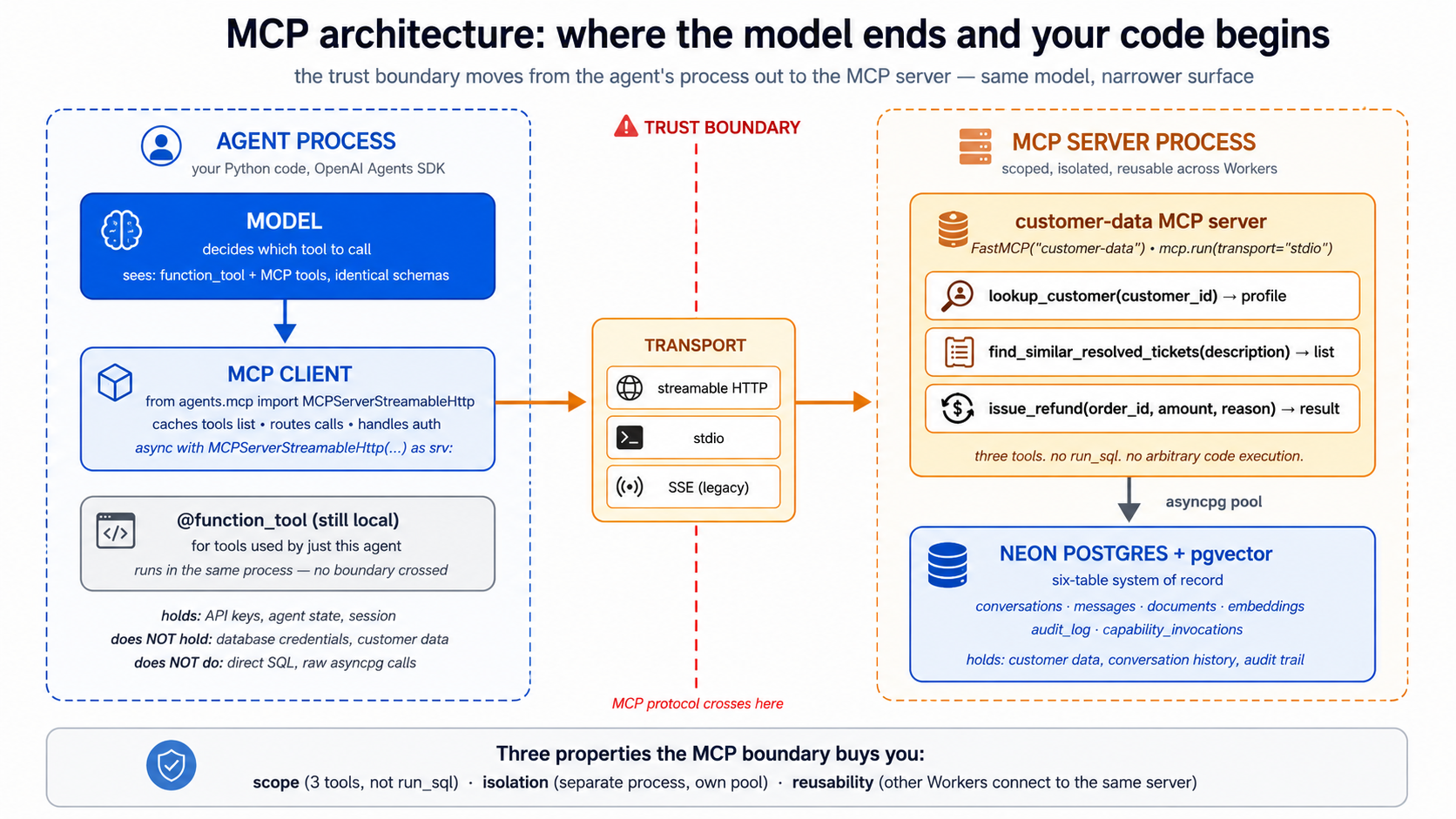

- MCP as the connector. The thesis frames MCP as "how the workforce reaches" its systems of record. Course #3 used MCP lightly; this course makes MCP the load-bearing pattern between the agent and Neon.

The thesis sentence, expanded. Two architectural decisions turn an agent into a Worker:

- Where do its capabilities live? In code, as @function_tool decorators → fine for a demo. In Skill folders the agent discovers at startup and loads on demand → fine for a Worker that ships, evolves, gets added to without redeploying, and is sharable across Workers in the same company.

- Where does its truth live? In RAM and a local SQLite file → fine for a chat demo. In a durable Postgres database the agent reads from and writes to under audit, with vector search over what it has learned → fine for a Worker that has to answer "what did you tell the customer six weeks ago?" and "have we seen this question before?"

The connector that joins the two is the Model Context Protocol, an open standard for how an agent reaches external state and external capability. By the end of this course, you will have replaced the previous course's stub tools and SQLite session with: three Skills the agent loads on demand, a Neon Postgres + pgvector database holding the agent's authoritative state, and an MCP layer that wires them to the agent without coupling the agent to either.

The 15-concept cheat sheet. A failure in production almost always traces to one of three root causes — a missing or wrong skill, a system of record that isn't actually the source of truth, or an MCP wiring that loses data. This table is the diagnostic.

| # | Concept | Layer | What question it answers |

|---|---|---|---|

| 1 | What an Agent Skill is | Skills | Where does reusable capability live? In a folder, with SKILL.md plus optional scripts/references. |

| 2 | Progressive disclosure | Skills | Why are skills cheap to keep on hand? Discovery → activation → execution loads only what's needed when it's needed. |

| 3 | Writing a SKILL.md | Skills | What does a skill file actually contain? Metadata, trigger description, operational instructions. |

| 4 | Skill packaging conventions | Skills | How do skills travel between tools? Same folder works in Claude Code, OpenCode, and any compliant client. |

| 5 | Composing skills | Skills | When to chain small skills via filesystem handoff vs. write one big skill. |

| 6 | Why managed Postgres | System of record | What store earns "system of record"? One with persistence, branching, governance, and the vector primitives an agent needs. |

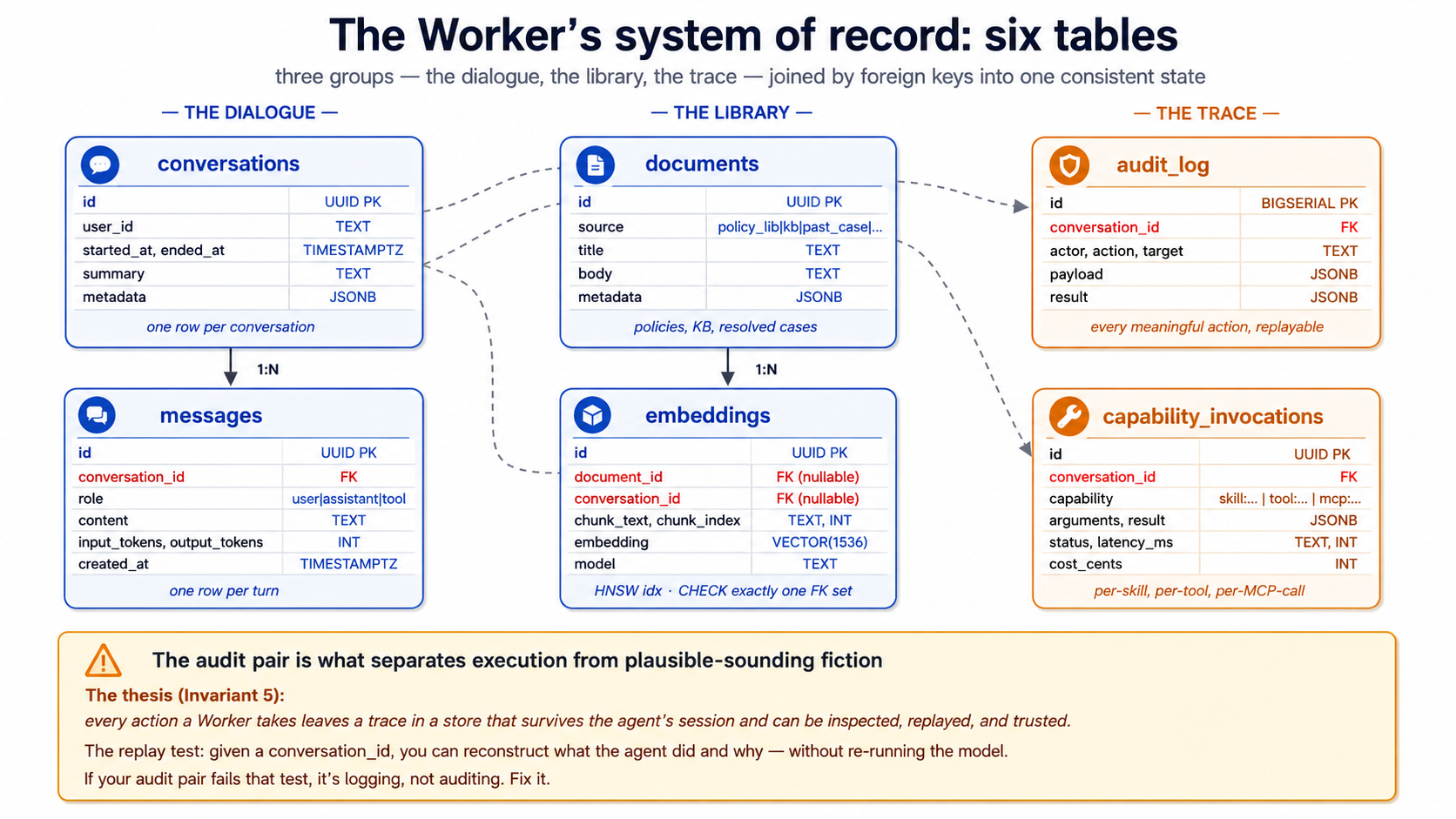

| 7 | The Worker's schema | System of record | What tables does an agent actually need? Conversations, messages, documents, embeddings, audit log, capability invocations. |

| 8 | pgvector basics | System of record | How does semantic search work in Postgres? Embedding column, distance operators, index types. |

| 9 | The embedding pipeline | System of record | How does text become a queryable vector? Chunking, the embedding model, when to re-embed. |

| 10 | Audit trail as discipline | System of record | What does "reads and writes" mean for a Worker? Every action a Worker takes leaves a trace the company can replay. |

| 11 | What MCP is and isn't | MCP | A protocol for tools, resources, and prompts — not a framework, not a service. |

| 12 | The Neon MCP server | MCP | The agent's interface to its database — what it exposes, how it authenticates. |

| 13 | Connecting MCP to the Agents SDK | MCP | The SDK's MCP integration: how to register a server, what the model sees, where the trust boundary lives. |

| 14 | Custom MCP servers | MCP | When to write your own server vs. just use @function_tool. The decision tree. |

| 15 | MCP under load | MCP | Transport choices, connection pooling, when to queue. |

Once you have this mapping, the rest of the document is mostly mechanics. A failure in production traces to one of: a Skill that doesn't get discovered (description too vague), a system of record that two Workers disagree about (schema race), or an MCP wiring that drops events (transport not chosen for the workload). The diagnostic tells you which.

About the "45 minutes" in the title. The subtitle has the honest numbers; the title keeps "45-Minute Crash Course" for series-naming parallel with the sibling Agentic Coding Crash Course and the previous Build AI Agents course: three crash courses, one Foundation track. If you only have 45 minutes, read Parts 1 and 5 (Skills + worked example); come back for the system-of-record and MCP detail.

Audience. This is an intermediate-to-advanced crash course, denser than its predecessors. You need to have completed Course #3 (or be comfortable with everything it taught), because we extend its chat agent rather than re-build it from scratch. The OpenAI Agents SDK, the agent loop, sessions, streaming, function tools, sandboxing — all assumed.

Prerequisites. This page assumes four things.

- You have completed Build AI Agents with the OpenAI Agents SDK and Cloudflare Sandbox. This is non-negotiable. We pick up where its worked example left off: same project layout, same agents.py, same cli.py, same tools.py. If you have not, do that course first; this one will read as friction without it.

- You have the Agentic Coding Crash Course discipline. Plan mode, rules files (CLAUDE.md/AGENTS.md), slash commands, context discipline. This course's worked example uses Skills as slash commands at one point, so the rules-file discipline is load-bearing.

- You have done at least one PRIMM-AI+ cycle from Chapter 42. The Predict prompts in this course assume you know to predict, run, investigate, modify, make.

- You have a working Postgres mental model. Tables, indexes, transactions, foreign keys. You don't need to be a DBA. You should know what SELECT ... WHERE does, what an index is for, and roughly what JOIN does. If you have written one CRUD app in any language, you're calibrated.

How to read this page on first pass

Same rule as the previous course in the series:

- Expand on first read: anything labeled "What you'll see," "Sample transcript," "Expected output," "Verify." These contain the runnable behavior to check predictions against.

- Skip on first read: anything labeled "What SKILL.md looks like in full," "The complete migration SQL," and other full-file listings in Part 5's worked example. The narrative above each block tells you what changed; you only need the file contents when you actually build.

- Optional throughout: the "Try with AI" blocks at the end of each concept. Extension prompts for Claude Code or OpenCode; skip without guilt if your tool isn't set up.

The goal of first pass is to internalize the three-layer model — Skills are the capability layer, the Neon system of record is the state layer, MCP is the connector — and the way they sit on top of the OpenAI Agents SDK + Cloudflare Sandbox stack you already know. Second pass with your hands on the keyboard is where you build.

Glossary: terms you'll meet

These are the terms most likely to trip a reader on first encounter. Each is explained again in context as it appears.

- Skill: A folder with a SKILL.md file and optional scripts, references, and assets. The folder is the skill; the file inside it is the entry point. An agent loads skills via progressive disclosure: name+description at startup, full instructions when triggered, referenced files on demand.

- SKILL.md: The skill's entry file. YAML frontmatter with name and description (and optional metadata), then markdown body with the instructions the agent follows when the skill activates.

- Progressive disclosure: The three-stage skill-loading model. Discovery: agent reads names and descriptions of all available skills at startup. Activation: agent reads the full SKILL.md of the matching skill when a task triggers it. Execution: agent loads referenced files (or runs bundled scripts) on demand during execution.

- System of record (SoR): Authoritative store of state the Worker reads from and writes to. The thesis term for "the database that holds the truth." For this course: a Neon Postgres database.

- Neon: A managed Postgres service with serverless branching, scale-to-zero, and a free tier. Its differentiator versus other managed Postgres is branching (copy-on-write database copies in seconds) and its first-class MCP server.

- pgvector: A Postgres extension that adds a vector column type plus distance operators for similarity search. Lets one database hold both relational data and embedding-based semantic search.

- Embedding: A fixed-length numerical vector representing a piece of text (or other data) such that semantic similarity maps to vector distance. Generated by an embedding model (text-embedding-3-small is the OpenAI default).

- MCP (Model Context Protocol): An open standard for how AI agents connect to external tools, resources, and prompts. Defines a client/server architecture, three primitives (tools, resources, prompts), and three transports (stdio, SSE, streamable HTTP).

- MCP server: A program that exposes capabilities (tools/resources/prompts) to MCP clients. The Neon MCP server is one example; you can write your own in Python or TypeScript.

- MCP client: The agent-side counterpart that connects to MCP servers, lists their capabilities, and surfaces them to the model. The OpenAI Agents SDK has an MCP client built in.

- Tool (MCP): One of three MCP primitives. A function the model can invoke. From the model's perspective, an MCP tool looks similar to a @function_tool, but the implementation lives in the MCP server, not the agent's process.

- Resource (MCP): One of three MCP primitives. A read-only data source the agent can fetch. Files, database query results, API responses. Read but not write.

- Prompt (MCP): One of three MCP primitives. A reusable prompt template the server provides for the model to invoke. Less common than tools and resources; useful for standardized templates across teams.

- Audit log: A database table that records every meaningful action a Worker takes (every tool call, every database write, every capability invocation) in a form the company can replay, query, and reason about after the fact.

Current as of May 13, 2026. Verified against openai-agents==0.17.2, mcp==1.27.1, Neon's MCP server documentation, and pgvector 0.8+. The state-and-trust architecture this course teaches does not change when the products do; the products are this year's best fit. The Cloudflare Sandbox tutorial and Neon docs are the canonical sources for vendor-specific details. And see Part 6's Swap guide for the per-product alternatives at each layer (Supabase, Pinecone, Cohere embeddings, LangGraph, and others).

The OpenAI Agents SDK and Cloudflare Sandbox stack from the previous course is the foundation of this course, not a stepping stone we move past. Your agent in Part 5 still uses Agent, Runner, function_tool, sessions, streaming events, guardrails, and SandboxAgent with Shell() and Filesystem() capabilities. What changes: those primitives now sit on top of a Skills library and a Neon system of record connected via MCP. The previous course taught you the engine. This course teaches you what the engine runs against.

Cloudflare Sandbox remains the trust boundary for anything the agent executes — including Skill scripts that run shell commands. Neon is the system of record. MCP is the wiring. The architecture combines an open SDK (OpenAI Agents SDK, MIT-licensed), an open connector protocol (MCP), and replaceable managed infrastructure (Cloudflare Sandbox for execution, Neon for storage) — each piece swappable without changing the others. Everything below assumes the same Python-first stack as Course #3 with uv for package management.

This is a Python-first course, like its predecessor. The Skills format is language-agnostic (a SKILL.md is a Markdown file, whether your agent is Python or TypeScript), and MCP is transport-agnostic, but the agent we extend in Part 5 is the same Python chat-agent from Course #3.

The dual-tool pattern from Course #3 continues here. Sections that diverge between Claude Code and OpenCode have a switcher; pick one and the page syncs across visits.

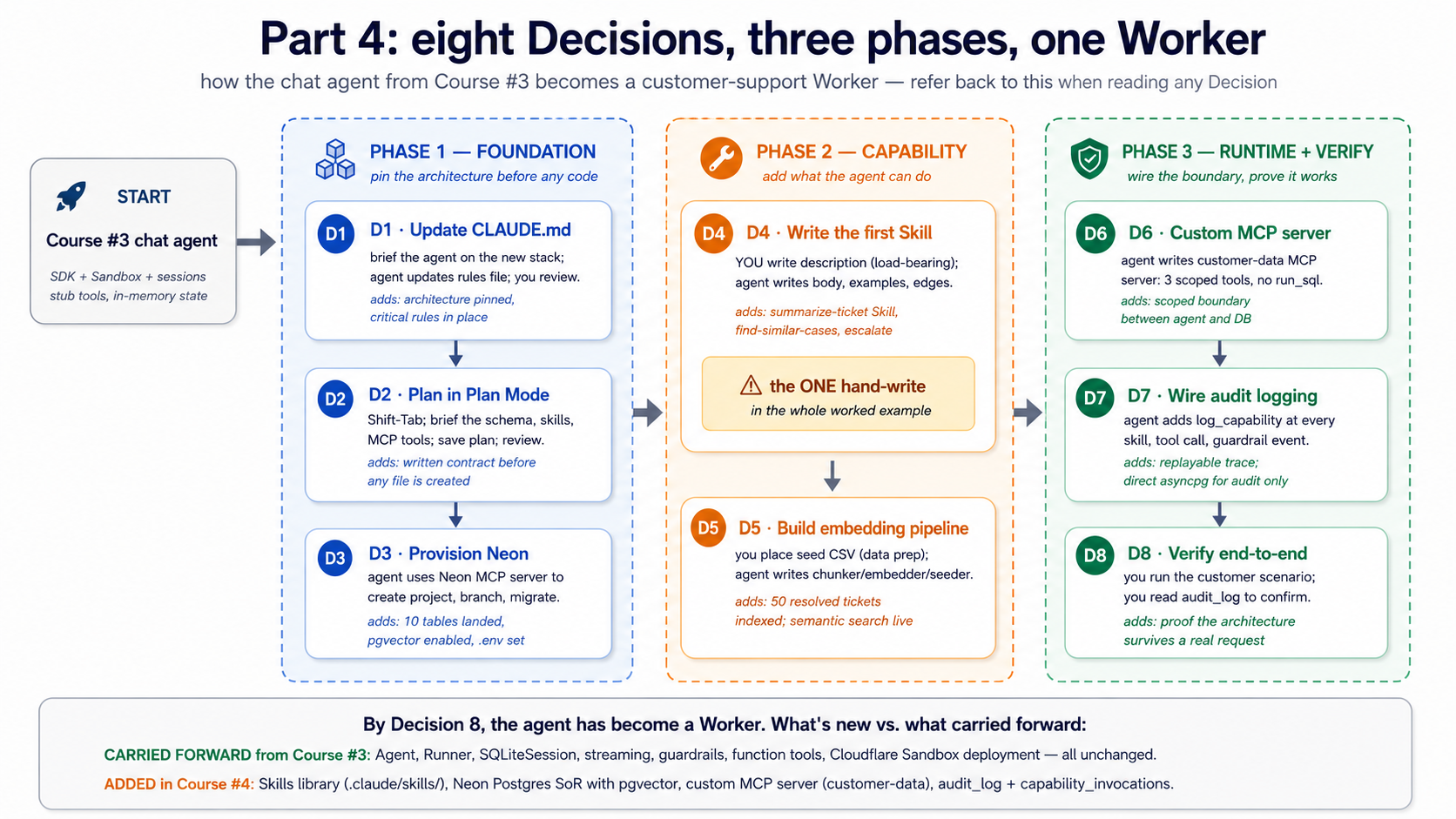

There's a complete worked example in Part 5: the chat agent from Course #3, evolved into a customer-support Worker with three skills, a Neon system of record, and an MCP wiring layer. Eight build decisions, same shape as the previous course's worked example. If you learn better from doing than reading definitions, skim Parts 1–3 and jump to Part 5.

Architecture in one line. Engine = OpenAI Agents SDK + Cloudflare Sandbox (from Course #3). Capability = Skills (Part 1). Truth = Neon Postgres + pgvector (Part 2). Connector = MCP (Part 3). The eight build decisions in Part 5 wire all four together. If only one sentence of this whole document sticks, that's the one.

The fifteen-minute quick win — succeed once, then study why it worked

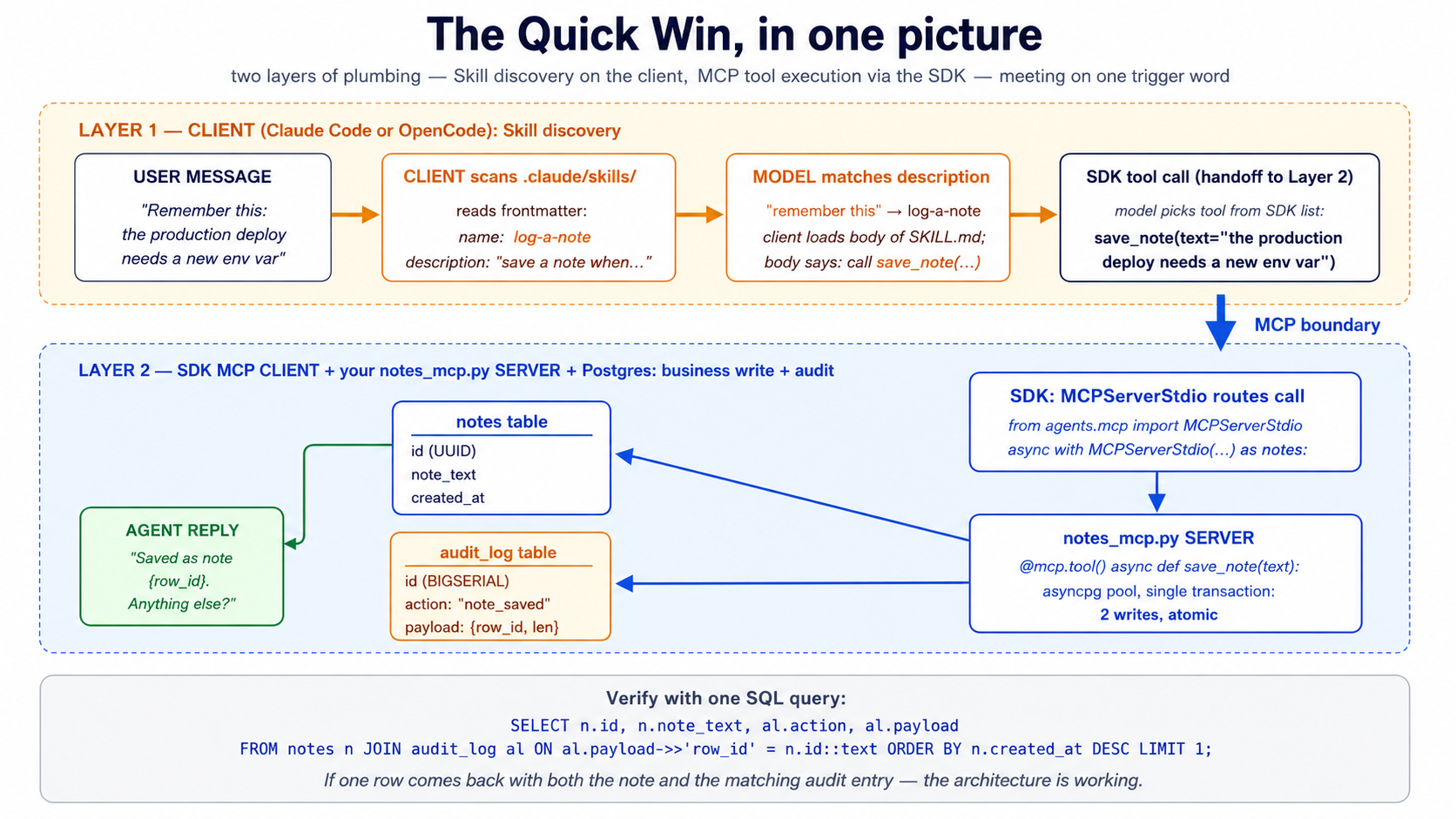

Before you read the 15 concepts that explain why this architecture works, build the smallest possible version of it that actually works. Four files, two uv commands, one shell session. By the end of this section you'll have:

- one Skill the agent discovers and invokes

- one Postgres table with one row written by the agent

- one audit-trail entry recording what happened

- a working answer to the question "did Skills + system of record + MCP actually do anything for me?"

This is not the Part 5 worked example — that's the full Worker, eight Decisions, hundreds of lines. This is one screen. If you only have one sitting, do this, then come back for the concepts when you want to know why each piece was shaped the way it was.

One precondition worth being explicit about. Skill discovery (scanning .claude/skills/, reading frontmatter, presenting descriptions to the model, loading the body on activation) is a client capability, not an OpenAI Agents SDK primitive. Claude Code, OpenCode, and Codex all ship with it. A bare Agent(...) from the SDK does not automatically find the SKILL.md files under .claude/skills/; it only sees the tools you pass via mcp_servers= and @function_tool. Run the Quick Win below from inside Claude Code or OpenCode, the same way you'd run your Course #3 chat agent from those clients. If you want to invoke skills from a standalone python -m chat_agent.cli session, you'd add a small Skill-loader (scan the folder, read frontmatter, inject descriptions into Agent(instructions=...), load the body when the model picks it). The full Part 5 worked example assumes that loader exists; the Quick Win below keeps things simple by letting the client handle discovery.
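The Skill-loader described in the paragraph above could be sketched roughly as follows. This is a minimal illustration, not the loader Part 5 uses: the names SkillMeta, split_frontmatter, discover_skills, skills_preamble, and load_skill_body are hypothetical, and the frontmatter parser only handles flat `key: value` lines (a real loader would use a YAML parser).

```python
from dataclasses import dataclass
from pathlib import Path


@dataclass
class SkillMeta:
    name: str
    description: str
    path: Path  # location of the SKILL.md file


def split_frontmatter(text: str) -> tuple[dict[str, str], str]:
    """Split a SKILL.md into (flat frontmatter dict, markdown body).

    Assumption: only top-level `key: value` lines are read; nested YAML
    (for example a `metadata:` mapping) is skipped.
    """
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}, text
    meta: dict[str, str] = {}
    for i, line in enumerate(lines[1:], start=1):
        if line.strip() == "---":
            return meta, "\n".join(lines[i + 1:]).strip()
        if line and not line[0].isspace() and ":" in line:
            key, _, value = line.partition(":")
            meta[key.strip()] = value.strip()
    return {}, text  # no closing delimiter: treat as no frontmatter


def discover_skills(root: Path) -> list[SkillMeta]:
    """Discovery: keep only name and description from every SKILL.md."""
    skills = []
    for skill_md in sorted(root.glob("*/SKILL.md")):
        meta, _ = split_frontmatter(skill_md.read_text(encoding="utf-8"))
        if "name" in meta and "description" in meta:
            skills.append(SkillMeta(meta["name"], meta["description"], skill_md))
    return skills


def skills_preamble(skills: list[SkillMeta]) -> str:
    """Text that could be appended to the agent's instructions string."""
    rows = [f"- {s.name}: {s.description}" for s in skills]
    return "Available skills:\n" + "\n".join(rows)


def load_skill_body(skill: SkillMeta) -> str:
    """Activation: return the markdown body of one skill."""
    _, body = split_frontmatter(skill.path.read_text(encoding="utf-8"))
    return body
```

In a standalone session you would call discover_skills(Path(".claude/skills")) once at startup, add skills_preamble(...) to the instructions you pass to Agent(instructions=...), and call load_skill_body only after the model names a skill.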

Step 1. In your Course #3 chat-agent/ project, create a Skill folder with one file:

```bash
mkdir -p .claude/skills/log-a-note
cat > .claude/skills/log-a-note/SKILL.md << 'EOF'
---
name: log-a-note
description: Saves a short text note to the durable notes table in Postgres, along with a timestamp and the conversation_id. Use when the user says "remember this", "save a note", "log that", or otherwise asks for something to be persisted between sessions. Returns the row_id of the saved note.
---

# Log a note

When this skill activates:

1. Extract the note text from the user's message: everything after "remember:" or "save this:" or similar trigger phrases, or the whole message if no trigger phrase is used.
2. Call the `save_note` tool with the extracted text.
3. Reply with one short sentence confirming the save and citing the row_id.
EOF
```

Step 2. Create one Postgres table and a tiny audit table. (Use Neon's web console for this, or your existing Course #3 database if you have Postgres handy — you don't need pgvector yet.)

```sql
CREATE TABLE notes (
    id UUID PRIMARY KEY DEFAULT gen_random_uuid(),
    note_text TEXT NOT NULL,
    created_at TIMESTAMPTZ NOT NULL DEFAULT NOW()
);

CREATE TABLE audit_log (
    id BIGSERIAL PRIMARY KEY,
    action TEXT NOT NULL,
    payload JSONB NOT NULL,
    created_at TIMESTAMPTZ NOT NULL DEFAULT NOW()
);
```

Step 3. Write a 30-line MCP server exposing one tool. The full pattern shows up again in Concept 14 and Decision 6; this is the smallest possible version:

```python
# notes_mcp.py
import json
import os

import asyncpg
from mcp.server.fastmcp import FastMCP

mcp = FastMCP("notes")
_pool = None  # created lazily on the first tool call


async def pool():
    """Return the shared asyncpg pool, creating it on first use."""
    global _pool
    if _pool is None:
        _pool = await asyncpg.create_pool(os.environ["DATABASE_URL"])
    return _pool


@mcp.tool()
async def save_note(text: str) -> dict[str, str]:
    """Save a note to the durable notes table. Returns the row_id."""
    p = await pool()
    async with p.acquire() as c:
        # One transaction: the note and its audit row commit together or not at all.
        async with c.transaction():
            row_id = await c.fetchval(
                "INSERT INTO notes (note_text) VALUES ($1) RETURNING id::text", text)
            # json.dumps builds the JSONB payload without hand-written escaping.
            await c.execute(
                "INSERT INTO audit_log (action, payload) VALUES ($1, $2::jsonb)",
                "note_saved", json.dumps({"row_id": row_id, "len": len(text)}))
    return {"row_id": row_id, "status": "saved"}


if __name__ == "__main__":
    mcp.run(transport="stdio")
```

Step 4. Tell your agent (in cli.py from Course #3) to use the new MCP server. Add four lines to the imports and the Runner.run_streamed setup:

```python
import os  # if cli.py does not already import it

from agents.mcp import MCPServerStdio

async with MCPServerStdio(
    name="notes",
    params={"command": "python", "args": ["notes_mcp.py"],
            "env": {"DATABASE_URL": os.environ["DATABASE_URL"]}},
) as notes:
    agent = Agent(name="ChatAgent", mcp_servers=[notes], ...)  # rest as in Course #3
```

Step 5. Run it. Send a message: "Remember this: the production deploy needs a new env var before Friday."

What happens, in order: when you run this from Claude Code or OpenCode, the client reads .claude/skills/log-a-note/SKILL.md and surfaces the description to the model alongside the SDK's tool list. The agent's MCP client (the SDK's MCPServerStdio) discovers the save_note tool from notes_mcp.py. The model sees one description ("save a note") and one tool (save_note) and connects the two — the user says "remember this," the client activates the skill, the skill body says "call save_note," the model calls it. The MCP server writes a row to notes, writes a row to audit_log, and returns the row_id. The agent replies with the row_id. Two layers of plumbing, one trigger word.

The verification, in one SQL query:

```sql
SELECT n.id, n.note_text, n.created_at, al.action, al.payload
FROM notes n
JOIN audit_log al ON al.payload->>'row_id' = n.id::text
ORDER BY n.created_at DESC LIMIT 1;
```

If you see your note text in one row and "action": "note_saved" with a matching row_id in the joined audit row — the architecture is working. A Skill the agent discovered. A system of record that holds the truth. An MCP boundary the agent crossed to write it. An audit trail you can replay.

This is one Skill, one tool, two tables, and ~50 lines of new code. The Part 5 worked example scales the same pattern to three Skills, ten tables, three MCP tools, and an embedding pipeline — but the shape is identical. If this quick win worked, the rest of the course is explaining why each piece is shaped the way it is and what changes when you scale it up.

If something didn't work, scan the "When something feels wrong" table near the end of the document — it maps each common failure to the concept that explains it. Then come back here.

Part 1: Skills — capability as portable folders

The previous course's agent had its capabilities baked into Python. Every tool was a @function_tool-decorated function in tools.py; every behavior was an instruction string in agents.py. That worked for one demo. It does not work for a workforce. Skills extract capability out of code and into folders the agent discovers, loads, and executes on demand — version-controlled, sharable across agents, addable without redeploying.

Concept 1: What an Agent Skill is

An Agent Skill is a folder containing a SKILL.md file plus optional scripts, references, and assets. The folder is the skill; the SKILL.md is its entry point. The format was originally released by Anthropic, is now an open standard maintained at agentskills.io, and is supported by Claude Code, OpenCode, and a growing list of other agent clients.

The minimum viable skill:

```text
hello-skill/
└── SKILL.md
```

With contents:

```markdown
---
name: hello-skill
description: A skill that responds with a friendly greeting tailored to the user's name and the time of day. Use when the user asks to be greeted, says hello, or starts a conversation.
---

# Hello skill

When the user greets you or asks to be greeted:

1. Determine the current local time of day.
2. Compose a friendly, time-appropriate greeting that uses the user's name if available.
3. Keep the greeting under 25 words.

Examples:

- Morning + name known: "Good morning, Sara. Hope your week is starting well."
- Evening + no name: "Good evening. Happy to be of help."
```

That's a complete, valid skill. The agent will see it at startup (the name and description), load the body when a task matches the description, and act on the instructions. No code. No deployment. No SDK call. A folder with a markdown file.

This is the load-bearing observation. Skills are not Python objects you import. They are not API endpoints you call. They are not framework primitives you instantiate. They are files on disk the agent discovers and executes. That property — the file-on-disk property — is what makes them portable across tools, shareable across teams, and version-controllable like any other text artifact.

PRIMM: Predict. Given the hello-skill folder above, what does the agent load into context at startup, before any user message arrives? Three options: (a) the entire SKILL.md file, including the instructions; (b) just the frontmatter name and description, nothing else; (c) nothing, because skills load only when invoked. Confidence 1–5.

The answer is (b). At startup, the agent reads only the metadata for every skill in its skill library. The full body — the instructions, the examples, the references — loads on demand. This is progressive disclosure, and it's the next concept.

Try with AI

```text
I want to write my first Agent Skill. Give me three skill ideas
suitable for a customer-support agent. For each, write the frontmatter
(name + description) only. The description must be specific enough
that the agent knows EXACTLY when to invoke it; vague descriptions
like "Helps with tickets" don't count. After I review your three,
ask me which I'd refine first.
```

Concept 2: Progressive disclosure — the three-stage skill loading model

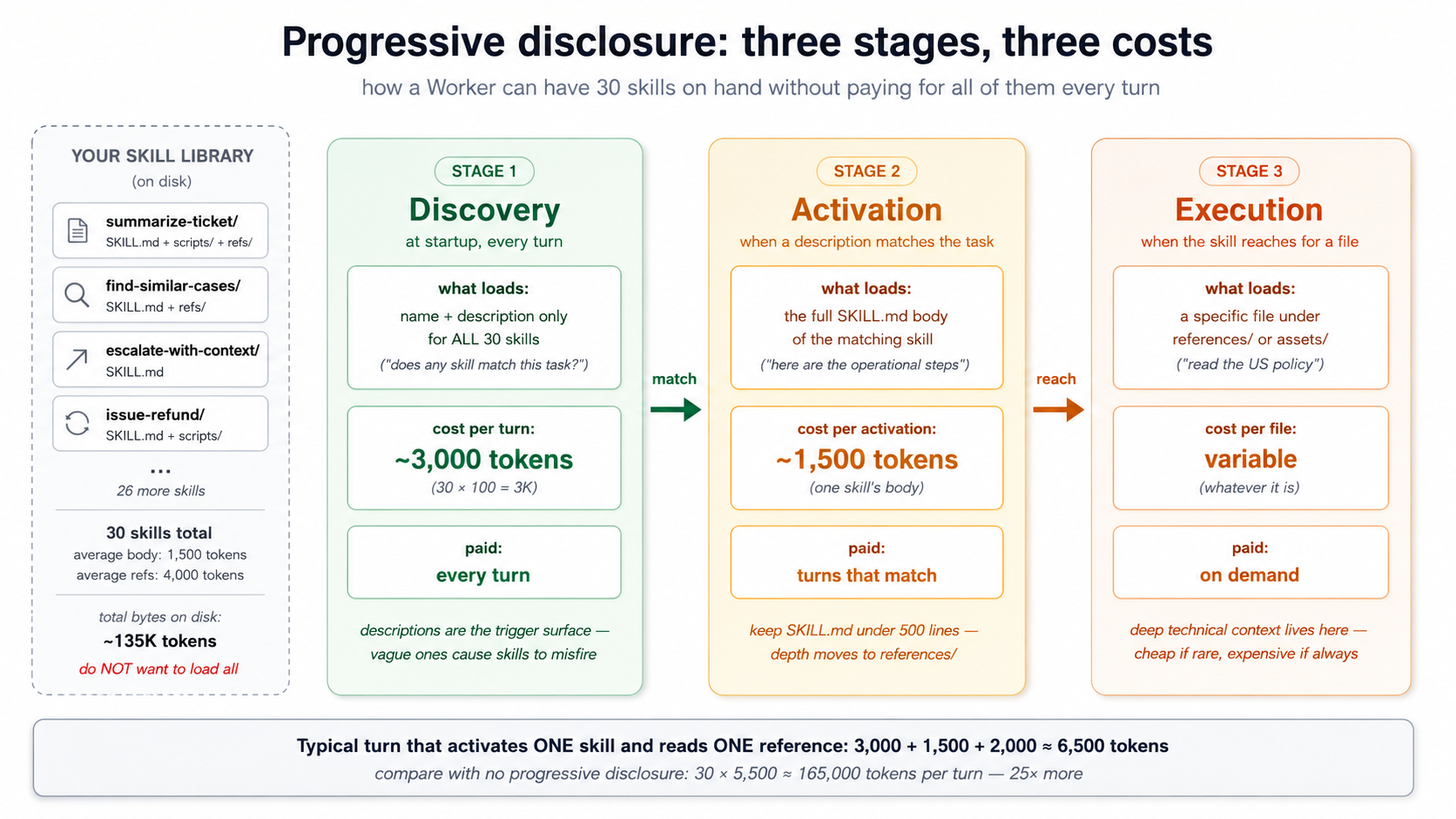

A chat agent with one skill has trivial context cost. A Worker with fifty skills doesn't — unless the loading model is clever. Progressive disclosure is the clever part. Skills load in three stages, each more expensive than the last, only loading the next stage when the previous stage indicates the skill is relevant.

Stage 1 — Discovery. At startup, the agent loads the name and description of every available skill. The spec recommends keeping this footprint tiny — roughly 100 tokens per skill. A Worker with fifty skills pays roughly 5,000 tokens for discovery, every turn, every conversation. That's the cost of knowing what's in the library.

Stage 2 — Activation. When the model decides a task matches a skill's description, it loads the full SKILL.md body into context. The spec recommends keeping this under 5,000 tokens per skill. Most well-written skills sit at 500–2,000 tokens. The activation cost is paid only on turns that actually use the skill.

Stage 3 — Execution. If the skill's instructions reference other files — a script under scripts/, a reference under references/, a template under assets/ — those load only when the agent explicitly reaches for them. Some skills never load any references; deep technical skills might load three or four.
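Stage 3 can be pictured as a small accessor that touches a skill's bundled files only when asked. The sketch below is an assumption about how a runtime might do it, not part of any SDK: the SkillFiles class and its loaded list are hypothetical names, and the containment check is an extra precaution added for illustration.

```python
from pathlib import Path


class SkillFiles:
    """Reads files bundled with one skill, and only when asked for them."""

    def __init__(self, skill_dir: Path) -> None:
        self.skill_dir = skill_dir.resolve()
        self.loaded: list[str] = []  # relative paths read so far

    def read(self, relative_path: str) -> str:
        target = (self.skill_dir / relative_path).resolve()
        # Refuse paths that escape the skill folder (e.g. "../secrets.txt").
        if not target.is_relative_to(self.skill_dir):
            raise ValueError(f"{relative_path!r} is outside the skill folder")
        text = target.read_text(encoding="utf-8")
        self.loaded.append(relative_path)
        return text
```

Constructing SkillFiles reads nothing from disk; a file under references/ enters memory (and later the model's context) only after an explicit read("references/...") call, which is the property Stage 3 depends on.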

A short walkthrough — what this looks like in practice. Imagine your customer-support Worker has 30 Skills in .claude/skills/, each with a SKILL.md. The agent starts; the runtime reads each SKILL.md and extracts the YAML frontmatter only — name and description — for all 30 files. Roughly 3,000 tokens land in the model's context as a table of "available skills." The body of each SKILL.md is not loaded; the references under references/ are not loaded; the scripts under scripts/ are not even read. The model now knows what tools it has, but not how to use any of them.

The user sends a message: "Bring me up to speed on ticket TKT-1042." The model scans its 30-description list, matches the summarize-ticket description (which mentions "ticket ID," "bring me up to speed"), and decides this skill applies. Now the runtime loads the full summarize-ticket/SKILL.md body — about 1,500 tokens — into context. The model reads the operational instructions: "extract the ticket ID, call lookup_customer, call find_similar_resolved_tickets, compose a five-section summary." It follows the instructions.

Step 3 of those instructions references references/style.md — the formatting guide for summaries. The model reaches for that file; the runtime loads it on demand, ~2,000 more tokens. The other 29 skills' bodies, the other reference files, the scripts under scripts/ — none of it ever entered the model's context. Total context cost this turn: ~6,500 tokens of skill machinery. Cost without progressive disclosure (all bodies + all references loaded at startup): ~165,000 tokens. That's the difference between a Worker that's affordable to run and one that isn't.
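The arithmetic in this walkthrough can be checked with a few lines of Python. The function names are hypothetical, and the 4,000 tokens of references per skill is an assumption chosen because it reproduces the ~165,000 figure; the other numbers come straight from the walkthrough.

```python
def progressive_cost(n_skills: int, meta_tokens: int,
                     body_tokens: int, reference_tokens: int) -> int:
    """Tokens on a turn that activates one skill and reads one reference."""
    discovery = n_skills * meta_tokens  # paid for every skill in the library
    return discovery + body_tokens + reference_tokens


def eager_cost(n_skills: int, body_tokens: int, refs_per_skill: int) -> int:
    """Tokens if every body and every reference were loaded at startup."""
    return n_skills * (body_tokens + refs_per_skill)


# Walkthrough numbers: 30 skills at ~100 tokens of metadata each,
# one 1,500-token body, one 2,000-token reference file.
print(progressive_cost(30, 100, 1_500, 2_000))  # 6500
# Assumed 4,000 tokens of references per skill.
print(eager_cost(30, 1_500, 4_000))             # 165000
```

Only the discovery term grows with the size of the library; the activation and reference terms depend on the one skill that fired.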

PRIMM: Predict. A Worker has 30 skills in its library. The average SKILL.md frontmatter is 80 tokens; the average body is 1,500 tokens; each skill has on average two reference files totalling 4,000 tokens of references. On a typical turn that activates one skill and reads one reference, what is the rough context cost of the skill system? Three options: (a) ~2,400 tokens (discovery metadata only); (b) ~5,900 tokens (discovery metadata + one activation + one reference); (c) ~165,000 tokens (everything loaded all the time). Confidence 1–5.

The answer is (b), roughly 5,900 tokens: 30 × 80 tokens for discovery (2,400), plus 1,500 tokens for the one activated skill, plus ~2,000 tokens for one referenced file (half of the 4,000-token total across two files). Discovery cost scales linearly with library size; activation and execution costs are paid per skill used, independent of library size. This is what makes a skill library affordable. Without progressive disclosure, 30 skills × (1,500 tokens of body + 4,000 tokens of references) = 165,000 tokens per turn just to know what the agent can do. Nobody runs that.

Two consequences for skill design:

- Descriptions are the load-bearing field. They're what fires in Stage 1 and determines whether Stage 2 happens at all. A vague description ("helps with PDFs") fires too often and burns activation tokens for nothing; a sharp description ("extracts text and tables from PDFs, fills PDF forms, merges PDFs; use when working with PDF files") fires when it should and stays quiet when it shouldn't. Most skill failures are description failures.

- Long SKILL.md bodies are expensive. Keep SKILL.md under ~500 lines, ideally 100–300. Move depth into reference files. The 500-line limit isn't arbitrary; it's the threshold where activation cost starts crowding out actual reasoning context.

Try with AI

```text
I have a SKILL.md that's grown to 1,200 lines because I kept adding
edge cases. Walk me through how to split it. The skill is a
"customer-refund-issuance" skill: it has the core refund process,
five edge cases (international, partial, post-30-days, with-restocking-fee,
fraud-flagged), three reference policies (US, EU, UK), and a Python script
that calls the payment gateway. Help me decide what stays in SKILL.md,
what moves to references/, what moves to assets/, and what stays as scripts/.
```

Concept 3: Writing a SKILL.md — the contract a skill makes with the model

The SKILL.md file has two parts: YAML frontmatter (the contract) and the Markdown body (the operational instructions). The frontmatter is the agent-facing API; the body is the doing.

The frontmatter, by field.

| Field | Required | Constraint | Purpose |
|---|---|---|---|
| name | Yes | 1–64 chars, lowercase alphanumeric + hyphens, no leading/trailing/consecutive hyphens, must match folder name | The skill's identifier. |
| description | Yes | 1–1024 chars, non-empty | The trigger surface. What the agent reads at discovery to decide whether to invoke this skill. |
| license | No | License name or path to bundled license file | What terms the skill ships under. |
| compatibility | No | ≤500 chars | Environment requirements (intended product, system packages, network access). Most skills don't need this. |
| metadata | No | Arbitrary key-value mapping | Client-specific extensions (author, version, etc.). |
| allowed-tools | No | Space-separated tool list | Pre-approved tools the skill may use. Experimental; support varies. |
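The constraints in the table are mechanical enough to check in code. The validator below is a sketch under stated assumptions: validate_frontmatter and NAME_RE are hypothetical names, the input is an already-parsed flat dict of frontmatter strings, and "lowercase alphanumeric" is read as ASCII a-z and 0-9.

```python
import re

# One or more lowercase alphanumeric runs joined by single hyphens:
# this rules out leading, trailing, and consecutive hyphens in one pattern.
NAME_RE = re.compile(r"[a-z0-9]+(?:-[a-z0-9]+)*")


def validate_frontmatter(meta: dict[str, str], folder_name: str) -> list[str]:
    """Return a list of problems; an empty list means the checks passed."""
    problems = []
    name = meta.get("name", "")
    if not 1 <= len(name) <= 64:
        problems.append("name must be 1-64 characters")
    elif not NAME_RE.fullmatch(name):
        problems.append("name must be lowercase alphanumerics joined by single hyphens")
    elif name != folder_name:
        problems.append("name must match the folder name")
    description = meta.get("description", "").strip()
    if not 1 <= len(description) <= 1024:
        problems.append("description must be 1-1024 characters")
    if len(meta.get("compatibility", "")) > 500:
        problems.append("compatibility must be at most 500 characters")
    return problems
```

Running a check like this before committing a skill catches the failures that are otherwise silent, such as a folder renamed without updating name.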

The minimum viable frontmatter is two fields:

---

name: my-skill

description: One sentence explaining what this skill does and when to use it.

---

A more production-shaped frontmatter:

---

name: extract-meeting-notes

description: Extracts structured action items, decisions, and follow-ups from raw meeting transcripts. Use when the user provides a transcript, meeting recording text, or asks to summarize a meeting. Produces a markdown summary with explicit sections for Decisions, Action Items (with owner and deadline), and Follow-ups.

license: MIT

compatibility: Requires Python 3.12+ and access to the OpenAI API.

metadata:
  author: panaversity
  version: "1.2"

---

The description is the load-bearing field. Reread the spec's good vs. poor examples and internalize the difference:

Good: "Extracts text and tables from PDF files, fills PDF forms, and merges multiple PDFs. Use when working with PDF documents or when the user mentions PDFs, forms, or document extraction."

Poor: "Helps with PDFs."

The good description says what ("extracts text and tables, fills forms, merges"), when ("when working with PDF documents or when the user mentions PDFs, forms, or document extraction"), and surfaces specific keywords the model can match against ("PDFs," "forms," "extraction"). The poor one says none of those. Description quality is the single biggest determinant of whether your skill fires when it should and stays quiet when it shouldn't.

The body, by convention. No format requirements — the spec says "Write whatever helps agents perform the task effectively." But three sections show up in nearly every well-written skill:

- Step-by-step instructions. What the agent does, in numbered steps, in operational language. Not narrative ("This skill is designed to help with refunds") but imperative ("Look up the order. Check the policy. Issue the refund."). Imperative skills run better than narrative ones.

- Examples of inputs and outputs. One or two short examples that show the expected shape of input and the expected shape of output. Examples are worth roughly five times more than descriptions for steering model behavior. Don't skip them.

- Common edge cases. Two or three cases that aren't obvious from the happy path — typically the cases that have actually broken in production. Edge cases earn their place by being from real failures, not imagined ones.

PRIMM — Predict. Two skills have the same name field (summarize-document) but live in different folders — one in ~/.claude/skills/ (user-level) and one in .claude/skills/ (project-level). The current task matches both descriptions. What happens? Three options: (a) the agent picks one at random; (b) the project-level skill wins because of folder precedence; (c) the agent loads both and lets the model choose. Confidence 1–5.

The answer depends on the client, but the prevailing convention across Claude Code and OpenCode is (b): project-level wins over user-level. Same precedence pattern as CLAUDE.md resolution, the agentic-coding crash course's rules-file behavior, and most tool configuration. The principle: more specific context overrides more general context. Precedence rules can differ between clients and versions, so confirm the behavior in your client's documentation before relying on it. Two skills with the same name in the same folder are a different problem; that is usually an error, since folder names must match name values.
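To make "more specific context overrides more general context" concrete, here is a minimal sketch of an ordered lookup. `resolve_skill` is a hypothetical helper, not any client's actual implementation; the precedence comes entirely from the order of `roots`, which is the assumption to verify against your client.

```python
from pathlib import Path
from typing import Optional


def resolve_skill(name: str, roots: list[Path]) -> Optional[Path]:
    """Return the first SKILL.md found for `name`, searching roots in order.

    Earlier roots take precedence, so listing the project-level root
    before the user-level root yields "project wins" behavior. That
    ordering is an assumption about the client, not a guarantee.
    """
    for root in roots:
        candidate = root / name / "SKILL.md"
        if candidate.is_file():
            return candidate
    return None


if __name__ == "__main__":
    # Project-level first, user-level second.
    search_order = [Path(".claude/skills"), Path.home() / ".claude" / "skills"]
    print(resolve_skill("summarize-document", search_order))
```

Swapping the two entries in `search_order` flips the winner, which is a quick way to reason about what a client with the opposite precedence would do.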

Try with AI

You have two skills in the same project:

- name: lookup-customer
  description: Looks up a customer record.

- name: get-customer-profile
  description: Retrieves customer profile information.

A user asks: "What's John Smith's email?"

Walk through three steps with the AI:

1. For each skill, predict whether it WILL fire on this prompt.

Confidence 1-5.

2. If both fire, the model has to pick one — predict which it picks

and why.

3. Rewrite BOTH descriptions so exactly one fires on this prompt,

and the other fires only on a clearly different prompt. State the

prompt that SHOULD fire the other skill.

Concept 4: Skill packaging — where skills live and how they travel

Skills are filesystem artifacts. That's what makes them portable; it's also what makes their packaging conventions matter. Get the folder structure right and your skill works in every compliant client; get it wrong and it works in none.

Where each tool looks for skills.

| Tool | Project-level | User-level (global) |

|---|---|---|

| Claude Code | .claude/skills/<skill-name>/SKILL.md | ~/.claude/skills/<skill-name>/SKILL.md |

| OpenCode | .opencode/skills/<skill-name>/SKILL.md | ~/.config/opencode/skills/<skill-name>/SKILL.md |

| OpenCode (fallback) | .claude/skills/<skill-name>/SKILL.md | ~/.claude/skills/<skill-name>/SKILL.md |

The OpenCode fallback is the load-bearing piece for portability. OpenCode reads from its own .opencode/skills/ first, but falls back to .claude/skills/ if a skill isn't found there. The practical consequence: write your skill once in .claude/skills/, and it works in both tools without modification. This is the most concrete payoff of the open Agent Skills format. The same SKILL.md you ship to a Claude Code user works for an OpenCode user, byte-for-byte.

The full folder structure, by directory.

my-skill/
├── SKILL.md              # Required: frontmatter + body. The entry point.
├── scripts/              # Optional: executable code the skill can run.
│   ├── extract.py
│   └── normalize.sh
├── references/           # Optional: deep documentation, loaded on demand.
│   ├── REFERENCE.md
│   └── policies/
│       └── us-refund-policy.md
└── assets/               # Optional: templates, schemas, lookup tables.
    ├── report-template.md
    └── status-codes.json

Each directory has a specific job:

- scripts/ holds executable code the agent can run. Python, Bash, JavaScript — language support depends on the client and the sandbox. The agent invokes scripts by relative path (e.g., scripts/extract.py). Scripts should be self-contained, document their dependencies in a comment header, and handle edge cases gracefully because the agent will not.

- references/ holds deep documentation the agent loads on demand. The convention: keep each reference file focused on one topic (finance.md, legal.md, error-codes.md) so the agent loads only what the current task needs. A 5,000-token reference file the agent reaches once per session is cheap; a 50,000-token reference file is not. Keep references one level deep from SKILL.md. Avoid references/category/subcategory/file.md-style nesting — the spec is explicit on this.

- assets/ holds static resources: document templates, configuration templates, lookup tables, schemas, images. These are consumed by the agent (or by scripts the agent runs) but not read as instructions.

The file reference syntax inside SKILL.md. Use relative paths from the skill root:

For policy details, see [the US refund policy](references/policies/us-refund-policy.md).

To extract the structured data, run `scripts/extract.py`.

The output should follow the template in `assets/report-template.md`.

The agent reads the SKILL.md body, sees the path, and loads the referenced file on demand. No special syntax, no link resolution gymnastics, just relative paths.

Quick check. You have a skill at .claude/skills/refund-issuance/SKILL.md that references references/policies/us.md. The skill is being invoked while the agent's working directory is /home/user/projects/customer-support. Where does the agent look for the policy file? Answer: /home/user/projects/customer-support/.claude/skills/refund-issuance/references/policies/us.md — paths are relative to the skill's directory, not the agent's working directory. Get this wrong and your skill breaks in subtle ways under deployment.
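The resolution rule in the quick check can be sketched in a few lines. `resolve_skill_path` is a hypothetical helper; the guard against paths that climb out of the skill folder is an added safety assumption, not something the text above requires.

```python
from pathlib import Path


def resolve_skill_path(skill_dir: Path, relative: str) -> Path:
    """Resolve a path written in SKILL.md against the skill's own folder.

    The agent's working directory is deliberately ignored. Paths that
    escape the skill folder (e.g. "../other-skill/SKILL.md") are rejected.
    """
    root = skill_dir.resolve()
    target = (root / relative).resolve()
    if not target.is_relative_to(root):  # Path.is_relative_to needs Python 3.9+
        raise ValueError(f"{relative!r} escapes the skill directory")
    return target


if __name__ == "__main__":
    skill = Path("/home/user/projects/customer-support/.claude/skills/refund-issuance")
    print(resolve_skill_path(skill, "references/policies/us.md"))
```

Resolving against the skill folder rather than the current directory is what lets the same skill work whether it is installed at project level or user level.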

Try with AI

I'm porting a skill from Claude Code to OpenCode and want to verify

my folder layout is correct. The skill is at .claude/skills/extract-meeting-notes/

and contains SKILL.md, scripts/parse.py, and references/glossary.md.

For each of these claims, tell me TRUE or FALSE and explain why:

1. Without any changes, this skill will be discovered by OpenCode.

2. If I move the folder to .opencode/skills/extract-meeting-notes/,

Claude Code will stop discovering it.

3. The script reference inside SKILL.md should use the absolute path

~/.claude/skills/extract-meeting-notes/scripts/parse.py.

4. If two skills have the same name and one is in .claude/skills/

and the other in ~/.claude/skills/, the user-level one wins.

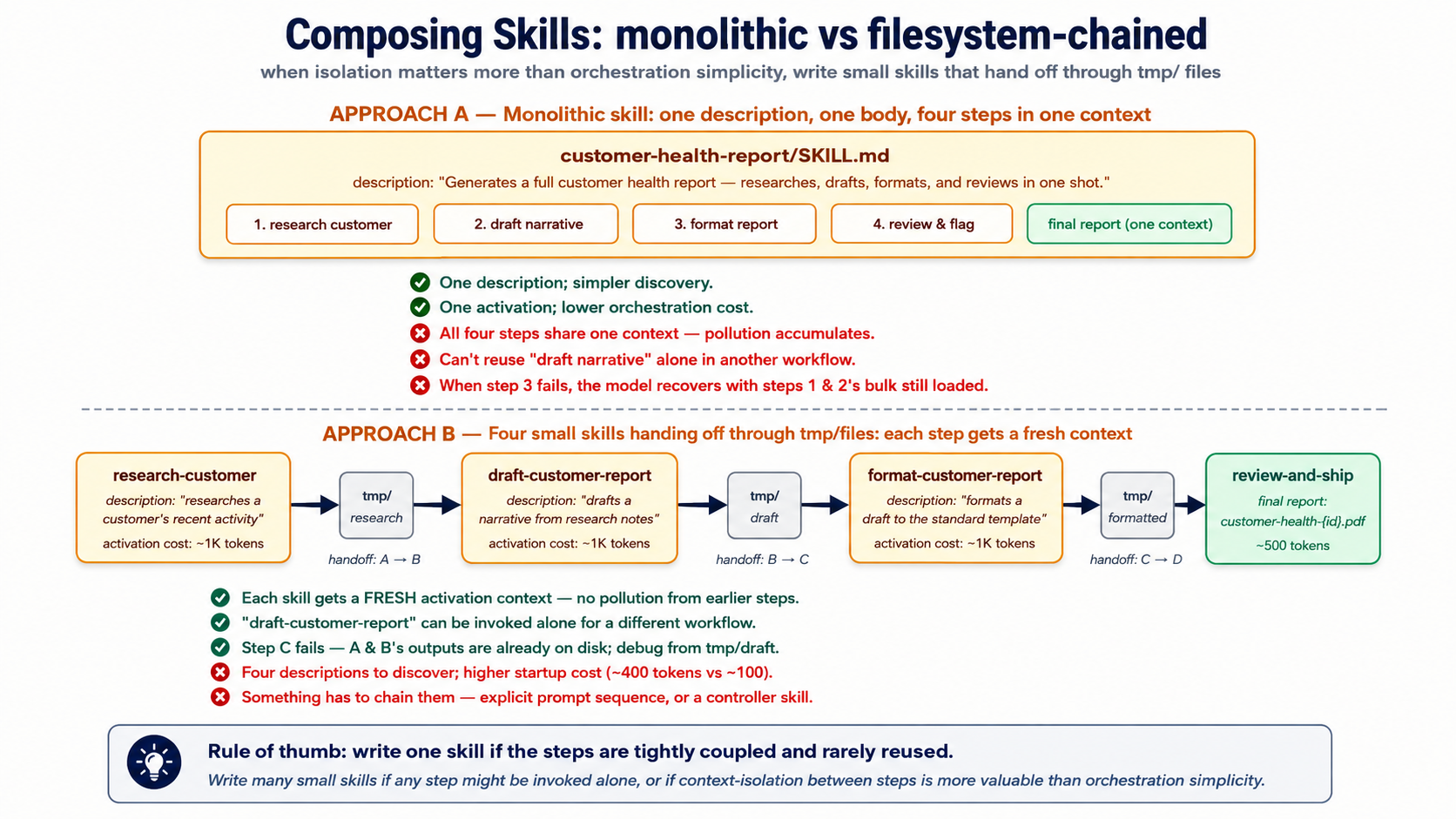

Concept 5: Composing skills — when to chain small skills vs. write one big one

Skills compose. A "weekly customer-health report" capability could be one giant skill that does research, drafting, formatting, and review in one shot. Or it could be four skills — research-customer-health, draft-customer-health-report, format-customer-health-report, review-customer-health-report — that hand off to each other through the filesystem.

Both work. They have very different properties.

One big skill. Easier to discover (one description, one match). Lower discovery cost (one entry in the discovery stage). Tighter coupling: when the activation triggers, all the steps run, in order, in one context. Hard to test in isolation. Hard to reuse a piece elsewhere. When something fails halfway through, the model has to recover with all of the now-irrelevant earlier work still in context.

Many small skills. Higher discovery cost (four entries instead of one). Higher activation orchestration cost (something has to chain them). Looser coupling: each step can be tested, replaced, or reused independently. When one fails, the failure is localized; the prior steps' artifacts are already on disk. Each step gets a fresh activation, meaning no accumulated context pollution.

The decision rule that works in practice:

Write one skill if the steps are tightly coupled and rarely reused in isolation. Write many skills if any step might be invoked on its own from a different workflow, or if context-isolation between steps is more valuable than orchestration simplicity.

For a Worker that does customer support, summarize-ticket is probably its own skill (used by humans, used by the escalation flow, used by the weekly digest, used by the audit replay). escalate-to-tier-2 is probably its own skill (the escalation criteria and tone requirements are reused). The decomposition is value-of-isolation versus cost-of-orchestration; isolation usually wins past two or three composed steps.

The filesystem handoff pattern. When skills compose, the cleanest handoff is the filesystem, not the conversation. Skill A writes its output to tmp/research-customer-{id}.md. Skill B is invoked, reads from tmp/research-customer-{id}.md, writes to tmp/draft-customer-{id}.md. Skill C reads draft, writes report. The conversation only sees the final report; intermediate artifacts live on disk where the agent can re-read them, the human can inspect them, and the audit trail can find them later. This is the same pattern Course #3 used for subagent output isolation — same insight, applied at skill granularity.

customer-health-pipeline/
├── tmp/                                   # ephemeral handoff dir
│   ├── research-customer-{id}.md          # skill A output
│   ├── draft-customer-{id}.md             # skill B output
│   └── reviewed-draft-customer-{id}.md    # skill C output
└── output/
    └── customer-health-{id}.pdf           # skill D final
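The handoff pattern is easier to judge with two steps written out. This is a sketch under stated assumptions: `research_skill` and `draft_skill` are hypothetical stand-ins for whatever each skill's script actually does, and the file contents are placeholder text. Only the file naming follows the layout above.

```python
from pathlib import Path


def research_skill(customer_id: str, workdir: Path) -> Path:
    """Skill A (stub): write research notes into the handoff directory."""
    out = workdir / "tmp" / f"research-customer-{customer_id}.md"
    out.parent.mkdir(parents=True, exist_ok=True)
    out.write_text(f"# Research for {customer_id}\n\n- 3 open tickets\n", encoding="utf-8")
    return out


def draft_skill(customer_id: str, workdir: Path) -> Path:
    """Skill B (stub): read Skill A's file from disk, write a draft beside it."""
    research = workdir / "tmp" / f"research-customer-{customer_id}.md"
    if not research.exists():
        # The failure is localized: the missing artifact names the missing step.
        raise FileNotFoundError(f"run the research step first: {research}")
    out = workdir / "tmp" / f"draft-customer-{customer_id}.md"
    out.write_text("# Draft report\n\n" + research.read_text(encoding="utf-8"), encoding="utf-8")
    return out
```

Neither function receives the other's output as an argument; the only contract between them is the file name. That is what allows Skill B to be rerun, tested, or replaced without re-executing Skill A.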

This is also where the next two parts of the course start to matter. Some skill handoffs don't belong on the filesystem — they belong in the system of record. The "customer health" snapshot from Skill A doesn't just feed Skill B; it's also a record the company needs to keep, to query later, to find similar cases against, to audit if the customer complains six weeks later. A skill that writes to a temp file is a draft. A skill that writes to the system of record is an action. That distinction is what Part 2 builds.

Try with AI

Compare two design approaches for a customer-refund-issuance workflow:

Design A: One big skill called "issue-refund" that handles eligibility check,

policy lookup, amount calculation, gateway call, ticket update, and customer

notification.

Design B: Six small skills (check-refund-eligibility, lookup-refund-policy,

calculate-refund-amount, call-payment-gateway, update-support-ticket,

notify-customer) chained via filesystem handoffs in tmp/.

For each design, name (1) one situation where it's the right choice and

(2) one specific failure mode it's vulnerable to. Then tell me which

design you'd ship and why.

Part 2: Neon Postgres + pgvector as system of record

Part 1 gave the agent capabilities it can load on demand. Those capabilities are useless without state to work against — the customer history, the prior conversations, the policy library, the audit log. The previous course's agent kept state in a local SQLite file (the session DB) and stub data in Python lists. That works for a demo. It doesn't work for a Worker that has to answer "what did we tell this customer six weeks ago?" or "have we seen this question before, and what did the resolution look like?"

This Part replaces both with a system of record — the architectural term from the thesis for "the authoritative store of state the workforce reads from and writes to." We use Neon Postgres with the pgvector extension. The architecture is the invariant; Neon is the product. Any durable, addressable, governed Postgres satisfies the requirement.

First-pass compression note. Part 2 is the densest stretch of this course — six tables, SQL, embedding pipelines, vector index math. If your Postgres is rusty, read Concepts 6 and 7 for the shape, then skim 8–10 and come back when you build. The minimum-viable Worker doesn't actually need all six tables on day one: messages + embeddings is enough to feel the architecture work. Add documents when you have a real library to embed. Add audit_log and capability_invocations the first time you have to answer "what did the agent do at 3am last Tuesday?" Add conversations when one user starts having more than one of them. The six-table schema below is where you end up, not where you start.

Concept 6: Why managed Postgres, and why Neon specifically

The thesis is product-agnostic about systems of record — "the AI-Native Company's existing databases, workflows, and operational platforms — CRMs, ERPs, ticketing systems, data warehouses, ledgers — serve as the system of record." For an agent you're building from scratch, though, you need to pick something. The question is not "Postgres vs. MongoDB vs. a vector DB" — it's "which Postgres."

Why Postgres, not a dedicated vector database. Three reasons that hold even in 2026.

- One database, one transaction, one auth boundary. A vector DB plus a relational DB means two stores to keep consistent, two auth systems to scope, two backup pipelines to maintain. The pgvector extension puts vectors next to the user records, ticket records, and audit logs they're related to — a JOIN is a JOIN, not a network hop between two eventually-consistent services. Eight million-plus pgvector installs is the empirical answer to "is this enough." It almost always is.

- Postgres already does the hard parts. Transactions, indexes, foreign keys, row-level security, point-in-time recovery, query planning. A dedicated vector DB has to invent these from scratch and usually does some of them worse. The default boring choice has compounding advantages.

- MCP servers exist for Postgres at every layer. Neon ships one (for management). General Postgres MCP servers exist (for SQL execution). You can write your own (for scoped runtime access). The MCP ecosystem around Postgres is the most mature.

Why Neon specifically — three differentiators.

- Serverless with scale-to-zero. Free tier costs nothing when idle. A Worker that handles 50 conversations per day spends most of its time costing $0, not $50/month for a provisioned instance. Critical for the economics of a multi-Worker AI-Native Company where many Workers are bursty.

- Branching. A Neon database branches in seconds — a full copy-on-write clone of your production data, ready to query. The intended use is dev/test environments and migration safety; the agent-relevant use is letting the agent experiment on a branch without touching production. A migration that goes wrong on a branch is reverted by deleting the branch. The same operation on a non-branchable database is a restore from backup.

- First-class MCP integration. Neon ships an official MCP server (remote at https://mcp.neon.tech/mcp, OAuth-authed) that exposes project management, branch lifecycle, SQL execution, and migration tooling to any MCP client. We'll use this for development. Critically — and we'll return to this — Neon explicitly does not recommend the Neon MCP server for production runtime use. It's a powerful natural-language interface; production agents talk to Postgres through narrower, scoped MCP servers you write yourself, or through direct connections. The distinction matters; Part 3 makes it operational.

Quick check. Three claims, mark each True or False before reading the next paragraph: (a) The Neon MCP server is intended to be the runtime database connection for production AI Workers. (b) A Neon database branched from production starts with all the production data already in it. (c) A Neon database that hasn't received a query in an hour is still costing you money under the free tier.

Answers: (a) False — Neon's own documentation says the MCP server is for development/testing only. (b) True — branches are copy-on-write, so the branch starts as a logical clone of production with no data movement. (c) False — scale-to-zero means idle databases cost nothing on the free tier. These three answers are the operational shape of why Neon fits as the SoR.

Try with AI

Your customer-support Worker handles EU customers. Your legal team

says: "All customer data must stay in Frankfurt." Neon's free tier

offers US-East regions only; their paid tier offers EU regions.

For each of these three approaches, decide GO or NO-GO and give ONE

sentence of justification:

A) Use Neon's paid EU tier (~$69/month minimum) and ship.

B) Use Postgres on a Frankfurt VPS you manage yourself (no pgvector

MCP server, no branching, no scale-to-zero) and ship.

C) Use Neon's free US tier; embed everything client-side before

sending; tell legal it's "encrypted at rest."

Then say which one you'd actually pick and what you'd ask legal

before committing.

Concept 7: The Worker's schema — what tables an agent actually needs

A Worker's system of record is not just "store conversations somewhere." It's a structured schema that supports the four things the thesis says every Worker does: read truth, write outcomes, leave traces, find similar prior work. Six tables cover the 90% case. You'll add more for domain specifics; these are the load-bearing six.

-- Enable pgvector; the VECTOR column and HNSW index below depend on it
CREATE EXTENSION IF NOT EXISTS vector;

-- 1. CONVERSATIONS: the top-level unit of work
CREATE TABLE conversations (
    id          UUID PRIMARY KEY DEFAULT gen_random_uuid(),
    user_id     TEXT NOT NULL,
    started_at  TIMESTAMPTZ NOT NULL DEFAULT NOW(),
    ended_at    TIMESTAMPTZ,
    metadata    JSONB NOT NULL DEFAULT '{}'::jsonb,
    -- searchable summary; populated by the agent at conversation end
    summary     TEXT
);
CREATE INDEX idx_conversations_user ON conversations(user_id, started_at DESC);

-- 2. MESSAGES: the turns of a conversation
CREATE TABLE messages (
    id               UUID PRIMARY KEY DEFAULT gen_random_uuid(),
    conversation_id  UUID NOT NULL REFERENCES conversations(id) ON DELETE CASCADE,
    role             TEXT NOT NULL CHECK (role IN ('user', 'assistant', 'tool', 'system')),
    content          TEXT NOT NULL,
    -- token counts let you reason about cost without re-counting
    input_tokens     INT,
    output_tokens    INT,
    created_at       TIMESTAMPTZ NOT NULL DEFAULT NOW()
);
CREATE INDEX idx_messages_conv ON messages(conversation_id, created_at);

-- 3. DOCUMENTS: the agent's reference library
CREATE TABLE documents (
    id          UUID PRIMARY KEY DEFAULT gen_random_uuid(),
    source      TEXT NOT NULL,  -- 'policy_library', 'kb_article', 'past_case', etc.
    title       TEXT NOT NULL,
    body        TEXT NOT NULL,
    metadata    JSONB NOT NULL DEFAULT '{}'::jsonb,
    created_at  TIMESTAMPTZ NOT NULL DEFAULT NOW(),
    updated_at  TIMESTAMPTZ NOT NULL DEFAULT NOW()
);
CREATE INDEX idx_documents_source ON documents(source);

-- 4. EMBEDDINGS: vector representations of documents AND past conversations
CREATE TABLE embeddings (
    id               UUID PRIMARY KEY DEFAULT gen_random_uuid(),
    -- one of these is populated; the other is NULL
    document_id      UUID REFERENCES documents(id) ON DELETE CASCADE,
    conversation_id  UUID REFERENCES conversations(id) ON DELETE CASCADE,
    chunk_text       TEXT NOT NULL,
    chunk_index      INT NOT NULL,
    embedding        VECTOR(1536) NOT NULL,
    model            TEXT NOT NULL,  -- 'text-embedding-3-small', etc.
    created_at       TIMESTAMPTZ NOT NULL DEFAULT NOW(),
    CHECK (
        (document_id IS NOT NULL)::int + (conversation_id IS NOT NULL)::int = 1
    )
);
-- the load-bearing index for semantic search; see Concept 8
CREATE INDEX idx_embeddings_hnsw
    ON embeddings USING hnsw (embedding vector_cosine_ops);

-- 5. AUDIT_LOG: every action the Worker takes, replayable forever
CREATE TABLE audit_log (
    id               BIGSERIAL PRIMARY KEY,
    conversation_id  UUID REFERENCES conversations(id) ON DELETE SET NULL,
    actor            TEXT NOT NULL,   -- 'worker:customer-support', 'system', etc.
    action           TEXT NOT NULL,   -- 'message_sent', 'skill_invoked', 'db_write', etc.
    target           TEXT,            -- table name, skill name, etc.
    payload          JSONB NOT NULL,  -- the data of the action
    result           JSONB,           -- what happened
    created_at       TIMESTAMPTZ NOT NULL DEFAULT NOW()
);
CREATE INDEX idx_audit_conv ON audit_log(conversation_id, created_at);
CREATE INDEX idx_audit_action ON audit_log(action, created_at);

-- 6. CAPABILITY_INVOCATIONS: every skill or tool call, for replay and metrics
CREATE TABLE capability_invocations (
    id               UUID PRIMARY KEY DEFAULT gen_random_uuid(),
    conversation_id  UUID NOT NULL REFERENCES conversations(id) ON DELETE CASCADE,
    capability       TEXT NOT NULL,  -- 'skill:summarize-ticket', 'tool:search_docs', etc.
    arguments        JSONB NOT NULL,
    result           JSONB,
    status           TEXT NOT NULL CHECK (status IN ('ok', 'error', 'timeout')),
    latency_ms       INT,
    cost_cents       INT,            -- approximate cost in 1/100 cents
    created_at       TIMESTAMPTZ NOT NULL DEFAULT NOW()
);
CREATE INDEX idx_cap_conv ON capability_invocations(conversation_id, created_at);

A few notes on the design choices, since each is load-bearing:

- One embeddings table for both documents and conversations. A CHECK constraint ensures exactly one of document_id or conversation_id is set. This lets you do semantic search across "policies AND past conversations" in one query — the question "have we answered this before?" gets one index, not two.

- audit_log uses BIGSERIAL, not UUID. Audit rows are written constantly; the simpler integer key keeps inserts fast and ordering trivial. The other tables use UUID because rows leave the database (in API responses, in URLs) and UUIDs avoid leaking row counts.

- capability_invocations separates skills from tools. A skill invocation and a @function_tool invocation are conceptually similar but operationally different (different code paths, different cost profiles, different failure modes). Storing them in one table with a capability discriminator lets you query both together when asking "what did the agent do?" while still splitting them when asking "what did skills cost vs. tools?"

- metadata JSONB columns are escape hatches. The schema can't predict every domain-specific field you'll need; JSONB lets you add fields without migrations. Use sparingly — fields that appear in many queries should be promoted to columns.

This is the minimum operational schema for a Worker — three tables for the work (conversations, messages, documents), one for the embeddings, two for the trace (audit_log, capability_invocations). You'll add more for domain specifics: a customers table, a tickets table, a policies table with versioning. Those are the same shape — relational data the agent reads and writes via MCP.

PRIMM — Predict. A Worker handles 200 conversations/day, each averaging 10 messages, with 30% triggering one skill invocation and 50% writing two audit rows beyond the skill row. After one month (30 days), roughly how many rows are in each of the six tables? Three options: (a) similar volumes across all six (~6,000–60,000 each); (b) audit_log dwarfs the others by 10×–50×; (c) embeddings dwarfs everything because each message gets embedded. Confidence 1–5.

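The answer is (b): audit_log will be the largest by a wide margin because every interaction produces multiple audit rows: message sent, skill invoked, db write, db write again, conversation closed, etc. A rough estimate: conversations ~6,000 (200 × 30); messages ~60,000 (6,000 × 10); capability_invocations ~1,800 (30% of 6,000); audit_log probably 150,000–300,000. That puts audit_log at roughly 25–50× conversations and well beyond that against capability_invocations, but only about 2.5–5× messages, so read the 10×–50× in option (b) as the gap to the smaller tables. Plan your retention and indexing accordingly; audit_log is the table you'll partition first as you grow.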
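The estimate is plain arithmetic, sketched below. One input is not given in the scenario and is an assumption here: `audit_rows_per_message`, the number of audit rows each message produces (message sent plus the database writes around it). The function name and its defaults are hypothetical.

```python
def estimate_monthly_rows(
    conversations_per_day: int = 200,
    messages_per_conversation: int = 10,
    skill_rate: float = 0.30,
    extra_audit_rate: float = 0.50,
    extra_audit_rows: int = 2,
    audit_rows_per_message: float = 3.0,  # ASSUMPTION: not stated in the scenario
    days: int = 30,
) -> dict[str, int]:
    """Rough row counts per table after `days` of traffic."""
    conversations = conversations_per_day * days
    messages = conversations * messages_per_conversation
    invocations = round(conversations * skill_rate)
    audit = round(
        messages * audit_rows_per_message                      # per-message trace rows
        + invocations                                          # one 'skill_invoked' row each
        + conversations * extra_audit_rate * extra_audit_rows  # the extra writes
    )
    return {
        "conversations": conversations,
        "messages": messages,
        "capability_invocations": invocations,
        "audit_log": audit,
    }


if __name__ == "__main__":
    for table, rows in estimate_monthly_rows().items():
        print(f"{table:>24}: {rows:,}")
```

With three audit rows per message the total lands inside the 150,000–300,000 range quoted above; the per-message term dominates, so that multiplier is the number worth measuring on a real Worker.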

Try with AI

I want to extend this schema for a customer-support Worker that

handles software bug reports specifically. What three additional

tables would you add, and what columns would they have? For each,

say what the agent will use it for (read access? write access?

both?) and what foreign keys connect it to the six core tables.

Concept 8: pgvector basics — types, distance operators, indexes

The embeddings table above is what makes the agent's memory semantically searchable. The technology that powers it is pgvector, a Postgres extension that adds vector data types and similarity-search operators. As of 2026 it has 8M+ installs, and for most workloads it settles the question "should I use a separate vector DB?" with a no.

The vector type. VECTOR(n) is a fixed-length floating-point column. n is the dimensionality of your embeddings: 1536 for OpenAI's text-embedding-3-small, 3072 for text-embedding-3-large, varies for other models. The dimension must match the model that produced the embedding; a vector of the wrong dimension is rejected at insert time, so that mistake fails loudly. The subtler and more common pgvector bug is mixing models: insert 1536-dim vectors from model A, query with 1536-dim vectors from model B, and the results are nonsense even though every query "works."

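pgvector's HNSW and IVFFlat indexes cover VECTOR columns up to 2,000 dimensions. Above that (text-embedding-3-large at its full 3072, for example), the HALFVEC type stores half-precision floats, can be indexed up to 4,000 dimensions, and roughly halves storage at minor recall cost. For our 1536-dim case, plain VECTOR(1536) is fine.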

The three distance operators. pgvector exposes three ways to measure how similar two vectors are. Pick one for your use case and stick with it; mixing is a recipe for confusion.

| Operator | Name | What it measures | When to use |
|---|---|---|---|
| <-> | L2 distance (Euclidean) | Straight-line distance in n-dimensional space | Image embeddings, geometric similarity |
| <#> | Negative inner product | Dot product (negated) | When your embedding model produces un-normalized vectors and you care about magnitude |
| <=> | Cosine distance | Angle between vectors, regardless of magnitude | Text embeddings — the default for our case |

OpenAI's text-embedding-3-small and text-embedding-3-large produce normalized vectors, which means cosine distance and Euclidean distance give equivalent rankings. Use cosine distance (<=>) by convention for text embeddings; it's the most common, the most documented, and the index ops are named vector_cosine_ops for it.
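The equivalence for normalized vectors follows from one identity: for unit vectors a and b, the squared L2 distance equals 2 × (1 − cos θ), which is twice the cosine distance. Both distances therefore order candidates identically. A small pure-Python check, using made-up 3-dimensional vectors in place of real embeddings:

```python
import math


def normalize(v: list[float]) -> list[float]:
    """Scale a vector to unit length."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def cosine_distance(a: list[float], b: list[float]) -> float:
    """1 - cosine similarity, the quantity pgvector's <=> returns."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)


def l2_distance(a: list[float], b: list[float]) -> float:
    """Euclidean distance, the quantity pgvector's <-> returns."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def rank(query, candidates, distance) -> list[int]:
    """Candidate indexes ordered from nearest to farthest."""
    return sorted(range(len(candidates)), key=lambda i: distance(query, candidates[i]))


# Toy vectors (illustrative only), normalized the way the OpenAI models' output is.
QUERY = normalize([1.0, 0.0, 0.0])
CANDIDATES = [normalize(v) for v in (
    [0.9, 0.1, 0.0], [0.0, 1.0, 0.0], [0.5, 0.5, 0.5], [-1.0, 0.2, 0.0],
)]

if __name__ == "__main__":
    print(rank(QUERY, CANDIDATES, cosine_distance))
    print(rank(QUERY, CANDIDATES, l2_distance))
```

The identity breaks as soon as vectors are not unit length, which is why the choice of operator starts to matter for models that emit un-normalized embeddings.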

A semantic-search query, in full:

-- Find the 5 document chunks most similar to the user's question
-- (one document can appear more than once if several of its chunks match)
SELECT d.id, d.title, d.body,
       e.embedding <=> $1 AS distance
FROM embeddings e
JOIN documents d ON d.id = e.document_id
WHERE e.document_id IS NOT NULL   -- excludes conversation embeddings
ORDER BY e.embedding <=> $1       -- smaller distance = more similar
LIMIT 5;

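$1 here is the embedding of the user's question, generated at query time. Without an index, ordering by <=> is a sequential scan that computes the distance to every row, O(n). With an HNSW index built for the matching operator class (vector_cosine_ops for <=>), the same ORDER BY ... LIMIT becomes an approximate nearest-neighbor lookup whose cost grows roughly logarithmically with table size.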
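On the application side, the query embedding has to reach Postgres in a form pgvector can parse. One portable route is pgvector's text format, a bracketed comma-separated list cast with ::vector. The sketch below assumes a psycopg-style driver with named %(name)s placeholders; `to_pgvector_literal`, `build_search`, and `SEARCH_SQL` are hypothetical names, and nothing here opens a connection.

```python
import math
from typing import Sequence

# Same shape as the document-search query, with named placeholders.
SEARCH_SQL = """
SELECT d.id, d.title, e.chunk_text,
       e.embedding <=> %(q)s::vector AS distance
FROM embeddings e
JOIN documents d ON d.id = e.document_id
ORDER BY e.embedding <=> %(q)s::vector
LIMIT %(k)s;
"""


def to_pgvector_literal(embedding: Sequence[float]) -> str:
    """Render floats in pgvector's text format, e.g. '[0.1,2.0,-3.5]'."""
    values = [float(x) for x in embedding]
    if not all(math.isfinite(x) for x in values):
        raise ValueError("embedding contains NaN or infinity")
    return "[" + ",".join(repr(x) for x in values) + "]"


def build_search(
    embedding: Sequence[float], limit: int = 5, expected_dim: int = 1536,
) -> tuple[str, dict[str, object]]:
    """Return (sql, params) ready to hand to cursor.execute(sql, params)."""
    if len(embedding) != expected_dim:
        raise ValueError(f"expected {expected_dim} dimensions, got {len(embedding)}")
    return SEARCH_SQL, {"q": to_pgvector_literal(embedding), "k": limit}
```

Checking the dimension before the round trip turns a database error into a local one with a clearer message. It does not catch the same-dimension, different-model case, which is why the embeddings table records the model name.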

Two index types: HNSW and IVFFlat. pgvector has two practical index choices. As of 2026, the recommendation has converged:

- Start with HNSW unless you have a reason not to. Graph-based, builds slowly but queries fast, has the broadest operator support, predictable performance. The default for new projects.

- Use IVFFlat if build time matters more than query time. Partition-based, builds 5–6× faster than HNSW, queries slower. Useful when you re-build the index frequently or have an insert-heavy workload.

- DiskANN exists (via the pgvectorscale extension from Timescale) for very large indexes that don't fit in RAM. You almost certainly don't need it.

The HNSW index from the schema above:

CREATE INDEX idx_embeddings_hnsw

ON embeddings USING hnsw (embedding vector_cosine_ops);

Two tuning knobs you'll set if you tune at all: m (the number of connections per node, default 16) and ef_construction (the build-time effort, default 64). The defaults are sensible for most workloads; only touch them when you've benchmarked.
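If benchmarking does justify changing the defaults, both knobs go in the index's WITH clause. A minimal sketch that renders the statement; `hnsw_index_ddl` is a hypothetical helper, and the ef_construction >= 2 * m check mirrors a constraint pgvector itself enforces, so verify it against the version you run.

```python
def hnsw_index_ddl(m: int = 16, ef_construction: int = 64) -> str:
    """Render CREATE INDEX for the embeddings table with explicit HNSW options."""
    if m < 2:
        raise ValueError("m must be at least 2")
    if ef_construction < 2 * m:
        raise ValueError("ef_construction must be at least 2 * m")
    return (
        "CREATE INDEX idx_embeddings_hnsw "
        "ON embeddings USING hnsw (embedding vector_cosine_ops) "
        f"WITH (m = {m}, ef_construction = {ef_construction});"
    )


if __name__ == "__main__":
    print(hnsw_index_ddl())                           # the defaults, spelled out
    print(hnsw_index_ddl(m=24, ef_construction=128))  # denser graph, slower build
```

Raising either value trades build time and index size for recall, so record the benchmark that motivated the change next to the migration.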

Quick check. Three claims to mark True or False. (a) You can have multiple HNSW indexes on the same embedding column, one per distance operator. (b) Inserting a vector into a table with an HNSW index incurs more cost than inserting into a table with no vector index. (c) An HNSW index can be created before any data is loaded into the table. Answers: all three are True. pgvector lets you index for multiple distance operators (rare but possible), the index maintenance cost is real (which is why some workloads prefer batch-insert-then-index), and HNSW (unlike IVFFlat) doesn't need a training step.

Try with AI

Two scenarios. For each, pick HNSW or IVFFlat and justify with one

specific property of the index:

Scenario A: A research index of 10M scientific papers. Built once,

queried millions of times. Build time is "whatever it takes —

overnight is fine." Query latency directly affects user experience.

Scenario B: A live index of customer support tickets that's

re-indexed every 4 hours because thousands of new tickets stream in.

Query patterns are simple (top-5 nearest neighbors). The current

HNSW build takes 20 minutes — a third of the re-index cycle.

After you answer: name ONE thing that would change your answer for

each scenario. Be specific about what you'd need to see in

production metrics before switching.

Concept 9: The embedding pipeline — text in, queryable vector out

Embeddings don't appear by magic; you generate them by calling an embedding model. The pipeline is straightforward, but each step has a decision point that bites if you get it wrong.

The four-step pipeline.

- Chunk the document into pieces small enough to embed coherently.

- Embed each chunk by calling the embedding model.

- Store the chunk text, the embedding, and metadata in the embeddings table.

- Query by embedding the user's question and finding nearest neighbors.

Chunking. Embedding models have token limits — OpenAI's text-embedding-3-small accepts up to 8,191 tokens per chunk, far more than you typically want. The question isn't "what's the maximum" but "what's the chunk size that preserves the unit of meaning the user will search for." Three rules that work in practice:

- Chunk at semantic boundaries when you can. Section headings, paragraph breaks, structural markers in your source. A chunk that ends mid-sentence retrieves badly.

- Target 200–500 tokens per chunk for most text. Long enough to carry meaning, short enough to be specific. Documents with longer chunks tend to "match everything weakly" rather than "match the right thing strongly."

- Include 10–20% overlap between adjacent chunks when content runs across a natural boundary. The overlap costs storage but improves recall when the user's question lies near a chunk boundary.

A typed chunking function for the worked example:

# src/chat_agent/embedding/chunker.py
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    text: str
    index: int
    source_offset: int  # approximate character offset in the original document


def chunk_text(
    text: str,
    target_tokens: int = 400,
    overlap_tokens: int = 60,
) -> list[Chunk]:
    """Split text into overlapping chunks at paragraph boundaries.

    Approximates words as tokens * 0.75 (about 4 tokens per 3 English
    words). Fine for chunking, not for actual token counting; use
    tiktoken for precise counts. A single paragraph longer than the
    target is kept whole rather than split.
    """
    paragraphs: list[str] = [p.strip() for p in text.split("\n\n") if p.strip()]
    target_words: int = int(target_tokens * 0.75)
    overlap_words: int = int(overlap_tokens * 0.75)

    chunks: list[Chunk] = []
    current: list[str] = []
    current_word_count: int = 0
    source_offset: int = 0

    for para in paragraphs:
        para_words: int = len(para.split())
        if current_word_count + para_words > target_words and current:
            chunks.append(Chunk(
                text="\n\n".join(current),
                index=len(chunks),
                source_offset=source_offset,
            ))
            # carry overlap forward: take trailing paragraphs that fit the budget
            overlap_chunk: list[str] = []
            overlap_count: int = 0
            for prev in reversed(current):
                if overlap_count + len(prev.split()) > overlap_words:
                    break
                overlap_chunk.insert(0, prev)
                overlap_count += len(prev.split())
            # advance the offset past the paragraphs NOT carried into the
            # next chunk (+2 per paragraph for the "\n\n" separator)
            dropped: list[str] = current[: len(current) - len(overlap_chunk)]
            source_offset += sum(len(p) + 2 for p in dropped)
            current = overlap_chunk
            current_word_count = overlap_count
        current.append(para)
        current_word_count += para_words

    # flush whatever is left as the final chunk
    if current:
        chunks.append(Chunk(
            text="\n\n".join(current),
            index=len(chunks),
            source_offset=source_offset,
        ))
    return chunks

Embedding. Call the model with batched chunks (the OpenAI API accepts arrays of inputs in one request, much more efficient than one call per chunk):

```python
# src/chat_agent/embedding/embedder.py
from openai import AsyncOpenAI

EMBEDDING_MODEL: str = "text-embedding-3-small"
EMBEDDING_DIM: int = 1536  # the table column must match


async def embed_chunks(
    client: AsyncOpenAI, chunks: list[str],
) -> list[list[float]]:
    """Embed a batch of chunks. Returns one vector per chunk, in order."""
    response = await client.embeddings.create(
        model=EMBEDDING_MODEL, input=chunks,
    )
    return [item.embedding for item in response.data]
```
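Batching has a ceiling: the embeddings endpoint limits how many inputs one request may carry. The sketch below assumes a cap of 2,048 inputs per request (check the current API reference before relying on it) and splits a chunk list accordingly. `batched` and `MAX_INPUTS_PER_REQUEST` are illustrative names, not part of the course codebase.

```python
from collections.abc import Iterator

# Assumption: per-request input cap; confirm against the current API docs.
MAX_INPUTS_PER_REQUEST: int = 2048


def batched(items: list[str], size: int = MAX_INPUTS_PER_REQUEST) -> Iterator[list[str]]:
    """Yield consecutive slices of `items`, each at most `size` long."""
    if size < 1:
        raise ValueError("size must be at least 1")
    for start in range(0, len(items), size):
        yield items[start:start + size]
```

Each yielded batch can be passed to `embed_chunks` in turn; extending one result list batch by batch keeps the vectors in the same order as the chunks.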

Three model choices that matter:

| Model | Dimensions | Cost (input, per 1M tokens) | When to use |
|---|---|---|---|
| text-embedding-3-small | 1536 | $0.02 | The default. Cheap, fast, good for most retrieval. |
| text-embedding-3-large | 3072 | $0.13 | When you've measured -small underperforming. |
| Local (e.g., bge-small) | 384–1024 | "free" (your compute) | When data residency or cost matters more than convenience. |

The headline number: embedding 50,000 chunks at ~300 tokens each = 15M tokens × $0.02/M = $0.30. Embedding the same with -large is $1.95. Embeddings are cheap; embedding choice is rarely the cost lever.
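The arithmetic above is easy to rerun for your own corpus. A minimal sketch, using the prices from the table (which may change) and a hypothetical helper name, `embedding_cost_usd`:

```python
from decimal import Decimal

# Prices per 1M input tokens, copied from the table above; they may change.
PRICE_PER_MILLION: dict[str, Decimal] = {
    "text-embedding-3-small": Decimal("0.02"),
    "text-embedding-3-large": Decimal("0.13"),
}


def embedding_cost_usd(chunk_count: int, avg_tokens_per_chunk: int, model: str) -> Decimal:
    """Estimate the one-time cost of embedding a corpus, in US dollars."""
    total_tokens = chunk_count * avg_tokens_per_chunk
    return Decimal(total_tokens) / Decimal(1_000_000) * PRICE_PER_MILLION[model]
```

`Decimal` avoids floating-point noise in the dollar figures; for a rough estimate, plain floats would do as well.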

Re-embedding. When do you re-embed? Three triggers:

- The source document changed. Delete and re-insert all embeddings whose document_id matches.

- The embedding model changed. This is a rare migration, but an all-or-nothing one: if you switch from -small to -large, every existing embedding is incompatible with the new ones. Re-embed everything, or run two embedding columns during a transition.

- The chunking strategy changed. If you decide 400 tokens was wrong and 250 is right, re-chunk and re-embed. Versioning your chunks (storing chunk_strategy_version in the metadata JSONB) lets you do this safely.
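The three triggers can be checked mechanically if each embedding row remembers what it was built from. The sketch below assumes you store a content hash and the model name alongside chunk_strategy_version; the content hash, the `EmbeddingRecord` shape, and the function names are assumptions for illustration, not part of the course schema.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class EmbeddingRecord:
    """What was true when a chunk was last embedded (hypothetical shape)."""
    content_sha256: str
    embedding_model: str
    chunk_strategy_version: int


def content_hash(text: str) -> str:
    """Stable fingerprint of the source text."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def reembed_reasons(
    stored: EmbeddingRecord,
    current_text: str,
    current_model: str,
    current_strategy_version: int,
) -> list[str]:
    """Return every trigger that applies; an empty list means the embedding is current."""
    reasons: list[str] = []
    if stored.content_sha256 != content_hash(current_text):
        reasons.append("source_changed")
    if stored.embedding_model != current_model:
        reasons.append("model_changed")
    if stored.chunk_strategy_version != current_strategy_version:
        reasons.append("chunking_changed")
    return reasons
```

Returning the reasons rather than a bare boolean makes a re-embedding job easier to audit: the job can record why each chunk was rebuilt.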

PRIMM — Predict. You've embedded 100,000 chunks with text-embedding-3-small. You then decide to also embed all messages (not just documents) so the agent can do "have we discussed this before?" lookups. You write the message embeddings into the same embeddings table with the same column. A semantic search query (find the 5 nearest neighbors to a user question, no filter) comes back with mixed document and message results. Is this what you wanted? What's the right query shape? Confidence 1–5.

The answer: almost certainly not what you wanted. Mixing documents and messages in retrieval results without distinguishing them produces incoherent answers — the model sees a top result that's a customer's prior message and treats it as authoritative documentation. The right pattern is to filter by source type in the query — either by joining and filtering on WHERE document_id IS NOT NULL for document search, or by running two separate searches and ranking them differently. The schema's CHECK constraint that exactly one of document_id/conversation_id is set makes this filter cheap and the intent explicit.
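One way to picture the "two separate searches, ranked differently" option is a merge step that runs after both queries return. The sketch below is an assumption about how such a merge could look, not the course's implementation: it adds a fixed penalty to message distances so that documentation wins ties and near-ties. `Hit`, `merge_ranked`, and the 0.15 default are all illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hit:
    chunk_text: str
    source: str      # "document" or "message"
    distance: float  # cosine distance; smaller is closer


def merge_ranked(
    document_hits: list[Hit],
    message_hits: list[Hit],
    message_penalty: float = 0.15,  # hypothetical weight; tune against your own evals
    k: int = 5,
) -> list[Hit]:
    """Merge two result lists, ranking message hits as if they were farther away."""

    def effective_distance(hit: Hit) -> float:
        return hit.distance + (message_penalty if hit.source == "message" else 0.0)

    return sorted([*document_hits, *message_hits], key=effective_distance)[:k]
```

Because every `Hit` keeps its source label, the caller can still tell the model which results are documentation and which are prior conversation.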

Debugging poor retrieval. When top-k results don't match expectations, four checks in order, each ruling out one common cause:

| Symptom | Likely cause | Fix |
|---|---|---|
| All top-5 distances are > 0.7 (cosine distance, so anything > 0.5 is "far") | Query embedding model differs from corpus embedding model | Confirm both went through the same EMBEDDING_MODEL. Mixing text-embedding-3-small with -large, or local with OpenAI, produces nonsense ranks across both pools. |
| Top-5 results are all from one source type when you expected variety | Source-type filter missing | Add WHERE document_id IS NOT NULL (or the right side of the CHECK constraint) to the query, or split into two ranked queries and merge them with explicit weights. |
| Top-k changes wildly between near-identical queries | Chunk size too small (each chunk lacks enough context) | Re-chunk with larger chunks (200, then 400, then 600 tokens) and re-embed. Re-running the same query should now produce stable top-k. |
| Query returns nothing | HNSW index missing, or vector dimension mismatch | \d+ embeddings in psql to confirm the column is VECTOR(1536) and the HNSW index exists with vector_cosine_ops. If the column is the wrong dimensionality, re-create it. |

Three diagnostic queries worth keeping in a scratch.sql:

```sql
-- 1. Distance band on the top-5 for a known-good query
SELECT chunk_text, embedding <=> :query_vec AS distance
FROM embeddings ORDER BY distance LIMIT 5;

-- 2. Source-type breakdown of top-20 (catch the "wrong source dominates" bug)
SELECT
  CASE WHEN document_id IS NOT NULL THEN 'document' ELSE 'message' END AS src,
  COUNT(*)
FROM (SELECT * FROM embeddings ORDER BY embedding <=> :query_vec LIMIT 20) t
GROUP BY src;

-- 3. Confirm the index is actually being used
EXPLAIN (FORMAT TEXT) SELECT id FROM embeddings ORDER BY embedding <=> :query_vec LIMIT 10;
```
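The first two rows of the debugging table can also be checked in code once the rows from a query are in hand. A rough sketch, assuming each result arrives as a (cosine_distance, source_type) pair; the function name and return codes are hypothetical, and the 0.7 threshold comes from the table above.

```python
def diagnose_top_k(
    results: list[tuple[float, str]],
    far_threshold: float = 0.7,
) -> list[str]:
    """Flag symptoms from the debugging table for one query's top-k results."""
    if not results:
        # Matches the "query returns nothing" row: check index and dimensions.
        return ["no_results"]
    findings: list[str] = []
    if all(distance > far_threshold for distance, _ in results):
        # Every hit is far away: suspect a query/corpus model mismatch.
        findings.append("all_far")
    if len({source for _, source in results}) == 1:
        # Only a problem when you expected a mix of source types.
        findings.append("single_source")
    return findings
```

Treat the output as a prompt for where to look next, not as a verdict: a single-source result is correct whenever the query was deliberately filtered.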

Retrieval quality is the silent killer of Worker accuracy. The final answer can sound perfectly reasonable while citing the wrong evidence; the only way to catch this is to evaluate retrieval before the final answer (see Part 4, Concept 19's retrieval evals).

Try with AI

```
I'm chunking a corpus of legal contracts (each averaging 8,000 words)
for semantic search. The user will query things like "what's the
termination clause in this contract": phrases that map cleanly to
specific sections. Walk me through three chunking strategies:

A) Fixed 400-token chunks with 60-token overlap (the default)
B) Chunk at section headings only, with no overlap
C) A two-level approach: store both 400-token chunks AND
   whole-section chunks, search both, combine results

For each, name (1) when it wins and (2) when it loses.
```

Concept 10: Audit trail as discipline — what "reads and writes" means for a Worker

The thesis is unusually direct about this: "every action a Worker takes leaves a trace in a store that survives the agent's session and can be inspected, replayed, and trusted. The system of record is what separates execution from plausible-sounding fiction. It is also what makes the workforce legible after the fact."

Translated to operational terms: every meaningful action the agent takes writes a row to audit_log, plus a more structured row to capability_invocations if it's a skill or tool call. The data being acted on lives in its appropriate table (a message goes in messages, a document update goes in documents); the fact that the action happened lives in audit_log. The two are joined by foreign key.

What to log.

- Every model call: input tokens, output tokens, model name, cost estimate

- Every skill invocation: skill name, arguments, result summary, latency, success/error/timeout

- Every database write: which table, what changed (the JSONB payload), under which conversation context

- Every external tool call: tool name, input, output summary, latency

- Every guardrail event: which guardrail tripped, what the input was, what the action was (blocked/allowed/modified)

What not to log.

- The full conversation history on every turn: that's already in messages, and you'd be storing it twice

- Sensitive payload data verbatim if the row is queryable to humans: store a hash or summary, keep the full data in a restricted table

- Internal model reasoning that the user shouldn't see — that's a context-management decision, not an audit decision
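The "store a hash or summary" advice can be sketched as a small redaction step applied before a payload reaches audit_log. The key list and function name below are hypothetical, and the sketch only handles top-level keys.

```python
import hashlib
import json
from typing import Any

# Hypothetical list of fields that must not appear verbatim in audit_log.
SENSITIVE_KEYS: frozenset[str] = frozenset({"email", "card_number", "address"})


def redact_payload(payload: dict[str, Any]) -> dict[str, Any]:
    """Return a copy of `payload` with sensitive values replaced by a SHA-256 digest."""
    redacted: dict[str, Any] = {}
    for key, value in payload.items():
        if key in SENSITIVE_KEYS:
            canonical = json.dumps(value, sort_keys=True).encode("utf-8")
            redacted[key] = {"sha256": hashlib.sha256(canonical).hexdigest()}
        else:
            redacted[key] = value
    return redacted
```

A plain digest still lets you confirm that two rows refer to the same value, but short or guessable values (a phone number, say) can be recovered by brute force; a keyed hash such as HMAC with a secret is a safer choice in that case.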

The replay test. A good audit trail passes one specific test: given a conversation_id and a timestamp, you can reconstruct what the agent did and why, without re-running the model. If your audit log doesn't pass this test, it's logging, not auditing.
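The replay test can be phrased as a function over audit rows. The sketch below works on rows already fetched as dictionaries and assumes audit_log carries a created_at timestamp column; that column name and the `replay` helper are assumptions, since the schema shown here lists only conversation_id, actor, action, target, payload, and result.

```python
from datetime import datetime
from typing import Any


def replay(
    audit_rows: list[dict[str, Any]],
    conversation_id: str,
    as_of: datetime,
) -> list[str]:
    """Reconstruct, in order, what the agent did in one conversation up to `as_of`."""
    relevant = [
        row for row in audit_rows
        if row["conversation_id"] == conversation_id and row["created_at"] <= as_of
    ]
    relevant.sort(key=lambda row: row["created_at"])
    return [
        f'{row["created_at"].isoformat()} {row["actor"]} {row["action"]} -> {row["target"]}'
        for row in relevant
    ]
```

No model call appears anywhere in the function, which is the point of the test: if the timeline cannot be rebuilt from stored rows alone, the trail is incomplete.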

A concrete write-on-action helper, used everywhere a capability runs:

```python
# src/chat_agent/audit.py
import json
import time
from typing import Any
from uuid import UUID

import asyncpg


async def log_capability(
    pool: asyncpg.Pool,
    conversation_id: UUID,
    capability: str,
    arguments: dict[str, Any],
    result: Any,
    status: str,
    started_at: float,
    cost_cents: int | None = None,
) -> None:
    """Write a capability_invocations row plus a denormalized audit_log row.

    Both writes happen in one transaction so they're either both present
    or both absent; the audit trail never gets partial.
    """
    latency_ms: int = int((time.monotonic() - started_at) * 1000)
    async with pool.acquire() as conn:
        async with conn.transaction():
            await conn.execute(
                """INSERT INTO capability_invocations
                   (conversation_id, capability, arguments, result, status,
                    latency_ms, cost_cents)
                   VALUES ($1, $2, $3::jsonb, $4::jsonb, $5, $6, $7)""",
                conversation_id, capability,
                json.dumps(arguments), json.dumps(result),
                status, latency_ms, cost_cents,
            )
            await conn.execute(
                """INSERT INTO audit_log
                   (conversation_id, actor, action, target, payload, result)
                   VALUES ($1, $2, $3, $4, $5::jsonb, $6::jsonb)""",
                conversation_id, "worker:customer-support",
                "capability_invoked", capability,
                json.dumps({"arguments": arguments, "latency_ms": latency_ms}),
                json.dumps({"status": status, "result": result}),
            )
```

Putting both writes in one transaction is the load-bearing detail. Half-written audit trails are worse than missing audit trails: they suggest completeness without delivering it. Either both rows go in or neither does.

Why this isn't just logging. Three properties separate audit data from log data:

- Replayable. The schema lets you reconstruct the agent's reasoning trace from audit_log joined with messages joined with capability_invocations. A log line in a JSONL file doesn't.

- Queryable. "What did the agent tell customer X last month, and which policy did it cite?" is a SQL query. "Which skill triggered the most timeouts in the last 7 days?" is a SQL query. Logs require grep and luck.

- Trustworthy. Audit rows are in the same database as the business data. They're backed up together, point-in-time-recoverable together, access-controlled together. They survive the agent process, the deployment region, and the model version.

This is what the thesis means when it says "Workers only become governable as a workforce when a ledger makes them legible — as units of capability, cost, latency, and outcome." Your audit_log and capability_invocations tables are that ledger. Treat them like one.

Try with AI

```
Here's a customer support scenario: a customer claims the Worker told
them they would receive a $50 refund, but the actual refund issued was
$30. The Worker handled the conversation 19 days ago.

Walk me through the audit-trail query path to resolve this:

1. Find the conversation. (Which columns of which tables?)
2. Find the message where the refund amount was promised. (How do you
   distinguish "discussed" from "promised"?)
3. Find the capability invocation that issued the refund.
4. Find the database write that recorded the $30 amount.

For each step, name the table you'd query and the WHERE clauses.
Then say what's MISSING from the six-table schema that would make
this query easier.
```

Part 3: MCP — wiring the agent to the system of record

Part 1 gave the agent a library of Skills. Part 2 gave it a Postgres system of record. Part 3 wires the two together with the Model Context Protocol — the open standard for how agents reach external state and external capability. The thesis is direct about MCP's place: "MCP is how the workforce reaches [its systems of record]: every authoritative store becomes addressable to any Worker through an MCP server, under policy." This Part makes that operational.

Concept 11: What MCP is and isn't