The AI-Native Finance Catalog: Pricing, Forecasting, and Financial Architecture for AI Companies

If you're new to all this — start here

This is a long document. You do not need to read it all to start using it. If you are new to finance, or running an early-stage AI company, here is the simplest possible answer to "what should I do?"

This week. Set up Stripe (or equivalent) to handle billing. Connect it to a simple bookkeeping tool — Pilot, Bench, Puzzle, Mercury Treasury, or something similar that automates the basics. Track three numbers from this point forward: revenue, gross margin (revenue minus the cost of compute and any other usage-based vendor costs), and cash runway in months.

This month. Build a simple spreadsheet with one row per month for the next 18 months, projecting those same three numbers forward. Update it on the first business day of every month. Compare actuals to forecast every month. The discrepancies are where you will learn what your business actually does.

This quarter. Once you have three months of revenue data, look at the average gross margin. If it is below 50%, your unit economics are likely broken — most AI-native businesses need 60%+ gross margin to survive at scale, and SaaS norms expect 75–85%. Below 50% is a signal to investigate compute costs, vendor pricing, or whether your pricing model fits your cost structure.

This year. Do not hire a CFO. Do not hire an accounting team. Do not buy enterprise FP&A software. Do not run an audit unless an investor explicitly requires one. Use the time you save to grow revenue, because most of finance only matters once you have meaningful revenue to manage.

That is the entire prescription for the first 12 months of an AI-native company. Stripe + a bookkeeping tool + three numbers + a simple forecast spreadsheet. The rest of this document is for the moment you outgrow that setup — when your revenue model gets complex enough, your investors get demanding enough, or your team gets large enough that the simple stack stops scaling.

If you want a slightly broader overview before returning to the prescription above, the Beginner's 10-minute version below gives you the wider map.

The beginner path through this document

If you are a true beginner, do not read this document linearly. The catalog is built for many readers — founders, CFOs, controllers, investors — and most of it is not for you yet. Read these five sections, in this order, and skip everything else until you have actual revenue:

- If you're new to all this — start here (above) — the literal year-one prescription.

- Beginner's 10-minute version (below) — the broader picture: four families, twelve approaches in one sentence each.

- Approach 2 — Per-Call / Usage Pricing (in Section A) — the most common AI pricing model and the one you will likely run first.

- Approach 7 — Compute COGS Accounting (in Section B) — what every founder needs to understand about gross margin in AI businesses.

- Appendix A — Glossary (at the end) — open this whenever a term is unfamiliar.

That is the entire beginner reading path. Roughly 4,000 words across five sections. You can skip the executive summary, the finance diagnostic, the strategic fit matrix, the other ten approaches, the cross-cutting concepts, the AI-era shifts, the common failures, and the anti-patterns until you have specific questions those sections happen to answer.

After you have meaningful revenue (typically $1M+ ARR), come back to the document and read the rest in whatever order interests you.

Where this document fits

This document sits inside The AI-Native Company series. The Agent Factory Thesis defines the architecture. The AI Worker Catalog defines what gets built. The Sales Catalog and The Marketing Catalog cover how the company sells and creates demand. The Finance Catalog defines how the company keeps the books, prices its products, forecasts the future, and reports to the people who fund it.

This document answers an operational question: how do you actually run the financial side of an AI-native company, given that the cost structure, the pricing models, and the forecasting problems are meaningfully different from traditional SaaS?

You can read this document standalone. The few cross-references to the Sales Catalog (where pricing motions are introduced) can be skipped without losing the argument.

How to read this document

This document is a tool, not a story. Different readers will use it differently.

If you are new to finance. Follow the Beginner path through this document above. Do not try to read the whole catalog on first pass — most of it is not for you yet.

If you are a founder running an early-stage AI company. Use the Finance Diagnostic and the Strategic Fit Matrix below to find which pricing architectures fit your buyer and stage. Read the relevant approaches in Section A. Skip the deeper accounting and forecasting sections until you have revenue worth forecasting.

If you are a CFO, controller, or finance lead at an AI company. The document is built for you. Read top to bottom. The approaches are sequenced from pricing (the most common entry point) through accounting mechanics, forecasting, and external reporting.

If you are an investor or board member. The Investor & Board Reporting approach (Section D) and the Common finance failures section near the end are most directly relevant.

One note on jargon. This document uses technical vocabulary from accounting, FP&A, and SaaS finance. The first time a specialized term appears, it is usually explained in plain language nearby. Appendix A: Glossary gives a quick reference. The "Finance terms you must know first" section below covers the fifteen most important terms you will encounter.

Note on professional advice. This document provides strategic frameworks and operational reference, not professional accounting, tax, legal, or financial advice. Revenue recognition under ASC 606, capitalization of training costs, audit treatment, sales tax, and corporate-structure questions all require qualified professional guidance for your specific situation. Engage qualified professionals for material decisions; this catalog is a starting point for the conversations, not a substitute for them.

Note on confidence tagging. Throughout the document, individual benchmark claims and numerical ranges are sometimes tagged to signal how confident the reader should be in the specific number. [Industry benchmark] claims have broad practitioner consensus and are widely cited across SaaS finance literature (LTV/CAC > 3; mature SaaS gross margins 75–85%; Burn Multiple under 1.5× as the healthy SaaS bar). [Emerging pattern] claims have been observed across multiple AI-native companies in 2024–2026 but are not yet codified in canonical references (AI-native gross margins of 50–70%; compute as 20–60% of revenue; foundation-model price decay of 30–60% per year). [Author thesis] claims are informed extrapolations from observed patterns; the reader should treat them as one perspective rather than settled fact (specific cost-per-outcome ranges in worker cards; stage-by-stage employee productivity benchmarks; per-modality compute cost ranges). Untagged numerical claims sit somewhere within this spectrum; the tagging is selective rather than exhaustive.

Beginner's 10-minute version

If you only have ten minutes, read this section. It gives you everything you need to understand how AI-native companies handle finance — without the depth of the rest of the document.

What is "AI-native finance" and how is it different from regular SaaS finance?

AI-native finance is the practice of pricing, accounting, forecasting, and reporting for companies whose products use foundation models, AI agents, or other compute-intensive AI workloads. It differs from traditional SaaS finance in three important ways. First, cost structure: traditional SaaS has 75–85% gross margins because hosting costs are tiny relative to revenue [Industry benchmark]; AI-native companies typically have 50–70% gross margins because compute is a meaningful share of cost [Emerging pattern]. Second, pricing models: traditional SaaS sells per-seat subscriptions; AI-native companies frequently use per-call, per-token, per-outcome, or hybrid pricing because the cost-of-service varies with usage. Third, forecasting complexity: traditional SaaS forecasts can assume stable unit costs; AI-native forecasts have to account for foundation-model prices that fall 30–60% per year [Emerging pattern], customer ramp curves that are usage-driven rather than seat-driven, and contract structures that recognize revenue differently.

The four families of finance approaches

This document organizes twelve approaches into four families:

- Pricing architectures (1–5). How AI companies charge customers. Examples: per-seat (traditional), per-call (the AI infrastructure standard), per-outcome (service-as-software), value-based (percentage of measured customer value), or hybrid combinations.

- Revenue & cost mechanics (6–8). How AI companies account for what they earn and spend. Examples: revenue recognition for usage-based contracts, compute COGS treatment, cohort analysis with model-cost decay.

- Planning & capital allocation (9–11). How AI companies forecast and budget. Examples: pilot-economics modeling, revenue forecasting under falling compute costs, capital allocation between compute and people.

- External reporting (12). How AI companies talk to investors, boards, and auditors. Examples: investor metrics, board dashboards, audit-defensible disclosures.

The twelve approaches in one sentence each

- Per-Seat Pricing. Charge a fixed monthly fee per user; familiar from traditional SaaS, increasingly inappropriate for AI products with variable compute costs.

- Per-Call / Usage Pricing. Charge per API call, per token, or per query; the dominant pricing model for AI infrastructure and the most common starting point for AI products.

- Per-Outcome Pricing. Charge only when the AI delivers a defined result — a resolved support ticket, a processed claim, a booked meeting.

- Value-Based Pricing. Charge a percentage of measured customer value created; reserved for strategic enterprise deals with sophisticated buyers.

- Hybrid Pricing. Combine multiple architectures: a base subscription plus usage overages, or a subscription plus outcome bonuses.

- Revenue Recognition for AI Contracts. The accounting rules (ASC 606) that determine when revenue counts on the books, made more complex by usage-based and outcome-based contracts.

- Compute COGS Accounting. How to treat the cost of foundation-model API calls, GPU rentals, and infrastructure compute on the income statement.

- Cohort Analysis with Model-Cost Decay. Tracking how customer cohorts get more profitable over time as foundation-model costs fall.

- Pilot Economics & Contract Mechanics. Accounting for paid pilots, expansion to production contracts, and the multi-stage commercial structure most enterprise AI deals use.

- Revenue Forecasting Under Falling Compute Costs. Building 12–24 month revenue and gross-margin forecasts that explicitly model 30–60% annual compute price reductions.

- Capital Allocation. Deciding how to split incremental dollars between compute, people, marketing, and runway.

- Investor & Board Reporting. Designing metrics, dashboards, and disclosures that AI-native investors and boards expect — which differs meaningfully from traditional SaaS norms.

Beginner difficulty per approach

- Easy (intuitive, common starting point): Per-Seat Pricing (1), Per-Call Pricing (2)

- Medium (requires operational discipline): Per-Outcome Pricing (3), Hybrid Pricing (5), Revenue Recognition (6), Compute COGS (7), Pilot Economics (9), Capital Allocation (11), Investor Reporting (12)

- Advanced (requires sophisticated finance function or external advisors): Value-Based Pricing (4), Cohort Analysis (8), Forecasting Under Falling Costs (10)

That is the entire document in ten minutes. The rest explains each piece in detail and gives you the tools to choose, sequence, and run the financial architecture of your own AI company.

Finance terms you must know first

If finance is unfamiliar territory, these are the fifteen terms you will see most often in this document. Once you know what these mean, the rest of the document becomes navigable without constant glossary lookups. (For the comprehensive glossary covering all fifty-plus terms used in the catalog, see Appendix A at the end.)

Revenue. Money the company earns from customers. The top line of the income statement.

Bookings. The total contract value of deals signed in a period. Differs from revenue: a $1.2M one-year contract is $1.2M in bookings on the day signed but produces $100K of revenue per month over the contract term.

Recognized revenue. The portion of contracted revenue that hits the income statement in a given period under GAAP rules. For traditional subscription contracts, recognized revenue is bookings divided by contract length; for AI-native usage- and outcome-based contracts, the two diverge meaningfully.

ARR (Annual Recurring Revenue). The annualized contract value of subscription customers. The single most-tracked SaaS metric. A customer paying $10K/month on an annual contract contributes $120K of ARR.

COGS (Cost of Goods Sold). The direct costs of delivering the product to customers. For AI-native companies, COGS includes foundation-model API costs, hosting and infrastructure, and the variable customer-success time required to deliver the service. Compute is typically the largest line item.

Gross margin. Revenue minus COGS, expressed as a percentage of revenue. The most important profitability metric. Traditional SaaS norms are 75–85%; AI-native norms are 50–70% because compute is a meaningful share of cost.

NRR (Net Revenue Retention). The percentage of recurring revenue retained from existing customers including upsell. Above 100% means the existing customer base is growing in revenue terms. A 130% NRR means $1M of revenue from a year ago is now $1.3M from the same customers.

CAC (Customer Acquisition Cost). The fully-loaded cost to acquire one new customer — sales spend, marketing spend, and any other functions that contribute to acquisition.

LTV (Lifetime Value). The total gross-margin contribution a customer is expected to produce over their lifetime as a customer.

LTV/CAC ratio. Lifetime value divided by acquisition cost. Healthy SaaS programs target above 3×.

CAC payback period. The number of months required for a customer's gross-margin contribution to repay the cost of acquiring them. Mature SaaS targets under 18 months.

Cash runway. The number of months the company can fund operations at current burn rate before running out of cash. The most fundamental finance metric for early-stage companies.

Burn rate. Net cash leaving the company per month, typically operating expenses minus revenue collected. A company spending $500K/month and collecting $200K/month has a burn rate of $300K/month.

Burn Multiple. Cash burned divided by net new ARR added in the same period. Lower is better; under 2× is healthy for AI-native; under 1.5× is healthy for mature SaaS. Popularized by David Sacks.

Compute COGS. The cost of running AI workloads — foundation-model API calls, GPU inference, infrastructure compute. Treated as a primary line within COGS for AI-native companies, often 20–60% of revenue.

ASC 606. The US accounting standard governing revenue recognition. Determines when revenue counts on the books, particularly important for AI-native companies with usage-based and outcome-based contracts. International equivalent: IFRS 15.

These fifteen terms appear hundreds of times across the document. The remaining vocabulary (variable consideration, deferred revenue, contribution margin, capital efficiency ratio, Rule of 40, audit defensibility) builds on these. If you understand the fifteen above, you can read the rest of the document.

Minimum financial metrics for AI-native companies

If you track only ten metrics, track these. The table below is the simplest possible scorecard for an AI-native company at any stage — the metrics that determine whether the business is viable, the formulas to calculate them, and the targets you should be aiming for. Section E and Section F give the comprehensive metric set; this table is the floor, not the ceiling.

| # | Metric | Formula | Why it matters | Target |

|---|---|---|---|---|

| 1 | Revenue (recognized) | Sum of revenue earned in the period under GAAP rules | The top line; what the income statement reports | Growing month-over-month |

| 2 | ARR | Annualized recurring revenue from subscription contracts | The standard SaaS scale metric | Stage-dependent |

| 3 | Gross margin | (Revenue − COGS) / Revenue | Whether the unit economics work | 50–70% AI-native, 75–85% mature SaaS |

| 4 | Compute as % of revenue | Compute COGS / Revenue | The AI-specific cost ratio | 20–35% at scaling stage |

| 5 | Cash on hand | Total liquid cash at period end | Survival metric | At least 18 months of runway |

| 6 | Monthly burn | Operating expenses − revenue collected | The drain on cash | Stage-dependent |

| 7 | Cash runway | Cash on hand / Monthly burn | How long survival is funded | 18+ months |

| 8 | NRR | (Starting ARR + Expansion − Churn − Contraction) / Starting ARR | Existing customer health | >110% healthy, >130% strong |

| 9 | CAC payback period | CAC / (Monthly recurring revenue per customer × Gross margin) | How long to break even on acquisition | <18 months |

| 10 | Burn Multiple | Net cash burned / Net new ARR added | Capital efficiency in growth phase | <2× AI-native, <1.5× mature SaaS |

Track these weekly (cash, runway), monthly (revenue, ARR, gross margin, compute %, NRR, burn), and quarterly (CAC payback, Burn Multiple). Update from your bookkeeping tool; do not maintain in a spreadsheet that diverges from the books.

If you track these ten metrics consistently, you have the operational discipline to know whether the business is healthy and the credibility to talk to investors. Everything else in this document is supplementary depth.

Executive summary

The AI-Native Finance Catalog is a recipe book for handling the financial side of an AI-native company in 2026 and beyond. There are many ways to price, account for, forecast, and report on an AI business, and the right way depends on your buyer, your stage, your contract structure, and your investor expectations. This document names twelve approaches, organizes them into four families, and tells you which fit your situation.

The four families — what each kind of approach is for.

Pricing architectures (Approaches 1–5) define how the company charges customers. The choice cascades through everything else — revenue recognition, forecast complexity, sales-team compensation, customer-success focus. Most companies start with one architecture and evolve toward hybrid as they scale.

Revenue & cost mechanics (Approaches 6–8) define how the company accounts for what it earns and spends. The technical work of finance lives here: turning customer activity into auditable books, classifying compute costs correctly, and maintaining the cohort discipline that surfaces unit-economics truth.

Planning & capital allocation (Approaches 9–11) define how the company looks forward. Forecasting an AI business requires modeling not just revenue ramp but also falling compute costs, expanding usage, and the customer behavior changes that come with shifting AI capability. Capital allocation determines how dollars are split between the company's three main cost centers: compute, people, and customer acquisition.

External reporting (Approach 12) defines how the company talks to its investors, board, and auditors. AI-native companies report on metrics traditional SaaS does not: model cost as percentage of revenue, gross margin including compute, contribution margin per outcome, and forecast accuracy adjusted for model-price decay.

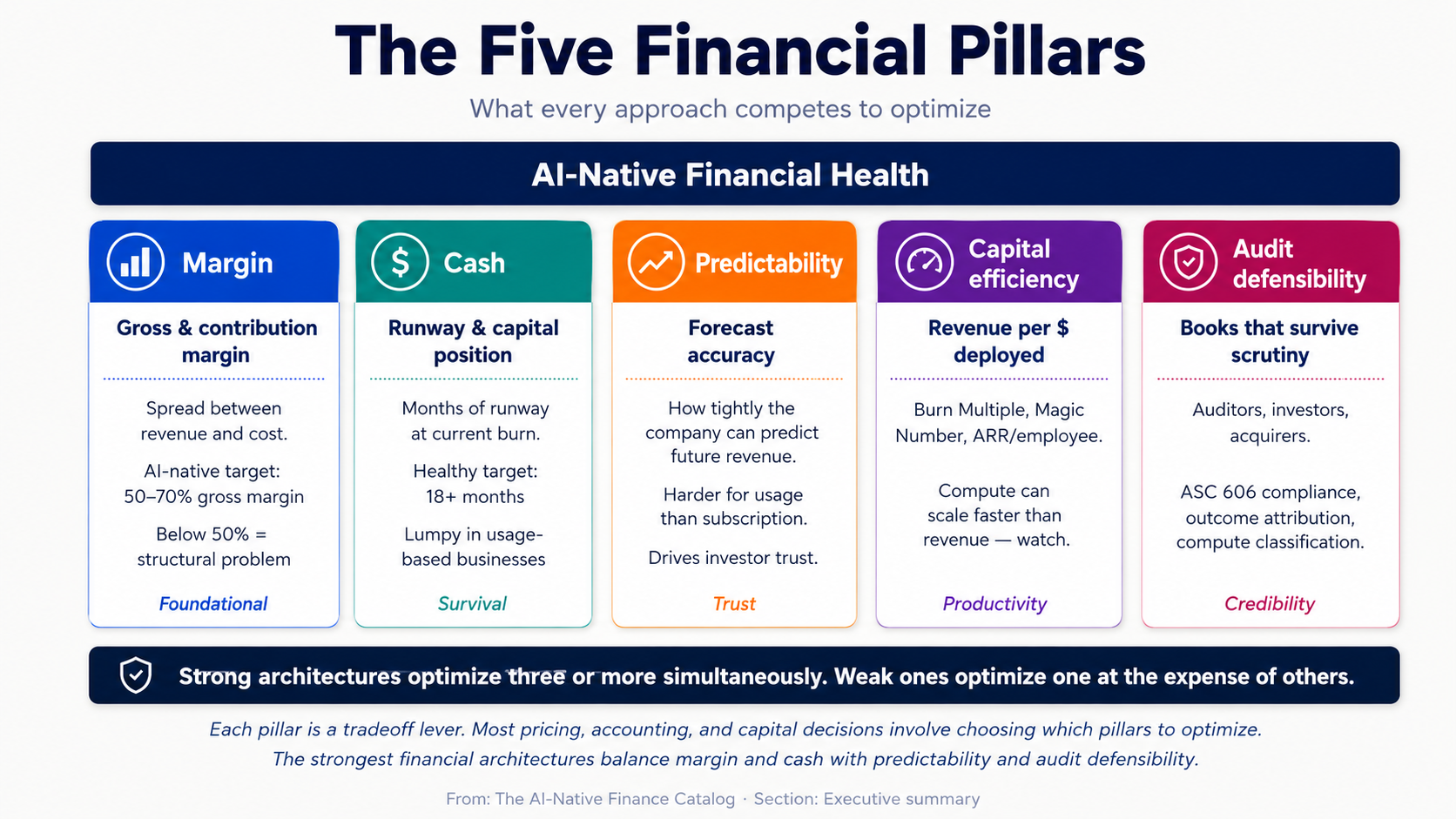

The five financial pillars — what every approach competes to optimize.

Margin is the spread between revenue and cost. Gross margin (revenue minus compute and direct costs) is the metric that determines whether the business model works at all. AI-native companies that ship with gross margin below 50% rarely recover; companies above 70% have meaningful pricing power.

Cash is the runway-determining metric — how much capital the company has and how long it lasts at current burn rate. AI-native companies often have lumpy cash flows because of usage-based revenue (which can spike or contract with customer activity) and prepaid compute commitments to foundation-model providers.

Predictability is the accuracy of the forecast. Traditional SaaS achieves high forecast accuracy because subscription revenue is predictable; AI-native businesses face structural forecast uncertainty because of usage variance, model-price decay, and outcome-attribution complexity.

Capital efficiency is revenue produced per dollar of capital deployed. The "Burn Multiple" metric (capital burned divided by net new ARR) and the "Magic Number" (sales efficiency) are common shorthand. AI-native companies face a particular efficiency challenge because compute spend can scale faster than revenue.

Audit defensibility is the books' ability to survive scrutiny — from auditors during a year-end audit, from investors during due diligence, and from acquirers during M&A. AI-native companies face new audit-defensibility challenges around outcome attribution, usage-based revenue recognition, and the capitalization-versus-expense treatment of model fine-tuning costs.

The strongest financial architectures optimize three or more of these pillars simultaneously. The weakest optimize one (typically margin or cash) at the expense of the others — which produces a short-term win and a long-term collapse.

A note on scope. This catalog focuses primarily on B2B AI-native companies at any stage from seed through Series C. Consumer AI companies (apps with millions of free users monetized through tiered subscriptions or ads) follow different rules and are not the primary subject here, though several approaches — Per-Seat Pricing, Per-Call Pricing, Hybrid Pricing — apply to both contexts. Late-stage public-company finance (IPO readiness, public-company reporting, segment disclosures) is also out of scope.

The maturity spectrum. Each approach is tagged Proven, Emerging, or Speculative based on how widely AI-native companies are running it successfully today.

- Proven approaches have many at-scale companies operating on them, with established playbooks and benchmarks.

- Emerging approaches are being run by AI-native companies in 2026 but are evolving rapidly with the underlying tooling and accounting standards.

- Speculative approaches depend on practices or buyer behaviors that do not yet exist at scale.

What this page is for

This document serves three purposes.

First, as a chooser. A founder or finance leader designing an AI company's financial architecture can use the Strategic Fit Matrix, the Finance Diagnostic, and the Approach Summary Table to find the architectures that fit their stage, buyer, and contract structure.

Second, as a reference. A finance team running an existing architecture can use the deep sections to audit their own operation against the documented mechanics — comparing their gross margin, cohort behavior, and forecast accuracy against the patterns described.

Third, as a sequencing guide. Most successful AI-native companies evolve their financial architecture as they scale. The Common Hybrid Models section maps the most common evolution paths.

How to choose a financial architecture

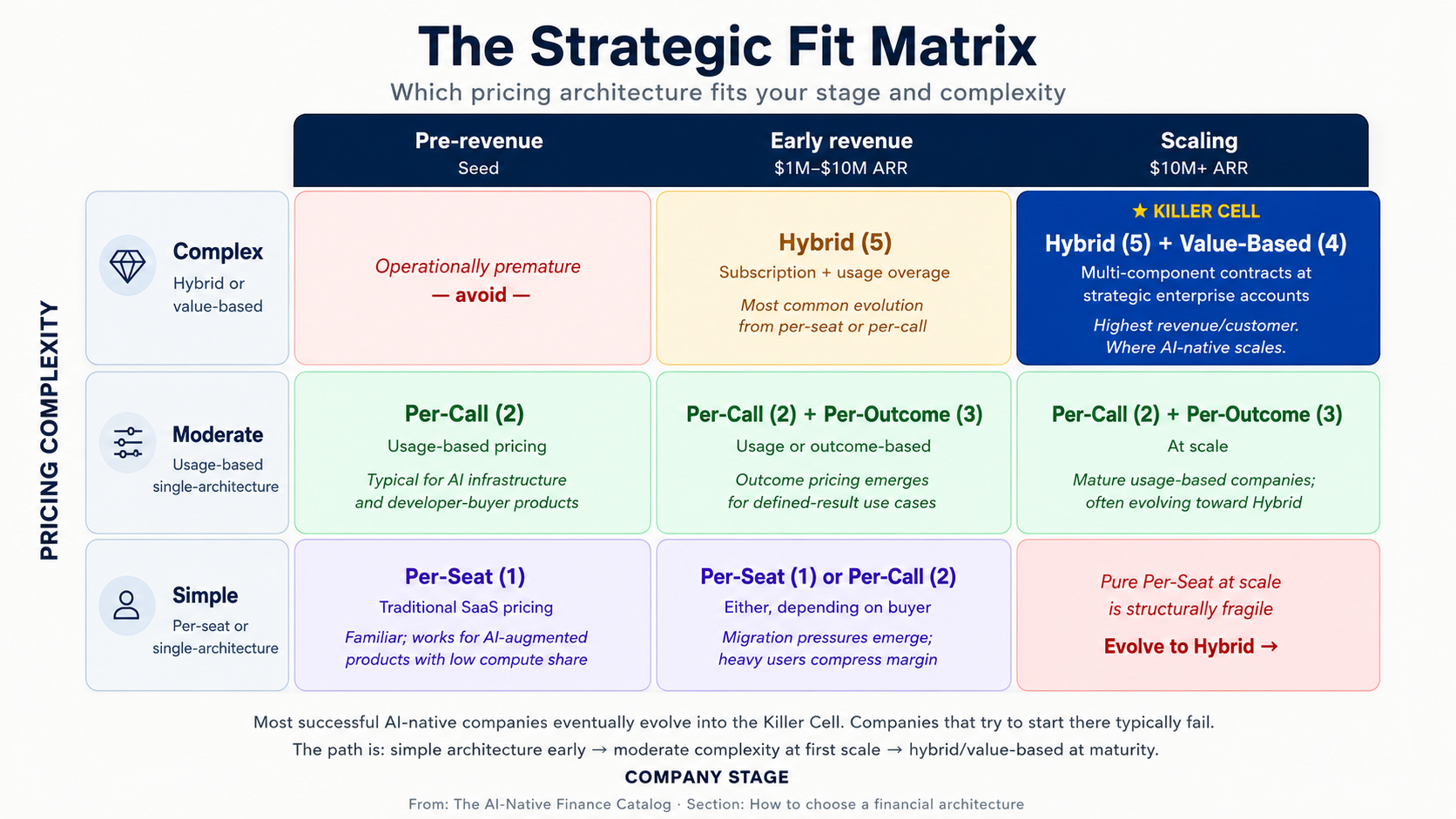

The cleanest predictor of which financial architecture fits is the intersection of pricing complexity and company stage. The matrix below maps the twelve approaches onto those two axes.

| Stage → / Pricing complexity ↓ | Pre-revenue (Seed) | Early revenue ($1M–$10M ARR) | Scaling ($10M+ ARR) |

|---|---|---|---|

| Simple (per-seat or single-architecture) | Per-Seat (1) | Per-Seat (1), Per-Call (2) | — |

| Moderate (usage-based, single-architecture) | Per-Call (2) | Per-Call (2), Per-Outcome (3) | Per-Call (2), Per-Outcome (3) |

| Complex (hybrid or value-based) | — | Hybrid (5) | Hybrid (5), Value-Based (4) |

The cell that matters most is complex × scaling — Hybrid Pricing and Value-Based Pricing. These are the architectures that produce the highest revenue per customer and the most defensible pricing power, but they require sophisticated finance, sales, and customer-success operations to execute. Most successful AI-native companies eventually evolve into this cell; companies that try to start there typically fail because the operational maturity is not yet present.

Finance diagnostic: eight questions

Before picking a financial architecture, score yourself honestly on the eight dimensions below. The approaches each row points to are the ones most aligned with that condition.

-

Buyer type. Developer / API consumer → Per-Call (2). Operator buying SaaS → Per-Seat (1) or Hybrid (5). Enterprise buyer with budget for outcomes → Per-Outcome (3) or Value-Based (4).

-

Average deal size. <$10K/year → Per-Seat or Per-Call. $10K–$100K → Per-Call or Hybrid. $100K+ → Per-Outcome, Value-Based, or Hybrid.

-

Cost structure variability. Compute cost is small and stable → Per-Seat works fine. Compute cost varies significantly with usage → Per-Call required. Compute cost is significant but the value-per-outcome is much higher → Per-Outcome possible.

-

Sales motion. Self-serve PLG → Per-Call or Per-Seat. Vendor-led mid-market → Per-Seat, Per-Call, or Hybrid. Enterprise field → Per-Outcome, Value-Based, or Hybrid (see Sales Catalog Motions 7–10).

-

Customer technical sophistication. High (developers, technical operators) → Per-Call works; users tolerate variable bills. Low (executive buyers, ops) → Per-Seat or Hybrid; users want predictable bills.

-

Contract length. Monthly self-serve → Per-Call or Per-Seat. Annual SaaS → Any architecture. Multi-year enterprise → Hybrid or Value-Based.

-

Forecast accuracy required. Tight (board-driven targets, public-company-style discipline) → Per-Seat or Hybrid (more predictable). Loose (early-stage, growth at all costs) → Per-Call or Per-Outcome.

-

Internal finance maturity. Founder doing books in spreadsheet → Per-Seat or Per-Call (simplest accounting). Controller in place → Per-Outcome possible. Full finance team → Value-Based and complex Hybrid feasible.

The diagnostic does not tell you which architecture is correct. It tells you which architectures are available given your starting position. The matrix above and the deep sections below tell you which available architecture fits the buyer you are pricing for.

Approach summary table

A one-page reference for all twelve approaches.

| # | Approach | Maturity | Best for | Main strength | Main risk |

|---|---|---|---|---|---|

| 1 | Per-Seat Pricing | Proven | Predictable-usage SaaS | Forecast simplicity | Disconnects price from cost |

| 2 | Per-Call / Usage Pricing | Proven | Developer-buyer infrastructure | Aligns price with cost | Customer bill anxiety |

| 3 | Per-Outcome Pricing | Emerging | Defined-result use cases | Maximum value capture | Outcome-attribution complexity |

| 4 | Value-Based Pricing | Emerging | Strategic enterprise deals | Premium pricing | Contracting maturity required |

| 5 | Hybrid Pricing | Proven | Mid-market and enterprise scale | Balance of predictability and capture | Complexity to communicate |

| 6 | Revenue Recognition | Proven | Any company with revenue | Audit defensibility | ASC 606 complexity for usage/outcome |

| 7 | Compute COGS Accounting | Proven | Any AI-native company | Margin clarity | Misclassification risk |

| 8 | Cohort Analysis with Model-Cost Decay | Emerging | Companies $5M+ ARR | Truth about unit economics | Requires data discipline |

| 9 | Pilot Economics & Contract Mechanics | Proven | Enterprise sales motions | Pilot-to-production conversion | Premature production accounting |

| 10 | Forecasting Under Falling Compute Costs | Emerging | Companies on usage models | Realistic margin trajectory | Over-optimism on compute decay |

| 11 | Capital Allocation | Proven | Any post-Series A | Strategic spend discipline | Compute over-investment |

| 12 | Investor & Board Reporting | Proven | Any post-Series A | Stakeholder alignment | Vanity metrics over substance |

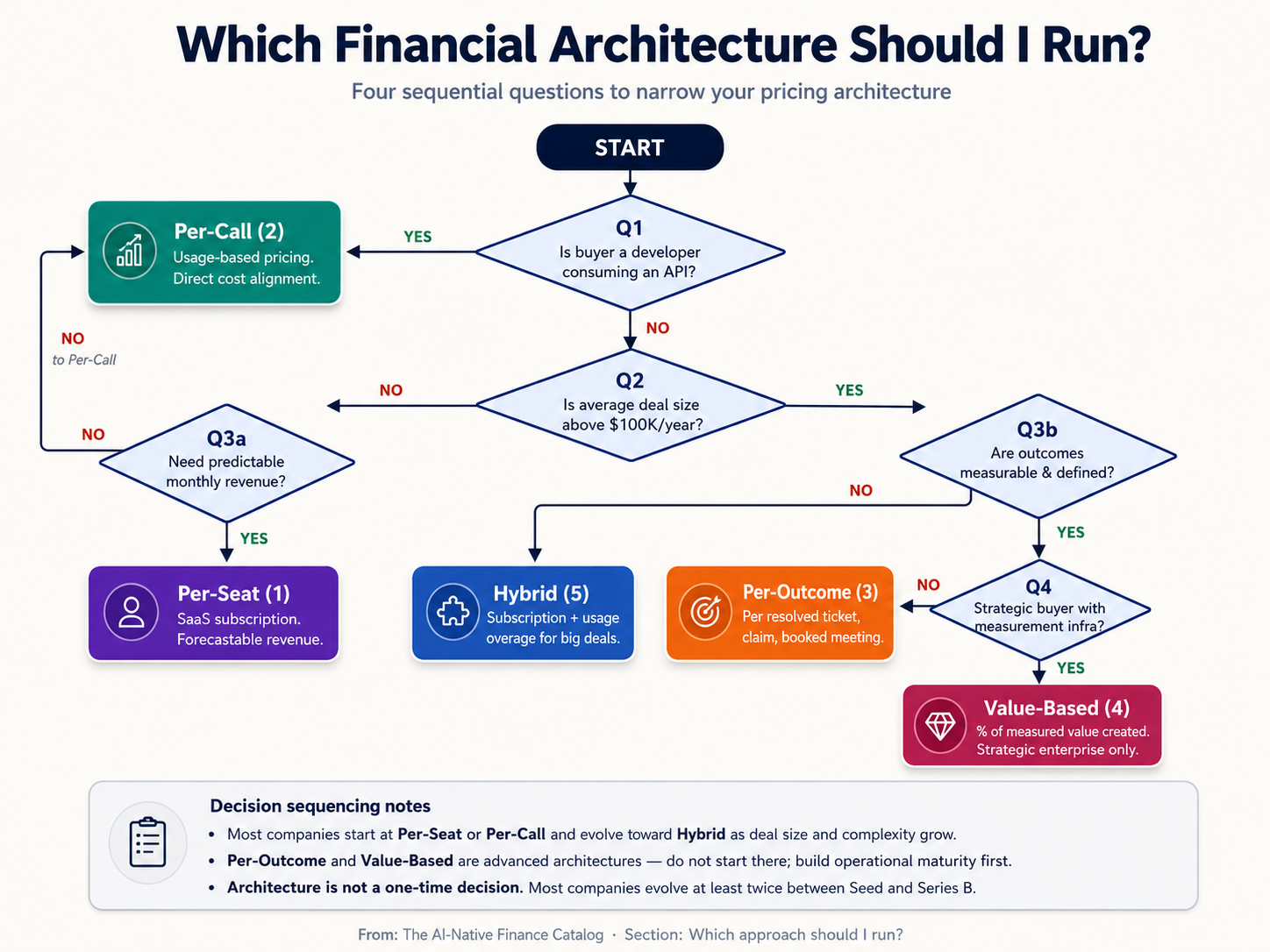

Which approach should I run?

A decision flowchart sequences the most important questions for narrowing your architecture choice.

The four key questions are: (1) Is your buyer a developer using your API? (yes → Per-Call). (2) Is your average deal size above $100K? (yes → consider Per-Outcome, Value-Based, or Hybrid). (3) Do you need predictable revenue for forecasting? (yes → Per-Seat or Hybrid; no → Per-Call or Per-Outcome). (4) What is your finance team's operational maturity? (low → simpler architectures; high → complex architectures feasible).

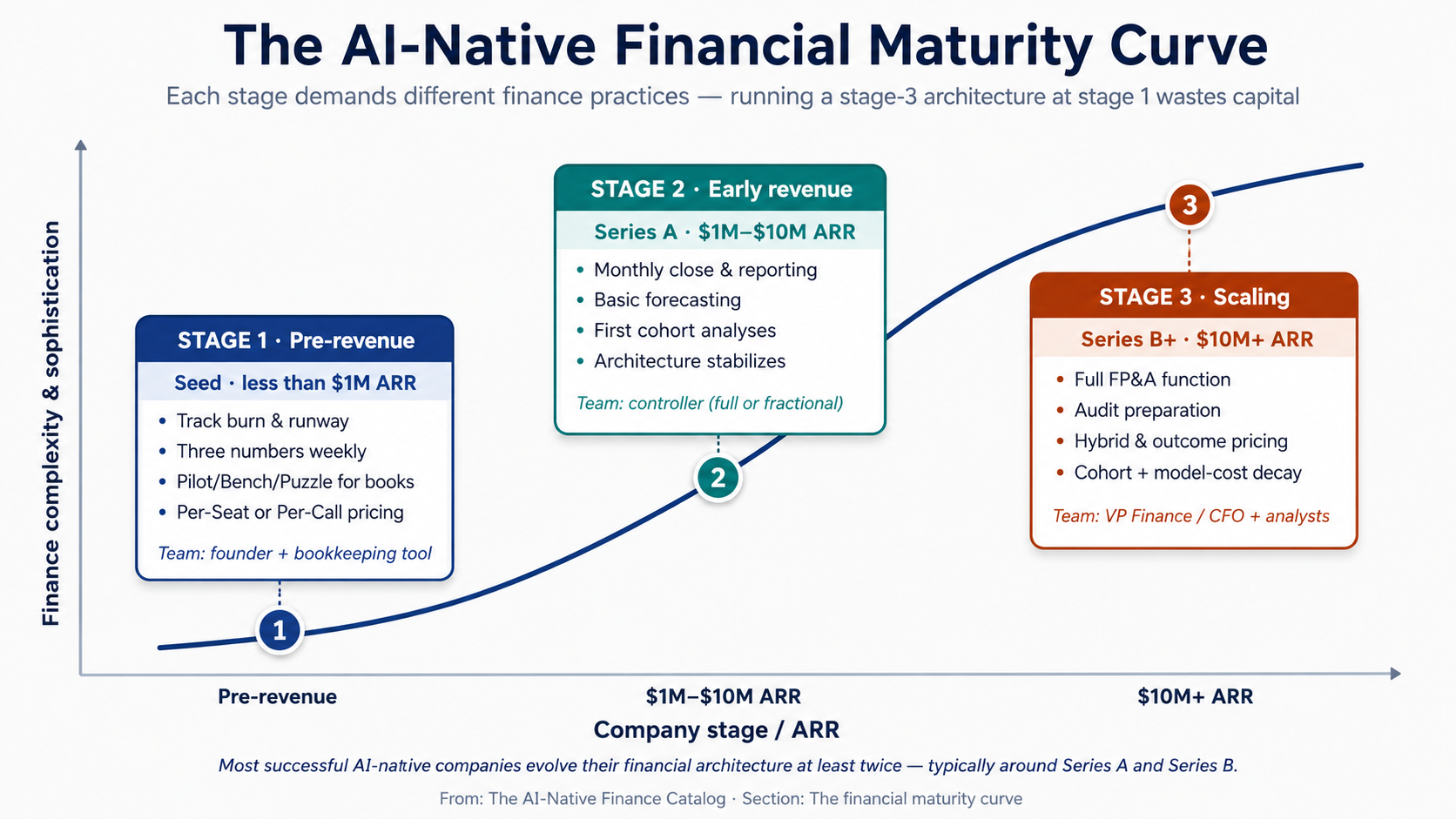

The financial maturity curve

Every AI-native company moves through three stages of financial maturity. The architecture and operational practices that fit each stage are different — and trying to run a stage-3 architecture at stage 1 is one of the most common ways founders waste money.

Three stages define the Financial Maturity Curve:

Stage 1 — Pre-revenue (Seed-stage). The company has product but limited revenue. Finance work is minimal: track burn, manage runway, file basic taxes, prepare for the first audit-equivalent (typically a Quality of Earnings review during Series A diligence). The right architecture is whichever pricing model is simplest to implement and easiest to explain to early customers — usually Per-Seat (1) or Per-Call (2). Finance team: founder, supplemented by Pilot/Bench/Puzzle for bookkeeping.

Stage 2 — Early revenue ($1M–$10M ARR). The company has product-market fit signals and meaningful customer count. Finance work expands to include monthly close, board reporting, basic forecasting, and the first internal cohort analyses. Pricing architectures stabilize, but the team begins seeing pressure to evolve — enterprise customers want different terms, customer-success metrics demand outcome thinking, investors expect cleaner unit economics. The right architecture is whichever pricing model produces clear cohort retention with manageable accounting complexity. Finance team: controller (full-time or fractional), bookkeeper, founder still involved in major decisions.

Stage 3 — Scaling ($10M+ ARR). The company is preparing for or has completed a Series B. Finance work includes full FP&A, audit preparation, complex contract accounting, and increasingly sophisticated investor and board reporting. Hybrid Pricing (5) and Value-Based Pricing (4) become operationally feasible. Cohort analysis with model-cost decay (Approach 8) becomes a board-level metric. Capital allocation (Approach 11) becomes the central strategic question. Finance team: VP Finance or CFO, controller, FP&A analyst(s), and increasingly specialized roles (revenue operations, treasury).

The implication for founders is that financial architecture is not a one-time decision. The right architecture for your stage today will probably need to evolve at least twice before the company reaches scale — typically once around the Series A (introducing more sophisticated cohort discipline) and once around the Series B (introducing hybrid pricing or outcome-based components). Companies that lock in their stage-1 architecture and try to scale without evolution typically hit a ceiling in the high-single-digit-millions of ARR.

Maturity legend

- Proven. The approach has many AI-native (and pre-AI) companies operating it at scale today, with established playbooks and benchmarks.

- Emerging. The approach is being run by AI-native companies in 2026 but is evolving rapidly — the canonical playbook has not yet stabilized.

- Speculative. The approach depends on practices or buyer behaviors that do not yet exist at scale.

A. Pricing architectures

The way the company charges customers. Pricing architecture is the single most consequential financial decision an AI-native company makes — it cascades through revenue recognition, sales-team compensation, customer-success focus, forecast complexity, and gross-margin structure. Most companies start with one architecture and evolve toward hybrid as they scale.

Approach 1 — Per-Seat Pricing

Maturity: Proven. Beginner difficulty: Easy.

In Plain English. Per-Seat Pricing is the SaaS model everyone learned in the 2010s: customer pays a fixed fee per user, per month. Ten users at $50/month each is $500/month. The customer's bill is predictable, the company's revenue is predictable, and accounting is straightforward. The only question is how many seats the customer needs.

For AI products, this model is increasingly awkward. AI compute costs scale with usage, not seat count. A customer with ten seats might generate ten thousand AI calls or ten million; the cost of serving them differs by orders of magnitude, but the revenue is identical. Companies that ship Per-Seat Pricing for genuinely AI-heavy products often find themselves with negative gross margin on their heaviest users.

Best as a starting architecture for AI-augmented SaaS where AI is one feature among many. Increasingly inappropriate for products where AI is the core value driver.

Core idea. Charge a predictable fee per user, accepting that revenue will not track usage and that heavy users may produce negative unit economics.

When to use it. When the product is AI-augmented but not AI-defined — AI is a feature inside a broader workflow product. When the buyer is an executive who needs predictable line-item budgeting. When the underlying compute cost per seat is small enough (under 10–15% of subscription revenue) that usage variability does not threaten gross margin.

Mechanism. Per-Seat Pricing works because it gives both the buyer and seller predictability. The buyer can budget; the seller can forecast. Annual contracts produce contracted ARR (annual recurring revenue), which is the metric Wall Street has trained AI companies to optimize for over the last decade.

The structural problem for AI products is the disconnection between price and cost. Foundation-model API pricing is unit-based: per token, per second of audio, per image generation. When the product wraps that API behind a per-seat subscription, every call the user makes is a cost the seller absorbs. Heavy users — typically the customer's most engaged employees, ironically — produce the most usage and therefore the most cost. If the average compute cost across all users is 20% of seat revenue, the heaviest decile may produce compute costs of 80% or more of their seat revenue, leaving thin margin or even negative contribution.

The fix in 2026 is rarely to abandon Per-Seat Pricing entirely; it is to add a usage-based component to the contract — a per-call or per-token overage above an included quota. This converts pure Per-Seat into Hybrid Pricing (Approach 5), which is the most common architecture in AI-native SaaS at scale.

Fictional walk-through. Imagine MeetingMind, an AI meeting-summary tool sold at $30/seat/month. A customer with 100 seats pays $36,000/year. Of those 100 users, 20 use the product heavily (50+ summaries per month each), 60 use it lightly (5–10 summaries), and 20 are inactive. The 20 heavy users generate compute costs of $25/month each ($6,000/year total); the rest generate trivial costs. Total compute is roughly $7,000/year against $36,000 revenue — gross margin around 80%, comfortable. Now imagine the heavy-user share rises to 50% as the product gets stickier. Compute costs rise to $15,000+; gross margin drops to 60%. The seller has to either introduce overage pricing or watch margin erode.

Example. Confirmed pattern: Most AI-augmented productivity tools (Notion AI, Linear with AI, Asana Intelligence) ship Per-Seat Pricing for their core SaaS, often with usage-tier limits to cap compute exposure. Pure Per-Seat without limits is rarely seen in heavy-AI products by 2026.

Primary risk. Negative unit economics on heavy users. The most engaged users are also the most expensive to serve, but they pay the same price as light users. Mitigation: monitor compute-per-seat by user cohort, introduce usage caps or overage pricing once the heavy-user share exceeds a threshold, and consider Hybrid Pricing (Approach 5) as the natural evolution.

First move. Calculate average compute cost per seat across your current customer base. If it exceeds 15% of seat revenue, begin planning the transition to Hybrid Pricing.

Approach 2 — Per-Call / Usage Pricing

Maturity: Proven. Beginner difficulty: Easy.

In Plain English. Per-Call Pricing is the AI infrastructure standard. Customers pay per API call, per token consumed, per second of audio processed, per image generated, or per query executed. Revenue scales with usage; costs scale with usage; the alignment is direct. OpenAI, Anthropic, ElevenLabs, Replicate, and most AI infrastructure companies use this model.

The advantage is that gross margin is structurally preserved — every call's revenue is set above its compute cost, so the company never loses money on a unit basis regardless of customer behavior. The disadvantage is that customer bills are unpredictable, which produces a recurring problem in customer success and renewal: every spike in usage produces a spike in bill, and customers who exceed their internal budget become unhappy customers.

Best as the founding architecture for AI infrastructure products and developer-buyer products. Common as one component of Hybrid Pricing in operator-buyer products.

Core idea. Align price directly with usage and cost. Each call costs the company some amount in compute; charge above that amount with a margin built in.

When to use it. When the buyer is a developer or technical user comfortable with usage-based billing. When the product is genuinely usage-variable — different customers consume dramatically different amounts. When the team is willing to invest in usage instrumentation, billing infrastructure, and the customer-success work of helping buyers manage their bills.

Mechanism. Per-Call Pricing works because it solves the gross-margin problem at the architecture level. Every call is priced above its cost, so margin is mathematically protected. Forecasting is harder than Per-Seat (revenue depends on usage, which depends on customer behavior, which is variable), but for many AI infrastructure products the forecasting penalty is acceptable in exchange for margin safety.

The execution requires three operational disciplines that traditional SaaS does not need. Usage instrumentation — every billable event must be measured, attributed to the right customer, and stored in an auditable record. Billing infrastructure — generating accurate, defensible invoices monthly is harder than fixed-fee billing; mistakes are visible to customers immediately. Customer-success around bill management — customers need dashboards to monitor their usage, alerts when usage spikes, and the ability to set caps or budgets to avoid surprise bills. Companies that ship usage-based pricing without these three disciplines see customer churn driven by bill anxiety, not by product dissatisfaction.

The constraint at scale is bill-shock. A customer who used $5K in compute in January and $50K in February sees a 10x bill increase that requires internal approval to pay. The default response — "we'll review next year" — translates to lost revenue. Mature usage-based companies invest heavily in bill-prediction tools, capacity-planning conversations, and proactive outreach when usage trajectories suggest budget concerns.

Fictional walk-through. Imagine TextAI, an LLM API company. Customers pay $0.005 per 1K input tokens and $0.015 per 1K output tokens. A typical customer signs up, builds an integration, runs experiments costing $200/month for the first three months, then deploys to production and ramps to $5,000/month over the next six months. By month nine, they are processing 50M tokens daily and paying $150K/month. The customer's bills are unpredictable; their CFO complains every month; the customer-success team spends 30% of its time helping them forecast. But TextAI's gross margin on the customer is steady at 65% across every month — the architecture protects the business model regardless of how the customer ramps.

Example. Confirmed examples: OpenAI, Anthropic, Cohere, Mistral, ElevenLabs, Replicate, Together AI, Fireworks AI, and the long tail of AI infrastructure companies. Almost every AI-API business in 2026 uses some form of usage pricing.

Primary risk. Bill-shock and customer churn. Customers who exceed budget become unhappy customers regardless of how good the product is. Mitigation: invest in usage dashboards, budget alerts, monthly capacity-planning conversations with major customers, and the option for customers to set hard caps on spend (accepting that hitting the cap produces a different kind of pain — service interruption — that needs to be managed carefully).

Secondary risk. Forecast unpredictability. Usage-based revenue is harder to forecast than subscription revenue, which complicates fundraising, board reporting, and operational planning. Mitigation: build cohort-based forecast models that project usage growth from prior customer behavior; invest in lead indicators (calls per active user, active-user growth rate) that are more predictable than total usage.

First move. If your product is genuinely usage-variable and your buyer is technical, ship Per-Call Pricing from the start. Set a price per unit of consumption that gives you 60%+ gross margin [Emerging pattern: the AI-native floor below which scaling becomes structurally difficult], instrument usage carefully, and build a usage dashboard before you have your first customer.

Approach 3 — Per-Outcome Pricing

Maturity: Emerging. Beginner difficulty: Medium.

In Plain English. Per-Outcome Pricing means the customer pays only when the AI delivers a defined result. A resolved support ticket, a processed insurance claim, a booked sales meeting, a successfully completed agent task. The customer is not paying for access, time, or compute — they are paying for outcomes. If the AI fails to deliver, the customer does not pay.

This pricing model — sometimes called "Service-as-Software" — is the most distinctive innovation in AI commercial structure in the last few years. It is operationally complex, accounting-heavy, and dependent on the company's ability to attribute outcomes accurately. But for use cases where outcomes are measurable, it produces dramatically higher per-customer revenue than Per-Call or Per-Seat alternatives, because the price is anchored to the customer's labor budget rather than their software budget.

Best for use cases with clearly defined, measurable outcomes that the AI can reliably deliver. Almost always combined with Sales Catalog Motion 9 (Pay-Per-Outcome). Operationally complex; requires substantial outcome-attribution infrastructure.

Core idea. Charge per delivered outcome, anchoring price to the customer's labor cost rather than the seller's software cost.

When to use it. When the use case has a clear, measurable, attributable outcome. When the customer's alternative is hiring humans to do the same work (so the comparison anchor is human labor cost). When the company is willing to invest in outcome-attribution infrastructure — typically the largest single non-product engineering investment in the early years of running this architecture.

Mechanism. Per-Outcome Pricing works because it lets the seller capture a fraction of the customer's labor budget rather than a fraction of their software budget. A mid-market company spends ten times more on customer-support headcount than on customer-support software. The AI vendor that captures a fraction of the headcount budget through outcome pricing operates in a different revenue category than the vendor capturing a fraction of the software budget.

The pricing math anchors to human labor cost. If a customer-support representative costs roughly $5 per resolved ticket all-in (salary, benefits, management overhead, workspace), the outcome price ceiling sits around $1–3 per resolved ticket — enough below human cost that the customer captures real savings, enough above the seller's compute cost that gross margin is positive. The seller's compute cost per outcome (typically $0.20–0.80 for a well-optimized agent [Author thesis: based on observed deployments in 2026; sensitive to model choice and prompt efficiency]) sets the floor; the customer's human cost sets the ceiling; price lives somewhere in between.

The technical foundation is outcome attribution. The vendor must produce audit-grade telemetry — for every priced outcome, a verifiable record of what the AI did, what it processed, and how the result was confirmed. Without this, customer disputes have no objective basis and revenue collection becomes a quarterly negotiation. Companies running this architecture well treat outcome-attribution infrastructure as part of the product, not as accounting overhead, and staff it with engineers, not finance analysts.

The accounting complexity is real. Revenue is recognized as outcomes are delivered (not when the contract is signed), which means the contract-to-revenue conversion is not 1:1 — the company books bookings of $1M but recognizes revenue only as outcomes accrue, potentially over many months. Combined with the standard ASC 606 requirements (Approach 6), this produces a deferred-revenue mechanic that traditional SaaS finance has not had to manage.

Fictional walk-through. Imagine TicketBot, an AI customer-support agent. TicketBot does not charge customers per seat or per call. Instead, the customer pays $0.50 for every support ticket TicketBot resolves on its own (without escalating to a human). A customer with 50,000 tickets per month gets a $25,000 monthly bill — but only if TicketBot actually resolves the tickets. If TicketBot resolves only 30% of incoming tickets, the bill is $7,500. The customer's CFO loves the model; the customer's procurement team needs to learn how to structure the contract; TicketBot's own finance team has to invest in outcome-attribution infrastructure to defend each billable event.

Example. Confirmed examples: Sierra's per-resolution pricing for AI customer service. Decagon's outcome-based contracts. EvenUp's per-claim pricing for personal-injury legal work. The pattern is among the most actively-expanding pricing structures in 2026, and almost universally appears in companies that also run Sales Catalog Motion 9.

Primary risk. Outcome-attribution disputes. Without audit-grade telemetry, customer disputes about what counts as a "resolved" outcome turn collection into negotiation. Mitigation: invest in attribution infrastructure as a core engineering function. Build the telemetry before the first contract; do not retrofit it later.

Secondary risk. Revenue recognition complexity. Outcome contracts under ASC 606 require careful structuring and may produce surprising deferred-revenue patterns. Mitigation: work with an AI-experienced revenue accountant from the first contract; do not assume traditional SaaS revenue recognition rules apply.

First move. Define one outcome that is unambiguous, measurable, and attributable. Price the first contract conservatively (closer to your cost floor than your value ceiling) to learn the operational mechanics. Scale price upward only after you have lived with attribution disputes for at least six months.

Approach 4 — Value-Based Pricing

Maturity: Emerging. Beginner difficulty: Advanced.

In Plain English. Value-Based Pricing means the customer pays a percentage of the measured business value the AI creates for them. A hedge fund deploys an AI tool that improves trading efficiency by $40M per year; the AI vendor's contract is structured at 15% of measurable improvement, paying $6M/year. The price is anchored not to the seller's cost or to comparable software, but to the customer's measured outcomes.

This is the highest-revenue-per-customer pricing model in AI, and the rarest. It requires sophisticated contracting, executive sponsorship at the buyer (typically C-suite), and substantial investment in measurement infrastructure to defend the value calculation. By 2026, it appears mostly in strategic enterprise deployments at financial services, large healthcare systems, and consulting firms — buyers with both the analytical sophistication to measure value rigorously and the procurement flexibility to structure non-standard contracts.

Best for strategic enterprise deals where measured value is large enough to support the operational overhead. Always combined with Sales Catalog Motion 10 (Value-Based Engagement).

Core idea. Charge a percentage of measured customer value created, removing the conventional vendor-buyer adversarial dynamic where the vendor wants to charge for access and the buyer wants to pay for results.

When to use it. When the customer is a sophisticated enterprise with both the data infrastructure to measure value and the procurement flexibility to structure non-standard contracts. When the deployment will produce measurable, attributable outcomes large enough to support the operational overhead (typically $5M+ in annual measured value). When the executive sponsor at the buyer has authority to override standard procurement.

Mechanism. Value-Based Pricing works when both parties can agree on what value means and how to measure it. The contract structure is materially more complex than seat-, usage-, or outcome-based pricing. A typical agreement has four components. A baseline measurement period (typically 30–90 days before deployment) establishes what the customer's metrics looked like without the AI. A value-share formula defines what fraction of the measured gain the vendor captures — typically 5–25%, varying by deal complexity and buyer sophistication. A ceiling and floor caps both upside (so the vendor does not earn more than the customer's executives can defend internally) and downside (so the vendor is not paying the customer to deploy the product). And audit rights give the vendor the ability to verify the customer's reporting on the metrics that drive billing — without audit rights, customer procurement will under-report measured value at the first true-up cycle.

The operational constraint is contracting maturity. Most enterprise procurement organizations are not yet equipped to structure value-based deals at scale; legal, finance, and operations all need representatives who understand the model and have authority to commit to non-standard contract terms. This is why these deals typically require an executive sponsor at the C-suite level — only that authority can override the procurement organization's default of "we don't structure deals this way." Without the sponsor, the proposal stalls in mid-organization indefinitely.

The financial accounting complexity is substantial. Revenue recognition under ASC 606 for value-based contracts is non-trivial — the variable consideration is constrained to the amount the company can support with reasonable reliability, which often means revenue is recognized at much less than the contract's nominal upside until a track record is established. Auditors examining these contracts in year one are typically conservative; year-three auditors with multiple periods of comparable data are typically more permissive.

Fictional walk-through. Imagine CashFlow, an AI tool for hedge funds. A $50B fund deploys CashFlow and, over a 12-month measurement period, attributes a $40M annual improvement in trading efficiency to the deployment. CashFlow's contract is structured at 15% of measurable improvement above baseline: the fund pays $6M annually for the duration of the contract. The deal took nine months to negotiate, required the fund's CIO and CFO to personally approve, and only made it through procurement because the executive sponsor pushed it through. CashFlow's accounting team spent the first year recognizing revenue conservatively at $2M while the audit-defensible track record was being built; in year two, after the value calculation has been confirmed by multiple measurement cycles, full $6M revenue recognition becomes defensible.

Example. Emerging analogues: Some Anthropic Applied AI engagements with strategic enterprise customers. Some Palantir deployments structured around mission outcomes. Forward-leaning AI deployments at financial services, healthcare, and large consulting firms. The pattern is too young to have a canonical exemplar, but the contract templates are increasingly available through Big Four consulting practices.

Primary risk. Contracting collapse. The deal stalls in mid-organization for months because procurement has no template for the contract structure. Mitigation: identify and recruit the executive sponsor before drafting the contract. The sponsor's authority is the unblocking mechanism; without it, the deal will not close regardless of merit.

Secondary risk. Audit conservatism. Year-one revenue recognition under ASC 606 may be substantially below the contract's nominal value, producing a surprising P&L that confuses investors. Mitigation: engage an AI-experienced revenue accountant before signing the first value-based contract; structure investor reporting around bookings as well as recognized revenue.

First move. Do not pursue Value-Based Pricing as a first architecture. Build operational maturity through Per-Call (2), Per-Outcome (3), or Hybrid (5) first. Only attempt Value-Based once the company has a controller, an experienced contracts attorney, and an executive sponsor inside a target buyer.

Approach 5 — Hybrid Pricing

Maturity: Proven. Beginner difficulty: Medium.

In Plain English. Hybrid Pricing combines two or more of the architectures above into a single contract. The most common pattern is a base subscription (Per-Seat or platform fee) plus usage overages above an included quota — the customer gets predictable budgeting for normal usage and pays incrementally for heavy usage. Other hybrids combine subscriptions with outcome-based bonuses, or platform fees with per-call infrastructure charges.

By 2026, Hybrid Pricing is the dominant architecture for AI-native companies at scale.⁵ Pure single-architecture pricing is increasingly limited to early-stage companies that have not yet evolved their model. The reason hybrids dominate is that they balance the structural strengths of multiple architectures — the predictability of subscription, the cost-alignment of usage, and (for some hybrids) the value capture of outcome.

Best as the natural evolution from Per-Seat or Per-Call once the company reaches mid-market and enterprise scale. Adds operational complexity; requires careful contract design and customer-success investment in helping buyers understand the structure.

Core idea. Combine architectures to balance predictability, cost-alignment, and value capture in a way no single architecture can achieve alone.

When to use it. When customer revenue has reached a scale where pure per-seat or per-call breaks down (heavy users producing margin compression, light users producing churn risk, or enterprise buyers demanding more sophisticated contracts). When the team has the contracting and operational maturity to design and execute multi-component pricing.

Mechanism. The most common Hybrid Pricing structure in AI-native SaaS is "Per-Seat plus Usage Overage": customers pay a fixed fee per seat per month, with an included quota of AI calls per seat per month and per-call charges for usage above the quota. This structure preserves the budgeting predictability buyers love about Per-Seat while protecting the seller's gross margin against heavy users. Variants include "Platform Fee plus Usage" (a fixed fee for the right to use the API plus per-call charges), "Subscription plus Outcome Bonus" (a base subscription plus per-outcome charges for advanced agents), and "Tiered Subscription" (multiple subscription tiers, each with different included quotas and per-call rates).

The execution requires three disciplines. Contract design — multi-component pricing requires careful legal and pricing-strategy work to avoid customer confusion or unintentional margin leakage. Usage instrumentation — even hybrid contracts need clean usage tracking, both for billing the overage component and for forecasting customer behavior. Customer education — buyers in operator and executive roles often struggle to forecast hybrid bills; the customer-success team has to invest meaningful time in helping customers understand their projected costs.

The financial accounting complexity sits at the intersection of subscription and usage accounting. Revenue from the subscription component is recognized ratably over the contract term; revenue from the usage component is recognized as usage occurs. ASC 606 treats these as separate performance obligations, which means the contract must allocate the transaction price across components based on relative standalone selling prices — a non-trivial exercise that often requires explicit guidance from a revenue accountant.

The constraint at scale is communication complexity. Customers who cannot easily forecast their bills become anxious customers; anxious customers churn. Mature hybrid-pricing companies invest in dashboards, projection tools, and contract structures that maximize predictability — for example, monthly true-up windows rather than continuous metering, or quarterly commitments with overage review at the end of the quarter rather than at the end of every month.

Fictional walk-through. Imagine AgentPlatform, an AI agent infrastructure company. The pricing is hybrid: customers pay $5,000/month for the platform (including 1M agent calls per month) plus $0.005 per call above the quota, with annual contracts and quarterly true-up. A typical customer signs a $60K base annual contract and ramps usage from 200K calls/month at signup to 5M calls/month by month twelve. By the end of year one, the customer's actual revenue contribution is $60K (subscription) plus $180K (overage on 36M extra calls × $0.005) = $240K annual revenue, four times the base contract. The customer's bills are predictable enough to forecast (they get quarterly true-up notices); AgentPlatform's gross margin stays clean because heavy usage is priced above its compute cost.

Example. Confirmed examples: GitHub Copilot's Business and Enterprise tiers (subscription with usage components), Cursor's enterprise plans (subscription plus token overages), most enterprise AI vendors with mature pricing (Glean, Harvey, Sierra at large accounts). Hybrid Pricing is the dominant architecture among $10M+ ARR AI-native companies in 2026.

Primary risk. Contract complexity confusing customers. Buyers who cannot easily forecast their bills churn at higher rates than buyers on simpler pricing. Mitigation: invest in projection dashboards, quarterly true-up windows rather than monthly, and customer-success conversations that walk new customers through their projected costs.

Secondary risk. Revenue recognition complexity. ASC 606 treatment of hybrid contracts is more complex than pure subscription or pure usage; mistakes in standalone-selling-price allocation can produce material restatements. Mitigation: engage a revenue accountant familiar with multi-component AI contracts before designing the pricing structure; do not rely on standard SaaS revenue-recognition templates.

First move. If you have a Per-Seat product hitting margin compression on heavy users, or a Per-Call product producing customer-success burden on bill anxiety, design a hybrid that adds the missing component (usage overage or subscription floor). The simplest first hybrid is "current pricing plus a single overage component"; do not try to design a six-component contract on day one.

B. Revenue & cost mechanics

The technical work of finance — turning customer activity into auditable books, classifying compute costs correctly, and maintaining the cohort discipline that surfaces unit-economics truth. These approaches are less visible than pricing but more consequential to long-term financial health. A company can survive imperfect pricing for years; it cannot survive imperfect revenue recognition or COGS misclassification past the first audit.

⚠ A note on accounting and tax advice. This section discusses revenue recognition (ASC 606), COGS classification, capitalization of training costs, deferred revenue, and audit defensibility. The catalog provides strategic frameworks and identifies the questions you need to answer; it does not provide professional accounting, tax, or audit advice for your specific situation. The interpretations of ASC 606 for AI-native usage-based, outcome-based, and value-based contracts are still evolving among auditors and standard-setters. Engage a CPA with AI-native practice experience before signing your first non-subscription contract, before your first audit cycle, and before any material decision that depends on the rules below.

Approach 6 — Revenue Recognition for AI Contracts

Maturity: Proven. Beginner difficulty: Medium.

In Plain English. Revenue recognition is the accounting question of when revenue counts on the books. A customer signs a $1.2M one-year contract and pays $100K monthly; do you book $100K of revenue every month, or $1.2M on day one, or something else? The answer is governed by a global accounting standard called ASC 606 (in the US) or IFRS 15 (internationally). For traditional SaaS, the answer is straightforward: recognize revenue ratably over the contract period. For AI-native companies, it gets complicated — usage-based contracts, outcome-based contracts, and value-based contracts each have different recognition rules, and the rules are still being interpreted by auditors as the contract structures evolve.

Getting this right matters because it determines what the company tells investors, what the audit looks like, and what the P&L actually shows. Companies that get it wrong face material restatements during their first audit, surprise revenue holes during fundraising, and credibility damage with investors that takes years to repair.

Best treated as a foundational discipline at every stage. Cannot be deferred indefinitely; the moment a company has any revenue, ASC 606 applies.

Core idea. Apply the five-step ASC 606 framework — identify the contract, identify performance obligations, determine the transaction price, allocate the price to obligations, recognize revenue as obligations are satisfied — to AI contracts that frequently have variable consideration, multiple performance obligations, and outcome-dependent payments.

When to use it. Always, from the moment the company has any contracted revenue. The complexity of the application varies (Per-Seat is simple; Value-Based is complex), but the framework applies universally.

Mechanism. Traditional SaaS revenue recognition is simple because the contract is a single performance obligation (access to the software) delivered ratably over the contract term. Revenue equals contract price divided by contract length, recognized monthly. ASC 606 adds nothing controversial.

AI contracts complicate this in three structural ways. First, variable consideration: usage-based and outcome-based contracts have transaction prices that depend on customer behavior, which is not known at contract signing. ASC 606 requires the company to estimate variable consideration but constrains the estimate to the amount the company can support with reasonable reliability — typically much less than the contract's nominal upside until a track record is established. Second, multiple performance obligations: a hybrid contract bundling subscription plus usage plus outcome bonuses has three or more obligations, each requiring separate price allocation and separate recognition timing. Third, outcome dependency: in pure outcome-based contracts, revenue cannot be recognized until the outcome is delivered and confirmed — which can produce a six-to-twelve-month lag between contract signing and revenue recognition.

The practical implication is that an AI-native company's bookings (the contractual value of signed deals) and recognized revenue (the GAAP revenue on the P&L) diverge meaningfully. Bookings might be $5M for a quarter while recognized revenue is only $1.5M because the bulk of the contracts are outcome-based and revenue recognition is constrained to the conservative estimate. Investors and boards must learn to read both numbers; founders unfamiliar with the gap often misjudge the company's financial state.

Fictional walk-through. Imagine OutcomeAI, an AI customer-support company. In Q1, the company signs $4M in new annual outcome-based contracts at an average of $2/resolved-ticket, projecting roughly 2M tickets across its customer base. ASC 606 requires recognizing revenue only as outcomes are delivered. By the end of Q1, only 200K tickets have been resolved (deployment ramps slowly), producing $400K in recognized revenue. The company's bookings are $4M; the recognized revenue is $400K; the deferred revenue (contracts signed but not yet recognized) sits at $3.6M. The P&L shows $400K of revenue; the board needs to see all three numbers — bookings, recognized revenue, deferred revenue — to understand the business state. A founder who sees only the $400K recognized revenue and thinks the business is stagnating is wrong; a founder who sees only the $4M bookings and thinks the business has $4M of GAAP revenue is also wrong.

Example. Confirmed pattern: Every AI-native company with non-subscription contracts faces this complexity. Sierra, Decagon, and other outcome-priced companies report meaningfully different bookings and recognized revenue figures in their investor materials. Companies on pure subscription pricing (early Per-Seat or Per-Call) face simpler recognition but still must demonstrate ASC 606 compliance to auditors during fundraising or M&A.

Primary risk. Aggressive recognition that auditors later restate. The company recognizes revenue under optimistic assumptions about variable consideration; auditors disagree at year-end; revenue is restated downward; investors lose confidence. Mitigation: engage an AI-experienced revenue accountant before signing the first non-subscription contract; document the recognition policy formally; review the policy with auditors during the first audit cycle rather than after.

Secondary risk. Conservative recognition that hides growth. The company recognizes revenue too conservatively; the P&L looks weaker than the underlying business performance; investors and the board misjudge the company's trajectory. Mitigation: report bookings, deferred revenue, and recognized revenue separately and consistently; train investors and board members on how to read all three numbers.

First move. Read the FASB's ASC 606 standard (or have your accountant brief you). Document your company's revenue-recognition policy in a one-page memo. Review it with an external accountant before your first audit cycle.

Approach 7 — Compute COGS Accounting

Maturity: Proven. Beginner difficulty: Medium.

In Plain English. Compute COGS Accounting is how an AI-native company treats the cost of running its AI workloads on the income statement. Foundation-model API calls, GPU rentals, inference infrastructure, fine-tuning compute, and embedding generation are all costs that flow through cost of goods sold (COGS) — the line on the P&L that determines gross margin. Getting these costs classified correctly is the foundation of every margin metric the company will ever report.

Traditional SaaS hosting costs are small (typically 5–15% of revenue) [Industry benchmark], so the COGS line is conceptually unimportant. For AI-native companies, compute is often 30–60% of revenue [Emerging pattern], which makes COGS the most consequential line on the income statement. Mistakes in classification — capitalizing what should be expensed, or expensing what should be capitalized — produce gross-margin numbers that do not reflect economic reality.

Best treated as a foundational discipline at every stage. The classification rules are not optional; they affect every external metric the company reports.

Core idea. Classify compute costs correctly between cost of goods sold (which reduces gross margin) and operating expenses (which do not), and apply consistent treatment so margin trends reflect economic reality.

When to use it. Always, from the moment the company has compute costs. The complexity scales with the cost magnitude, but the discipline applies universally.

Mechanism. Compute costs in an AI-native company fall into three categories that get different accounting treatment.

Direct production compute — the cost of running the AI workloads that fulfill customer requests. Foundation-model API calls when serving customer queries, GPU inference when generating customer outputs, embedding generation for customer data. This category is unambiguously COGS — it is the cost of delivering the product, and it scales with revenue.

Product-development compute — the cost of training and fine-tuning models, evaluation runs, research experiments, and infrastructure work that improves the product but is not directly tied to customer requests. This category is generally R&D expense (operating expense, not COGS), though some companies capitalize fine-tuning costs as intangible assets when the resulting model has a defined useful life. The capitalization choice is consequential — capitalized costs do not reduce current-period earnings, while expensed costs do.

Internal-use compute — the cost of AI tools used by employees (engineering productivity, customer support tooling, sales enablement). This is operating expense, not COGS, regardless of magnitude.

The structural problem in AI-native companies is the gray zone between production and product-development compute. A team running an evaluation pipeline is doing both — producing data that improves future model performance (R&D) and validating the current production model (potentially COGS). A clear allocation policy, documented and applied consistently, is what auditors require.

The other accounting question is prepaid compute commitments. Companies that commit to large compute purchases from cloud providers (AWS Bedrock, Azure OpenAI, GCP) for discount pricing get the accounting treatment of any prepaid expense — booked as an asset on the balance sheet, expensed to COGS as the compute is consumed. Companies that buy reserved capacity for one or three years get even more complex treatment that may involve embedded leases under ASC 842.

Fictional walk-through. Imagine AgentCo, an AI agent platform with $5M ARR. The company spends $2M annually on compute: $1.5M on production inference (serving customer requests), $300K on training and evaluation, and $200K on internal employee tooling. Under correct classification, $1.5M flows through COGS (gross margin: 70% on the $5M revenue), $300K is R&D expense, and $200K is general operating expense. A founder who incorrectly puts all $2M into COGS reports gross margin of 60% — a significantly worse number that misrepresents the business. A founder who incorrectly puts only the production inference into COGS but excludes a portion of the inference compute that genuinely served customer requests (perhaps the team batched evaluation runs onto the same GPU pool) overstates gross margin. Both errors compound at scale; neither will survive an auditor's first review.

Example. Confirmed pattern: Every AI-native company has to develop compute-COGS classification policies. The Bessemer Cloud Index and a16z's writing on AI margins both reference the importance of consistent compute classification when comparing AI-native company margins.¹ Public AI companies (when they emerge) will have to disclose their classification policies in detail.

Primary risk. Inconsistent classification that masks margin trends. The company classifies compute one way in Q1 and another way in Q3; the resulting margin numbers are not comparable; investors lose confidence. Mitigation: document the classification policy formally; apply it consistently; review it with auditors during the first audit cycle.

Secondary risk. Capitalizing development compute aggressively to inflate near-term earnings. Some companies capitalize model training and fine-tuning costs as intangible assets, which improves near-term profitability at the cost of future earnings (the capitalized costs are amortized over the asset's useful life). Aggressive capitalization is a frequent audit-comment area. Mitigation: be conservative on capitalization; expense most development compute unless there is a clear, documented case for asset treatment.

First move. List every compute cost the company incurs. Classify each into production / product-development / internal-use. Document the classification rules in a one-page policy memo. Apply consistently from this point forward.

Approach 8 — Cohort Analysis with Model-Cost Decay

Maturity: Emerging. Beginner difficulty: Advanced.

In Plain English. Cohort Analysis tracks groups of customers acquired in the same period over time — how their revenue, retention, and gross margin evolve as they age. Traditional SaaS cohort analysis assumes unit costs are stable: a customer acquired in 2023 costs roughly the same to serve in 2026 as they did in 2023, so the cohort's gross margin is stable.

For AI-native companies, this assumption is wrong in a structurally important way. Foundation-model prices have fallen 30–60% per year for several years and continue falling [Emerging pattern: observed across major foundation-model providers 2023–2026; rate is driven by competition, hardware improvement, and architectural innovation, none of which are guaranteed to continue at the same pace]. A customer cohort acquired in 2023 at a 50% gross margin may be operating at a 70% gross margin in 2026 — not because the cohort has done anything different, but because the compute they consume costs less. AI-native cohort analysis requires explicitly modeling this model-cost decay, separating "cohort improvement from price changes" from "cohort improvement from customer behavior."

This is one of the most analytically sophisticated approaches in the catalog. It requires data infrastructure, finance discipline, and patience that early-stage companies typically do not have. But the companies that get it right see a fundamentally clearer picture of their unit economics than companies that ignore it.

Best as a discipline that develops gradually as the company matures, becoming essential by Series B. Most powerful in usage-based and outcome-based pricing models where compute is a meaningful share of cost.

Core idea. Track customer cohorts over time, separating the contribution of cohort behavior (retention, expansion) from the contribution of falling model costs (compute price decay) to understand the true underlying unit economics.

When to use it. When the company has at least 12–24 months of customer data with consistent measurement. When compute is a meaningful share of cost (typically 20%+ of revenue). When the finance team has the data infrastructure to track per-cohort gross margin over time.

Mechanism. Cohort analysis with model-cost decay separates two effects that traditional cohort analysis conflates.

Cohort behavior effect — does the cohort retain, expand, churn? Are heavy users getting heavier? Are light users dropping off? These are the questions traditional cohort analysis asks, and they remain critical.

Model-cost decay effect — how has the cost of serving the cohort changed since acquisition? If foundation-model prices have fallen 40% since the cohort was acquired, the gross margin on that cohort has improved by a corresponding amount even if customer behavior has not changed at all.

The methodology requires holding customer behavior constant (or measuring its change separately) while attributing margin changes to compute-price decay. Most companies do this by maintaining a "synthetic cost" baseline — the cost the cohort would have incurred at the original acquisition-period prices — and comparing actual current cost to the synthetic baseline. The difference is the model-cost decay benefit, which can be substantial.

The strategic implication is that AI-native companies have a built-in margin tailwind that traditional SaaS does not. Cohorts acquired today will be more profitable in 2028 than they are today, even with no change in customer behavior, because compute will be cheaper. Companies that model this effect explicitly can make better decisions about CAC payback (acceptable longer than traditional SaaS norms because the cohort gets more profitable over time), pricing reductions (the company can lower prices over time to drive growth without sacrificing margin), and capital allocation (compute-cost-decay is a real form of margin expansion that competes with revenue growth as a margin driver).

Fictional walk-through. Imagine Sigma, a $10M ARR AI company with usage-based pricing. The 2024 cohort was acquired at an average gross margin of 55%. By the start of 2026, the same cohort is operating at 72% gross margin. The naive interpretation: "the cohort has expanded usage and become more profitable." The cohort-with-model-cost-decay analysis reveals that customer behavior has changed marginally (7% margin contribution from increased usage and small price increases), but the dominant effect is model-cost decay (10% margin contribution from foundation-model prices falling). Sigma can now make informed decisions: hold prices steady and let margin expand further, lower prices and use the cost decay to accelerate growth, or invest the margin tailwind in expanding features. Without the analysis, Sigma might mistakenly attribute all of the margin improvement to its own pricing power and make decisions that do not survive the next round of model-price competition.