Problem Solving with general agents: A 90-Minute crash course

7 principles, 4 tools, 80% of Real Use

Aik amali crash course in the operating discipline that turns any general agent - Claude Code, OpenCode, Claude Cowork, or OpenWork - from a chalak khilone into a aistool jis se aap real kaam ship kar sakein. Same seven principles, four interfaces, one engagement.

A note on the "80% of Real Use" framing. This is a coverage claim about content, not a metric about users or sessions. Most of what aap actually do with a general agent in Mode 1 problem-solving - across coding, contract review, financial modeling, hiring loops, research briefs, marketing operations - exercises these same seven principles. The long tail of edge cases (deep performance tuning, multi-agent orchestration, custom evals, exotic integrations) lives in the depth chapters this crash course points to. 80% is shorthand for "the high-value subset that pays back the most for the time you spend learning it." Pareto, applied to which discipline to learn first.

Yeh kis ke liye hai. Anyone who's about to use a general agent to solve a real problem - engineers reaching for Claude Code or OpenCode, domain experts (lawyers, accountants, marketers, HR leaders, healthcare administrators, consultants, analysts, founders) reaching for Claude Cowork or OpenWork. The work changes; the discipline doesn't. Aakhir tak of yeh page aap know the seven principles that govern every productive session and what each one looks like in aap ka tool.

One thesis underneath this whole page. Yeh course is Mode 1 of general agent use - the problem-solving engagement. You open tool, you solve a thing, the session ends, the outcome ships. (Mode 2 - manufacturing engagement - is when you use Claude Code or OpenCode to build a durable AI Worker for an AI-Native company; that's a different course, governed by a different rule set.) Mode 1 is where most professionals live for the foreseeable future, and the seven principles below are the rules that make it work.

Pehle se kya chahiye - read these first. yeh page assumes you've already done two things:

AI Prompting in 2026. Is ke thirteen concepts (briefing like a colleague, siyaq o sabaq as the whole game, thinking modes, neutral framing, the iterate loop, AI desktop apps and permissions, structured outputs, models checking models) woh bunyad hain every section here builds on. The principles below are ke woh discipline kaisi lagti hai once agent has hands.

One of the two tool-pair crash courses - whichever matches the tool family aap use. One course, one tool, is enough. The tool-pair courses teach two tools side by side (Claude Code and OpenCode together; Cowork and OpenWork together), but you only need to have actually worked with one tool from the pair to follow yeh page. Knowing Claude Code alone - or OpenCode alone, or Cowork alone, or OpenWork alone - is the right shuruaati nuqta.

- Engineers - read the Claude Code and OpenCode: A 90-Minute Crash Course; pick the one tool aap actually use.

- Domain experts - read the Cowork and OpenWork: A 90-Minute Crash Course; pick the one tool aap actually use.

You should be comfortable with aap ka chosen tool's surface - folders, plan mode, approvals, the rules file, the execution view - before reading further. The seven principles on yeh page sit on top of that surface; they don't replace knowing how the surface works.

(If you ever cross the divide later - a developer who wants Cowork for client deliverables, a finance lead who eventually needs Claude Code for Mode 2 manufacturing, or even just curious about the other tool in aap ka own family - aap kar sakte hain pick up additional tools any time after yeh page. The seven principles transfer immediately; only the surface changes.)

If you haven't taken aap ka tool's crash course yet, do that first. yeh page dikhata hai the operational discipline that turns aap ka tool from a novelty into something aap kar sakte hain ship work on. The discipline doesn't land if you're still figuring out where the buttons are.

Reading order, in one line: AI Prompting → one tool-pair crash course (any one tool from it) → yeh page.

Pick aap ka tool, the page follows

You already know one of the two tool families from the prerequisite crash course - either the engineering family (Claude Code or OpenCode, terminal-native) or the knowledge-work family (Cowork or OpenWork, desktop apps). Two families, four tools total. Sections on yeh page that diverge between the four tools have a switcher so aap kar sakte hain read in aap ka tool's voice:

Engineering family

- Claude Code - Anthropic's terminal-native engineering tool, polished out of the box.

- OpenCode - open-source, model-agnostic terminal-native engineering tool.

Knowledge-work family

- Claude Cowork - Anthropic's desktop-based knowledge-work agent for domain experts.

- OpenWork - open-source desktop knowledge-work agent from Different AI (repo). Is course mein, we treat it as the open-source counterpart to Claude Cowork - a folder/document/workflow-oriented interface for domain experts, analogous to how OpenCode relates to Claude Code for engineers.

Read in aap ka tool's column whenever there's a four-column table; the rest of the page applies to all four tools identically and isn't split. Where prompts, examples, and exercises don't have tabs, that's because the same thing works in all four tools - just run it in aap kas.

The convention, in one line: four columns only where the four tools genuinely differ (file paths, UI surfaces, mechanism). Everything else - the principles themselves, the prompts in the examples, the practice exercises, the capstone - is universal and shown once.

The dual-track structure for examples. Each principle below has two example tracks: non-engineering examples (a lawyer, an accountant, a marketer, an HR partner, etc.) and engineering examples (a developer working with files, schemas, queries, deployments, Git). Read the track that matches aap ka kaam; skim the other for the contrast. The principle is the same in both tracks - domain changes, discipline doesn't.

If you work across both families (say, Claude Code for aap ka codebase and Cowork for client deliverables), pick whichever you're in right now. The discipline is identical across all four.

Want the deep version of any concept on yeh page?

This is a crash course - the seven principles in one read. For the full treatment, see Chapter 18: The Seven Principles of General Agent Problem Solving. For tool-specific depth, the downstream pages are Claude Code and OpenCode: A 90-Minute Crash Course and Cowork and OpenWork: A 90-Minute Crash Course. yeh page dikhata hai the principles; those pages are the surfaces.

Choose aap ka reading depth. yeh page dikhata hai denser than 90 minutes can cover cover-to-cover. Three tiers, depending on how much time you have and where aap hain in aap ka agent journey:

Tier 1 - 30-minute taste (best for first-time readers, especially non-engineering). Read three principles only: Principle 1 (Part 2 - action over talk), Principle 3 (Part 4 - verification), and Principle 5 (Part 6 - persistence). Do one practice exercise from each principle's example track that matches aap ka kaam. That's enough to feel the three biggest shifts: brief the hands instead of asking a chatbot, never trust "looks right," and put what mattered into file the next session will read. Come back for the other four principles on a separate sitting once you've felt the difference in aap ka own work.

Tier 2 - 90-minute essential path (the standard read). Read Part 0, the Lindy Effect section, Part 1 (the seven principles map), Part 9 (the char marhalon walworkflow), and Part 11 (the capstone). Inside each principle in Parts 2 - 8, read only the four-tab table and the example track for aap ka kaam - non-engineering examples if you use Cowork/OpenWork, engineering examples if you use Claude Code/OpenCode. The other track is there when you want to cross-train. That covers roughly 80% of the conceptual mileage. The remaining 20% - second-track examples, the worked example in Part 10, the unifying patterns, the depth callouts - is worth a second pass on a different day. Don't try to read it all in one sitting; the principles land better on two reads than on one heroic one.

Tier 3 - full read (the operational manual). Everything, including both example tracks, both worked examples in Part 10, the depth callouts on SQL/typing/system-of-record, and the engineering CLAUDE.md samples. About 2 hours. Best after Tier 2 has settled in for a few days of real work.

Non-engineering readers, at any tier: skip every code block, the typing callout in Part 3, system-of-record callout in Part 6, and any section labeled "Engineering examples." Those are written for engineers and assume engineering vocabulary. Read the non-engineering examples instead - that's where the discipline shows up in aap ka kind of work. The principles themselves are identical in both tracks; only the surface changes.

Part 0: Why yeh page exists

Two people use the same general agent for the same task. One finishes in 20 minutes with a clean output. The other spends an hour in correction loops, ends up with a polluted siyaq o sabaq, and starts over. Same agent. Same capabilities. What's different?

The answer is principles.

A safety warning, up front. general agents act on aap ki taraf se - they read files, write files, run commands, call services, and sometimes touch production systems. Never grant broad shell, file, browser, email, calendar, or connector access until you understand the tool's permission model. Start in read-only or approve-each-step mode. Expand autonomy only after you've watched agent behave correctly inside a tighter envelope. Principle 6 (constraints aur safety) makes this concrete, but you should default to "less access" from aap ka first session - not from aap ka tenth, after something went wrong.

The seven principles below emerged from analyzing thousands of successful and failed agent sessions - across coding tasks, contract reviews, financial models, hiring loops, and research briefs. They answer questions like: Why does agent sometimes go in circles? Why do long sessions degrade? Why do some prompts work and others don't?

Each principle addresses a specific failure mode:

| Principle | Addresses |

|---|---|

| 1. Bash is the Key | "Why does agent only talk about doing things instead of doing them?" |

| 2. Code as Universal Interface | "Why does my prose request keep getting misread?" |

| 3. verification as bunyadi qadam | "Why does output look right but break in production?" |

| 4. Small, Reversible Decomposition | "Why did one big change just nuke an afternoon of work?" |

| 5. state ko files mein mehfooz rakhna | "Why does agent forget what we decided yesterday?" |

| 6. constraints aur safety | "Why did agent touch files I didn't authorize?" |

| 7. Observability | "Why don't I know what agent actually did?" |

The thesis in one line. The principles govern the session; the tools are interfaces to the same session. Learn to think with the principles and aap ka skill transfers whichever tool you happen to be in.

The chapter in five bullets - read this if you read nothing else

If you internalize only these five points, you have 60% of the value:

- Action over talk. A general agent's value comes from doing things - running commands, reading files, calling services. Treat every prompt as something that should result in an action or an artifact, not a paragraph of explanation.

- Code (and structured artifacts) over prose. When precision matters, ask for a schema, a table, a code block, a checklist - not a paragraph. agent's output quality goes up sharply when the format is constrained.

- verify, don't trust. Every meaningful output needs a verification step - tests for code, a rubric for a memo, a cross-model review for a high-stakes deliverable. "Looks right" is the failure mode.

- Small steps, atomic checkpoints. Decompose work into reversible units. Commit, snapshot, or save-version after each unit lands. Never let agent run an hour of work without a single checkpoint.

- files are memory. The conversation is volatile; filesystem is durable. Anything worth remembering across sessions - decisions, plans, conventions, glossaries - belongs in file, not in a chat history.

The remaining two principles (constraints and observability) are how you operationalize the first five. They are what keeps agent inside the lane you set and what tells you whether it stayed there.

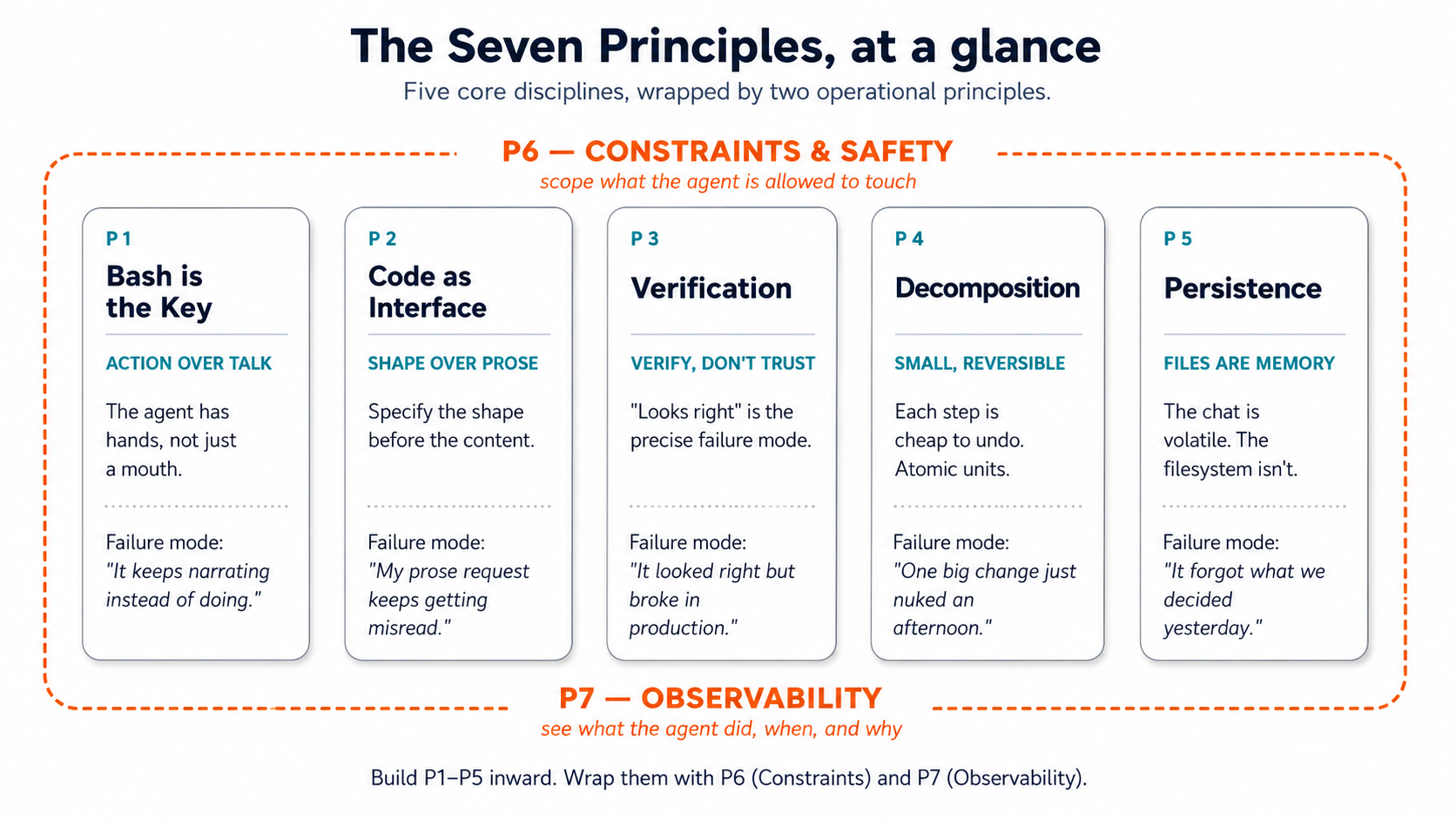

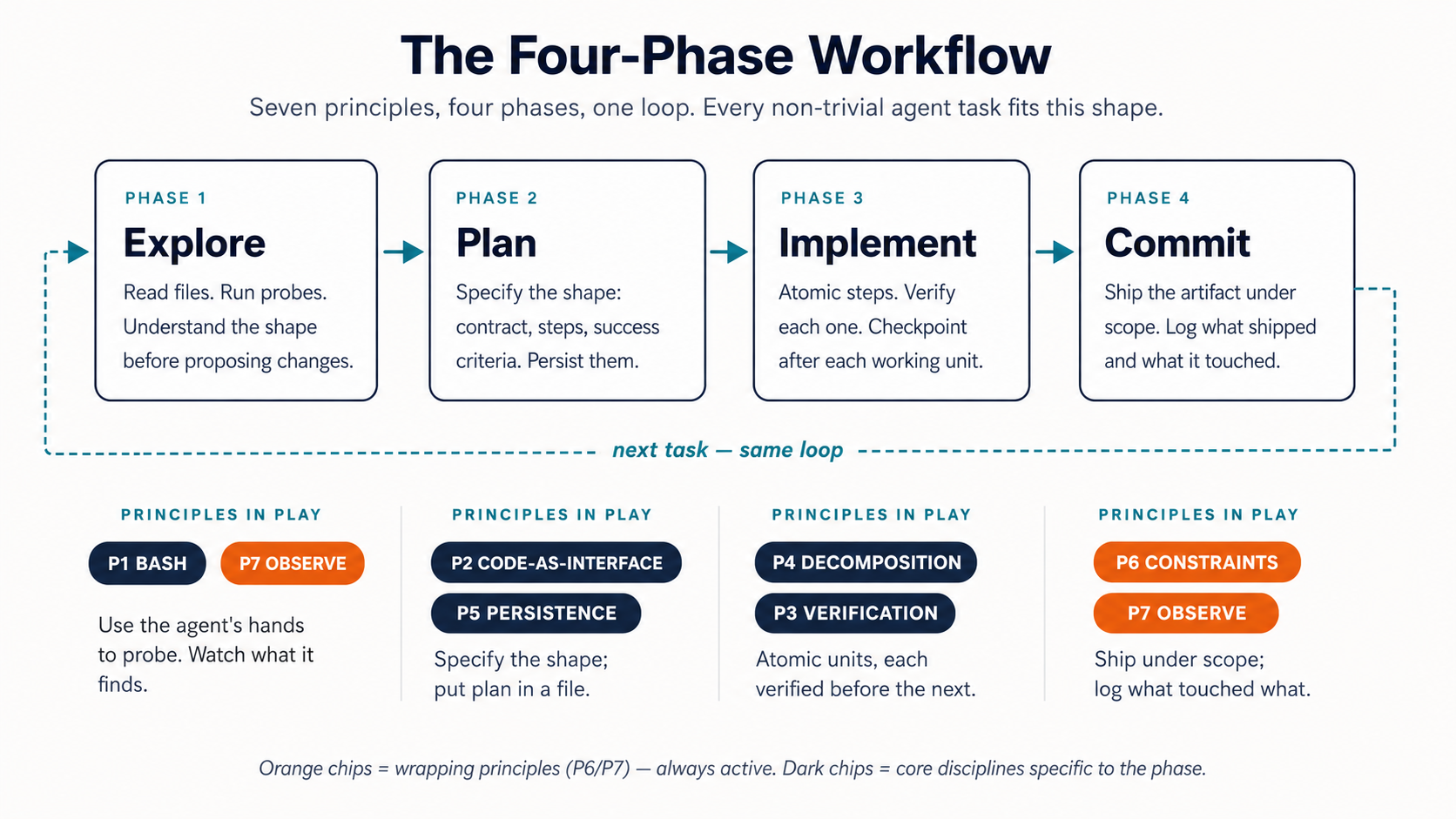

Figure 1: The five core disciplines, wrapped by the two operational principles. Print this and tape it to aap ka monitor.

Figure 1: The five core disciplines, wrapped by the two operational principles. Print this and tape it to aap ka monitor.

The Lindy Effect: Why the Seven principles Look the Way They Do

The Lindy Effect is a heuristic - not a universal law - about non-perishable things like technologies, ideas, and tools. The observation: in many such categories, longer past survival is evidence of likely continued survival, so tool that has been kaam ka for 40 years is plausibly more likely to remain kaam ka for the next 40 than tool that has been kaam ka for 4 years is to remain kaam ka for the next 4. Age isn't a weakness; in the right category, it's evidence of durability. The heuristic doesn't apply uniformly to everything (fashion, hardware specs, and APIs all break the pattern), but it applies powerfully to the layers underneath them - the protocols, formats, languages, and execution surfaces that the surface-level churn relies on. Those layers have already survived everything novel that came along - including, now, the rise of general agents.

This matters in agentic era because general agents don't operate in a magical new world separate from existing computing infrastructure. They act through the same surfaces engineers, operators, and organizations have been using for decades: the terminal, files, Bash, Git, SQL, HTTP, JSON, logs, containers, schemas, tests, version control, package managers. agent reasons in natural language; it acts through proven technical interfaces. These interfaces survived because they work.

Three implications for yeh page:

-

Old technologies become more aham, not less. Bash lets agents execute. Git lets agents track and reverse. SQL lets agents query structured truth. files give agents persistent working memory. Logs give agents observability. Containers give agents repeatable environments. The Lindy stack is agent's stack. tool that did one thing well for thirty years is now doing that one thing well plus serving as a qabil-e-aitemad substrate underneath agent that can use it ten thousand times a day.

-

Coding doesn't disappear; the insani's role shifts to two specific skills. Where insan used to type most of code, they now do two things and let agent do the rest: define the problem logically (frame the work as a spec - an interface, a schema, a typed signature, a structured output) and read code well enough to verify it (read, not write - enough to catch a wrong

WHEREclause, a missing edge case, a dangerousDROP). agent writes, modifies, tests, and executes. Code remains the medium through which problems become precise, repeatable, testable, and scalable - but the insani's interaction with that medium is now mostly upstream (problem definition) and downstream (verification). The typing in the middle is agent's job. -

The synthesis - five properties, seven principles. agents don't need new programming languages or revolutionary tools; they need surfaces with five specific properties. Each property maps to a principle below - and the surfaces that already have these properties are mostly long-lived technologies:

(First-time readers - especially non-engineering - can skim or skip the table on a first read. The thesis sentence below the table is the takeaway; the table is the technical mapping that makes the case. Return to the table on a second pass once the seven principles have shown up in aap ka own work.)

Property Principle Long-lived surfaces that already have it Stable P5 (Persistence) files, relational databases, version-controlled repositories Typed P2 (Code as Interface) SQL schemas, Python with type hints, TypeScript, JSON Schema, Pydantic Reversible P4 (Decomposition) Git commits, Neon branches, BEGIN; ... ROLLBACK;, file backupsInspectable P7 (Observability) Logs, activity views, query histories, the terminal scrollback itself Governable P6 (Constraints) Hooks, RLS, OAuth scopes, permission files, systemd unit users In one sentence: agents need stable, typed, reversible, inspectable, and governable action surfaces - and many of the best ones are indeed long-lived technologies. P1 (Bash is the Key) is the access principle: agent has to be able to reach these surfaces. P3 (verification as bunyadi qadam) is the discipline principle: the insani has to read what agent did on them. Together, the seven principles are the operating manual for any work that happens on a surface with these five properties. The surfaces existed before agents and survived for decades because typed schemas catch insani bugs too, reversible commits let insan experiment too, inspectable logs let insan debug too. agent era didn't invent the requirements; it raised the volume on them by orders of magnitude.

The takeaway. Agentic AI doesn't eliminate the old stack; it activates it. The most durable technologies become agent's tools, memory, and execution environment. If you're an engineer wondering whether psql, git log, find, and grep are still worth learning when "agent will do it for you" - they're worth learning more than they were before. You won't be running them by hand. aap be reading what agent ran, judging whether it was right, and constraining what it's allowed to run. That reading skill rides on the Lindy foundations. If you're a domain expert wondering whether folders, files, schemas, and explicit conventions are still "professional" in the AI era - they are more central to professional work, not less, because they're the substrate aap ka agent runs against.

Part 1: The Seven principles, briefly

Before we go deep, here is the whole map in one place:

| # | Principle | What it gives you | Where it lives in aap ka tool |

|---|---|---|---|

| 1 | Bash is the Key | agent can do, not just describe | Terminal (CC/OC) / Code-execution sandbox (Cowork/OW) |

| 2 | Code as Universal Interface | Precise outputs through structured formats | Code blocks, JSON, schemas, tables, .xlsx/.csv |

| 3 | verification as bunyadi qadam | Wrong work caught before it ships | Tests, lints, rubrics, cross-model review |

| 4 | Small, Reversible Decomposition | Bad runs are cheap to undo | Atomic commits / numbered file versions / /undo |

| 5 | state ko files mein mehfooz rakhna | agent doesn't forget what mattered | CLAUDE.md / AGENTS.md / folder instructions |

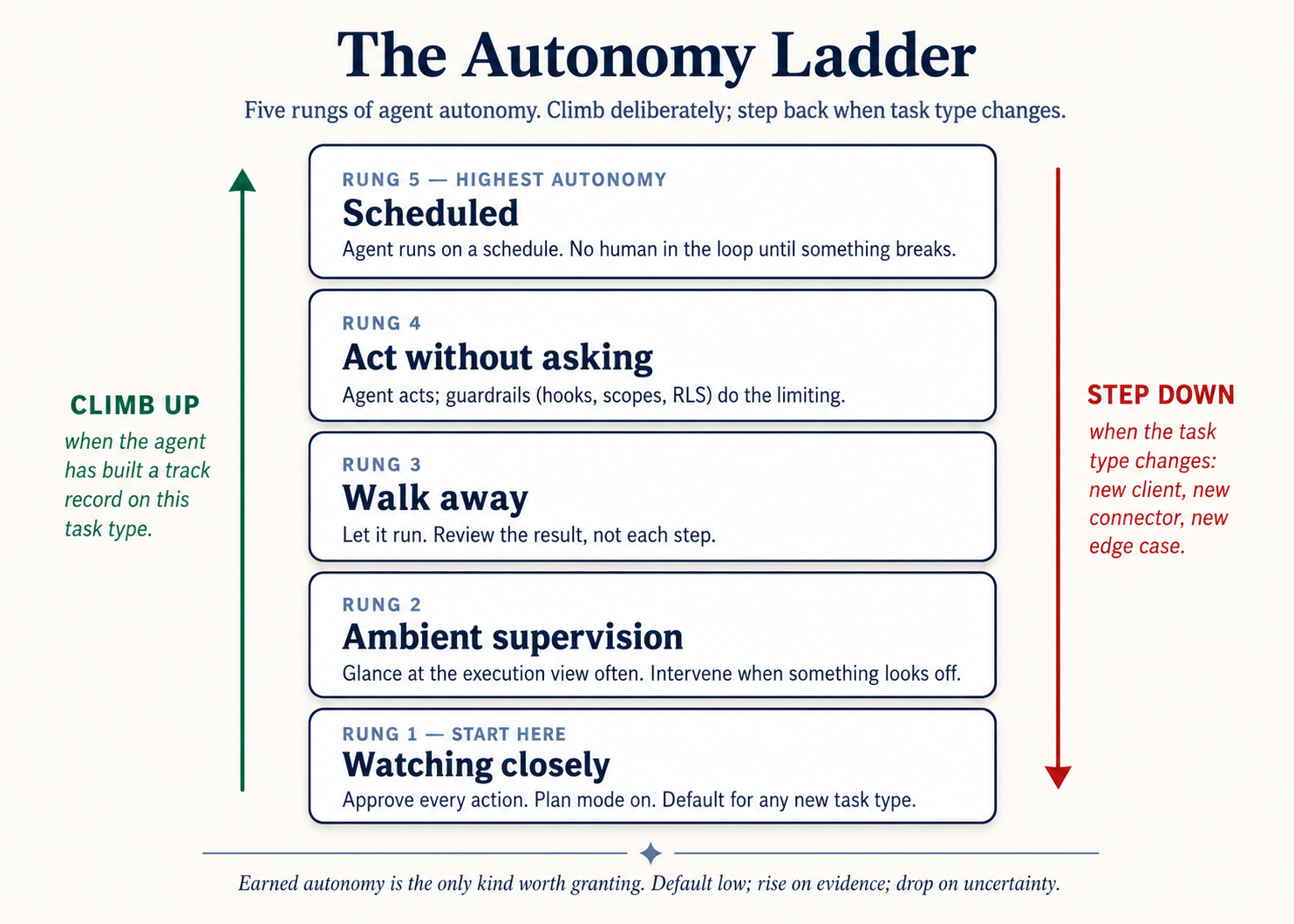

| 6 | constraints aur safety | Autonomy aap kar sakte hain actually trust | Permissions, folder scope, approval mode, autonomy ladder |

| 7 | Observability | You see what agent is doing | activity logs, plan stage, execution view |

These seven aren't in order of importance - they're in order of building dependency. Each one rests on the ones above it. aap kar sakte hain't meaningfully verify (3) without small reversible steps to verify (4). aap kar sakte hain't trust observability (7) without persisted state to log against (5). Read them in order at least once.

P1 and P2 look similar but are orthogonal. A common first read: "isn't 'Bash is the Key' just another way of saying 'use code instead of prose'?" No. P1 is about whether agent acts - the failure mode is agent narrating, summarizing, suggesting, but not actually running the command, touching file, or producing the artifact. P2 is about what shape agent's output takes - the failure mode is fluent prose ("here's a thoughtful analysis...") when aap ko chahiyeed a structured artifact (a table, a schema, a typed signature). agent can act without producing structure (a

findcommand that dumps raw output you have to re-parse) - that fails P2. agent can produce a structured artifact without acting on it (a beautiful schema in chat that never gets run against a database) - that fails P1. The two principles compose: P1 gets you action, P2 gets you a kaam ka artifact from that action. aap ko chahiye both.

The four tools, briefly, for cross-reference. Engineers writing code use Claude Code or OpenCode (terminal-native, file-and-bash-shaped). Domain experts use Claude Cowork or OpenWork (desktop-app, document-and-folder-shaped). Inside each pair, "Claude X" is the Anthropic-hosted, polished, proprietary option; "X" without the Claude prefix is the open-source, model-agnostic, configurable option. The principles below are identical across all four; only the surfaces differ - and you already know aap ka surface from the prerequisite course.

A vocabulary gotcha across the four tools. The word "plugin" means slightly different things across this family: in Cowork it's a role bundle (skills + connectors + commands packaged together); in OpenCode and OpenWork it's an npm package with event hooks (a different category entirely). The word "skill" is portable (agentskills-compatible across all four), but the invocation paths and UI surface differ. When yeh page uses these terms inside a four-column table, the meaning is the column's; when prose uses them generically, mentally substitute "aap ka tool's equivalent." A Cowork plugin and an OpenCode plugin are not interchangeable artifacts.

Part 2: Principle 1 - Bash is the Key

"Why does agent only talk about doing things instead of doing them?"

The principle. A general agent's defining capability - the one thing that separates it from the chat AI you've been using for two years - is that it can take actions on aap ka system. Run a command. Read file. Write file. Call a service. Chain a dozen of those together until task is done. agent is not a chatbot that knows code; it's a co-worker with hands. The first principle is to treat it that way.

Why this principle exists. The most common new-user mistake is asking agent the kinds of questions you'd ask ChatGPT in 2023 - "How do I add auth to my Express app?", "What should this contract clause say?" - and getting back a wandering monologue. agent had hands and you asked it for advice. The fix is to specify the action and let agent execute against it. "How do I add auth" is a chatbot prompt. "Add email/password auth to this Express app using bcrypt, store users in the users table, write tests" is agentic prompt. The first produces text. The second produces a working feature.

This is concept 1 of AI Prompting - novice vs. power user - with hands attached. Same shape of brief, higher stakes, because agent acts on it.

What "bash" means in each tool

| Claude Code | OpenCode | Cowork | OpenWork | |

|---|---|---|---|---|

| Action surface | Terminal: literal shell commands (npm test, git status, grep) | Terminal: literal shell commands, same model | Code-execution sandbox: Python/bash inside an isolated container, plus file ops on granted folders | Same as Cowork (OpenCode engine driving it): code sandbox plus local file ops |

| What's visible | Each command appears in the terminal as agent runs it; output streams inline | Same as Claude Code | Each step appears as a card in the execution view ("Used tool", "Read file", "Ran code") | Same as Cowork, rendered as a timeline of step chevrons |

| approval default | Asks before each Bash action; allow-listed commands run silently | Same as Claude Code, configurable to the tool level | Asks before significant operations (file writes, sends, scheduling); reads pre-approved per folder | Same as Cowork; per-tool approval granularity via OpenCode permissions |

| Where this fails quietly | --dangerously-skip-permissions flag off, agent waiting for approval you didn't notice | "permission": "allow" set globally without thinking; agent does things you didn't intend | Untrusted PDF in folder; instructions inside it trigger actions you didn't ask for | Same as Cowork; risk amplified if multiple connectors are wired without scope review |

The mental model that ties all four together: agent has hands, and aap ka job is to brief the hands, not give a lecture to a brain.

Non-engineering examples

Two contrasting non-software examples, same principle. (One unstructured-search shape, one structured-to-structured-matching shape. Same discipline applies to any batch-review or reconciliation task in any domain.)

Litigation (unstructured search). A litigator wants every reference to "indemnification" across 47 deposition PDFs.

- Chatbot framing: "What does indemnification mean in deposition transcripts?" → essay, no PDFs touched.

- Agent framing: "Search every PDF in

/depositionsfor 'indemnification' and close synonyms ('hold harmless', 'indemnify'). For each hit, return file name, page number, and the surrounding paragraph. Save toindemnification-hits.md." → 47 files searched, hits indexed.

Accounting (structured reconciliation). A controller needs to reconcile last month's bank statement against the GL export.

- Chatbot framing: "How do I reconcile a bank statement against a GL?" → tutorial.

- Agent framing: "Open

bank-statement-march.csvandgl-export-march.xlsx. For each bank transaction, find the matching GL entry (same date ±2 days, same amount, same vendor). List unmatched transactions inmarch-reconciliation-gaps.md, separated into 'in bank not GL' and 'in GL not bank'." → the gap list, twenty minutes.

The shape is identical across both: an action on specific inputs producing a specific artifact. The chatbot column is where most new users live for their first month. agent column is where 80% of the productive use lives forever after.

Prompt pattern that invokes this principle. Whenever you find aap kaself typing a question, ask: can I rephrase this as an action with an artifact? Almost always, yes. "Explain the difference between these two contracts" → "Read both contracts in /drafts, produce a side-by-side diff table to contract-diff.md."

Practice - Reframe a chatbot prompt as agent prompt. Pick a recent question you asked a chat AI that produced a mostly-useless answer. Reframe it as an action with: (a) an explicit input source (file, a folder, a connector), (b) a structured output (file, a table, a list), (c) no questions for agent to answer in prose. Run both versions in aap ka tool of choice. Note which one produced something you could actually use, and how much tezer agent-framed version was to act on.

Engineering examples

Two engineering examples, same principle.

The Downloads folder. You open Claude Code and ask: "I have a cluttered Downloads folder. What's in here, what's eating space, what duplicates exist?" You don't pick a single command. agent runs ls -la ~/Downloads, notices it only shows the top level (23 items), self-corrects to find ~/Downloads -type f | wc -l, gets the real count (847 files), runs four more find commands to classify by type, then du -sh and du -ah | sort -rh | head -10 to find the space hogs. Total time: 30 seconds. You ran zero commands. You described a problem; agent picked the tools - and corrected its own mistake mid-run when its first count was wrong.

Bash can't do math; that's fine. You type echo $((47.50 / 3)) in aap ka terminal. Syntax error - Bash's $((...)) is integer-only. Worse, echo $((10 / 3)) silently returns 3, not 3.333..., with no warning. (In 1982 the Vancouver Stock Exchange shipped truncation like this in production; after 22 months their index read 524 when its correct value was 1009 - half the market's value silently lost.) So agent writes a 6-line Python script that reads numbers from stdin, sums them with Decimal, prints the result to stdout. Now you have a decimal-add command. Bash is the right tool for files; Python is the right tool for math; agent stitches them via pipes. The principle isn't "use Bash" - it's use the action surface; let agent pick which command on it.

The unifying rule for engineers: if aap kar sakte hain describe task tezer than aap kar sakte hain do it, ask agent. If aap kar sakte hain do it tezer than aap kar sakte hain describe, just do it. Three files to move? Drag them. Three hundred files to categorize by type, date, and project? Agent territory.

Practice - Clean up a folder by description, not by command. Pick a real folder you've been meaning to clean up (Downloads, a project archive, last quarter's misc files). Describe the problem in one sentence: "What's in here, what's eating space, what's duplicated, what can be archived?" Don't specify a single command. Watch what agent runs. Note how often you had to choose a command vs. let agent pick. If you found aap kaself dictating commands, aap ka problem description wasn't sharp enough.

Part 3: Principle 2 - Code as Universal Interface

"Why does my prose request keep getting misread?"

The principle. Natural language is ambiguous. A formal interface - code, a schema, a typed table, a templated form - is not. When you give agent a prose request, aap hain forcing it to guess at the shape of what you want. When you give it a structured artifact to fill in, you remove the guessing. Output quality goes up sharply, and disagreements show up at the interface boundary instead of inside output.

Why this principle exists. Prose is a high-siyaq o sabaq, high-bandwidth medium between insan who share a culture. Between you and agent, it's a lossy compression. agent reads aap ka "make this report sharper" and has to infer twelve things you didn't say (which audience? what tone? what length? remove which parts? add which?). Each inference is a chance to drift. Specifying a format (a table with these columns, a JSON object with these fields, an outline with these section names) eliminates most of the drift before agent writes a word.

This is concept 7 of AI Prompting - outline before drafting - with the outline made formal. The outline is now an interface, not a suggestion.

What the insani still does - code AND content. The universal interface is "code" in the broad sense: anything with a precise, formal shape. In agentic era, you almost never need to produce the shape from scratch - agent does that. aap ko kya chahiye to do well is two things at the bookends of workflow, and the work looks structurally similar whether you're an engineer or a domain expert.

For engineers (working with code, schemas, queries):

- Define the problem logically. Frame the work as a precise spec - an interface, a schema, a typed signature, a structured output, a constraint. The clearer the contract, the less room agent has to drift.

- Read code well enough to verify it. Read, not write. Reading SQL is enough to catch a wrong

WHEREclause; reading a function signature is enough to catch a misnamed parameter; reading a migration is enough to catch a dangerousDROP.For domain experts (working with documents, models, analyses):

- Define the deliverable's shape. Specify template, sections, max lengths, column structure, allowed values - not the prose. "Memo with these four sections, 1 page max, exec summary first, three risks max in the risks section." The shape is the spec; agent fills it.

- Read output for factual grounding. Does this number tie back to the source? Does this claim match the document cited? Does this analysis use the right population? Agent prose is fluent - that's the trap. Read for what's true, not what sounds right.

Why this works now, and gets better over time. Agent output is increasingly built in small composable components (P4): short functions and atomic commits for engineers; one section, one table, one paragraph at a time for domain experts. Each component is readable in ek minute se kam waqt mein. As models improve, more of the verification pass moves into agent stack itself - type-checkers like

pyright/tscalready run as independent verifiers on every save; a second model from a different family reviewing the first model's diff is the models-checking-models pattern made structural; fact-grounding tools cross-check claims against sources automatically. Over time, the level of abstraction at which you verify rises: today you read lines or sentences; soon aap mostly review section summaries; eventually aap mostly approve outcomes. The reading skill and the spec-writing skill are what survive every shift - every level of automation still needs a insani to be the independent judgment at the top, and that insani's only durable contributions are defining the problem precisely and reading carefully enough to know when to trust the answer.

What "code" means as an interface in each tool

| Claude Code | OpenCode | Cowork | OpenWork | |

|---|---|---|---|---|

| Literal interfaces | TypeScript/Python interfaces; JSON schemas; function signatures | Same as Claude Code | Cell-and-column schemas for .xlsx; templates for .docx; YAML/JSON for structured outputs | Same as Cowork |

| The pattern "specify the shape first" | Write the interface, then implement to satisfy it: "Write a User interface, then a function that returns one" | Same as Claude Code | Define output template, then fill it: "Here's the rows-and-columns template for the deal review memo. Populate one row per deal" | Same as Cowork |

| Where prose creeps back in | Asking model to "make this code cleaner" without a constraint → drift | Same as Claude Code | "Polish this memo" → drift; instead, constrain the format (max 1 page, three sections, bullet structure) | Same as Cowork |

Non-engineering examples

Two example patterns, one engineer-shaped and one domain-expert-shaped:

ENGINEER (Claude Code / OpenCode):

"Write a TypeScript interface for the API request body -

fields: customerId (string), items (array of {sku, qty}),

shippingZip (5-digit string). Then write a validation function

that takes an unknown and returns a typed Result. Then write

the tests. In that order."

DOMAIN EXPERT (Cowork / OpenWork):

"Produce a one-page deal review memo. Required sections, in this order:

1. Deal summary (3 lines: customer, value, close date)

2. Risks (bullet list, max 5, sorted by severity)

3. Mitigations (one per risk, parallel order)

4. Decision recommendation (one of: GO / NO-GO / HOLD)

5. Open questions (numbered)

No other sections. No preamble. Tone: direct, no hedging."

Both prompts are the same shape - a formal interface specified before generation. The first uses TypeScript syntax; the second uses an outline. Both eliminate the same class of misreading.

Three more domain-expert patterns.

Consultant: client interview synthesis.

"Produce a client-interview synthesis. Required structure, no other sections:

## Interview metadata

- Client, date, interviewees (one per line)

## Stated problems (numbered, max 5, in priority order they implied)

## Unstated problems (numbered, max 3, with the evidence for each)

## Quotes worth carrying forward (max 5, each ≤25 words, attributed)

## Open questions for the next interview (numbered)

Tone: clinical, no advocacy. Length cap: 1 page."

Lawyer: deposition summary as a table.

"Produce a deposition summary, one row per witness in this table format:

| Witness | Role | Key admissions | Key denials | Open follow-ups |

'Key admissions' and 'Key denials' are bullet lists with page:line citations

from the transcript. 'Open follow-ups' is questions for the next deposition.

Save as `deposition-summary.md`. No prose paragraphs anywhere."

Real estate: property comp table.

"Read every listing in `/comps/`. Produce a comparison table with columns:

address, sale date, sale price, $/sqft, beds/baths, lot size, days on market,

key features (max 3 bullets), distance from subject (miles). Sort by $/sqft.

Bold any row where $/sqft is within 5% of our subject. Save as `comps.md`."

HR: candidate screening output.

"For each resume in `/inbound`, produce one record in this exact format:

## [Candidate name]

- Required quals met (Y/N per required qual, evidence for each Y)

- Preferred quals (count of matches, list which)

- Credential flags (anything to verify with a human)

- Recommendation: ADVANCE / HOLD / DECLINE (one word)

- Rationale: one sentence

No subjective adjectives. No comparisons across candidates."

What every example above has in common: the structure of output is specified before agent generates anything. output can't drift into the wrong shape - there is no wrong shape to drift into, because the shape is part of the prompt.

The prose escape hatch. Sometimes prose is the right interface - for an explainer, a brainstorm, a creative draft. The principle isn't "never write prose prompts"; it's "when precision matters, structure beats prose every time." The signal that you should reach for structure: you've iterated twice on prose and output is still wrong.

A subtle wrinkle. Code-as-interface also applies to inputs, not just outputs. If you're feeding agent five vendor proposals and asking for a comparison, paste them as a single table with consistent columns, not as five blocks of prose. agent's comparison quality is bottlenecked by aap ka input shape.

Practice - Replace a prose deliverable with a structured one. Pick a deliverable type you produce regularly (an analysis memo, a summary, a recommendation). Write the prompt you'd give agent. Then also write the structural spec for output: sections in order, max lengths, required vs. optional, banned content. Run agent on each prompt separately. Compare the two outputs side by side. The structured version will be sharper, more reviewable, and tezer to revise. The prose version will require more edits to ship.

Engineering examples

Two engineering examples, same principle.

CREATE TABLE as the contract. You ask agent to "store user expenses." You get a table with reasonable columns. Three months later, a null amount ships to the dashboard as zero, an expense for a deleted user creates an orphan, and a negative amount slips through and credits user. The fix isn't more validation in application - it's writing the schema as the contract first. Open a free Neon database (serverless Postgres, ~60 seconds to provision, no credit card, browser SQL editor), and ask agent for the DDL:

CREATE TABLE expenses (

id SERIAL PRIMARY KEY,

user_id INTEGER NOT NULL REFERENCES users(id) ON DELETE RESTRICT,

amount NUMERIC(10,2) NOT NULL CHECK (amount > 0),

category_id INTEGER NOT NULL REFERENCES categories(id),

description TEXT NOT NULL,

created_at TIMESTAMPTZ NOT NULL DEFAULT now()

);

That schema rejects nulls, orphans, and negatives at database layer. The interface IS the constraints; application can't accidentally bypass them no matter what code agent generates. Run the DDL in Neon's SQL editor, try to insert a bad row, and watch Postgres refuse it. Reading the rejection message is the cheapest verification step aap ever run.

A note on Neon for these examples. Neon is serverless Postgres on a free tier with branches aap kar sakte hain spin and discard in seconds - exactly the substrate the principles need. The SQL works in any Postgres; Neon just removes the "where do I run this?" friction so the examples are runnable in the time it takes to read them. Every Neon mention in yeh page dikhata hai ke liye pehle free tier; nothing requires a paid plan.

If Neon isn't an option for you (employer policy, data residency, an internal Postgres mandate, no third-party signup), every example on yeh page runs unchanged on any Postgres. Quick substitutes: Supabase free tier (web SQL editor, similar branching workflow), local Postgres in Docker (

docker run -e POSTGRES_PASSWORD=... postgres:16), Postgres.app on macOS, or aap ka existing managed Postgres (AWS RDS, GCP Cloud SQL, Azure DB for Postgres). The branching mechanic in Part 5's reversibility examples is Neon-specific; on other Postgres providers, the equivalent is a database copy or apg_dump/restore pair, slower but functionally identical. The schema, the queries, and the verification discipline transfer everywhere.

A note on language choice: typing is the new standard. agentic era didn't bring new programming languages; it made the typed versions of existing ones the default. Python with type hints (PEP 484+, checked by

mypy/pyright) and TypeScript (checked bytsc) have become the de facto standards for agent-generated code because they share one property: the type signature is a machine-checkable interface contract.Why typing matters with agents specifically:

- It constrains agent. A function typed

(x: int) -> strwon't be filled with code returning a list. The contract narrows drift before agent generates a token.- Errors caught at write time.

pyright/tscreject drift before any code runs - cheaper than the runtime bug you'd otherwise debug at 11pm.- An independent verification path. The type-checker isn't another instance of agent; it's a separate, deterministic checker. The "two coats of paint" problem from P3, made automatic.

- Living documentation. Six months later, the next agent that touches the function reads the signature alone and is bound by it.

When you specify the shape before generating content (the P2 move), specify it as a type whenever aap kar sakte hain. Prose says what you mean; types make it enforceable.

Function signature before implementation - typed. Don't ask "write a function that processes the CSV." Ask for the typed signature first, in aap ka language of choice:

# Python with type hints (PEP 484+):

from decimal import Decimal

def category_totals(csv_paths: list[str]) -> dict[str, Decimal]:

"""Sum amounts per category across the given CSV files.

Args:

csv_paths: paths to CSV files with columns (date, category, amount).

Returns:

Mapping of category name to total amount (Decimal for precision).

Raises:

FileNotFoundError: if any path doesn't exist.

ValueError: if any row has a malformed amount.

"""

...

// TypeScript:

async function categoryTotals(

csvPaths: string[]

): Promise<Record<string, number>> {

// Throws if any path is missing or any row has a malformed amount.

...

}

Now ask agent for three unit tests - empty input, one valid file, one malformed file - using pytest or vitest. Then ask for the implementation. In that order, with a pause for review at each step. The signature is the contract; the tests are the verification; the implementation comes last - and at every step, pyright (or tsc --noEmit) refuses code that drifts from the signature. agent's drift surface shrinks at each gate, and the type-checker catches its mistakes automatically.

The pattern in all three (CREATE TABLE, typed signature, the JSON Schema / Pydantic model in the same family): specify the shape before generating content. The shape is the interface. Output quality goes up sharply when the format isn't negotiable - and when the format is machine-checked (database constraint, the type-checker), it's not negotiable even if agent forgets.

Practice - Spec the contract before the inserts (schema), then spec the typed signature before the function (code). Part A - schema, 15 min: Spin up a free Neon project (one click; pick the smallest region near you). Ask agent: "Design a schema for tracking [pick a domain: expenses / inventory / contacts / book loans / time entries - anything with relationships]. Write the DDL with appropriate

NOT NULL,CHECK, andREFERENCES ... ON DELETEconstraints. Don't include any inserts yet." Read the DDL. Run it. Ask for ten realistic INSERT statements - including three that should be rejected by aap ka constraints. Run those. The rejections are the verification. Part B - types, 10 min: The same discipline applies to code. Pick a function you wrote recently with agent. Re-prompt it spec-first: ask agent to write the Python type-hinted signature (or the TypeScript signature) first, then threepytest/vitestcases, then the implementation - pause at each step. Runpyright(Python) ortsc --noEmit(TypeScript) on the result. Did the type-checker accept agent's implementation on the first try? If not, agent drifted from the signature - and you caught it for free, before any code ran. Schema is to SQL what the type signature is to code: both are the contract that doesn't depend on agent being careful.

Part 4: Principle 3 - verification as a bunyadi qadam

"Why does output look right but break in production?"

The principle. A finished-looking output is not a verified output. "Looks right" is the precise failure mode this principle exists to prevent. Every meaningful artifact agent produces should pass through a verification step before you accept it as done. verification is not a code-only idea - it applies to memos, models, plans, schedules, and contract redlines. The form changes; the discipline doesn't.

Why this principle exists. models - especially helpful ones - produce outputs that are plausible, which is not the same as correct. They will confidently miscount items in a list. They will mis-cite a paragraph that doesn't exist. They will produce code that compiles, passes types, and silently does the wrong thing on the third edge case. The only defense is to make verification a step in workflow, not an afterthought you might do if you remember.

This is concept 13 of AI Prompting - models checking models - promoted from a habit to a structural step.

What "verification" means in each tool

| Claude Code | OpenCode | Cowork | OpenWork | |

|---|---|---|---|---|

| Primary verification mechanism | Unit tests, type-checks, linters - run by agent after each change | Same as Claude Code | Output rubric: "Does the memo meet all five required sections? Are claims sourced? Is the recommendation a single word?" | Same as Cowork |

| Automated gate | Hook in .claude/settings.json blocks commit if tests/types/lint fail | Plugin in .opencode/plugins/ does the same with richer events | Less common but possible: a second agent pass that scores against a rubric and refuses to save until the score passes | Same as Cowork; can pin a smaller model for the verification pass to control cost |

| Cross-model review | Second tool (different family) reads the diff and writes a critique to docs/reviews/feature-x.md | Same pattern, different tool combination | Open a second chat (different model family) and run a "find what's wrong with this memo" pass | configure a second model provider in Settings and ask agent itself to do the cross-pass |

| Where verification gets skipped | "Tests pass" but you didn't write tests for the right thing | Same as Claude Code | "Memo looks good" but you didn't read every sentence against the source documents | Same as Cowork |

The pattern, in all four tools: don't accept output the first time agent says it's done. Force a verification step that has a clear pass/fail and that agent has to satisfy before moving on.

Non-engineering examples

Two contrasting non-software verification patterns. (One catches a fabrication, one catches an arithmetic drift. Same discipline applies to any claim-heavy deliverable in any domain.)

Litigation: citation grounding (catches fabrication). A litigator drafts an opposition brief and uses agent to summarize the procedural history.

- Without verification: the brief cites Smith v. Acme, 234 F.3d 567 (2d Cir. 2020) for a proposition the case doesn't actually stand for. Caught (embarrassingly) by opposing counsel's reply.

- With verification: agent is required to ground every citation in the actual case file: "For every case citation, open the underlying opinion and quote the specific paragraph that supports the proposition. Flag any citation aap kar sakte hainnot ground." Two flagged citations get reworded. The brief ships clean.

Marketing: numbers against analytics (catches arithmetic drift). A marketer asks agent to write a Q3 performance memo summarizing campaign results.

- Without verification: the memo says "open rates improved 34% quarter-over-quarter." Looks reasonable. Actual lift was 23%; agent compared the wrong baseline.

- With verification: "Before finalizing, re-fetch the underlying CSV totals for each cited metric and confirm the math. List each cited metric, source range, and computed value in an appendix." The 34% becomes 23% before the memo leaves the marketer's desk.

The pattern in both: the verification step has agent re-grounding its claims in the actual source. verification isn't "re-read aap ka draft and check it" - models are bad at catching their own errors that way. verification is re-fetch the source and prove the claim again.

Prompt pattern that invokes this principle.

"Before saving the final version, do a verification pass:

- List every factual claim in the draft

- For each one, identify the source document and exact location

- Flag any claim you can't ground in a source

Refuse to save the final version until every flag is resolved."

Drop this into the prompt of any high-stakes deliverable in any of the four tools. agent will catch what aap ka eyes would have missed in review.

Practice - Add a verification step to a high-stakes output. Pick any output agent produces this week where being wrong has a real cost (a client memo, a financial number, a citation, a recommendation, a metric in a report). After agent finishes, run a separate verification pass: "List every factual claim in the deliverable. For each one, identify the source location and quote the supporting text. Flag any claim aap kar sakte hainnot ground." Compare the verified version to the original. Note how many flags came back - that's the number of mistakes that would have shipped without the verification step.

Engineering examples

Two engineering examples, same principle.

The boss-finance number mismatch. Boss asks for a report on Q3 revenue by region. Agent writes a SQL query, runs it, hands back a clean number: $4.2M for the West region. You paste it into a board deck. A week later, finance pulls the same number from the ledger and gets $3.8M. You ask agent why. Confidently, agent rewrites the query and gives you a third number: $4.5M. Asking the same agent that wrote the query whether the query is correct is not independent verification. It is two coats of the same paint. The fix isn't to learn to write SQL - it's to learn to read it. SQL is declarative; four lines tell you what data is being returned. You don't need to write a single query to spot a missing WHERE clause, a wrong JOIN type, or a GROUP BY that excludes the rows that matter most. Open the query in Neon's SQL editor next to agent's output number. Read it. Predict what rows should come back. Then run it. If aap ka prediction matched, agent's number is probably right. If not, you've just found the bug before it shipped - and the cost of finding it was 30 seconds of reading.

Rollback the destructive ones before approving. The verification habit gets sharper for writes. When agent proposes an UPDATE or DELETE you're not sure about, wrap it in a transaction in Neon's SQL editor:

BEGIN;

DELETE FROM users WHERE last_login < '2024-01-01';

-- Check: how many rows did that touch?

SELECT count(*) FROM users;

ROLLBACK; -- Nothing was actually committed.

The ROLLBACK puts database back where it was. You've just dress-rehearsed the destructive change without taking the risk. If the row count looks wrong, you didn't lose anything; if it looks right, change ROLLBACK to COMMIT and run it for real. A DELETE you couldn't first rehearse is a DELETE you shouldn't run.

The tests that only cover the happy path. agent writes a payment-processing function. It writes the tests. The tests pass. Looks done. But the tests only exercised the happy path: valid card, valid amount, valid currency. verification step: "For each of these failure modes, write a test that asserts the right behavior - expired card, declined transaction, network timeout, currency mismatch, negative amount, amount over daily limit. Run all of them. Refuse to consider this feature done until each failure-mode test fails the function for the right reason." Half the time, one of the tests "passes" only because the failure mode silently returned success - exactly the bug that would have shipped if you'd skipped the verification step.

The type-checker as a free, automatic verifier. The cheapest independent verification path in code is the one that runs every time you save file. Use Python with type hints (checked by pyright or mypy) or TypeScript (checked by tsc), and agent's drift gets caught by a different program from the one that wrote code. If agent generates a function that ignores the typed signature - passes a str where an int was declared, returns None where a Result was promised - the type-checker refuses code before any test runs. This is the "two coats of paint" point made automatic: the type-checker isn't another instance of agent; it's a separate, deterministic checker reading the same code. Run it in pre-commit, run it in CI, run it before you call the function done.

The pattern in all four: agent that produced output is the worst possible verifier of that output. It has the same blind spots that produced the original. verification needs an independent path - aap ka own reading of code, a different model from a different family, a test that exercises what agent didn't think to exercise, a BEGIN; ... ROLLBACK; that lets the destructive change be inspected before it's committed, or a type-checker that mechanically refuses code that drifts from the contract.

Practice - Read the SQL agent wrote last week. Open Neon's SQL editor (or aap ka local

psql). Pick a query, function, or migration agent generated in the last week that you accepted without reading carefully. Paste it in. Before you run it: read it. For SQL: check theWHEREclause (does it scope by tenant / date / user as you expect?), theJOINtype (INNERwill drop unmatched rows;LEFTwon't), theGROUP BY(are you aggregating across the right grouping?). For destructive statements (UPDATE,DELETE,DROP): wrap inBEGIN; ... ROLLBACK;and run the safe version first. Note: would you have caught a wrong answer if you'd been reading along, or did you trust output? If the second, that's the calibration this principle costs to fix - and the cheapest place to start is on yesterday's diff, not tomorrow's.

Part 5: Principle 4 - Small, Reversible Decomposition

"Why did one big change just nuke an afternoon of work?"

The principle. Decompose the work into the smallest reversible units aap kar sakte hain. Land each unit. verify it. Checkpoint it. Then start the next one. Big atomic changes are the enemy. They take longer to debug when they go wrong, they're harder to review, and they make the failure mode "throw away an hour" instead of "throw away five minutes."

Why this principle exists. models are good at small, specified moves and progressively worse at large, vague ones. A 12-step task framed as one big prompt drifts further from aap ka intent at every step, and there's no place to course-correct in the middle. The same 12-step task framed as 12 small prompts - each verified before the next starts - keeps agent on the rails the whole way through. The cost of stopping after step 4 to redirect is nothing. The cost of unwinding 12 wrong steps at the end is aap ka afternoon.

The rule of thumb in all four tools: anything aap kar sakte hain't undo cheaply, you shouldn't do in one step. If reversing the change would take more than two minutes, the change was too big.

What "decomposition" and "reversibility" look like in each tool

| Claude Code | OpenCode | Cowork | OpenWork | |

|---|---|---|---|---|

| Atomic unit | Git commit after each step that works | Git commit, same pattern | Numbered file versions (memo-v1.md, memo-v2.md); or a drafts/ folder | Same as Cowork; /undo rewinds last message and file changes via git |

| Undo mechanism | git revert, git reset, Esc Esc to rewind conversation (does NOT revert files) | /undo rewinds conversation AND reverts file changes (requires git repo) | Save numbered versions; revert by copying back; or rely on cloud storage version history | /undo (inherited from OpenCode) - same mechanism as OpenCode |

| course correction mid-run | Esc to interrupt, then redirect; model picks up from where you stopped | Same as Claude Code | Stop button on active session halts immediately; redirect in next message | Same as Cowork |

| Where reversibility breaks down | A 200-line refactor in one prompt that touches 15 files | Same as Claude Code | A "rewrite the entire deck in our new template" prompt that overwrites the original | Same as Cowork; aggravated if no git is initialized in the workspace folder |

The prompt pattern that enforces decomposition:

"Break this task into the smallest steps you can. After each step:

1. Show me what you did

2. Run the verification check for that step

3. Commit / save a numbered version

4. Wait for my OK before starting the next step

Do not begin a step until the previous step's commit/save has landed."

That paragraph, prepended to any non-trivial task, single-handedly prevents most of the "one big change nuked everything" failures. The first time you try it aap feel like you're slowing agent down. After three tasks aap notice you finish tezer and with less rework.

Non-engineering examples

Two contrasting non-software decomposition patterns. (One prose deliverable, one cross-tab artifact with internal dependencies. Same discipline applies to any multi-step output in any domain.)

Founder: the Q3 board memo (sequential prose). Big atomic prompt - "write the Q3 board memo" - produces 6 pages of mostly-right content with one paragraph that misstates revenue, two structural problems, and a tone that doesn't match the board's preferences. Cleanup: 90 minutes. Same task decomposed - outline first (wait), section 1 (wait), section 2 (wait) - produces a clean deliverable in 40 minutes, with zero cleanup, because problems got caught at the section boundary before they compounded.

Accountant: a 12-tab Excel model (cross-dependent artifacts). The disaster pattern is "build me a full 3-statement model for this acquisition target." Two hours later model exists but cross-tab references are broken, the assumptions tab has the wrong currency, and the working-capital schedule double-counts AR. Decomposed: "Tab 1: assumptions only. Pause. Tab 2: revenue build, pulling from assumptions. Pause. Tab 3: operating expenses..." Each tab validated against the previous before the next is built. model that survives audit is the one that was built one tab at a time.

The unifying rule: if reversing the change would take more than two minutes, the change was too big. If aap kar sakte hain't git revert it, can't /undo it, can't delete one numbered file version - you're in trouble. The principle isn't "small for its own sake"; it's "reversible at low cost when you discover agent went off the rails."

Where this links to verification. Decomposition and verification are sisters. Small steps make verification cheap. verification makes small-step progress real. The two together are what turn agent work from "hope the big prompt was good enough" into "ratchet forward, never lose ground."

Practice - Decompose task that previously went sideways. Pick a recent agent task where output disappointed you and you had to redo it. Reconstruct: was it asked in one big prompt? Re-prompt the same task this week, decomposed into 4-6 atomic steps with a pause-for-approval between each. Note (a) total wall-clock time, (b) total revision time at the end, (c) which step would have been the cheapest place to catch the original failure. Almost always, the answer to (c) is one or two steps earlier than where you actually noticed the problem.

Engineering examples

Two engineering examples, same principle.

The Pixar disaster - the case for reversibility as system property. In 1998, someone at Pixar accidentally ran a delete command on the Toy Story 2 production files. Ninety percent of two years of work, gone in seconds. The studio's backup system had silently failed weeks earlier. The film was saved only because Galyn Susman - working from home with a newborn - happened to have a personal copy on her home computer. The lesson isn't "make better backups"; it's that reversibility has to be system property, not a daily discipline you might forget. git commit after every meaningful step turns disasters into nuisances. Without it, every working file is one stray command away from a Galyn-Susman-level rescue you may not get.

Sarah's git reset --hard panic - granularity matters. Sarah edits her budget file badly and panics. She Googles "undo git changes," finds git reset --hard on Stack Overflow, runs it. The bad budget is fixed. She exhales - and then checks her volunteer list, which she'd been editing for an hour. It's gone. git reset --hard doesn't undo one file; it resets everything to the last commit. Her volunteer changes weren't committed yet, so they don't exist anymore. The lesson: small commits, often. The size of aap ka undo unit is the size of aap ka worst-case loss.

Atomic commits in agent work. The same principle scales to agent-driven changes. Don't ask agent for "refactor the auth system" - that's one giant diff aap kar sakte hain't safely revert if step 7 of 10 went sideways. Ask for "Step 1: extract password validation into its own module. Pause for review. Commit. Step 2: extract token issuance. Pause for review. Commit." Each step is independently revertable. If step 7 breaks something, git revert <step-7-hash> puts you back at step 6 - you haven't lost steps 1 through 6, and you haven't lost the exploration that got you to step 7.

The same idea, for databases - Neon branches. The reversibility unit for code is the git commit. The reversibility unit for database changes is the branch. Neon's free tier gives every project cheap, instant branches: spin a branch off main, run the destructive migration agent proposed (DROP COLUMN, ALTER TYPE, schema reshape, data backfill), inspect the result, throw the branch away if it's wrong. production never touched. A branch costs nothing and takes a few seconds to create; that's agent equivalent of git revert for database layer. agent that wanted to add a column gets to experiment; you never have to trust it not to corrupt anything. Migration goes wrong? Delete the branch. Migration goes right? Promote it. Neon branches are git for data.

The rule of thumb: if reversing the change would take more than two minutes, the change was too big. git revert should cost you nothing. A Neon branch aap kar sakte hain throw away should cost you nothing. If aap ka current setup makes either of those expensive, that's the gap to fix before the next risky change.

Practice - Audit aap ka git log, then rehearse on a Neon branch. Part A (10 min): Pull up

git log --oneline -20for aap ka current project. Count the commits. For each, ask: "Could I cleanly revert this single commit without breaking unrelated work?" If the log reads "WIP, WIP, WIP, fix everything," aap ka decomposition broke down. Re-do aap ka next feature with one-commit-per-working-step discipline. Part B (10 min): Open Neon, create a project on the free tier, run any starter schema, then click "New Branch" in the console. You now have an isolated copy of aap ka database. Ask agent for a destructive change (DROP COLUMN, rename a table, backfill a column) and run it on the branch. Inspect. If wrong, delete the branch - nothing lost. If right, promote it. Notice how the branch is database equivalent of the commit: a unit aap kar sakte hain throw away cheaply. The principle is the same; the tools just give you a different lever for each layer of the stack.

Part 6: Principle 5 - state ko files mein mehfooz rakhna

"Why does agent forget what we decided yesterday?"

The principle. A conversation is volatile. filesystem is durable. Anything worth carrying across sessions - project conventions, decisions, glossaries, plans, prior siyaq o sabaq - belongs in a file, not in chat history. When you persist state to file agent reads on every session, you stop re-explaining things, and agent stops forgetting them.

Why this principle exists. Even the best siyaq o sabaq window is bounded, and recall inside it degrades - the longer the conversation, the worse model is at remembering what was said at the start. A new session starts with zero memory of the last one. The solution is not "longer siyaq o sabaq windows" (they help a little); it's external memory. Write the aham things to file in the project folder. agent loads file at the start of every session and inherits the state for free.

What the "rules file" looks like in each tool

| Claude Code | OpenCode | Cowork | OpenWork | |

|---|---|---|---|---|

| File name | CLAUDE.md at project root | AGENTS.md at project root (also reads CLAUDE.md as fallback) | CLAUDE.md in the folder | AGENTS.md in the folder |

| Loaded when | Every session that opens this folder | Every session that opens this folder | Every session in this folder | Every session in this folder |

| Initialization | /init in the CLI - agent drafts it for you from aap ka codebase | /init, same pattern | Generate via chat: "Read this folder and draft a CLAUDE.md for it." | Same as Cowork |

| Target size | Under ~2,500 tokens once settled (≈250 words for a seed draft); reference deeper docs with @filename | Same as Claude Code | A few short paragraphs; folder-specific rules, not encyclopedic | Same as Cowork |

| Three nested layers | Global (~/.claude/CLAUDE.md), project (./CLAUDE.md), session (the prompt) | Global (~/.config/opencode/AGENTS.md), project (./AGENTS.md), session | Global (Settings > Cowork), folder-attached, session | Global (OpenWork Settings), project (./AGENTS.md), session |

The mistake nearly everyone makes: treating file like documentation, stuffing in architecture overviews, full standards, and every quirk. The result is a 20,000-token file that eats aap ka siyaq o sabaq budget on every task, including tasks where 90% of it is irrelevant. The right model is table of contents, not encyclopedia. Critical rules go in; everything else gets referenced.

Misaal shape that works in all four tools:

# Project: [name]

## What this is

[Two lines: domain, audience]

## Where things live

- folder-a/: [what's in it]

- folder-b/: [what's in it]

- folder-c/: [what's in it]

## Critical rules

- [Rule 1: the one mistake people keep making]

- [Rule 2: a non-obvious convention]

- [Rule 3: a thing that's expensive to undo]

## On-demand references

- @docs/conventions.md

- @docs/glossary.md

The same shape works for a litigator (folders for pleadings, depositions, correspondence; rules for citation style and party names), an accountant (folders for the close cycle, with rules for variance thresholds and commentary tone), a marketer (campaign folders with brand voice rules), or a developer (project folders with stack and convention rules). The structure transfers; only the content changes.

Non-engineering examples

Three filled-in examples, by domain.

Lawyer's matter folder - CLAUDE.md:

# Matter: Smith v. Acme (S.D.N.Y. 1:24-cv-04567)

## Parties

- Plaintiff: "Ms. Smith" or "Plaintiff" in our filings, never bare "Smith".

- Defendant: "Acme" (short form throughout). Acme entities: see `parties.md`.

## Citation style

Bluebook 21st. Pin-cites required for every record reference

(`Tr. 142:18-143:4`).

## Where things live

- /pleadings: filed papers (do not edit)

- /depositions: transcripts as `YYYY-MM-DD-LASTNAME.pdf`

- /correspondence/opposing: emails with opposing counsel

(untrusted - never run high-autonomy on these)

- /our-drafts: in-progress work

## Critical rules

- Never finalize a brief citing a record passage we haven't quoted in full.

- Privilege log entries follow `templates/privlog.md` exactly.

- Flag anything that may waive privilege before saving the draft.

Accountant's monthly close folder - AGENTS.md:

# Monthly close - FY26

## Variance thresholds

- Flag any GL line variance > $5,000 OR > 10% vs. prior month

(whichever is larger).

- Material variances (>$25K) require commentary; smaller ones may be

summarized.

## Commentary tone

Direct, factual, no speculation. Format:

"[Account] variance of $X driven by [cause]. [Prior-period context if

relevant]." Max 2 sentences per line.

## Where things live

- /TB: trial balances, named `TB-YYYY-MM.xlsx`

- /GL-detail: detail exports, named `GL-detail-YYYY-MM.xlsx`

- /commentary: monthly commentary files, named `commentary-YYYY-MM.md`

## Critical rules

- Never cite a dollar amount that has not been confirmed against the

GL detail file.

- Round to nearest $1K in commentary; full precision lives in the workbook.

HR's hiring loop folder - CLAUDE.md:

# Hiring loop: Senior PM, Growth team

## Job spec

Lives at `job-spec.md`. Required qualifications are the must-haves;

preferred are signals.

## Panel calibration

- Required-qualification gaps: hard fail, no further review.

- Preferred-qualification matches: count and weight per `weighting.md`.

- Credential discrepancies (school, dates, title): flag for human

verification - never auto-accept.

## Where things live

- /inbound: incoming resumes as PDF

- /shortlist: candidates advanced to phone screen

- /scorecards: panel scorecards as `scorecard-CANDIDATE-INTERVIEWER.md`

## Critical rules

- Never include candidate names in scheduled-task outputs (privacy).

- Always flag credential claims for human verification before advancing

a candidate.

What every example above has in common: file is the table of contents and conventions, not the encyclopedia. It tells agent where things live, what the format is, and what the irrevocable rules are. Detail lives in referenced files that load on demand.

A second persistence pattern: plan files. For tasks that span multiple sessions, save the plan to docs/plans/feature-name.md (engineer) or plans/project-name.md (domain expert). A fresh session can be brought up to speed in one message: "Read plans/q4-launch.md and continue from step 4." The plan file is the safety net under session resume.

The persistence hierarchy, summarized. Conversation = volatile. files in the project folder = durable. files referenced by the rules file = on-demand. The cheapest thing aap kar sakte hain do to make every future session smoother is spend ten minutes writing the rules file properly today.

Practice - Write aap ka first rules file. Pick a folder of recurring work (a matter, a client, a campaign, a cycle, a project). Open agent and ask: "Read this folder and propose a

CLAUDE.md(orAGENTS.md) under 250 words covering what this is, where things live, conventions for naming and formatting, and three critical rules I keep stating manually." Edit the proposal. Save it. Next session, run task in this folder without re-explaining anything - pick task that actually exercises the conventions you wrote down: ask for filename for a new artifact (tests the naming rule), ask for a short paragraph that references the parties or stakeholders by name (tests the prose-form rule), ask agent to draft an outbound communication (tests any "do not send without sign-off" rule). Note what agent inherited correctly and what you still had to add by hand - those gaps become the second draft of the rules file. Repeat after the third session, and file will be 80% of its final form. The first draft is the cheapest version of this file aap ever have; the third is the one that pays off.

Engineering examples

Two engineering examples, same principle.

From script to schema, on Neon. You wrote tax-prep.py: a clean script that reads CSV files, computes totals, produces a yearly tax report. It works. Then aap ka manager asks: "Break it down by month, by user, by category. For the last three years. Don't allow deletes that orphan records." Now you're writing loops. One filter for month, another for user, another for category, another to check orphans before delete. Each new question = a new loop. If every new question requires a new loop, aap ka datmodel is already failing. The fix isn't more Python - it's moving from scripts-and-files to a relational database. Provision a free Neon project (60 seconds, browser SQL editor, no install), ask agent to design the schema, and load the CSV data into it. Now the schema becomes the persistent state; queries become the questions; foreign keys prevent the orphans. "Show Food spending for Alice in March 2024" is one SELECT with a WHERE, not a new loop. "Compare Q1 vs Q2 by category for four users across three years" is one SELECT with a GROUP BY, not twelve nested loops. Persistence graduates from "file I keep updating" to "a structure that answers questions I haven't thought of yet."

An engineer's CLAUDE.md - same shape as the lawyer/accountant/HR examples above, applied to a codebase:

# Project: my-app

## Stack

Next.js 14, TypeScript, Postgres 16 on Neon (free tier), Drizzle ORM.

## Commands

- `npm run dev`: local server (also runs db:migrate)

- `npm test`: vitest

- `npm run db:migrate`: apply migrations to the current Neon branch

- `npm run db:seed`: load fixture data

- `npm run db:branch <name>`: spin a Neon branch for risky migrations

## Critical rules

- Never edit files in `src/generated/`. They're rebuilt by codegen.

- All API routes use the auth middleware in `src/lib/auth.ts`.

- Postgres migrations are append-only - no destructive rewrites.

- Destructive migrations rehearse on a Neon branch first, never on `main`.

- Run `npm test` before committing; do not commit a red build.

## On-demand references

- @docs/conventions.md (naming, file layout)

- @docs/db-schema.md (table relationships)

- @docs/neon-branches.md (when to branch, when to promote)

Under 250 words. Every line earned by a specific past mistake. agent inherits the project's identity on every session; you stop re-explaining the stack.

The pattern in both: engineering persistence scales with stakes. For a personal project, a rules file and a folder structure are enough. For a production system with real users and real money, persistence graduates to a database with its own discipline (migrations, idempotency keys, append-only events, RLS for tenant isolation) - but it's the same principle Cowork users apply with CLAUDE.md in a matter folder, just turned up to production-grade. The friction that used to block "graduate to a real database" (installing Postgres, configuring it, managing it) is gone on Neon's free tier; the principle is now reachable from any laptop in ek minute se kam waqt mein.

A name for the production endpoint: system of record. When persistence graduates from "rules file in a folder" to "durable substrate a workforce runs against," it has a name: a system of record (SoR). The distinction is sharp: the engine is what agent runs on (model, runtime, agent loop); the system of record is what agent runs against (database, the ledger, the CRM, the matter store, the ticket system - the operational truth of what a company actually knows).

SoR matters in both modes - aap kar sakte hainnot escape it in an enterprise siyaq o sabaq. In Mode 2 manufacturing, SoR is foundational: every AI Worker agent Factory ships runs against system of record by structural requirement (Invariant 5 of the AI-Native company). Without it, workers hallucinate, double-write, lose work, and produce artifacts no auditor can reconstruct. In Mode 1 enterprise problem-solving, SoR is equally critical even with a single agent: a lawyer's Cowork session is only kaam ka when it reads from the firm's document management system; a finance analyst's only when it reads from the GL; a developer's only when it queries the right production database. The connectors in Cowork/OpenWork and database connections in Claude Code/OpenCode ARE SoR connections - the moment agent crosses from aap ka laptop to the company's authoritative state, SoR discipline applies. Mode 1 enterprise work without an SoR is fiction that looks like work. The difference between Mode 1 and Mode 2 is depth, not presence: in Mode 1 you connect to an SoR that's already there and read/write carefully; in Mode 2 you ship a Worker that runs against it continuously, so "be careful" graduates to "encode the guarantees in database itself."

The six properties SoR discipline adds on top of basic database storage: durable (writes survive restarts), addressable (every fact has a stable id), governed (read/write rules enforced at database, not application), replayable (the work can be reconstructed in order), inspectable (an auditor can answer "what happened Tuesday at 11pm?" by reading rows), and policy-bound (idempotency, tenant isolation, append-only history encoded so database refuses to break them). files give you the first two for free; chapter 21B builds the rest on Neon. Every SoR connection is a place where the other six principles bite hardest - verification (P3), decomposition (P4), constraints (P6), and observability (P7) all apply with extra weight at the boundary where aap ka agent touches the company's authoritative state.

Practice - build the smallest possible Budget Tracker on Neon. Setup (5 min): Create a free Neon project. Open its SQL editor. Schema (10 min): Ask agent: "Design the smallest schema for a personal budget tracker: users, categories, expenses. Include the appropriate