Axiom I: Shell as Orchestrator

The overview ended with a question: when an AI agent has access to a terminal, what should it actually do with it? This axiom's answer is simple: the terminal should coordinate work, never do the work itself. That sounds like a small distinction, until you are the one staring at line 247 of a broken script at 2am.

None of that mattered at 2:14am on James's first on-call rotation, when his phone buzzed: the deployment was stuck and 50,000 users were affected. He opened the deployment script (a 400-line file he had never seen) and stared at line 247. Variable names like temp2 and OUT told him nothing. Somewhere above, a failed test should have stopped everything, but someone had removed that safety check three months ago and nobody noticed. The script kept running past broken tests, built a broken version of the app, and pushed it live.

James called the senior engineer at 2:30am. "Yeah," Emma said. "That script breaks every few weeks. Nobody wants to touch it because everything is tangled together."

Emma rewrote the entire process that weekend. The new version was 12 lines long. Each line called a specialized tool: pytest for testing, Docker for building, kubectl for deployment. The file did nothing except decide what runs, in what order, and what happens if something fails. When a test failed, the process stopped. When a step succeeded, it moved to the next one. No tangled logic. No mystery variables.

The 2am emergencies stopped. Not because Emma wrote better code. Because she stopped cramming everything into one script and started using the shell for what it was designed for: orchestration (coordinating tools, not doing the work itself).

This was James's first week on Emma's team. The deployment script was his warm-up assignment; every task she gave him would teach a different axiom.

The Problem Without This Axiom

James's deploy.sh was not written by a bad engineer. It was written by a series of good engineers, each solving an immediate problem. The first version was 15 lines: a clean sequence of commands. Then someone added input validation. Then error logging. Then a Slack notification. Then a rollback mechanism. Each addition was reasonable in isolation. Together, they created a 400-line script that treated the shell as a programming language.

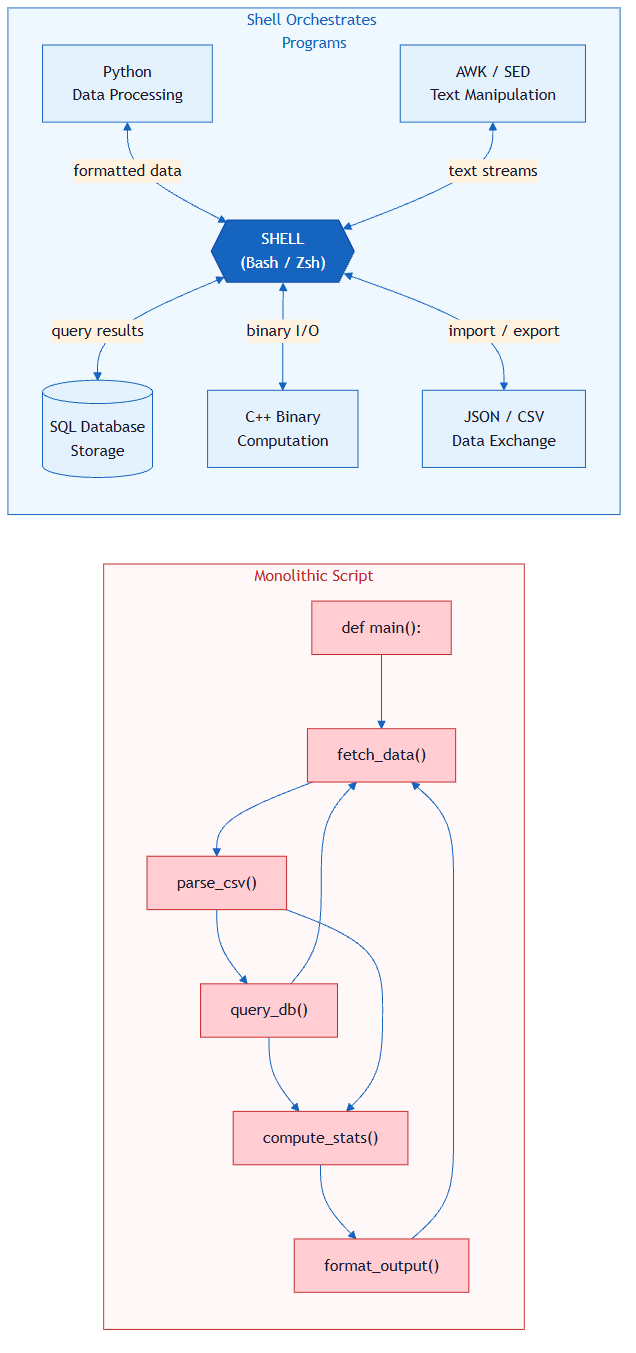

This is the universal failure mode. When developers first encounter the shell, they treat it as a programming language. They write loops, parse strings, manipulate data, implement business logic, all inside .sh files. This works for small tasks but collapses at scale.

The symptoms are predictable, and James experienced all four on that 2am call:

- Debugging becomes archaeology. A 300-line bash script has no stack traces, no detailed error reports, and no IDE support to help you navigate. When it fails on line 247, you have to read from line 1.

- Testing becomes impossible. You cannot test one piece of the script in isolation because every part depends on what ran before it: variables set earlier, files created by previous commands, the state of the whole system.

- Collaboration becomes hazardous. Two developers editing the same script inevitably break each other's work because they make different assumptions about what the script's variables contain at any given point.

- AI agents cannot reason about it. An AI reading a 500-line bash script sees an impenetrable wall of string manipulation. An AI reading a 12-line Makefile sees clear intent: run tests, build the app, deploy.

The root cause in every case is this: computation and coordination are tangled together. The script is simultaneously deciding what to do and how to do it. These are fundamentally different responsibilities.

The Axiom Defined

Axiom I: The shell is the universal coordination layer for all agent work. Programs do computation; the shell orchestrates programs.

The boundary is sharp and non-negotiable:

| Responsibility | Belongs To | Examples |
|---|---|---|
| Coordination | Shell | Sequencing, parallelism, piping, error routing, environment setup |
| Computation | Programs | Data transformation, business logic, parsing, validation, algorithms |

The shell's job is to answer: What runs? In what order? With what inputs? What happens if it fails?

A program's job is to answer: Given this input, what is the correct output?

When you respect this boundary, every component becomes independently testable, replaceable, and understandable. When you violate it, you get James's 2am pager.

Historical Background: The Unix Roots

This axiom did not originate with agentic development. It was discovered over six decades ago at Bell Labs.

In 1964, Doug McIlroy (who would go on to lead Bell Labs' Computing Sciences Research Center) wrote an internal memo arguing that programs should connect to each other like garden hoses. That single idea became the Unix pipe, and it reshaped how an entire generation thought about software.

By 1978, McIlroy had distilled the accumulated wisdom of Unix's creators (Ken Thompson, Dennis Ritchie, and their colleagues) into a set of design maxims published in the Bell System Technical Journal. The philosophy is most often quoted in a later three-rule summary attributed to him:

- Write programs that do one thing and do it well.

- Write programs to work together.

- Write programs to handle text streams, because that is a universal interface.

Read those rules again. They are Axiom I in its original form. Rule 1 says programs should compute, not coordinate. Rule 2 says something else handles the coordination; that something is the shell. Rule 3 says the interface between them is text, which is exactly what pipes, redirection, and exit codes provide.

The Unix philosophy endured because it solved a fundamental engineering problem: complexity management through separation of concerns. The same 400-line deploy script that plagues today's junior developer would have plagued a Bell Labs engineer in 1978. The solution was the same then as it is now: stop writing monoliths, start composing small tools.

What makes this relevant to agentic development specifically is that AI agents rediscovered this pattern independently. When Claude Code, Cursor, or any coding agent operates through a terminal, it naturally falls into the McIlroy pattern: invoke a focused tool, read the output, invoke the next tool. The shell is not just a convenient interface; it is the architectural pattern that makes tool-using AI possible.

From Principle to Axiom

In Chapter 18, you learned Principle 1, "Bash is the Key": terminal access is the fundamental capability that makes AI agentic rather than passive. You saw how Vercel's AI agent became 3.5x faster and went from an 80% to a 100% success rate once it was given basic shell commands instead of complex custom tools. That principle answered the question: What enables agency?

This axiom answers a different question: How should the shell be used once you have it?

| | Principle 1 (Chapter 18) | Axiom I (this lesson) |
|---|---|---|
| Question | What enables agency? | How should the agent use the shell? |
| Answer | Terminal access | As an orchestration layer |
| Focus | Capability | Architecture |
| Level | "Can I act?" | "How should I act?" |
| Metaphor | Having a key to the building | Knowing which rooms to use for what |

The principle gave you access. The axiom gives you discipline. Chapter 18 showed that giving an AI terminal access turns it from a chatbot into an agent, because it can now do things. But what should it do? This axiom says: use the terminal to call specialized tools (run tests, build the app, deploy), not to write hundreds of lines of logic inside the terminal itself. An agent that has terminal access but uses it for 500-line computation scripts is like a conductor who grabs a violin mid-performance: technically capable, architecturally wrong.

Practical Application

Composition Primitives

When Emma showed James the 12-line replacement, he was not convinced. "Twelve lines can't be reliable. We should rewrite the whole thing properly, in Python, with error handling, with tests."

"What would the Python version do?" Emma asked.

James thought about it. "It would... call pytest. Then call Docker. Then call kubectl." He paused. "That's what the Makefile does. I'd just be wrapping the same tool calls in Python syntax."

"And now you have a Python program to maintain instead of twelve lines that call the tools directly."

James looked at the Makefile again. It used no framework, no libraries, no custom tooling. It used three primitives that the shell has shipped since 1973.

The bash commands below demonstrate three shell primitives: pipes, exit codes, and redirection. You do not need to memorize them. Focus on the pattern each one illustrates: connecting programs, checking results, and routing data. You will use these commands hands-on in later chapters.

Pipes are the oldest and most elegant composition primitive. One program's output becomes another program's input, with nothing in between but a | character.

```bash
# Orchestration: the shell routes data between four programs
# Each program handles its own computation
cat server.log | grep "ERROR" | sort -t' ' -k2 | uniq -c
```

Here, cat reads, grep filters, sort orders, uniq counts. The shell wrote zero logic; it only connected programs.
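To make the mechanism concrete, here is a minimal Python sketch of what the shell sets up when it sees a | between two commands: the first program's standard output is wired directly to the second program's standard input. The helper name pipe and the two inline child programs are assumptions made for this illustration; they stand in for real tools such as cat and grep.

```python
import subprocess
import sys


def pipe(first_cmd, second_cmd):
    """Connect first_cmd's stdout to second_cmd's stdin, as `first | second` would."""
    first = subprocess.Popen(first_cmd, stdout=subprocess.PIPE)
    second = subprocess.Popen(
        second_cmd, stdin=first.stdout, stdout=subprocess.PIPE, text=True
    )
    first.stdout.close()  # the parent no longer needs its copy of the pipe
    output, _ = second.communicate()
    first.wait()
    return output


# Hypothetical stand-ins for real programs (one emits log lines, one filters them)
EMIT_LOG = [
    sys.executable, "-c",
    "print('INFO started'); print('ERROR disk full'); print('ERROR timeout')",
]
KEEP_ERRORS = [
    sys.executable, "-c",
    "import sys\n"
    "for line in sys.stdin:\n"
    "    if 'ERROR' in line:\n"
    "        sys.stdout.write(line)",
]

errors = pipe(EMIT_LOG, KEEP_ERRORS)
print(errors, end="")
```

Neither child program knows the other exists. All of the connecting happens in pipe, which contains no filtering logic of its own; that is the same division of labor the | character gives you for free.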

Exit codes are the shell's error protocol: a program returns 0 for success and anything else for failure, and the shell decides what to do next.

```bash
# Orchestration: the shell decides what happens based on program results
python run_tests.py && docker build -t myapp . && docker push myapp:latest
```

The && operator is pure orchestration: "run the next program only if the previous one succeeded." The shell makes no judgment about what "success" means; it trusts the program's exit code.
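The rule that && applies can be sketched in a few lines of Python. This is only meant to make the semantics visible, not to suggest replacing && with a script; the names run_all and exit_with are hypothetical, and the child processes are trivial stand-ins that do nothing except exit with a chosen code.

```python
import subprocess
import sys


def run_all(commands):
    """Run commands in order and stop at the first non-zero exit code.

    Returns (index_of_failed_command, exit_code), or (None, 0) if all succeeded.
    """
    for index, command in enumerate(commands):
        result = subprocess.run(command)
        if result.returncode != 0:
            return index, result.returncode
    return None, 0


def exit_with(code):
    """Build a stand-in command whose only behavior is its exit code."""
    return [sys.executable, "-c", f"import sys; sys.exit({code})"]


# The second step fails, so the third step is never started
failed_at, code = run_all([exit_with(0), exit_with(3), exit_with(0)])
print(failed_at, code)
```

Notice that run_all never inspects what a command printed. The exit code is the whole contract between the coordinator and the program.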

Redirection decouples programs from their data sources entirely. A program does not need to know whether its input comes from a file, a pipe, or a user's keyboard; the shell handles that routing.

```bash
# Orchestration: the shell routes output to appropriate destinations
python analyze.py < input.csv > results.json 2> errors.log
```

Three symbols (<, >, 2>) and the program's entire I/O is rewired without changing a single line of its code. That is orchestration at its most minimal.
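Seen from the program's side, redirection works because the program only ever talks to three streams. The sketch below is a guess at what a script like analyze.py could look like; the lesson does not show its contents, so the CSV-to-JSON behavior and the main function are assumptions for illustration. The demo at the bottom feeds it in-memory streams to show that the code does not care where its input comes from.

```python
import csv
import io
import json


def main(stdin, stdout, stderr):
    """Read CSV rows from stdin, write them as JSON to stdout, report bad rows on stderr."""
    rows = []
    # Data rows start on line 2 because line 1 is the CSV header
    for line_number, row in enumerate(csv.DictReader(stdin), start=2):
        # DictReader marks extra fields with a None key and missing fields with a None value
        if None in row or None in row.values():
            print(f"line {line_number}: wrong number of fields, skipped", file=stderr)
            continue
        rows.append(row)
    json.dump(rows, stdout)
    return 0


# In a real script the last line would be:
#     sys.exit(main(sys.stdin, sys.stdout, sys.stderr))
# Here in-memory streams play the role of input.csv, results.json and errors.log.
out, err = io.StringIO(), io.StringIO()
exit_code = main(io.StringIO("name,score\nada,90\nbroken\n"), out, err)
print(out.getvalue())
print(err.getvalue(), end="")
```

Because the program accepts whatever streams it is handed, the shell can point it at a file, a pipe, or a keyboard without the program changing.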

Makefiles as Orchestration

Pipes compose programs linearly. But real workflows have dependencies: tests must pass before building, building must succeed before deploying. Makefiles express these relationships declaratively, and they have been doing so since 1976:

```makefile
# This entire file is orchestration. Zero computation.
.PHONY: all test build deploy clean

all: test build deploy

test:
	python -m pytest tests/ --tb=short
	npm run lint

build: test
	docker build -t taskapi:latest .

deploy: build
	kubectl apply -f k8s/deployment.yaml
	kubectl rollout status deployment/taskapi

clean:
	rm -rf dist/ __pycache__/ .pytest_cache/
	docker rmi taskapi:latest 2>/dev/null || true
```

The clean target uses rm -rf, which permanently deletes files without confirmation. Always verify the paths before running cleanup targets. Never use rm -rf with variables or user input that could expand to unintended directories.

Notice what the Makefile does NOT do:

- It does not parse test output to decide if tests passed (pytest handles that via exit codes)

- It does not implement Docker image layer logic (Docker handles that)

- It does not manage Kubernetes rollout strategy (kubectl handles that)

The Makefile's only job: sequence the programs and respect their exit codes. This is orchestration in its purest form.

You will learn Makefiles later. For now, ignore details like .PHONY and the tab indentation. Focus on the structure: each named section (test, build, deploy) calls a tool and nothing else. The line build: test means "run build only after test succeeds," and that is orchestration expressed in two words.
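If it helps to see how a line like build: test turns into an execution order, here is a simplified Python sketch of dependency resolution. It is an approximation for intuition only: real make also handles file timestamps, parallel jobs, and more. The names build_order and DEPS are hypothetical; DEPS mirrors the targets in the Makefile above.

```python
def build_order(target, deps):
    """Return the targets to run, prerequisites first, for a serial build."""
    order = []

    def visit(name, path=()):
        if name in path:
            raise ValueError(f"circular dependency involving {name}")
        if name in order:
            return  # already scheduled, never run a target twice
        for prerequisite in deps.get(name, []):
            visit(prerequisite, path + (name,))
        order.append(name)

    visit(target)
    return order


# Each key is a target; each value lists what must succeed before it
DEPS = {
    "all": ["test", "build", "deploy"],
    "test": [],
    "build": ["test"],
    "deploy": ["build"],
}

print(build_order("deploy", DEPS))
```

Asking for deploy pulls in build, which pulls in test, so tests always run first even though nobody wrote that sequence out by hand.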

The Shell in Agent Workflows

This is where Axiom I becomes central to everything this book teaches, and where James's story connects to yours.

Consider what separates an AI chatbot from an AI agent. A chatbot receives text and returns text. An agent receives a goal and takes actions in the world: it reads files, runs tests, queries databases, deploys services. How? Through the shell. The shell is the bridge between language and action.

Watch what Claude Code actually does when you ask it to fix a failing test:

```bash
# Step 1: Understand the failure (grep does the searching)
grep -r "def process_payment" src/
python -m pytest tests/test_payment.py --tb=short

# Step 2: Read and edit the code (agent's own capabilities)
# [reads file, identifies bug, writes fix]

# Step 3: Verify the fix (pytest does the validation)
python -m pytest tests/test_payment.py

# Step 4: Confirm and record (git does the version control)
git diff
git add src/payment.py
git commit -m "fix: handle null amount in process_payment"
```

Count the shell commands. Each one is a single invocation of a specialized program. The agent wrote zero logic in bash: no loops, no string parsing, no conditionals. It orchestrated. This is not a coincidence. It is the only pattern that scales.

Why orchestration is the only viable pattern for agents:

| If the agent... | Then it... | Problem |
|---|---|---|
| Writes complex bash logic | Must debug bash (no types, no stack traces) | Agents are worse at bash debugging than humans |
| Reimplements tool functionality | Duplicates existing, tested code | Higher error rate, slower execution |
| Uses shell as orchestrator | Leverages every tool on the system | Maximum capability, minimum code |

The insight is architectural: an AI agent's power is proportional to the number of tools it can compose, not the amount of code it can write. A 12-line orchestration that chains pytest, docker, and kubectl accomplishes more than a 500-line custom script, and it accomplishes it reliably because each tool is independently maintained and tested.

This pattern holds across every major AI coding tool. Whether it is Claude Code, Cursor, Windsurf, or GitHub Copilot's workspace agents, they all converge on the same architecture: the model reasons, the shell orchestrates, and specialized programs compute. Axiom I is not our invention. It is what every successful AI agent discovered independently, because it is the architecture that works. Had James been able to point an AI agent at his team's deployment on that 2am call, the agent would have done exactly this: invoking pytest, reading the exit code, and stopping. It would never have written a 400-line bash script to do so.

The Complexity Threshold

James's deploy.sh did not start as 400 lines. It started as 15: a clean sequence of commands. But each week, someone added a loop here, a string parse there, a conditional that checked whether the Docker registry was reachable before pushing. By the time James inherited it, the script had crossed from coordination into computation without anyone noticing the moment it happened.

Axiom I does not mean "never write more than one line of bash." Short scripts that set up environments, sequence commands, and route errors are legitimate orchestration. The danger zone begins when your script starts doing the work instead of delegating it.

Heuristics for detecting the threshold:

| Signal | Shell (Orchestration) | Program (Computation) |
|---|---|---|
| Lines of logic | Under 20 lines | Over 20 lines of actual logic |
| Control flow | Linear or single conditional | Nested loops, complex branching |
| String manipulation | Filenames and paths | Parsing, formatting, transformation |
| Error handling | Exit codes and set -e | Try/catch, recovery strategies, retries |
| State | Environment variables for config | Data structures, accumulators, caches |
| Testing | Not needed (trivial coordination) | Required (complex logic) |

Example: Crossing the threshold

This starts as orchestration but has crossed into computation:

```bash
# BAD: This is computation disguised as shell
for file in $(find . -name "*.py"); do
    module=$(echo "$file" | sed 's|./||' | sed 's|/|.|g' | sed 's|.py$||')
    if python -c "import $module" 2>/dev/null; then
        count=$(grep -c "def " "$file")
        if [ "$count" -gt 10 ]; then
            echo "WARNING: $file has $count functions, consider splitting"
            total=$((total + count))
        fi
    fi
done
echo "Total functions in importable modules: $total"
```

The fix is to extract the computation into a program.

You will learn to write Python later in Part 4. For now, focus on the structure: the messy shell script above tries to do everything inline, while the program below is a separate file that the shell calls. The shell orchestrates; the program computes. That architectural distinction is the lesson, not the syntax.

```python
# analyze_modules.py: the PROGRAM handles computation
import os
import sys


def analyze(directory, threshold=10):
    """Count functions per file; return how many files exceed the threshold."""
    total = 0
    flagged = 0
    for root, dirs, files in os.walk(directory):
        for filename in files:
            if filename.endswith(".py"):
                filepath = os.path.join(root, filename)
                with open(filepath) as f:
                    count = sum(1 for line in f if line.strip().startswith("def "))
                if count > threshold:
                    print(f"WARNING: {filepath} has {count} functions, consider splitting")
                    flagged += 1
                total += count
    print(f"Total functions found: {total}")
    return flagged


if __name__ == "__main__":
    # A non-zero exit code is how the program tells the shell a review is needed
    sys.exit(1 if analyze("src") else 0)
```

```bash
# The SHELL orchestrates: one line, clear intent
python analyze_modules.py || echo "Code complexity review needed"
```

The program is testable, type-checkable, debuggable with a real debugger, and readable by any Python developer. The shell line is pure orchestration (run this program, handle its exit code).
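As a rough illustration of what "testable" means here, the counting rule can be pulled into a small function and checked against a throwaway file, something the inline bash loop never allowed. The helper name count_functions and the sample file contents are assumptions made for this sketch, not part of the lesson's code.

```python
import os
import tempfile


def count_functions(filepath):
    """Count lines that begin a function definition, using the same rule as the analyzer."""
    with open(filepath) as f:
        return sum(1 for line in f if line.strip().startswith("def "))


def count_in_sample():
    """Write a tiny sample module to a temporary directory and count its functions."""
    sample = (
        "def top_level():\n"
        "    pass\n"
        "\n"
        "class Thing:\n"
        "    def method(self):\n"
        "        pass\n"
        "\n"
        "# def commented_out\n"
    )
    with tempfile.TemporaryDirectory() as tmp:
        path = os.path.join(tmp, "sample.py")
        with open(path, "w") as f:
            f.write(sample)
        return count_functions(path)


print(count_in_sample())
```

The check runs in isolation: no earlier variables, no leftover files, no dependence on what ran before it.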

Anti-Patterns

James's deployment script was a Mega-Script, and every team has one. It starts with a comment from 2019: # TODO: refactor this someday. It has mystery variables nobody understands, commands that call services that no longer exist, and logic so tangled that nobody dares change one part for fear of breaking something else. The script works. Mostly. Until it doesn't, and then everyone discovers what James discovered: when computation and orchestration are tangled, no one can fix anything without risking everything.

The Mega-Script is the most common anti-pattern, but not the only one. Here are the three mistakes that violate this axiom most often:

| Anti-Pattern | What It Looks Like | Why It Fails | The Fix |
|---|---|---|---|
| The Mega-Script | A script that grew to hundreds of lines with loops, data processing, and error handling all mixed together | Cannot be tested, debugged, or understood by anyone (including AI agents) | Move the computation into programs; keep the shell to just calling those programs in order |
| Ignoring Exit Codes | Commands chained with ; (which means "run the next command no matter what") instead of && (which means "only continue if the previous step succeeded") | Failures go unnoticed; the script keeps running past broken steps | Always use && or set -e so the process stops when something fails |
| Shell as Data Processor | Using the shell to transform, parse, or analyze data through long chains of text-processing commands | Fragile, unreadable, and impossible to test for edge cases | Write a proper program for any data processing beyond simple filtering |

Try With AI

Prompt 1: Classify Orchestration vs Work

I'm learning about the difference between orchestration (coordination) and work (producing results).

Here are 8 steps involved in publishing a school newsletter:

1. Decide which articles to include

2. Write the lead article

3. Edit all articles for grammar

4. Choose the layout template

5. Design the cover image

6. Arrange articles into the template

7. Decide the publication date

8. Print and distribute copies

For each step, classify it as ORCHESTRATION (coordination: deciding what happens, in what order, and who does it) or WORK (producing a specific result). Explain your reasoning for each.

Then answer: if the editor-in-chief also writes all the articles, edits them, AND designs the cover, what problems would this cause? What is the orchestration principle being violated?

What you're learning: How to see the architectural boundary between coordination and work. You are developing the pattern recognition to identify when someone responsible for orchestration has taken on too much work (the same failure mode that caused James's 400-line deployment script).

Prompt 2: Design a Workflow for a Real Process

I want to understand how to design a workflow where the coordinator only coordinates and never does the work.

Here is a scenario: A student club is organizing a charity fundraiser. The steps include:

- Book a venue

- Design and print posters

- Set up an online donation page

- Recruit volunteers

- Buy supplies for the event

- Send thank-you emails after the event

- Write a summary report of how much was raised

Help me design a coordination plan:

1. For each step, who should do the WORK? (Name a role: venue team, design team, finance team, etc.)

2. What is the COORDINATOR'S only job for each step? (Assign, check, decide; never do)

3. What is the correct ORDER? Which steps depend on other steps finishing first?

4. What happens if the coordinator also tries to design the posters AND recruit volunteers personally?

Show me the plan as a table with columns: Step, Worker, Coordinator's Job, Depends On.

What you're learning: How to express workflow coordination where the coordinator only decides, assigns, and checks (never produces the result). You are practicing the discipline that makes orchestration scalable: one coordinator directing many workers, rather than one person doing everything.

Prompt 3: Apply Orchestration to Your Own Life

I want to apply the orchestration principle to a process I manage in my own life.

[Describe a process you coordinate regularly. For example:]

- Planning a family dinner or gathering

- Organizing a study group session

- Managing a group project for school

- Coordinating a move to a new apartment

Help me analyze my process:

1. List every step involved

2. For each step, identify: is this ORCHESTRATION (deciding, sequencing, checking) or WORK (producing something)?

3. Am I currently doing both coordination AND work for any steps? Which ones?

4. Redesign my process so that coordination and work are clearly separated (even if I am the only person, I should know which hat I am wearing at each moment)

Then help me understand: why does separating these roles matter even when I am the only person involved?

What you're learning: How to apply Axiom I to your own life, not just analyze someone else's. You are making the architectural decision about what belongs in the coordination role versus what belongs in the worker role (the core skill this axiom teaches). By working with your actual process, you build the habit of thinking "am I coordinating or working?" every time you manage something.

PRIMM-AI+ Practice: Shell as Orchestrator

This axiom teaches one distinction: coordination vs. work. Before you start the exercises, make sure you understand the difference:

- Work means producing a specific result: running tests, packaging code, pushing files to a server. A worker takes an input and produces an output. It does not care what happened before it or what happens after it.

- Coordination means deciding the sequence, handling failures, and routing between workers: choosing which step runs first, stopping the process when a step fails, notifying people when something goes wrong. A coordinator never produces the result itself; it tells workers when to start, checks whether they succeeded, and decides what happens next.

Here is one example to anchor the difference: "Run all the tests and report which ones passed" is work (a testing tool does that). "If any test failed, stop the whole process" is coordination (that is a decision about what happens next based on a worker's result).

Predict [AI-FREE]

Enter Plan Mode in Claude Code (Shift+Tab). You are in James's shoes. Your team needs to ship an update to the app. Here are six things that must happen before the update goes live:

1. Run all the tests and report which ones passed or failed

2. Stop the entire process if any test failed

3. Package the app into a single file that can be installed on the server

4. Make sure tests run first, packaging runs second, and deployment runs last

5. Push the packaged file to the live server

6. Send a notification to the team if anything failed along the way

Classify each as coordination or work. Write your classifications down before continuing. Rate your confidence from 1 (guessing) to 5 (certain).

Hint: some tasks are trickier than they look. Task 6, for example: is sending a notification "work" (a messaging tool sends the message) or "coordination" (someone decided that a failure should trigger a notification)? Think carefully about whether the task is producing a result or making a decision about what should happen.

Run

In Claude Code, type: "My team needs to ship an app update. Classify each of these six tasks as coordination or work: (1) run all tests and report results, (2) stop the process if any test failed, (3) package the app into an installable file, (4) ensure tests run before packaging and packaging before deployment, (5) push the package to the live server, (6) send a notification if anything failed."

Compare the AI's classifications to yours. Pay special attention to task 6: did the AI classify it the same way you did? If you disagreed, can you see the reasoning behind the other answer?

Answer Key (check after comparing with AI)

| Task | Classification | Why |
|---|---|---|
| 1. Run all tests and report results | Work | A testing tool produces a result (pass/fail report). It does not decide what happens next. |
| 2. Stop the process if any test failed | Coordination | This is a decision: "if failure, then stop." It produces no result itself. |
| 3. Package the app into an installable file | Work | A build tool takes code and produces a package. It does not care what runs before or after. |
| 4. Ensure tests → packaging → deployment order | Coordination | This is pure sequencing: deciding what runs in what order. |
| 5. Push the package to the live server | Work | A deployment tool takes a package and installs it. It produces a result. |
| 6. Send a notification if anything failed | Both | This is the tricky one. The decision to send a notification on failure is coordination, routing based on a result. The act of sending the message is work; a messaging tool does it. In a well-designed system, these are two separate steps: the coordinator decides "something failed, trigger the notification tool," and the notification tool does the sending. |

If you got 5 out of 6 correct, your mental model is solid. If task 6 tripped you up, that is expected; it is genuinely two things wrapped into one sentence, and recognizing that is exactly what this axiom teaches.

Investigate

Think back to James's story. His 400-line deployment script tangled coordination and work together. At 2am, when the deployment broke, nobody could figure out what went wrong. Emma replaced it with 12 lines that only coordinated, where each line called a specialized tool and checked whether it succeeded.

Write in your own words, without asking AI, the answer to this specific question: Why was the 12-line version easier to debug at 2am than the 400-line version? Think about what you would see when you open each file during an emergency.

In Claude Code, type: "James had a 400-line deployment script that mixed coordination with computation. Emma replaced it with 12 lines that only coordinated, where each line called a tool and checked its result. Why is the 12-line version easier to debug, test, and understand? Give specific reasons."

Apply the Error Taxonomy: tangling coordination with work = orchestration error. James's script failed not because the tools were broken, but because the coordination logic (what runs in what order, what stops when something fails) was buried inside hundreds of lines of computation. When something went wrong, finding the "stop if tests fail" decision inside all that code was like finding one sentence in a 400-page book.

Parsons Problem

Emma is rewriting James's broken deployment process. Here are the five steps in scrambled order. Put them in the correct sequence:

- (A) The testing tool runs all tests and reports pass or fail

- (B) The orchestration file checks the test result: if tests failed, stop here

- (C) The build tool packages the application into a deployable file

- (D) Emma triggers the deployment process

- (E) The deployment tool pushes the package to the live server

Write your sequence (e.g., D, A, B, C, E) before checking.

Then answer two questions:

- Which steps are coordination and which are work?

- In James's original script, step B did not exist, so the process kept going even when tests failed. This is exactly what caused the 2am outage. Why does removing one coordination step break the entire process?

Modify

In James's original 400-line script, the step that checked whether tests passed did not just check. It also did all of this:

- Read through the entire test output file

- Counted how many tests failed and how many passed

- Calculated a pass percentage (e.g., "94% passed")

- Formatted the results into a readable summary table

- Composed a notification message with the summary attached

That was 40 lines of computation crammed into what should have been one coordination decision: "Did the tests pass? Yes or no."

Emma replaced all 40 lines with the equivalent of a single question: "Did the testing tool report success or failure?" The testing tool already knows how many tests passed. The notification tool already knows how to send messages. The orchestration file does not need to do any of that; it just needs the answer (pass or fail).

Why is Emma's approach better? What goes wrong when the orchestration file starts doing computation that the tools already handle?

Make [Mastery Gate]

Think about a multi-step process you go through regularly: submitting a school assignment, publishing a social media post, preparing a presentation, or setting up for a study session. Write a 5-step plan using this format.

In this exercise, you are the coordinator (like Emma's 12-line Makefile). Your job is to check results and decide what happens next. The tools and apps do the actual work. You never do the work that a tool could do for you, just as the Makefile never ran tests itself; it told pytest to run them and checked whether pytest reported success.

Here is an example for "submitting a homework assignment":

| Step | Work (what happens) | Tool that does it | You (the coordinator) decide... |
|---|---|---|---|
| 1 | Check spelling and grammar | Spell-check tool | Are there errors left? If yes, fix them before moving on. |
| 2 | Check that all required sections are included | Checklist / rubric | Does the assignment meet every requirement? If not, stop and fill the gaps. |
| 3 | Convert to PDF | File converter | Did the conversion succeed? Is the formatting correct? |
| 4 | Upload to the submission portal | Upload tool | Did the portal confirm the upload? If it failed, retry. |
| 5 | Send confirmation to yourself | Email app | Only trigger this if steps 1-4 all succeeded. |

Notice this: you never do what the tools do. You do not manually check every word for typos (the spell-checker does that). You do not convert the file format yourself (the converter does that). You only look at each tool's result and decide: move forward, stop, or retry? That is coordination.

Now write your own 5-step plan for a different process. For each step, ask yourself: "Am I doing the work, or am I checking a result and making a decision?" If you are doing the work, name the tool or person who should do it instead. If no tool exists, that step is genuinely work, but the coordinator's job is still just to check whether it succeeded before moving to the next step.

Your plan is your mastery gate; you should be able to explain what would go wrong if the coordinator started doing the work (like James's 400-line script did).

You just predicted which tasks are coordination and which are work, then checked your prediction against reality. That predict-then-check habit is Rung 1 of the Verification Ladder, the foundation that every other verification practice builds on.

The Responsibility of Orchestration

The shell's strength as a universal coordinator comes with a risk: when the orchestration is wrong, everything downstream breaks (not just one piece, but the entire pipeline).

A startup learned this the hard way. Their deployment script ran five steps in sequence: check code quality, run tests, build the app, update the database, deploy. But the steps were connected with ; instead of &&, meaning "run the next step no matter what." When the tests caught a real bug, the script ignored the failure and kept going. The database update ran, deleted a column that was still in use, and every user request started failing. Six hours of data modifications were lost. Not because the test was wrong (the test worked). The orchestration just didn't stop when it was told "no."

Three rules prevent this:

- Stop on failure by default. Use && between commands (only continue if the previous step succeeded). A pipeline that keeps running after a failure is not orchestrating; it is gambling.

- Protect dangerous operations. Commands that delete files, reset code, or modify databases should never run automatically without a confirmation step. If your orchestration can destroy data without asking, it is a liability.

- Test your orchestration, not just your programs. Your programs have their own tests. But does the pipeline itself stop when a step fails? Does it skip steps it shouldn't? Run it against test data to verify that the coordination works correctly, not just the individual tools.
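One way to test the coordination on its own is to swap every real tool for a harmless fake and check which steps ran. The sketch below does this for the five-step startup pipeline; the function run_pipeline, the step names, and the fake exit codes are all hypothetical, chosen only to contrast ; with && behavior.

```python
def run_pipeline(steps, stop_on_failure=True):
    """Run (name, step) pairs in order and return the names of the steps that ran.

    Each step is a callable returning an exit code, where 0 means success.
    stop_on_failure=True behaves like &&, False behaves like ;
    """
    ran = []
    for name, step in steps:
        ran.append(name)
        if step() != 0 and stop_on_failure:
            break
    return ran


def fake_steps():
    """Fake tools: every step succeeds except the tests, which report a failure."""
    return [
        ("lint", lambda: 0),
        ("test", lambda: 1),
        ("build", lambda: 0),
        ("migrate", lambda: 0),
        ("deploy", lambda: 0),
    ]


with_and = run_pipeline(fake_steps(), stop_on_failure=True)
with_semicolon = run_pipeline(fake_steps(), stop_on_failure=False)
print(with_and)
print(with_semicolon)
```

With stop-on-failure the database migration is never reached. Without it, every step runs despite the failed tests, which is the startup's outage reproduced safely with fakes.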

James leaned back from Emma's Makefile and shook his head. "It's like running a warehouse. I used to manage a distribution center, and the floor supervisor never packed boxes herself. She decided which trucks loaded first, which pallets went where, and what happened when a shipment was short. The moment she started packing boxes, the whole floor backed up."

"That's it exactly," Emma said. "The shell is your floor supervisor. Pipes, exit codes, Makefiles: those are the routing slips. The programs are the workers."

"And my 400-line deploy script was a supervisor who packed every box, drove every truck, AND decided the schedule. No wonder it fell apart at 2am."

Emma paused. "I actually made the same mistake on a project two years ago. Wrote a 200-line bash script to set up dev environments. Took me three weekends to admit it needed to be six tool calls and a Makefile. Knowing the principle and applying it are different things."

James nodded slowly. "So the shell coordinates, programs compute. But what are the programs actually reading and writing? Like, where does all the knowledge about our system live?"

Emma smiled. "That's exactly where we're going next. Turns out the answer is simpler than you'd think, and you've been reading it all day."