Axiom X: Observability Extends Verification

Axiom IX's pipeline verified everything before deployment: formatting, linting, types, tests, security, all green. James merged the pull request, the order management system deployed, and he went home. At 2:47 AM, his phone buzzed. His second middle-of-the-night crisis since joining the team. But this was different from the deployment script failure in Axiom I. That crisis was caused by bad orchestration. This one was caused by something no test suite could catch: a customer service ticket reporting that shipping rates were wrong for international orders during peak traffic.

He checked the test suite: all fifty-three TDG (Test-Driven Generation) specifications passed. He checked the CI pipeline: green across every stage. He checked the type system: zero errors. Every pre-deployment check said the same thing: "this system works correctly." But in production, right now, under real load, it did not.

James opened the server logs. What he found was useless:

Processing order...

Processing order...

Processing order...

ERROR: something went wrong

Processing order...

The output came from print("Processing order...") calls scattered through the code since his earliest development days. No timestamps. No request IDs. No indication of which order failed, why it failed, or how many orders were affected. He had no metrics to show whether the error rate was 0.1% or 50%. He had no traces to show where the request spent its time. His comprehensive test suite, his type system, his CI pipeline: none of them could tell him what was happening right now, in production, to real users.

"The pipeline passed," James said the next morning, pulling up the green CI badge. "Every check: formatting, linting, types, all fifty-three tests, security audit. All green. If the pipeline is the gatekeeper, how did this get through?"

He pulled up the test results himself. test_international_surcharge: passed. test_international_no_free_shipping: passed. Every international shipping test, passed. With one request at a time, on a clean test machine, with no other load. The tests verified the logic. They could not verify what happens when fifty concurrent requests compete for the same database connection pool at 2:47 AM.

"The pipeline proved the code is correct," James said slowly. "It didn't prove the code survives the real world."

"You need a different kind of verification," Emma said. "One that watches the system while it runs."

This is Axiom X.

The Problem Without This Axiom

James's 2:47 AM incident exposed the gap in Axioms I through IX. Consider what those nine axioms gave him:

- Axiom V (Types Are Guardrails): Caught structural errors at type-check time, before the code ran

- Axiom VII (Tests Are the Specification): Verified behavior against fifty-three TDG specifications

- Axiom IX (Verification is a Pipeline): Automated every pre-deployment check

This was powerful. But it all happened before the code reached users. Once deployed, James was blind. His system was:

- Passing all tests but slowly degrading under real production load

- Returning correct shipping rates for test inputs but wrong rates under concurrent requests

- Green on CI but silently timing out when the database connection pool was exhausted

- Functioning perfectly for 95% of orders but failing for the 5% that hit an untested edge case in international surcharge calculation

Pre-deployment verification answers: "Does this code work correctly?" Post-deployment observability answers: "Is this code working correctly right now?" Both questions matter. Neither answer substitutes for the other.

The Axiom Defined

Axiom X: Observability Extends Verification. Runtime monitoring extends pre-deployment verification. Tests verify behavior before deployment; observability verifies behavior in production. Together they form a complete verification system.

The word "extends" is precise. Observability does not replace testing; it extends the verification boundary from "before deployment" to "always." James's TDG tests from Axiom VII verified that calculate_shipping() returned the right values for eleven specific inputs. Observability verifies that the same function returns the right values for every request, under any load, at any time:

| Phase | Tools | What It Catches | When |

|---|---|---|---|

| Pre-deployment | Linting, types, tests, CI | Logic errors, type mismatches, regressions | Before users see it |

| Post-deployment | Logs, metrics, traces, alerts | Performance degradation, edge cases, real-world failures | While users experience it |

A system with only pre-deployment verification is like a car that passes inspection but has no dashboard gauges. James's order management system had passed inspection. It had no gauges.

From Principle to Axiom

In Chapter 18, Principle 7 introduced observability as visibility into what AI is doing. Remember the "Black Box Problem": the agent that silently failed, producing no output and no error, because nobody had instrumented it to report what it was doing? And the "2-Minute Audit" that taught you to check: Can I see what the agent did? Can I tell if it succeeded? Can I trace why it chose that approach? That principle focused on trust: if you cannot see what the agent does, you cannot trust it.

Axiom X takes this further. The principle is about human-AI collaboration transparency. The axiom is about production engineering discipline:

| Principle 7 (Chapter 18) | Axiom X (This Lesson) |

|---|---|

| See what the AI did | Monitor what the system is doing continuously |

| Activity logs for debugging | Structured logs, metrics, traces for operations |

| Trust through visibility | Confidence through measurement |

| Developer experience | Production reliability |

| "What happened?" | "What is happening right now, and is it normal?" |

Principle 7 gave you the mindset: make things visible. Axiom X gives you the engineering toolkit: structured observability as a first-class system concern, not an afterthought.

The Discipline That Preceded Observability

The term "observability" (borrowed from control theory, where it means "the ability to infer a system's internal state from its external outputs") entered software engineering through the DevOps movement. In 2016, Google published the Site Reliability Engineering book, codifying practices their teams had developed for running services at planetary scale. The book's core insight was that reliability is not a property of software; it is a property of operations. Code that passes tests can still fail in production if nobody is watching.

Charity Majors, co-founder of Honeycomb, popularized the distinction between monitoring (watching known metrics for known thresholds) and observability (understanding system behavior from its outputs, including behaviors you did not anticipate). Monitoring answers: "Is the error rate above 5%?" Observability answers: "Why are international orders from the UK failing at 3am?", a question you did not know to ask until the system told you.

James's print("Processing order...") was neither monitoring nor observability. It was noise: unstructured text that disappeared when the process restarted and told him nothing about which orders failed or why.

The Three Pillars of Observability

The sections below show Python code using libraries like structlog, prometheus_client, and opentelemetry. You do not need to understand the syntax. Focus on what problem each tool solves: logs tell you what happened, metrics tell you how much, and traces tell you where time went. The code is here to show that these concepts have real implementations, not for you to memorize right now.

After the 2:47 AM incident, Emma walked James through the three pillars of production observability. "Each pillar answers a different question," she said. "And no single pillar suffices alone."

Pillar 1: Logs (What Happened?)

Logs are structured records of discrete events. They tell you what the system did at specific moments. Emma showed James the difference between his print statements and proper structured logging:

The libraries below (structlog, Prometheus, OpenTelemetry) are production tools you will encounter in Parts 7-8. For now, focus on the three questions each pillar answers: What happened? (logs), How much? (metrics), Where did time go? (traces).

The pattern:

logs answer: "what happened?" (record each event with context)

metrics answer: "how much? how fast?" (count and measure over time)

traces answer: "where did time go?" (follow one request through the system)

import structlog

logger = structlog.get_logger()

# Bad: what James had - unstructured, no context
print("Processing order...")

# Good: structured log with context - who, what, when, why
logger.info(
    "order_processing_started",
    order_id="ord-7891",
    customer_id="cust-456",
    endpoint="/api/orders/process",
    total_amount=127.50,
)

Structured logs use key-value pairs instead of free-form strings. This makes them machine-parseable: you can search, filter, and aggregate across millions of log entries programmatically. If James had this during the 2:47 AM incident, he could have searched for all orders with status=error in the last hour and seen exactly which customers were affected.
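As a rough illustration of what "machine-parseable" buys you, the sketch below filters JSON log lines using only the standard library. The log lines, the field names (`status`, `customer_id`), and the `find_events` helper are all hypothetical; a real deployment would more likely run this kind of query in a log aggregation tool.

```python
import json

# Hypothetical JSON log lines, one event per line, as a JSON renderer might emit them.
RAW_LOGS = """\
{"event": "order_processed", "order_id": "ord-7891", "customer_id": "cust-456", "status": "success"}
{"event": "order_failed", "order_id": "ord-7892", "customer_id": "cust-101", "status": "error"}
{"event": "order_processed", "order_id": "ord-7893", "customer_id": "cust-456", "status": "success"}
{"event": "order_failed", "order_id": "ord-7894", "customer_id": "cust-202", "status": "error"}
"""


def find_events(raw: str, **criteria) -> list[dict]:
    """Return parsed log entries whose fields match every key=value criterion."""
    entries = (json.loads(line) for line in raw.splitlines() if line.strip())
    return [e for e in entries if all(e.get(k) == v for k, v in criteria.items())]


# "Which customers were affected by errors?" becomes a one-line query.
failed = find_events(RAW_LOGS, status="error")
affected_customers = sorted({e["customer_id"] for e in failed})
print(affected_customers)  # ['cust-101', 'cust-202']
```

The same question asked of `Processing order...` lines has no answer, because the information was never recorded.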

Pillar 2: Metrics (How Much? How Fast?)

Metrics are numerical measurements over time. They tell you about system behavior in aggregate. "Logs tell you what happened to one order," Emma explained. "Metrics tell you what is happening to all orders."

from prometheus_client import Counter, Histogram, Gauge

# Count events - how many orders are we processing?
orders_total = Counter(
    "orders_processed_total",
    "Total orders processed",
    ["order_type", "status"],  # "domestic"/"international", "success"/"error"
)

More metric types: Histogram and Gauge

from prometheus_client import Histogram, Gauge

# Measure durations - how fast is shipping calculation?
shipping_duration = Histogram(
    "shipping_calculation_seconds",
    "Shipping rate calculation time in seconds",
    ["region"],  # "domestic", "international", "express"
)

# Track current state - how many orders are in flight right now?
orders_in_progress = Gauge(
    "orders_in_progress",
    "Currently processing orders",
)

Metrics answer questions like: "How many orders per second are we processing?" "What is the 95th percentile shipping calculation time?" "Is the error rate increasing?" During the 2:47 AM incident, metrics would have told James instantly: "Error rate spiked from 0.1% to 12% at 2:30 AM, affecting international orders only." Instead, he had no numbers at all.
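To make the aggregate view concrete, here is a minimal standard-library sketch that computes an error rate per order type and a 95th-percentile duration from raw samples. The sample data and the helper names `error_rate` and `p95` are invented for illustration; in production a metrics system such as Prometheus would do this aggregation for you.

```python
from statistics import quantiles

# Hypothetical per-request samples: (order_type, succeeded, duration_seconds)
samples = [
    ("domestic", True, 0.04), ("domestic", True, 0.05),
    ("domestic", True, 0.06), ("domestic", True, 0.05),
    ("international", True, 0.30), ("international", False, 3.80),
    ("international", False, 4.10), ("international", True, 0.35),
]


def error_rate(rows, order_type: str) -> float:
    """Fraction of requests of one order type that failed."""
    outcomes = [ok for kind, ok, _ in rows if kind == order_type]
    return sum(1 for ok in outcomes if not ok) / len(outcomes)


def p95(rows) -> float:
    """95th percentile of request duration across all samples."""
    durations = [d for _, _, d in rows]
    # quantiles(n=100) returns 99 cut points; index 94 is the 95th percentile.
    return quantiles(durations, n=100, method="inclusive")[94]


print(error_rate(samples, "domestic"))       # 0.0
print(error_rate(samples, "international"))  # 0.5
print(round(p95(samples), 2))
```

Splitting the error rate by order type is what turns "something is wrong" into "international orders only", which is the kind of statement the labels on a counter are meant to support.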

Pillar 3: Traces (Where Did Time Go?)

Traces follow a single request through the entire system, showing how time was spent across components. "This is the pillar that would have solved your 2:47 AM problem in minutes," Emma told James.

from opentelemetry import trace

tracer = trace.get_tracer("order-service")

async def process_order(order):
    with tracer.start_as_current_span("process_order") as span:
        span.set_attribute("order_id", order.id)
        span.set_attribute("customer_id", order.customer_id)
        # Each operation gets its own span for timing...
        # (see full implementation below)

Full tracing implementation

from opentelemetry import trace

tracer = trace.get_tracer("order-service")

async def process_order(order):
    with tracer.start_as_current_span("process_order") as span:
        span.set_attribute("order_id", order.id)
        span.set_attribute("customer_id", order.customer_id)

        # Span 1: Validate order details
        with tracer.start_as_current_span("validate_order"):
            validated = validate_order(order)

        # Span 2: Calculate shipping - the 2:47 AM bottleneck
        with tracer.start_as_current_span("calculate_shipping") as ship_span:
            shipping = await calculate_shipping(validated)
            ship_span.set_attribute("region", shipping.region)
            ship_span.set_attribute("rate", shipping.rate)

        # Span 3: Apply discount
        with tracer.start_as_current_span("apply_discount") as disc_span:
            total = apply_discount(validated, shipping)
            disc_span.set_attribute("discount_applied", total.discount_amount)

        # Span 4: Save to database
        with tracer.start_as_current_span("save_order"):
            await save_order(validated, total)

        return total

A trace from this code might reveal: "Order ord-7891 took 4.2 seconds total: 0.1s for validation, 3.8s waiting for calculate_shipping(), 0.2s for discount, 0.1s for database storage." Without traces, James only knew the total time. With traces, he would know exactly where the bottleneck was, and during the 2:47 AM incident, he would have seen that calculate_shipping() was timing out under concurrent load, while every other span completed in milliseconds.
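The per-span breakdown can be imitated with a few lines of standard-library Python. This is only a toy stand-in for a tracing library, not how OpenTelemetry works internally; the `span` context manager, the `spans` dictionary, and the sleep durations are assumptions made up for the example.

```python
import time
from contextlib import contextmanager

spans: dict[str, float] = {}  # span name -> elapsed seconds


@contextmanager
def span(name: str):
    """Record how long the enclosed block takes (a toy stand-in for a tracing span)."""
    start = time.perf_counter()
    try:
        yield
    finally:
        spans[name] = time.perf_counter() - start


def process_order() -> None:
    with span("validate_order"):
        time.sleep(0.001)
    with span("calculate_shipping"):
        time.sleep(0.1)  # simulated slow dependency
    with span("save_order"):
        time.sleep(0.001)


process_order()
slowest = max(spans, key=spans.get)
print(slowest)  # calculate_shipping
```

Knowing only the total time would say "the order was slow"; the per-span timings say which step to look at first.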

Why All Three Together

| Scenario | Logs Alone | Metrics Alone | Traces Alone | All Three |

|---|---|---|---|---|

| "Why is the system slow?" | Shows individual slow requests | Shows 95th percentile is high | Shows where time is spent | Full picture: which requests, how many, and exactly why |

| "Is something broken?" | Shows error messages | Shows error rate is 5% | Shows which service fails | Full picture: what errors, how widespread, and the exact failure path |

| "How much does this cost?" | Shows per-request token counts | Shows total token usage trend | Shows which operations consume tokens | Full picture: cost per user, per feature, trending over time |

This is a natural stopping point. If you need a break, bookmark this spot and return when you are ready. Everything above covers the core concept; everything below applies it through exercises and practice.

Python Observability Toolkit

This section shows production-level Python code for structured logging, correlation IDs, and configuration. These are professional patterns you will implement in hands-on chapters. For now, read for the concepts: why JSON logs beat print statements, why log levels matter, and why every request needs a unique ID to trace its journey through the system.

After understanding the three pillars conceptually, James was ready to implement them. Emma showed him the tools he would use for his order management system.

Structured Logging with structlog

Full structlog configuration

import structlog
import logging
import sys

def configure_logging():
    """Configure structlog for production JSON output."""
    structlog.configure(
        processors=[
            structlog.contextvars.merge_contextvars,
            structlog.processors.add_log_level,
            structlog.processors.TimeStamper(fmt="iso"),
            structlog.processors.StackInfoRenderer(),
            structlog.processors.JSONRenderer(),
        ],
        wrapper_class=structlog.make_filtering_bound_logger(logging.INFO),
        logger_factory=structlog.PrintLoggerFactory(file=sys.stdout),
    )

configure_logging()
logger = structlog.get_logger()

This produces machine-parseable JSON output:

{
    "event": "request_processing_started",
    "request_id": "req-abc-123",
    "user_id": "user-456",
    "level": "info",
    "timestamp": "2025-06-15T14:32:15.123Z"
}

Log Levels: Signal vs. Noise

Choosing the right log level determines whether logs are useful or overwhelming. Emma taught James a simple rule: "Each level answers a different question for a different audience."

| Level | Purpose | Example | Production Visibility |
|---|---|---|---|
| DEBUG | Development details | Variable values, loop iterations | Off in production |
| INFO | Normal operations | Request started, task completed | Always visible |
| WARNING | Unexpected but handled | Retry succeeded, fallback used | Always visible |
| ERROR | Failures requiring attention | API call failed, invalid input | Triggers alert |
| CRITICAL | System-level failures | Database down, out of memory | Wakes someone up |

# Each level serves a distinct purpose in James's order system

logger.debug("discount_calculation_details", rate=0.15, subtotal=127.50)

logger.info("order_processed", order_id="ord-7891", total=108.38, duration_ms=45)

logger.warning("shipping_rate_slow", order_id="ord-7892", duration_ms=2800, threshold_ms=1000)

logger.error("shipping_calculation_failed", order_id="ord-7893", error="timeout", retry_count=3)

logger.critical("database_connection_lost", host="orders-db.example.com")

Correlation IDs: Connecting the Dots

During the 2:47 AM incident, James had seen ERROR: something went wrong in his logs but could not connect it to a specific order or customer. A correlation ID ties all log entries for a single request together, solving exactly this problem:

import uuid
import structlog

def create_request_context(request):
    """Bind a correlation ID to all logs for this request."""
    correlation_id = str(uuid.uuid4())
    structlog.contextvars.clear_contextvars()
    structlog.contextvars.bind_contextvars(
        correlation_id=correlation_id,
        user_id=request.user_id,
    )
    return correlation_id

Now every log entry in that order's lifecycle shares the same correlation_id. When customer service reported the 2:47 AM issue, James could have searched for the correlation ID and seen the complete story, from order submission through discount calculation, shipping rate lookup, and the exact point of failure.
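The same idea can be sketched with only the standard library, which may help show what the context binding is doing. This version uses `contextvars` and a `logging.Filter` rather than structlog; the logger name `orders-sketch`, the `handle_request` function, and the message format are assumptions for the example.

```python
import contextvars
import io
import logging
import uuid

# Context variable holding the current request's correlation ID.
correlation_id_var = contextvars.ContextVar("correlation_id", default="-")


class CorrelationFilter(logging.Filter):
    """Copy the current correlation ID onto every log record."""

    def filter(self, record: logging.LogRecord) -> bool:
        record.correlation_id = correlation_id_var.get()
        return True


stream = io.StringIO()  # captured in memory so the output can be inspected
handler = logging.StreamHandler(stream)
handler.setFormatter(logging.Formatter("%(correlation_id)s %(message)s"))
handler.addFilter(CorrelationFilter())

log = logging.getLogger("orders-sketch")
log.setLevel(logging.INFO)
log.addHandler(handler)
log.propagate = False


def handle_request(order_id: str) -> str:
    """Process one hypothetical order; every log line carries the same ID."""
    cid = str(uuid.uuid4())
    token = correlation_id_var.set(cid)
    try:
        log.info("order_received order_id=%s", order_id)
        log.info("shipping_calculated order_id=%s", order_id)
    finally:
        correlation_id_var.reset(token)  # do not leak the ID into the next request
    return cid


cid = handle_request("ord-7891")
lines = stream.getvalue().splitlines()
print(all(line.startswith(cid) for line in lines))  # True
```

None of the individual log calls mention the correlation ID; it is attached once at the start of the request and travels with the context.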

Observability for AI Agents

As James integrated more AI-generated code into his order management system, he discovered that AI agents introduce observability challenges that traditional web applications do not face. The AI that generated his shipping calculator and discount logic had its own failure modes, and monitoring them required new dimensions.

The code blocks below show how James tracks AI agent behavior in production. You do not need to understand the Python or Prometheus syntax. Focus on the four dimensions being monitored: token usage (cost), response quality (correctness), error rates (failures), and cost per operation (budget). Each dimension catches a different kind of AI-specific problem that traditional monitoring misses. You will implement these patterns yourself in hands-on chapters.

Dimension 1: Token Usage Tracking

When James used Claude Code to generate his shipping calculator, each generation consumed tokens, and costs varied dramatically depending on how he prompted. Tokens are both your cost driver and your quality signal.

import structlog

logger = structlog.get_logger()

class TokenTracker:
    """Track token usage per request, per user, per model."""

    def log_usage(self, request_id: str, response):
        usage = response.usage
        logger.info(
            "token_usage",
            request_id=request_id,
            input_tokens=usage.input_tokens,
            output_tokens=usage.output_tokens,
            total_tokens=usage.input_tokens + usage.output_tokens,
            model=response.model,
            estimated_cost=self._estimate_cost(usage, response.model),
        )

    def _estimate_cost(self, usage, model: str) -> float:
        """Estimate cost based on model pricing."""
        # Rates change frequently - load from config or environment
        # Values here are dollars per 1,000 tokens (see the division below)
        rates = {
            "gpt-4o": {"input": 0.0025, "output": 0.01},
            "claude-sonnet-4": {"input": 0.003, "output": 0.015},
        }
        # Unknown models fall back to a deliberately high default rate
        rate = rates.get(model, {"input": 0.01, "output": 0.03})
        return (
            (usage.input_tokens / 1000) * rate["input"]
            + (usage.output_tokens / 1000) * rate["output"]
        )

Dimension 2: Response Quality Metrics

James noticed that sometimes Claude Code generated a shipping function that compiled and passed type checks but produced subtly wrong rates for edge cases. Unlike traditional APIs, AI responses can be "correct" structurally but poor in quality.

from prometheus_client import Histogram, Counter

# Track response characteristics that correlate with quality
response_length = Histogram(
    "agent_response_length_tokens",
    "Length of agent responses in tokens",
    buckets=[50, 100, 200, 500, 1000, 2000, 5000],
)

# Track when responses need human correction
corrections_total = Counter(
    "agent_corrections_total",
    "Times a user corrected or rejected agent output",
    ["correction_type"],  # "factual", "tone", "incomplete", "wrong_format"
)

# Track conversation depth (more turns may indicate confusion)
conversation_turns = Histogram(
    "agent_conversation_turns",
    "Number of turns before task completion",
    buckets=[1, 2, 3, 5, 8, 13, 21],
)

Dimension 3: Error Rate Monitoring

AI agents fail differently from traditional software: they can fail silently by producing plausible but wrong output. A shipping function that returns $0.00 for every international order does not throw an exception. It looks correct structurally. Only observability catches it.

from prometheus_client import Counter

# Explicit failures (easy to catch)
explicit_errors = Counter(
    "agent_errors_total",
    "Explicit agent failures",
    ["error_type"],  # "timeout", "rate_limit", "context_overflow", "invalid_response"
)

# Implicit failures (harder - detected through quality signals)
quality_flags = Counter(
    "agent_quality_flags_total",
    "Responses flagged for quality concerns",
    ["flag_type"],  # "too_short", "repetitive", "off_topic", "hallucination_risk"
)

To catch silent failures, James learned two techniques: baseline comparison (alert when today's output distribution deviates from last week's; if average shipping cost suddenly drops 90%, something is wrong even though no errors fired) and shadow testing (run the new AI-generated function alongside the old one on real inputs, flag any divergence for human review before cutting over).
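Both techniques fit in a few lines. The sketch below is one possible shape, not a prescribed implementation: the function names, the 50% deviation threshold, the tolerance, and the two toy rate functions are all assumptions chosen to make the example small.

```python
from statistics import mean


def deviates_from_baseline(baseline, current, max_relative_change=0.5) -> bool:
    """True when the current mean moves more than the allowed fraction from the baseline mean.

    Assumes the baseline mean is non-zero.
    """
    base = mean(baseline)
    return abs(mean(current) - base) / base > max_relative_change


def shadow_compare(old_fn, new_fn, inputs, tolerance=0.01) -> list:
    """Run both implementations on the same inputs; return the inputs where they disagree."""
    return [x for x in inputs if abs(old_fn(x) - new_fn(x)) > tolerance]


# Hypothetical shipping functions: the new one silently returns 0 for heavy parcels.
def old_rate(weight_kg: float) -> float:
    return 5.0 + 2.0 * weight_kg


def new_rate(weight_kg: float) -> float:
    return 0.0 if weight_kg > 10 else 5.0 + 2.0 * weight_kg


# Baseline comparison: average shipping cost dropped about 90%, yet nothing raised.
last_week = [24.0, 26.5, 25.0, 23.5]
today = [2.4, 2.6, 2.5, 2.3]
print(deviates_from_baseline(last_week, today))  # True

# Shadow testing: divergent inputs are flagged for human review before cutover.
print(shadow_compare(old_rate, new_rate, [1, 5, 12, 20]))  # [12, 20]
```

Neither check needs to know what the correct rate is. Baseline comparison only needs history, and shadow testing only needs a trusted previous implementation.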

Dimension 4: Cost Per Operation

James's traditional order processing had fixed infrastructure costs: the server cost the same whether it handled ten orders or ten thousand. AI agents are different: they have variable per-request costs that scale with usage.

import structlog
from prometheus_client import Histogram

logger = structlog.get_logger()

cost_per_operation = Histogram(
    "agent_cost_per_operation_dollars",
    "Cost per agent operation in dollars",
    ["operation_type"],  # "chat", "code_review", "summarize", "translate"
    buckets=[0.001, 0.005, 0.01, 0.05, 0.1, 0.5, 1.0],
)

def track_operation_cost(operation_type: str, total_tokens: int, model: str):
    """Track the cost of each operation for budget monitoring."""
    # estimate_cost(total_tokens, model) is not defined in this snippet;
    # it is assumed to be a pricing helper provided elsewhere.
    cost = estimate_cost(total_tokens, model)
    cost_per_operation.labels(operation_type=operation_type).observe(cost)

    if cost > 0.10:  # Alert threshold
        logger.warning(
            "high_cost_operation",
            operation_type=operation_type,
            cost_dollars=cost,
            total_tokens=total_tokens,
            model=model,
        )

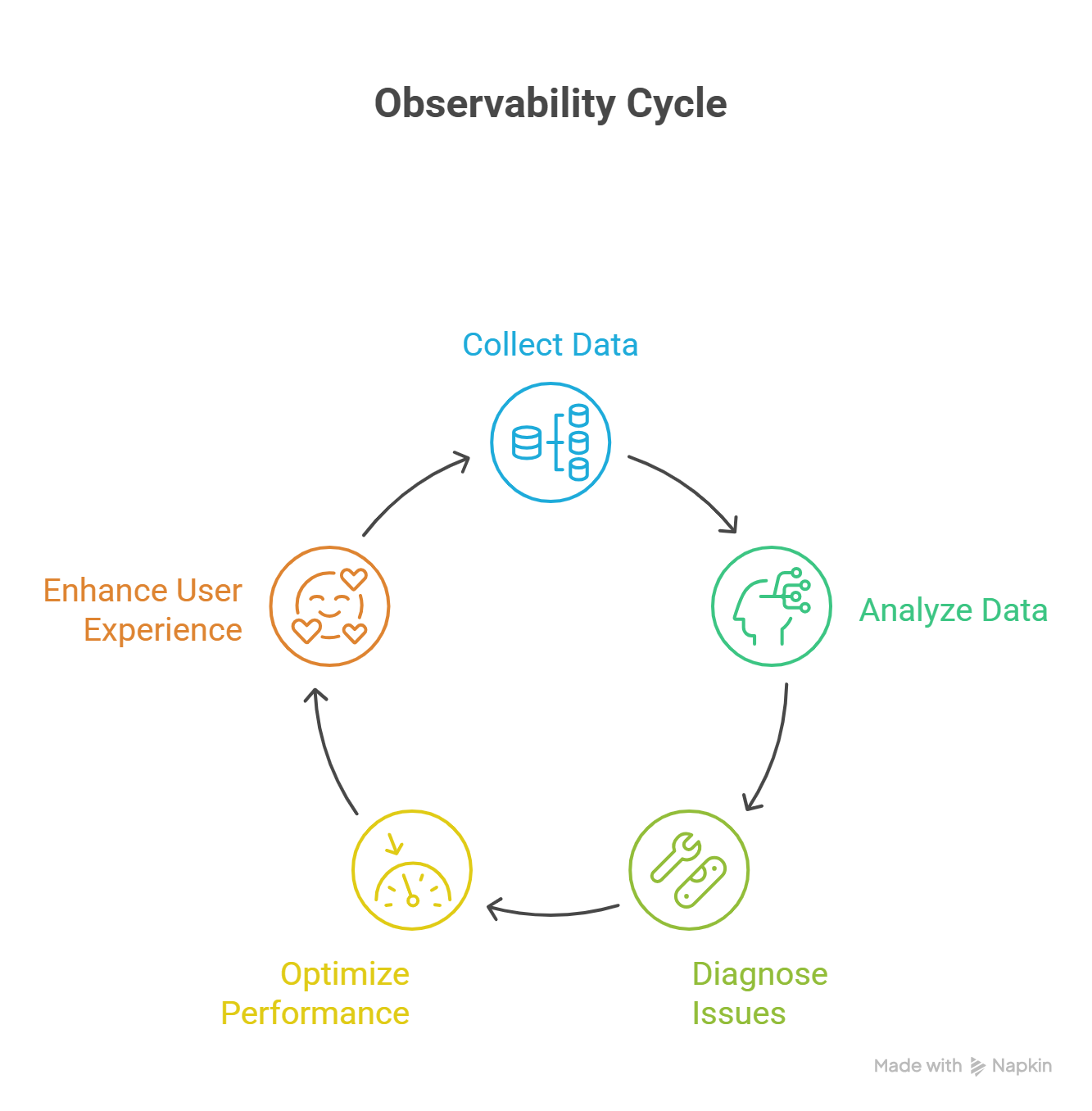

The Feedback Loop: Observe, Insight, Improve, Verify

Observability is not just about watching; it drives a continuous improvement cycle. James experienced this firsthand after the 2:47 AM incident:

1. OBSERVE: Structured logs reveal shipping calculation timeouts under load

|

2. INSIGHT: "International shipping rates fail when concurrent requests exceed 50"

|

3. IMPROVE: Add connection pooling and request queuing to shipping API calls

|

4. VERIFY: Write a load test (Axiom VII) that simulates 100 concurrent orders

|

5. DEPLOY: CI pipeline (Axiom IX) validates the fix passes under load

|

6. OBSERVE: Monitor production to confirm shipping timeouts have stopped

|

[Repeat]

This is where observability and testing become a unified system. The 2:47 AM incident discovered a failure mode James's test suite never anticipated. That discovery became a new load test. The load test now prevents the same regression. And observability confirms, every night, that shipping calculations stay fast under real traffic. The verification system grows stronger with each cycle: observability feeds testing, testing feeds confidence, confidence feeds deployment, and observability watches the result.
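A load test of the kind described in step 4 could look roughly like the following. It is a simulation under stated assumptions, not James's actual test: the connection pool is modeled as an `asyncio.Semaphore`, and `POOL_SIZE`, `QUERY_SECONDS`, and the timeouts are invented numbers.

```python
import asyncio

POOL_SIZE = 5          # hypothetical connection pool size
QUERY_SECONDS = 0.01   # simulated time each order holds a connection


async def calculate_shipping(pool: asyncio.Semaphore, timeout: float) -> bool:
    """Return True if a connection was acquired within the timeout, False otherwise."""
    try:
        await asyncio.wait_for(pool.acquire(), timeout)
    except asyncio.TimeoutError:
        return False
    try:
        await asyncio.sleep(QUERY_SECONDS)  # pretend to query shipping rates
        return True
    finally:
        pool.release()


async def run_load(concurrent_orders: int, timeout: float) -> int:
    """Fire the orders concurrently and count how many timed out waiting for the pool."""
    pool = asyncio.Semaphore(POOL_SIZE)
    results = await asyncio.gather(
        *(calculate_shipping(pool, timeout) for _ in range(concurrent_orders))
    )
    return results.count(False)


# One request per connection: nothing waits, nothing fails.
light = asyncio.run(run_load(concurrent_orders=5, timeout=0.5))
# 100 concurrent orders against 5 connections: most time out in the queue.
heavy = asyncio.run(run_load(concurrent_orders=100, timeout=0.02))
print(light, heavy > 0)
```

The logic under test is identical in both runs; only the concurrency changes. That is the property a one-request-at-a-time unit test cannot exercise.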

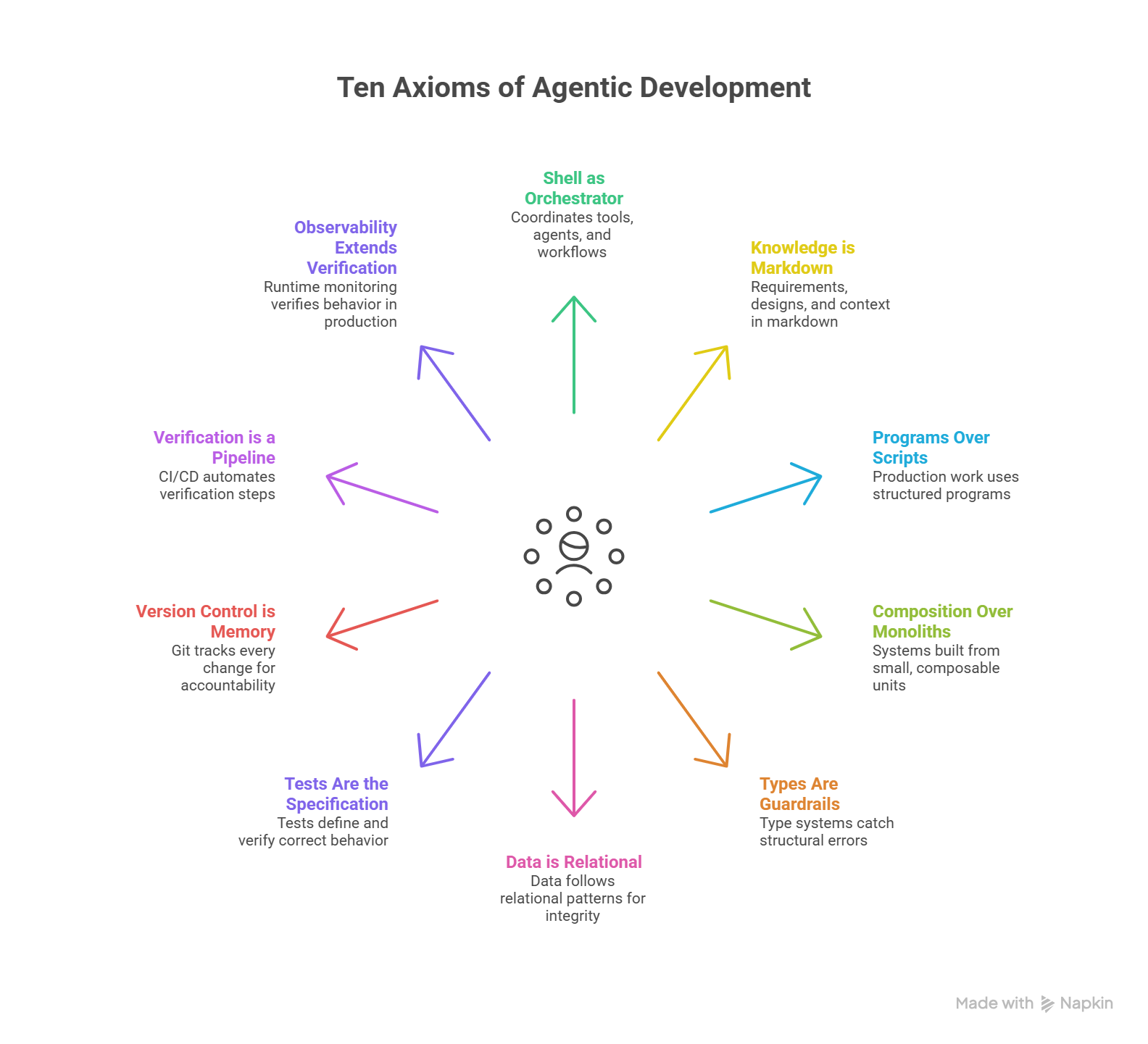

The Complete System: All Ten Axioms

This is the final axiom. Together, the ten axioms form a coherent system for agentic software development, and James has lived every one of them.

Trace James's order management system through the complete stack, every axiom he learned in this chapter:

- Shell orchestrates (I): James uses Claude Code to coordinate development: generating code, running tests, deploying changes

- Spec in markdown (II): His order management requirements live in spec.md, readable by both humans and AI

- Proper program (III): His shipping calculator is a structured Python program with modules, not a loose script

- Composed from units (IV): calculate_shipping(), apply_discount(), and validate_order() are independent, composable functions

- Types enforce contracts (V): OrderRequest, ShippingRate, and DiscountResult are typed dataclasses, and the type checker catches structural errors before runtime

- Data stored relationally (VI): Orders, customers, and shipping rates live in properly normalized database tables with foreign keys

- Tests specify behavior (VII): Fifty-three TDG specifications define what "correct" means for every discount rule and shipping calculation

- Git remembers everything (VIII): Every change to the shipping logic is tracked, reversible, and attributable. James can show exactly what changed and why

- Pipeline verifies (IX): CI runs formatting, linting, type checking, all fifty-three tests, and a security scan on every push

- Production is observed (X): Structured logs, metrics, and traces confirm the order system works for real customers under real load, and alert James when it does not

No single axiom is sufficient. Without observability, James's tests pass but he is blind to the 2:47 AM shipping failure. Without tests, his observability has no baseline for "correct." Without types, his discount calculations silently corrupt when someone passes a string where a float belongs. The axioms are not a menu to choose from; they are a system that works together, and James learned that lesson one axiom at a time.

Anti-Patterns

Picture the unobservable system. The code is clean, the tests pass, the pipeline is green, and the system is deployed. Then something goes wrong. Not catastrophically, but subtly. Response times creep up. Error rates tick from 0.2% to 0.8%. A handful of international orders fail silently every hour. Nobody notices because nothing alerts, nothing dashboards, nothing trends.

The developers check the logs and find print("Processing order...") repeated ten thousand times with no timestamps, no order IDs, no error context. They check metrics and find none. They check traces and find none. The system is a black box running in production, and the only signal that anything is wrong comes from customer service tickets trickling in over days.

This was James's order management system at 2:47 AM, and it is every system that treats observability as optional.

| Anti-Pattern | Why It Fails | The Fix |

|---|---|---|

| Print statements in production | Unstructured, no levels, no context, lost when process restarts (exactly what James had) | Use structlog with JSON output and persistent log aggregation |

| No error alerting | "We'll notice eventually" means customers notice first (James learned this at 2:47 AM) | Define alert thresholds; wake someone for CRITICAL, notify for ERROR |

| Logging everything at DEBUG | Noise overwhelms signal; storage costs explode | Use appropriate log levels; DEBUG off in production |

| No correlation between requests | Impossible to trace a single order's journey through the system | Add correlation IDs; bind context at request start |

| Observability as afterthought | "Add monitoring later" means after the first production incident (James added it because of his incident) | Design observability into the system from the start, like testing |

| Metrics without baselines | "Is 200ms shipping calculation time good or bad?" You cannot answer without history | Establish baselines first; alert on deviation, not absolute values |

| Monitoring only happy paths | You only track successful orders; failed shipping calculations are invisible | Instrument error paths with the same rigor as success paths |

The Log Avalanche

There is a trap that catches developers right after they learn observability, and Emma warned James about it the same week he instrumented his order system: The Log Avalanche, logging everything at maximum detail because "more data is always better."

James's first instinct after the 2:47 AM incident was to add DEBUG-level logging to every function. Within a day, his order system was generating 2GB of logs per hour. The storage costs spiked. The log aggregation system slowed to a crawl. And when he actually needed to find a specific error, it was buried under millions of irrelevant entries. The signal was drowned out by noise he had created while trying to see everything.

"Observability is not about capturing everything," Emma told him. "It is about capturing the right things at the right level."

She had him write this on a sticky note:

| Level | When to Use | Example |

|---|---|---|

| DEBUG | Local development only; never in production | Variable values inside loops |

| INFO | Normal operations worth recording | order_created, payment_processed |

| WARNING | Handled problems that may need attention | Retry succeeded on third attempt |

| ERROR | Failures requiring investigation | Payment gateway returned 500 |

| CRITICAL | System-level emergencies | Database connection pool exhausted |

"If everything is important, nothing is."

The Log Avalanche extends beyond volume. Production logs may contain customer data (order details, shipping addresses, payment references). James learned to apply data minimization: log what you need for debugging and monitoring, never personally identifiable information. Apply retention policies: not every log entry needs to live forever. And remember that observability infrastructure itself needs security: access to production logs should be as controlled as access to the production database.
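Data minimization can be enforced in code rather than left to discipline. The sketch below redacts sensitive fields from a log event before it is written. The field names in `SENSITIVE_KEYS` and the `[REDACTED]` placeholder are assumptions; the three-argument signature follows the shape structlog uses for processors, but the function is plain Python and is called directly here.

```python
SENSITIVE_KEYS = {"email", "shipping_address", "card_number"}  # hypothetical field names


def redact_sensitive(logger, method_name, event_dict):
    """Replace sensitive values before the event is rendered.

    Returns a new dict so the caller's original data is left untouched.
    """
    return {
        key: "[REDACTED]" if key in SENSITIVE_KEYS else value
        for key, value in event_dict.items()
    }


event = {
    "event": "order_created",
    "order_id": "ord-7891",
    "email": "customer@example.com",
    "shipping_address": "221B Baker Street",
}
safe = redact_sensitive(None, "info", event)
print(safe["order_id"], safe["email"])  # ord-7891 [REDACTED]
```

Keeping identifiers such as `order_id` while dropping personal details preserves the ability to debug a specific order without storing who placed it.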

Try With AI

Prompt 1: Design a Feedback System for an Event You Hosted

You organized a school event (a fundraiser, a talent show, a club meeting).

You planned everything carefully: venue booked, schedule set, volunteers

assigned, supplies purchased. Everything on your checklist was done.

But AFTER the event, you got mixed feedback:

- Some attendees said it was too crowded

- The food ran out halfway through

- Two activities overlapped and people had to choose

- A few people said it was the best event they'd attended

Your planning notes say: "Event completed."

Design a feedback and monitoring system so that NEXT time, you can answer:

1. How many people actually attended vs how many you planned for?

2. Which activities were most popular and which were empty?

3. When exactly did problems happen (food running out, overcrowding)?

4. What is the difference between "3 people complained" and "half the

attendees had a bad experience"?

5. What would you record DURING the event (not just before or after)

to catch problems while you can still fix them?

For each piece of information, explain: could you have caught this

problem with better PLANNING (pre-event checks), or did you need

MONITORING (watching what happens during the event)?

What you're learning: The difference between pre-event verification (your planning checklist) and real-time observability (watching what actually happens). Some problems (like food running out) could have been caught with better planning (count RSVPs, order 20% extra). Others (like unexpected crowding at one activity) can only be caught by watching the event unfold. This maps directly to James's experience: his CI pipeline (planning checks) caught code errors, but only production observability (real-time monitoring) could catch the 2:47 AM shipping failure under actual load.

Prompt 2: Build a Monitoring Plan for a Study Routine

A student has a study plan: study 2 hours per day, review notes weekly,

do practice problems before each test. The plan looks perfect on paper.

But their grades are not improving.

The student says: "I followed my plan! I studied every day!"

Help me design a monitoring system that answers:

- Did they actually study for 2 hours, or did they sit at a desk for 2 hours while checking their phone?

- Which subjects did they spend time on? Are they over-studying easy topics and under-studying hard ones?

- Are the practice problems they're doing similar to what appears on tests?

- Is their understanding actually improving, or are they re-reading without retaining?

For the monitoring system, define:

1. What to RECORD daily (the equivalent of logs: what happened?)

2. What to MEASURE weekly (the equivalent of metrics: how much? how well?)

3. What to TRACE monthly (the equivalent of traces: where does time go?)

Then explain: why is "I studied every day" as useless as James's "Processing order..." log message? What specific information is missing?

What you're learning: The three pillars of observability applied to a personal system. "I studied every day" is the study equivalent of print("Processing order..."); it confirms the process ran but tells you nothing about quality, effectiveness, or where time was actually spent. Logs (daily records) tell you what happened in each session. Metrics (weekly measurements) tell you whether understanding is improving. Traces (monthly reviews) show where your time went across subjects. No single pillar answers all the questions, just as James needed logs AND metrics AND traces to diagnose his 2:47 AM incident.
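The same three pillars can be made concrete in code. The following is a minimal sketch using only the Python standard library, not James's actual implementation. `process_order`, `log_event`, the field names, the placeholder shipping rates, and the in-memory `metrics` counter are all hypothetical stand-ins for a real logging, metrics, and tracing stack.

```python
import json
import time
import uuid
from collections import Counter

metrics = Counter()  # hypothetical in-memory metrics store


def log_event(event: str, **fields) -> str:
    """Emit one structured log line (JSON) with a timestamp."""
    line = json.dumps({"ts": time.time(), "event": event, **fields})
    print(line)
    return line


def process_order(order_id: str, country: str) -> dict:
    """Process one order while recording a log, a metric, and a trace."""
    request_id = str(uuid.uuid4())
    spans = {}  # trace: seconds spent in each step of this request

    start = time.perf_counter()
    rate = 25.0 if country != "US" else 5.0  # placeholder shipping logic
    spans["calculate_shipping"] = time.perf_counter() - start

    metrics["orders_processed"] += 1  # metric: how many?
    log_event(  # log: what happened to THIS order?
        "order_processed",
        request_id=request_id,
        order_id=order_id,
        country=country,
        shipping_rate=rate,
        spans=spans,
    )
    return {"request_id": request_id, "shipping_rate": rate, "spans": spans}


result = process_order("A-1001", "DE")
```

Unlike print("Processing order..."), each line now says which order, which request, when, and how long each step took, while the counter accumulates the totals that a single log line cannot show.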

Prompt 3: Trace a Real-Life Project Through All Ten Axioms

Pick a project you know well: organizing an event, running a club, managing a group assignment, or planning a trip. Trace it through all ten axioms using plain language:

1. Shell as Orchestrator: Who coordinates the work? What is their ONLY job?

2. Knowledge is Markdown: Where are the plans and decisions written down?

3. Programs Over Scripts: Is there a structured plan or just a loose idea?

4. Composition Over Monoliths: Is the work broken into independent tasks?

5. Types Are Guardrails: What labels or categories prevent mix-ups?

6. Data is Relational: Is information stored in one place or duplicated?

7. Tests Are the Specification: How do you define "done" before starting?

8. Version Control is Memory: How do you track what changed and why?

9. Verification is a Pipeline: What checks happen before you "ship"?

10. Observability Extends Verification: How do you know it ACTUALLY worked after delivery?

For each axiom, give a specific example from YOUR project.

Then identify: which axiom is your project WEAKEST on? What would go wrong because of that gap?

What you're learning: Systems thinking. The ten axioms are not separate rules but an interconnected system. When you trace your own project through all ten, you discover gaps you never noticed. Maybe you planned well (Axioms I-IV) but never defined "done" (Axiom VII). Maybe you tracked changes (Axiom VIII) but never monitored whether the result actually worked (Axiom X). The axioms work together because real projects are systems, and systems need complete coverage to be reliable. This is the same insight James reached: each axiom covers a gap that the others leave open.

PRIMM-AI+ Practice: Observability Extends Verification

Predict [AI-FREE]

Enter Plan Mode in Claude Code (Shift+Tab). A patient has knee surgery. Every pre-operation check passed: blood work normal, imaging confirmed the problem, anesthesia plan approved, surgical team briefed. The surgery itself goes perfectly: procedure completed on schedule, no complications in the operating room.

The patient is discharged. The medical record says: "Surgery complete."

A week later, the patient calls with severe swelling and pain. The doctor opens the record.

Predict:

- Can the doctor figure out WHAT went wrong from "Surgery complete"?

- Can they tell WHETHER the swelling started on day 2 or day 6?

- Can they tell WHICH part of recovery failed: was it the physical therapy, the medication, an infection, or something else?

- What specific information is missing from "Surgery complete" that would help diagnose the problem?

Write your answers. Rate your confidence from 1 to 5.

Run

In Claude Code, type: "Why is 'Surgery complete' a useless medical record when a patient calls with complications a week later? What should a doctor's post-surgery monitoring plan include so that when something goes wrong during recovery, they can trace exactly what happened? Give me at least 5 specific things to track."

Compare. Did the AI suggest the same missing information you identified?

Answer Key: What to Look For

The AI should identify that "Surgery complete" is useless because it records zero recovery information. A useful post-surgery monitoring plan should track at least these 5 things:

- Vital signs over time: Temperature, blood pressure, heart rate, recorded daily, not just at discharge. A fever on day 3 means something very different from a fever on day 7. Without a timeline, you cannot tell when the problem started.

- Pain levels and location: Is pain increasing or decreasing? Is it at the surgical site or somewhere new? "Patient reports pain" is useless without severity (1-10), location, and trend over days.

- Physical recovery milestones: Can the patient bend the knee 30 degrees by day 3? 60 degrees by day 7? Without expected milestones, you cannot tell if recovery is on track or falling behind.

- Medication compliance: Did the patient take antibiotics on schedule? Did they stop pain medication early? Non-compliance is a common cause of complications that "Surgery complete" would never reveal.

- Warning sign thresholds: What level of swelling is normal vs. concerning? What temperature triggers a call to the doctor? Without defined thresholds, patients do not know when to report problems, and doctors cannot distinguish routine recovery from emerging complications.

If your prediction identified at least 3 of these categories, your instinct for post-delivery monitoring is strong. Notice how "Surgery complete" is exactly as useless as James's print("Processing order..."); both confirm the process ran but provide zero information when something goes wrong afterward.
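Two items in the answer key, a timeline of readings and a defined warning threshold, combine into a single check: when did a reading first cross the line? Below is a small Python sketch of that idea. The readings and the threshold are made up purely for illustration (they are not medical guidance), and `first_breach` is a hypothetical helper.

```python
from typing import Optional


def first_breach(readings: list[tuple[int, float]], threshold: float) -> Optional[int]:
    """Return the first day whose reading exceeds the threshold, or None."""
    for day, value in sorted(readings):
        if value > threshold:
            return day
    return None


# Hypothetical daily temperature readings as (day, degrees Celsius).
temperatures = [(1, 36.8), (2, 37.0), (3, 37.4), (4, 38.3), (5, 38.9)]
FEVER_THRESHOLD_C = 38.0  # illustrative value only

print(first_breach(temperatures, FEVER_THRESHOLD_C))      # 4
print(first_breach(temperatures[:3], FEVER_THRESHOLD_C))  # None
```

A record that says only "Surgery complete" has no readings to pass to such a function, which is the same position James was in with unstructured print output.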

Investigate

Write in your own words the difference between "checking before surgery" (verification: pre-op tests, imaging, team briefing) and "monitoring after surgery" (observability: tracking vitals, pain, recovery milestones). Why do you need both? Why is passing all the pre-op checks not enough?

Now connect this to James's story. Every stage of his CI pipeline passed: formatting, linting, types, tests, security. But at 2:47 AM, his shipping calculator failed under real production load with real customers. His checks verified that the code was correct under the conditions they tested. They could not verify that the code was resilient under conditions no test anticipated. This is exactly like the surgery passing every pre-op check but the patient developing complications at home under real-world recovery conditions that the operating room never simulated. Both James and the surgeon did everything right before delivery, and both were blind to what happened after.

Apply the Error Taxonomy: a surgery that passes every pre-op check, followed by complications at home, is a data/edge-case error. The procedure was verified under controlled conditions (the operating room), but not under real-world recovery conditions (the patient's home, their activity level, their medication compliance). The checks verified the procedure; observability would have caught the recovery failure early.

Modify

The doctor now tracks all five recovery dimensions (vitals, pain, milestones, medication, warning thresholds). But the patient reports "I feel fine, everything is great" at every check-in, and still shows up a week later with severe swelling.

What went wrong? The monitoring plan relied entirely on self-reported data: the patient's own assessment. Design a modification: what objective signals could the doctor monitor that do not depend on the patient's honesty or self-awareness? How would the doctor distinguish between "the patient is genuinely recovering well" and "the patient is underreporting problems"? Think about the difference between asking someone "how do you feel?" and measuring something that cannot be faked.

Make [Mastery Gate: Capstone]

Pick any process from your life that you have "verified" (checked beforehand) but never "observed" (monitored after completion). Examples: a study routine (you followed the plan but did not track whether you actually retained the material), a budget (you planned spending but did not track actual expenses), a workout plan (you did the exercises but did not measure results).

Write a monitoring plan:

- What would you watch AFTER the process runs? (What signals tell you whether it is actually working?)

- What signals tell you something is wrong even when all the upfront checks passed?

- How would you tell the difference between "one person had a bad experience" and "everyone has this problem"?

This monitoring plan is your mastery gate, and it is the capstone for all ten axioms.

This completes the ladder. Rung 1 (Predict) through Rung 4 (Pipeline) checked things BEFORE delivery. Rung 5 (Observability) watches what happens AFTER. You need both halves: pre-delivery verification catches the errors you can anticipate; post-delivery observability catches the ones you cannot.
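One way to connect the two halves is to turn what observability reveals into a new pre-delivery check. Suppose production monitoring showed requests failing when fifty of them competed for a small database connection pool. A load test along the following lines could then reproduce that condition before the next release. This is a sketch under stated assumptions, not the chapter's actual test: `ConnectionPool`, `calculate_shipping`, the pool size, the timeout, and the placeholder rate are hypothetical, and the pool is simulated with a semaphore rather than a real database.

```python
import threading
import time
from concurrent.futures import ThreadPoolExecutor


class ConnectionPool:
    """Hypothetical pool: at most `size` connections in use at once."""

    def __init__(self, size: int, timeout: float):
        self._slots = threading.Semaphore(size)
        self._timeout = timeout

    def run(self, work):
        # Wait for a free connection; fail loudly instead of hanging forever.
        if not self._slots.acquire(timeout=self._timeout):
            raise TimeoutError("no database connection available")
        try:
            return work()
        finally:
            self._slots.release()


def calculate_shipping(pool: ConnectionPool, order_id: int) -> float:
    """Placeholder for a shipping lookup that needs a database connection."""
    def query():
        time.sleep(0.01)  # simulate a short database round trip
        return 25.0
    return pool.run(query)


def load_test(pool: ConnectionPool, concurrent_requests: int = 50) -> int:
    """Fire many requests at once and return how many failed."""
    def attempt(order_id: int) -> bool:
        try:
            calculate_shipping(pool, order_id)
            return True
        except TimeoutError:
            return False

    with ThreadPoolExecutor(max_workers=concurrent_requests) as executor:
        results = list(executor.map(attempt, range(concurrent_requests)))
    return results.count(False)


failures = load_test(ConnectionPool(size=5, timeout=2.0))
print(f"failed requests: {failures}")
```

Added to the test suite with an assertion that `failures` is zero, a check like this turns one production incident into a standing specification, while observability keeps watching for the failures no one has thought to test yet.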

James looked at his structured logs flowing through the dashboard. "Three months ago I was staring at print("Processing order...") at 2:47 AM with no idea which orders were failing."

"And now?"

"Logs tell me what happened to a specific order. Metrics tell me how many are affected and how fast things are degrading. Traces show me where in the request the time went." He paused. "It's like the difference between a warehouse manager who says 'something's wrong' and one who can tell you exactly which shelf, which shipment, which hour."

Emma considered this. "I'm honestly not sure how much AI agent monitoring will change in the next year or two. The four dimensions we covered (tool usage, reasoning quality, cost tracking, and output verification) feel right for now. But the field is moving fast, and I wouldn't bet on the specifics staying stable."

"The feedback loop is the part that sold me, though. The 2:47 AM incident became a load test. The load test prevents the regression. Observability confirms the fix holds every night. Each cycle makes the system stronger."

"And that's the point of all ten axioms together," Emma said. "Shell, markdown, programs, composition, types, relational data, tests, version control, pipelines, observability. They're not a checklist. They're a system. Skip one, and a gap opens."

James nodded slowly. "So what's next? We know what the system looks like. When do we actually build it?"

"Next chapter. You install and configure the tools that enforce these axioms on your own machine. The development environment."

The Complete Journey

James closed his laptop and looked at the order management system dashboard, green across every metric, structured logs flowing cleanly, traces showing sub-second response times even during the evening traffic peak. Three months ago, he had stared at print("Processing order...") in a panic at 2:47 AM, unable to tell which orders were failing or why.

The distance between then and now was ten axioms.

He had started with a shell that orchestrated his tools instead of manual copy-paste between windows. He had captured his requirements in markdown that both he and Claude Code could read. He had structured his discount calculator as a proper program with modules and imports, not a loose script. He had composed his system from small, focused functions (calculate_shipping(), apply_discount(), validate_order()), each doing one thing well. He had wrapped those functions in types that caught structural errors before they reached production. He had stored his orders and customers in properly normalized tables with foreign keys enforcing integrity.

Then came the verification stack. Test-Driven Generation gave him fifty-three specifications that defined "correct" and caught the $12,000 discount bug before a single customer saw it. Git gave him memory: every change tracked, reversible, attributable, with commit messages that explained why. His CI pipeline automated every check (formatting, linting, types, tests, security) so that nothing reached production without passing all of them. And finally, observability extended that verification into production itself: structured logs, metrics, and traces watching the system while real customers used it, alerting him when reality diverged from expectation.

"You didn't just learn ten rules," Emma told him. "You built a system. Each axiom covers a gap the others leave open. That is what agentic development means: not AI writing code for you, but AI and engineering discipline working together, from the first shell command to the last production metric."

James nodded. The 2:47 AM incident had been the worst night of his career. It had also been the beginning of everything he built after.

PRIMM-AI+ Thread: How the Framework Operated Across All Ten Axioms

Throughout this chapter, PRIMM-AI+ was not a section you read; it was a method you practiced. Here is how each stage operated across the ten axioms:

Predict [AI-FREE]: In every axiom, you entered Plan Mode in Claude Code and committed to an answer with a confidence score. You classified tasks as coordination or work (Axiom I), predicted which knowledge formats would fail (Axiom II), anticipated what breaks at scale (Axiom III), reasoned about restaurant staffing failures (Axiom IV), caught type errors by reasoning about form fields (Axiom V), counted how many updates duplicated data requires (Axiom VI), identified specification gaps in a pilot's pre-flight checklist (Axiom VII), diagnosed missing information in file versions (Axiom VIII), prioritized assignment checks under time pressure (Axiom IX), and found the gaps in a useless post-surgery record (Axiom X). Each prediction built the habit of thinking before checking.

Run: After each prediction, you asked Claude Code the same question and compared. Sometimes you were right. Sometimes the AI identified failure modes you missed. Sometimes you caught things the AI overlooked. The comparison, not the AI's answer, was the learning event.

Investigate: You wrote explanations in your own words, then applied the Error Taxonomy to classify why things go wrong. You now recognize five error types: type errors (wrong data shape), logic errors (wrong reasoning), specification errors (ambiguous requirements), data/edge-case errors (unexpected inputs), and orchestration errors (tangled responsibilities). This vocabulary gives you precision when diagnosing problems.

Modify: You changed scenarios and reasoned about what breaks: the field trip organizer who packs lunches, the napkin plan scaled to 100 guests, the restaurant cook who also handles billing, the cake spec that missed quality requirements. Each modification built the instinct to ask "what could go wrong?" before it does.

Make [Mastery Gate]: You created artifacts: a coordination plan, a structured decision document, a "program" version of a routine, a task breakdown, a form specification, a relationship map, a spec with checklist, a version history, an ordered checklist, and a monitoring plan. These are not homework. They are proof that you internalized each axiom well enough to apply it independently.

The Verification Ladder climbed with you: prediction (Rung 1) in every exercise, types (Rung 2) in Axiom V, tests (Rung 3) in Axiom VII, pipelines (Rung 4) in Axiom IX, and observability (Rung 5) in Axiom X. You did not just learn about verification; you practiced it at progressively higher levels.

Chapter-End Self-Assessment Rubric

Use this rubric to evaluate your own understanding across the ten axioms. For each dimension, honestly assess where you fall. The goal is not to score "Fluent" in everything immediately. It is to identify where you are strong and where you need more practice.

In this chapter, your mastery gates are conceptual artifacts: coordination plans, decision documents, form specifications, relationship maps, ordered checklists, and monitoring plans. In the hands-on chapters that follow, you will apply these same axioms to real code, tests, and configurations.

| Dimension | Developing | Competent | Fluent |
|---|---|---|---|
| Prediction Accuracy | Predictions are often wrong; confidence scores do not match actual accuracy | Predictions are roughly correct; confidence scores track reality within ±1 | Predictions are consistently accurate; confidence scores reliably reflect true understanding |
| Error Taxonomy | Can name the five error types but struggles to classify real scenarios | Correctly classifies most scenarios; occasionally confuses similar types (e.g., specification vs. logic error) | Classifies errors quickly and accurately; spontaneously applies taxonomy when encountering new problems |
| Explanation Quality | Explanations restate the axiom rather than demonstrating understanding | Explanations use own words and connect the axiom to personal experience | Explanations teach the concept to someone else using original analogies and examples |
| Modification Reasoning | Identifies that something breaks but cannot explain the mechanism | Identifies what breaks AND explains why the modification causes the failure | Anticipates cascading effects: predicts not just the first failure but second-order consequences |
| Mastery Gate Quality | Artifacts are incomplete or generic (could apply to any axiom) | Artifacts are complete and specific to the axiom, with clear structure | Artifacts demonstrate original thinking; the plan, spec, or checklist reveals genuine understanding beyond what was taught |
| PRIMM-AI+ Engagement | Skipped Predict or copied AI answers without comparing to own prediction | Completed all five stages; comparisons between prediction and AI response show genuine reflection | Used the framework independently; applied Predict-Run-Investigate-Modify-Make to a new problem without prompting |