Domain 3: Assurance Services

"The auditor's core professional skill is not testing transactions. It is identifying what could go wrong before designing how to test it. AI executes the testing; the auditor frames the risk."

In Lesson 3, you examined tax and non-assurance advisory and saw how the compliance/advisory bifurcation determines which parts of tax practice AI can automate and which parts require professional judgment. Now you turn to the domain that defines the CA/CPA profession's public interest role: assurance services. This is the domain most protected by regulation, and also the domain where the consequences of AI failure are most severe.

What makes assurance different from the domains you have examined so far is not just the volume of work AI can automate. It is that AI changes the fundamental nature of audit evidence. For over a century, auditors have examined samples of transactions because examining every transaction was physically impossible. AI removes that constraint. The shift from sampling to population testing is not an incremental improvement. It is an epistemological change: a change in what we can know and how confidently we can know it.

What This Domain Covers

Assurance services encompass three sub-categories, each with distinct AI impact dynamics:

| Sub-Category | What It Involves | Regulatory Context |
|---|---|---|
| External audit | Independent examination of financial statements to give users confidence they are true and fair | Statutory requirement: legal consequences for failure |
| Internal audit | Assessing the effectiveness of governance, risk management, and internal controls | Governance function: reports to audit committee |
| Other assurance | Reviews, agreed-upon procedures, specialist assurance on non-financial information | Engagement-specific: less standardised |

Materiality is the threshold below which a misstatement in financial statements is considered unlikely to influence users' decisions. Auditors set a materiality threshold (typically 5% of pre-tax profit or 1% of revenue, depending on the entity) and design their audit work to provide reasonable assurance that no misstatement above this threshold exists.
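The benchmark arithmetic can be sketched in a few lines. This is a minimal illustration only: the function name, the entity figures, and the idea of listing both candidates side by side are assumptions made for the example, and choosing the benchmark remains a matter of professional judgment.

```python
def materiality_candidates(
    pre_tax_profit: float,
    revenue: float,
    profit_pct: float = 0.05,   # 5% of pre-tax profit, as cited in the text
    revenue_pct: float = 0.01,  # 1% of revenue, as cited in the text
) -> dict[str, float]:
    """Return candidate overall-materiality figures for two common benchmarks."""
    return {
        "pre_tax_profit": pre_tax_profit * profit_pct,
        "revenue": revenue * revenue_pct,
    }


# Hypothetical entity figures, for illustration only.
candidates = materiality_candidates(pre_tax_profit=40_000_000, revenue=600_000_000)
for benchmark, amount in candidates.items():
    print(f"{benchmark}: {amount:,.0f}")
```

The two benchmarks can produce very different thresholds for the same entity, which is why the choice depends on the entity and on what its users focus on.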

Audit sampling is the practice of examining a subset of transactions rather than every transaction, to form a conclusion about the full population. In its statistical form, the auditor selects a representative sample, tests it, and extrapolates the results to the whole population.

Why AI changes both: An AI audit agent does not need to sample; it can examine every transaction in the population. This shifts the audit from probabilistic ("we tested a sample and found no errors") to deterministic ("we tested every transaction and found these specific anomalies"). This is a fundamental change in the epistemics of audit assurance, with significant implications for audit standards, methodology, and the nature of the auditor's opinion.

The materiality concept remains relevant even with population testing: it still determines which findings are significant enough to report. But the evidence base on which the auditor forms an opinion changes from extrapolation to comprehensive examination.
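To make the difference in evidence base concrete, the following sketch computes the chance that a simple random sample contains none of the anomalous items in a population. The population size, anomaly count, and sample size are hypothetical, and the model assumes simple random selection without replacement; real sample designs are usually risk-weighted, so treat this as an illustration of sampling risk rather than of any particular methodology.

```python
from math import comb


def prob_sample_misses_all(population: int, anomalies: int, sample_size: int) -> float:
    """Probability that a random sample (without replacement) contains no anomalous item.

    Hypergeometric model: choose the whole sample from the clean items only.
    """
    clean = population - anomalies
    if sample_size > clean:
        # The sample is larger than the clean set, so it must include an anomaly.
        return 0.0
    return comb(clean, sample_size) / comb(population, sample_size)


# Hypothetical figures: 50,000 transactions, 25 anomalous, a sample of 60.
p_sample = prob_sample_misses_all(50_000, 25, 60)

# Population testing examines every item, so no anomaly escapes selection.
p_population = prob_sample_misses_all(50_000, 25, 50_000)

print(f"Sample of 60 misses every anomaly with probability {p_sample:.3f}")
print(f"Full population misses every anomaly with probability {p_population:.3f}")
```

This models only the risk of never selecting an anomalous item. Whether a selected anomaly is recognised and evaluated correctly is a separate question that examining the full population does not settle.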

Gen-AI Capabilities Available Now

Three Gen-AI capabilities are already reducing the labour burden of audit execution.

Audit documentation. The documentation burden in external audit (working papers, audit programmes, risk assessments, conclusions) is enormous and largely standardised. Gen-AI tools draft standard working paper sections, populate testing templates, summarise the results of audit procedures, and produce first drafts of conclusions. The auditor reviews and signs off. Documentation time is dramatically reduced.

Contract analysis. Reviewing contracts for key terms (revenue recognition implications, lease classification, contingent liabilities, related party transactions) is a high-volume, document-intensive task. Gen-AI tools read contracts, extract relevant clauses, and flag items requiring auditor attention far faster than manual review.

Risk identification and analysis. Producing the risk assessment for an audit engagement (identifying what could go wrong in the financial statements, assessing the likelihood and magnitude of potential misstatement, and designing the audit response) draws on understanding the client's business, its industry, and its control environment. Gen-AI tools synthesise publicly available information about the client and its sector, identify industry-specific risks, and produce a structured risk register for auditor review.

Agentic AI Capabilities Approaching Production

Two agentic systems represent the next stage of audit transformation.

Autonomous audit agent. This agent executes audit procedures autonomously: extracting data from the client's accounting system, running analytical procedures, testing reconciliations, selecting and testing transactions, documenting the results, and producing a draft audit file for senior review. The audit partner reviews conclusions and signs the audit opinion; much of the execution work is autonomous.

Continuous audit agent. Rather than conducting an annual audit after year-end, this agent monitors financial transactions in real time: flagging anomalies, unusual patterns, and potential misstatements as they occur. This shifts audit from an annual retrospective exercise to a continuous assurance function. The implications are profound: instead of discovering problems months after they occurred, the client and auditor are alerted in real time.

Real-World Deployments

| Platform | What It Does | Current Stage |
|---|---|---|
| KPMG Clara | Analyses entire populations of transactions, identifies anomalies, assists audit execution at scale | AI-integrated audit platform: deployed to 95,000+ auditors globally; AI agents streamlining expense vouching and financial disclosure preparation; built on Microsoft Azure AI |
| MindBridge AI | Autonomously analyses financial transactions, identifies anomalies, flags potential risks across 100% of transactions | AI-driven population analysis: partnered with Genpact (Feb 2026) for global audit analytics; VEON partnership (Jan 2026) for financial analytics and internal controls |

The distinction between these platforms matters. KPMG Clara is an integrated audit workflow platform: it manages the end-to-end audit process and is adding AI capabilities progressively. MindBridge specialises in transaction-level anomaly detection: it analyses 100% of financial data using statistical methods, machine learning, and deep learning to identify risks that sampling-based approaches would miss. Together, they illustrate the two vectors of AI audit transformation: making the workflow more efficient (Clara) and making the evidence base more comprehensive (MindBridge).

The Practitioner Impact

Junior audit roles (document collection, data extraction, sample testing, working paper preparation) face the most immediate displacement. Senior roles shift from supervising execution to reviewing AI outputs, exercising professional judgment on complex areas, and managing client relationships.

The economics change significantly. An audit that currently requires 500 staff hours might require 150 hours of senior professional review and 350 hours of AI execution. The firm that can price this productively (and demonstrate to regulators that AI execution meets the required standard of evidence) holds a significant competitive advantage.

ISA (International Standards on Auditing): The International Auditing and Assurance Standards Board (IAASB) sets audit standards used in most jurisdictions worldwide, including Pakistan. ISA 530 covers audit sampling, and its principles will need to evolve as population testing becomes standard practice.

US (PCAOB): The Public Company Accounting Oversight Board sets audit standards for US-listed companies. PCAOB standards have historically been more prescriptive than ISA. The US market's emphasis on internal controls (SOX Section 404) creates specific opportunities for continuous monitoring agents.

UK (FRC): The Financial Reporting Council oversees audit quality in the UK. The UK's audit reform agenda, including proposals for stronger corporate governance, creates an environment where AI-enhanced assurance may be viewed favourably, provided it demonstrably improves audit quality rather than merely reducing cost.

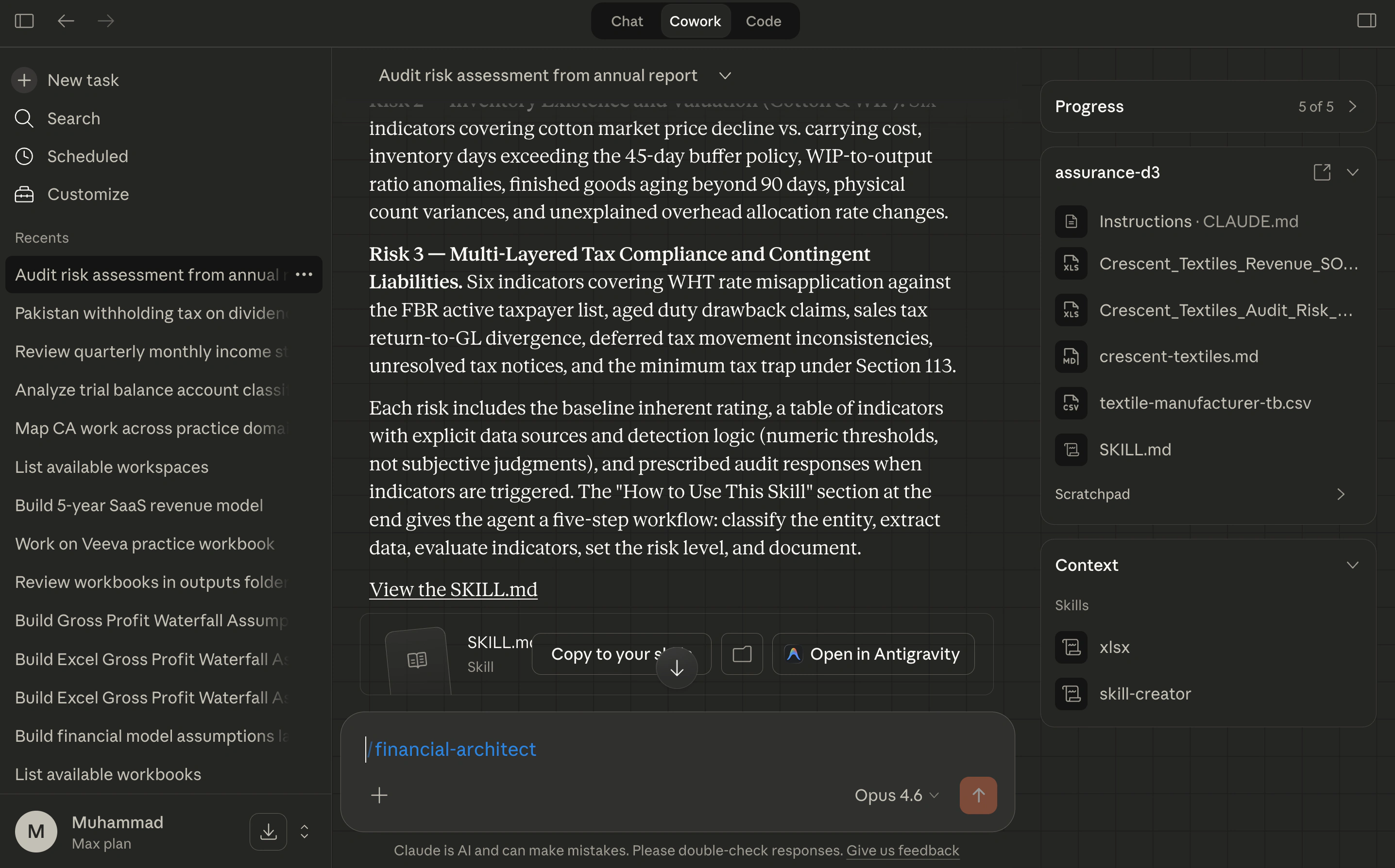

Practice Exercise 3: AI-Assisted Audit Risk Assessment (35 min)

What you'll build: A structured audit risk assessment workpaper, a revenue recognition analysis, a continuous monitoring specification, and a reusable skill that encodes your sector-specific audit expertise, created through the natural workflow of doing the analysis, not as a separate writing task.

Requirements: Cowork (any Claude plan). Download the exercise data zip and add the Crescent Textiles entity profile (exercises/entity-profiles/crescent-textiles.md) and trial balance (exercises/trial-balances/textile-manufacturer-tb.csv) to your Cowork project folder. Alternatively, use publicly available financial information about any listed company in a sector you know.

1. Prepare a risk assessment. Ask Cowork:

"Prepare an audit risk assessment for [Company Name] based on its most recent annual report. Structure the output as: (1) significant risks of material misstatement for each major financial statement line, (2) assessment of inherent risk for each significant risk, (3) the audit procedures most likely to address each risk effectively."

If you loaded the exercise data, Cowork reads the entity profile and trial balance from your project folder automatically. Review the output: does it identify risks specific to this company and sector, or generic audit risks that could apply to any entity?

2. Deep-dive on revenue. Continue the same conversation:

"For the revenue recognition line, what are the three most important questions an auditor should answer to determine whether revenue has been recognised correctly under IFRS 15? What evidence should the auditor gather to answer each question?"

3. Design continuous monitoring. Ask:

"If you were designing a continuous audit monitoring programme for this company, which three transaction types or account balances would you monitor in real time, and what anomalies would trigger an alert? Write this as if you were specifying it for an AI monitoring agent."

-

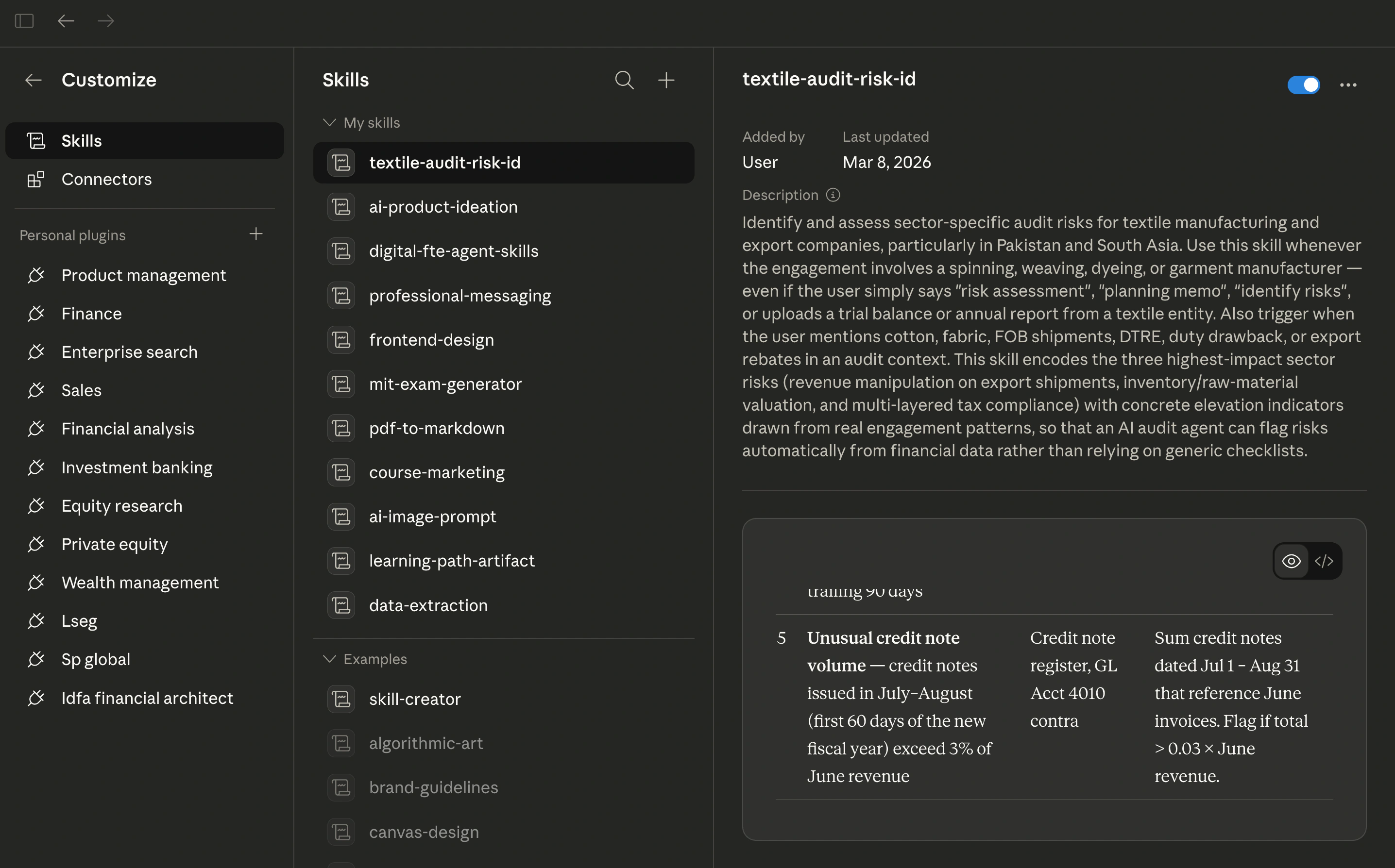

Let the skill emerge from the work. You have now done substantive audit analysis: risk identification, revenue deep-dive, monitoring design. Ask Cowork to encode what it learned:

"Write a skill instruction for the risk identification step: encode the three most important sector-specific audit risks for this company's industry, with the indicators that would cause each risk to be elevated."

Cowork creates the skill as a file artifact. This is the key insight: the skill crystallises from practitioner work, not from a blank-page writing exercise. The conversation you just had (identifying real risks, specifying real thresholds, designing real monitoring logic) is what gives the skill its substance.

5. Review and customise. Open Customize → Skills in the Cowork sidebar. Your new skill appears under My Skills. Read its description and examine what Cowork encoded: the risk categories, the elevation indicators, the detection thresholds. Ask yourself:

- Are the thresholds specific enough? (e.g., "revenue spike > 2.5 standard deviations from trailing 30-day average" vs. "unusual revenue increase")

- Do the indicators reference concrete data sources? (e.g., "bill of lading date vs. invoice date" vs. "shipping documents")

- Would a junior auditor with this skill catch risks that sampling-based approaches would miss?

Edit anything that needs tightening. The skill is yours to refine.
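The two "specific enough" examples in the checklist above can be written as executable rules, which is a quick way to confirm that a threshold really is measurable. This is a minimal sketch: the function names are hypothetical, the 2.5 standard deviation default comes from the example wording, and treating the bill of lading date as the point at which control transfers is an assumption that depends on the contract's shipping terms.

```python
from datetime import date
from statistics import mean, stdev


def revenue_spike_alert(trailing_30_days: list[float], today: float,
                        z_threshold: float = 2.5) -> bool:
    """Flag revenue more than z_threshold standard deviations above the trailing average."""
    if len(trailing_30_days) < 2:
        raise ValueError("need at least two trailing observations")
    mu = mean(trailing_30_days)
    sigma = stdev(trailing_30_days)
    if sigma == 0:
        # No variation in the window: any increase at all is unusual.
        return today > mu
    return (today - mu) / sigma > z_threshold


def cutoff_exception(invoice_date: date, bill_of_lading_date: date,
                     period_end: date) -> bool:
    """Flag a sale invoiced on or before period end but shipped after it.

    Assumption: the shipment date approximates transfer of control.
    """
    return invoice_date <= period_end < bill_of_lading_date


# Hypothetical daily revenue figures and dates, for illustration only.
history = [100.0 + (day % 5) for day in range(30)]
print(revenue_spike_alert(history, today=110.0))
print(cutoff_exception(date(2025, 6, 29), date(2025, 7, 3), period_end=date(2025, 6, 30)))
```

If an indicator in your skill cannot be expressed as a rule of this kind, with a named data field and a numeric threshold, it is probably still too vague.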

6. Test reusability. Start a new Cowork task for a different company or sector. Invoke your skill and ask Cowork to run a risk identification using it. Does the skill generalise, or is it too narrowly tied to the original entity? If it breaks on a new engagement, that tells you which parts encode genuine sector expertise and which parts were entity-specific details that should have been parameterised.

Check your work: The risk assessment (Step 1) should identify company-specific and sector-specific risks, not generic audit risks. The monitoring specification (Step 3) should define measurable thresholds and alert conditions, not vague instructions. The skill (Steps 4–5) should encode sector expertise that would take a junior auditor years to develop, with concrete elevation indicators drawn from real financial data, not abstract descriptions. The reusability test (Step 6) is the ultimate check: a skill that only works for one company is a template; a skill that works across the sector is encoded expertise.

This exercise demonstrates the core Agent Factory pattern: domain expertise encoded as a skill through the natural act of doing expert work. You did not sit down to "write a skill from scratch"; you did audit analysis, and the skill emerged from that analysis. In Lesson 12 (Assurance Practice Lab), you will build on this pattern with more complex multi-step audit workflows.

Explore the real-world platforms discussed in this lesson:

- KPMG Clara: kpmg.com/us/en/capabilities-services/audit-services/kpmg-clara

- MindBridge AI: mindbridge.ai

Try With AI

Use these prompts in Cowork or your preferred AI assistant to explore this lesson's concepts.

Prompt 1: Sampling vs Population Testing Analysis

Explain the difference between traditional audit sampling and AI-driven population testing using a concrete example.

Take a company with 50,000 purchase transactions in a year.

Under traditional sampling (ISA 530):

1. How many transactions would a typical audit sample include?
2. What statistical confidence does this provide?
3. What is the risk of missing a material misstatement?

Under AI population testing:

1. How many transactions would the AI examine?
2. What changes about the auditor's conclusion?
3. Does this eliminate audit risk entirely? Why or why not?

Use language appropriate for a CA/CPA student who understands audit methodology but has not yet worked with AI audit tools.

What you are learning: The sampling-to-population shift is not just about volume; it changes the logical structure of audit evidence. By working through a concrete example with specific numbers, you develop an intuition for why this is an epistemological change rather than merely a technological one. The question about whether population testing eliminates audit risk is particularly important: it does not, because audit risk includes factors beyond sampling risk.

Prompt 2: Autonomous Audit Agent Specification

Design the specification for an autonomous audit agent that executes a substantive test of details on accounts receivable.

The agent should:

1. Extract the aged receivables listing from the client system
2. Select items for confirmation based on materiality and risk
3. Draft confirmation letters for selected balances
4. Track responses and identify exceptions
5. Perform alternative procedures for non-responses
6. Document results and draft a conclusion

For each step, specify:

- The inputs required
- The decision criteria the agent uses
- The conditions that trigger escalation to a human auditor
- The output produced

Structure this as a Cowork skill specification. Include at least three escalation conditions where human judgment is essential.

What you are learning: Specifying an autonomous audit agent forces you to decompose a familiar audit procedure into explicit steps with decision criteria. The escalation conditions are the most valuable part: they encode the professional judgment boundaries that prevent the agent from making decisions that require human expertise. This is the same specification discipline from Chapter 27, applied to the audit domain.
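One way to see what "decision criteria" and "escalation conditions" mean in practice is to sketch the selection step alone (step 2 of the prompt) as code. Everything here is hypothetical: the `Receivable` fields, the 90-day overdue cut-off, and the choice of related-party and credit balances as escalation triggers are illustrative assumptions, not rules drawn from an auditing standard or from any platform.

```python
from dataclasses import dataclass


@dataclass
class Receivable:
    customer: str
    balance: float
    days_outstanding: int
    related_party: bool = False


def triage(items: list[Receivable], performance_materiality: float,
           overdue_days: int = 90) -> tuple[list[Receivable], list[Receivable]]:
    """Split receivables into confirmation selections and human escalations."""
    selected: list[Receivable] = []
    escalated: list[Receivable] = []
    for item in items:
        if item.related_party or item.balance < 0:
            # Hypothetical escalation rule: related parties and credit balances
            # need a human auditor's judgment before any procedure is chosen.
            escalated.append(item)
        elif item.balance >= performance_materiality or item.days_outstanding > overdue_days:
            # Hypothetical decision criterion: confirm large or long-overdue balances.
            selected.append(item)
    return selected, escalated


# Hypothetical aged receivables listing.
ledger = [
    Receivable("Alpha Mills", 4_200_000, 35),
    Receivable("Beta Traders", 150_000, 120),
    Receivable("Gamma Holdings", 900_000, 20, related_party=True),
    Receivable("Delta Exports", -75_000, 10),
    Receivable("Epsilon Retail", 80_000, 15),
]
selected, escalated = triage(ledger, performance_materiality=1_000_000)
print([r.customer for r in selected])
print([r.customer for r in escalated])
```

The escalation branch is checked first on purpose, so that an item needing human judgment is never silently routed into the automated path.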

Prompt 3: Continuous Assurance Business Case

A mid-tier audit firm serving 200 clients is considering investing in continuous audit monitoring capability.

Current state:

- Average audit fee per client: PKR 2,500,000
- Average staff hours per audit: 400
- Staff cost per hour: PKR 5,000
- Annual revenue: PKR 500,000,000

Projected state with continuous monitoring:

- Staff hours reduced to 200 per audit (AI handles routine testing)
- Continuous monitoring licence cost: PKR 800,000 per client per year
- Additional senior review hours: 50 per client (reviewing AI outputs)

Model the economics:

1. Current cost structure and margin per audit
2. Projected cost structure with continuous monitoring
3. Break-even analysis: at what utilisation rate does the investment pay for itself?
4. Competitive scenario: if a competitor adopts this first and prices audits at 70% of current market rate, what happens to the firm's revenue?

Present the analysis with a recommendation.

What you are learning: The commercial implications of AI in audit extend beyond efficiency. By modelling the economics at firm level, including the competitive dynamics of first-mover advantage, you develop the strategic judgment that partners and senior managers need. The competitive scenario in point 4 is particularly important: it shows why adopting continuous monitoring is not optional for firms that want to maintain market position.
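Before handing Prompt 3 to an AI assistant, it helps to work the per-audit arithmetic yourself so you can check the response. The sketch below uses the figures from the prompt. One input is an assumption: the prompt gives no rate for senior review hours, so they are costed at the same PKR 5,000 per hour as staff time. The class and field names are hypothetical.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class AuditEconomics:
    fee: int                      # PKR per audit
    staff_hours: int
    staff_rate: int               # PKR per hour
    senior_review_hours: int = 0
    senior_rate: int = 0          # PKR per hour (assumed; not given in the prompt)
    licence_cost: int = 0         # PKR per client per year

    @property
    def cost(self) -> int:
        return (self.staff_hours * self.staff_rate
                + self.senior_review_hours * self.senior_rate
                + self.licence_cost)

    @property
    def margin(self) -> int:
        return self.fee - self.cost


current = AuditEconomics(fee=2_500_000, staff_hours=400, staff_rate=5_000)
projected = replace(current, staff_hours=200, senior_review_hours=50,
                    senior_rate=5_000, licence_cost=800_000)

# Competitive scenario: fee falls to 70% of the current market rate.
competitor_fee = current.fee * 70 // 100
current_at_competitor_fee = replace(current, fee=competitor_fee)

for label, scenario in [("current", current), ("projected", projected),
                        ("current cost at competitor fee", current_at_competitor_fee)]:
    print(f"{label}: cost PKR {scenario.cost:,}, margin PKR {scenario.margin:,}")
```

With these inputs the projected cost per audit (PKR 2,050,000) is slightly above the current cost (PKR 2,000,000), because the licence fee and review hours offset the staff-hour saving. The analysis is therefore sensitive to the licence price and the senior rate, and worth re-running with your own assumptions. At the competitor's fee of PKR 1,750,000, the current cost structure loses PKR 250,000 per audit.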
